MSI Big Bang Fuzion: Pulling The Covers Off Of Lucid’s Hydra Tech

Benchmark Results: S.T.A.L.K.E.R.: Call Of Pripyat

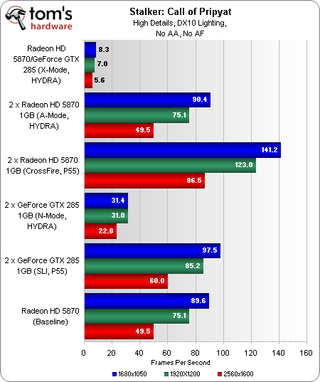

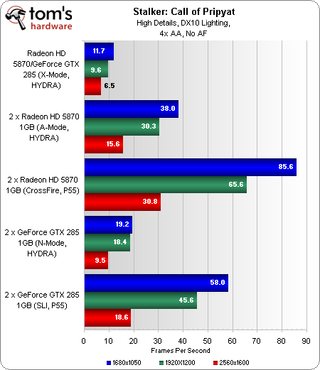

Although the latest version of S.T.A.L.K.E.R. represents a tough first real-world app for MSI’s Fuzion and its Hydra engine, it at least demonstrates the technology functioning exactly as Lucid explained to us.

Call of Pripyat isn’t in the control panel’s list of validated games, though its predecessor is supported in A-mode. Thus, we added the game manually, discovering that the GeForce GTX 285 has some serious compatibility issues to overcome. Meanwhile, it looks like the more agreeable Radeon HD 5870s are running in single-card mode, as they match our baseline run with no AA applied.

The CrossFire and SLI scores are included to show how each pair of cards would perform on a conventional P55 platform dividing PCI Express into a pair of x8 links.


Maziar Nice article, it's very good for users who are upgrading, because for current SLI/CF you need 2 exact cards but with Lucid you can use different cards as well. It still needs to be more optimized and has a long way ahead of it, but it looks very promising though.

Von_Matrices I'm highly doubtful of the Steam hardware survey. I think it is underestimating the number of multi-GPU systems. I for one am running 4850 CrossFire and Steam has never detected a multi-GPU system when I was asked for the hardware survey. The 90% NVIDIA SLI figure also seems a little too high to me.

Bluescreendeath The CPU scores for the 3DMark Vantage tests are way off. You need to turn off PhysX when benchmarking the CPU or it will skew the results...

Bluescreendeath So far, the best scaling has been in Crysis. The 5870/GTX285 combo benchmarks looked very promising.

cangelini Quoting Bluescreendeath: "The CPU scores for the 3DMark Vantage tests are way off. You need to turn off PhysX when benchmarking the CPU or it will skew the results..."

It's explained in the analysis ;-)

kravmaga "But when you spend $350 on a motherboard, you’re using graphics cards that cost more than that. If you’re not, you aren’t doing it right"

Quoted from the last page; I disagree with that statement.

There are plenty of people in situations where using this board is a better investment in performance per dollar. This is all the more relevant as this technology will undoubtedly find its way into cheaper boards and budget-oriented setups, where it will make all the sense in the world to bench it using mid-range value parts.

I, for one, would have liked to see what using GTX 260s and 5770s would look like in this same setup. As is, this review leaves many questions unanswered.

SpadeM Well, the review does give an answer in the form of: it's better to run an ATI card for rendering and an Nvidia card for physics and CUDA (if you're into transcoding/accelerating with CoreAVC etc.) with Windows 7 installed.

Or at least that is the conclusion I'm comfortable with at the moment.

HalfHuman i also agree with the fact that a person who will buy this board will necessarily go for the highest priced vid boards. maybe some will but not all. there will be more who will try to save the older vid cards.

i also understand why you paired the 5870 with nvidia's greatest. there is a catch however... the lucid guys did not have the chance to play with the 5xxx series too much and you may be evaluating something that is not too ripe. i guess the 4xxx series would have been a better chance to see how well the technology works. couple that with games that are not yet certified for lucid, couple that with how much complexity this technology has to overcome... i think this is a magnificent accomplishment on the lucid team's part.

i also think that in order for this technology to become viable it will go down in price and will be found in much cheaper boards. for the moment the "experimenting phase" is done on the expensive end of the spectrum. i saw some early comparisons and the scaling was beautiful. i know that the system was put together by lucid... but that is fine since that was only a demo to show that it works. judging by how fast these guys are evolving i guess that they will go mainstream this year.
