ASRock Brings Supercomputing to X58 Mobos

Yesterday ASRock said that it will unveil its personal Supercomputer system at CeBIT '09, sporting its ASRock X58 Supercomputer motherboard.

ASRock said that its X58 SuperComputer motherboard spawned from the world's need for something practical and cost-effective. "High specification and exquisite are not adequate anymore these days," the company said, and it may be right in many respects, especially when consumers are pinching fannies as well as pennies during hard economic times. While the global economy continues to plunge into the galactic toilet, manufacturers looking to caress enthusiasts with "more bang for the buck" are a welcome sight indeed.

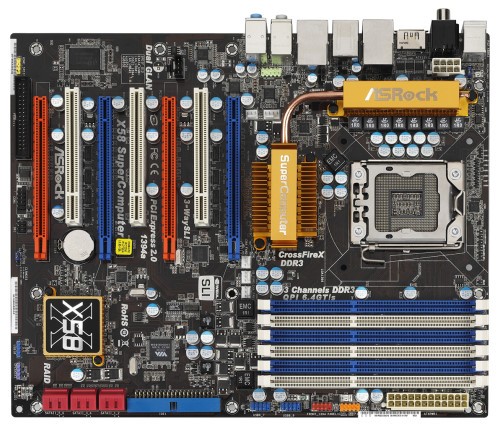

Although the term Supercomputer brings up images of HAL and R2-D2, ASRock says that its X58 Supercomputer is not just any ordinary X58 chipset motherboard on the market. In fact, it's the only X58 motherboard worldwide that can be set up as an Nvidia Tesla Personal Supercomputer. Instead of housing gigantic and expensive workstations that can make any hardware enthusiast claustrophobic, the ASRock X58 Supercomputer can bring all that monstrous processing power to consumers on a practical and affordable budget. Thus, the "personal" Supercomputer is born.

"The new X58 Supercomputer motherboard from ASRock is an excellent platform upon which to build an Nvidia Tesla Personal Supercomputer," said Andrew Walsh, general manager, personal supercomputing at NVIDIA. "Supporting up to 4 Nvidia GPUs, each delivering 1 teraflop of processing power, desktop systems built around the X58 Supercomputer motherboard will accelerate the pace of discovery for scientists and engineers around the world."

According to ASRock, the X58 motherboard is "exclusively designed" with four PCI-E x16 slots with double-wide spacing, making it very flexible when installing any Nvidia SLI or ATI CrossFire graphics combination. Supposedly, the computing power of a personal Supercomputer is equal to the performance of 250 workstations, although what kind of workstations ASRock is referring to is beyond us. Finally, ASRock's product blew the socks off Richard Chou, General Manager of Computer Product Business at Leadtek Research Inc.

"We are thrilled to collaborate with ASRock to showcase at CeBIT 2009," he said. "As a leading manufacturer of professional graphics card solutions, this is a great opportunity to work with ASRock, a leading brand in motherboard manufacturing and design. We are confident this cooperation not only demonstrate the personal super computer capabilities of Tesla and X58 motherboard but also brings Workstation users a more valuable workstation experience."

ASRock said that three Tesla C1060 cards and an additional Quadro card can fit within the motherboard's 4 double-wide spacing PCI-E x16 slots. LL Hsu, Chief Operation Officer of ASRock Inc, said that the motherboard now offers supercomputing power to the Intel Core i7 platform, and is a good choice for gamers wanting to stuff their high-end gaming rigs with SLI or CrossFireX technologies and still be able to pick up a Big Mac meal at McDonald's. Is the motherboard the ideal component for taking over the world? That has yet to be seen, but we're betting the frame rates will melt your eyeballs.


Just as a taste, here are a few specs to make you salivate in utter hardware bliss:

- Intel Socket 1366 Core i7 Processor Extreme Edition / Core i7 Processor; supports Intel Dynamic Speed Technology

- System Bus up to 6400 MT/s; Intel® QuickPath Interconnect

- ASRock DuraCap (2.5 x longer life time), 100% Japan-made high-quality Conductive Polymer Capacitors

- Intel X58 + ICH10R Chipsets

- Supports Triple Channel DDR3 2000(OC)/1866(OC)/1600(OC)/1333(OC)/1066 (6 x DIMM slots), non-ECC, un-buffered memory, Max. capacity up to 24GB

- Supports DDR3 ECC, buffered memory with Intel® Workstation 1S Xeon processors 3500 series

- Supports Intel Extreme Memory Profile (XMP)

- 4 x PCI Express 2.0 x16 slots (blue @ x8 / x16 mode, orange @ x8 / N/A mode) (Double-wide slot spacing between each PCI-E slot)
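That last slot line is terse. One plausible reading, and it is only an assumption on our part, is that each blue slot shares 16 lanes with a neighboring orange slot: the blue slot runs at x16 while the orange one sits empty, and both drop to x8 once the orange slot is filled. A minimal Python sketch of that reading follows; the names `LANES_PER_PAIR`, `pair_widths`, and `board_widths` are made up for illustration and do not come from ASRock.

```python
# Hypothetical model of the "blue @ x8 / x16, orange @ x8 / N/A" spec line.
# Assumption: the four slots form two blue/orange pairs, and each pair
# shares a single 16-lane link.
LANES_PER_PAIR = 16


def pair_widths(orange_populated: bool) -> tuple[int, int]:
    """Return (blue_lanes, orange_lanes) for one blue/orange slot pair."""
    if orange_populated:
        half = LANES_PER_PAIR // 2
        return half, half          # both slots run at x8
    return LANES_PER_PAIR, 0       # blue gets x16, orange is unused (N/A)


def board_widths(orange_populated: list[bool]) -> list[int]:
    """Lane widths in slot order (blue, orange, blue, orange, ...)."""
    widths: list[int] = []
    for populated in orange_populated:
        blue, orange = pair_widths(populated)
        widths.extend([blue, orange])
    return widths


if __name__ == "__main__":
    print(board_widths([False, False]))  # two cards, blue slots only
    print(board_widths([True, True]))    # four cards, every slot filled
```

Under this reading, a fully loaded board with four cards would run every slot at x8 rather than x16.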

Consumers can visit ASRock and its X58 SuperComputer at CeBIT 2009, located at Hall 21, Stand C40.

Kevin Parrish has over a decade of experience as a writer, editor, and product tester. His work focused on computer hardware, networking equipment, smartphones, tablets, gaming consoles, and other internet-connected devices. His work has appeared in Tom's Hardware, Tom's Guide, Maximum PC, Digital Trends, Android Authority, How-To Geek, Lifewire, and others.

A Stoner: Wonder why they cannot get more PCIe pathways for full X16 on all 4 slots, if it is supposed to be a super computer, you would think it would need more bandwidth.

dacman61: Call me crazy, but what is so special about this board? It has all of the same X58 Chipset Specs as most of the other models out there today. The X58 chip basically determines the features of a motherboard regardless of the manufacturer. Maybe just a few different slot options and how they chop up the different x1 PCI-Express lanes and such.

Also, when are motherboard manufacturers going to stop putting in old PCI Slots? It's exactly like when they kept around those really old ISA slots back in the day.

> A Stoner: Wonder why they cannot get more PCIe pathways for full X16 on all 4 slots, if it is supposed to be a super computer, you would think it would need more bandwidth.

Because there's just no chipset out there that supports this!

The speed is subject to the northbridge/southbridge of a board.

I'm interested in just how much this setup will cost!

Seems to support up to 6x4GB DDR3!

So what else can you do with it, besides playing Crysis and running the 'folding@home' project?

Anonymous Coward: Old news are old. Tesla never needed any SLI enabled to work on X38. You could already build this last year with almost any X38 motherboard.

bustapr: Who would actually use 4 PCI-E 2.0 slots, and for what? All I know is that for gaming people prefer putting 2 cards on SLI and not 3. Plus a super computer would benefit more from more PCI slots instead of an extra PCI-E.

> dacman61: Call me crazy, but what is so special about this board? It has all of the same X58 Chipset Specs as most of the other models out there today. The X58 chip basically determines the features of a motherboard regardless of the manufacturer. Maybe just a few different slot options and how they chop up the different x1 PCI-Express lanes and such. Also, when are motherboard manufacturers going to stop putting in old PCI Slots? It's exactly like when they kept around those really old ISA slots back in the day.

Because lots of users still use extension boards like Sound Blaster Audigy audio cards, network cards, etc. Most of them still use PCI.

I wonder if someone knows whether the PCI slots on a board use different lanes compared to PCIe slots?

I know one thing: PCIe reduces speed depending on the number of slots that are used.

> Anonymous Coward: Old news are old. Tesla never needed any SLI enabled to work on X38. You could already build this last year with almost any X38 motherboard.

Perhaps the extra memory bandwidth of this board (2000 OC, compared to 1600 on the X38) made the computer top 1 teraflop, which technically put it into the supercomputer category?

> bustapr: Who would actually use 4 pci-e2.0 slots and for what. All I know is that for gaming people prefer putting 2 cards on sli and not 3. Plus a super computer would benefit more from more pci slots instead of an extra pci-e.

Could be on a dual/quad-core system where the memory controller used part of the FSB speed.

There is a slight chance that the improved design of the Core i7 frees up some bandwidth, and that using 3 or 4 cards could improve performance, something we could not see with Tom's benchmark results on a Core 2 Quad Extreme.