Facebook Expands GDPR Privacy Protections Outside Europe

Facebook announced that it plans to extend many of the privacy changes required by the General Data Protection Regulation (GDPR), which goes into effect on May 25, to users around the world. Those changes are supposed to make it easier for you to know how Facebook uses your personal data, which is exactly what many people are worried about in the wake of the ongoing Cambridge Analytica data collection scandal.

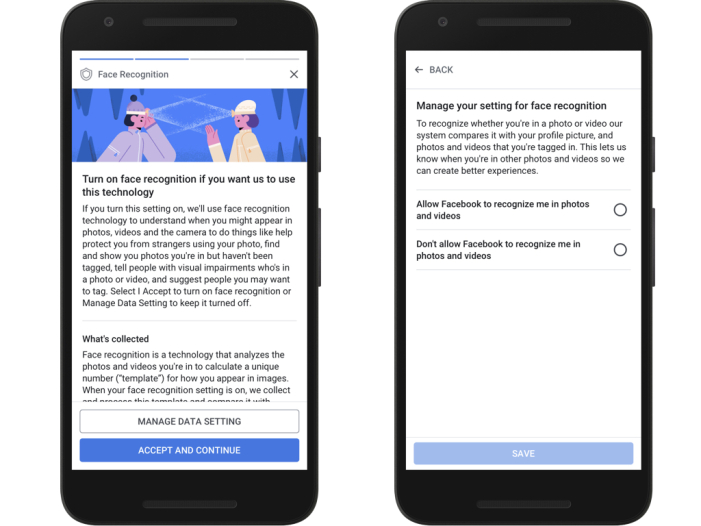

These changes will start with Facebook asking its users to decide if they want the company to use data from outside sources when it decides on what ads to show them; if they want to keep sharing political, religious, or relationship data from their profiles; and if they want to allow face recognition on their photos. Facebook will also ask users to agree to an updated terms of service and data policy accompanying these changes.

Here's what Facebook had to say about these new policies in its announcement:

> We’re not asking for new rights to collect, use or share your data on Facebook, and we continue to commit that we do not sell information about you to advertisers or other partners. While the substance of our data policy is the same globally, people in the EU will see specific details relevant only to people who live there, like how to contact our Data Protection Officer under GDPR. We want to be clear that there is nothing different about the controls and protections we offer around the world.

These changes will start to be shown to Facebook users in the EU this week. Facebook said that "people in the rest of the world will be asked to make their choices on a slightly later schedule," though it didn't offer any details about what that schedule might look like. The company said that it's planning to "present the information in the ways that make the most sense for other regions," again without details about what that means.

Facebook did say that its updated privacy settings will start to roll out this week. These updated settings are supposed to bring everything privacy-related into one easy-to-find place instead of requiring people to navigate a labyrinth of settings whenever they want to control how their information is used. (Which, of course, many people might want to do after the Cambridge Analytica saga exposed how insecure that data is.)

Finally, the company said it's introducing some features especially for children and teens:

> We’ve built many special protections into Facebook for all teens, regardless of location. For example, advertising categories for teens are more limited, and their default audience options for posts do not include “public.” We also keep face recognition off for anyone under age 18 and limit who can see or search specific information teens have shared, like hometown or birthday. Later this year we’ll introduce a new global online resource center specifically for teens, and more education about their most common privacy questions.

Facebook will also require users between the ages of 13 and 15 who live in the EU to get permission from their parents to use some aspects of its service. Those who don't get that permission "will see a less personalized version of Facebook with restricted sharing and less relevant ads until they get permission from a parent or guardian to use all aspects of Facebook." Aside from ads, it's not clear how young users will be restricted.

It's important to remember that Facebook was required by law to make most of these changes. The company didn't have to roll them out to users outside the EU, but with lingering questions about how it manages user data, expanding features it was already required to develop to additional areas seems like a no-brainer. The motivation for these changes is not rooted in altruism; they're being made as a matter of necessity.

Nathaniel Mott is a freelance news and features writer for Tom's Hardware US, covering breaking news, security, and the silliest aspects of the tech industry.

Olle P: They try to make it seem as if this is an "expansion", but because of Facebook's global nature the truth is rather that it would be a lot more difficult and expensive for them to be GDPR compliant for European users while excluding "rest of the World".