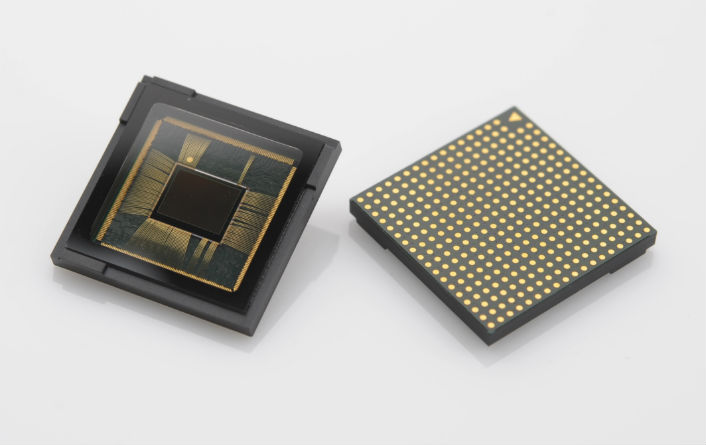

Samsung Announces Dual Pixel Sensor (Which Is In The Galaxy S7)

Samsung announced its latest image sensor, which it uses in its own high-end smartphones such as the recent Galaxy S7. The sensor has 1.4μm pixels and Dual Pixel technology for much faster autofocus.

Samsung’s Galaxy S7, much like the latest Nexus devices, adopted a lower-resolution sensor that offers better low-light performance because of the resulting larger pixels. Somewhere around 12MP seems to be the optimum resolution for smartphone cameras right now, because the fewer pixels there are, the easier it is to do computational photography. When there are fewer pixels, less processing power is required to combine pictures or add various effects to them in real time.

Another big improvement in Samsung's new sensor is Dual Pixel technology, which is usually found in DSLRs. The technology enables significantly faster autofocus by splitting each pixel into two photodiodes, one on the left side and one on the right. The photodiodes convert incoming light into a measurable photocurrent, and comparing the left and right signals allows phase detection. That means every one of the sensor's 12 million pixels can detect phase differences in the incoming light, which results in faster autofocus.
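To make the phase-detection idea more concrete, here is a toy sketch in Python. It assumes we already have a one-dimensional intensity profile read from the left photodiodes and another from the right photodiodes along one row of pixels. It finds the shift that best aligns the two profiles by minimizing the mean absolute difference. The function names `sad` and `estimate_phase_shift`, and the `max_shift` search window, are hypothetical and exist only for illustration. Samsung has not described its actual PDAF algorithm, so treat this as a simplified model of the general technique, not as the sensor's implementation.

```python
from typing import Sequence


def sad(left: Sequence[float], right: Sequence[float], shift: int) -> float:
    """Mean absolute difference between left[i] and right[i + shift] over their overlap.

    Hypothetical helper: a lower value means the two photodiode signals line up better.
    """
    total = 0.0
    count = 0
    for i, value in enumerate(left):
        j = i + shift
        if 0 <= j < len(right):
            total += abs(value - right[j])
            count += 1
    return total / count if count else float("inf")


def estimate_phase_shift(
    left: Sequence[float], right: Sequence[float], max_shift: int = 8
) -> int:
    """Return the shift (in pixels) that best aligns the right signal with the left one.

    In this toy model, a shift of 0 means the scene is in focus. A nonzero shift
    means it is out of focus, and the sign indicates which way the lens should move.
    """
    candidates = range(-max_shift, max_shift + 1)
    return min(candidates, key=lambda s: sad(left, right, s))


if __name__ == "__main__":
    # A blurred edge as seen by the left photodiodes (made-up example data).
    left = [0.0] * 10 + [1.0, 2.0, 3.0, 4.0, 5.0] + [5.0] * 10
    # The right photodiodes see the same edge displaced by 3 pixels (defocused).
    right = [0.0] * 3 + left[:-3]
    print(estimate_phase_shift(left, right))
```

For the example data, the right profile is the left profile shifted by three pixels, so the estimated phase shift is 3. A single comparison of two signals gives both the direction and the approximate amount of defocus, whereas contrast-detection autofocus has to step the lens back and forth searching for peak sharpness. That difference is why phase detection tends to be faster.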

“With 12 million pixels working as a phase detection auto-focus (PDAF) agent, the new image sensor brings professional auto-focusing performance to a mobile device,” said Ben K. Hur, Vice President of Marketing, System LSI Business at Samsung Electronics. “Consumers will be able to capture their daily events and precious moments instantly on a smartphone as the moments unfold, regardless of lighting conditions,” he added.

Samsung's new sensor also uses ISOCELL technology, which isolates each pixel from its neighbors to reduce color cross-talk and improve image quality.

Just a few years ago, most people thought that a phone camera could never match the performance of a DSLR. We're not fully there yet, but smartphone cameras have been adopting more and more technologies that were once typical of DSLRs. We've seen larger sensors (in some devices), higher-quality lenses, optical image stabilization, phase detection autofocus, laser autofocus, optical zoom, and now Dual Pixel technology, too.

As smartphone chips become more powerful, computational photography will play an increasingly large role, perhaps an even bigger one than in DSLRs. This could level the playing field somewhat and offset some of the inherent shortcomings of smartphone cameras compared to DSLRs, such as the small physical size of their sensors.


Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security. You can follow him at @lucian_armasu.


Scrim Pinion Now, if only we could get the top phone manufacturers to include optical image stabilization in their phone cameras...

rhysiam It's great to see premium features in phone camera sensors, but there's still one massive issue for phones: the lens. An entry-level SLR with a premium lens will, in most situations, produce significantly better pictures than a premium SLR with a standard kit lens (and kit lenses are still vastly better than phone lenses). Large, high-quality sensors with advanced image processing undoubtedly help, but they're no substitute for a quality lens. IMHO, any talk of phones being able to "achieve the performance of a DSLR" may be true if we're talking about sensor and processing performance, but it ignores the most important component in a photographer's kit: lenses.

therealduckofdeath @tom10167, it's insanely fast. Borderline instantaneous. https://www.youtube.com/watch?v=kD6Cabc6q4Q

paladinnz Quote: "Now, if only we could get the top phone manufacturers to include optical image stabilization in their phone cameras..."

What, you mean like the iPhone 6 Plus, Galaxy Note 4, Nexus 6 and a bunch of Nokia Lumias?

Pailin Quote: "So this proves Microsoft's 41 MP was fail after all."

I'd rather do my photoshopping on my PC with better-quality images than on my smartphone with lower-quality images...