AMD MI300X Guzzles Power, Rated for 750 Watts

With great performance come great power requirements.

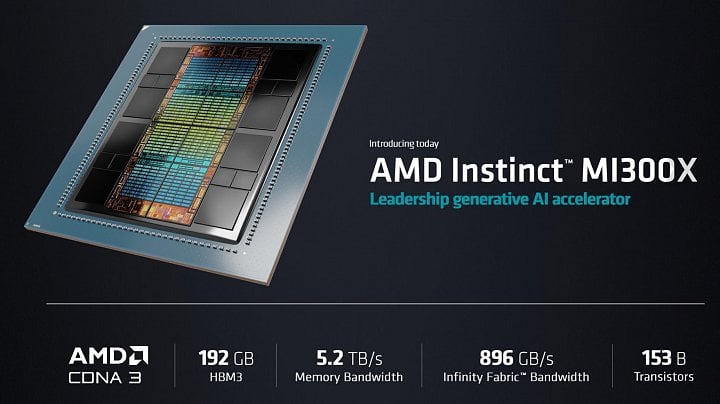

We're still on the heels of AMD's official announcement of its AI datacenter accelerator, the MI300X. It's certainly a processing force to be reckoned with - one which AMD aims to use as a cudgel to try and dislodge Nvidia from its perch as the dominant player in the AI acceleration world. But increasing performance does sometimes translate into higher power draw, even though each new architecture usually improves power efficiency (consuming less energy for the same unit of work). And AMD's OAM-based (OCP Accelerator Module) MI300X is certainly a power guzzler: at 750 W, it's actually the product with the highest-rated TDP ever in its form factor. Don't worry, though: the specifications for OAM solutions go all the way up to 1000 W of deliverable power, so there's still room to scale performance further.

While 750 W is an egregious amount of power to be consumed by any individual piece of PC hardware (at least from the perspective of an individual), we do have to keep in mind that those watts are powering hardware that's much faster and more specialized than even AMD's most powerful graphics cards. For that wattage, AMD is offering what it claims to be the most performant accelerator for AI-related workloads (both in generative AI and Large Language Model [LLM] processing).

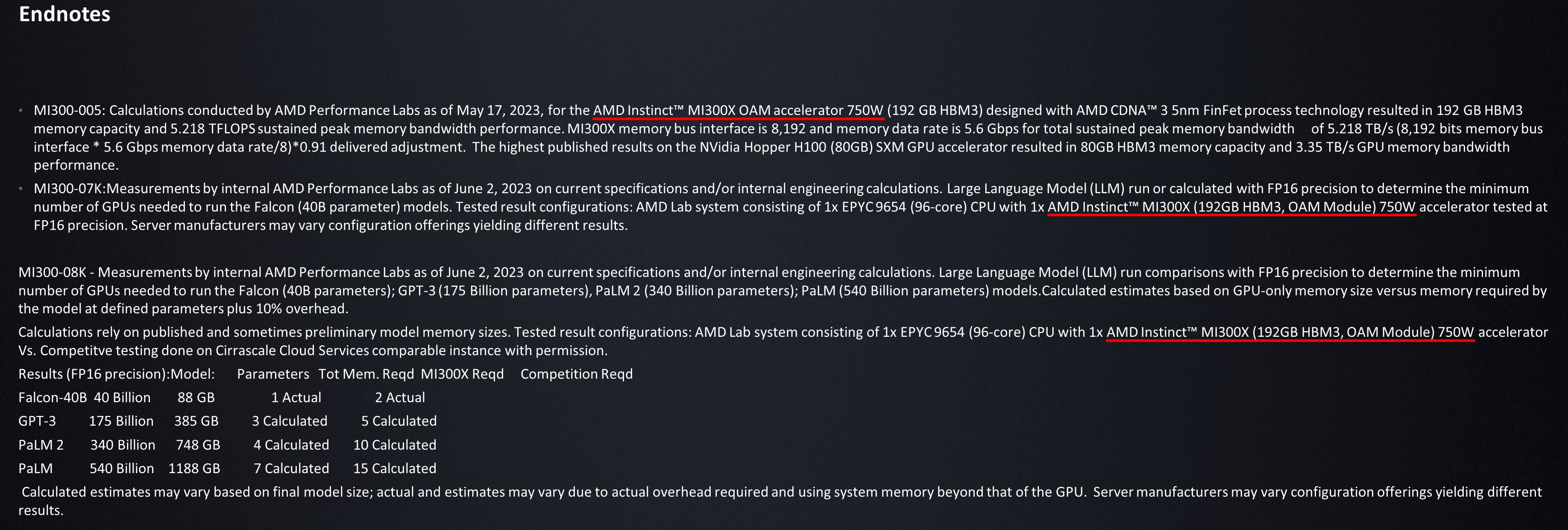

Considering how AMD managed to cram 12 chiplets built across two fabrication processes (8x 5nm [GPU] and 4x 6nm [I/O die]) for a total of 153 billion transistors, that claim may have some backing. Of course, there's also the matter that AMD managed to run a 40-billion-parameter LLM (Falcon-40B) atop a single MI300X. Now that's impressive, especially considering AMD aims for the MI300X to scale to up to eight accelerators in a single platform.

| Specification | AMD MI300X | AMD MI300A | AMD MI250X | AMD RX 7900 XTX |
| --- | --- | --- | --- | --- |
| CPU cores | 0 | 3x 8-core CCD (24 cores) [Zen 4] | - | - |
| GPU cores | 8x GCD (304 CUs) [CDNA 3] | 6x GCD (228 CUs) [CDNA 3] | (220 CUs) [CDNA 2] | (RDNA 3) |
| Addressable Memory | 192 GB (8x 24 GB HBM3) | 128 GB (8x 16 GB HBM3) | 128 GB (8x 16 GB HBM2e) | 24 GB GDDR6 |
| Memory Bandwidth | 5.2 TB/s | 5.2 TB/s | ~ 3.28 TB/s | 960 GB/s |
| Infinity Fabric Bandwidth | 896 GB/s | 896 GB/s | 800 GB/s | - |
| Transistor Count | 153 Billion | 146 Billion | ~ 58.2 Billion | ~ 57 Billion |
| TDP | 750 W | ? | 560 W | 355 W |

As we see from the table above, AMD's focus on increased power efficiency hasn't been enough to offset the increasing computing requirements for High Performance Computing (HPC) scenarios, which now include the processing of LLMs that seem to be springing up left and right. Increased performance requirements mean that even with AMD's latest power-saving technologies and techniques, and the latest fabrication technology from TSMC, there was still the need for a 190 W power envelope increase.

But that 190 W TDP increase (around 34% higher power draw) does translate into roughly 2.6 times the transistors being powered compared to the MI250X - an impressive showing on efficiency gains, even without considering the MI300X's improved support for sparse algorithms (incredibly important for LLM and AI processing). And that's to say nothing of the difference between AMD's compute accelerators and the company's flagship gaming GPU, the comparatively puny RX 7900 XTX.
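The generational comparison is simple arithmetic on the table's figures, and it can be checked with a short Python sketch. The dictionary layout and the `generational_change` helper are illustrative names, not anything AMD publishes, and "watts per billion transistors" is only a crude proxy that assumes rated TDP rather than measured power draw.

```python
# Figures copied from the article's comparison table.
# Note: the 560 W MI250X TDP is the table's value, taken here as-is.
SPECS = {
    "MI300X": {"tdp_w": 750.0, "transistors_b": 153.0},
    "MI250X": {"tdp_w": 560.0, "transistors_b": 58.2},
}


def generational_change(new, old):
    """Compare rated TDP and transistor count between two accelerators."""
    tdp_delta_w = new["tdp_w"] - old["tdp_w"]
    return {
        "tdp_delta_w": tdp_delta_w,
        "tdp_increase_pct": 100.0 * tdp_delta_w / old["tdp_w"],
        "transistor_ratio": new["transistors_b"] / old["transistors_b"],
        # Crude proxy: rated watts per billion transistors (lower is better).
        "w_per_b_transistors_old": old["tdp_w"] / old["transistors_b"],
        "w_per_b_transistors_new": new["tdp_w"] / new["transistors_b"],
    }


change = generational_change(SPECS["MI300X"], SPECS["MI250X"])
for name, value in change.items():
    print(f"{name}: {value:.2f}")
```

On these inputs the TDP rises by about 34% while the transistor count grows by about 2.6x, so rated watts per billion transistors roughly halve. Swapping in a different MI250X TDP figure changes the percentage accordingly.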


Francisco Pires is a freelance news writer for Tom's Hardware with a soft side for quantum computing.

So the power requirements have gone up by 34-50% in a single generation. Doesn't come as a surprise though.

At the same time, this power increase was to be expected considering the design of the chip itself and the performance it has on offer. The chip delivers an 8x performance boost in AI workloads while being 5 times more efficient.

A recent Gigabyte Server roadmap also showcased how CPUs, GPUs & APUs are getting close to the 1 kW power barrier. AMD certainly has the most power-hungry chip of all, but the red team has also invested in a host of chiplet and packaging technologies that allow them to significantly reduce power requirements.

Having chips stacked and packaged together also helps to reduce the relative Bits/Joule cost. So far, advanced packaging alone has provided a 50x reduction in communication power compared to when these chips were all stand-alone and placed far from each other across the board.

Efficiency, though, is not a linear progression like performance. Based on CPU & GPU efficiency trends, we are starting to see the progress flattening out, so while achieving "Zettascale" performance in the next 10 years or so will be achievable, it will come at a significant efficiency cost.

https://i.imgur.com/wcZijro.jpg

bit_user

I see at least 2 trends driving this:

The more expensive new process nodes get per mm^2, the more incentive there's going to be to run it at higher clocks.

Chiplet technology has unlocked a path towards transistor counts increasing faster than process node density increases, which naturally means it's going to take more power to operate them.

bit_user

Metal Messiah. said: Based on CPU & GPU efficiency trends, we are starting to see the progress flattening out so while achieving "Zettascale" performance in the next 10 years or so will be achievable, it will come at a significant efficiency cost.

HPC facilities are ultimately somewhat constrained in how much power they can consume and how much heat they can dissipate. Lisa Su was clear about the need to find technological innovations to improve efficiency, since the default trajectory would consume far too much power. IIRC, the difference is like orders of magnitude.

The Historical Fidelity

Admin said: AMD's latest product for the AI accelerator market, the MI300X, is a tour de force for the company's High Performance Computing (HPC) chops, but with great performance also come great power requirements.

Hi, just want to point out an error in the article. In the comparison table, the memory type and memory bandwidth for the 7900xtx are wrong. It uses GDDR6 not GDDR5 and bandwidth is close to 1TB/s not 384GB/s.

prtskg

Compared to the previous gen, the 250X, TDP has increased 50% while transistors have increased just north of 2.62 times. Let's see how performance increases.

Kamen Rider Blade

That 750 Watts is powering 8x GPU Chiplets shipped & 192 GiB of HBM3 on a single large Tile.

That's not half bad IMO.

The Historical Fidelity said: Hi just want to point out an error in the article. In the comparison table, the memory type and memory bandwidth for the 7900xtx are wrong. It uses GDDR6 not GDDR5 and bandwidth is close to 1TB/s not 384GB/s

+1 for this.

gg83

bit_user said: HPC facilities are ultimately somewhat constrained in how much power they can consume and how much heat they can dissipate. Lisa Su was clear about the need to find technological innovations to improve efficiency, since the default trajectory would consume far too much power. IIRC, the difference is like orders of magnitude.

I like the idea of turning the heat back to electricity to run the stuff like fans and LEDs. Who cares about power draw if none of it is wasted, right?

bit_user

gg83 said: I like the idea of turning the heat back to electricity to run the stuff like fans and led's. Who cares about power draw if none of it is wasted right?

It's pretty difficult to recover energy from waste heat, or it'd be a lot more common. I'd be surprised if they could even achieve 5% efficiency.

The only way that waste heat isn't actually wasted is if you use it to heat a swimming pool or an eel farm, where you'd have needed some form of heating, regardless.

https://www.tomshardware.com/news/japanese-data-center-starts-eel-farming-side-hustle

https://www.tomshardware.com/news/heata-server-hot-water-trial-uk