Raspberry Pi Robot Maps Its World with LiDAR

Autonomous Raspberry Pi robots navigate their environment without bumping into things. Using an ultrasonic sensor, such as the HC-SR04, to detect obstacles is a fairly common approach for Pi-based robots and cars, but this robot car project, created by maker and developer エス ラボ (S Lab), goes further by mapping out a room with the help of a LiDAR sensor.

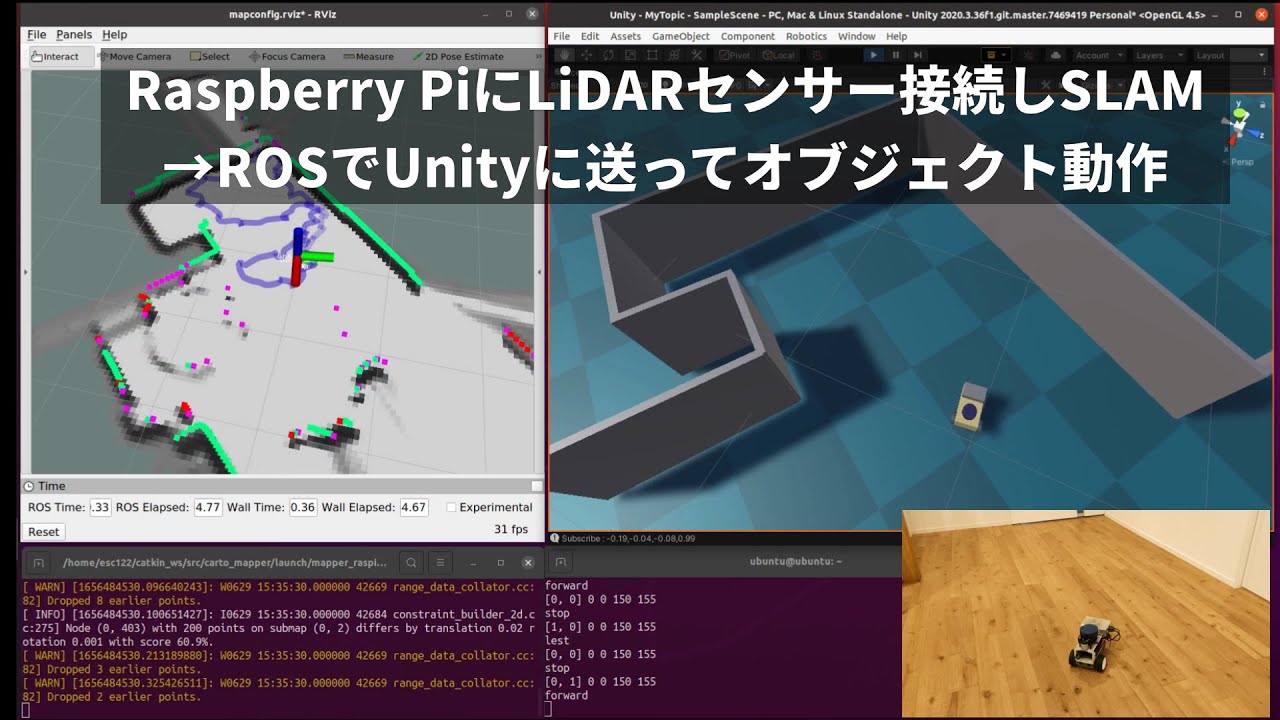

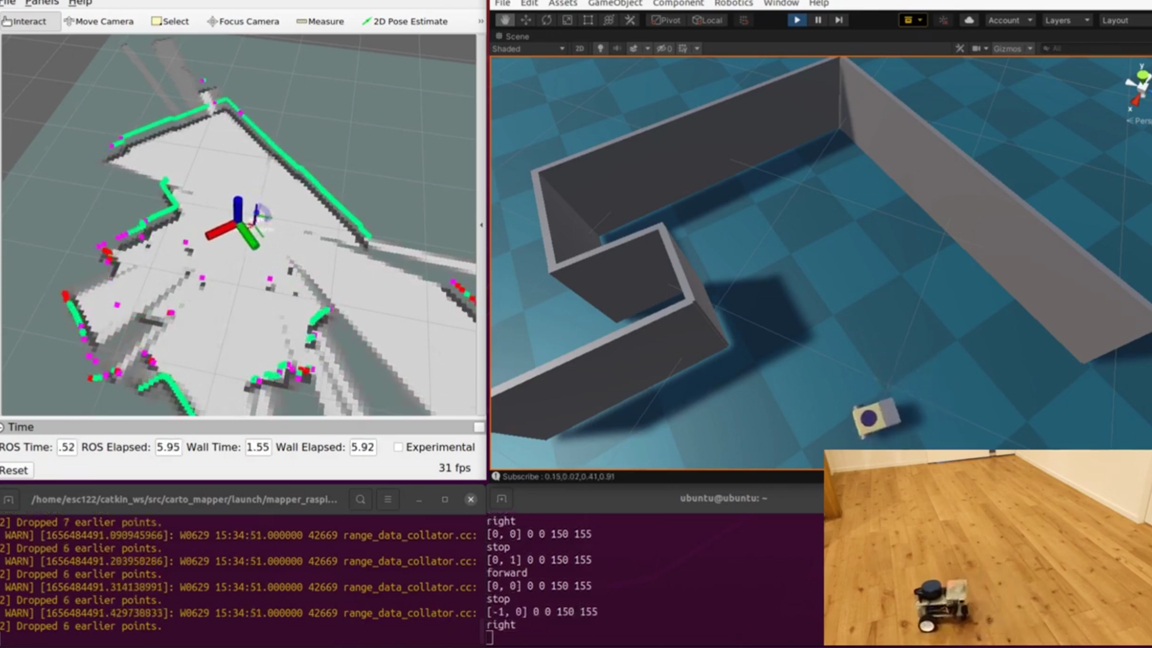

The car has a Raspberry Pi 4 4GB at its core. As it drives around, LiDAR sensor data is transmitted from the Pi to a nearby PC, which uses a SLAM-based (simultaneous localization and mapping) system to create a virtual, 3D replica of the room surrounding the Raspberry Pi. The program also provides a virtual representation of the car in Unity to estimate its position in the room.
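To give a rough idea of what the mapping step works with, here is a minimal Python sketch of turning one LiDAR sweep into points on a map. It is not S Lab's code. It assumes each sweep arrives as a list of distances measured at evenly spaced angles in a single plane, and that a pose estimate for the car is already available (in a real SLAM system, producing that estimate is the hard part). The function name, parameter names, and the `max_range` default are placeholders chosen for illustration.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment,
                   pose=(0.0, 0.0, 0.0), max_range=8.0):
    """Convert one LiDAR sweep (polar readings) into (x, y) map points.

    ranges          -- distances in meters, one per beam
    angle_min       -- angle of the first beam in radians
    angle_increment -- angular step between beams in radians
    pose            -- assumed car pose as (x, y, heading in radians)
    max_range       -- placeholder cutoff; readings beyond it are dropped
    """
    px, py, heading = pose
    points = []
    for i, r in enumerate(ranges):
        # Skip missing or invalid readings (zero, negative, NaN, or too far).
        if not (0.0 < r <= max_range):
            continue
        # Beam angle in the map frame: car heading plus the beam's own angle.
        theta = heading + angle_min + i * angle_increment
        points.append((px + r * math.cos(theta),
                       py + r * math.sin(theta)))
    return points


if __name__ == "__main__":
    # Three beams at 0, 90 and 180 degrees; the middle one has no return.
    sweep = [1.0, 0.0, 2.0]
    for x, y in scan_to_points(sweep, 0.0, math.pi / 2):
        print(f"{x:.2f}, {y:.2f}")
```

Accumulating these points across many sweeps, each placed with its own pose estimate, is what gradually fills in the outline of the room.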

This is one of many projects created by S Lab, who specializes in various microelectronics projects. They’ve created several Pi-based systems that synchronize with Unity to create complementary virtual assets. According to S Lab, they also have experience with Jetson Nano boards, M5Stack modules and programming in Python. You can find a history of projects on their official blog.

This project was built using a Raspberry Pi 4 4GB model and uses a YDLIDAR X2L LiDAR sensor to detect the 3D space around it. The Linux-based PC used for processing the data is in the same room and running Ubuntu 20.04.4. If you want to make something similar, a Raspberry Pi 3B+ may work, but you’ll benefit from the processing power of the Raspberry Pi 4, especially with 4GB of RAM.

S Lab was kind enough to make the project open source and has shared the code for interested parties on GitHub. S Lab explains that the rotation angle and position data are transmitted to Unity using ROS (Robot Operating System) along with ROS-TCP-Connector 0.7.0, which is also available on GitHub.
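One detail worth knowing when passing a pose from ROS to Unity is that the two use different axis conventions: ROS typically treats x as forward, y as left and z as up, while Unity treats x as right, y as up and z as forward. The Python sketch below illustrates that mapping for a position and a heading angle. It is only an illustration under those assumed conventions, not the code from S Lab's repository, and the function name is hypothetical. In practice the conversion is normally handled on the Unity side.

```python
import math


def ros_pose_to_unity(x, y, yaw_rad, z=0.0):
    """Map a ROS-style pose to Unity-style position and yaw.

    Assumes ROS axes are x forward, y left, z up, and Unity axes are
    x right, y up, z forward (hypothetical helper for illustration).
    """
    # Left in ROS becomes negative right in Unity; up and forward swap slots.
    unity_position = (-y, z, x)
    # ROS yaw is counterclockwise seen from above; Unity's rotation about
    # its up axis is clockwise, so the sign flips. Result is in degrees.
    unity_yaw_deg = (-math.degrees(yaw_rad)) % 360.0
    return unity_position, unity_yaw_deg


if __name__ == "__main__":
    position, yaw = ros_pose_to_unity(1.0, 2.0, math.pi / 2)
    print(position, yaw)
```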

If you want to recreate this Raspberry Pi project, check out the official demo video shared by S Lab on YouTube. Be sure to follow S Lab for more impressive projects and fun creations using the Raspberry Pi.


Ash Hill is a contributing writer for Tom's Hardware with a wealth of experience in hobby electronics, 3D printing and PCs. She manages the Pi projects of the month and much of our daily Raspberry Pi reporting while also finding the best coupons and deals on all tech.