Eight Computing Advancements At IBM Research

Introduction

Although the company hasn’t used the “think” marketing term in its television ads for years, IBM is definitely one of the smartest tech companies around. In many ways, IBM has transitioned from being a PC manufacturer to a think tank that creates ideas, and charges the analyst fees you would expect if you want to hear about those ideas. Within IBM Research, the goals are lofty: to create the next kind of storage technology, one that is much faster yet less harmful to the environment, or to invent ways to read data from physical objects, such as bridges or waterways, that have not traditionally been connected.

Each of these research projects is particularly interesting from a PC computing perspective because it will likely make its way into your home or workplace within the next ten years. In some cases, the research has already made an impact. Each project solves a puzzling computing problem and will help usher in an age of more ubiquitous computing.

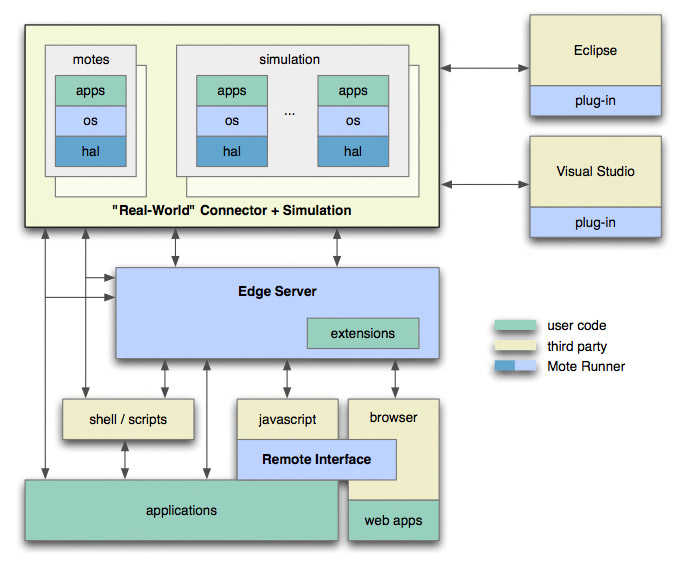

Mote Runner

Sensor networks are becoming increasingly popular. They consist of small wireless devices that feed data back to a computer system. For example, you might have a sensor on a bridge that reports back on the amount of traffic that has crossed in any given month. At IBM’s Zurich lab, researchers are developing run-time software that resides on the sensor itself.

Today, sensor networks tend to be heterogeneous and isolated from one another. Each new sensor uses a closed software framework, so there is no standard set of protocols that lets it interoperate with other sensor networks. Mote Runner is one answer, according to IBM. Because it is a run-time environment built around a virtual machine, applications for the “motes” (the endpoint sensors) are reusable, scalable, and hardware-agnostic, and the software routines are easier to program. And because they are self-contained programs, they are easier to deploy on a variety of sensor hardware.

“Mote Runner makes best use of the available resources—especially power—by requiring only an 8-bit processor, 8KB of RAM, and 128KB of flash,” says Dr. Thorsten Kramp, a research staff member at IBM Research in Zurich. “Some of the practical uses for the technology are in water management, glacier movements, forest fires, building and facility management, smart metering and energy efficiency, medicine and health care, sports medicine, and assisted living and patient monitoring.”
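To make the virtual-machine idea concrete, here is a toy sketch, not Mote Runner's actual instruction set or API. It assumes a hypothetical stack machine whose only hardware dependency is a pluggable `read_sensor` driver, so the same bytecode program runs unchanged on two different boards.

```python
from typing import Callable, List, Tuple

# Hypothetical instruction format: (opcode, argument). Not Mote Runner's real bytecode.
Program = List[Tuple[str, int]]


class TinyVM:
    """Toy stack machine; all hardware access goes through one driver function."""

    def __init__(self, read_sensor: Callable[[int], int]) -> None:
        self.read_sensor = read_sensor  # board-specific driver (assumed interface)

    def run(self, program: Program) -> List[int]:
        stack: List[int] = []
        reports: List[int] = []
        for op, arg in program:
            if op == "PUSH":            # push a constant
                stack.append(arg)
            elif op == "READ":          # read sensor channel `arg` via the driver
                stack.append(self.read_sensor(arg))
            elif op == "ADD":
                b, a = stack.pop(), stack.pop()
                stack.append(a + b)
            elif op == "GT":            # push 1 if a > b, else 0
                b, a = stack.pop(), stack.pop()
                stack.append(1 if a > b else 0)
            elif op == "REPORT":        # queue the top of the stack for the base station
                reports.append(stack.pop())
            else:
                raise ValueError(f"unknown opcode {op!r}")
        return reports


# One program: report whether channel 0 exceeds a threshold of 100.
alert_program: Program = [("READ", 0), ("PUSH", 100), ("GT", 0), ("REPORT", 0)]

board_a = TinyVM(read_sensor=lambda channel: 120)  # stand-in for one sensor board
board_b = TinyVM(read_sensor=lambda channel: 80)   # stand-in for a different board
print(board_a.run(alert_program))  # [1]
print(board_b.run(alert_program))  # [0]
```

Only the driver changes between boards; the program itself is portable, which is the property the paragraph above attributes to a VM-based runtime.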

Easy English Communication

Workgroup collaboration systems such as WebEx.com work well for discussing a topic and making decisions, as long as all of the participants speak the same language. At IBM’s China Research Laboratory, a new project called Easy English Communication (EEC) is underway that combines audio, video, and text in a Web conferencing session, with the text stream generated automatically by a speech transcription engine.

The text transcription is important because it is more accurate than real-time speech-to-speech translation. Speech-to-speech translation systems tend to be error-prone, not only in pronunciation but in the translation itself. For speech-to-text transcription, error rates are only about 10% when the speaker uses a near-field microphone, compared with more than 20% for standard speech recognition systems.

“EEC proposes an alternative approach [to speech recognition] using real-time speech transcription, called captions, to help non-native speakers achieve a better comprehension in computer-mediated communication,” says Yong Qin, the senior manager leading the speech team at IBM Research in China.
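Transcription error rates like the 10% and 20% figures above are commonly reported as word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn the transcript into the reference, divided by the number of reference words. The article does not say which metric IBM used, so treat this as an assumed illustration of how such a percentage is typically computed.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # d[i][j] = minimum word edits to turn ref[:i] into hyp[:j] (Levenshtein distance)
    d = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        d[i][0] = i                      # delete all i reference words
    for j in range(len(hyp) + 1):
        d[0][j] = j                      # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            d[i][j] = min(d[i - 1][j] + 1,         # deletion
                          d[i][j - 1] + 1,         # insertion
                          d[i - 1][j - 1] + cost)  # substitution or match
    return d[len(ref)][len(hyp)] / len(ref)


reference = "please send the quarterly report to the whole team today"
transcript = "please send the quarterly report to the hole team today"
print(word_error_rate(reference, transcript))  # 0.1, one wrong word out of ten
```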

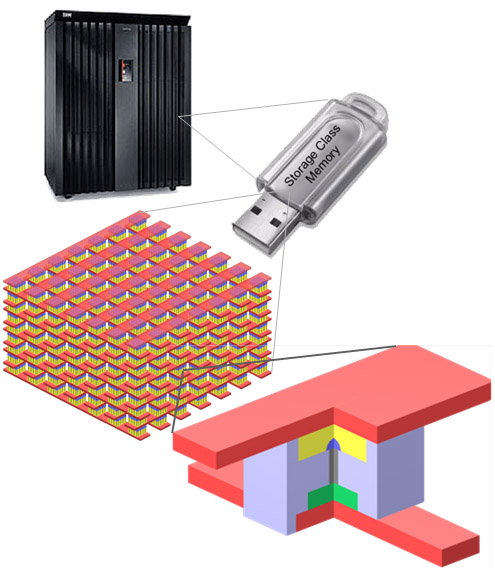

Storage-Class Memory

Two methods of storing data on computers are popular today, and both have their advantages. The NAND flash used in SSDs is both fast and compact. Because flash memory stores data in semiconductors, an SSD has more in common with the RAM on your motherboard than with a mechanical hard disk. However, SSDs are expensive and their capacities are still relatively low. Hard disk drives, which use a high-speed motor to spin the magnetic platters that hold the data, cost less but are also slower.

IBM’s Almaden Research Center is working on storage-class memory (SCM), which IBM describes as a hybrid of flash and hard disk technology: solid-state devices are arranged in an array and accessed much like a magnetic drive, rather than as the single flash chip you find in a USB flash drive. Each solid-state component would purportedly last longer and operate faster than magnetic media.

“A storage-class memory device would require a solid-state nonvolatile memory technology that could be manufactured at an extremely high effective areal density using some combination of sublithographic patterning techniques, multiple bits per cell, and multiple layers of devices,” says IBM researcher Geoffrey Burr. “Using SCM as a disk drive replacement, storage system products would be able to offer orders of magnitude better random and sequential I/O performance than comparable disk-based systems, yet SCM would require far less space and power in the data center. However, the success of SCM depends critically on its cost, and thus on the attainment of ultra-high densities--higher than current 2-bit Multi-Level Cell NAND flash--which is where our current research and development is focused.”

With storage-class memory, an array of solid-state devices is arranged much as magnetic platters are stacked in a hard drive (although many drives now use a single platter), but the array is accessed directly as semiconductor memory instead of by rotating a platter.
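Burr's point about density can be put as simple arithmetic: effective areal density scales with bits stored per cell times the number of stacked device layers, divided by the area each cell occupies (which sublithographic patterning shrinks). The sketch below assumes that idealized model and uses made-up cell sizes and layer counts; it compares designs against the 2-bit multi-level-cell NAND baseline Burr mentions.

```python
def levels_needed(bits_per_cell: int) -> int:
    """Distinguishable states one cell must hold to store `bits_per_cell` bits."""
    return 2 ** bits_per_cell


def relative_density(bits_per_cell: int, layers: int, cell_area: float,
                     baseline_bits: int = 2, baseline_area: float = 1.0) -> float:
    """Idealized areal density relative to a single-layer baseline cell.

    Assumes density = bits_per_cell * layers / cell_area; cell_area is in the
    same (arbitrary) units as baseline_area. Real devices lose some of this to
    overhead, so treat the result as an upper bound.
    """
    design = bits_per_cell * layers / cell_area
    baseline = baseline_bits / baseline_area
    return design / baseline


print(levels_needed(2))               # 4 levels for today's 2-bit MLC cells
print(relative_density(3, 4, 1.0))    # hypothetical 3-bit cells in 4 layers: 6.0x
print(relative_density(2, 1, 0.5))    # hypothetical half-size 2-bit cells: 2.0x
```

The levels calculation also hints at why more bits per cell is hard: each extra bit doubles the number of states the cell must reliably distinguish.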

IBM And CyberAgent

Blog traffic reporting software can tell you only part of the story of who's reading your page. Such apps provide a synopsis of which pages users have visited and which comments they have posted, but they do not show a history of user activity or of the text users have posted or read online. Together with Japan-based CyberAgent, IBM’s Tokyo Research Lab has developed a monitoring system for Japan's Ameba blog service (ameba.jp, which has about 4.5 million users) that provides a well-rounded analysis of user interests, showing each user’s blog posting frequency, viewing patterns (for example, which sections of a blog the user visited), and summaries of text inputs.

With the new analysis engine, Ameba can suggest more targeted blogs to users, taking into account their actual viewing preferences, text inputs, and visited links rather than relying on their text input alone. “We believe this is the world's first analysis platform which can conduct composite analysis of both text data and user activity all together,” says Akiko Murakami, a researcher at IBM Research Tokyo.

At the Ameba blog network, the new analysis reveals not just a user’s mouse clicks and usage patterns but also his or her interests and preferences, leading to a better suggestion system.
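The idea of "composite analysis" can be illustrated with a toy recommender that blends a text signal with an activity signal. This is not the IBM/CyberAgent engine, whose method the article does not describe; the bag-of-words cosine similarity, the per-topic view counts, the sample blog names, and the 50/50 weighting are all assumptions chosen for the sketch.

```python
from collections import Counter
from math import sqrt
from typing import Dict, List, Tuple


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def rank_blogs(user_text: str,
               visits_by_topic: Dict[str, int],
               blogs: Dict[str, Tuple[str, str]],
               text_weight: float = 0.5) -> List[str]:
    """Rank blogs by a weighted mix of text similarity and viewing activity.

    blogs maps a blog name to (topic, description); visits_by_topic counts the
    user's page views per topic. The weighting is an arbitrary assumption.
    """
    user_words = Counter(user_text.lower().split())
    total_visits = sum(visits_by_topic.values()) or 1  # avoid dividing by zero
    scores: Dict[str, float] = {}
    for name, (topic, description) in blogs.items():
        text_score = cosine(user_words, Counter(description.lower().split()))
        activity_score = visits_by_topic.get(topic, 0) / total_visits
        scores[name] = text_weight * text_score + (1 - text_weight) * activity_score
    return sorted(scores, key=lambda name: scores[name], reverse=True)


# Hypothetical sample data.
blogs = {
    "NoodleDiary": ("food", "ramen and noodle shops in tokyo"),
    "GadgetNews": ("tech", "new phones and laptops"),
    "TravelJapan": ("travel", "tokyo travel tips"),
}
print(rank_blogs("ramen tokyo noodle shops", {"food": 8, "tech": 2}, blogs))
# ['NoodleDiary', 'TravelJapan', 'GadgetNews']
```

Here the text signal lifts TravelJapan above GadgetNews even though the user has never viewed travel pages, which a click-only ranking would miss.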

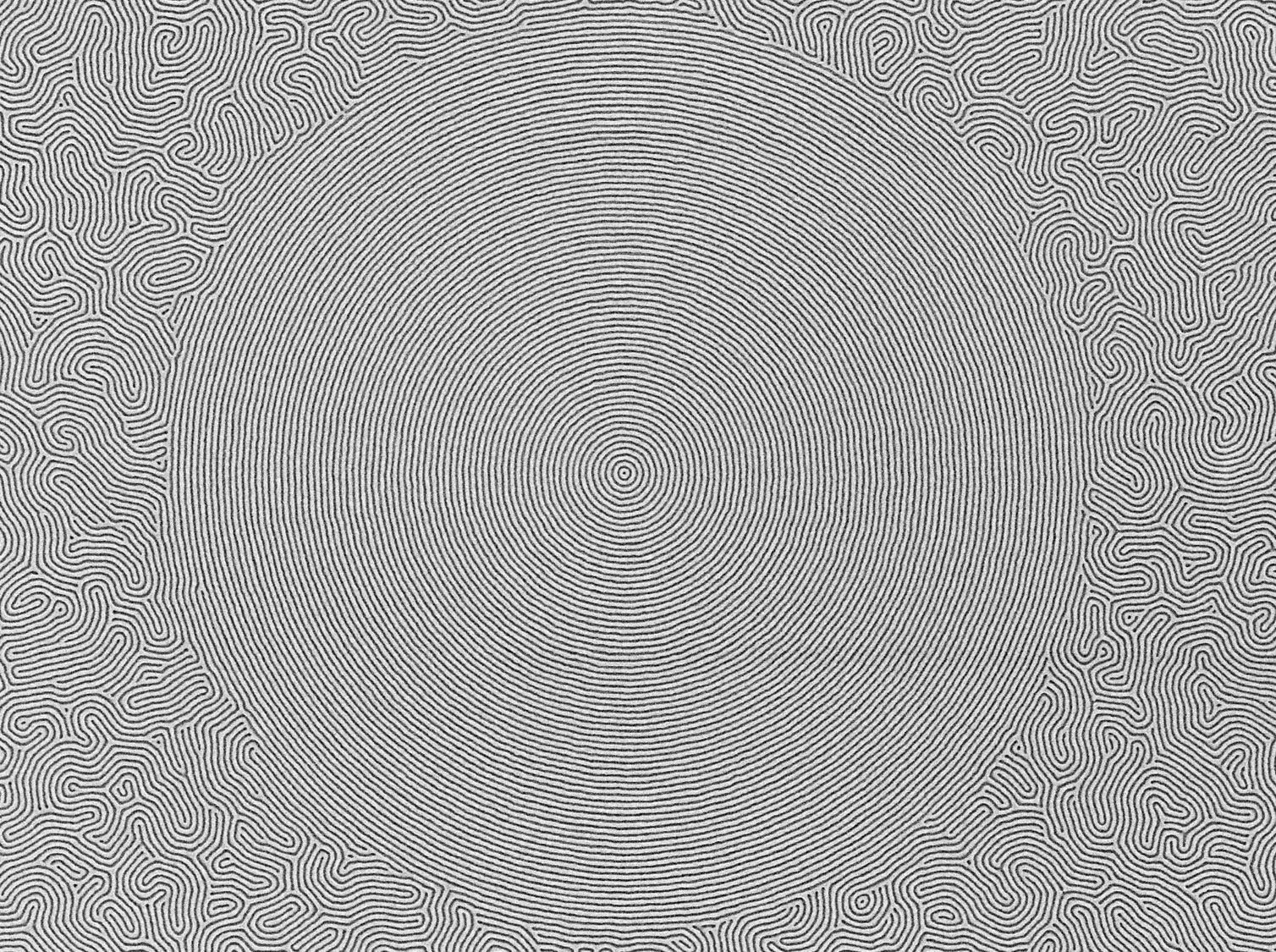

Directed Self-Assembly (DSA)

As anyone who studies computer science knows, the perceived limits of Moore’s Law, the observation that the number of transistors on a chip doubles roughly every two years (often quoted as every 18 months), have caused some consternation at companies like AMD and Intel. Transistors are now so small that hundreds of millions of them fit on one chip, and shrinking them further is becoming ever harder. Intel has introduced new manufacturing methods and new materials to keep reducing transistor size, but according to IBM there is a ceiling, and the industry is already bumping into it.
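The doubling rule compounds quickly, which is why small changes in the doubling period matter. A minimal sketch, assuming a clean exponential with no physical limits and a hypothetical starting count:

```python
def projected_transistors(initial: float, years: float,
                          doubling_months: float = 24) -> float:
    """Transistor count after `years` if it doubles every `doubling_months`.

    Idealized exponential: initial * 2 ** (elapsed_months / doubling_months).
    """
    return initial * 2 ** (years * 12 / doubling_months)


print(projected_transistors(1, 10))            # 32.0x growth in a decade at two years
print(projected_transistors(1, 10, 18))        # roughly 101.6x at the 18-month figure
print(projected_transistors(1e9, 6, 18))       # 1.6e10: four doublings of a 1-billion-transistor chip
```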

“Historically [companies have reduced the size of transistors] by technological advances that allow the process engineers to use smaller and smaller wavelengths in the essential step of photolithography, where a pattern of light is used in a multi-step process to form the circuit patterns,” says Bill Hinsberg, a researcher at IBM Research Almaden. “Initially, integrated circuits were patterned using violet light with a wavelength of about 440nm, and today deep-ultraviolet light from a laser source with a wavelength of 193nm is used in the photolithography step. Further reductions in wavelength grow increasingly difficult for technical reasons, so alternate methods for forming circuit patterns are under investigation.”

Parallel processing, where a PC uses multiple cores, is shaping up to be the near-term answer, largely because power and heat limits have capped how far clock frequencies can rise. IBM is also pursuing a way to keep shrinking features: a technique in which block copolymers “self-assemble” into patterns finer than the 193nm photolithography process can produce, potentially fitting more transistors on a processor core. With self-assembly, each polymer molecule contains two sections (called blocks) that tend to separate from each other into a pattern, yet do not completely separate.

“Under the right conditions, a thin film of such block copolymers can form regular array patterns of spheres, cylinders, or lines,” says Hinsberg. “The dimensions of these nanostructures depend on the size of the polymer molecules and features smaller than 10nm have been demonstrated. In our work at IBM Almaden, we are designing methods to control this pattern formation in a way useful for semiconductor fabrication by using larger scale templates to orient the patterns. This approach is termed directed self-assembly. Our work has shown that it is possible to do this in a way that produces multiple nanoscale lines from a larger guiding pattern, and that the intrinsic properties of block copolymers allow defects in the guide pattern to heal, improving pattern quality, an important and useful attribute.”

With directed self-assembly, the two blocks of each polymer molecule try to separate into a pattern, but because they are chemically bonded to each other, they cannot separate completely. The resulting nanostructures could be used to pattern transistors smaller than current photolithography allows, increasing the number that fit on a chip.
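Hinsberg describes forming "multiple nanoscale lines from a larger guiding pattern." A common way to reason about this is density multiplication: if the guide pattern's pitch is close to a whole-number multiple of the copolymer's natural period, that many lines form per guide period. The sketch below assumes that commensurability rule and a 5% tolerance; both the rule's strictness and the sample pitches are illustrative, not IBM's process parameters.

```python
def lines_per_guide(guide_pitch_nm: float, period_nm: float,
                    tolerance: float = 0.05) -> int:
    """Copolymer lines formed per guide-pattern period (density multiplication).

    Assumes the guide pitch must be within `tolerance` (relative) of an integer
    multiple of the copolymer's natural period; otherwise raise an error,
    standing in for a defective, frustrated pattern.
    """
    ratio = guide_pitch_nm / period_nm
    n = round(ratio)
    if n < 1 or abs(ratio - n) / n > tolerance:
        raise ValueError(
            f"guide pitch {guide_pitch_nm} nm is not commensurate "
            f"with period {period_nm} nm")
    return n


# Hypothetical numbers: a 100nm guide pattern with a 25nm-period copolymer.
n = lines_per_guide(100, 25)
print(n, "lines per guide period, final pitch", 100 / n, "nm")  # 4 lines, 25.0 nm
```

The appeal is that lithography only has to print the coarse 100nm guide; the polymer chemistry supplies the fourfold finer features.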

Watson

Like IBM’s earlier Deep Blue chess matches, the Watson project has an obvious consumer-facing element: it seeks to make computing seem more human. Watson is a question-answering (QA) system, a supercomputing project in which the computer not only solves complex problems but can solve them as quickly and accurately as a human. To test the system, IBM has arranged for the supercomputer to eventually compete on the quiz show Jeopardy! The real test is not just whether the computer provides an accurate response in a timely manner, but whether the response sounds as though a human said it, phrased logically (or, as the show dictates, as a question).

“The scientists believe that the computing system will be able to understand complex questions and answer with enough precision and speed to compete on Jeopardy!, which is a game demanding knowledge and quick recall, covering a broad range of topics,” says Sara Delekta Galligan, an IBM Research spokesperson.

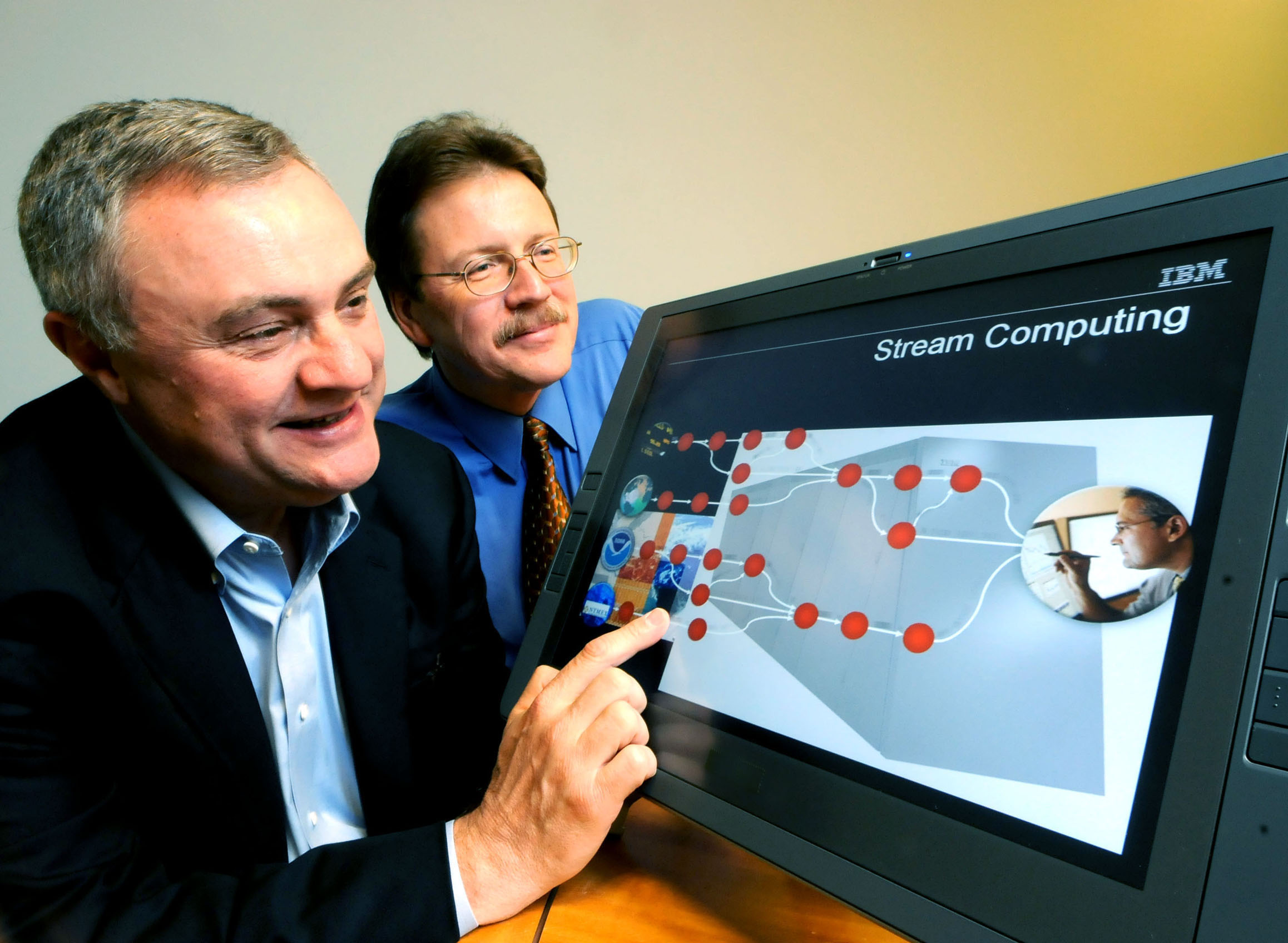

Stream Computing

Stream computing is one of the most interesting projects at IBM Research because it could affect all facets of computing. In traditional information analysis, you feed a data set into an application, which performs the analysis and then returns the results. To perform additional analysis, you change the data and rerun the job. With stream computing, data feeds into the application continuously, and the analysis proceeds in real time. From a consumer standpoint, this would be akin to feeding photographs from a camera into Photoshop as you take them, with each image adjusted for color and improved as you snap the next one. You could even keep photographing the same scene at different times of day and with different settings, and Photoshop would produce the best possible image using automated filters and effects.
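The contrast between batch and streaming analysis shows up even in something as simple as an average. The sketch below is a generic illustration, not IBM's stream computing software: the batch version needs the whole data set before answering, while the streaming version updates its answer incrementally as each value arrives and never stores the history.

```python
from typing import Iterable, Iterator, List


def batch_mean(data: List[float]) -> float:
    """Traditional analysis: collect everything first, then compute once."""
    return sum(data) / len(data)


def streaming_mean(source: Iterable[float]) -> Iterator[float]:
    """Stream analysis: emit an updated estimate after every new value."""
    count = 0
    mean = 0.0
    for x in source:
        count += 1
        mean += (x - mean) / count  # incremental update; no stored history needed
        yield mean


print(batch_mean([2, 4, 6]))            # 4.0, only after all data is in
print(list(streaming_mean([2, 4, 6])))  # [2.0, 3.0, 4.0], refined as data arrives
```

Because `streaming_mean` is a generator, it works equally well on an endless sensor feed, where a batch job would never get to start.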

The problem that stream computing addresses is that, in the time it takes to run a query using a given data set, especially if you have scheduled time on a supercomputer for the test, the original data may have changed. This means scientists are dealing with out-of-date information.

“What's unique about IBM's stream computing software is that it utilizes a new streaming architecture and breakthrough mathematical algorithms to create a forward-looking analysis of data from any source, narrowing down what people are looking for and continuously refining the answer as additional data is made available,” says Nagui Halim, the chief scientist for stream computing at IBM. “The stream computing software has the ability to assemble applications on the fly based on the inquiry it is trying to solve, using a new software architecture that pulls in the components it needs, when they are needed, that are best at handling a specific task,” adds Halim. “Millions of pieces of data from multiple sources are streaming into systems that are not capable of processing the data fast enough. We constructed a computing system built for perpetual analytics that analyzes information as it happens, which has very powerful applications in financial services, government, astronomy, traffic control, healthcare and many other scientific and business areas. This is computing at the speed of life.”
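Halim's description of assembling applications "on the fly" from components suited to each task can be loosely illustrated by composing small stream operators into a pipeline at run time. This is a hypothetical sketch using Python generators; the operator names (`moving_average`, `keep_above`, `assemble`) are invented for illustration and are not part of IBM's software.

```python
from collections import deque
from typing import Callable, Deque, Iterable, Iterator, List

# An operator turns one stream of readings into another.
Operator = Callable[[Iterable[float]], Iterator[float]]


def moving_average(window: int) -> Operator:
    """Smooth the stream with a sliding-window average."""
    def op(stream: Iterable[float]) -> Iterator[float]:
        buf: Deque[float] = deque(maxlen=window)  # oldest value drops off automatically
        for x in stream:
            buf.append(x)
            yield sum(buf) / len(buf)
    return op


def keep_above(limit: float) -> Operator:
    """Pass through only values above `limit`."""
    def op(stream: Iterable[float]) -> Iterator[float]:
        for x in stream:
            if x > limit:
                yield x
    return op


def assemble(operators: List[Operator]) -> Operator:
    """Chain operators lazily, so results flow out as each input arrives."""
    def pipeline(stream: Iterable[float]) -> Iterator[float]:
        for op in operators:
            stream = op(stream)
        yield from stream
    return pipeline


# A query-specific pipeline built at run time: smooth, then flag high readings.
alerts = assemble([moving_average(2), keep_above(15)])
print(list(alerts(iter([10, 20, 30, 10]))))  # [25.0, 20.0]
```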

Personal Information Environments

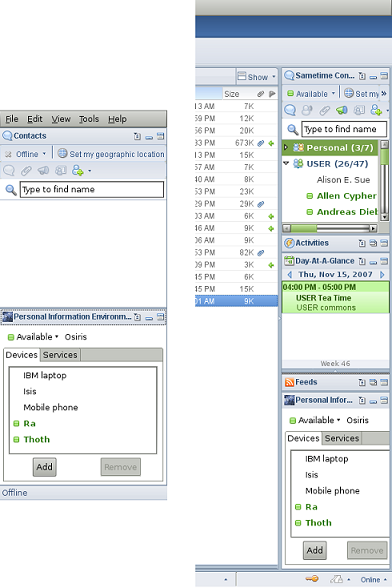

Addressing the problem that most of us have with carrying multiple gadgets, using multiple computers, and signing in to a variety of Web services, IBM’s Personal Information Environment (PIE) project is a new platform that attempts to unify several disparate messaging systems and computers. There is a central PIE server and a PIE client, but the client is not tied to one particular device: each device you use (a laptop, a smartphone, a desktop computer) runs as a client of the PIE server. The real advantage is that all communication, be it an e-mail, a status update, or an instant message, flows through the PIE server and appears in one familiar interface. There is also a file syncing service that gives you access to the same files regardless of which device you use, and a search function that covers all personal data on the server.

Another example of how PIE works: if you create a task list of things you need to do in your job, you can access the same task list from any device. If you add an item to the list, and then access it from a different device, you will see the updated list. It’s essentially a communication system in the cloud that is not tied to any particular Web site, operating system, or software program.
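The shared task list can be sketched as a central server holding an ordered log of changes, with each device replaying whatever it has not yet seen. This is a hypothetical, single-process illustration of the sync pattern described above; PIE's real protocol (which the article says builds on instant messaging protocols) and its class names are not shown here, and `SyncServer` and `DeviceClient` are invented for the example.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Change = Tuple[str, str]  # (action, task text), e.g. ("add", "call the bank")


@dataclass
class SyncServer:
    """Hypothetical central store: an append-only log of task-list changes."""
    log: List[Change] = field(default_factory=list)

    def push(self, action: str, item: str) -> None:
        self.log.append((action, item))

    def changes_since(self, version: int) -> List[Change]:
        return self.log[version:]


@dataclass
class DeviceClient:
    """One device's local copy of the task list."""
    server: SyncServer
    tasks: List[str] = field(default_factory=list)
    version: int = 0  # how many log entries this device has applied

    def add_task(self, item: str) -> None:
        self.server.push("add", item)
        self.sync()

    def complete_task(self, item: str) -> None:
        self.server.push("remove", item)
        self.sync()

    def sync(self) -> None:
        """Replay any changes made from other devices since the last sync."""
        changes = self.server.changes_since(self.version)
        for action, item in changes:
            if action == "add":
                self.tasks.append(item)
            elif action == "remove" and item in self.tasks:
                self.tasks.remove(item)
        self.version += len(changes)


server = SyncServer()
laptop, phone = DeviceClient(server), DeviceClient(server)
laptop.add_task("file expense report")
phone.sync()
print(phone.tasks)   # ['file expense report']
phone.complete_task("file expense report")
laptop.sync()
print(laptop.tasks)  # []
```

A real system would also need conflict handling and offline edits; the log-replay design keeps the sketch short while showing why every device converges on the same list.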

“We're recognizing that users employ persistent collections of devices and we are supporting that practice with software, leveraging the instant messaging protocols, that makes it easy for developers to build services that communicate information, events, and commands across devices,” says Jeff Pierce, the manager of Mobile Computing Research at IBM Research Almaden.

The concept behind PIE is to unify all communication on a central server and make it searchable, accessible from any device, and synced automatically so that data is always up to date.
