Nvidia Kepler GK104: More Leaks, Rumors on Specifications

Nvidia's Kepler goes big with a rumored 1536 CUDA cores, three times Fermi's 512.

As reported on February 7th, we got a first glimpse of the rumored specifications for Nvidia's Kepler-based graphics cards. The leak drew both "wow.. can't wait" and "wow... those are so fake why even post" from opposite sides of the comment fence. Now more pieces of information on the upcoming Kepler series are starting to surface. Based on information coming out of the German site 3dcenter.org, we may have a clearer picture of the true specifications for Kepler GK104.

Aside from the switch to the 28 nm process, one of the major changes in the Kepler architecture is room for more CUDA cores. This is achieved by dropping the separate shader frequency (the "hotclock"), so the shaders run at the GPU frequency. Each Streaming Multiprocessor will contain 96 CUDA cores, up from the 32 to 48 that Fermi had. This new layout will have the GK104 sporting up to 1536 CUDA cores, a big jump from the 512 in the GF110-based GTX 580. The number of texture units has doubled from 64 to 128 on GK104. The GK104 will have only 32 ROPs versus 48 in GF110, but this is not expected to hurt performance relative to Fermi.
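Core count alone overstates the gain, because Fermi's shaders ran at a separate, faster clock. The rough sketch below compares theoretical peak single-precision throughput, assuming the usual convention of 2 FLOPs per core per clock (one fused multiply-add). The GTX 580's 1544 MHz shader clock comes from Nvidia's published specifications, not from this article, and the GK104 figures are the rumored ones reported here; `peak_gflops` is a name made up for this illustration.

```python
def peak_gflops(cuda_cores: int, shader_clock_mhz: float) -> float:
    """Theoretical peak FP32 rate: cores x 2 FLOPs (one FMA) x clock in MHz."""
    return cuda_cores * 2 * shader_clock_mhz / 1000.0


# GTX 580 (GF110): 512 cores at a 1544 MHz shader "hotclock".
# Assumption: clock taken from Nvidia's public spec sheet, not this article.
gtx580_gflops = peak_gflops(512, 1544)

# GK104 (rumored): 1536 cores, no hotclock, shaders at the 950 MHz chip clock.
gk104_gflops = peak_gflops(1536, 950)

core_ratio = 1536 / 512
throughput_ratio = gk104_gflops / gtx580_gflops

print(f"GTX 580: {gtx580_gflops:.0f} GFLOP/s")
print(f"GK104:   {gk104_gflops:.0f} GFLOP/s")
print(f"Cores: {core_ratio:.1f}x, theoretical throughput: {throughput_ratio:.2f}x")
```

On these assumptions, three times the cores yields roughly 1.85 times the theoretical throughput. That is a paper figure only and says nothing definite about real-world game performance.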

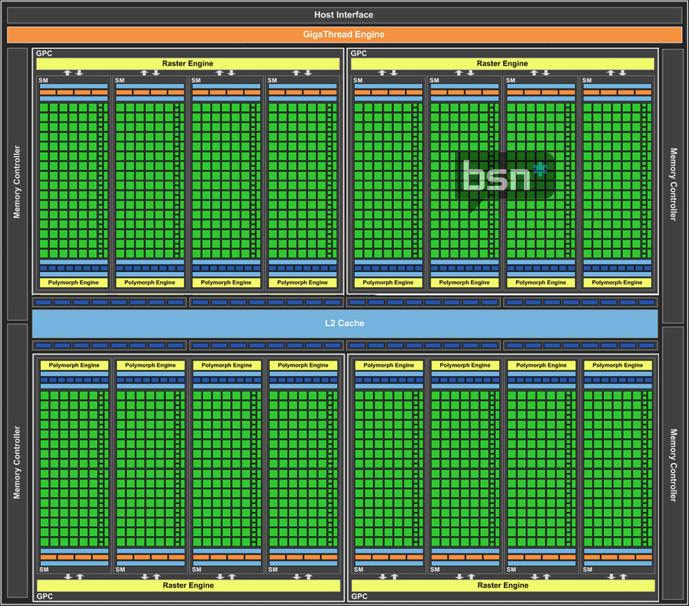

The above GK104 architectural overview comes from Bright Side of News.

Nvidia Kepler GK104:

- 28 nm production at TSMC

- Die size 340mm²

- 4 Graphics Processing Clusters (GPC)

- 4 Streaming Multiprocessors (SM) per GPC = 16 SM

- 96 Stream Processors (SP) per SM = 1536 CUDA cores

- 8 Texture Units (TMU) per SM = 128 TMUs

- 32 Raster Operation Units (ROPs)

- Chip clock (top model): 950 MHz

- 1250 MHz actual (5.00 GHz effective) memory, 160 GB/s memory bandwidth

- 256-bit DDR memory interface (up to GDDR5)

- 2048 MB (2 GB) of memory as standard

- 2.9 TFLOP/s single-precision floating point compute power

- 486 GFLOP/s double-precision floating point compute power

- Elimination of Hotclocks
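The derived figures in the list follow from the base numbers by simple arithmetic. The sketch below recomputes them as a sanity check. The variable names are illustrative, the factor of 2 FLOPs per core per clock is the usual fused multiply-add convention, and the 1/6 double-precision rate is only inferred from the ratio of the two listed FLOP figures, not confirmed by the source.

```python
# Base figures as listed in the rumored GK104 specification.
GPCS = 4
SMS_PER_GPC = 4
CORES_PER_SM = 96
TMUS_PER_SM = 8
CHIP_CLOCK_MHZ = 950
MEM_EFFECTIVE_GBPS_PER_PIN = 5.0   # "5.00 GHz effective"
BUS_WIDTH_BITS = 256

# Unit counts.
sms = GPCS * SMS_PER_GPC
cuda_cores = sms * CORES_PER_SM
tmus = sms * TMUS_PER_SM

# Peak single precision: cores x 2 FLOPs (one FMA) x clock.
fp32_tflops = cuda_cores * 2 * CHIP_CLOCK_MHZ / 1e6

# Assumption: double precision runs at 1/6 of the FP32 rate,
# inferred from the listed 486 GFLOP/s vs 2.9 TFLOP/s.
fp64_gflops = fp32_tflops * 1000 / 6

# Memory bandwidth: bus width in bytes x effective data rate per pin.
bandwidth_gb_s = BUS_WIDTH_BITS / 8 * MEM_EFFECTIVE_GBPS_PER_PIN

print(f"SMs: {sms}, CUDA cores: {cuda_cores}, TMUs: {tmus}")
print(f"FP32: {fp32_tflops:.2f} TFLOP/s, FP64: {fp64_gflops:.0f} GFLOP/s")
print(f"Memory bandwidth: {bandwidth_gb_s:.0f} GB/s")
```

The results line up with the list: 16 SMs, 1536 cores, 128 TMUs, about 2.92 TFLOP/s single precision and 160 GB/s of memory bandwidth. The leaked numbers are therefore at least internally consistent, though that does not make them accurate.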

The GK104 is expected to outperform the GTX 580 in the $350 to $400 price range. It is also expected to beat AMD's HD 7950 at a similar price point and to challenge the HD 7970 for the performance crown. In price-to-performance terms, the GK104 looks to be similar to the current-generation GTX 560 Ti in its category.

Please keep in mind, of course, that these specifications come from 3dcenter's supposedly reliable source. We won't know for sure until Nvidia shows its hand. Stay tuned!


billybobser: Given the lies (ahem, rumours) they were shilling out, the Nvidia was expected to beat the 7970 for half the price!

I see that's been downgraded to 'challenge', which is wholly disappointing, seeing as AMD are milking people with their new pricing strategy, it doesn't look like nVidia are going to convert anyone.

sarcasm (replying to billybobser): Who cares, I'm loving the competition. I love how they keep trying to outdo each other over and over because the consumers end up winning. It's like Intel vs AMD except on a different front. When it comes to CPU recommendations, it's always "Intel Intel Intel." But with GPUs, it's a really huge toss-up between the two, which gives consumers more options while still getting their money's worth.

RazorBurn: It doesn't matter if the source is reliable or not, because what's important is that all of them tell of a huge improvement in the GPU.

welshmousepk: I just hope there are plenty of chips to go around. When quantities are low, backwater countries like mine (New Zealand) get totally ripped off with pricing. I recently upgraded my GPUs, and while I had really wanted a 7970, they were going for 1200 dollars here. I ended up just getting a GTX 580 for a little under 700. That's almost half the price. Where's the logic in that?!

nohode: I just want prices to drop, given AMD's recent 79xx pricing scheme, which is bullsh1t.

EDVINASM: $%ing boring already. Give us a real-world GPU benchmark suite to compare with existing GPUs. Not even a test product on the bench. We need one and need one now, not in April. Unless gamers can hibernate, the wait would simply ruin NVidia in the short term. Am an NVidia user, but boy am I starting to shop around for AMD.

viridiancrystal: Looks to me like each CUDA core is going to be much less powerful than on Fermi.

No, it seems the cores have similar performance to Fermi cores; only the frequency is different.

mosu: Simple math: 512 CUDA cores = 250 watts.

1536 CUDA cores = 750 watts; assuming that 28nm tech gives them a 40% reduction on power usage, will consume at least 500 watts... not feasible.

SchizoFrog: nVidia always said that their cards would launch in March/April, and as such AMD rushed a couple of their cards out to be first for this current generation. Unless your PC just blew up, anyone that can't wait an extra few weeks to see exactly where they stand is either an idiot or has far too much money on their hands.

It would not surprise me if nVidia has a solid launch with a good mid-range card and a high-end card that just about tops what AMD has to offer. I bet Kepler will be able to do far more than initial cards will suggest though, and we'll see many, many iterations of virtually the same cards with different clock speeds to cover various price points, much like the current 560/560Ti/560Ti(448)...