Google Unveils 72-Qubit Quantum Computer With Low Error Rates

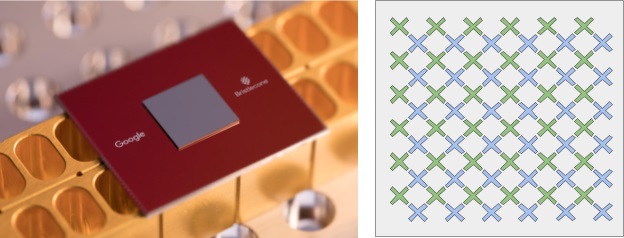

Google announced a 72-qubit universal quantum computer that promises the same low error rates the company saw in its first 9-qubit quantum computer. Google believes that this quantum computer, called Bristlecone, will be able to bring us to an age of quantum supremacy.

Ready For Quantum Supremacy

Google has teased before that it would build a 49-qubit quantum computer to achieve “quantum supremacy.” This achievement would show that quantum computers can solve some well-defined computer science problems faster than the fastest supercomputers in the world can.

In a recent announcement, Google said:

> If a quantum processor can be operated with low enough error, it would be able to outperform a classical supercomputer on a well-defined computer science problem, an achievement known as quantum supremacy. These random circuits must be large in both number of qubits as well as computational length (depth). Although no one has achieved this goal yet, we calculate quantum supremacy can be comfortably demonstrated with 49 qubits, a circuit depth exceeding 40, and a two-qubit error below 0.5%. We believe the experimental demonstration of a quantum processor outperforming a supercomputer would be a watershed moment for our field, and remains one of our key objectives.

Not long after Google started talking about its 49-qubit quantum computer, IBM showed that for some specific quantum applications, 56 qubits or more may be needed to prove quantum supremacy. It seems Google wanted to remove all doubt, so now it’s experimenting with a 72-qubit quantum computer.

Don’t let the numbers fool you, though. Right now, the most powerful supercomputers can simulate only 46 qubits, and every additional qubit that needs to be simulated typically doubles the memory requirements (although some system-wide efficiency can be gained with new innovations).

Therefore, to simulate a 72-qubit quantum computer, we’d need roughly 67 million times more RAM (2^(72-46) = 2^26). We probably won’t be able to use that much RAM in a supercomputer anytime soon, so if Bristlecone can run any algorithm faster than our most powerful supercomputers, the quantum supremacy era will have arrived.
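The doubling argument can be checked with a few lines of arithmetic. The sketch below assumes a full state-vector simulation that stores one complex amplitude per basis state at 16 bytes each (double-precision complex). That storage model is an assumption for illustration, not something Google specifies, and the function names are hypothetical.

```python
# Assumption: a full state-vector simulation stores 2**n complex amplitudes,
# each taking 16 bytes (two 64-bit floats). Real simulators may use other
# precisions or tricks that reduce this footprint.
BYTES_PER_AMPLITUDE = 16


def state_vector_bytes(num_qubits: int) -> int:
    """Memory needed to hold every amplitude of an n-qubit state."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


def memory_ratio(target_qubits: int, baseline_qubits: int) -> int:
    """How many times more memory the target needs than the baseline."""
    return 2 ** (target_qubits - baseline_qubits)


if __name__ == "__main__":
    # 2**50 bytes is one pebibyte (PiB); 2**70 bytes is one zebibyte (ZiB).
    print(f"46 qubits: {state_vector_bytes(46) / 2**50:.0f} PiB")
    print(f"72 qubits: {state_vector_bytes(72) / 2**70:.0f} ZiB")
    print(f"ratio: {memory_ratio(72, 46):,}x")
```

Under this model the ratio depends only on the difference in qubit count, which is why the bytes-per-amplitude assumption does not affect the "millions of times more RAM" conclusion.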

High Number Of Qubits Is Not Enough

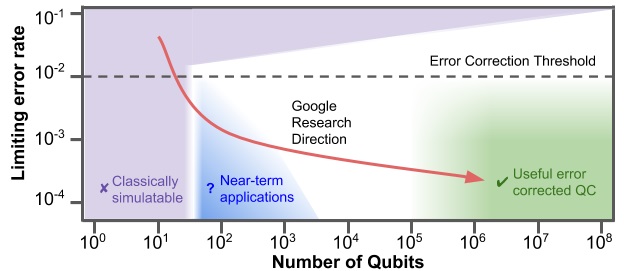

A high number of qubits is not the only thing that’s needed to achieve quantum supremacy. You also need qubits with low error rates so they don’t mess up the calculations. The usefulness of a quantum computer depends on both its number of qubits and its error rate.

According to Google, a quantum computer needs an error rate below 1%, coupled with close to 100 qubits. Google seems to have achieved this so far with 72-qubit Bristlecone and its 1% error rate for readout, 0.1% for single-qubit gates, and 0.6% for two-qubit gates.
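A rough back-of-the-envelope model shows why error rates matter as much as qubit count. The sketch below assumes every operation fails independently, so the chance of an error-free run is the product of the per-operation success probabilities. The default error rates are the ones quoted above; the gate counts in the usage loop are made-up illustrative numbers, not figures from Google, and `error_free_probability` is a hypothetical helper.

```python
def error_free_probability(
    single_qubit_gates: int,
    two_qubit_gates: int,
    readouts: int,
    single_qubit_error: float = 0.001,  # 0.1% per single-qubit gate
    two_qubit_error: float = 0.006,     # 0.6% per two-qubit gate
    readout_error: float = 0.01,        # 1% per qubit readout
) -> float:
    """Probability that no operation fails, assuming independent errors."""
    return (
        (1 - single_qubit_error) ** single_qubit_gates
        * (1 - two_qubit_error) ** two_qubit_gates
        * (1 - readout_error) ** readouts
    )


if __name__ == "__main__":
    # Hypothetical circuit sizes, chosen only to show the trend.
    for gates in (10, 100, 1000):
        p = error_free_probability(
            single_qubit_gates=gates,
            two_qubit_gates=gates,
            readouts=72,
        )
        print(f"{gates} gates of each kind: {p:.4f}")
```

The probability shrinks geometrically with the number of operations, which is why larger and deeper circuits demand lower per-gate error rates.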

Quantum computers will begin to become highly useful in solving real-world problems when we can achieve error rates of 0.1-1% coupled with hundreds of thousands to millions of qubits.

According to Google, an ideal quantum computer would have at least hundreds of millions of qubits and an error rate lower than 0.01%. That may take several decades to achieve, even if we assume a “Moore’s Law” of some kind for quantum computers (which so far seems to exist, seeing the progress of both Google and IBM in the past few years, as well as D-Wave).

That said, we may start seeing some "useful" applications of quantum computers well before that. For instance, breaking most existing cryptography may be possible when the quantum computers have only a few thousand qubits. If the current rate of progress for quantum computers holds, we may be able to reach that in about a decade.

Google is “cautiously optimistic” that the Bristlecone quantum computer will not only achieve quantum supremacy, but could also be used as a testbed for researching qubit scalability and error rates, as well as applications such as simulation, optimization, and machine learning.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.

Chettone: So, investing in quantum computer research could destroy any cryptocurrency dreams in the long term? In 10 years, Bitcoin will be as easy to break as a 64-bit zip file is now.

nings: Not just bitcoins, but bank access, certificates, and everything that protects our data.

And without a quantum processor you can't encrypt all your data with a quantum key, because it takes too much time to encrypt and decrypt the message. The future looks pretty bad for security.

alextheblue: Quantum AI lab? It's always a good idea to run Skynet on systems with a high error rate!

Also, call me when they have quantum speedup on a range of tasks as broad as a GPU can handle. That's probably going to take a crapload of qubits. If it can only accelerate very specific problems, it's more in line with a custom ASIC.

derekullo:

> alextheblue said: Quantum AI lab? It's always a good idea to run Skynet on systems with a high error rate!
>
> Also, call me when they have quantum speedup on a range of tasks as broad as a GPU can handle. That's probably going to take a crapload of qubits. If it can only accelerate very specific problems, it's more in line with a custom ASIC.

A.I. life will find a way!!!!!

pauly-chops: Apologies for sounding ignorant but these "real world problems", what are they referring to? What calculations are too far out of reach, or take too long to compute, for the supercomputers we have today? What are these super complex problems and what will happen when answers are found?

turkey3_scratch:

> nings said: Not just bitcoins, but bank access, certificates, and everything that protects our data.
>
> And without a quantum processor you can't encrypt all your data with a quantum key, because it takes too much time to encrypt and decrypt the message. The future looks pretty bad for security.

If you are implying something along the lines of password cracking via trillions of attempts per second, something like this is avoidable through time delay implementations.

therealduckofdeath: It's not really trillions per second, more like trillions simultaneously. The error accuracy in picking the correct one is what I assume is the likelihood of getting it right-right. If it will ever be "error free", digital encryption won't exist any more. Not that it matters. SkyNet will be our glorious overlord long before we'd have to worry about that reality. :D

TJ Hooker:

> turkey3_scratch said:
>
> > nings said: Not just bitcoins, but bank access, certificates, and everything that protects our data.
> >
> > And without a quantum processor you can't encrypt all your data with a quantum key, because it takes too much time to encrypt and decrypt the message. The future looks pretty bad for security.
>
> If you are implying something along the lines of password cracking via trillions of attempts per second, something like this is avoidable through time delay implementations.

It's not about brute-force guessing a password really quickly, it's about breaking the encryption itself.

For example, AFAIK a lot of public key cryptography is based around the difficulty of integer factorization for traditional computers. Public key cryptography forms the basis for most encrypted electronic communication. Quantum computers have the potential to be much faster at factorization than traditional computers, and thus can break said encryption.

Or to quote Wikipedia: "The problem with currently popular algorithms is that their security relies on one of three hard mathematical problems: the integer factorization problem, the discrete logarithm problem or the elliptic-curve discrete logarithm problem. All of these problems can be easily solved on a sufficiently powerful quantum computer running Shor's algorithm." https://en.wikipedia.org/wiki/Post-quantum_cryptography

brandonjclark: The biggest hurdle AFTER the cooling problem is obviously memory. Quantum computers require insane amounts of memory to work. Yes, that memory will catch up, SOMEDAY. But it's not coming soon enough for a mass market of these things to be even remotely cost-effective for regular consumers.

Unfortunately, I believe AI requires a QC-core to be extremely effective, so we might have to wait a while before we can see real-world benefits.

dezeyay: @Chettone: Quantum Resistant Ledger is the first cryptocurrency that will be quantum secure. They use XMSS, which is mathematically provable to be quantum secure. Existing blockchains cannot be 100% quantum secure after forking an update, because all existing wallets need to be unlinked from their old non-quantum-secure private key manually by their owners. Which a % of people will not do, because --> humans. But there is also a % of people who are locked out of their wallets. So these wallets can't be accessed ever again, and so a % of the circulating supply of coins will always be vulnerable to quantum attacks.