Raspberry Pi Powers Mind-Controlled 'ScreenDress'

The Raspberry Pi is no stranger to industries outside the realm of tech, and today’s project is a great example of just such a case: Anouk Wipprecht’s new mind-controlled, 3D-printed ScreenDress. The dress was designed from scratch and relies on our favorite SBC to drive some cool AI-focused components.

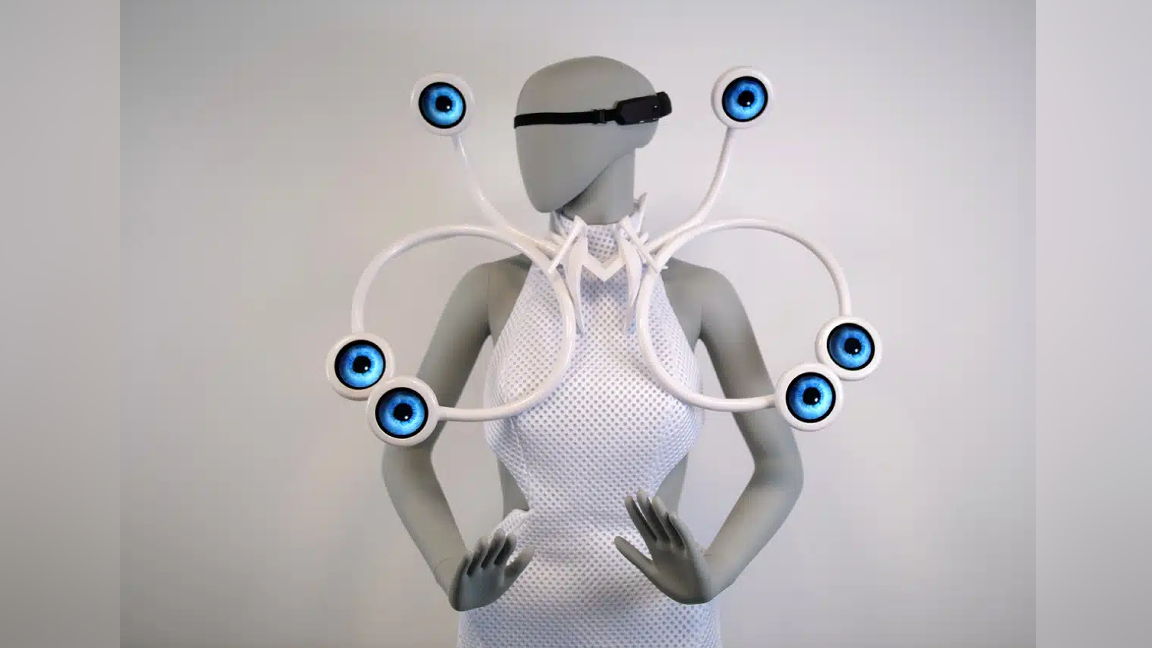

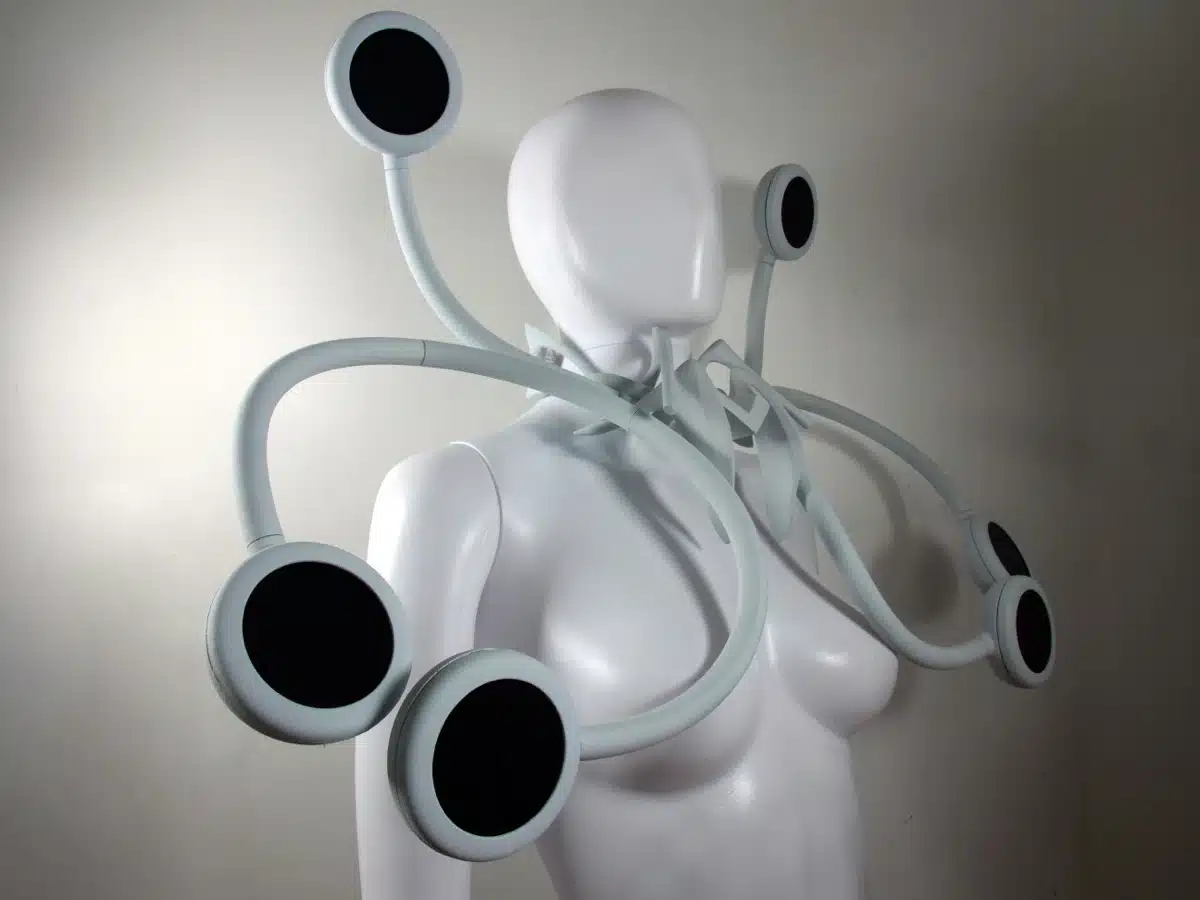

The dress features a variety of screens that change depending on real-time monitoring of brain waves. Wipprecht dubs the creation “ScreenDress”. The idea behind its design was to merge technology and fashion in a way that enables the wearer to directly influence the dress—in this case, their brain waves impact the round LCD panels.

The wearer puts on an EEG (electroencephalogram) sensor that must be trained for each person so it can distinguish baseline brain waves from stimulated or focused ones. When the wearer’s brain activity reaches a certain intensity, the six round LCDs respond. Each displays an eyeball whose pupil widens in response to the wearer’s focus.
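The article doesn't detail how the dress turns EEG readings into pupil size, but the general idea of calibrating per wearer and then widening the pupil past a threshold can be sketched roughly as follows. This is only an illustration under stated assumptions: the function names, the 0.3 threshold, and the pixel sizes are all hypothetical and are not taken from Wipprecht's software or the headset's API.

```python
from statistics import mean


def calibrate(rest_samples, focus_samples):
    """Return (baseline, ceiling) from a short per-wearer training session.

    Hypothetical: each sample is a single number summarizing EEG intensity.
    """
    return mean(rest_samples), mean(focus_samples)


def focus_level(sample, baseline, ceiling):
    """Scale a reading to 0.0 (resting) .. 1.0 (fully focused), clamped."""
    if ceiling <= baseline:
        raise ValueError("focused readings must exceed resting readings")
    level = (sample - baseline) / (ceiling - baseline)
    return max(0.0, min(1.0, level))


def pupil_radius(level, threshold=0.3, min_px=20, max_px=80):
    """Keep the pupil small below the threshold, then widen it linearly.

    The threshold and pixel sizes are assumed values for illustration.
    """
    if level < threshold:
        return min_px
    span = (level - threshold) / (1.0 - threshold)
    return round(min_px + span * (max_px - min_px))


# Example: calibrate once, then convert a live reading into a pupil size.
baseline, ceiling = calibrate([1.0, 3.0], [10.0, 12.0])
radius = pupil_radius(focus_level(6.5, baseline, ceiling))
```

In a setup like the one described, each display's controller would redraw its eyeball whenever the computed radius changes.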

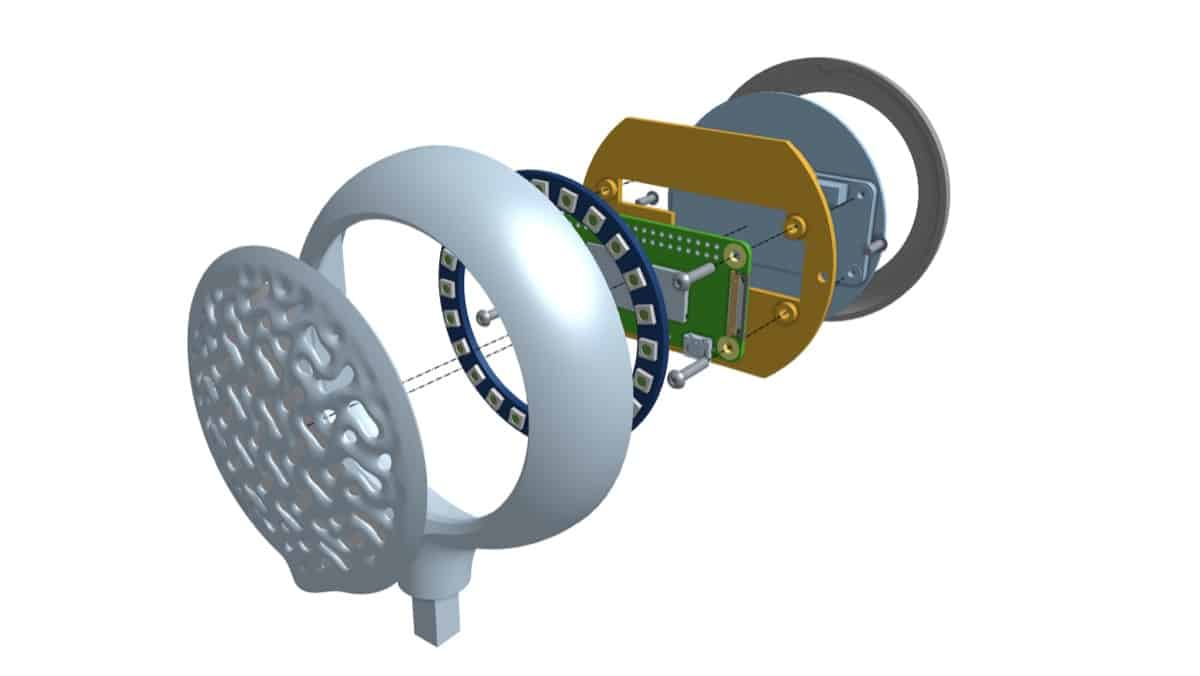

Each eyeball is supported by a Raspberry Pi Zero, which connects to the LCD screen and updates it accordingly. The EEG sensor is a 4-channel BCI headset known as the Unicorn Headband, and it was 3D-printed just for this project. All of the 3D-printed components were printed using an HP Jet Fusion 5420W printer.

Wipprecht designed the 3D-printed components in Onshape, a free, browser-based CAD application that makes it possible to create 3D assets from scratch. She also explains that it takes only two minutes to train the machine learning system for each new wearer.

According to Voxel Matters, Wipprecht showed the ScreenDress at the Ars Electronica Festival in Linz, Austria, and plans to share it at other events. If you’ve been looking for a Raspberry Pi project to inspire your inner seamster or seamstress, this is surely it.


Ash Hill is a contributing writer for Tom's Hardware with a wealth of experience in hobby electronics, 3D printing and PCs. She manages the Pi projects of the month and much of our daily Raspberry Pi reporting while also finding the best coupons and deals on all tech.


bit_user: Make a dress out of E-Ink paper, or some other kind of flexible display technology. That would be mesmerizing, especially if you animated it to shift the texture in the opposite speed & direction as you're moving. Wow, there are so many neat things you could do with a garment like that.