Nvidia CEO says Samsung HBM3e not yet ready for AI accelerator certification — Jensen Huang suggests more engineering work is required

Samsung neither confirms nor denies problems with its HBM chips, but Nvidia says more engineering is needed.

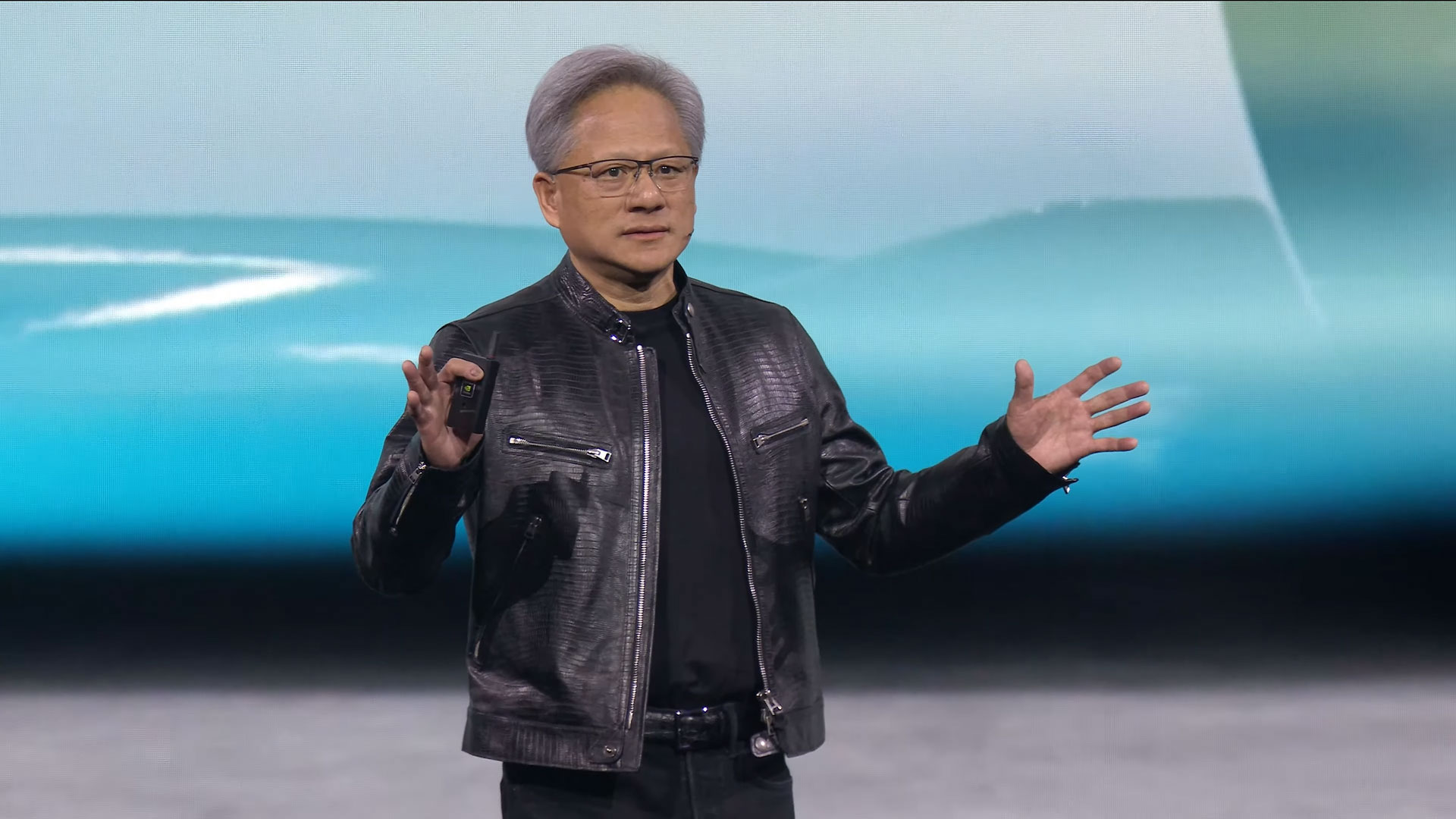

Nvidia CEO Jensen Huang says Samsung’s advanced High Bandwidth Memory (HBM) chips still aren’t ready for official certification. Nvidia’s sign-off is the last step before Samsung can begin supplying HBM3 and HBM3e components, which are essential to training artificial intelligence (AI) models on Nvidia’s platforms.

SK hynix is currently the primary supplier of HBM3 and HBM3e memory to Nvidia. These chips are important for the fast and efficient training of AI models, including those behind ChatGPT. Nvidia is examining HBM chips produced by Samsung and Micron, but it hasn’t yet endorsed their use. More engineering work is needed, Huang told reporters. However, it isn’t clear which engineers have the most work to do: those at Samsung, those at Nvidia, or a team involving both.

Recent reports suggested that Samsung’s latest HBM modules struggle with excessive heat and power consumption. Huang pointed out that the modules haven’t failed any qualification tests, but said the HBM product isn’t quite ready for deployment. “We just have to do the engineering. It’s just not done,” Huang told reporters at a Tuesday briefing at Computex 2024.

Samsung, on the other hand, denied the accuracy of reports raising concerns over heat and power. According to Samsung, the testing of its most advanced HBM3e memory modules is progressing smoothly. It says its latest HBM products work fine with a wide range of processors, but it doesn’t specifically deny problems with Nvidia processors.

Samsung is still the world’s largest producer of memory chips overall, even if it lags in HBM production capabilities. Samsung says it has begun mass production of its eight-layer HBM3e memory and will soon begin mass production of 12-layer modules. It expects to at least triple its HBM supply in 2024 compared with last year.

Asked directly about the alleged overheating and power consumption issues, Nvidia’s Huang also dismissed those reports. “There is no story there,” he said.

South Korea-based SK hynix leads the pack in delivering HBM3 and HBM3e chips. The company’s production capacity for the chips is fully booked through next year, and SK hynix plans to spend $14.6 billion to build a new production complex to meet demand.

Samsung’s investors have grown concerned that the electronics maker has yet to catch up with its smaller rival SK hynix. This may be one of the key factors that led to Samsung recently replacing the head of its semiconductor division.

Jeff Butts has been covering tech news for more than a decade, and his IT experience predates the internet. Yes, he remembers when 9600 baud was “fast.” He especially enjoys covering DIY and Maker topics, along with anything on the bleeding edge of technology.