Ashes Of The Singularity Beta: Async Compute, Multi-Adapter & Power

DirectX 12 has been available since Windows 10 launched, but there aren't any finished games for it yet, so we're using the Ashes of the Singularity beta to examine DX12 performance.

Bottlenecks & Render Times

Bottleneck Origins

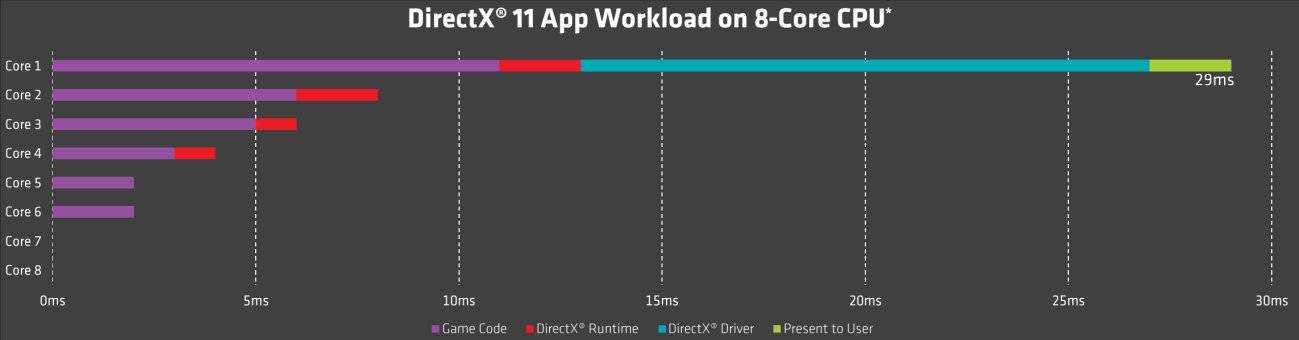

Before we get to the actual data analysis, let’s take another look at the fixed sequence of commands under DirectX 11 and its predecessors. The graph shows that the load is far from evenly distributed across the CPU cores, and that it takes a long time to reach the end result, which the log calls Present Time. It’s also interesting to see how much time the driver and CPU consume.

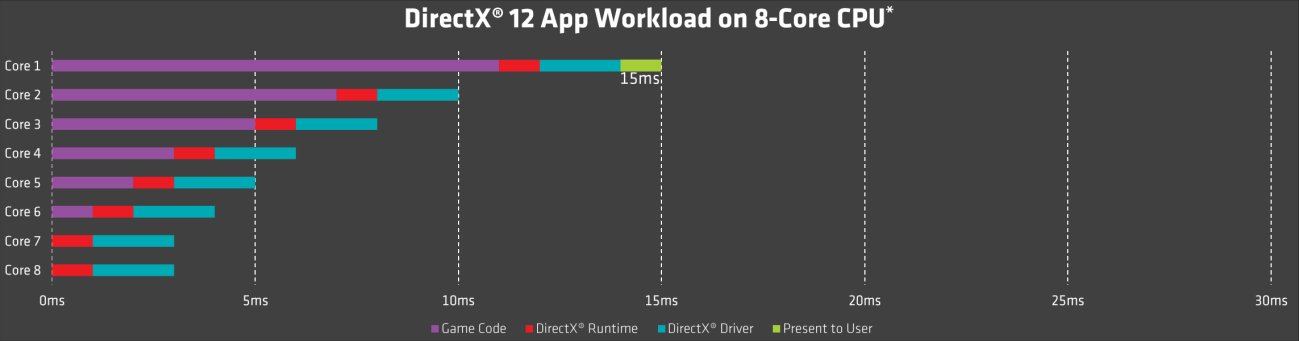

The picture looks very different under DirectX 12. The load is distributed more evenly across the CPU cores, so more of the tasks are completed in parallel. The time spent by the driver is now spread across all of the threads. The process doesn’t take less total processor time, but the time to reach Present Time is cut down significantly because parts of the process are executed in parallel.
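To make the difference between total processor time and time to Present Time concrete, here is a minimal sketch. It assumes an idealized frame made of independent CPU tasks and a simple greedy scheduler; the task durations and the `time_to_present` helper are hypothetical and are not taken from our logs or from either API.

```python
import heapq

def time_to_present(task_ms, cores):
    """Wall-clock time until all tasks finish, using greedy
    longest-task-first assignment to the least-loaded core.
    Assumes the tasks are independent (an idealization)."""
    loads = [0.0] * cores
    heapq.heapify(loads)
    for t in sorted(task_ms, reverse=True):
        lightest = heapq.heappop(loads)
        heapq.heappush(loads, lightest + t)
    return max(loads)

# Hypothetical per-frame CPU tasks in milliseconds (not measured values).
tasks = [4.0, 3.0, 3.0, 2.0, 2.0, 1.0, 1.0]

total_cpu_ms = sum(tasks)                       # processor time is the same either way
serial_ms = time_to_present(tasks, cores=1)     # everything on one thread
parallel_ms = time_to_present(tasks, cores=4)   # work spread over four cores

print(f"total CPU time: {total_cpu_ms:.1f} ms")   # total CPU time: 16.0 ms
print(f"one thread:     {serial_ms:.1f} ms")      # one thread:     16.0 ms
print(f"four cores:     {parallel_ms:.1f} ms")    # four cores:     4.0 ms
```

In this toy case the CPU does 16 ms of work per frame in both scenarios, but the frame is ready four times sooner when the work is spread out. Real frames have dependencies between tasks, so the actual gain is smaller.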

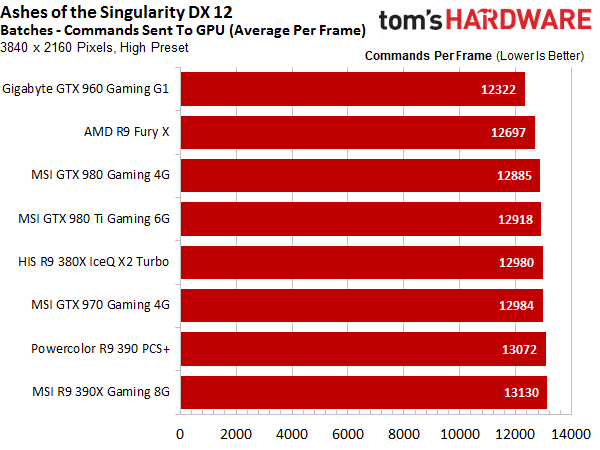

Batches (GPU Commands)

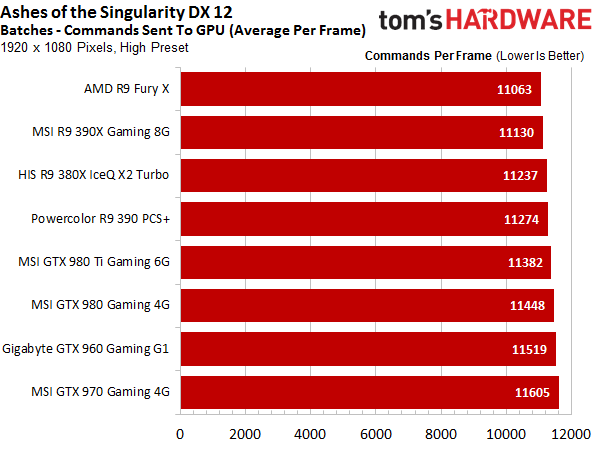

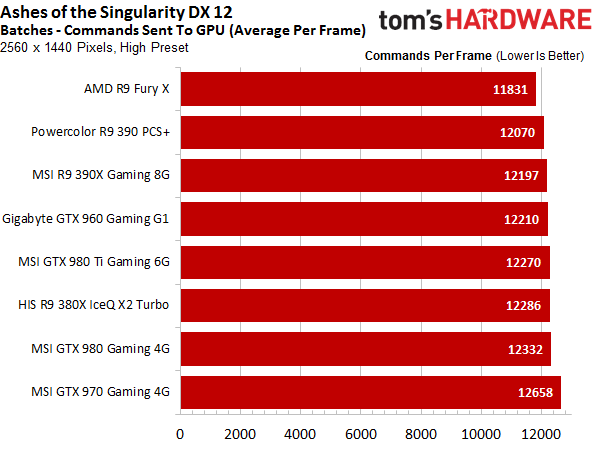

We start out by comparing the number of GPU commands (batches) per rendered frame. It turns out that this ratio doesn’t differ much between CPUs or even between manufacturers.

CPU Time

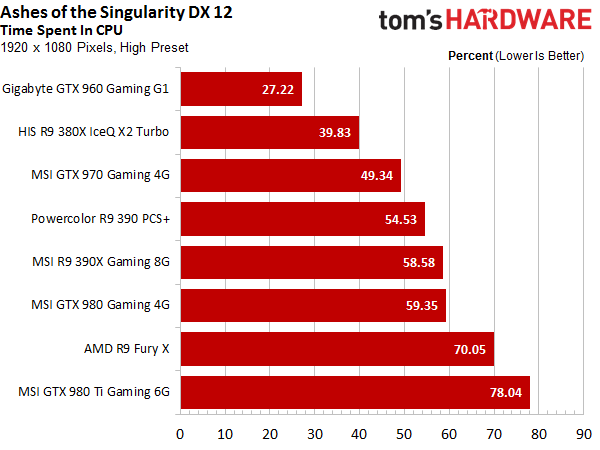

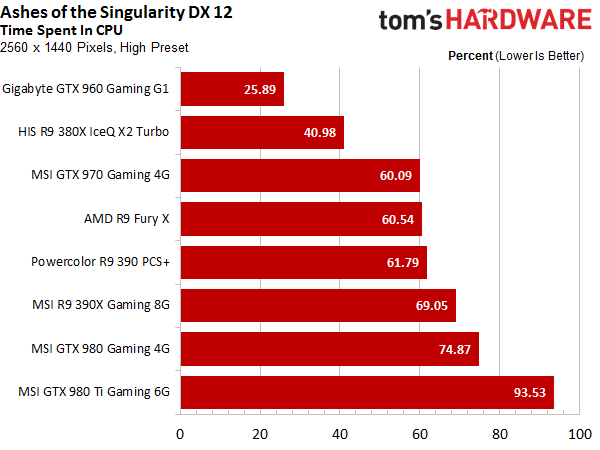

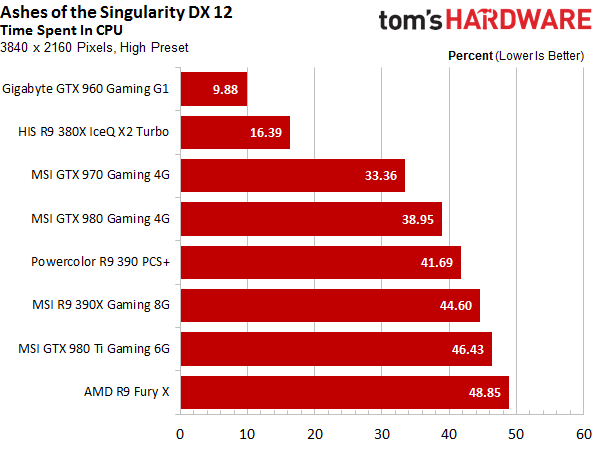

The analysis above is just a starting point. Next, we need to examine how the CPU’s load is distributed by analyzing the percentage of time that the CPU is in use. Even with good parallelization, this measure still mirrors gaming performance. Fast graphics cards produce more frames in the same period of time than slower ones, which means that the CPU has to complete more tasks in that period as well.
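The relationship between frame rate and CPU load can be sketched with a little arithmetic. This is a simplified model, assuming a fixed amount of CPU work per frame that divides evenly across cores; the `cpu_utilization` function and all numbers are hypothetical illustrations, not measurements from this test.

```python
def cpu_utilization(fps, cpu_ms_per_frame, cores):
    """Fraction of available CPU time that is busy, assuming a constant
    CPU cost per frame spread evenly over all cores (an idealization)."""
    busy_ms_per_second = fps * cpu_ms_per_frame
    available_ms_per_second = 1000.0 * cores
    return min(1.0, busy_ms_per_second / available_ms_per_second)

# Hypothetical values: same game and CPU, two graphics cards of different speed.
slow_gpu = cpu_utilization(fps=40, cpu_ms_per_frame=20.0, cores=4)
fast_gpu = cpu_utilization(fps=80, cpu_ms_per_frame=20.0, cores=4)

print(f"slower card: {slow_gpu:.0%} CPU in use")  # slower card: 20% CPU in use
print(f"faster card: {fast_gpu:.0%} CPU in use")  # faster card: 40% CPU in use
```

Doubling the frame rate doubles the CPU's workload in this model, which is why the CPU time charts track the frame rate charts so closely.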

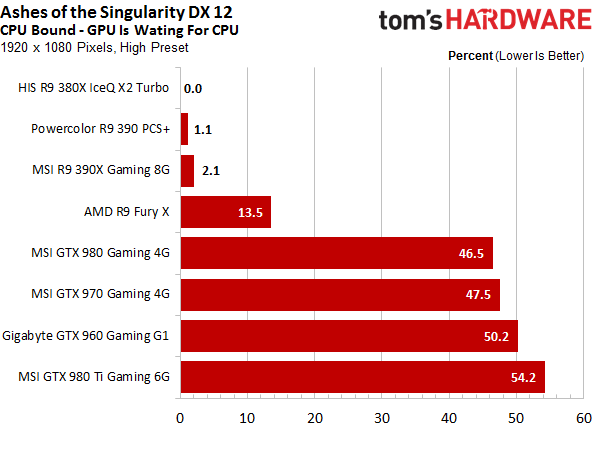

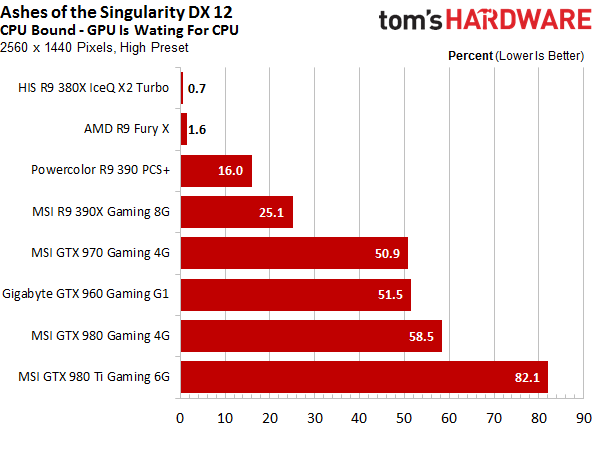

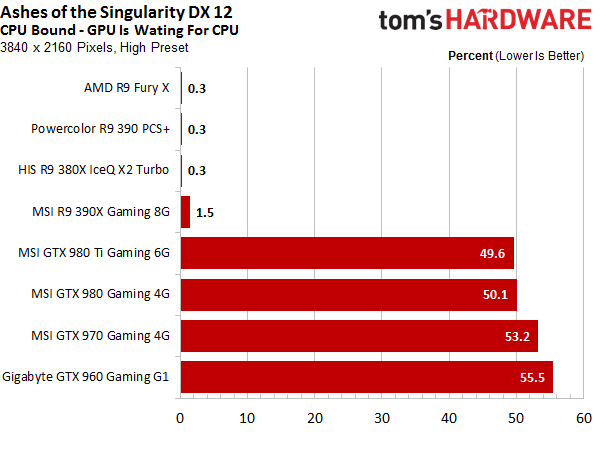

CPU Bottleneck

So, at which point does the CPU become a bottleneck? Either too few cores are actually working on the tasks to get them done on time due to a lack of parallelization, or there is just not enough computing power available even with all cores working in parallel. The latter basically means that the CPU isn’t fast enough to keep up with the GPU, even when working at maximum capacity.

There are other potential CPU-related bottlenecks as well. For instance, if a lot of data is worked on in parallel, and then needs to be shuffled back and forth between other subsystems, some other interface could slow you down.
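The two main CPU bottleneck cases can be told apart with a simple check. The sketch below assumes that per-frame CPU work divides perfectly across the threads in use and ignores the interface bottlenecks just mentioned; `classify_frame` and its parameters are hypothetical names for illustration only.

```python
def classify_frame(cpu_work_ms, gpu_ms, cores, threads_used):
    """Classify a frame as GPU-bound or as one of two CPU-bound cases.
    Assumes CPU work splits evenly over the threads in use (idealized)."""
    usable = min(cores, threads_used)
    cpu_ms_actual = cpu_work_ms / usable   # with the parallelism actually achieved
    cpu_ms_ideal = cpu_work_ms / cores     # if every core were kept busy
    if cpu_ms_actual <= gpu_ms:
        return "gpu-bound"
    if cpu_ms_ideal <= gpu_ms:
        return "cpu-bound (too little parallelization)"
    return "cpu-bound (not enough compute)"

# Hypothetical frames: CPU work and GPU time in milliseconds.
print(classify_frame(24.0, 10.0, cores=4, threads_used=4))  # gpu-bound
print(classify_frame(24.0, 10.0, cores=4, threads_used=1))  # cpu-bound (too little parallelization)
print(classify_frame(60.0, 10.0, cores=4, threads_used=4))  # cpu-bound (not enough compute)
```

The second case is the one DirectX 12 can help with, since better parallelization moves it toward the first. The third case needs a faster CPU no matter how the work is distributed.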

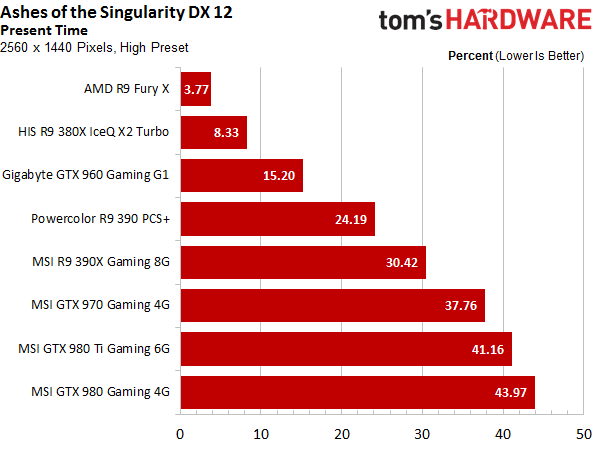

The more the GPU is kept busy, the easier the CPU’s job gets. This effect is easy to see when comparing different resolutions. AMD’s Radeon R9 Fury X bucks the trend in a good way, though. It’s a fast graphics card, but doesn’t torture the CPU as much as some of the other cards in its segment. Clearly, parallelization improved markedly between AMD’s Hawaii and Fiji architectures.

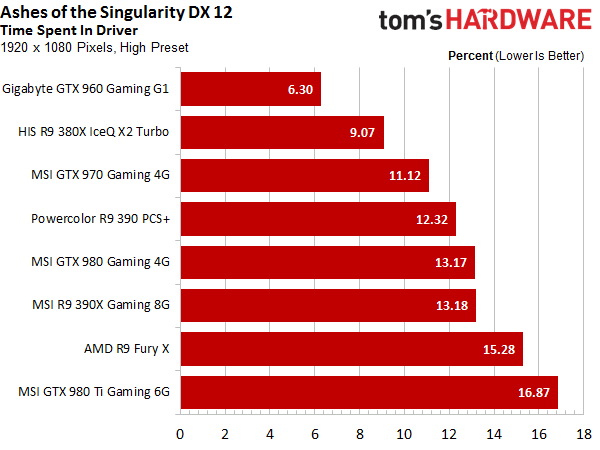

Driver Time

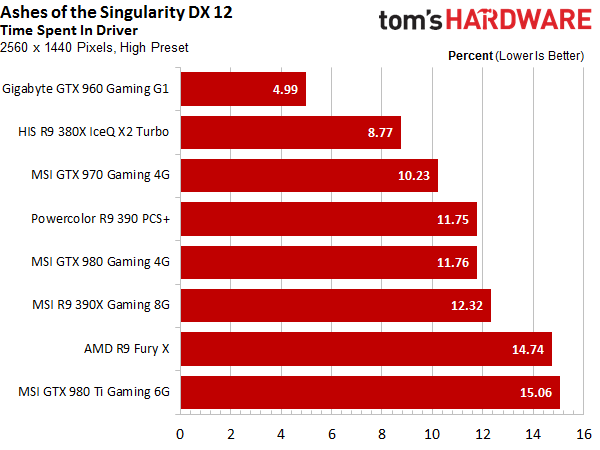

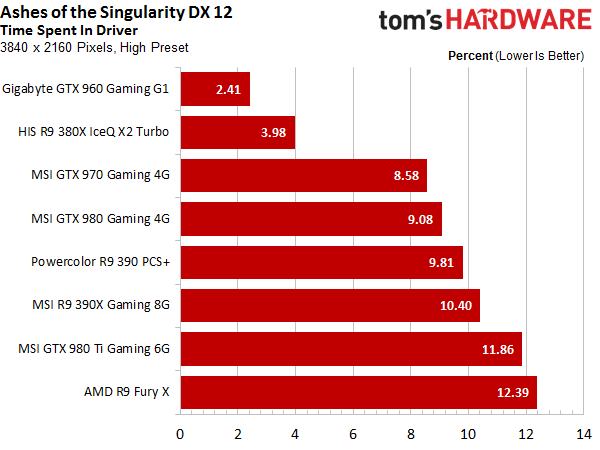

Again, a lot of a frame’s overall render time is consumed by the driver. AMD loses a lot more time this way than Nvidia. This phenomenon could just be blamed on driver overhead, but then again, running many asynchronous tasks does create some additional work.

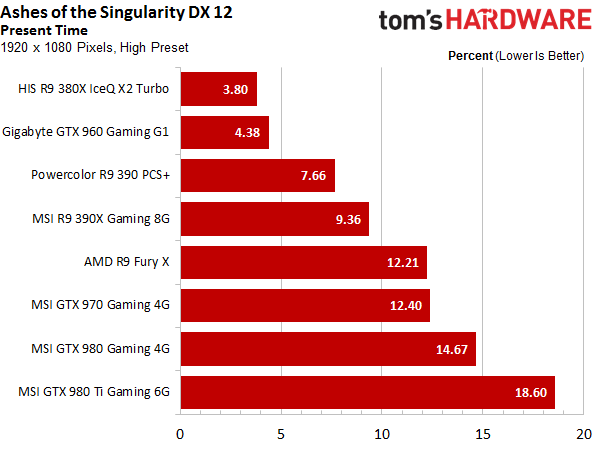

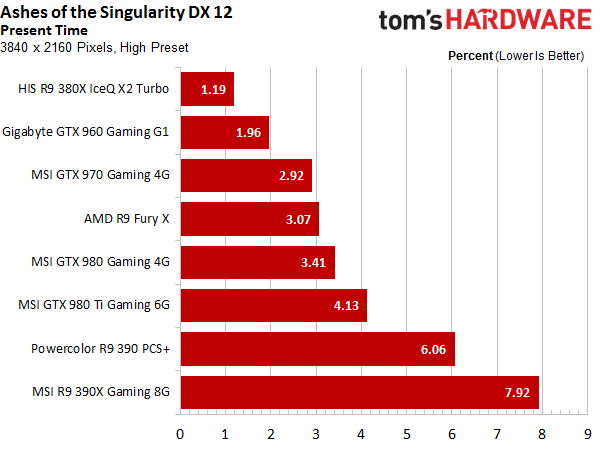

Present Time

This is the time used to actually output the frame to the observer. Consequently, it’s the last step. The overall time varies quite a bit depending on the resolution. This is due to the resolution’s influence on the CPU bottlenecks that we discussed above and the additional GPU load with higher resolutions.

If the GPU is the bottleneck, then the Present Time is very short, since the CPU already had a lot of time to finish its computations.

Render Time Bottom Line

AMD is the clear winner with its current graphics cards. Real parallelization and asynchronous task execution are just better than splitting up the tasks via a software-based solution. It’s not entirely clear just how much of a difference this will make in real-life gaming scenarios, but it's certainly a technology that could see more use in the future.

Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

-

In other words DX12 is business gimmick which doesn't translate to squat in real game scenario and I am glad I stayed on Windows 7...running crossfire R9 390x.

-

FormatC Especially Hawaii / Grenada can benefit from asynchronous shading / compute (and your energy supplier). :) -

17seconds An AMD sponsored title that shows off the one and only part of DirectX 12 where AMD cards have an advantage. The key statement is: "But where are the games that take advantage of the technology?" Without that, Async Compute will quickly start to take the same road taken by Mantle, remember that great technology? -

FormatC An AMD sponsored title

Really? Sponsoring and knowledge sharing are two pairs of shows. Nvidia was invited too. ;)

Async Compute will quickly start to take the same road taken by Mantle

Sure? The design of the most current titles and engines was started long time before Microsoft started with DirectX 12. You can find the DirectX 12 render path in first steps now in a lot of common engines and I'm sure that PhysX will faster die than DirectX12. Mantle was the key feature to wake up MS, not more. And: it's async compute AND shading :) -

turkey3_scratch Well, there's no denying that for this game the 390X sure is great performing. -

James Mason An AMD sponsored title

Really? Sponsoring and knowledge sharing are two pairs of shows. Nvidia was invited too. ;)

Async Compute will quickly start to take the same road taken by Mantle

Sure? The design of the most current titles and engines was started long time before Microsoft started with DirectX 12. You can find the DirectX 12 render path in first steps now in a lot of common engines and I'm sure that PhysX will faster die than DirectX12. Mantle was the key feature to wake up MS, not more. And: it's async compute AND shading :)

Geez, Phsyx has been around for so long now and usually only the fanciest of games try and make use of it. It seems pretty well adopted, but it's just that not all games really need to add an extra layer of physics processing "just for the lulz." -

Wisecracker Thanks for the effort, THG! Lotsa work in here.

What jumps out at me is how the GCN Async Compute frame output for the R9 380X/390X barely moves from 1080p to 1440p ---- despite 75% more frames. That's sumthin' right there.

It will be interesting to see how Pascal responds ---- and how Polaris might *up* AMD's GPU compute.

Neat stuff on the CPU, too. It would be interesting to see how i5 ---> i7 hyperthreads react, and how the FX 8-cores (and 6-cores) handle the increased emphasis on parallelization.

You guys don't have anything better to do .... right? :)

-

For someone who runs Crossfire R9 390x (three cards) DX12 makes no difference in term of performance. For all BS Windows 10 brings not worth *downgrading to considering that lot of games under Windows 10 are simply broken or run like garbage where no issue under Windows 7.

-

ohim An AMD sponsored title that shows off the one and only part of DirectX 12 where AMD cards have an advantage. The key statement is: "But where are the games that take advantage of the technology?" Without that, Async Compute will quickly start to take the same road taken by Mantle, remember that great technology?

Instead of making random assumptions about the future of DX12 and Async shaders you should first be mad at Nvidia for stating they have full DX12 cards and that`s not the case, and the fact that Nvidia is trying hard to fix this issues trough software tells a lot.

PS: it`s so funny to see the 980ti being beaten by 390x :) -

cptnjarhead For someone who runs Crossfire R9 390x (three cards) DX12 makes no difference in term of performance. For all BS Windows 10 brings not worth *downgrading to considering that lot of games under Windows 10 are simply broken or run like garbage where no issue under Windows 7.

There are no DX12 games yet for review, so why would you assume that you should see better performance in games made for windows 7 DX11, in win10 DX12? Especially in "tri-Fire". DX12 has significant advantages over DX11 so you should wait till these games actually come out before making assumptions on performance, or the validity of DX12's ability to increase performance.

My games, FO4, GTAV and others run better in Win10 and i have had zero problems. I think your issue is more Driver related, which is on AMD's side, not MS's operating system.

I'm on the Red team by the way.