OCZ Vector 256 GB Review: An SSD Powered By Barefoot 3

Maintaining Performance Over Time: The Vector Looks Resilient

NAND flash can be read from or written to one page at a time (each page is 8 KB in size on OCZ's Vector). But let's say you fill our 256 GB sample up with data and then delete everything that was on it. Those pages still have data on them, even if Windows reports the capacity as available. The only way to write over them again is to perform an erase, and erasures only happen at the block level (again, in the case of the Vector, you're looking at 256 pages per block, or 2 MB).
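To make the page and block figures concrete, here is a small Python sketch of the arithmetic. The page size and pages-per-block values come from the text above; treating the drive as exactly 256 GiB of NAND with no spare area is a simplifying assumption for illustration, not a statement about the Vector's actual layout.

```python
# Geometry quoted in the text for OCZ's Vector.
PAGE_SIZE_KIB = 8        # smallest unit that can be read or written
PAGES_PER_BLOCK = 256    # smallest unit that can be erased

# Assumption for illustration only: exactly 256 GiB of NAND, no spare area.
ASSUMED_CAPACITY_GIB = 256

block_size_kib = PAGE_SIZE_KIB * PAGES_PER_BLOCK
block_size_mib = block_size_kib // 1024
total_blocks = ASSUMED_CAPACITY_GIB * 1024 // block_size_mib
total_pages = total_blocks * PAGES_PER_BLOCK

print(f"Erase block size: {block_size_mib} MiB")
print(f"Blocks on the drive: {total_blocks:,}")
print(f"Pages on the drive: {total_pages:,}")
```

Under those assumptions, rewriting a single 8 KB page in place would mean erasing a unit 256 times its size, which is why deleted data lingers until the controller gets around to cleaning up.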

As you might imagine, particularly when you've written a lot of random data to a drive, freeing up blocks isn't as easy as erasing 2 MB at a time. Some of the pages in a given block may contain good information, after all. So, in order to prepare a block of 256 pages for the next write (or for simply maintaining equal wear across the available NAND), valid data is moved to a new block through a process called garbage collection.
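The relocation step described above can be sketched as a toy model in Python. This is not OCZ's algorithm; the function name `garbage_collect`, the three page states, and the 64/192 split in the example are all hypothetical, chosen only to show valid pages being copied out before a whole block is erased.

```python
FREE, VALID, STALE = "free", "valid", "stale"
PAGES_PER_BLOCK = 256  # figure quoted in the text for the Vector


def garbage_collect(victim: list, spare: list) -> int:
    """Copy valid pages from `victim` into free slots of `spare`, then erase `victim`.

    Returns the number of pages that had to be copied.
    """
    moved = 0
    for state in victim:
        if state == VALID:
            slot = spare.index(FREE)  # raises ValueError if `spare` is full
            spare[slot] = VALID
            moved += 1
    # An erase works on the whole block at once: every page becomes writable again.
    victim[:] = [FREE] * len(victim)
    return moved


# Hypothetical example: a block where a quarter of the pages still hold good data.
victim_block = [VALID] * 64 + [STALE] * 192
spare_block = [FREE] * PAGES_PER_BLOCK
pages_moved = garbage_collect(victim_block, spare_block)
print(pages_moved, victim_block.count(FREE), spare_block.count(FREE))
```

In this example, reclaiming one block costs 64 extra page writes that the host never asked for. A real controller also has to pick which block to clean and keep a logical-to-physical map up to date, both of which the sketch leaves out.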

Now, the challenge is that flash memory cells are only rated for so many of those program and erase cycles, so a controller doesn't want to move data around too much and risk prematurely wearing out the media. Postponing garbage collection as long as possible helps reduce write amplification, since you aren't moving data around as much. But it only delays the inevitable, and performance suffers when a write has to wait on an erase before it can complete. Collecting garbage proactively optimizes for performance, generally at the cost of faster wear-out.
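Write amplification is commonly expressed as the ratio of data physically written to the NAND to data the host asked to write. The Python sketch below uses that definition; the helper name `write_amplification` and the page counts are made up for illustration and are not measurements from any drive.

```python
def write_amplification(host_pages: int, relocated_pages: int) -> float:
    """Ratio of pages physically written to NAND to pages the host asked to write."""
    if host_pages <= 0:
        raise ValueError("host_pages must be positive")
    return (host_pages + relocated_pages) / host_pages


# Hypothetical numbers: the host writes 256 pages in both cases.
# A controller that waits finds more stale pages and copies fewer valid ones.
lazy = write_amplification(host_pages=256, relocated_pages=32)
# A controller that cleans up early still finds many valid pages to move.
eager = write_amplification(host_pages=256, relocated_pages=128)

print(f"postponed collection: {lazy}")
print(f"proactive collection: {eager}")
```

The higher ratio means more program and erase cycles spent for the same amount of user data, which is the wear-out side of the trade-off.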

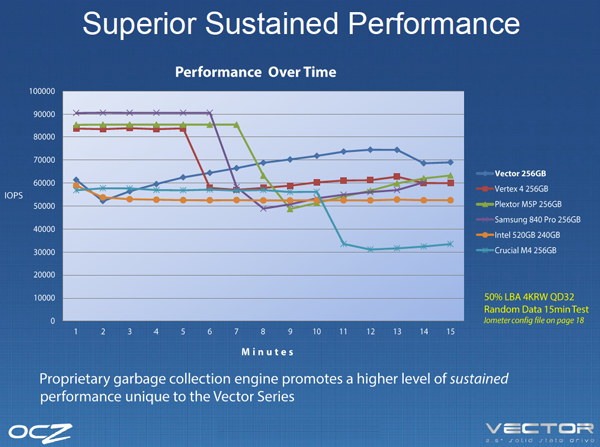

In its marketing material, OCZ makes the point that its Vector is optimized for sustained performance over time, crediting its garbage collection technology for keeping the drive fast, whereas certain competing drives run quickly for a while but then slow down as they're forced to contend with moving pages and freeing blocks.

Unfortunately, the test OCZ uses to illustrate this (50% LBA, 4 KB writes, and a queue depth of 32) isn't at all relevant to a desktop environment, where lower queue depths are the rule. So, we're creating our own little torture test, hopefully with a little more applicability to the client space. First, we write data sequentially to fill every block on the drive. Then, we torture it with 30 minutes of 4 KB random writes. Because the drive is already packed with data, the controller does not have any empty blocks available for background or idle garbage collection. Thus, whatever performance we see writing data back sequentially at a queue depth of one should be the impact of whatever happens immediately after thrashing the NAND with 4 KB random writes.

This isn't to say one drive is better than another. Rather, it helps us determine whether an architecture relies more on foreground or background garbage collection.
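The three-phase procedure described above can be written down as a small data structure. This Python sketch only records what the text states; the `Phase` class and its field names are hypothetical, and any setting the text does not give (transfer sizes for the sequential passes, the queue depth of the random-write phase, the end point of the final pass) is left as `None` rather than guessed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Phase:
    name: str
    pattern: str                    # "sequential" or "random"
    transfer_kib: Optional[int]     # None where the text gives no figure
    queue_depth: Optional[int]      # None where the text gives no figure
    stop_condition: Optional[str]   # None where the text gives no figure


TORTURE_TEST = [
    Phase("fill", "sequential", None, None, "every block on the drive written"),
    Phase("thrash", "random", 4, None, "30 minutes elapsed"),
    Phase("measure", "sequential", None, 1, None),
]

for number, phase in enumerate(TORTURE_TEST, start=1):
    print(number, phase.name, phase.pattern, phase.transfer_kib, phase.queue_depth)
```

Only the last phase is measured; the first two exist to leave the controller with no empty blocks and a heavily fragmented mapping before the sequential pass begins.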

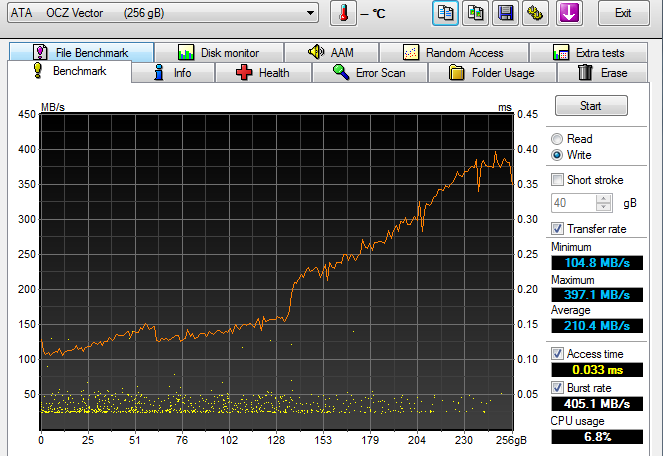

In the chart above, the Vector starts out fairly slow (around 100 MB/s), but its performance ramps up quickly as garbage collection consolidates all of those blocks that look like Swiss cheese. Eventually, sequential throughput returns to the out-of-box performance we saw from Iometer on the previous page.


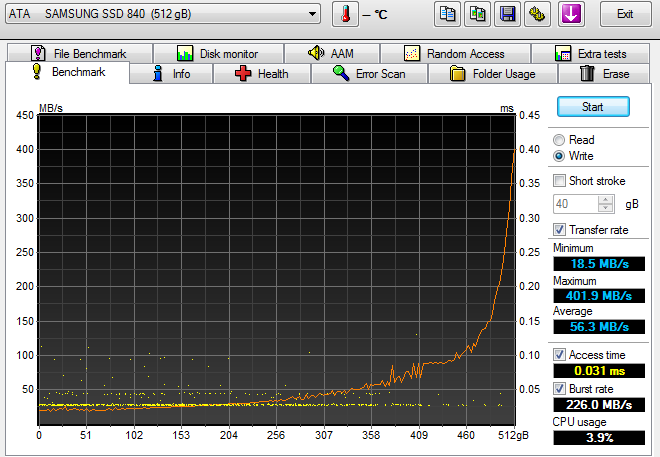

Samsung's 840 Pro, in comparison, has a much steeper climb. Its throughput starts at a painfully low 18.5 MB/s and doesn't hit 100 MB/s until we've written to 90% of the drive.

Again, these two charts don't implicate Samsung's design or advocate OCZ's. We're simply demonstrating the speed at which the Vector recovers its performance in the event that you really hammer it hard (if you ever do, which most people won't).


gnesterenko "In the real world, it's almost a certainty that you wouldn't be able to tell the difference between them (or a number of the nearly-as-fast but tangibly less expensive models featured each month in Best SSDs For The Money)."

This, This, This. All SSDs are pretty amazing at this point for me, the average user. What I care far more about is: are they reliable? At the moment, it still seems that Intel holds the reliability crown. Reviews like this are great, for sure, but they don't answer the most important question, sadly.

acku, in reply to gnesterenko:

Let's make one thing clear. Endurance, reliability, durability, they all refer to different things.

When it comes to reliability, everything we know is rather anecdotal. There are no published RMA rates (only return rates, and only for a sample size of 500), so it's rather flawed. Second, two users subject their SSDs to different workloads. The first may have more random data in their workload. You can't make an evaluation that drive x failed for user y, therefore it's bad. What you do matters. Unlike HDDs, the performance and characteristics of the drive change based on what you do to it. Since no two users do the same thing with their system, the only real way to test this out is to get a few thousand SSDs and subject them to the same workload in a big data center for a few years. I'd love to do this but naturally, it's really not feasible. :)

Cheers,

Andrew Ku

mayankleoboy1 So basically all good SSDs are constrained by the SATA3 interface. I can't wait for the direct PCIe interface (express pcie?) to become standard.

Hupiscratch Do these drives (especially the Samsung 840) support TRIM in RAID 0 arrays, or is this a property related to the chipset?

acku, in reply to Hupiscratch:

That's a mobo thing. You're going to want a 7-series chipset from Intel.

Cheers,

Andrew Ku

edlivian I don't care how much slower the Crucial m4 is compared to the new kids on the block. I will keep stocking them for myself and my company, since that is the only one that has never failed me.

acku, in reply to edlivian:

Glad to hear the m4s are working out for you! Indeed, they have a pretty good track record.

Cheers,

Andrew Ku

dingo07 So should I buy some of their stock now...? It can't get much lower than them going out of business from the lawsuits... :/

I just hope that there won't be as many firmware updates with this drive. I got tired of that with my past 2 OCZ SSDs (Vertex 3 & Vertex Turbo). It was way too often, almost as much as my GPU drivers. That being said, they both have given me no issues whatsoever and run like champs. I see a 256GB Vector in my future.