Kioxia preps XL-Flash SSD that's 3x faster than any SSD available — 10 million IOPS drive has peer-to-peer GPU connectivity for AI servers

10 million IOPS per drive, but there is a slight catch.

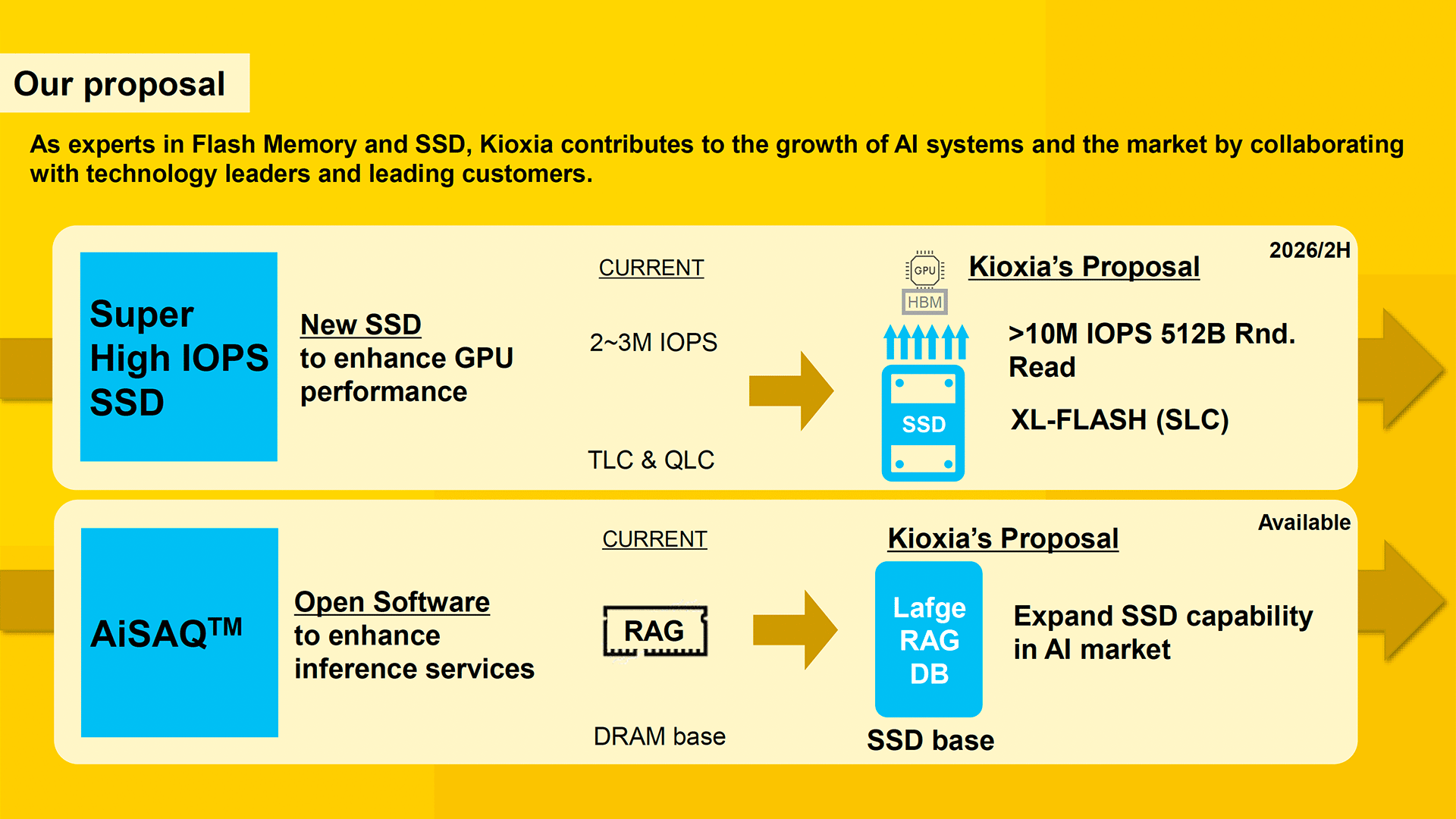

Kioxia aims to change the storage paradigm with a proposed SSD designed to surpass 10 million input/output operations per second (IOPS) in small-block workloads, the company revealed at its Corporate Strategy Meeting earlier this week. That's three times faster than the peak speeds of many modern SSDs.

One of the performance bottlenecks of modern AI servers is the data transfer between storage and GPUs: data is currently routed through the CPU, which significantly increases latency and extends access times.

To reach the performance target, Kioxia is designing a new controller specifically tuned to maximize IOPS (beyond 10 million IOPS with 512B blocks) so that GPUs can access data fast enough to keep their cores fully utilized. The proposed Kioxia 'AI SSD' is set to use the company's single-level cell (SLC) XL-Flash memory, which boasts read latencies in the range of 3 to 5 microseconds, significantly lower than the 40 to 100 microseconds offered by SSDs based on conventional 3D NAND. Additionally, by storing one bit per cell, SLC offers faster access times and greater endurance, attributes that are crucial for demanding AI workloads.
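To put the latency figures in perspective, here is a minimal sketch based on Little's law (I/Os in flight = throughput × time per I/O). It is not anything Kioxia has published: it assumes the quoted media read latency is the entire time an I/O spends in flight, ignoring controller, link, and host software overhead, so the results are only lower bounds. The function and constant names are illustrative.

```python
# Little's law sketch: I/Os in flight = throughput (IOPS) x time each I/O is in flight.
# Assumption: the media read latency is the whole in-flight time (no controller,
# link, or host software overhead), so these figures are lower bounds.

def outstanding_ios(iops: float, latency_s: float) -> float:
    """Average number of I/Os that must be in flight to sustain `iops`."""
    return iops * latency_s

TARGET_IOPS = 10e6  # the 10 million IOPS target quoted in the article

# Read latency ranges quoted in the article, in microseconds.
LATENCY_US = {
    "XL-Flash": (3, 5),
    "conventional 3D NAND": (40, 100),
}

for media, (low_us, high_us) in LATENCY_US.items():
    low = outstanding_ios(TARGET_IOPS, low_us * 1e-6)
    high = outstanding_ios(TARGET_IOPS, high_us * 1e-6)
    print(f"{media}: about {low:.0f} to {high:.0f} I/Os in flight")
```

Under these assumptions, sustaining 10 million IOPS takes roughly 30 to 50 concurrent reads on XL-Flash, versus roughly 400 to 1,000 on conventional 3D NAND, which illustrates why low-latency media makes the target more practical.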

Current high-end datacenter SSDs typically achieve 2 to 3 million IOPS for both 4K and 512-byte random read operations. From a bandwidth perspective, using 4K blocks makes a lot of sense, whereas 512B blocks do not. However, large language models (LLMs) and retrieval-augmented generation (RAG) systems typically perform small, random accesses to fetch embeddings, parameters, or knowledge base entries. In these scenarios, small block sizes, such as 512B, are more representative of actual application behavior than 4K or larger blocks. Therefore, it makes more sense to use 512B blocks to meet the latency needs of LLM and RAG workloads, and to use multiple drives for bandwidth. Using smaller blocks could also enable more efficient use of memory semantics for access.

It is noteworthy that Kioxia does not disclose which host interface its 'AI SSD' will use, although it does not appear to require a PCIe 6.0 interface from a bandwidth perspective.
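A back-of-the-envelope check of the bandwidth point can be sketched as follows. The x4 link width is an assumption (Kioxia has not disclosed the interface), and the link figures are raw line rates that ignore encoding, packet headers, and NVMe command overhead, all of which weigh proportionally more on 512B transfers. Treat it as a rough estimate only.

```python
# Payload bandwidth implied by an IOPS figure, compared with raw PCIe link rates.
# Assumptions: x4 link (not disclosed by Kioxia); raw line rate only, ignoring
# encoding, packet headers, and NVMe command overhead.

def payload_gb_per_s(iops: float, block_bytes: int) -> float:
    """Data moved per second, in GB/s (10^9 bytes), for a given IOPS and block size."""
    return iops * block_bytes / 1e9

RAW_GT_PER_LANE = {"PCIe 4.0": 16, "PCIe 5.0": 32, "PCIe 6.0": 64}  # GT/s per lane
LANES = 4  # assumed link width

def raw_link_gb_per_s(gen: str, lanes: int = LANES) -> float:
    """Raw one-direction link rate in GB/s, treating one transfer as one bit."""
    return RAW_GT_PER_LANE[gen] * lanes / 8

needed = payload_gb_per_s(10e6, 512)  # 10M IOPS at 512B
print(f"10M x 512B = {needed:.2f} GB/s of payload")
print(f"3M x 4K    = {payload_gb_per_s(3e6, 4096):.2f} GB/s of payload")

for gen in RAW_GT_PER_LANE:
    link = raw_link_gb_per_s(gen)
    print(f"{gen} x{LANES}: {link:.0f} GB/s raw, payload uses {needed / link:.0%}")
```

By this estimate, 10 million 512B reads per second amount to about 5.12 GB/s of payload, less than the roughly 12.3 GB/s that 3 million 4K reads would move, and well under the raw rate of a PCIe 5.0 x4 link.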

The 'AI SSD' from Kioxia will also be optimized for peer-to-peer communications between the GPU and SSD, bypassing the CPU for extra performance and lower latency. This is another reason why Kioxia (and Nvidia) plan to use 512B blocks: GPUs typically operate on cache lines of 32, 64, or 128 bytes internally, and their memory subsystems are optimized for burst access to many small, independent memory locations to keep all the stream processors busy. As a result, 512-byte reads align better with GPU designs.

Kioxia's 'AI SSD' is designed to support AI training setups where large language models (LLMs) require fast, repeated access to massive datasets. Also, Kioxia envisions it being deployed in AI inference applications, particularly in systems that employ retrieval-augmented generation techniques to enhance generative AI outputs with real-time data (i.e., for reasoning). Low-latency, high-bandwidth storage access is crucial for such machines to ensure both low response times and efficient GPU utilization.


The Kioxia 'AI SSD' is scheduled for release in the second half of 2026.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

Pierce2623

I’m assuming using SLC will be a big bottleneck on density unless this uses larger stacks than we’ve ever seen…

Li Ken-un

To add to this, I’ve benchmarked the Intel Optane P5800X (3.2 TB) with FIO and it’s capable of hitting 5 million IOps with 512-byte random reads. When the NAND SSDs hit with 10 million IOps and “read latencies in the range of 3 to 5 microseconds,” that’ll finally lay any doubts to rest that Optane will have been ancient technology. Though that’ll be technology released in 2026 versus technology that was released in 2022, four years will have been a remarkably small gap given how far ahead Optane was in 2017 when it was first available.

Pierce2623 said: "I’m assuming using SLC will be a big bottleneck on density unless this uses larger stacks than we’ve ever seen…"

Given 128 TB-class QLC SSDs today, they could already do 32 TB SLC SSDs, but chose not to (largest capacity available currently being 3.2 TB). 32 TB would dwarf the amount of DRAM you could stuff into a server.

It’s also an Iron Triangle problem here. Pick any two: speed, latency, or density. Even Optane never had more than one bit per cell.

jeremyj_83

Pierce2623 said: "I’m assuming using SLC will be a big bottleneck on density unless this uses larger stacks than we’ve ever seen…"

This uses either MLC or TLC in SLC mode.

bit_user

Li Ken-un said: "To add to this, I’ve benchmarked the Intel Optane P5800X (3.2 TB) with FIO and it’s capable of hitting 5 million IOps with 512-byte random reads."

In the latest I found of Jens Axboe's exploits, he managed to squeeze 13M IOPS out of a pair of P5800X drives. That was just on a single core of an Alder Lake CPU:

https://www.phoronix.com/news/Core-i9-12900K-King-IOPS

Li Ken-un said: "that’ll be technology released in 2026 versus technology that was released in 2022, four years will have been a remarkably small gap given how far ahead Optane was in 2017 when it was first available."

The P5800X started shipping in early 2021.

Li Ken-un said: "Given 128 TB-class QLC SSDs today, they could already do 32 TB SLC SSDs, but chose not to (largest capacity available currently being 3.2 TB). 32 TB would dwarf the amount of DRAM you could stuff into a server."

XL-NAND is optimized for data access, not density. I don't know how much overhead that adds, but it's not trivial or else you'd expect a lot more NAND would be structured the same way.

Li Ken-un said: "It’s also an Iron Triangle problem here. Pick any two: speed, latency, or density. Even Optane never had more than one bit per cell."

Optane's plan for density was to scale in the 3rd dimension. Except NAND got there first and turned out to be a lot more scalable in 3D than Optane was.

bit_user

jeremyj_83 said: "This uses either MLC or TLC in SLC mode."

XL-NAND is fundamentally different. From what I've seen, the maximum density supported by this generation appears to be just MLC.

Pierce2623

jeremyj_83 said: "This uses either MLC or TLC in SLC mode."

Is that confirmed? That it will just run as pseudo-SLC like every cache already does on NVMe drives? Is Samsung still manufacturing the 970 Evo? It’s the last MLC drive I remember.

bit_user

Pierce2623 said: "Is that confirmed? That it will just run as pseudo-SLC like every cache already does on NVME drives? Is Samsung still manufacturing the 970 evo? It’s the last MLC drive i remember."

XL-Flash is purpose-built to be low-latency and high-endurance. So, it's not just using standard NAND chips and running them in pSLC or pMLC mode. I think it's natively MLC.

There's not a lot of info about their new version, but here's a slide from their original 2018 presentation, explaining how it differs:

Source: https://www.tomshardware.com/news/toshiba-3d-xl_flash-optane,37564.html

You can find a little more about it, here:

https://www.tomshardware.com/pc-components/ssds/custom-pcie-5-0-ssd-with-3d-xl-flash-debuts-special-optane-like-flash-memory-delivers-up-to-3-5-million-random-iops

I'm not sure if that uses newer generation chips or not, but it's definitely fewer IOPS than whatever this article is talking about. The P5800X, Optane's swan song, was good for up to 6.5M IOPS, although that's a fair bit more than Intel claimed.