Microsoft Chooses Exotic "Topological Qubits" as Future of Quantum Computing

Microsoft is taking the road less traveled, hoping it will make all the difference.

Microsoft Research has announced a major breakthrough in its pursuit of quantum computing: the foundation for a new type of qubit, one that had never left the world of theory before... and still hasn't. Microsoft has yet to produce devices based on its new qubit design, but it is lending credence to their feasibility with evidence from large-scale simulations run both on and off its Azure Quantum cloud infrastructure. Microsoft’s quantum computing research focuses on a special, exotic type of qubit, the topological qubit, which the company has touted as its vehicle into the quantum future since 2016.

Despite Microsoft’s investments in the field, relatively little has been heard from the company. Meanwhile, Microsoft's tech-giant competitors Google and IBM, as well as much smaller companies such as Rigetti Computing and IonQ, have already deployed quantum computing systems, while Microsoft hasn't. So one might think that two-trillion-dollar Microsoft has been dragging its feet in the race toward scalable quantum computing.

The Road Less Traveled By

However, Microsoft would say that it has deliberately chosen to pursue a type of qubit that its competitors wouldn't. Evidence for the Majorana zero modes that topological qubits depend on was first reported in a 2018 paper in the journal Nature, but that evidence did not hold up: the original researchers retracted the article “for insufficient scientific rigour in our original manuscript.”

Microsoft is not only making the case that topological qubits are on the verge of becoming a reality: the company says they are currently the only viable bet for sustainable, scalable (to the tune of millions of qubits), and ultimately meaningful quantum computing.

"The qubits of today are not going to be the basis of the quantum computers of tomorrow," Microsoft Distinguished Engineer Chetan Nayak told Ars Technica. "The qubits we have today are very interesting, very impressive - you can learn a lot and do a lot of research and make good incremental progress. But some kind of new idea is going to be necessary to make a commercial-scale quantum computer."

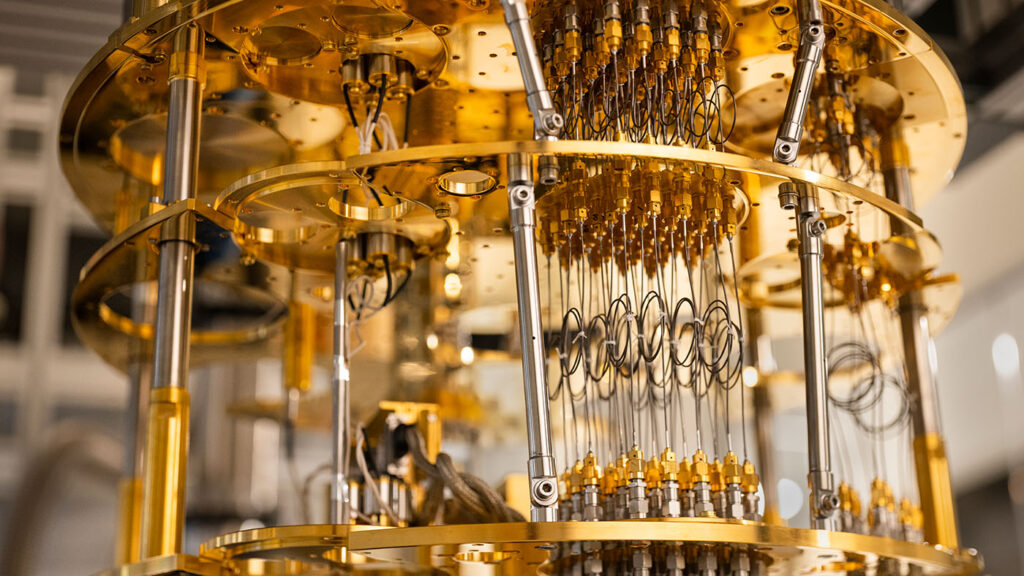

The world record for the highest qubit count on a single device, held by IBM’s Eagle, currently stands at 127 addressable qubits, a far cry from the million-qubit figure Microsoft expects will be needed. And because IBM uses superconducting transmon qubits, the company’s devices have to be cooled to within a fraction of a degree of absolute zero (-273.15 °C) to keep the qubits safe from environmental interference.

Another point of note: because non-topological qubits are particularly sensitive to decoherence, these quantum architectures usually dedicate additional qubits purely to error correction, meaning those qubits aren’t directly employed in the calculations. This is inefficient, especially given how difficult it currently is to scale qubit counts. Hence Microsoft’s choice to go the long way around. But what is this long way around? What are topological qubits?
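For a rough sense of that overhead, here is a back-of-the-envelope sketch in Python. It assumes the rotated surface code, a widely studied error-correction scheme that the article itself doesn't name, in which one logical qubit of code distance d uses d² data qubits plus d² − 1 measurement qubits whose only job is spotting errors. The function names and the distances tried are illustrative choices, not anything IBM or Microsoft has published.

```python
# Back-of-the-envelope error-correction overhead.
# ASSUMPTION: rotated surface code; one logical qubit of distance d needs
# d*d data qubits plus d*d - 1 measurement (ancilla) qubits.

def surface_code_qubits(distance: int) -> dict:
    """Physical-qubit budget for one logical qubit (hypothetical helper)."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("code distance is conventionally an odd integer >= 3")
    data = distance ** 2          # qubits that hold the encoded state
    ancilla = distance ** 2 - 1   # qubits used only to detect errors
    return {"data": data, "ancilla": ancilla, "total": data + ancilla}


def logical_qubits_available(physical: int, distance: int) -> int:
    """Whole logical qubits that fit on a device with `physical` qubits."""
    return physical // surface_code_qubits(distance)["total"]


if __name__ == "__main__":
    eagle = 127  # IBM Eagle's addressable qubits, as cited in the article
    for d in (3, 5, 7, 15, 25):
        budget = surface_code_qubits(d)
        print(
            f"d={d:>2}: {budget['total']:>5} physical per logical "
            f"({budget['ancilla']} only for error correction); "
            f"a {eagle}-qubit chip hosts {logical_qubits_available(eagle, d)}"
        )
```

Under these assumptions, a modest distance-7 code would leave a 127-qubit chip like IBM's Eagle with a single error-corrected qubit, and larger distances would leave it with none. Real overheads depend on the scheme chosen and on physical error rates.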

Absence of Proof ≠ Proof of Absence

The first thing to remember about topological qubits is that they still haven't materialized. Instead, they are theorized to exist (as we've previously covered in more detail) as pairs of Majorana zero modes (MZMs), a special type of quasiparticle that behaves as if it were only half of an electron. MZMs are predicted to appear at the ends or edges of certain superconducting structures and to be extremely resilient to environmental noise (such as heat, stray subatomic particles, or magnetic fields). Left unchecked, or not engineered against, this environmental noise leads to decoherence: the process by which qubits fall out of their superposition state and reveal their value. When qubits reveal their value too early, an error appears before the calculation is finished.


Microsoft’s topological qubit design places a U-shaped wire, with a Majorana zero mode at each end to keep the two physically separated, next to a quantum dot. The quantum dot serves as the measurement mechanism: its capacitance changes whenever it interacts with either of the Majorana zero modes, which allows the qubit’s state to be read out. This is the part Microsoft still hasn’t figured out, as its devices don’t yet incorporate the quantum dot.

The resilience of topological qubits comes from the fact that the two MZMs (in Microsoft’s design, one at each end of the U-shaped wire) jointly encode the quantum information. Because that information can only be reached by looking at both quasiparticles’ states together, a disturbance that affects just one MZM isn't enough to make the qubit decohere; both would have to be affected and “forced” to reveal their contents. Depending on the engineering design, researchers can also increase the physical distance between the MZMs, reducing the chance that a single disturbance reaches both.

To better visualize this, imagine you’re a world-class villain, and you write the password to your doomsday device on a piece of paper. You then tear it in two and give one half to each of two trusted subordinates (before forgetting the password forever). Whatever happens, neither of them can disclose the full password alone, no matter what methods are used to extract the information. In quantum terms, the information has become non-local. The only way for someone to recover your full password would be to obtain both pieces and join them together. In an extremely simplified way, this is what grants MZMs their resilience to decoherence.
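The villain analogy maps neatly onto a classical technique, two-party XOR secret sharing: each share on its own is indistinguishable from random noise, and only combining both recovers the secret. This is a minimal Python sketch of the analogy only; it does not model Majorana physics, and the function names and sample password are hypothetical.

```python
import secrets


def split_secret(secret: bytes) -> tuple[bytes, bytes]:
    """Split `secret` into two shares; either share alone is uniformly random."""
    pad = secrets.token_bytes(len(secret))              # share for subordinate A
    masked = bytes(s ^ p for s, p in zip(secret, pad))  # share for subordinate B
    return pad, masked


def combine(share_a: bytes, share_b: bytes) -> bytes:
    """XOR the two shares back together; only both recover the secret."""
    if len(share_a) != len(share_b):
        raise ValueError("shares must be the same length")
    return bytes(a ^ b for a, b in zip(share_a, share_b))


if __name__ == "__main__":
    password = b"doomsday-1234"  # hypothetical example secret
    a, b = split_secret(password)
    print(a.hex())  # looks like random noise
    print(b.hex())  # also looks like random noise
    print(combine(a, b))
```

XOR sharing is used here rather than literally tearing the password in half because a torn note still leaks half the characters to each holder, whereas each XOR share reveals nothing on its own, which is closer to the article's point about non-local information.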

To arrive at the final superconducting wire design that allows all this, Microsoft's researchers had to simulate the materials and the wire's geometry across 23 adjustable parameters. This step requires tremendous amounts of computing power, but Microsoft does have one of the most powerful computing networks in the world in Azure. The simulations also allowed Microsoft’s team to iterate quickly on both materials and geometry, which would have been infeasible with the traditional, hands-on approach to materials engineering. According to Nayak, "If you had to sort [the materials] experimentally by trial and error, you would never be able to optimize over all those parameters in any reasonable amount of time."
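Nayak's point about trial and error is easy to check with simple arithmetic. The Python sketch below assumes, purely for illustration, an exhaustive grid over the 23 parameters with only a handful of candidate values each, and a hypothetical rate of one fabricated-and-measured device per hour; neither the grid nor the rate comes from Microsoft, and real simulation-driven design would use smarter optimization than a brute-force grid.

```python
PARAMETERS = 23  # adjustable parameters cited in the article


def grid_size(values_per_parameter: int, parameters: int = PARAMETERS) -> int:
    """Number of combinations in an exhaustive grid search."""
    return values_per_parameter ** parameters


def years_to_test(combinations: int, devices_per_hour: float = 1.0) -> float:
    """Wall-clock years at a hypothetical fabrication-and-measurement rate."""
    hours = combinations / devices_per_hour
    return hours / (24 * 365)


if __name__ == "__main__":
    for v in (2, 3, 5, 10):
        n = grid_size(v)
        print(f"{v:>2} values each: {n:.3e} combinations, "
              f"~{years_to_test(n):.3e} years at 1 device/hour")
```

Even with only two settings per parameter, that is 2²³ = 8,388,608 combinations, or more than 950 years at one device per hour, which shows why searching the design space in simulation first is attractive.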

Ultimately, Microsoft settled on aluminum as the superconductor and indium arsenide as the semiconductor paired with it, and the company fabricates the devices itself. This new data- and materials-driven approach has been the glue that holds Microsoft’s quantum computing strategy together.

“We are now led by designs that are based on simulations, not just someone batting ideas around in a conference room,” said Nayak. “And now we have the unique growth and fabrication technologies to bring those ideas to life. It doesn’t matter if you have the best designs in the world — if you can’t make them, they just stay on paper.”

Microsoft's ambitions as a hardware provider seem to go well beyond Xbox and its devices division: the company wants to provide the fundamental hardware for quantum computing systems, both for on-premises installations and in cloud environments. Microsoft's quantum hardware could very well end up powering a hypothetical Apple "iQuantum" product. In theory, of course.

Future Computing

Microsoft also has high expectations for the compute density of machines powered by its future topological qubits: the company says a million of these qubits could fit on a wafer smaller than the security chip on a credit card. If that holds, it would be the transistor revolution all over again, but with qubits.

Although Microsoft has yet to deliver a working quantum computing product, we have to remember that this is one of the most complex fields there is, at a nightmarish intersection of theoretical physics, practical engineering, and pure economics. But time is also a factor: the company is ceding ground in a market estimated to be worth up to $76 billion by 2030. Then again, should Microsoft deliver a truly scalable quantum computing system before anyone else, the market won't care who moved first.

Experts in the quantum computing field are even more aware of how fragile the status quo still is for quantum computing systems. Marco Valentini, one of the critics of the since-retracted 2018 Nature paper, believed at the time that Majorana modes would eventually be created, detected, and harnessed as qubits, just not in the way the retracted paper presented them.

That same paper was the subject of an investigation by an independent committee of experts, which found no evidence of data tampering. In the end, the cause was the most common source of error: human failure. The analysis of the original data fell prey to confirmation bias, as the committee noted in its report: “The research program the authors set out on is particularly vulnerable to self-deception, and the authors did not guard against this.”

Microsoft knows what is at stake with its choice of qubits, and it was already deep into its research when the drama surrounding the paper erupted. Perhaps because of this, Microsoft is keen to defend its results: the company's research wing had a separate team analyze the output data precisely to avoid confirmation bias. The company also submitted its research data to "an expert council of independent consultants," which sounds a lot like inviting eminent peers in the field to scrutinize the researchers' work. Microsoft is pressing on with topological qubit research while attempting to insulate its efforts from the pitfalls that undid the 2018 paper.

“It would be irresponsible of the physics community to decide now that we already know the only way,” Ady Stern of the Weizmann Institute of Science in Rehovot, Israel, told Quanta Magazine. “We’re on page 10 of a thriller, and we’re trying to guess how it’s going to end.”

With its topological qubits, Microsoft is aiming to be the Blu-ray of the quantum format war. And perhaps it will be; the company seems convinced of the physics behind its calculated choice. Time, as always, will tell.

Francisco Pires is a freelance news writer for Tom's Hardware with a soft side for quantum computing.

-

w_barath replied:

Admin said: Microsoft has announced a breakthrough in demonstrating the theoretical feasibility of particularly exotic qubits, topological qubits, in an approach that isn't shared by any of its quantum competitors.

THG should update this article to reflect that the "Majorana particle" has been debunked and Microsoft has withdrawn their claims.

https://spectrum.ieee.org/majorana-microsoft-backed-quantum-computer-research-retracted

Also of note: this was published well before THG's article.

Maybe try googling "my story debunked" before publishing...