Micron Reveals HBMnext as Successor to HBM2e

Micron's tech brief just keeps on giving!

In addition to confirming the RTX 3090 and its insanely fast GDDR6X memory, Micron also confirmed the successor to HBM2e: HBMnext. This new generation of HBM (high-bandwidth memory) will be even faster than its predecessors, but no details are available on its speeds or capacities yet.

"HBMnext is projected to enter the market toward the end of 2022. Micron is fully involved in the ongoing JEDEC standardization. As the market demands more data, HBM-like products will thrive and drive larger and larger volumes," explains Micron.

Meanwhile, Micron expects its HBM2e solution to land before the end of the year. Its modules will come in four- and eight-layer stacks, for capacities of 8 GB and 16 GB per stack. They will run at up to 3.2 Gbps per pin, though Micron notes speeds might be 'potentially higher,' so we're curious whether they'll overclock like Samsung's HBM2e stacks.

SK Hynix also kicked off volume production of its HBM2e stacks last month.

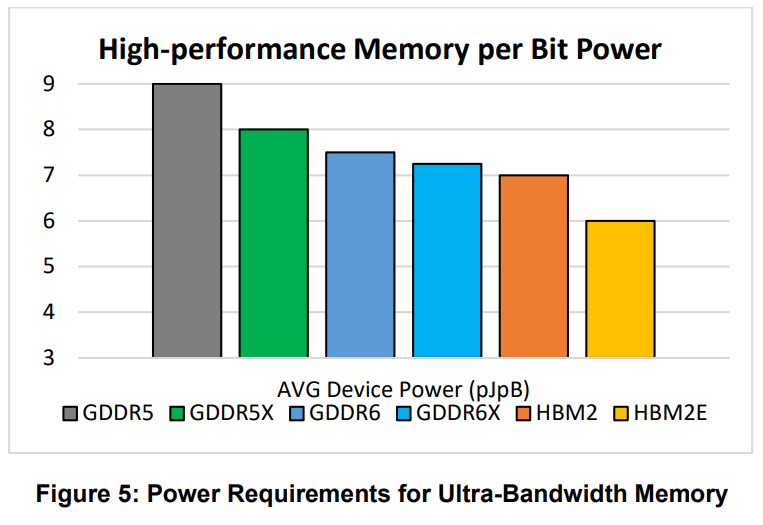

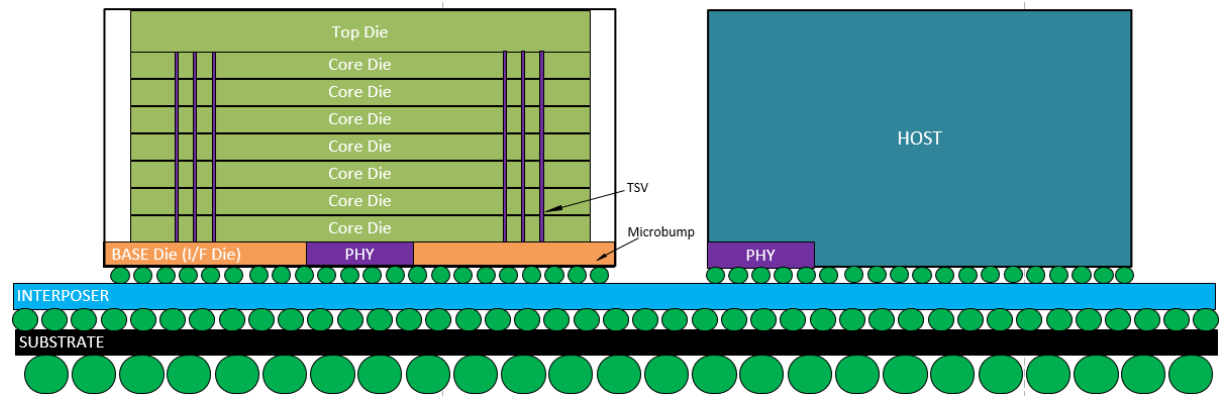

HBM essentially stacks memory layers on top of each other, and these stacks sit on the same silicon interposer as the GPU. This enables a much wider memory interface, which yields extremely high bandwidth, a more compact total package, and lower power consumption. But HBM is expensive, which is why it remains a premium memory solution mostly found in GPUs for scientific use, such as Nvidia's A100.
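To put a rough number on what a wider interface buys, here is a minimal back-of-the-envelope sketch. It assumes the 1024-bit per-stack interface that HBM2-class memory uses (a figure not stated in Micron's brief as quoted here) and reads the top HBM2e speed above as 3.2 Gbps per pin. The function name is ours, purely for illustration.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: interface width (bits) times per-pin
    data rate (Gb/s), divided by 8 bits per byte."""
    return bus_width_bits * pin_rate_gbps / 8


# Assumption: one HBM2e stack exposes a 1024-bit interface at 3.2 Gbps per pin.
hbm2e_stack = peak_bandwidth_gb_s(1024, 3.2)
print(f"One HBM2e stack: {hbm2e_stack:.1f} GB/s")
```

Under those assumptions a single stack peaks at roughly 410 GB/s, and a GPU carrying several stacks on its interposer multiplies that figure accordingly. Real-world throughput will be lower than this theoretical peak.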


Niels Broekhuijsen is a Contributing Writer for Tom's Hardware US. He reviews cases, water cooling, and PC builds.

thisisaname: Nice tech but unless it gets cheaper to implement it is never going to be anything other than a niche product.

TerryLaze (replying to thisisaname): Yes this is true for any technology, it's the same thing people used to say about SSD, heck about PCs in general.