Workstation Shootout: Nvidia Quadro 5000 Vs. ATI FirePro V8800

Nvidia sure didn't waste any time introducing its Fermi architecture to the workstation space. Its Quadro 5000 is one of the first models to use the company's GF100 graphics processor. How does this card stack up against ATI’s flagship FirePro V8800?

Nvidia Quadro 5000: Features, Connectors, And Driver

DisplayPort is the graphics card industry’s new favorite connector, since it guarantees high scalability for upcoming display solutions. There’s just one catch. How do you establish a new connector if the monitor makers aren’t willing to play along, and the majority of users have only just made the switch from VGA to DVI? Many folks may not even quite know what to make of HDMI yet, much less DisplayPort.

Nvidia’s approach is a cautious one, and while the company equips the Quadro 5000 with two DisplayPort connectors, it also provides a single dual-link DVI output. However, unless your monitor is already compatible with DisplayPort, you’ll still need to buy additional adapters if you’re planning to use a multi-monitor setup.

The memory system has also evolved: it now supports ECC (error-correcting code), making the Quadro 5000 the first card with this capability. The technique is not especially relevant to image processing, but it is of great importance in medical analysis, financial computation, and cluster-based configurations, where even a single-bit error can have a tremendous impact on the final result. ECC allows the graphics card to detect and correct this type of error, just as server and workstation motherboards can with system memory. The downside is a performance penalty. By default, Nvidia deactivates this feature in its drivers.
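To make the idea of detecting and correcting a single-bit error more concrete, here is a minimal sketch of a classic Hamming(7,4) code in Python. It is a toy illustration only: it is not Nvidia's implementation, real memory ECC works on much wider words, and the function names `hamming74_encode` and `hamming74_decode` are made up for this example.

```python
def hamming74_encode(nibble):
    """Encode 4 data bits (an int from 0 to 15) into a 7-bit codeword.

    The codeword is returned as a list of bits for positions 1..7.
    Positions 1, 2 and 4 hold parity bits; 3, 5, 6 and 7 hold data.
    """
    data = [(nibble >> i) & 1 for i in range(4)]  # data[0] is the LSB
    code = [0] * 8                                # index 0 is unused
    code[3], code[5], code[6], code[7] = data
    code[1] = code[3] ^ code[5] ^ code[7]         # covers positions 1, 3, 5, 7
    code[2] = code[3] ^ code[6] ^ code[7]         # covers positions 2, 3, 6, 7
    code[4] = code[5] ^ code[6] ^ code[7]         # covers positions 4, 5, 6, 7
    return code[1:]


def hamming74_decode(bits):
    """Decode a 7-bit codeword, repairing at most one flipped bit.

    Returns (nibble, syndrome). A syndrome of 0 means no error was seen;
    otherwise it is the 1-based position of the bit that was corrected.
    """
    code = [0] + list(bits)
    syndrome = 0
    for pos in range(1, 8):
        if code[pos]:
            syndrome ^= pos       # XOR of the positions of all set bits
    if syndrome:
        code[syndrome] ^= 1       # flip the faulty bit back
    data = [code[3], code[5], code[6], code[7]]
    nibble = sum(bit << i for i, bit in enumerate(data))
    return nibble, syndrome


if __name__ == "__main__":
    word = hamming74_encode(0b1011)
    word[4] ^= 1                  # simulate a single-bit memory error
    value, syndrome = hamming74_decode(word)
    print(f"recovered {value:04b}, corrected position {syndrome}")
```

The three extra parity bits per four data bits also hint at why ECC is not free: some capacity and bandwidth go to check bits rather than payload, which is consistent with the performance penalty mentioned above.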

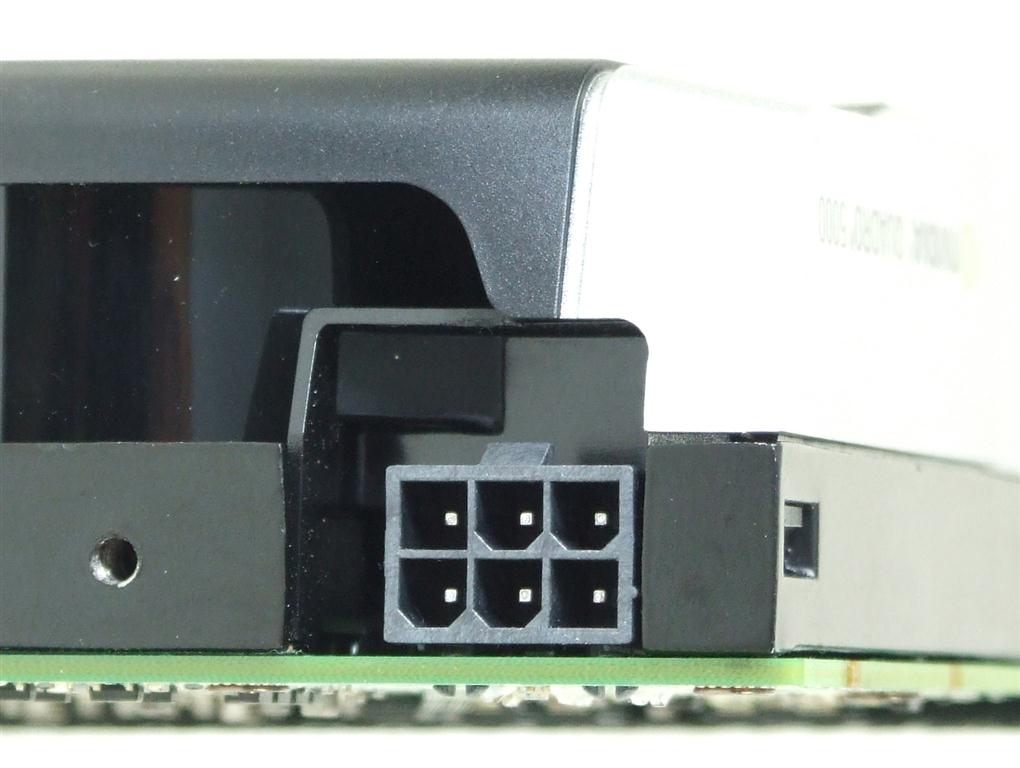

Nvidia also provides a 3-pin DIN port on the card’s backplate for use with 3D shutter glasses. The company already has compatible wireless solutions in its product portfolio as well.

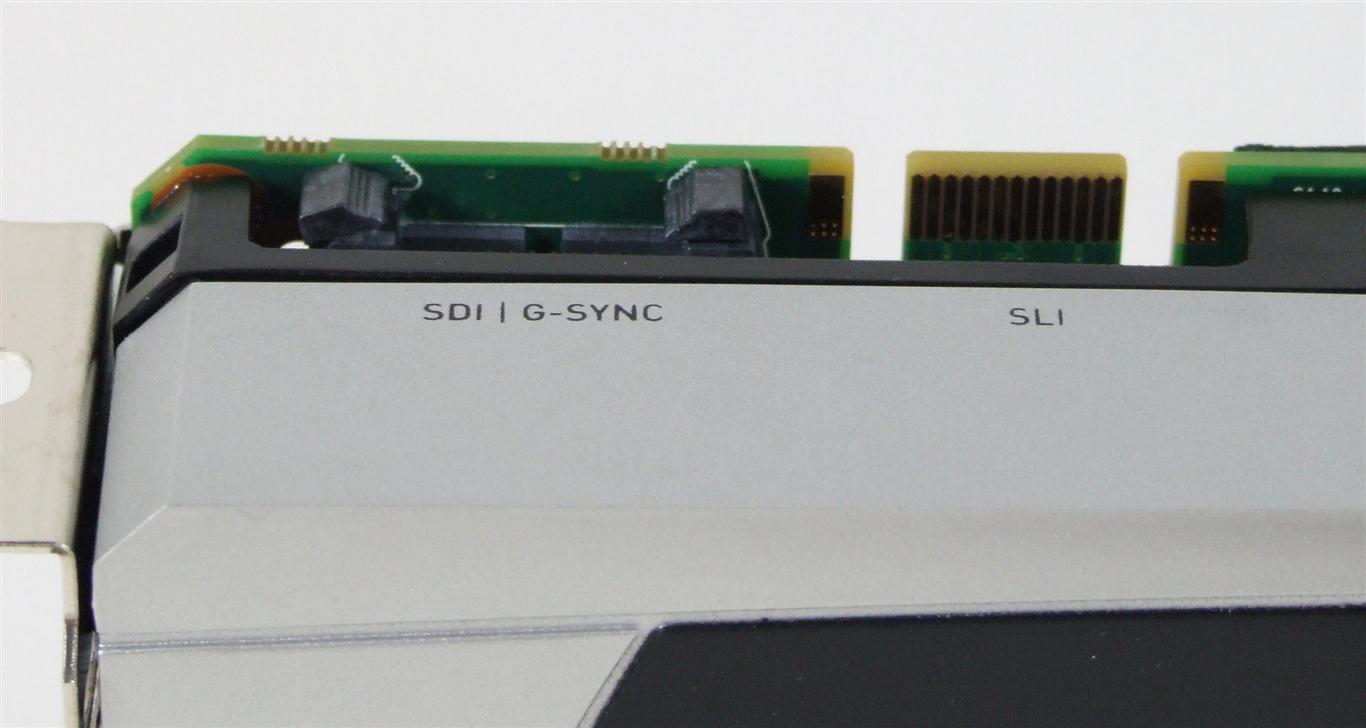

Feature-wise, Nvidia is competitive with AMD once again. Shader Model 5, DirectX 11, OpenGL 4.1, and OpenCL 1.0 are all finally supported in this generation of GPUs after several delays. Special solutions like Framelock, Genlock, and Serial Digital Interface required by the broadcast industry are also provided by this card.


tacoslave If AMD put a little more work into its drivers (i.e., CrossFire and FirePro performance), they would be the clear performance champion.

Gin Fushicho I really wish I knew what these numbers meant.

For someone who doesn't do 3-D design these benchmarks are kinda confusing.

joytech22 You need to remember, Fermi is designed not "just" for games, but was also designed, from day one, with computing in mind as well.

SchizoFrog Once again the argument regarding AMD drivers is brought to the fore. But more than this, when AMD has a line of products that could be said to 'miss', they absolutely FAIL. Nvidia, on the other hand, seems to have learned its lesson well from the 5xxxFX series and can still produce products that compete at least at some level, e.g., the GTX 460. Although these are workstation products, Nvidia has a complete package with GPUs and drivers that work from the off.

davefb Sort of interesting, but why is there no comparison to mainstream boards? There is a massive premium of cost here but nothing to be able to say 'hey boss, the onboard graphics we use really don't cut it any more, how about a Quadro'.

(or have I sped-read past the reason why ;) )