AMD Details EPYC Bergamo CPUs With 128 Zen 4C Cores, Available Now

Dropping the hammer.

AMD announced a range of new products today at its Data Center and AI Technology Premiere event in San Francisco, California. The company finally shared more details about its 5nm EPYC Bergamo processors for cloud native applications, and the chips are shipping to customers now.

AMD also announced its Instinct MI300 processors that feature 3D-stacked CPU and GPU cores on the same package with HBM, along with a new GPU-only MI300X model. Eight of those MI300X accelerators can also be combined onto one platform that wields an incredible 1.5TB of HBM3 memory. AMD also announced its EPYC Genoa-X processors with up to 1.1GB of L3 cache. All three of these products are available now, but AMD also has its EPYC Sienna processors for telco and the edge coming in the second half of 2023.

AMD EPYC Bergamo

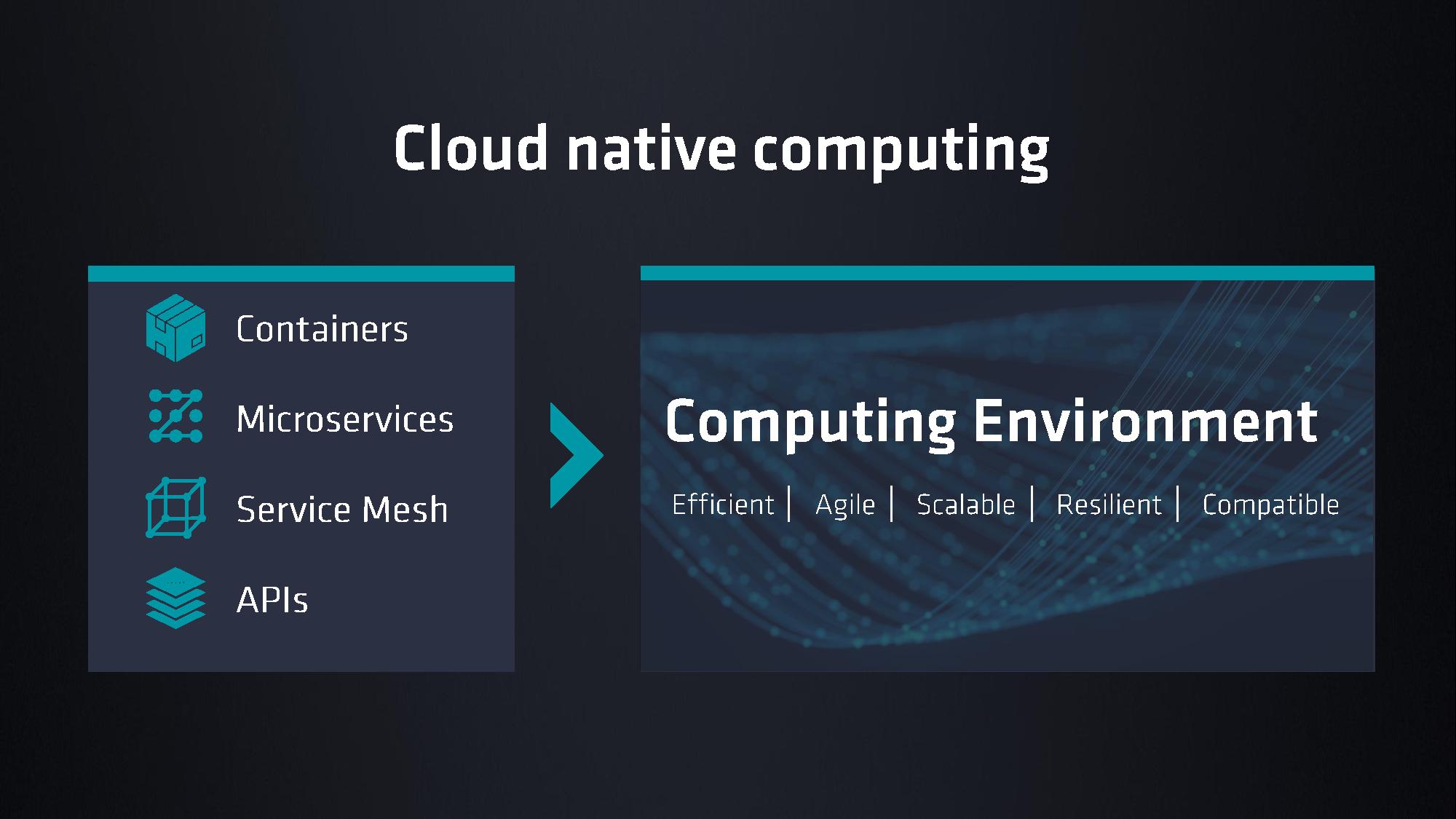

AMD's 128-core EPYC Bergamo processors are the industry's first x86 cloud native CPUs, which are designed for the highest core density with an optimized Zen 4c core that halves the area needed for each core. These chips will compete with Intel's 144-core Sierra Forest chips, which mark the debut of Intel's Efficiency cores (E-cores) in its Xeon data center lineup, and Ampere's 192-core AmpereOne processors, not to mention the custom silicon being developed or employed by Google and Microsoft.

All of these offerings are designed to maximize power efficiency for highly-parallel and latency-tolerant workloads. Examples include high-density VM deployments, data analytics, and front-end web services. The chips offer higher core counts than standard data center solutions, with a lower frequency and power envelope.

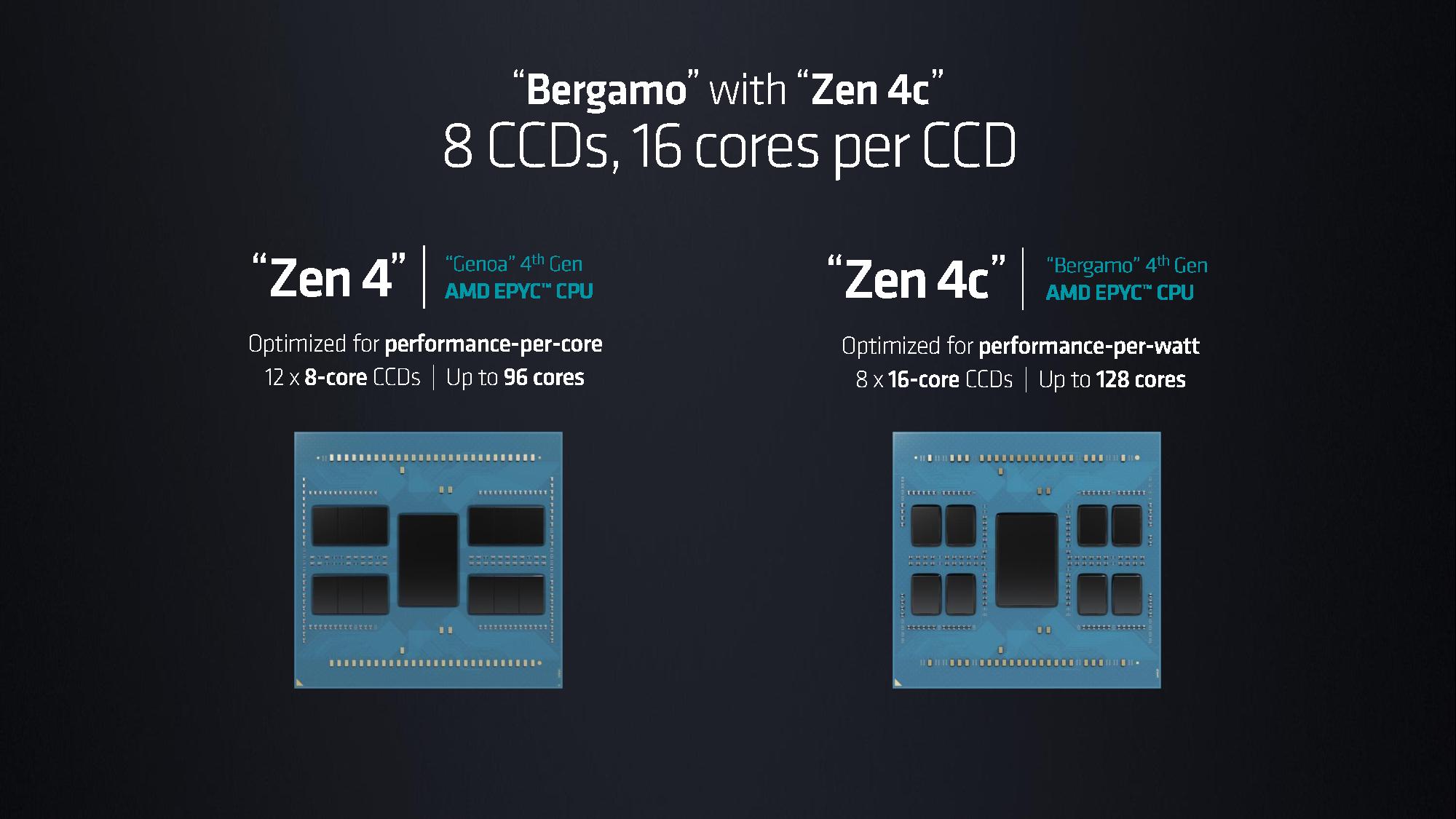

AMD's Bergamo has 128 cores and drops into server platforms that utilize the same socket SP5 as the standard 96-core EPYC Genoa processors. Like their regular counterparts, Bergamo supports 12-channel memory running at DDR5-4800. AMD forges the chips by combining chiplets with Zen 4c cores with the company's existing 'Floyd' central I/O die, thus tying the compute chiplets to a memory and I/O chiplet based on an older process node.
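As a rough illustration of what the shared 12-channel DDR5-4800 memory setup means once it is split across more cores, here is a minimal sketch of the theoretical peak bandwidth. It assumes a 64-bit (8-byte) data path per memory channel, which the article does not state, and it ignores real-world efficiency losses, so treat the numbers as an upper bound rather than a measured figure.

```python
# Theoretical peak memory bandwidth for a 12-channel DDR5-4800 socket.
# Channel count and speed come from the article; the 8-byte channel
# width is an assumption and sustained bandwidth will be lower.
CHANNELS = 12
TRANSFERS_PER_SEC = 4800e6   # DDR5-4800 = 4800 mega-transfers per second
BYTES_PER_TRANSFER = 8       # assumption: 64-bit data width per channel


def peak_bandwidth_gbs(channels: int = CHANNELS) -> float:
    """Return the theoretical peak bandwidth in GB/s (decimal gigabytes)."""
    return channels * TRANSFERS_PER_SEC * BYTES_PER_TRANSFER / 1e9


def per_core_gbs(cores: int) -> float:
    """Split the peak bandwidth evenly across the given number of cores."""
    return peak_bandwidth_gbs() / cores


if __name__ == "__main__":
    print(f"Socket peak: {peak_bandwidth_gbs():.1f} GB/s")
    for name, cores in (("Genoa", 96), ("Bergamo", 128)):
        print(f"{name} ({cores} cores): {per_core_gbs(cores):.2f} GB/s per core")
```

Under these assumptions, the same memory subsystem yields less peak bandwidth per core on the 128-core part than on the 96-core part, which fits the article's framing of Bergamo as a chip for highly parallel, latency-tolerant workloads.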

| Model | Cores / Max Threads | Base / Boost (GHz) | Default TDP | L3 Cache |
|---|---|---|---|---|
| EPYC 9754 | 128 / 256 | 2.25 / 3.1 | 360W | 256 MB |
| EPYC 9754S | 128 / 128 | 2.25 / 3.1 | 360W | 256 MB |
| EPYC 9734 | 112 / 224 | 2.2 / 3.0 | 320W | 256 MB |

For now, AMD has announced the Bergamo processors listed above, led by the EPYC 9754 with 128 cores/256 threads and the EPYC 9734 with 112 cores/224 threads. The table also lists the EPYC 9754S, which has 128 cores but only 128 threads. The 9734 has two cores per CCD disabled. Most of the remaining specs other than core counts are the same, so the 9734 still has the full 16MB of L3 cache per CCX and 256MB of L3 cache total. AMD claims a 2.7X increase in energy efficiency with the Bergamo chips.
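The core, thread, and cache figures above follow from simple arithmetic on the chiplet layout. The sketch below reproduces them; the two-CCX-per-CCD value is not stated directly but is inferred from the article's numbers (256MB total, 16MB per CCX, eight CCDs), and the function and parameter names are purely illustrative.

```python
# Reproduce the Bergamo SKU figures from the chiplet layout.
# CCD count, cores per CCD and L3 per CCX come from the article;
# CCX_PER_CCD is inferred (256 MB / 16 MB per CCX / 8 CCDs = 2).
CCDS = 8
CORES_PER_CCD = 16
CCX_PER_CCD = 2       # inferred, not stated in the article
L3_PER_CCX_MB = 16


def bergamo_config(disabled_per_ccd: int = 0, smt: bool = True) -> dict:
    """Return cores, threads and total L3 for a hypothetical SKU layout."""
    cores = CCDS * (CORES_PER_CCD - disabled_per_ccd)
    threads = cores * (2 if smt else 1)
    l3_mb = CCDS * CCX_PER_CCD * L3_PER_CCX_MB  # cache is not cut with cores
    return {"cores": cores, "threads": threads, "l3_mb": l3_mb}


if __name__ == "__main__":
    print("9754 :", bergamo_config())
    print("9754S:", bergamo_config(smt=False))
    print("9734 :", bergamo_config(disabled_per_ccd=2))
```

Disabling two cores on each of the eight CCDs removes 16 cores, which matches the step from 128 to 112, while the L3 total stays at 256MB because no CCX is removed.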

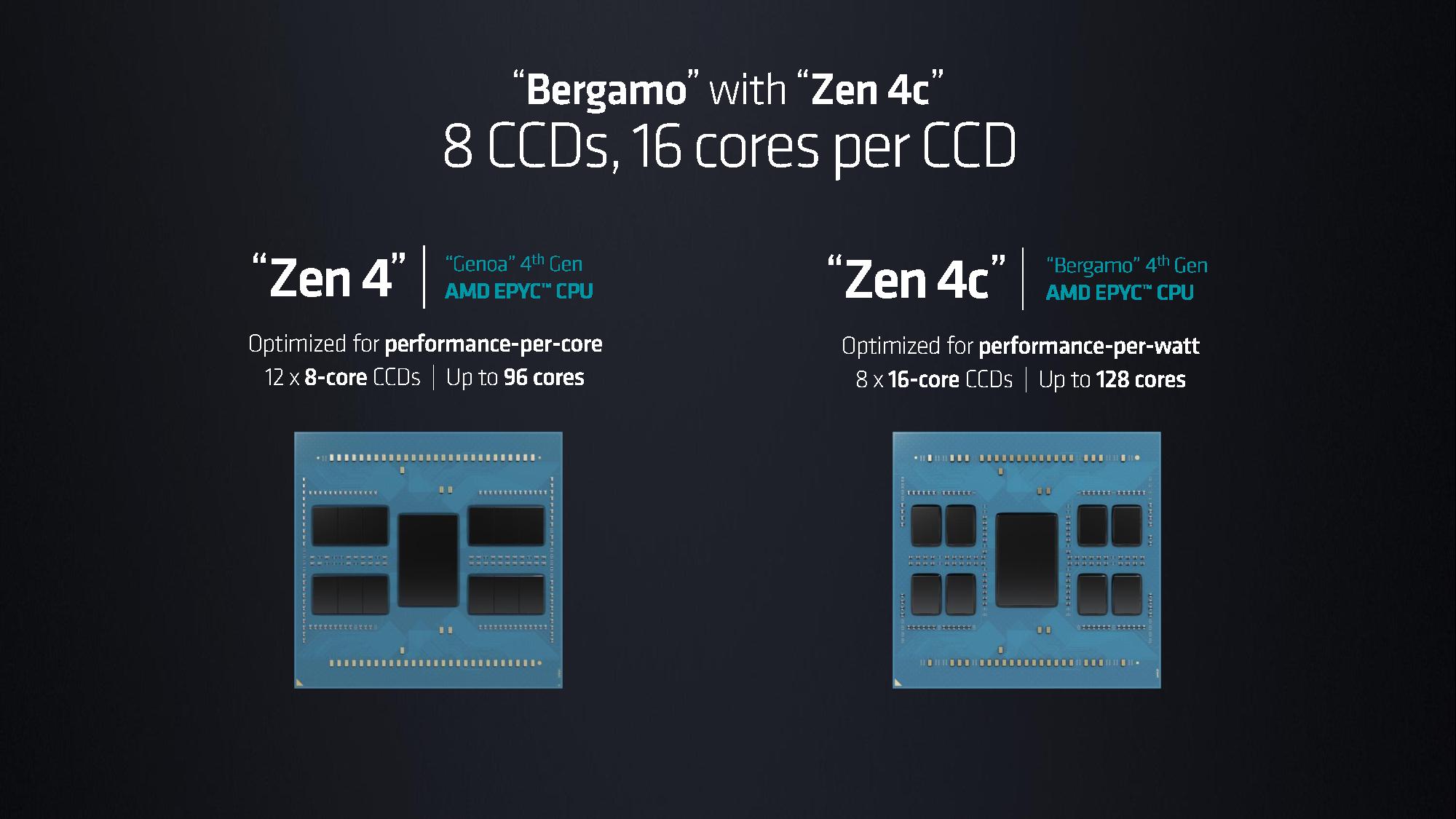

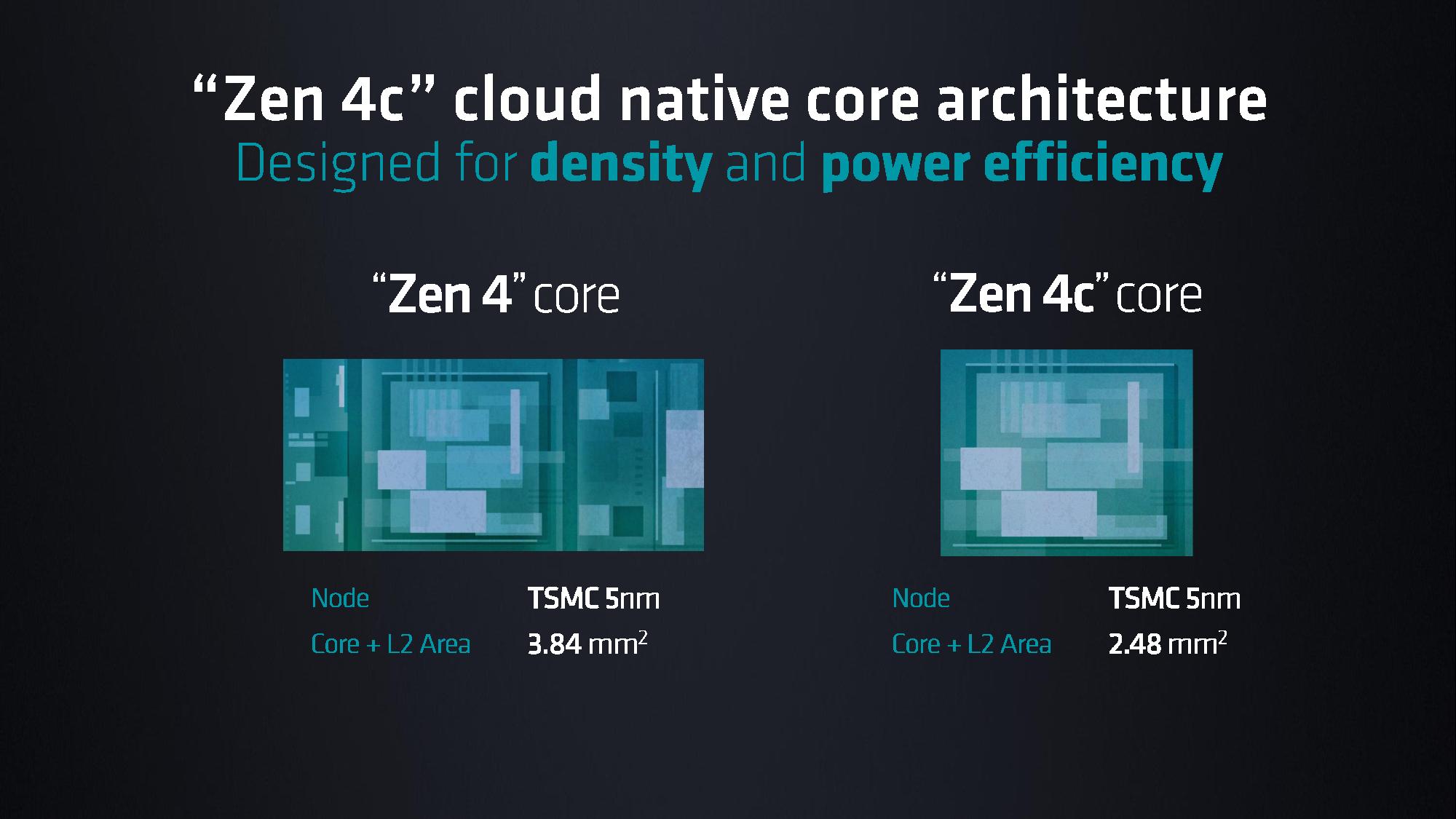

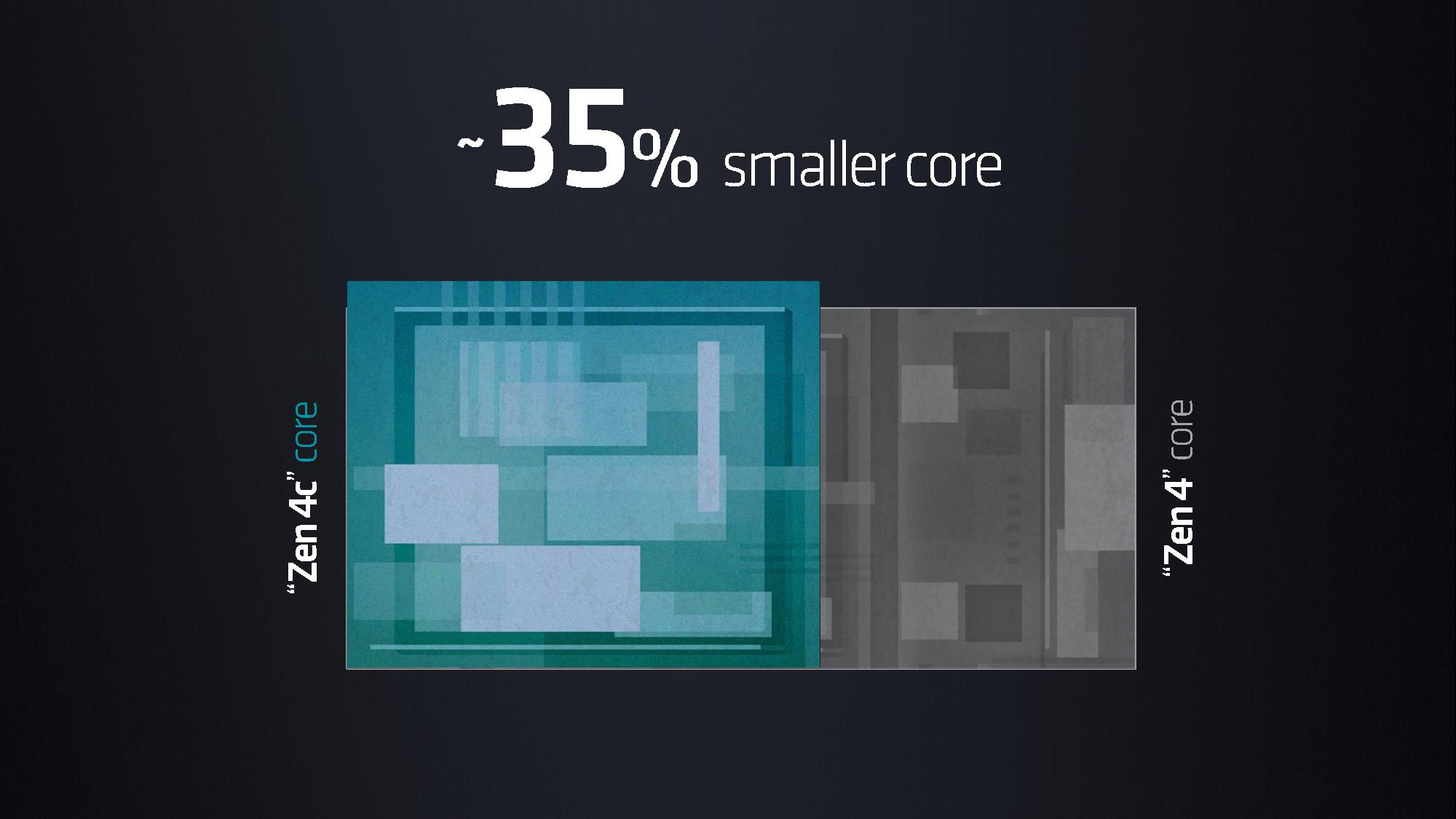

AMD shared a few broad strokes about the Bergamo architecture, including that it has a core + L3 cache area of 2.48mm^2, which is 35% smaller than the 3.84mm^2 that it achieved on the same process node with the standard Zen 4 cores. AMD employs eight 16-core CCDs to reach the peak core count of 128 cores.
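The 35% figure can be checked directly from the two quoted areas. This is only a quick arithmetic sketch using the core + L3 numbers from the article; the variable and function names are illustrative.

```python
# Check the quoted area reduction for core + L3 cache.
ZEN4_AREA_MM2 = 3.84    # standard Zen 4 core + L3, from the article
ZEN4C_AREA_MM2 = 2.48   # Zen 4c core + L3, from the article


def area_reduction(old: float, new: float) -> float:
    """Fractional area saved when going from `old` to `new`."""
    return 1 - new / old


def density_gain(old: float, new: float) -> float:
    """How many `new` cores fit in the area of one `old` core."""
    return old / new


if __name__ == "__main__":
    print(f"Area reduction: {area_reduction(ZEN4_AREA_MM2, ZEN4C_AREA_MM2):.1%}")
    print(f"Density gain:   {density_gain(ZEN4_AREA_MM2, ZEN4C_AREA_MM2):.2f}x")
```

With L3 included, the saving works out to roughly 35%, or about 1.55 Zen 4c cores in the footprint of one Zen 4 core. That is less than a halving, so the "halves the area" claim presumably refers to the core alone, without the cache.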

It's also interesting to note that at present, AMD uses just eight Zen 4C chiplets with the central IO chiplet, whereas the standard EPYC chips use up to twelve Zen 4 chiplets. Could we see a future Zen 4C solution with twelve chiplets and 192-cores? Perhaps, though AMD hasn't announced such a design yet so we'll have to wait and see.

We're learning more deep-dive architectural details of the chips today, so stay tuned for further coverage.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


bit_user

> AMD's Bergamo has 128 cores ... These chips will compete with Intel's 144-core Sierra Forest chips

Okay, so the headline on the live blog about "144-Core EPYC Bergamo" was just a mix up, then? I thought AMD had pulled a fast one!

Seemed plausible, as they could use 12 CCDs with 12 Zen 4c cores, each. We know the IO Die of EPYC can handle 12 CCDs, so it's not a big stretch to imagine.

JarredWaltonGPU

> bit_user said: Okay, so the headline on the live blog about "144-Core EPYC Bergamo" was just a mix up, then? I thought AMD had pulled a fast one!
>
> Seemed plausible, as they could use 12 CCDs with 12 Zen 4c cores, each. We know the IO Die of EPYC can handle 12 CCDs, so it's not a big stretch to imagine.

I would assume so. (I confirmed with Paul that it's 128-core and have updated that HL.) More importantly, a future 12 CCD variant with 192-cores should be possible. It's just not ready (or not needed?) yet.

bit_user

> JarredWaltonGPU said: I would assume so. More importantly, a future 12 CCD variant with 192-cores should be possible. It's just not ready (or not needed?) yet.

I figured 144 cores, because maybe that's all they had enough memory bandwidth or power envelope to support. Perhaps we'll see.

-Fran-

So it was 2:1 (ish)! That's a nice ratio for the dense/smol/concentrated/efficient/little/cucumber cores, for sure.

Looking forward to the benchmarks in server racks!

Regards.

HideOut

"AMD's 128-core EPYC Bergamo processors are the industry's first x86 could native CPUs, which are designed for the highest core density with an optimized Zen 4c core that halves the area needed for each core."

What the heck is that supposed to say?

motocros1

> HideOut said: "AMD's 128-core EPYC Bergamo processors are the industry's first x86 could native CPUs, which are designed for the highest core density with an optimized Zen 4c core that halves the area needed for each core."
>
> What the heck is that supposed to say?

I believe it should be "cloud native CPUs" instead of "could native CPUs".

bit_user

> -Fran- said: So it was 2:1 (ish)! That's a nice ratio for the dense/smol/concentrated/efficient/little/cucumber cores, for sure.

That must be including L3 cache, because the article then goes on to show the slide comparing the cores and states:

"AMD shared a few broad strokes about the Bergamo architecture, including that it has a core + L3 cache area of 2.48mm^2, which is 35% smaller than the 3.84mm^2"

bit_user

> deesider said: I assume these Zen 4c cores are a precursor to the mixed core desktop lineup?

There are rumors of hybrid AMD CPUs, but they've so far focused on the laptop market.

Xajel

> JarredWaltonGPU said: I would assume so. (I confirmed with Paul that it's 128-core and have updated that HL.) More importantly, a future 12 CCD variant with 192-cores should be possible. It's just not ready (or not needed?) yet.

I personally thought they would have released the 192 core version already, but it could be down to demand or some other technical difficulties.

Technical challenges I can think of now:

1. Denser cores required denser traces in the interposer, they might need more time to engineer this.

2. The IO die doesn't have enough resources to handle 12x16C chiplets, they might need a new IO die for this.

3. Going beyond 128C will give very little benefits because of memory bandwidth limitations.

4. Power limitations means going beyond 128C means even lower clocks than now, making the benefits questionable.

5. A combination of above and other factors I missed.