AMD Reveals Instinct MI210 PCIe Card for Exascale HPC

MI210 PCIe does 22.6 TFLOPS

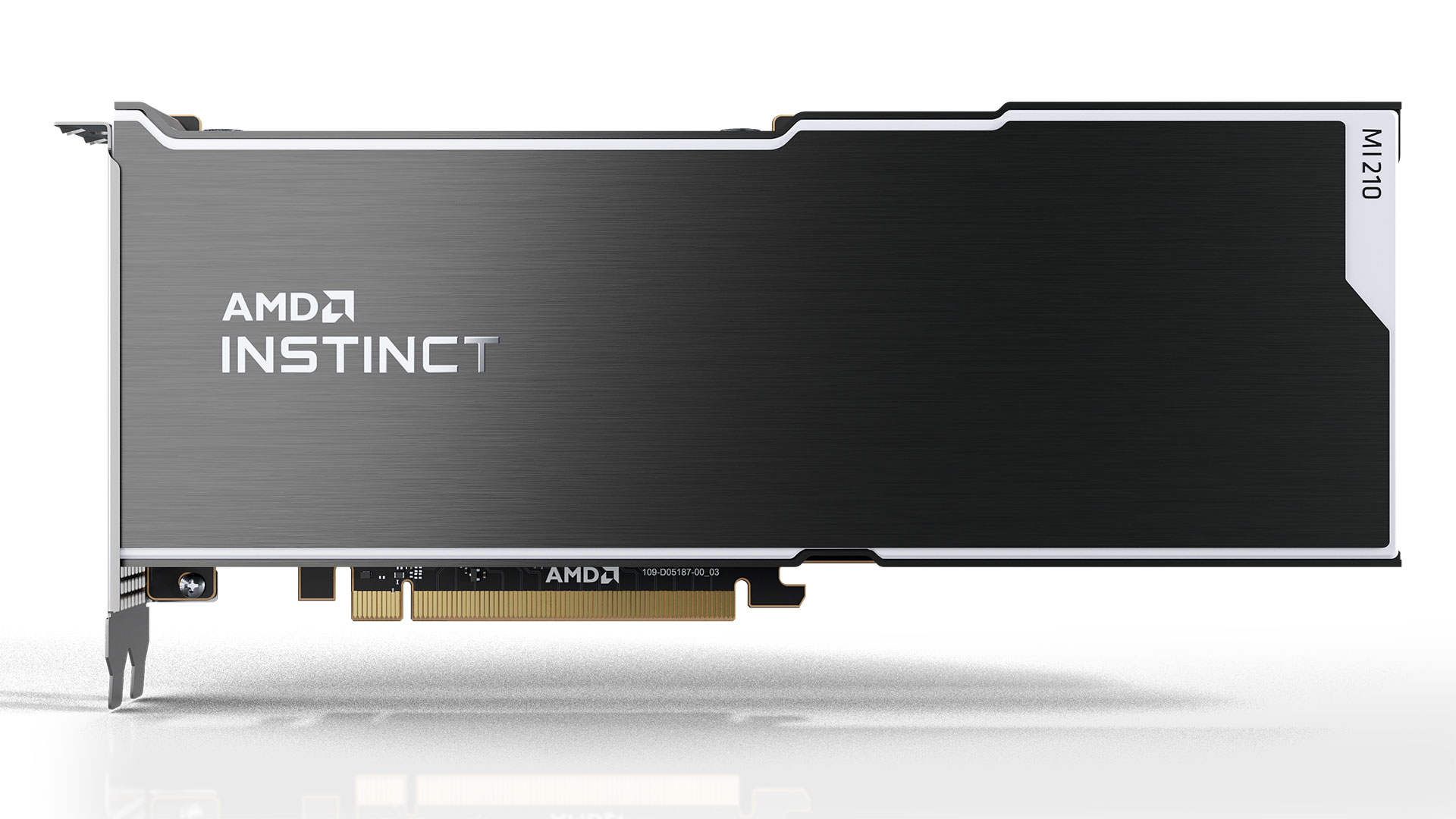

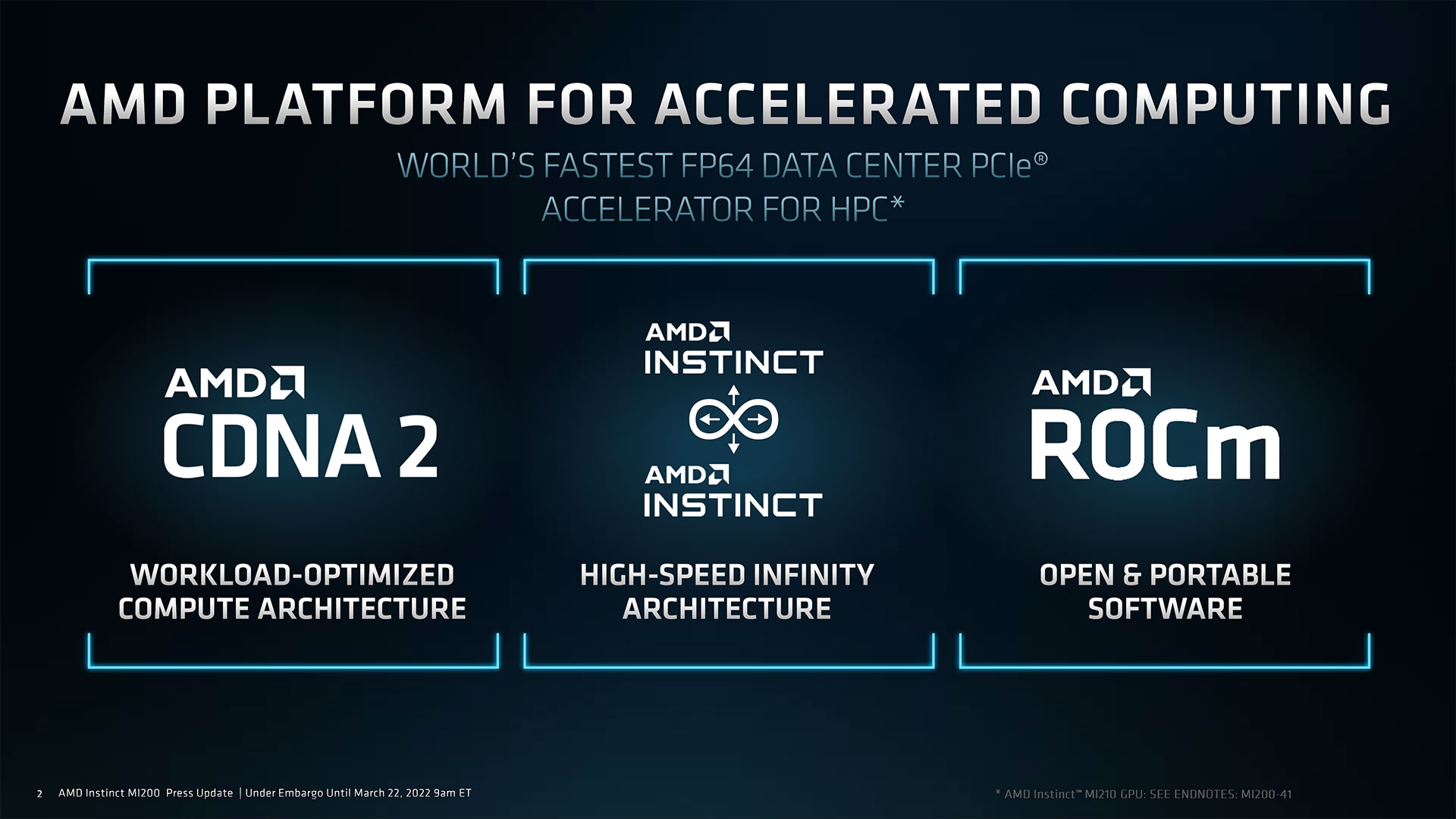

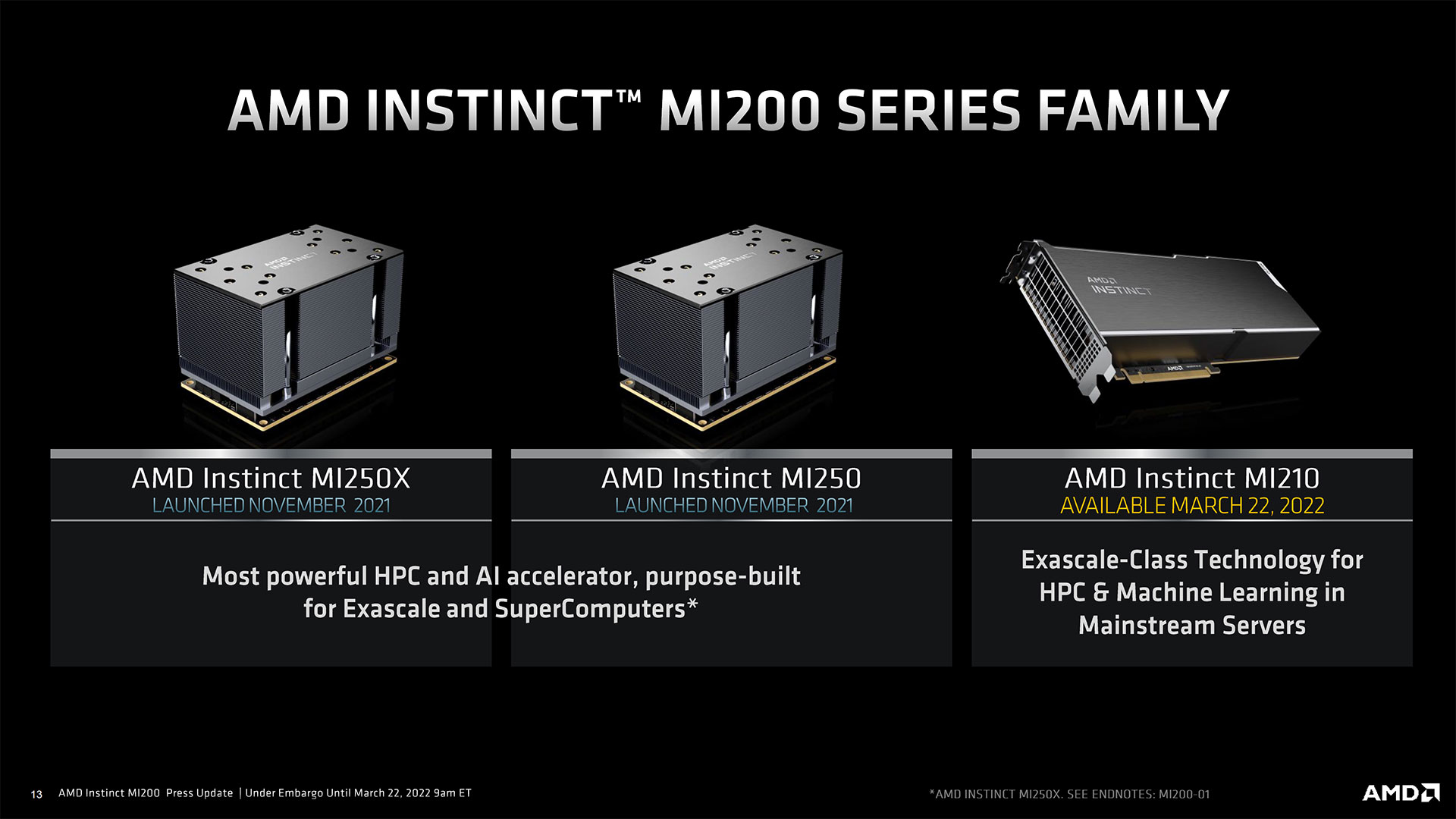

AMD has officially introduced its Instinct MI210 PCIe accelerator card for datacenters, servers, and workstations that need high compute performance. The Instinct MI210 is based on one CDNA 2 compute GPU and carries 64GB of HBM2E memory. With this card, AMD kicks off its offensive on the market of commercial high-performance computing (HPC), where Nvidia has been thriving for well over a decade.

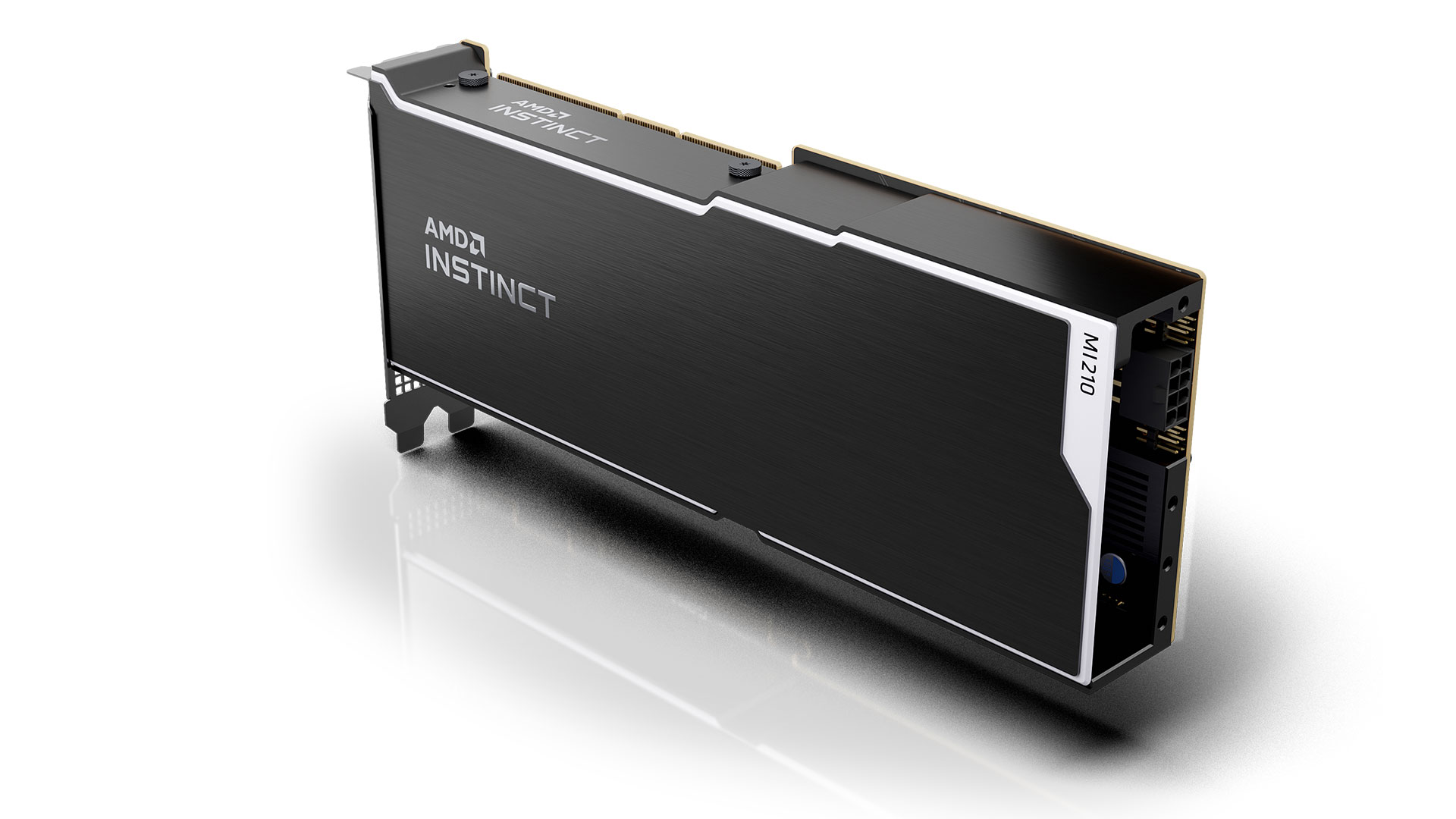

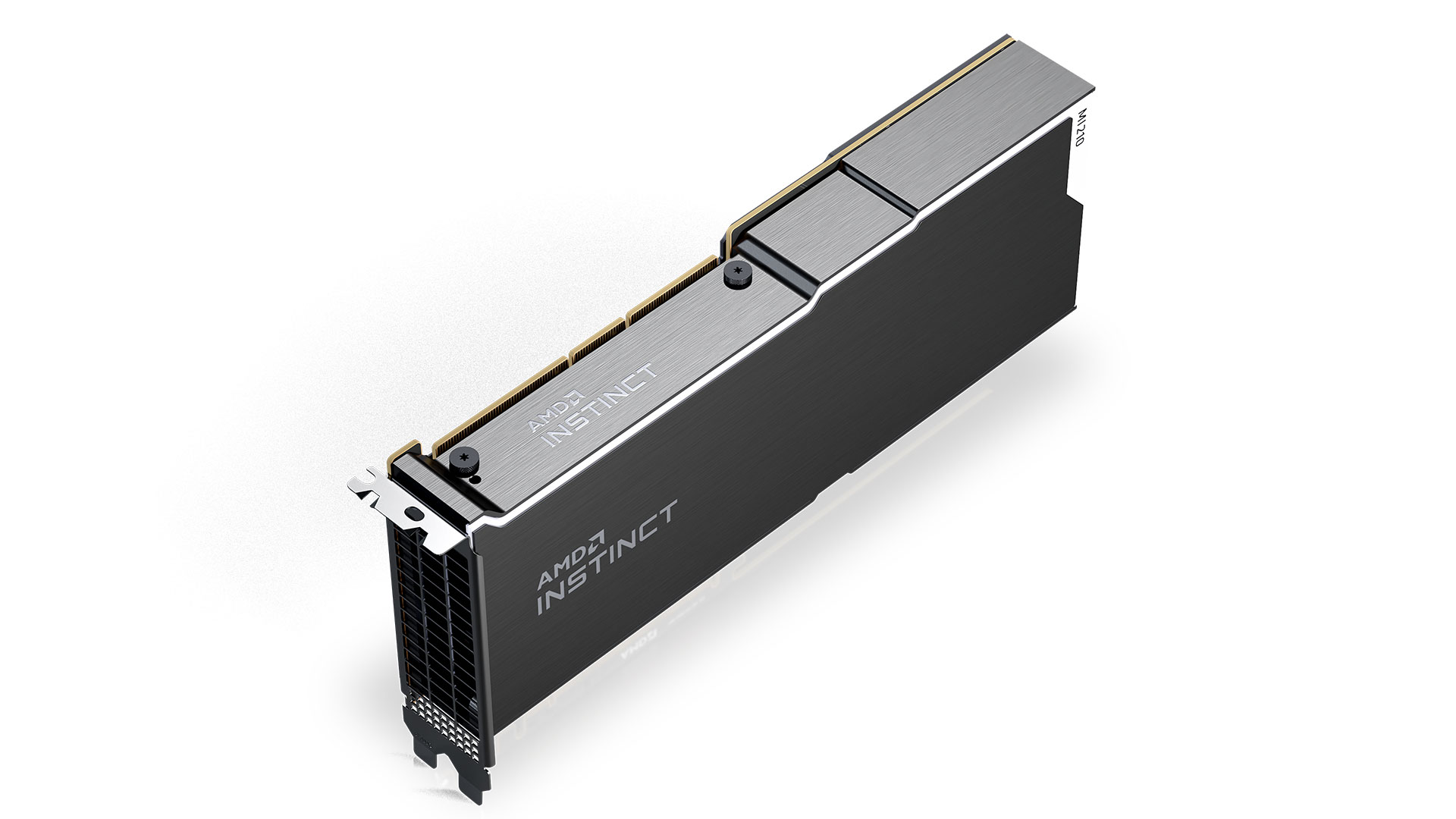

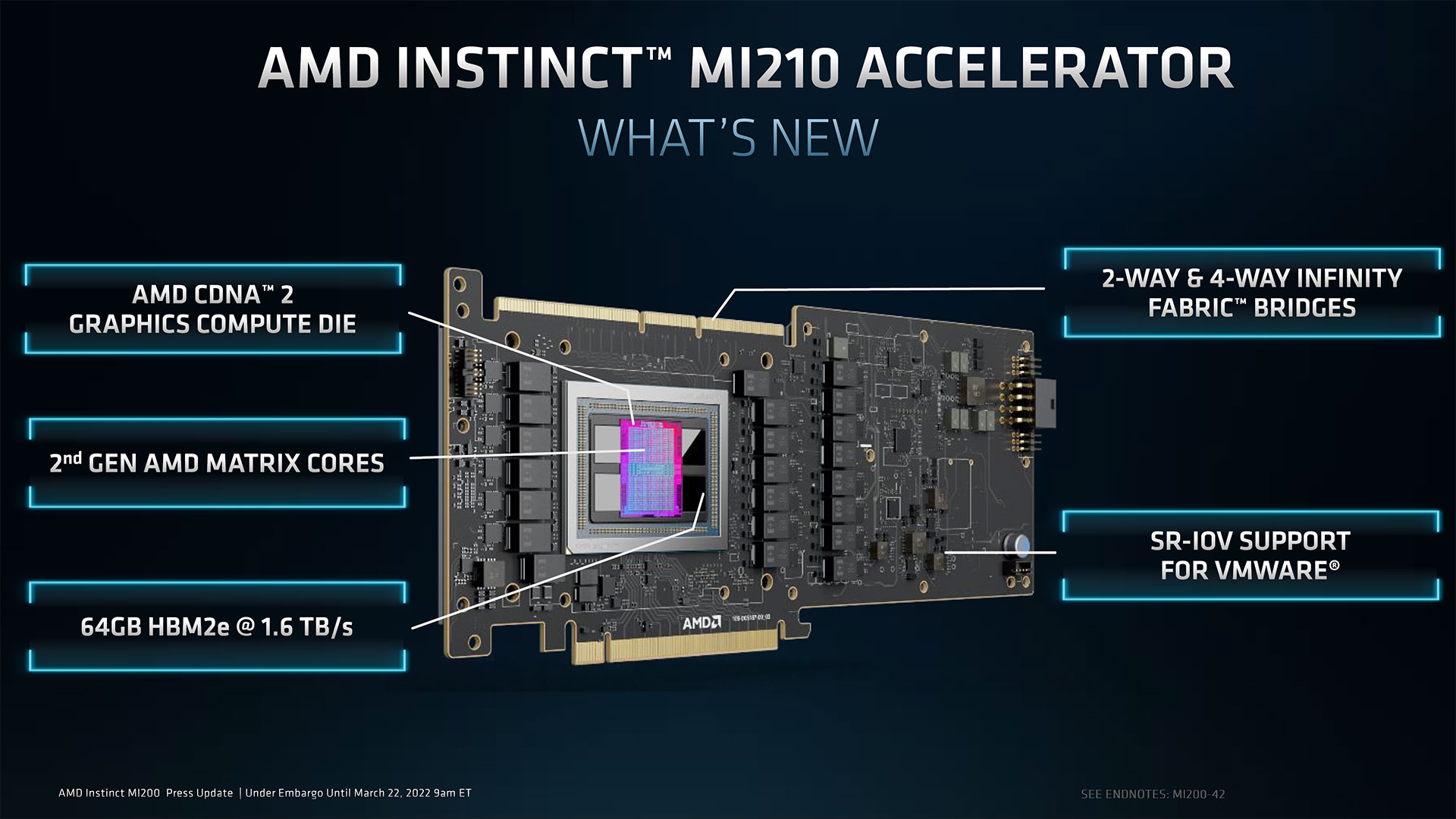

Unlike the larger MI250/MI250X, which use dual chips, the AMD Instinct MI210 card uses a single CDNA 2 graphics compute die (GCD) with 104 compute units (6,656 stream processors). Its 64GB of HBM2E ECC memory sits on a 4096-bit interface. The card has 2-way and 4-way Infinity Fabric connectors, so two or four cards can work together in a single system to double or quadruple peak performance. The board uses a passive dual-wide cooling system, so it's designed for rack-mounted servers or special workstations and not your typical desktop PC. It requires one eight-pin auxiliary PCIe power connector, which means its maximum power consumption using the PCIe slot and connector is only 225W.
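Two of the figures in that paragraph are simple arithmetic, and a short Python sketch makes the reasoning explicit. The compute unit and stream processor counts come from the article; the 75W slot and 150W eight-pin limits are the usual PCIe power allowances and are an assumption here, since the article only quotes the 225W total. The function names are made up for illustration.

```python
# Figures taken from the article.
COMPUTE_UNITS = 104
STREAM_PROCESSORS = 6656

# Assumption (standard PCIe power allowances, not stated in the article):
# an x16 slot supplies up to 75 W and one eight-pin connector up to 150 W.
SLOT_POWER_W = 75
EIGHT_PIN_POWER_W = 150


def stream_processors_per_cu(stream_processors: int, compute_units: int) -> int:
    """Return stream processors per compute unit (must divide evenly)."""
    per_cu, remainder = divmod(stream_processors, compute_units)
    if remainder:
        raise ValueError("stream processors do not divide evenly across CUs")
    return per_cu


def max_board_power_w(eight_pin_connectors: int = 1) -> int:
    """Upper bound on board power from the slot plus auxiliary connectors."""
    return SLOT_POWER_W + eight_pin_connectors * EIGHT_PIN_POWER_W


if __name__ == "__main__":
    print(stream_processors_per_cu(STREAM_PROCESSORS, COMPUTE_UNITS))  # 64
    print(max_board_power_w())  # 225
```

This is only an upper bound implied by the connectors, not a measured power draw.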

In terms of compute capabilities, the Instinct MI210 board supports the same data formats (FP64/FP32 vector/matrix, FP16, bfloat16, INT8) as other Instinct MI200-series CDNA 2 compute GPUs. In terms of performance, however, the Instinct MI210 offers half the oomph of the Instinct MI250, which has two GCDs, comes in an OCP accelerator module (OAM) form-factor, and naturally consumes significantly more power.
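The "half the oomph" relationship follows from the usual peak-throughput formula: stream processors times FLOPs per clock times clock speed. The sketch below is a rough check, not AMD's own calculation. It assumes a 1.7 GHz peak engine clock and one fused multiply-add (two FLOPs) per stream processor per clock; neither value is stated in the article. The stream processor counts (6,656 for the MI210 and 13,312 for the MI250) are the article's.

```python
# Assumption: 1.7 GHz peak engine clock (not stated in the article).
PEAK_CLOCK_GHZ = 1.7
# Assumption: one fused multiply-add per stream processor per clock = 2 FLOPs.
FLOPS_PER_CLOCK = 2


def peak_vector_tflops(stream_processors: int,
                       clock_ghz: float = PEAK_CLOCK_GHZ) -> float:
    """Theoretical peak vector throughput in TFLOPS."""
    # stream processors * FLOPs/clock * GHz gives GFLOPS; divide by 1000 for TFLOPS.
    return stream_processors * FLOPS_PER_CLOCK * clock_ghz / 1000.0


mi210 = peak_vector_tflops(6656)    # single GCD
mi250 = peak_vector_tflops(13312)   # two GCDs

print(f"MI210: {mi210:.1f} TFLOPS")
print(f"MI250: {mi250:.1f} TFLOPS")
print(f"MI210 / MI250: {mi210 / mi250:.2f}")
```

Under these assumptions the results line up with the 22.6 TFLOPS and 45.3 TFLOPS vector figures AMD quotes, which suggests the halving comes purely from the GCD count rather than from lower clocks.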

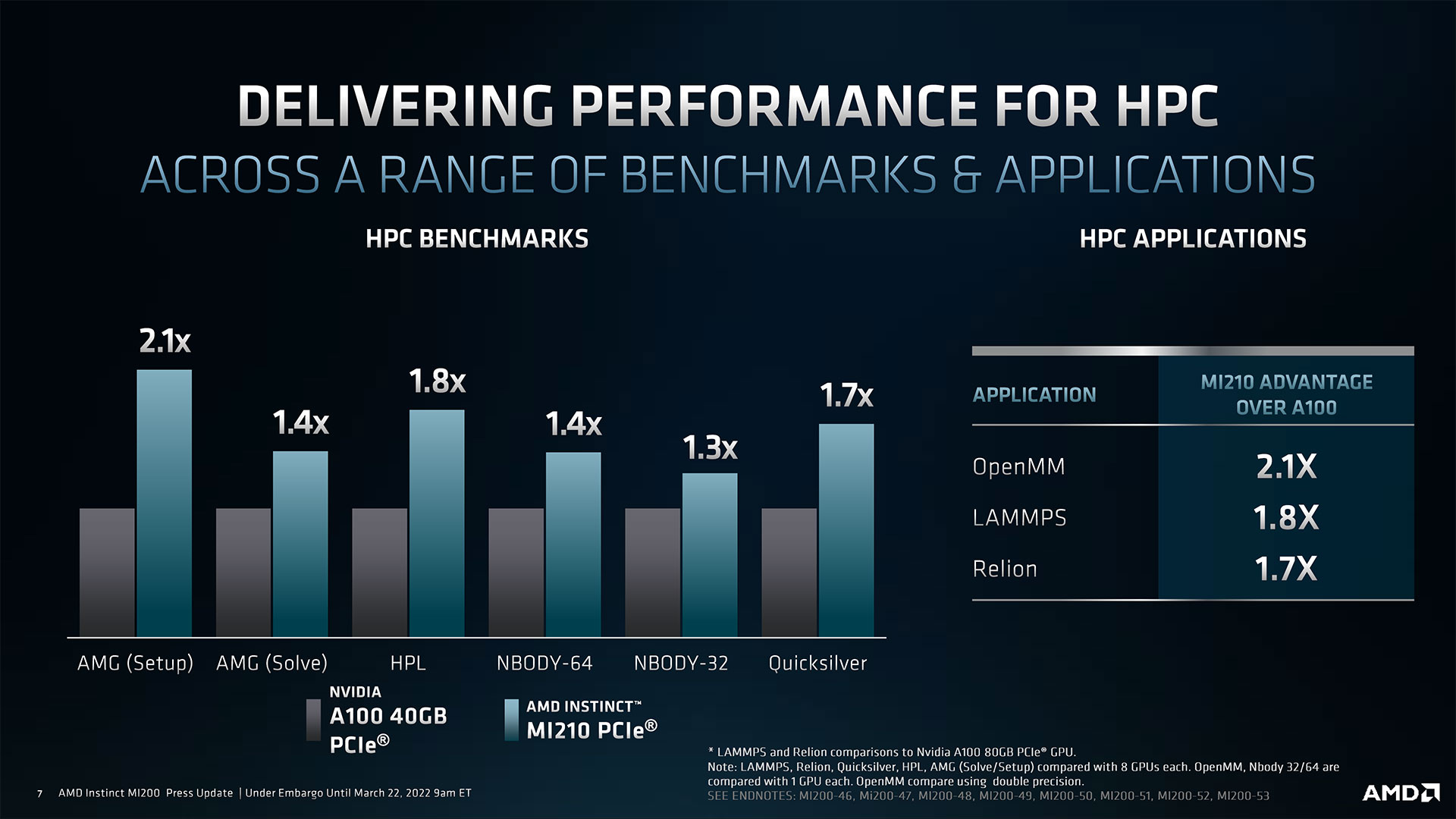

The obvious rival for AMD's Instinct MI210 board is Nvidia's A100 80GB PCIe card, whose 80GB version was launched about a year ago. On paper, AMD's CDNA 2-based solution outpaces its rival in FP64/FP32 vector and matrix operations by over two times. By contrast, Nvidia's A100 is faster with AI/ML-specific formats like FP16, bfloat16, and INT8.

|  | Instinct MI210 | Nvidia A100 80GB PCIe | Instinct MI250 | Instinct MI250X |
| --- | --- | --- | --- | --- |
| Compute Units | 104 | - | 208 | 220 |
| Stream Processors | 6,656 | 6,912 | 13,312 | 14,080 |
| FP64 Vector (Tensor) | 22.6 TFLOPS | 19.5 TFLOPS | 45.3 TFLOPS | 47.9 TFLOPS |
| FP64 Matrix | 45.3 TFLOPS | 9.7 TFLOPS | 90.5 TFLOPS | 95.7 TFLOPS |
| FP32 Vector | 22.6 TFLOPS | 9.7 TFLOPS (?) | 45.3 TFLOPS | 47.9 TFLOPS |
| FP32 Tensor Float | - | 156 / 312* TFLOPS | - | - |
| FP32 Matrix | 45.3 TFLOPS | 19.5 TFLOPS | 90.5 TFLOPS | 95.7 TFLOPS |
| FP16 | 181 TFLOPS | 312 / 624* TFLOPS | 362.1 TFLOPS | 383 TFLOPS |
| bfloat16 | 181 TFLOPS | 312 / 624* TFLOPS | 362.1 TFLOPS | 383 TFLOPS |
| INT8 | 181 TOPS | 624 / 1,248* TOPS | 362.1 TOPS | 383 TOPS |
| HBM2E ECC Memory | 64GB | 80GB | 128GB | 128GB |
| Memory Bandwidth | 1.6 TB/s | 1.935 TB/s | 3.2 TB/s | 3.2 TB/s |
| Form-Factor | PCIe card | PCIe card | OAM | OAM |

*with sparsity
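To put a number on the A100's lead in the AI/ML-specific formats, the sketch below divides the A100's dense (non-sparsity) figure by the MI210's figure for the FP16, bfloat16, and INT8 rows. The values are copied from the table; the dictionary layout and function name are illustrative only, and these are paper peaks rather than measured results.

```python
# Dense (non-sparsity) peak figures copied from the table above.
# Units are TFLOPS for FP16/bfloat16 and TOPS for INT8.
PEAK = {
    "FP16":     {"MI210": 181.0, "A100": 312.0},
    "bfloat16": {"MI210": 181.0, "A100": 312.0},
    "INT8":     {"MI210": 181.0, "A100": 624.0},
}


def a100_advantage(fmt: str) -> float:
    """Return the A100's peak figure divided by the MI210's for one format."""
    row = PEAK[fmt]
    return row["A100"] / row["MI210"]


for fmt in PEAK:
    print(f"{fmt}: A100 is {a100_advantage(fmt):.2f}x the MI210")
```

With sparsity enabled, the A100 figures double, so the gap in those rows would double as well.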

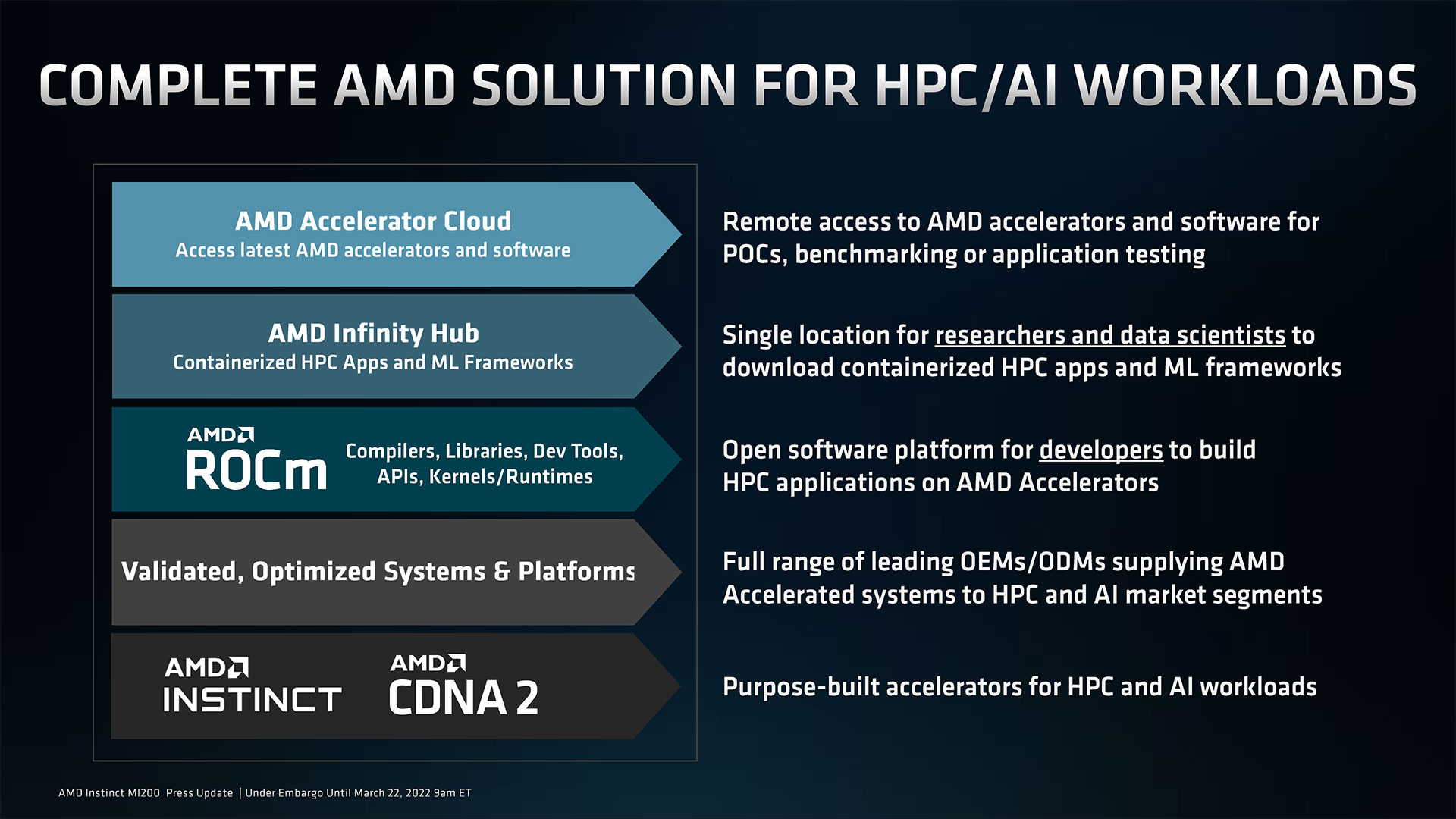

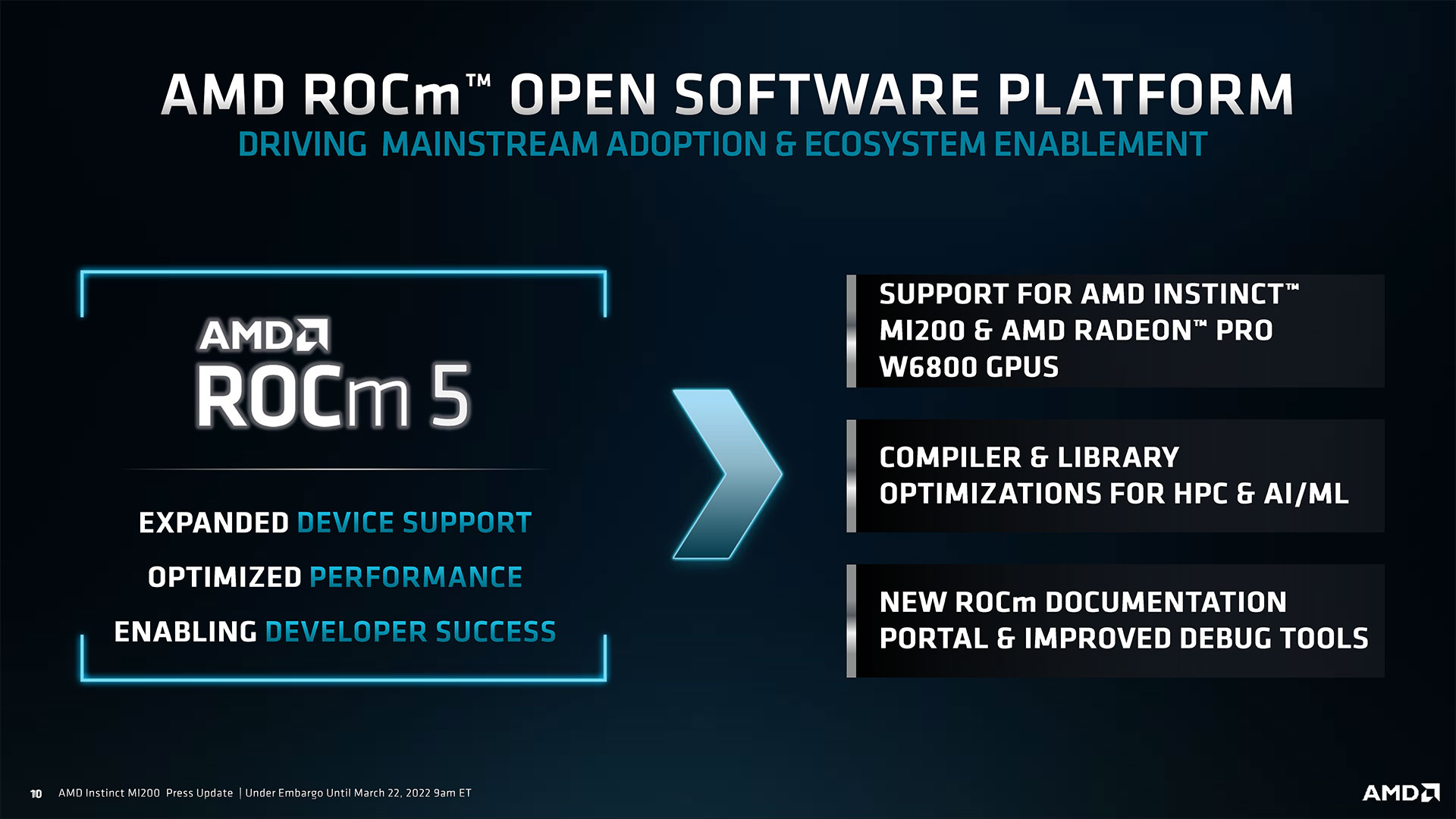

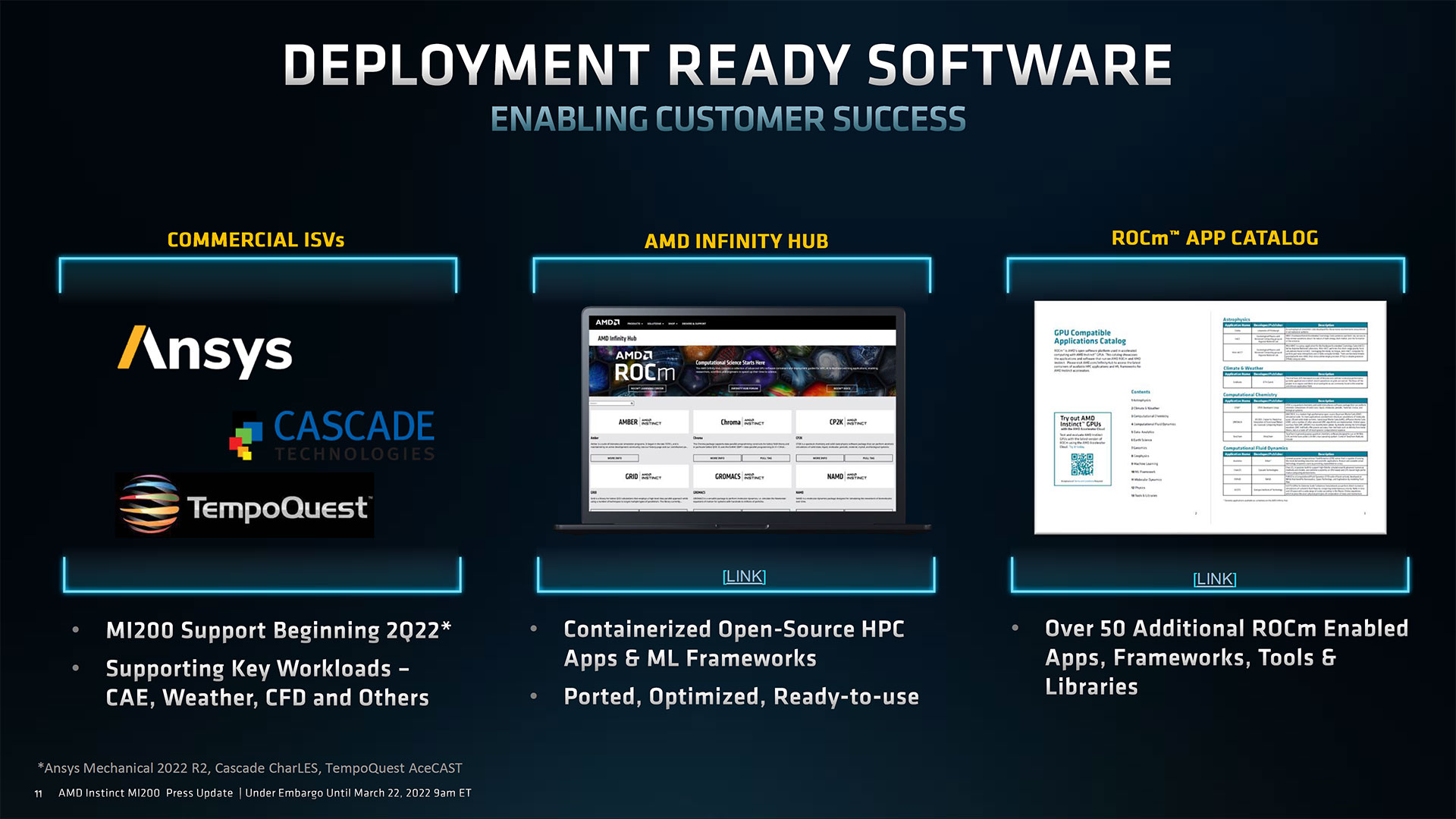

Along with its Instinct MI210 card, AMD is also launching its ROCm 5 software development platform, which allows users to efficiently program AMD's GPUs and supports various open frameworks (TensorFlow/PyTorch), libraries (MIOpen/BLAS/RCCL), programming model (HIP), interconnect (OCD), and so on. In addition, AMD has Infinity Hub, which hosts HPC and AI/ML apps already optimized for AMD's GPUs.

But perhaps the most important part of today's announcement is that AMD is teaming up with various server makers to offer machines equipped with its Instinct MI210 cards. In the coming months, such systems will be available from Asus, Colfax, Dell, Exxact, Gigabyte, HPE, KOI, Lenovo, Nor-Tech, Supermicro, and Penguin.

At present, many businesses and enterprises that require high-performance AI/ML or HPC capabilities can only get Nvidia-based machines from well-known OEMs. With AMD's Instinct MI210, they now have another option. We do not expect AMD to capture a sizeable share of this commercial HPC market anytime soon, because there are dozens of programs already developed and optimized for Nvidia's CUDA platform, and it is going to take a long time before a comparable library of software is available for AMD's ROCm platform.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.