Graphics processors supercharge everyday apps

Chapel Hill (NC) - Graphics processors were originally developed to take a massive processing workload off the CPU, but some scientists are now examining how they can accelerate non-graphics applications as well. The Geometric Algorithms for Modeling, Motion, and Animation (GAMMA) Research Group at the University of North Carolina at Chapel Hill reported this week that Nvidia's 7800 GTX reference card increased the speed of test applications by up to 35x.

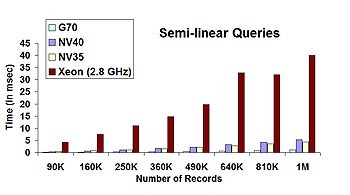

Researchers with GAMMA said they found enormous performance capabilities in Nvidia's newest graphics card, which substantially outpaces its predecessor, the GeForce 6800 Ultra. Compared with the 6800, the 7800 tripled performance; compared with running the same tests on the CPU alone, without the help of a graphics chip, the speed gains ranged from 8x to 35x. The results point to the removal of a bandwidth bottleneck, which may clear the way for co-processing libraries and software development kits (SDKs) for everyday applications such as spreadsheets and database management systems.

"It seems to me that the floating-point bandwidth on the new hardware is much more than on a 6800 Ultra," reported Naga K. Govindaraju, Research Assistant Professor at UNC's Department of Computer Science, in an interview with Tom's Hardware Guide. "On a 6800 Ultra, we are, in some manners, very limited...Since the bandwidth is not good enough on the card, we were still not able to use the full performance of the card. On a 7800 GTX, it seems to me that the floating-point bandwidth is much higher."

This technique of essentially pretending everything is a game, Prof. Govindaraju stated, reduces the critical elements of everyday functions such as sorting algorithms to a single instruction, which the graphics processor then applies across multiple pipelines at once. Recent test results presented by the GAMMA team compared the performance of their GPUsort algorithm with a conventional, sequential QuickSort, compiled first with Microsoft Visual C++ and then with Intel's C++ compiler, which is optimized for Hyper-Threading. Sorting an array of 18 million elements took the Visual C++ build about 21 seconds; GPUsort finished the same job in under 2 seconds. Hyper-Threading and the Intel compiler cut QuickSort's time to about 17 seconds.
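To see why sorting maps well onto many pipelines at once, consider a sorting network, in which every pass applies the same compare-and-swap step to every element independently. The sketch below is a plain-Python bitonic sorting network, offered as an illustration of that general data-parallel approach; it is not the GAMMA team's GPUsort code. It assumes the input length is a power of two, and the function name `bitonic_sort` is hypothetical.

```python
def bitonic_sort(values):
    """Sort a list whose length is a power of two with a bitonic network.

    Within each (k, j) pass, every compare-and-swap touches a disjoint
    pair of elements, so all of them could run simultaneously; that is
    the property a GPU exploits across its pipelines.
    """
    n = len(values)
    if n & (n - 1):
        raise ValueError("length must be a power of two")
    a = list(values)
    k = 2
    while k <= n:                  # size of the bitonic runs being merged
        j = k // 2
        while j > 0:               # distance between compared elements
            for i in range(n):     # on a GPU, every i would run at once
                partner = i ^ j
                if partner > i:    # handle each pair exactly once
                    ascending = (i & k) == 0
                    # Swap when the pair is out of order for this run's direction
                    if (a[i] > a[partner]) == ascending:
                        a[i], a[partner] = a[partner], a[i]
            j //= 2
        k *= 2
    return a


print(bitonic_sort([7, 3, 6, 1, 8, 2, 5, 4]))
```

A bitonic network performs O(n log² n) comparisons, more than QuickSort's O(n log n) on average, but its fixed, uniform passes suit hardware that executes one instruction over many data elements, where QuickSort's data-dependent branching does not.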

One of the goals of the GAMMA team's work is to show how much of the computing power in everyday PCs lies dormant, especially in mere productivity applications as opposed to computation-rich 3D games. Profs. Manocha and Govindaraju agree that general-purpose computation libraries for programs such as Excel and Matlab could be a first step toward SDKs that use graphics chips full-time as math coprocessors.

Prof. Manocha also pointed out that GPU performance is increasing faster than CPU performance. "If you look at [both] computation power and rasterization power," stated Prof. Manocha, "in the last six years, [performance for] PC graphics cards has grown at a [factor] of 2 or 2.25 per year, whereas CPUs are barely doubling every 18 months." He added that he expects this trend to continue as both ATI and Nvidia produce their next generations of graphics cards in 2006.
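Compounding the rates Prof. Manocha cites shows how quickly the gap widens. The back-of-the-envelope sketch below assumes each rate held steadily over the full six years, which is a simplification rather than a measurement; `compound_growth` is a hypothetical helper written for illustration.

```python
def compound_growth(factor_per_period, periods):
    """Illustrative helper: total growth after `periods` steps of `factor_per_period`."""
    return factor_per_period ** periods


YEARS = 6

# GPUs: a factor of 2 to 2.25 per year, per Prof. Manocha's estimate
gpu_low = compound_growth(2.0, YEARS)
gpu_high = compound_growth(2.25, YEARS)

# CPUs: doubling every 18 months, i.e. YEARS * 12 / 18 doublings
cpu = compound_growth(2.0, YEARS * 12 / 18)

print(f"GPU: {gpu_low:.0f}x to {gpu_high:.0f}x, CPU: {cpu:.0f}x")
```

Under these assumptions, six years of 2x to 2.25x annual growth compounds to roughly 64x to 130x, while four CPU doublings yield 16x.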
