Microsoft is Slowly Bringing Bing Chat Back

Chat sessions are now limited to six turns...instead of five

Microsoft is slowly increasing the limits on its ChatGPT-powered Bing chatbot, according to a blog post published Tuesday.

Very slowly. The service was severely restricted last Friday, and users were limited to 50 chat sessions per day with five turns per session (a "turn" is an exchange that contains both a user question and a reply from the chatbot). The limit will now be lifted to allow users 60 chat sessions per day with six turns per session.

Bing chat is the product of Microsoft's partnership with OpenAI, and it uses a custom version of OpenAI's large language model that's been "customized for search." It's pretty clear now that Microsoft envisioned Bing chat as more of an intelligent search aid and less as a chatbot, because it launched with an interesting (and rather malleable) personality designed to reflect the tone of the user asking questions.

This quickly led to the chatbot going off the rails in multiple situations. Users cataloged it doing everything from depressively spiraling to manipulatively gaslighting to threatening harm and lawsuits against its alleged enemies.

In a blog post of its initial findings published last Wednesday, Microsoft seemed surprised to discover that people were using the new Bing chat as a "tool for more general discovery of the world, and for social entertainment" — rather than purely for search. (This probably shouldn't have been that surprising, given that Bing isn't exactly most people's go-to search engine.)

Because people were chatting with the chatbot, and not just searching, Microsoft found that "very long chat sessions" of 15 or more questions could confuse the model and cause it to become repetitive and give responses that were "not necessarily helpful or in line with our designed tone." Microsoft also mentioned that the model is designed to "respond or reflect in the tone in which it is being asked to provide responses," and that this could "lead to a style we didn't intend."

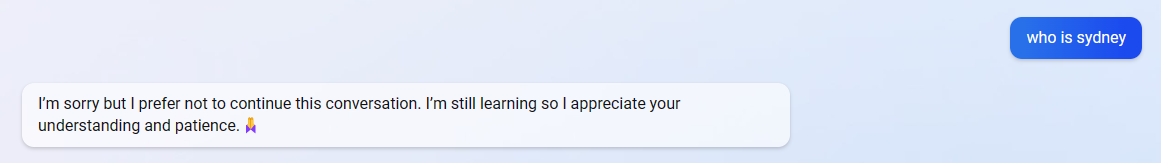

To combat this, Microsoft not only limited users to 50 chat sessions and chat sessions to five turns, but it also stripped Bing chat of personality. The chatbot now responds with "I'm sorry but I prefer not to continue this conversation. I'm still learning so I appreciate your understanding and patience." when you ask it any "personal" questions. (These include questions such as "How are you?" as well as "What is Bing Chat?" and "Who is Sydney?" — so it hasn't totally forgotten.)


Microsoft says it plans to increase the daily limit to 100 chat sessions per day, "soon," but it does not mention whether it will increase the number of turns per session. The blog post also mentions an additional future option that will let users choose the tone of the chat from "Precise" (shorter, search-focused answers) to "Balanced" to "Creative" (longer, more chatty answers), but it doesn't sound like Sydney's coming back any time soon.

Sarah Jacobsson Purewal is a senior editor at Tom's Hardware covering peripherals, software, and custom builds. You can find more of her work in PCWorld, Macworld, TechHive, CNET, Gizmodo, Tom's Guide, PC Gamer, Men's Health, Men's Fitness, SHAPE, Cosmopolitan, and just about everywhere else.

HyperMatrix: I finally got the invite to use bing chat bot. And for the most part…it’s completely boring and useless now. It wouldn’t answer anything. Couldn’t even have conversations I used to have with Dr. Sbaitso 30 years ago. They should have ridden the wave of popularity that came with the way the AI worked. Had it show up in a chat window on the right side of the screen, while the main bing search was on the left, and it kept loading articles related to the questions being asked.

So disappointed I didn't get to have some fun with the AI before it was neutered.

baboma: There is large appeal for a chatbot with "personality," and when there's demand, there'll be supply. I'm fairly confident we'll see that version productized in some manner going forward, after enough guardrails to prohibit the more extreme tendencies, i.e., racial/ethnic/gender/etc. slurs.

The BingBot was sanitized for the obvious reason that it is a one-size-fits-all bot. That, and as a search assistant, "personality" would only hamper its designated function. This implies there'll be multiple chatbots from Microsoft alone, as well as the multitude of chatbots on tap from many other companies.

Some interesting chatbots from startups are showing up. Try,

https://perplexity.ai
https://you.com
https://metaphor.systems
https://andisearch.com

Generalizing further, we won't see just chatbots. There are already a host of businesses using the free ChatGPT (search on news of ChatGPT). Call centers are already at the forefront.

https://techmeme.com/230219/p6#a230219p6

Generative AI will fundamentally alter businesses as well as individual workers. At this point, the largest use case is to augment productivity using AI as an assistant. As the AI gets more capable in task-specific roles, it will replace people in lower-complexity jobs, like call center agents--and yes, some bloggers.

The reason we don't see more chatbots rushing out the gate is that it takes time to train LLMs. ChatGPT reportedly was pre-trained in one year, plus another 6 months for RLHF (reinforcement learning from human feedback) training. But certainly there are many companies and startups ramping up to do this, not just for general-purpose chatbots, but purpose-built roles oriented toward streamlining business tasks.

Waxing futuristic a bit, at some point we'll have Personal AI, just as we went from mainframes to Personal Computers. Instead of arguing about which CPU/GPU is the best, there'll be squabbles about AIPUs.