Nvidia Hints at Hopper GPU Reveal Next Week

A 'can't-miss' keynote is coming March 22.

Nvidia, a company that certainly knows how to draw attention to itself and its products, has published a blog post that all but confirms its plan to introduce its next-generation compute GPU architecture, codenamed Hopper. The post is titled ‘Hopped Up,’ and it announces Jensen Huang’s keynote at the GTC conference, which will take place on Tuesday, March 22, 2022.

“Each GTC, Huang introduces powerful new ways to accelerate computing of all kinds, and tells a story that puts the latest advances in perspective,” the blog post reads. “Expect Huang to introduce new technologies, products and collaborations with some of the world’s leading companies.”

Nvidia's chief executive traditionally uses his GTC keynote to announce new flagship architectures and key technologies, so it would not be a surprise to see Jensen Huang unveil the company’s next-generation Hopper architecture for high-performance computing (HPC) and data centers on Tuesday.

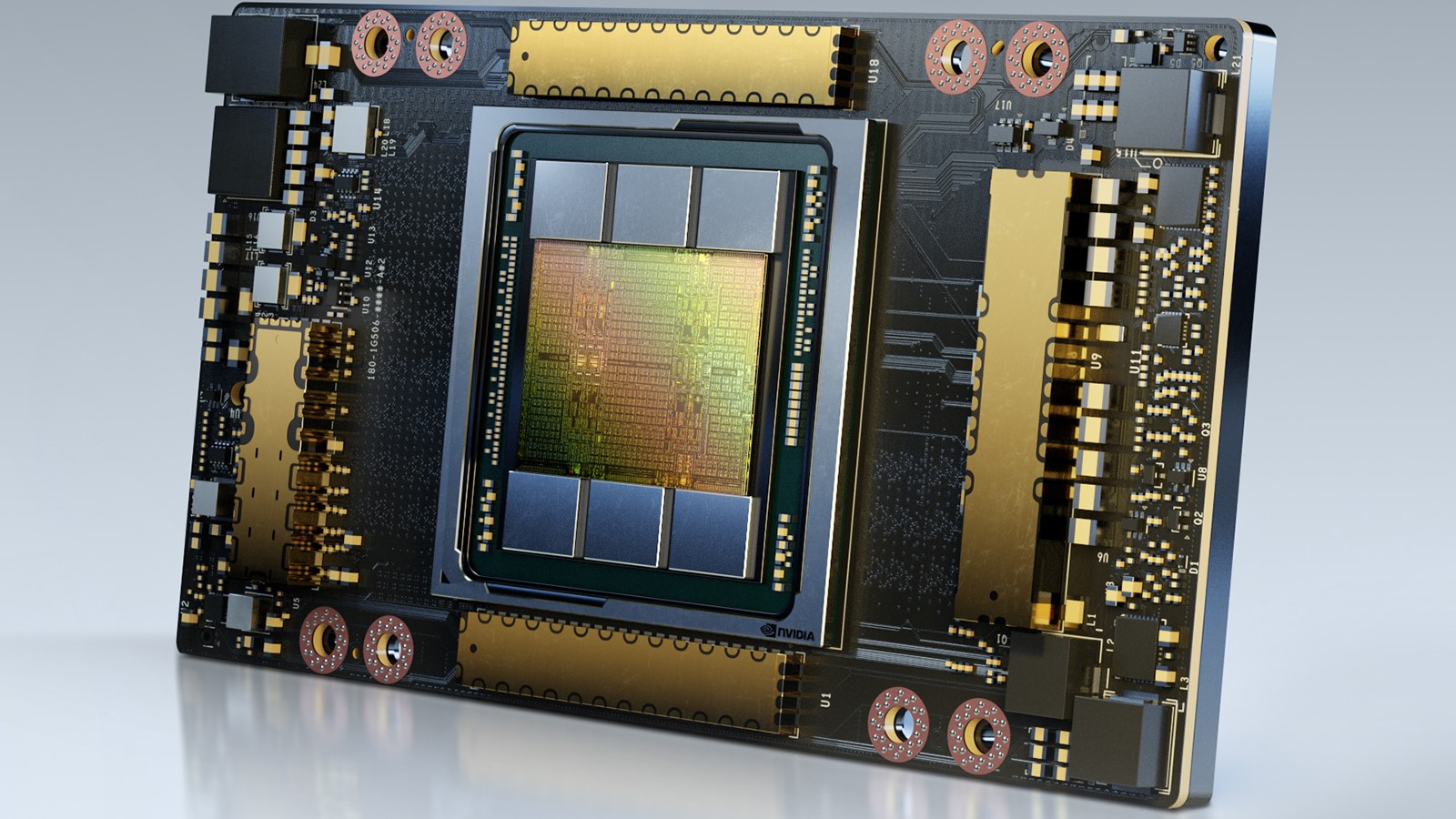

Nvidia’s Hopper will presumably be the company’s first multi-die compute GPU and will be made using TSMC’s N5 (5nm) fabrication technology. With its compute-oriented Ampere GPUs, Nvidia put a lot of emphasis on AI/DL/ML workloads and the data formats they use. While these GPUs also offered formidable performance in traditional HPC workloads that require FP64 precision, their key advantages lay in emerging workloads. We do not yet know Hopper's key features, but we will undoubtedly learn more about them next week.

Since modern data center and HPC workloads require immense compute power, developers of compute GPUs tend to build processors with as many execution units as possible to maximize performance. But a very large and complex GPU on a leading-edge fabrication process is expensive to develop and costly to manufacture, since a single tiny defect can ruin a large, pricey piece of silicon. As a result, companies like AMD, Nvidia, and Intel are moving to multi-tile designs with their next-generation compute GPU architectures.
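To put rough numbers on the yield argument, here is a minimal sketch using the textbook Poisson yield model, Y = exp(-D x A), where D is the defect density and A is the die area. The defect density (0.1 defects/cm²) and total die area (800 mm²) are hypothetical values chosen for illustration, not Nvidia or TSMC figures, and the model deliberately ignores wafer-edge losses, packaging yield, die-to-die interconnect overhead, and the common practice of salvaging partly defective dies by disabling units.

```python
import math

# Hypothetical inputs for illustration only (not vendor data).
DEFECT_DENSITY_PER_CM2 = 0.1   # assumed defects per cm^2
TOTAL_AREA_MM2 = 800.0         # assumed total silicon area of one GPU


def poisson_yield(defect_density_per_cm2: float, die_area_mm2: float) -> float:
    """Fraction of dies expected to be defect-free under a simple Poisson model."""
    area_cm2 = die_area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-defect_density_per_cm2 * area_cm2)


def silicon_per_good_gpu(defect_density_per_cm2: float, total_area_mm2: float, tiles: int) -> float:
    """Silicon area (mm^2) consumed per working GPU, assuming each tile is
    tested on its own and only known-good tiles are packaged together."""
    tile_area = total_area_mm2 / tiles
    tile_yield = poisson_yield(defect_density_per_cm2, tile_area)
    # Each good tile costs tile_area / tile_yield of silicon; a GPU needs `tiles` of them.
    return tiles * tile_area / tile_yield


if __name__ == "__main__":
    for tiles in (1, 2, 4):
        y = poisson_yield(DEFECT_DENSITY_PER_CM2, TOTAL_AREA_MM2 / tiles)
        cost = silicon_per_good_gpu(DEFECT_DENSITY_PER_CM2, TOTAL_AREA_MM2, tiles)
        print(f"{tiles} tile(s): per-tile yield {y:.1%}, ~{cost:.0f} mm^2 of silicon per good GPU")
```

Under these assumptions, splitting the same amount of logic into smaller tiles raises the yield of each piece, so fewer square millimeters of wafer are thrown away per working GPU. This is the basic economic pull toward multi-tile designs.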

Unfortunately, the event description does not reveal any specifics about Hopper. This year’s GTC will focus on “accelerated computing, deep learning, data science, digital twins, networking, quantum computing and computing in the data center, cloud and edge.”

Even before its formal introduction, Nvidia’s Hopper architecture has won at least one supercomputer contract. In late 2021, the National Renewable Energy Laboratory (NREL) announced a new supercomputer called Kestrel that will use what Nvidia calls the ‘A100Next Tensor Core compute GPU.’ The machine will come online in 2023 and will deliver approximately 44 FP64 PetaFLOPS, roughly what the world's seventh most powerful supercomputer offers today.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers, and from modern process technologies and the latest fab tools to high-tech industry trends.