Intel Core i5-661: Clarkdale Rings The Death Knell Of Core 2

Intel HD Graphics: Clarkdale’s On-Package GPU

In a fairly incredible contrast to the confusion that is its current processor model naming, Intel has done away with the numbering system used to identify past graphics and memory controller hubs by simply calling its integrated GPU Intel HD Graphics.

The company isn’t trying to spin this as a revolutionary update to its GMA X4500-series cores, which improved on past graphics cores and accelerated Blu-ray movie playback, but still weren’t an option for gaming enthusiasts. Rather, the message is that the home theater-oriented features have been improved, and the folks who play games like Bejeweled and The Sims should be able to enjoy their mainstream entertainment without a need for discrete graphics.

The 3D Core

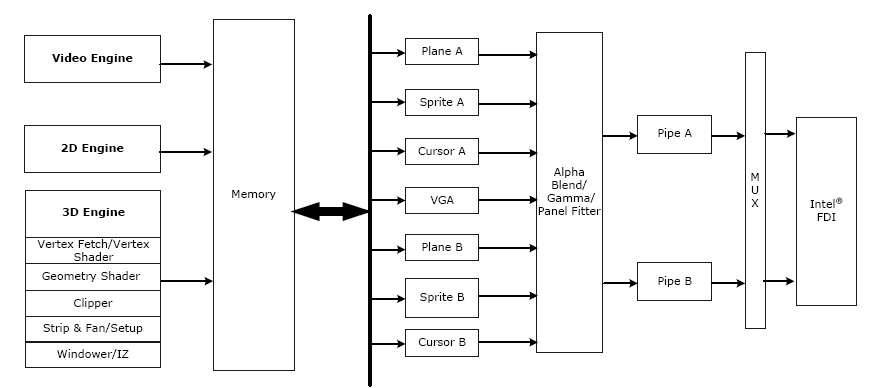

The engine driving Intel’s HD Graphics is very similar to what we’ve already seen in the G45’s GMA X4500HD, launched a year and a half ago. Organized as a unified shader architecture, HD Graphics does get a bit more compute horsepower via 12 scalar execution units (versus 10 in the generation prior). Moreover, the graphics clock on Intel’s Core i5-661 is uniquely high at 900 MHz, 100 MHz higher than G45’s GMA X4500HD. The rest of Intel’s launch SKUs sport lower 533 and 733 MHz graphics processors.
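To put those two changes together, here is a rough back-of-the-envelope sketch. It assumes that shader throughput scales linearly with execution unit count and clock rate, which is a simplification and not something Intel states; the function name is ours, and the 800 MHz figure for the GMA X4500HD follows from the 100 MHz gap quoted above.

```python
def relative_shader_throughput(execution_units: int, clock_mhz: float) -> float:
    """Naive proxy for shader throughput: execution units times clock (EU-MHz).

    Assumption: performance scales linearly with both factors. Real workloads
    also depend on memory bandwidth, drivers, and fixed-function hardware.
    """
    return execution_units * clock_mhz


hd_graphics = relative_shader_throughput(12, 900)  # Core i5-661
gma_x4500hd = relative_shader_throughput(10, 800)  # G45, 100 MHz lower clock

ratio = hd_graphics / gma_x4500hd
print(f"HD Graphics vs. GMA X4500HD: {ratio:.2f}x")  # prints 1.35x
```

On paper, then, the extra execution units and clock headroom are worth roughly a third more shader throughput, before any memory-side gains are counted.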

Official API support remains fixed at DirectX 10 and OpenGL 2.1 via hardware-accelerated Shader Model 4.0-compliant vertex and pixel shaders. Those are largely check-box specifications, though. In reality, the integrated GPU isn’t fast enough to drive a DirectX 10-class title at sufficient speed.

Of course, the on-package graphics core with its on-die memory controller promises a substantial boost to memory bandwidth, and not only in theory (from two channels of DDR3-1066 serving up to 17 GB/s to two channels of DDR3-1333 pushing up to 21 GB/s). The gain should also be palpable in the real world, as lower latencies enable higher utilization of available throughput, which you’ll see in our synthetic memory bandwidth numbers.
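Those theoretical figures fall out of simple arithmetic. The sketch below assumes the standard 64-bit (8-byte) DDR3 channel width and uses the nominal transfer rates in the module names; the function name is ours, for illustration only.

```python
def peak_bandwidth_gbs(transfers_mt_s: float, channels: int = 2,
                       bus_bytes: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10^9 bytes).

    transfers_mt_s: nominal data rate in megatransfers per second
    bus_bytes:      bytes moved per transfer per channel (64-bit bus assumed)
    """
    return transfers_mt_s * 1e6 * bus_bytes * channels / 1e9


for name, rate in (("DDR3-1066", 1066), ("DDR3-1333", 1333)):
    print(f"Dual-channel {name}: {peak_bandwidth_gbs(rate):.1f} GB/s")
# Dual-channel DDR3-1066: 17.1 GB/s
# Dual-channel DDR3-1333: 21.3 GB/s
```

These are ceilings, not measurements. Achieved throughput depends on latency and utilization, which is where the real-world difference shows up.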

Performance is further improved by Hierarchical Z and Fast Z Clear, two components originally featured in ATI’s HyperZ suite, which was designed to maximize the use of available memory bandwidth and prevent unnecessary overdraw on Radeon GPUs back in 2000. Intel makes up to 1.7 GB of system memory available to graphics, as with its previous-generation integrated graphics core, but there’s really no reason to dedicate that much RAM in light of the GPU’s performance characteristics.


Just how significant an improvement is HD Graphics over the GMA X4500HD? We set both platforms up in World of Warcraft and ran circuits around Dalaran for 60 seconds at a time with the aim of finding out.

| World of Warcraft, Dalaran Circuit, 60 Seconds (FRAPS) | 1680x1050, Ultra | 1280x1024, Ultra | 1024x768, Ultra | 1024x768, Low |
|---|---|---|---|---|
| Intel GMA HD Graphics | 5.017 | 6.000 | 6.550 | 22.317 |
| Intel GMA X4500HD Graphics | 3.550 | 3.800 | 3.667 | 14.700 |
| AMD Radeon HD 4200 (IGP) | 3.967 | 4.583 | 5.633 | 39.150 |

Performance is clearly relative here. Yes, the HD Graphics implementation is significantly better than anything Intel has offered in the past. Yes, you can play World of Warcraft on a Clarkdale-based processor (specifically, the Core i5-661, the only model with a 900 MHz graphics clock). But in order to do so, you’ll need to drop your resolution and detail settings so far that the game is no longer enjoyable.

Intel can put a feather in its cap for besting AMD's 785G-based Radeon HD 4200 graphics core across the board in World of Warcraft with Ultra settings enabled. Drop to Low quality settings, though, and the 785G takes off ahead. And even then, I wouldn't want to play this fairly mainstream game on the Radeon HD 4200, either.
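For a sense of scale, the short sketch below turns the World of Warcraft results into ratios. It assumes the table values are average frames per second as reported by FRAPS (the table itself doesn't label the unit); the variable and function names are ours.

```python
settings = ["1680x1050 Ultra", "1280x1024 Ultra", "1024x768 Ultra", "1024x768 Low"]

# Results copied from the World of Warcraft table (assumed to be average FPS).
fps = {
    "HD Graphics":    [5.017, 6.000, 6.550, 22.317],
    "GMA X4500HD":    [3.550, 3.800, 3.667, 14.700],
    "Radeon HD 4200": [3.967, 4.583, 5.633, 39.150],
}


def ratios(a: str, b: str) -> list[float]:
    """Per-setting ratio of GPU a's result to GPU b's result."""
    return [x / y for x, y in zip(fps[a], fps[b])]


vs_x4500hd = ratios("HD Graphics", "GMA X4500HD")
vs_hd4200 = ratios("HD Graphics", "Radeon HD 4200")

for setting, r1, r2 in zip(settings, vs_x4500hd, vs_hd4200):
    print(f"{setting:16} {r1:.2f}x vs. X4500HD, {r2:.2f}x vs. HD 4200")
```

Run as written, this puts HD Graphics between about 1.4x and 1.8x the GMA X4500HD across all four settings. Against the Radeon HD 4200, it leads by roughly 1.2x to 1.3x at Ultra and drops to about 0.6x at Low.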

As a general rule, if you’re working with 3D, this is not the GPU you’ll want to use. If your games of choice are online, in 2D, Intel’s HD Graphics processor will likely suffice.


- Zoonie: Well... I think that takes care of the dreaded "But can it play Crysis?" question regarding its GMA :D :P :P

- eklipz330: can i ask why you teased us at the end with the 4.5ghz OC but didn't include them in the benchmarks? =

- cangelini:

  > xc0mmiex: Video on page 1 not working ... "This is a private video..."

  Fixed! Had to keep it private pre-launch :)

- I really like the improvements Larrabee brought about....not! I do like the fact they are making progress but they really need to skip ahead a few generations or buy out some other company to design a GPU for themselves.

- gkay09: ^ Many more reasons to buy AMD Phenoms II X4 in the mid-range segment...

  Only drawback with the AMD CPUs is the power consumption, that I feel can be brought down with slight undervolting...

- dtemple: I'm looking to upgrade from my Athlon X2 @ 2.7GHz because I do more with the computer now than I did before - sometimes I'll play a game while my TV tuner is recording from my cable signal, and having more cores would help these multiple tasks run more smoothly.

  I was waiting until the Clarkdale-based i5 launched, thinking it would be a quad-core that was more competitively priced against the Phenom II X4, but it looks like a Phenom II X4 is my only option to get more cores for less money.

  The only good news coming out of this launch is that LGA1156 is not changing for the Clarkdale chips, so it looks to be the most future-proof platform to upgrade to, if one was so inclined. I'm personally going with a Phenom II since I can get one without changing motherboards. This is one of the more disappointing launches in the last year or so.

- cangelini:

  > eklipz330: can i ask why you teased us at the end with the 4.5ghz OC but didn't include them in the benchmarks? =

  We have another overclocking piece planned--I wanted to get a Core i3, at least, to include :)

- I would love to see what GTA IV would do do the dual cores in gaming! I do know that its a bear of a game on the CPU and it would truly show off if hyperthreading could actually make a major difference.

- maximus20895: Great video once again! Thanks for this and the review itself. Very informative. I really liked the graph on the first page too :)