
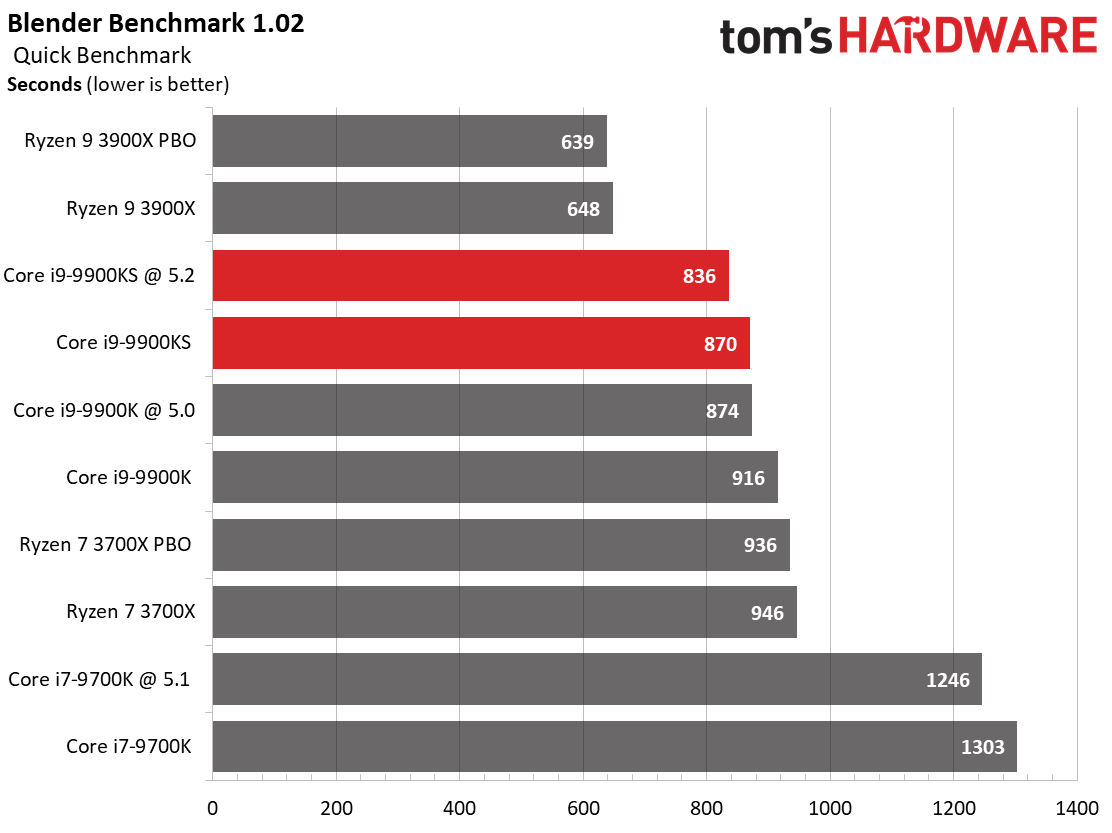

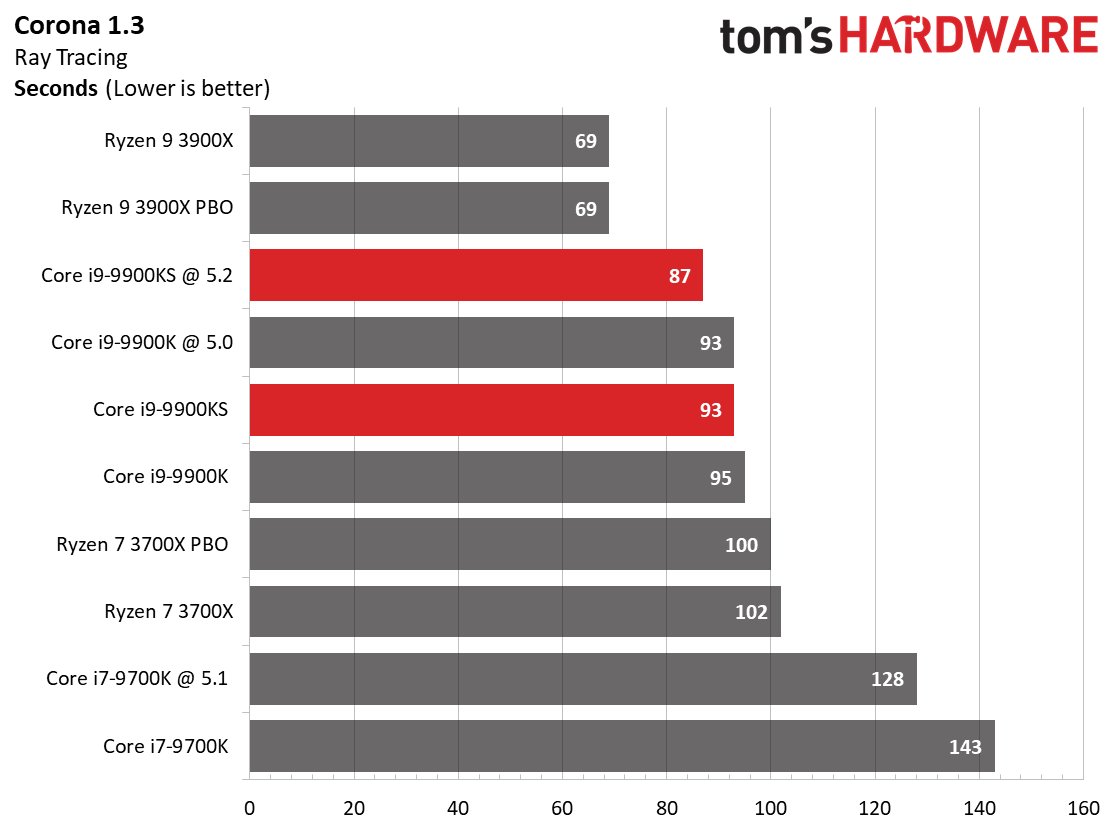

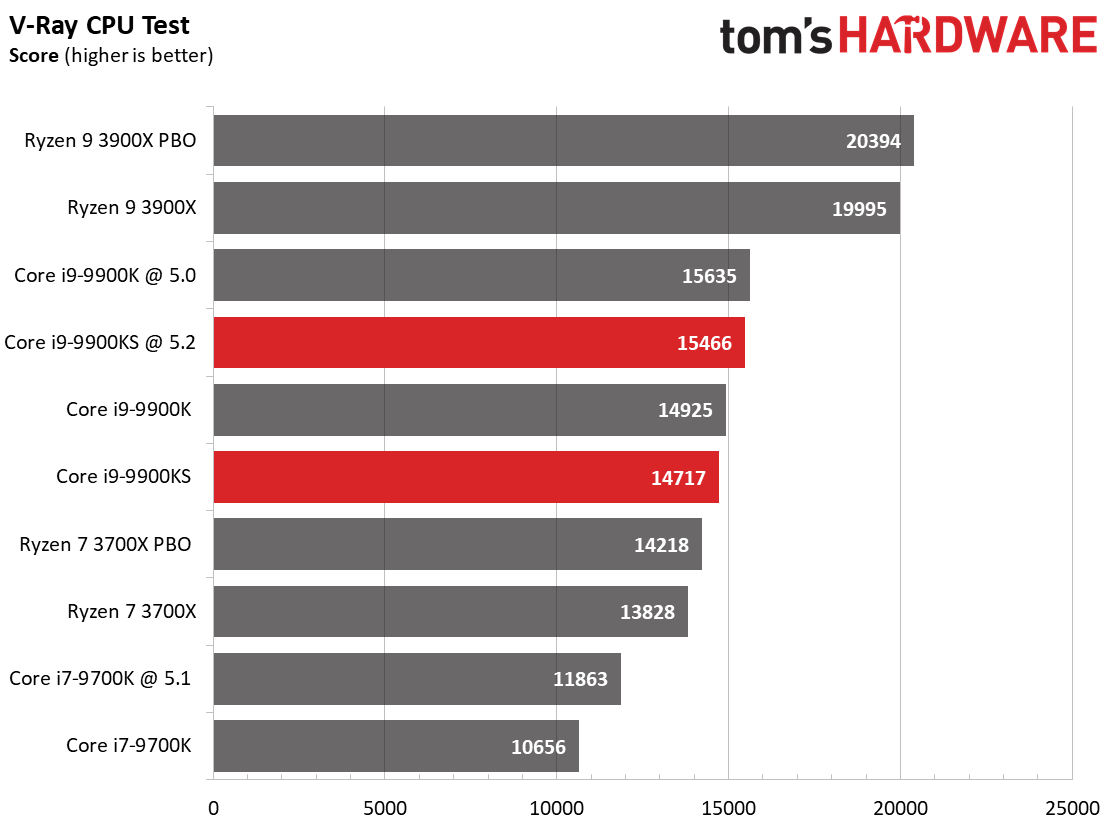

Rendering

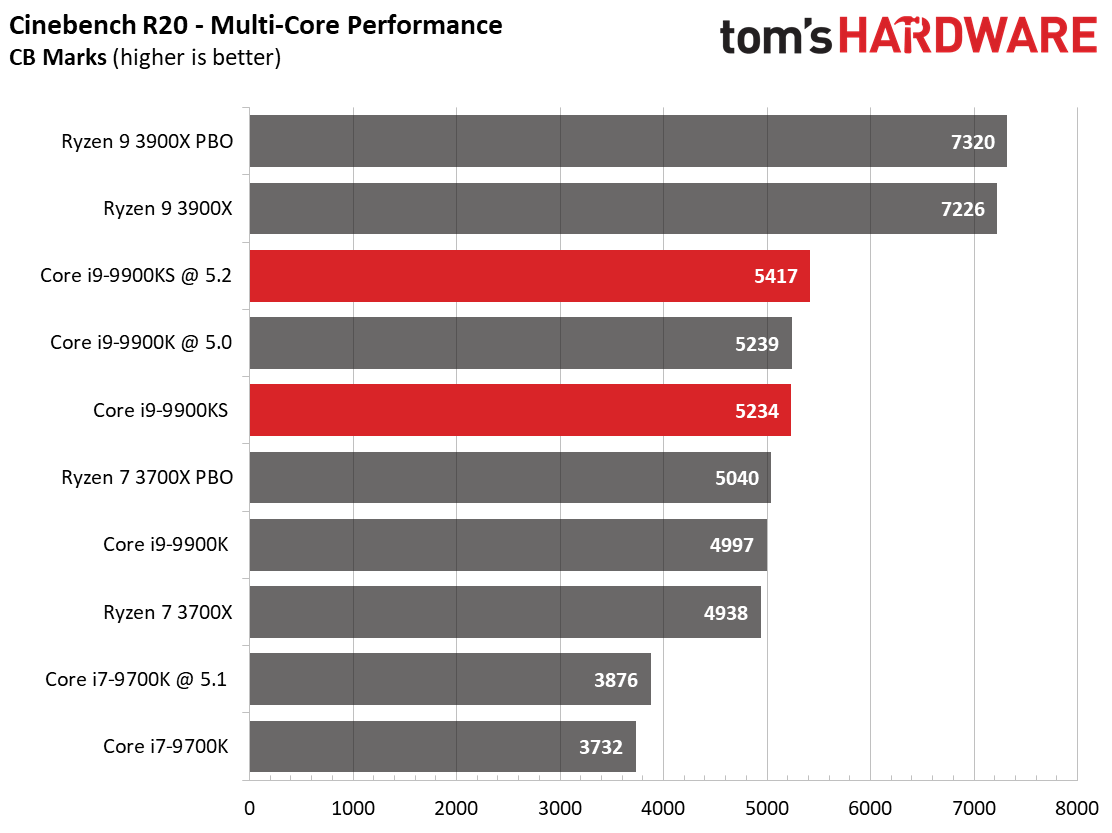

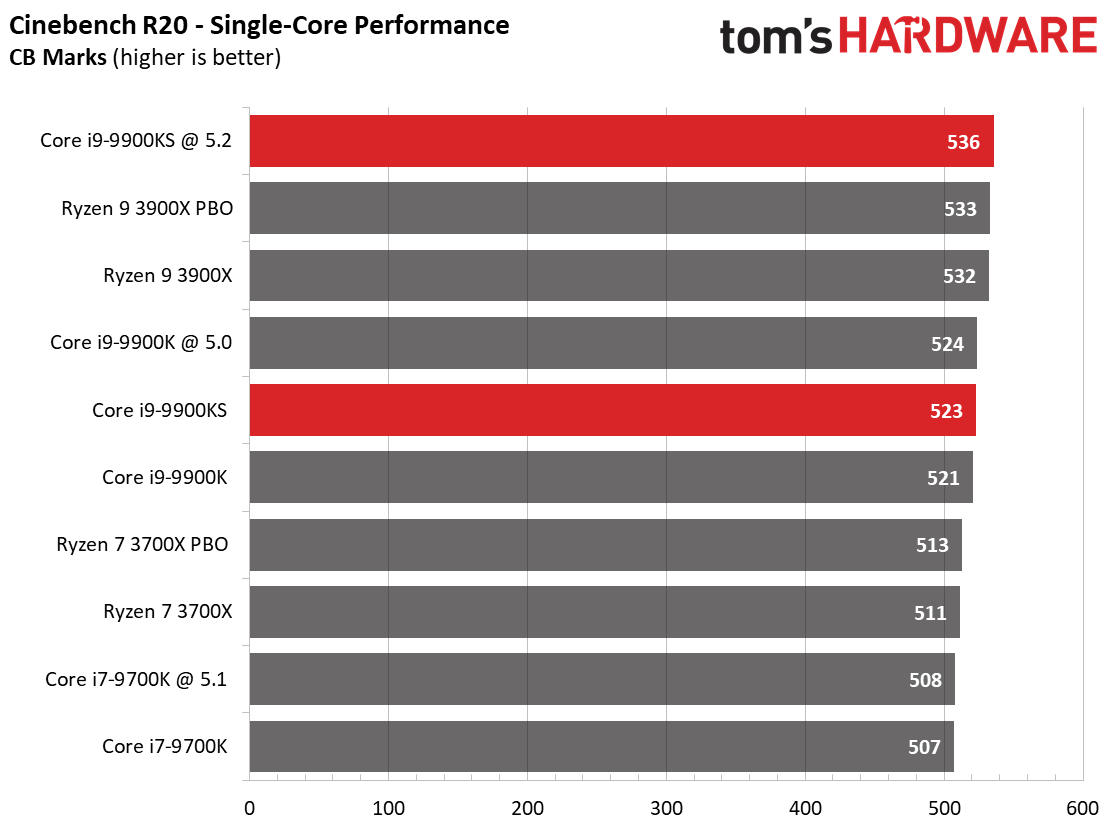

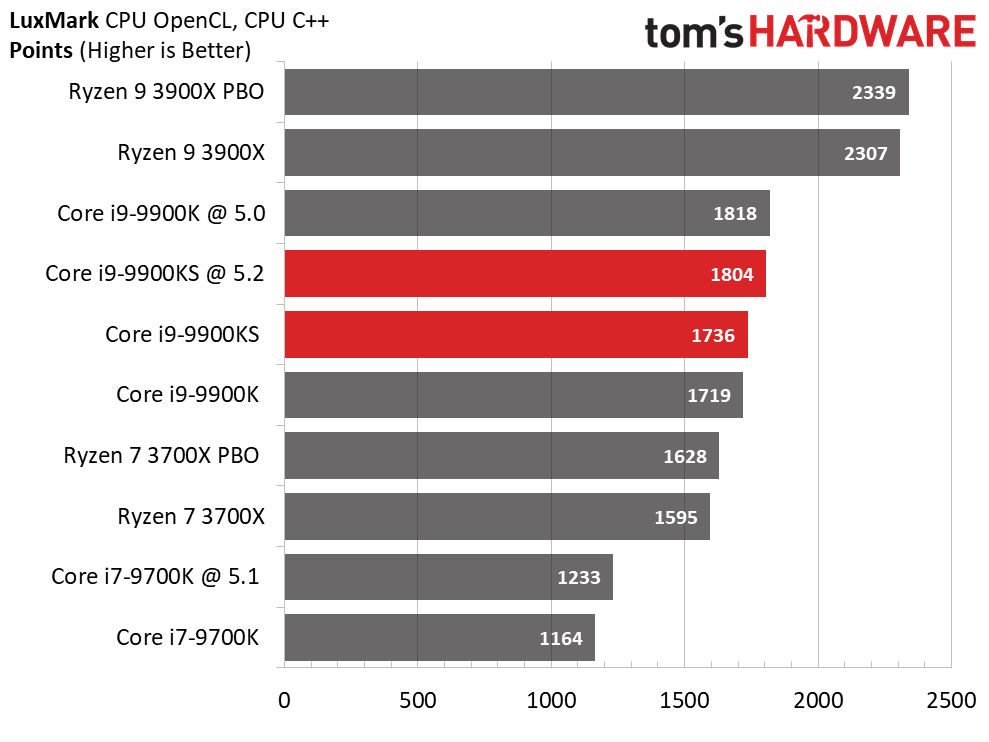

The 12-core, 24-thread Ryzen 9 3900X brings HEDT-like performance to the multi-threaded rendering benchmarks. The tuned Core i9-9900KS doesn't fare as well as the overclocked Core i9-9900K in the LuxMark test, despite its 200 MHz frequency advantage and similar memory data transfer rates.

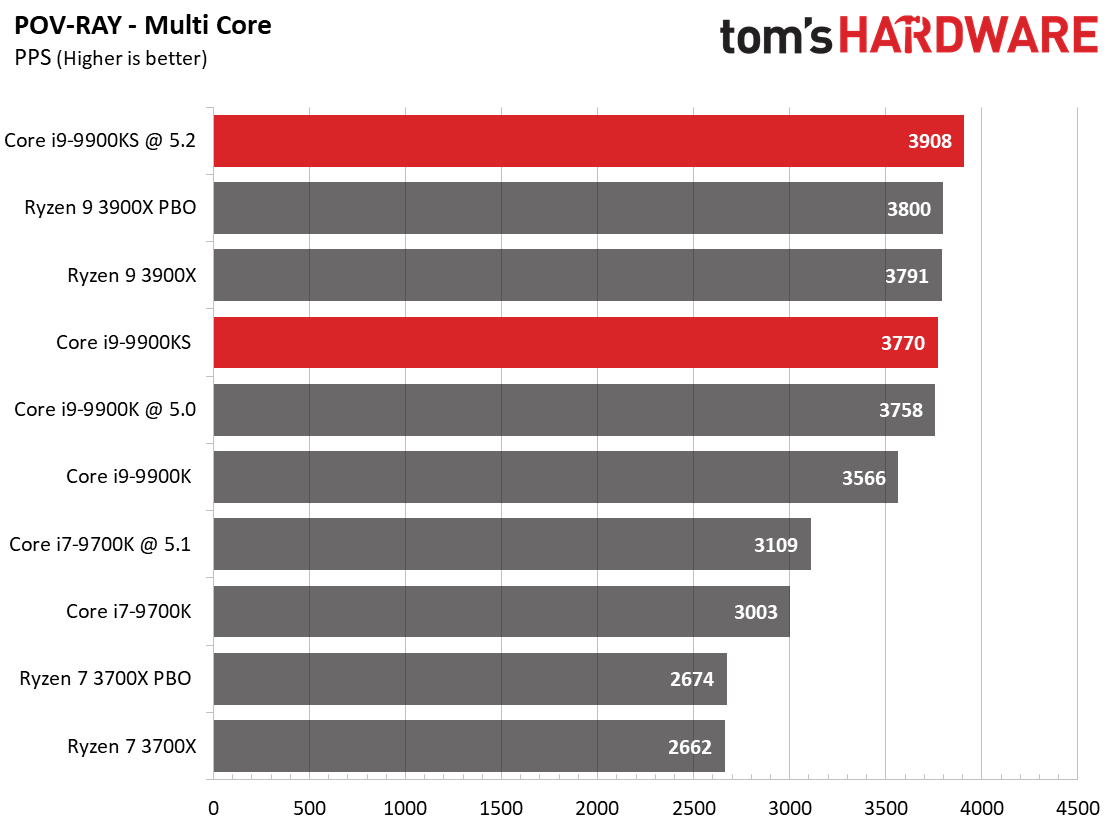

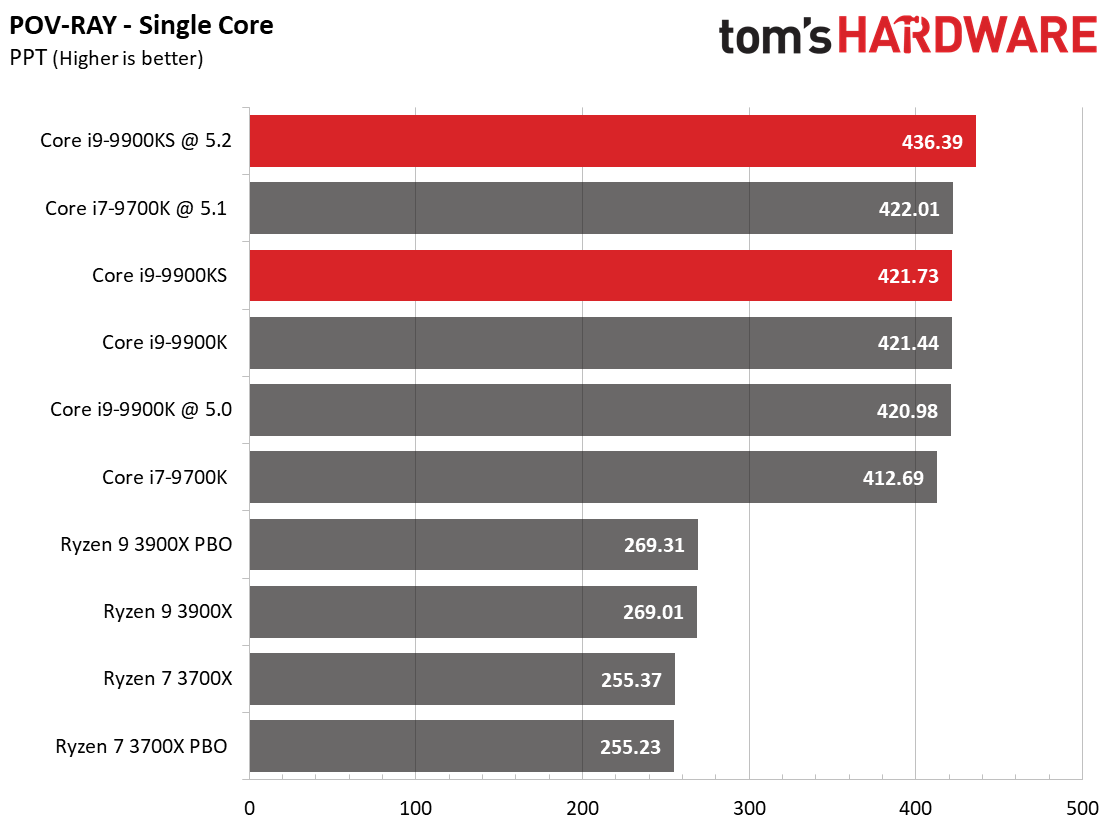

The Core i9-9900KS leads the POV-Ray testing and gives us the expected performance improvements in several tests, but the Ryzen 9 3900X retains its lead in almost all of these threaded tests.

Encoding

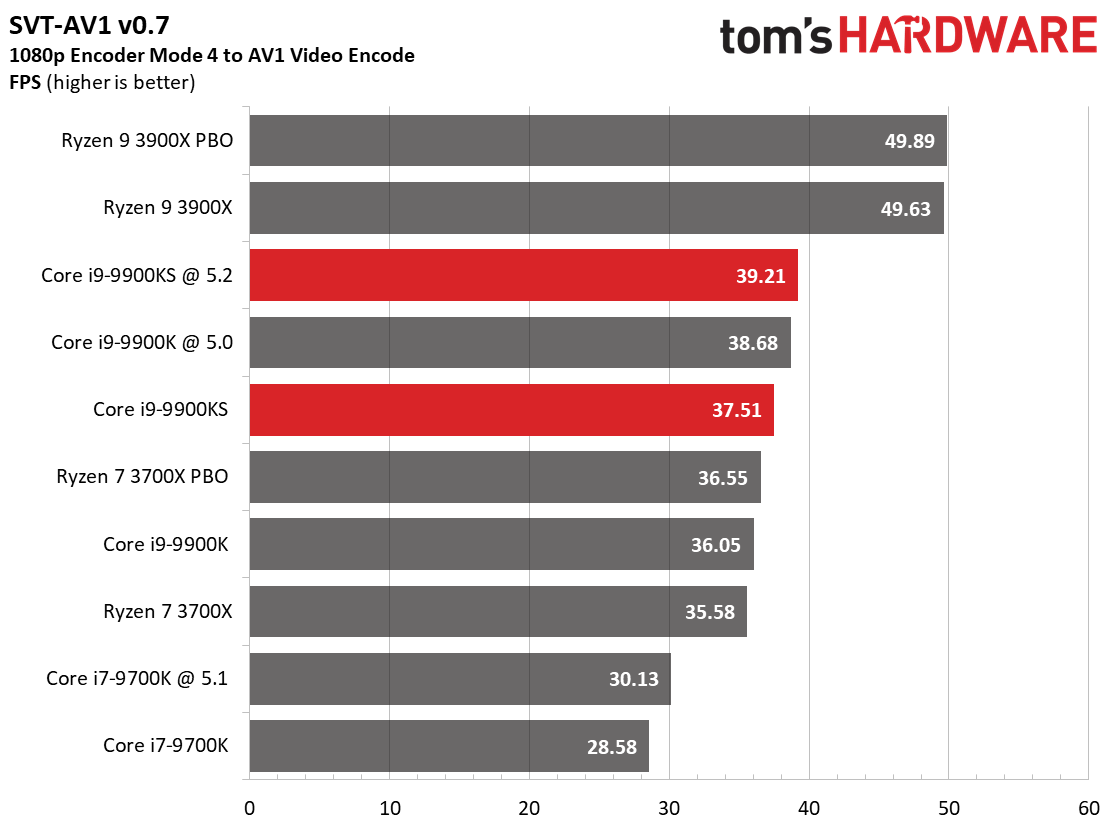

The SVT-AV1 encoder is a new Intel- and Netflix-designed software video encoder that became available earlier this year. The encoder is more scalable than other encoders, thus offering faster encoding times paired with efficient compression. While it may seem counter-intuitive to use an Intel-designed encoder for testing AMD processors, consider that most encoders are inherently reliant upon per-core performance, which is a strength of Intel, while SVT-AV1 exposes the power of threading, a strength of Ryzen. The Ryzen 9 3900X leads the benchmark with ease.

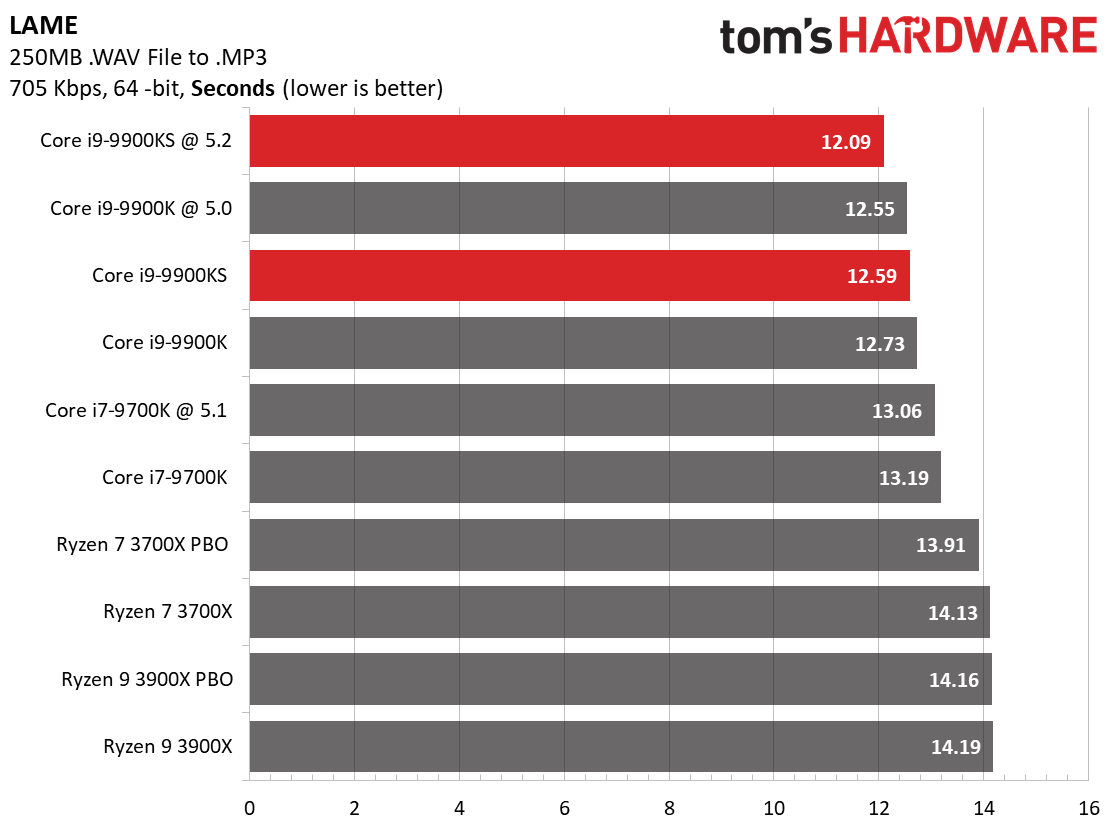

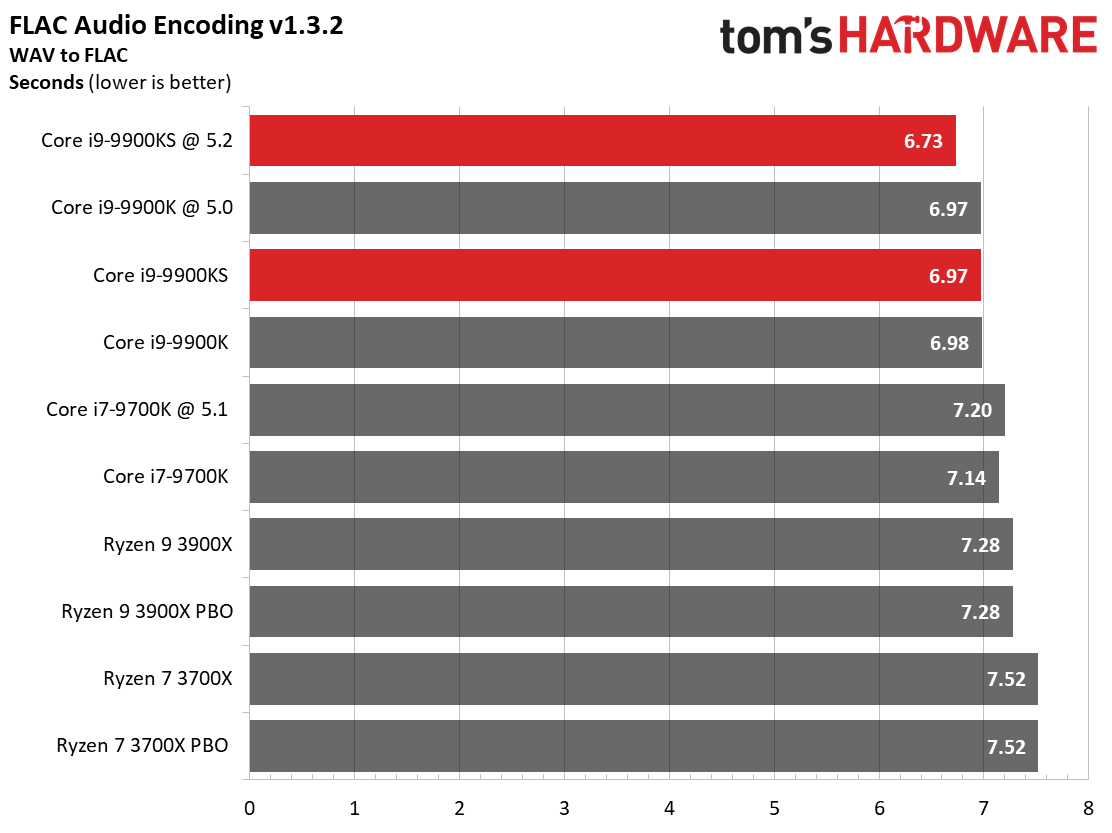

Our LAME and FLAC tests, like many encoders, rely heavily upon per-core performance. That means Intel's frequency advantage comes into play, handing the Core i9-9900KS the lead in both tests.

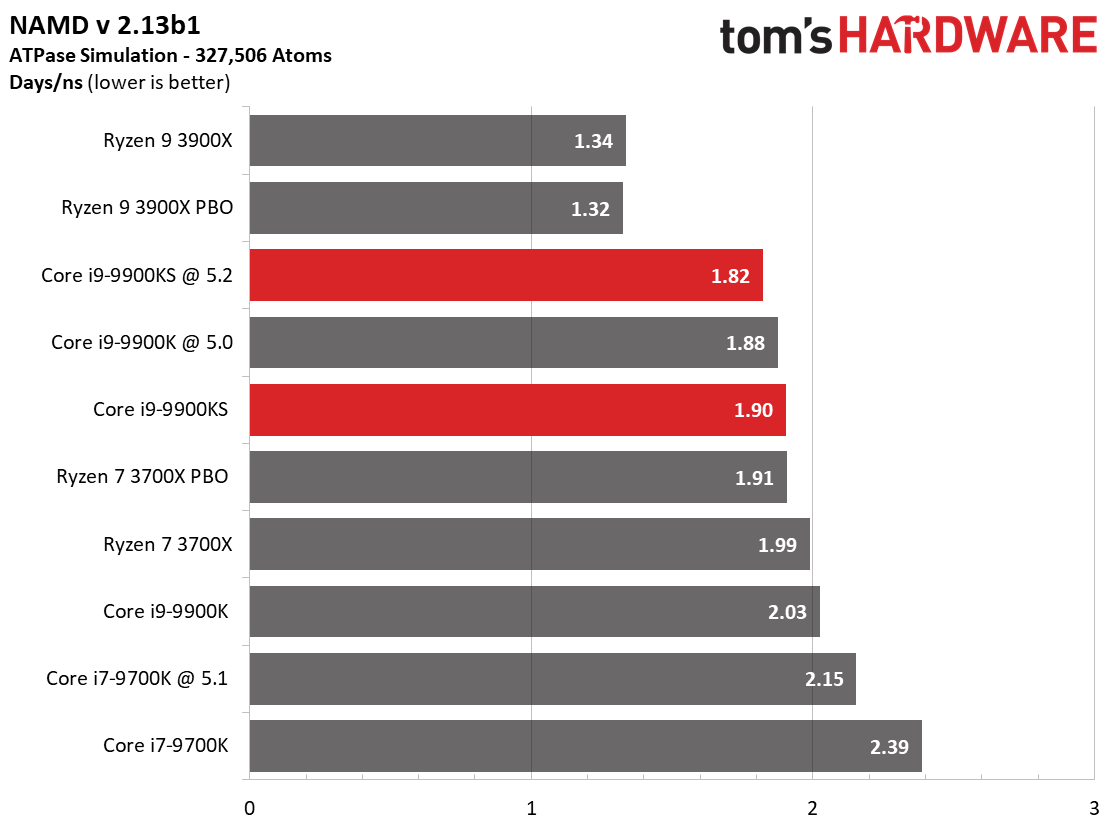

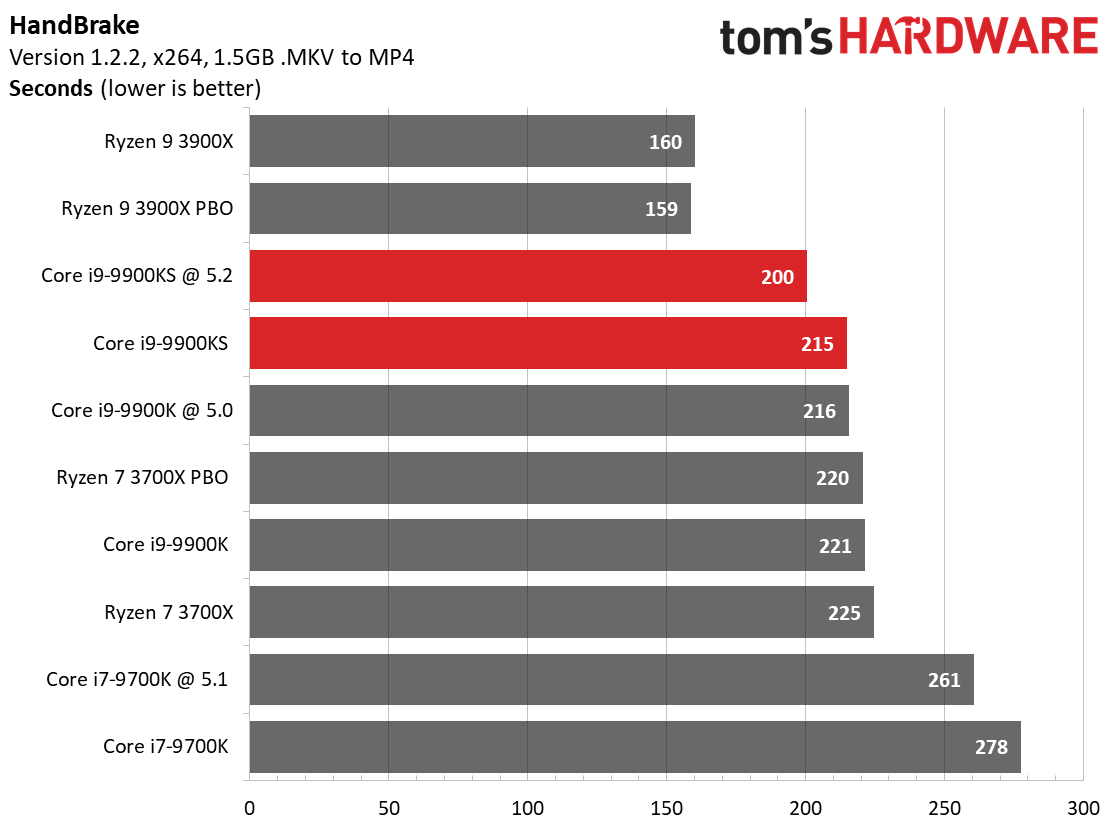

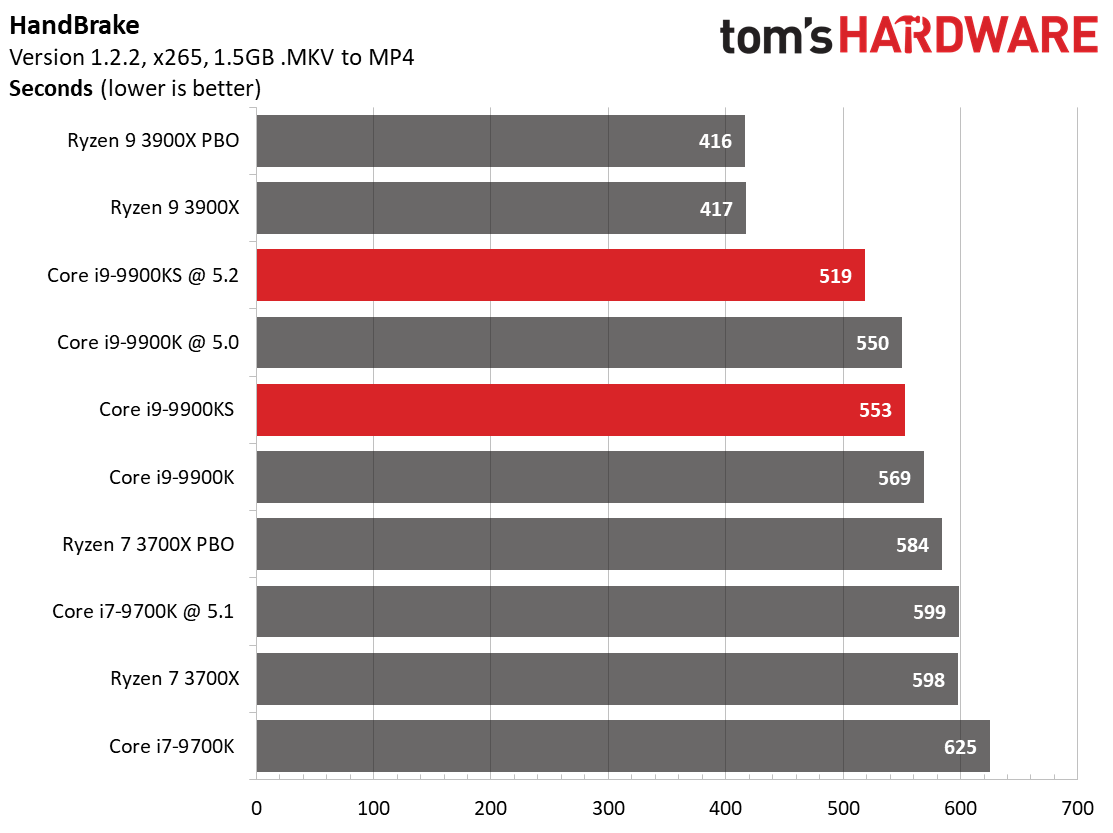

Intel processors traditionally leverage high frequencies to dominate the HandBrake x265 test, which relies heavily on AVX instructions, and the H.264 test. But Intel's higher clock speed isn't much of an advantage in these tests when the similarly-priced competition has far more threads at its disposal. AMD has also worked diligently to improve Ryzen's performance in AVX workloads, and here we can see that work pay off.

Compression, Decompression, Encryption, AVX

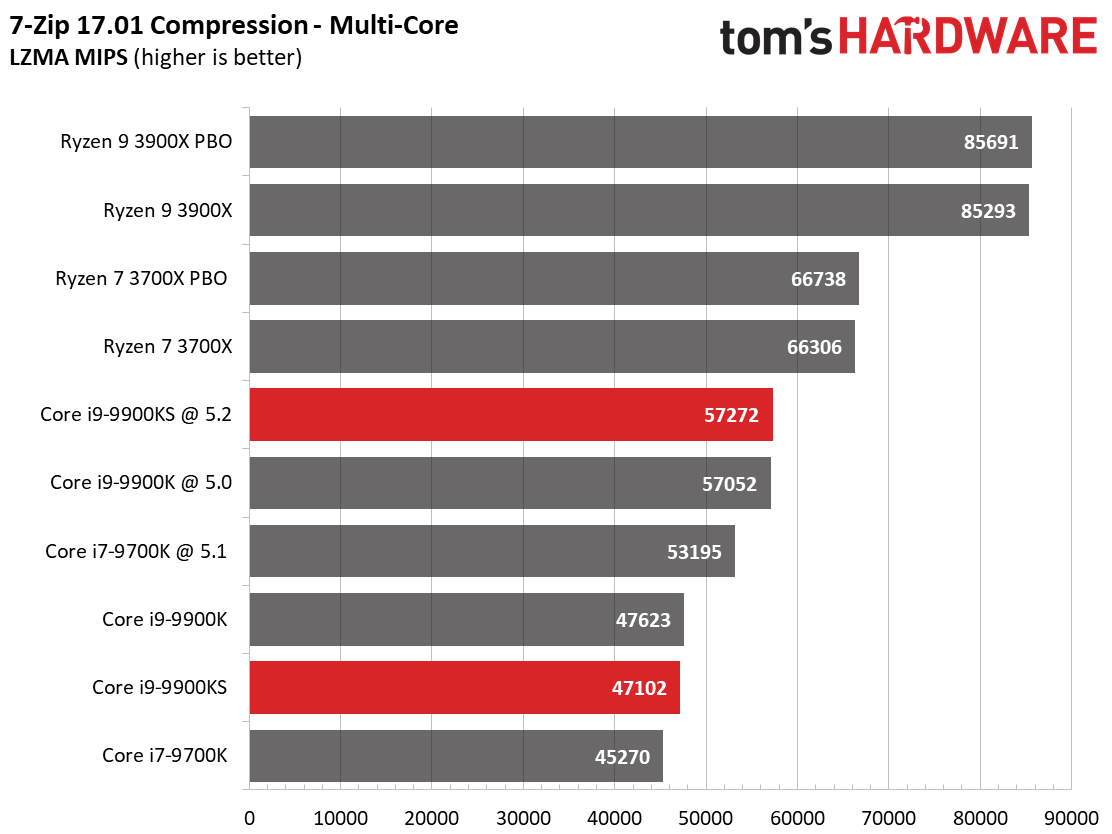

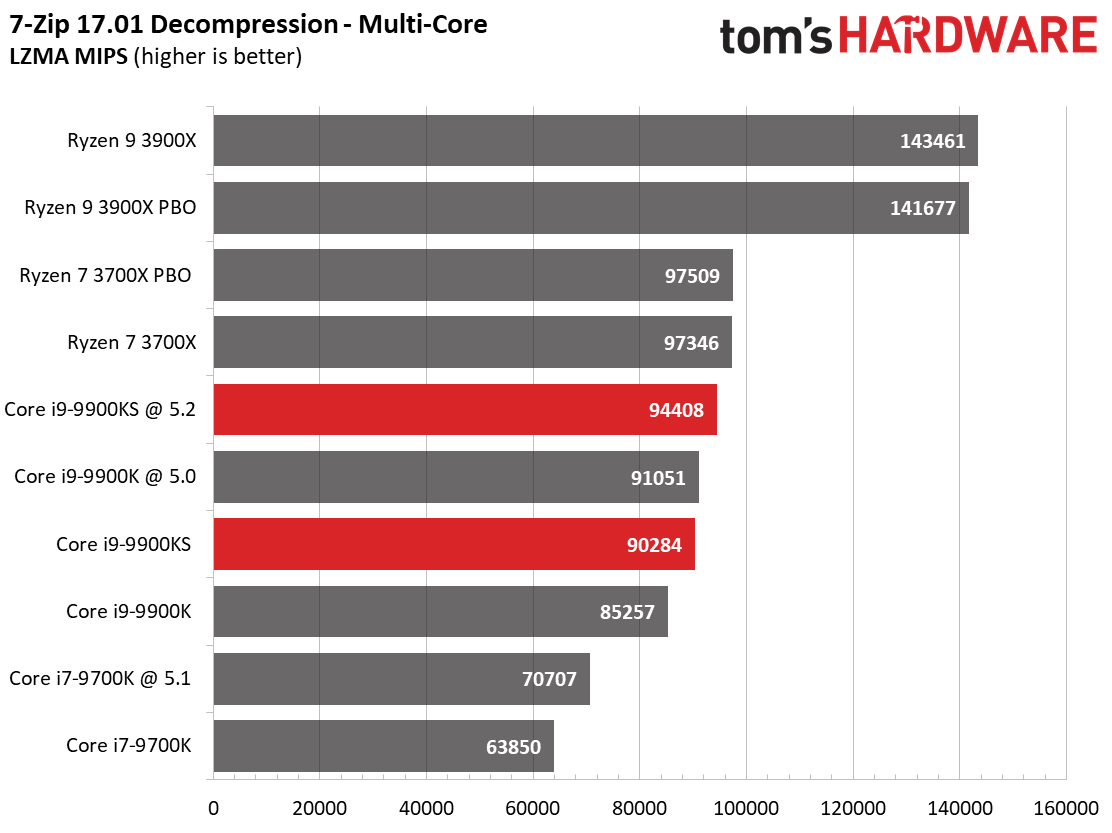

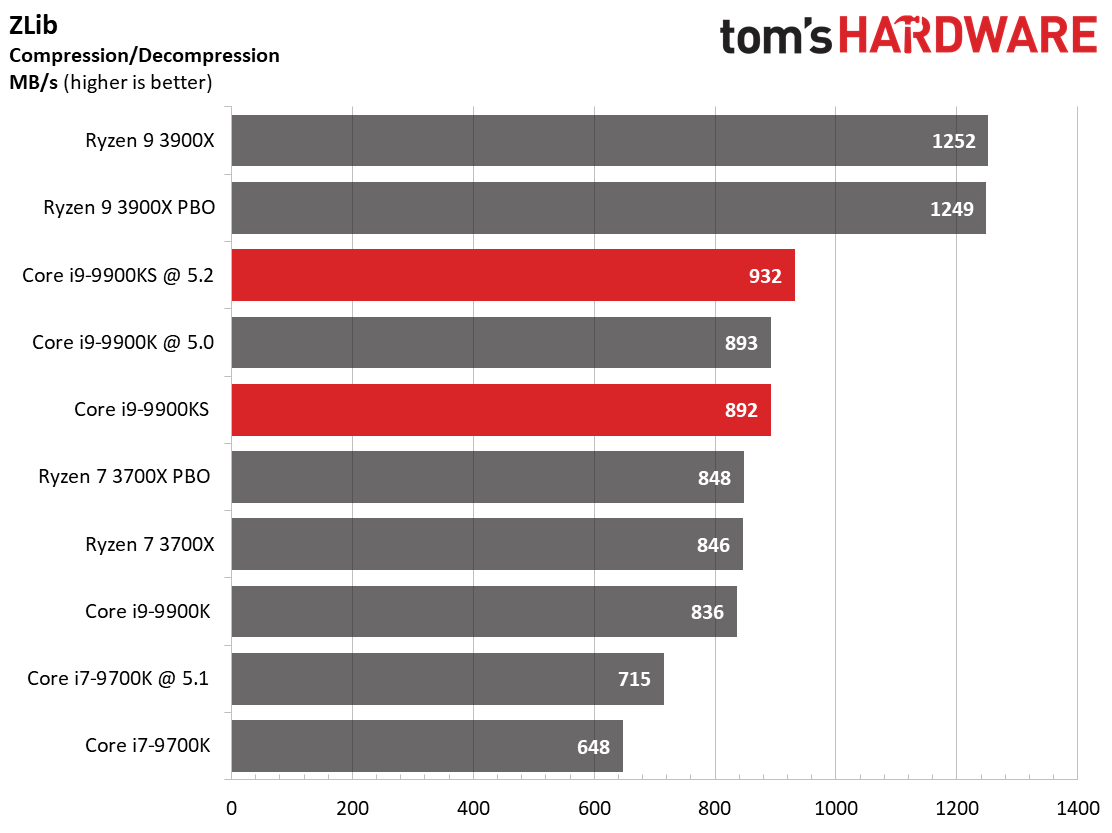

Our threaded 7-Zip and ZLib compression and decompression tests work directly from system memory, removing storage throughput from the equation. The combination of the Ryzen 9 3900X's improved memory subsystem and generous helping of cores helps it take an easy lead over the Intel challengers. The Ryzen 7 3700X also performs extremely well in the 7-Zip tests.
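To illustrate what working directly from system memory looks like, here is a minimal Python sketch. It is not the harness behind these charts: it uses Python's standard `zlib` module rather than our ZLib test binary, and the helper names `make_buffer` and `compress_throughput`, the buffer contents, and the worker count are all assumptions made for illustration. The point is only that the data is generated and compressed in RAM, so no disk is involved.

```python
import os
import time
import zlib
from concurrent.futures import ThreadPoolExecutor


def make_buffer(size_bytes: int) -> bytes:
    """Build an in-memory test buffer (hypothetical mix: half random, half repetitive)."""
    half = size_bytes // 2
    pattern = b"benchmark-data-"
    repeats = (size_bytes - half) // len(pattern) + 1
    return (os.urandom(half) + pattern * repeats)[:size_bytes]


def compress_throughput(buffers, workers):
    """Compress every buffer from RAM and return (MB/s of input consumed, compressed blobs)."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        blobs = list(pool.map(zlib.compress, buffers))
    elapsed = time.perf_counter() - start
    total_mb = sum(len(b) for b in buffers) / 1e6
    return total_mb / elapsed, blobs


if __name__ == "__main__":
    data = [make_buffer(4_000_000) for _ in range(8)]
    for workers in (1, 4, 8):
        rate, _ = compress_throughput(data, workers)
        print(f"{workers} worker(s): {rate:.1f} MB/s")
```

Because nothing is read from or written to storage, the measured rate depends on the CPU and memory subsystem only, which is the property the tests above rely on. How well the rate scales with the worker count will vary by platform and Python build.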

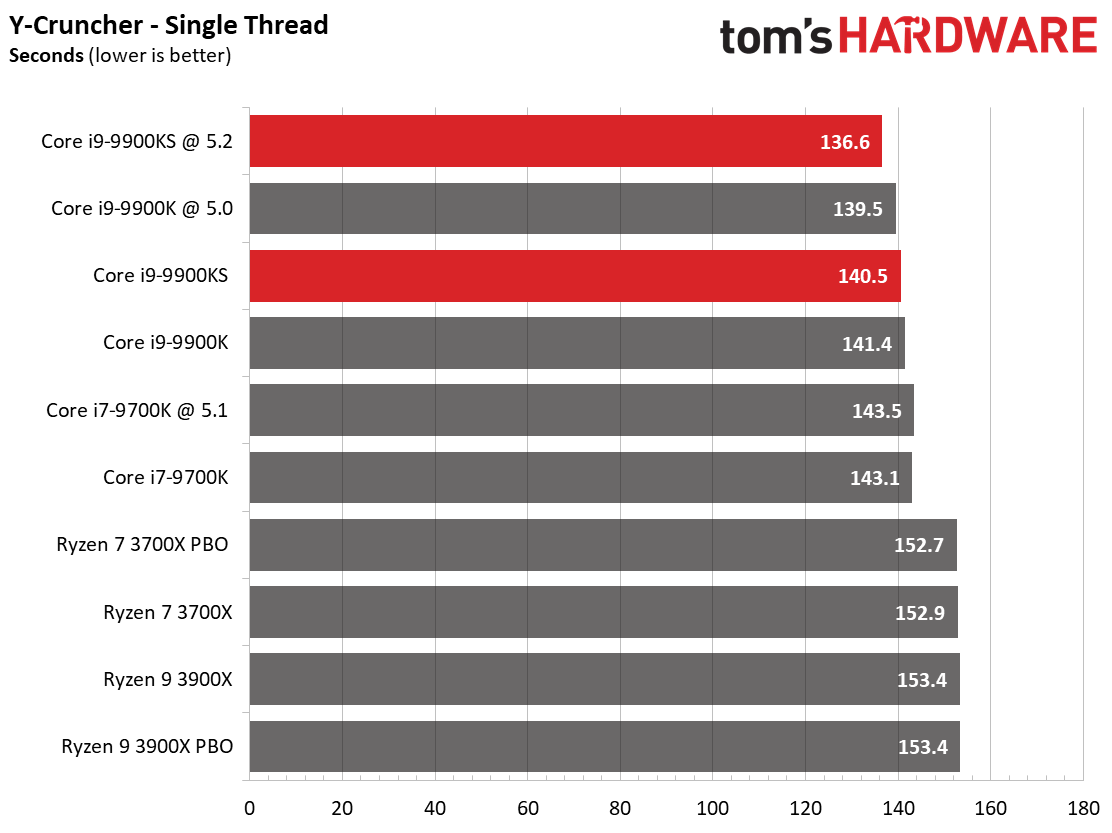

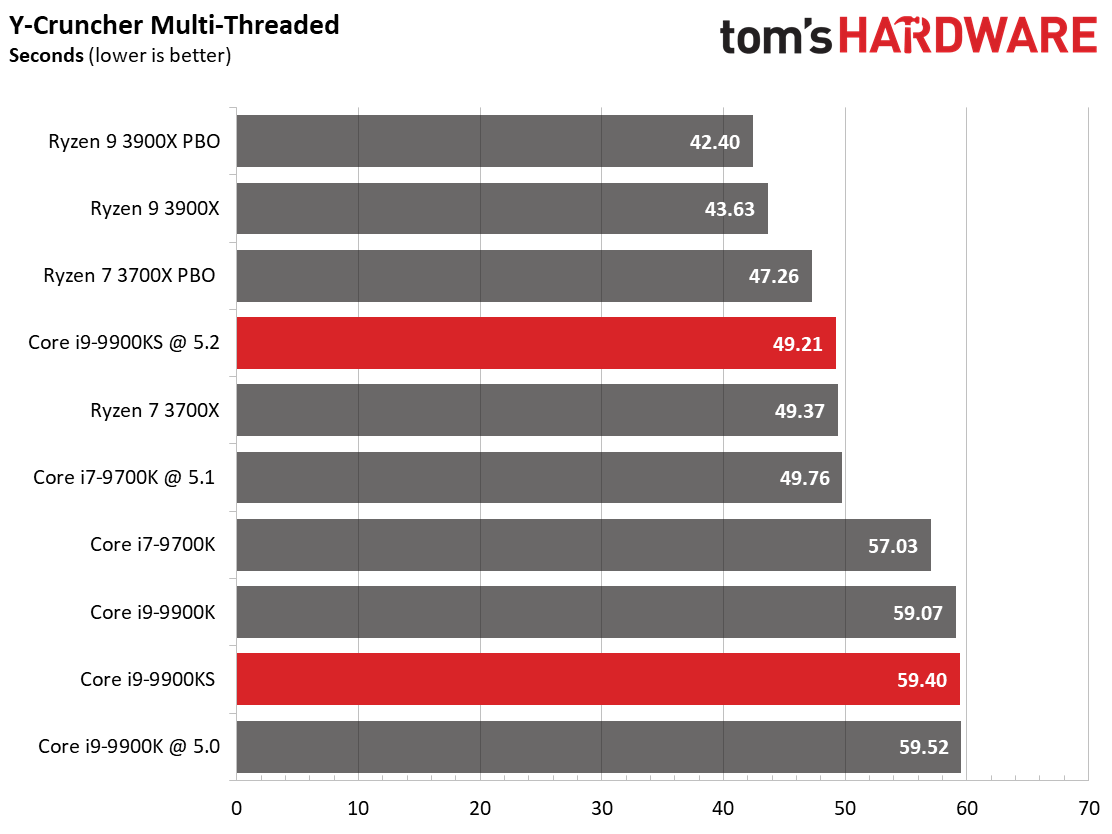

We can also see the vast improvement in Ryzen's AVX performance in the y-cruncher multi-threaded test. It's pretty surprising to see the Ryzen 7 3700X trade blows with the Core i9-9900KS in this test, especially given its wallet-friendly $329 price point. That said, the Intel models offer similarly impressive performance relative to competitors in the single-threaded y-cruncher test.


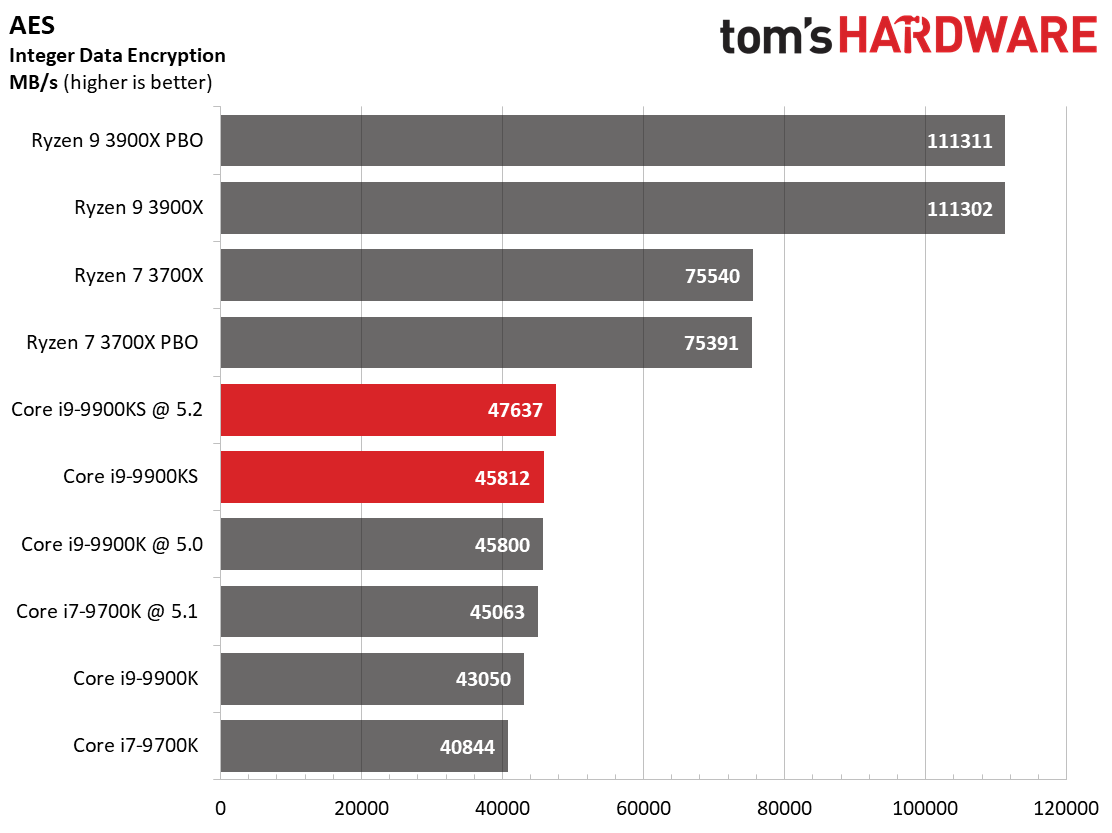

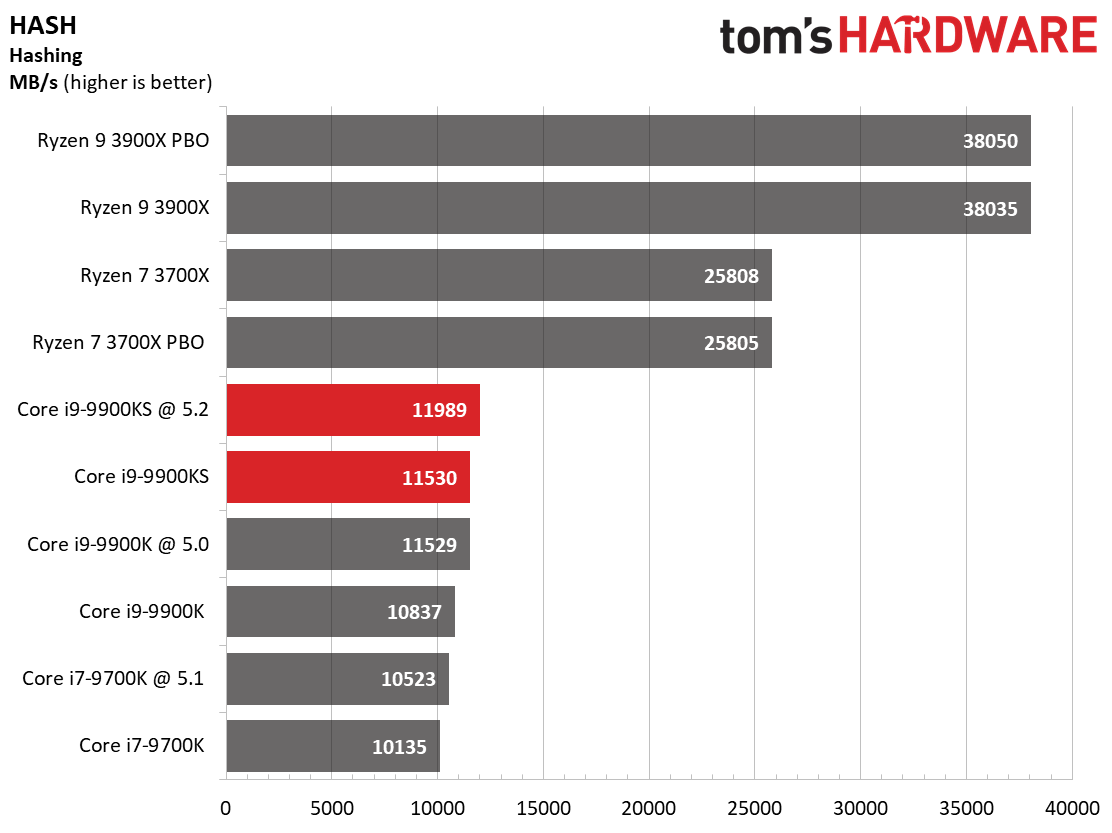

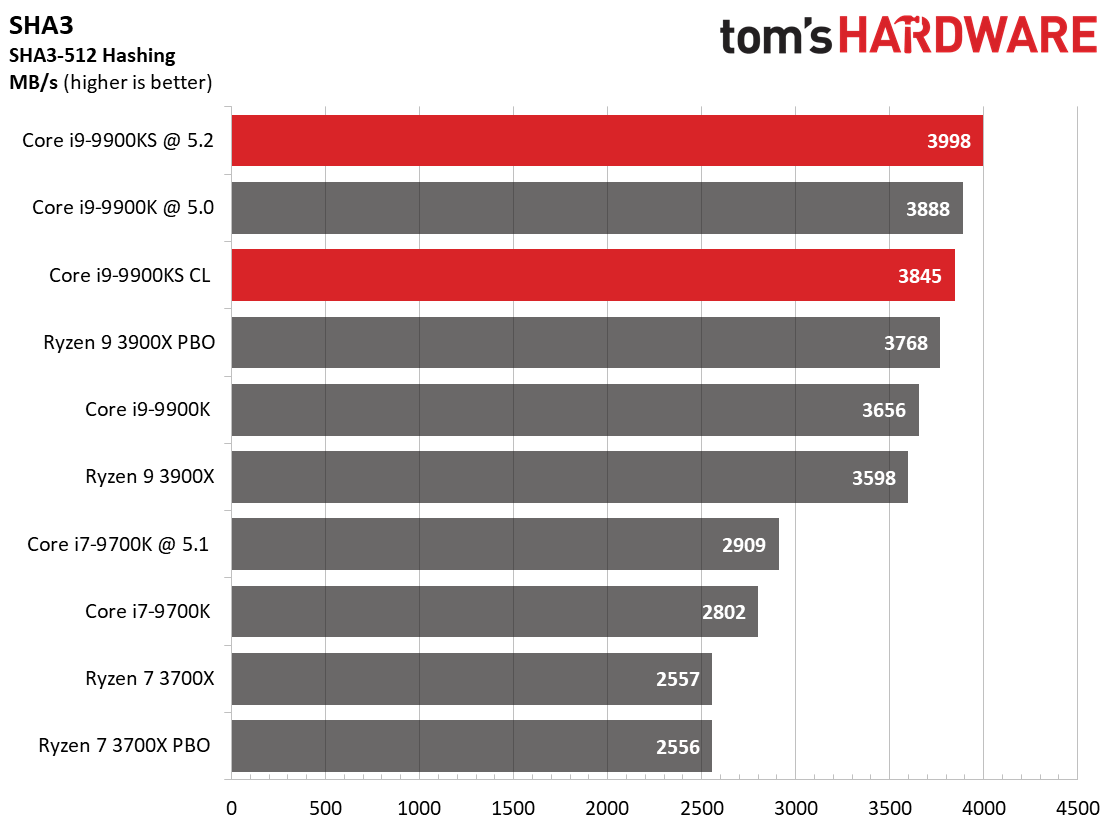

Overall, these tests largely leverage AMD's multi-threaded architecture, much like we observed in the rendering tests. It's noteworthy that AMD's architecture is far superior in hashing and AES encryption workloads, beating the Core i9-9900K and Core i9-9900KS on a per-core basis.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


Aspiring techie

If my memory serves me correctly, the Stockfish chess engine got stomped by Google's DeepMind AlphaZero, not the other way around.

joeblowsmynose

I just watched Steve Burke's review, and he notes some pretty interesting caveats with this chip ...

The title of this article: "5.0 GHz on All the Cores, All the Time" --- is not true if it is used with a motherboard manufacturer that stuck to Intel's TDP guidelines, like Asus did.

On Asus boards, this chip only boosts all core @5ghz for a limited time then drops back down to maintain reasonable power consumption, as per Intel's own TDP specification for this processor. So basically, Intel gave mobo makers specs to keep TDP ~127w but really it was just their way of lying about TDP but deferring that misinfo to the mobo makers. The mobo maker that actually chose not to allow a misleading TDP now gets punished ... sounds like an Intel move.

So I assume this will mean that Asus is going to be pissed with Intel since Gigabyte and MSI boards will let it suck all the power it needs to maintain 5ghz -- completely disregarding the TDP is the only way it boosts at 5ghz all cores, full time.

So how this chip performs has far more to do with the motherboard than the chip. This is stupid.

As an aside question ... what's the cooling power required for OCing? The OC testing here was done using 720 mm worth of radiators on a custom loop - what's next ... LN2 testing? ;) We know the limit is somewhere between the H115i and the dual rad custom loop, but I wonder where that is. A lot of cooling for any OCing anyway it seems ... (but expected).

colson79

I find it sort of annoying how all these reviews always talk about how much better gaming performance is on Intel but leave out the fact that that is only at 1080p or lower. They push Intel like it's the only choice for gamers without mentioning the fact that almost every game has the same performance at resolutions over 1080p. I haven't gamed at 1080p for years. I think a lot of less informed people completely skip AMD as an option because these review sites push the Intel 1080p benchmarks so hard. At a minimum, I think they should include the 2K and 4K benchmarks in their CPU reviews.

PCWarrior

joeblowsmynose said: I just watched Steve Burkes review, and he notes some pretty interesting caveats with this chip ... On Asus boards, this chip only boosts all core @5ghz for a limited time then drops back down to maintain reasonable power consumption, as per Intel's own TDP specification for this processor.

If "Out of the box" was literally meant to mean “no BIOS fiddling whatsoever” then stock behaviour should also be with the XMP profile disabled, as you need to get into the BIOS in order to enable it. And for ASUS boards, the moment you go to enable XMP, it prompts you to load optimised defaults, which removes power limits. Also, it should be pointed out that no-power-limits and MCE are not the same thing and are not viewed as the same thing by Intel. Reviewers like Steve Burke from Gamers Nexus and Der8auer seem to conflate the two. They are NOT the same. No-power-limits sticks to stock turbo frequency tables (for example, the regular 9900K still only boosts to the stock all-core turbo of 4.7 GHz, but instead of doing so for only 25 seconds it does so indefinitely). MCE, on the other hand, means both no-power-limits AND making the all-core turbo boost equal to the single-core turbo boost (for example, for the regular 9900K with MCE enabled it means boosting to 5 GHz on all cores indefinitely). For warranty purposes, MCE is considered an overclock by Intel. No-power-limits is NOT considered an overclock by Intel. It is stock, and it is left to the motherboard vendor how the settings are configured out of the box.
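The three board behaviours PCWarrior distinguishes can be written down as a small Python sketch. This is a deliberately simplified model of the comment's description, not Intel's actual turbo algorithm. The 4.7 GHz, 5.0 GHz, and 25-second figures for the regular 9900K come from the comment itself; the names `BoardPolicy` and `all_core_clock` are made up, and `SUSTAINED_GHZ` is an assumed placeholder for whatever clock fits within the long-term power limit once the boost window ends.

```python
from dataclasses import dataclass

# Figures quoted in the comment for the regular Core i9-9900K.
ALL_CORE_TURBO_GHZ = 4.7
SINGLE_CORE_TURBO_GHZ = 5.0
BOOST_WINDOW_S = 25.0
# Assumption: placeholder clock after the boost window; the real value depends on the workload.
SUSTAINED_GHZ = 3.6


@dataclass(frozen=True)
class BoardPolicy:
    """Hypothetical description of how a motherboard is configured out of the box."""
    enforce_power_limits: bool
    mce_enabled: bool


def all_core_clock(policy: BoardPolicy, seconds_under_load: float) -> float:
    """Return the all-core clock (GHz) under this simplified model."""
    if policy.mce_enabled:
        # MCE: no power limits AND all-core turbo raised to the single-core turbo.
        return SINGLE_CORE_TURBO_GHZ
    if not policy.enforce_power_limits:
        # No power limits: stock turbo table, held indefinitely.
        return ALL_CORE_TURBO_GHZ
    if seconds_under_load <= BOOST_WINDOW_S:
        # Limits enforced: stock all-core turbo, but only inside the boost window.
        return ALL_CORE_TURBO_GHZ
    return SUSTAINED_GHZ


if __name__ == "__main__":
    policies = {
        "limits enforced": BoardPolicy(True, False),
        "no power limits": BoardPolicy(False, False),
        "MCE": BoardPolicy(False, True),
    }
    for name, policy in policies.items():
        print(name, [all_core_clock(policy, t) for t in (10, 60)])
```

Under this model, "no power limits" and "MCE" differ only in which turbo table applies, which is the distinction the comment draws for warranty purposes.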

PCWarrior

colson79 said: I find it sort of annoying how all these reviews always talk about how much better gaming performance is on Intel but leave out the fact that that is only 1080P or lower.

They use 1080p because this is currently the highest resolution where, with a top GPU, there is definitely a CPU bottleneck, and it is therefore a CPU test. It is not a CPU test when there is a GPU bottleneck. With current GPUs, even with the likes of a 2080 Ti, you can have an i3 8100 and still do as well in 4K gaming as with a 9900K. However, fast forward to the future with something like a 3080 Ti or a 4080 Ti and you will have a CPU bottleneck across all games at 1440p and probably even at 4K. With a 3080 Ti or a 4080 Ti you will be getting at 1440p and 4K the same fps that you currently get with a 2080 Ti at 1080p. You can verify this by going backwards to a lower resolution. If you have a 2080 Ti, you get the same fps for 720p and 1080p because in a CPU bottleneck situation fps can only increase with a better CPU, not a better GPU.
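The bottleneck argument above boils down to taking the lower of two ceilings. Here is a minimal Python sketch of that reasoning. Every number in it is invented for illustration and none is a measurement from this review; the names `GPU_FPS_CAP`, `CPU_FPS_CAP`, and `delivered_fps` are likewise hypothetical.

```python
# Hypothetical frame-rate ceilings for one GPU at each resolution (not measured data).
GPU_FPS_CAP = {"720p": 400.0, "1080p": 250.0, "1440p": 160.0, "4K": 80.0}

# Hypothetical frame-rate ceilings imposed by two CPUs, independent of resolution.
CPU_FPS_CAP = {"fast_cpu": 180.0, "slow_cpu": 110.0}


def delivered_fps(cpu: str, resolution: str) -> float:
    """The slower of the two components sets the frame rate."""
    return min(CPU_FPS_CAP[cpu], GPU_FPS_CAP[resolution])


if __name__ == "__main__":
    for resolution in GPU_FPS_CAP:
        fast = delivered_fps("fast_cpu", resolution)
        slow = delivered_fps("slow_cpu", resolution)
        print(f"{resolution}: fast CPU {fast:.0f} fps, slow CPU {slow:.0f} fps")
```

With these made-up ceilings, the two CPUs are indistinguishable at 4K because the GPU cap is the lower number, while at 720p and 1080p each CPU delivers its own ceiling regardless of resolution. Swapping in a faster GPU (raising the values in `GPU_FPS_CAP`) moves the point at which the CPU becomes the limit to higher resolutions.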

joeblowsmynose

PCWarrior said: If "Out of the box" was literally meant to mean “no bios fiddling whatsoever” then stock behaviour should also be with the XMP profile disabled as you need to get into the bios in order to enable it. And for ASUS boards, the moment you go to enable XMP, it prompts you to load optimised defaults which removes power limits. Also it should be pointed out that no-power-limits and MCE are not the same thing and are not viewed as the same thing by Intel. Reviewers like Steve Burkes from Gamers Nexus and Der8auer seem to conflate the two. They are NOT the same. No-power-limits sticks to stock turbo frequency tables (for example the regular 9900K still only boosts to the stock all-core turbo of 4.7 GHZ but instead for only 25 seconds it does so indefinitely). MCE, on the other hand, means both no-power-limits AND to make the all-core turbo boost equal to the single-core turbo boost (for example for the regular 9900K with MCE enabled it means boosting to 5GHZ on all cores indefinitely). For warranty purposes MCE is considered an overclock by Intel. No-power-limits is NOT considered an overclock by Intel. It is stock and it is left to the motherboard vendor how the settings are configured out of the box.

A complicated way to lie about TDP ... lol. Whatever you say, it's disingenuous.

TJ Hooker

joeblowsmynose said: On Asus boards, this chip only boosts all core @5ghz for a limited time then drops back down to maintain reasonable power consumption, as per Intel's own TDP specification for this processor. So basically, Intel gave mobo makers specs to keep TDP ~127w but really it was just their way of lying about TDP but deferring that misinfo to the mobo makers. The mobo maker that actually chose not to allow a misleading TDP now gets punished ... sounds like an Intel move.

FYI this applies to all Intel CPUs with turbo boost, not just the 9900KS. Officially they're all supposed to have a limited duration boost, but nearly all mobos remove this limit. And they've been doing so for some time, so it seems Intel doesn't really care.

Which makes sense, as it improves their benchmark scores, and if anyone complains about power draw they can just point to their stated rules for power levels and blame the mobo manufacturers, even though they've implicitly given them permission to do this by allowing it to go on in a widespread fashion.

TJ Hooker

Last page:

"Bear in mind that we tested with an Nvidia GeForce GTX 1080 at 1920x1080 to alleviate graphics-imposed bottlenecks."

Should say 2080 Ti.

Soaptrail

PCWarrior said: They use 1080p because this is currently the highest resolution where with a top GPU there is definitely a cpu bottleneck and it is therefore a cpu test. It is not a cpu test when there is a gpu bottleneck. With current gpus, even with the likes of 2080Ti, you can have an i3 8100 and still do as well in 4K gaming as with a 9900K. However fast forward to the future and using something like a 3080Ti or a 4080Ti and you will be having a cpu bottleneck across all games at 1440p and probably even at 4K. With a 3080Ti or a 4080Ti you will be getting the same fps that you currently get with a 2080Ti at 1080p but at 1440p and 4K. You can verify this by going backwards to a lower resolution. If you have a 2080Ti, you get the same fps for 720p and 1080p because in a cpu bottleneck situation fps can only increase with a better cpu, not a better gpu.

Yes, but they should include a couple of 1440p and 4K benchmarks for context. It could still be more beneficial for buyers to get a cheaper option like the AMD Ryzen 3600 and upgrade in a couple of years than to buy the 9900KS and stick with it for 6 years.