IBM Used NYPD Surveillance to Tag People by Skin Color, Ethnicity

According to a report published today by The Intercept and the Investigative Fund, IBM has been developing video surveillance software for law enforcement that can search for people by skin color and ethnicity.

Post-9/11 IBM Bets On Video Surveillance

According to the report, IBM didn’t initially intend its video analytics software to be used for surveillance. However, after the 9/11 attacks, the company saw high demand from law enforcement agencies and police departments for advanced video analytics technology that could be integrated into public cameras to search for terrorist suspects.

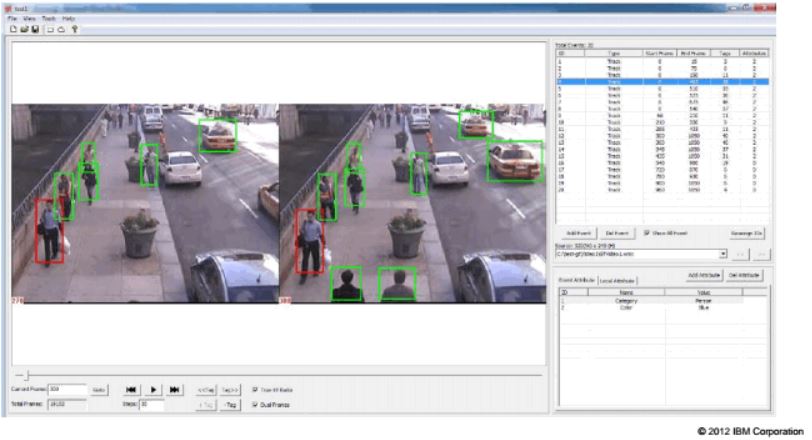

According to The Intercept, in 2012 the NYPD gave IBM “secret access” to its city-wide video surveillance system, which the company used to develop new surveillance features, such as searching camera footage for images of people by hair color, facial hair and even skin tone.

The NYPD said that it only used the skin tone feature for testing purposes and didn’t deploy it to its camera system because it didn’t want the public to think it was racially profiling. The NYPD also said that giving IBM access to its camera system was required for the collaboration to work.

However, civil liberties advocates argued that New Yorkers should have been made aware that the real-time collection of physical data was being handed over to a private firm. This revelation comes at a time when New York City Mayor Bill de Blasio and the NYPD are fighting against a new city council bill that would require more transparency from the NYPD.

No Longer Just a Counter-Terrorism Tool

Although the IBM surveillance technology was originally meant to be integrated into the NYPD’s camera system to find terrorist suspects, it may have been used to investigate everyday crimes too. However, the NYPD denied this, and Peter Donald, the department’s spokesperson, said that he’s not aware of any case where IBM’s technology was used in an arrest or prosecution.

Meanwhile, the campus police at California State University, Northridge, which also adopted IBM’s technology, admitted that they have been using it to track everyday criminals and even student protesters.


IBM’s Tech Tracks Ethnicity

Donald noted that sometime in 2016 or early 2017, IBM announced to the NYPD that it had developed version 2.0 of its video surveillance technology, which could now also track people by ethnicity and provide tags, including “Asian,” “Black,” or “White.” The NYPD said it “explicitly rejected that product” because of this feature.

However, Rick Kjeldsen, a former IBM researcher who worked on this software, said that the NYPD’s statement is misleading because neither IBM nor any other company would have worked on such a feature if the NYPD hadn’t shown interest in it.

Racial Profiling Concerns

Civil liberties advocates are worried that IBM’s technology could be used for mass racial profiling. Rachel Levinson-Waldman, senior counsel at the Brennan Center’s Liberty and National Security Program, said:

“Whether or not the perpetrator is Muslim, the presumption is often that he or she is. It’s easy to imagine law enforcement jumping to a conclusion about the ethnic and religious identity of a suspect, hastily going to the database of stored videos and combing through it for anyone who meets that physical description and then calling people in for questioning on that basis.”

Clare Garvie, a law fellow at Georgetown Law’s Center on Privacy and Technology, also said that any form of real-time location tracking should raise Fourth Amendment issues. The Supreme Court has already ruled twice that real-time location tracking is unconstitutional. Tracking where people are via city-wide camera surveillance systems could fall under the same ruling.

According to Garvie, any form of “identity-based surveillance” may also violate the First Amendment because it could compromise people’s right to anonymous public speech and association.

Kjeldsen also told The Intercept that IBM’s development of this surveillance technology for the NYPD without the public’s consent sets a dangerous precedent.

“Are there certain activities that are nobody’s business no matter what? Are there certain places on the boundaries of public spaces that have an expectation of privacy? And then, how do we build tools to enforce that? That’s where we need the conversation. That’s exactly why knowledge of this should become more widely available, so that we can figure that out,” he said.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.


-Fran- You mean the same company that sold Hitler and the Nazi party their system to classify and track their concentration camps and other "inventory" has developed a system for mass control in the modern era with racist undertones?

Nah, get outta here!

But in all seriousness, why wouldn't ethnicity be an interesting piece of information to harvest? Like it or not, data is data. How you interpret it matters, but gathering it is fair game. This might sound a bit naive, but it is the truth.

Cheers!

fffcmad If an ethnic group is more prone to commit crime, it's very natural to give them some priority in surveillance.

rinosaur 21298700 said: If an ethnic group is more prone to commit crime, it's very natural to give them some priority in surveillance.

You mean like pulling black people over far more often in hopes of finding contraband...

NewbieGeek “Are there certain activities that are nobody’s business no matter what? Are there "According to The Intercept, in 2012 the NYPD gave IBM “secret access” to its city-wide video surveillance system, which the company used to develop new surveillance features, such as searching camera footage for images of people by hair color, facial hair and even skin tone.

The NYPD said that it only used the skin tone feature for testing purposes and didn’t deploy it to its camera system because it didn’t want the public to think it was racially profiling. The NYPD also said that giving access to IBM to its camera system was required for the collaboration to work.

However, civil liberties advocates argued that New Yorkers should have been made aware that the real-time collection of physical data was being handed over to a private firm. This revelation comes at a time when New York City Mayor Bill de Blasio and the NYPD are fighting against a new city council bill that would require more transparency from the NYPD."

You guys try to make this sound surprising. It isn't. It so happens I didn't explicitly know the technology existed (though I'm not surprised) but when useful technology like this IS developed, they would be crazy, frankly, to not use such useful information.

Sucks of course, this invasion of privacy, but who honestly expects better from the government?

hotaru251 except white people are terrorists too.

Pretty sure more white people joined ISIS than colored or others.

Skin color has nothing to do with risk. I have relatives of color and a Hispanic gf. I am just as likely to turn to terrorism as they are.

Co BIY Race (represented by hair and skin color) is probably the simplest identifying feature for a camera-based system to track, and it is already listed in the existing government data on every person, so it is not surprising that it was heavily used for early experiments in camera tracking.

The usefulness of that single piece of data is very low. But as a stepping stone for a system that will eventually be able to distinguish between and track individuals it makes sense.

"The Supreme Court has already ruled twice that real-time location tracking is unconstitutional." I'm not sure that this is true.

They have ruled that warrantless real-time tracking using the person's own cell-phone data is unconstitutional in cases where there was no immediate danger to the public. That leaves a lot of other scenarios unclear.

grlegters That's terrible. If this isn't made illegal, they may also use gender, age, weight, gait, disabilities, facial features, and species to tag people. The traditional version of lady justice was blind, but the modern version is deaf, dumb, and blind.

shrapnel_indie The NYPD said it “explicitly rejected that product” because of this feature.

However, Rick Kjeldsen, a former IBM researcher who worked on this software, said that NYPD’s statement is misleading because neither IBM nor any other company would have worked on such a feature if the NYPD hadn’t shown interest in it.

Of course it's misleading. They don't want people to know that they are indeed doing it... especially if it means they'd have a riot on their hands.

Considering the latest string of Mayors (who outrank the presidents of the boroughs) of NYC... It could easily be misused as a political weapon... harass 2nd Amendment supporters, those who speak negatively of Bloomers or DeBlasio, etc.

Kjeldsen also told The Intercept that IBM’s development of this surveillance technology for the NYPD without the public’s consent sets a dangerous precedent.

That ship has already sailed with the likes of the Patriot Act and such at the federal level. You can't expect mini-tyrant mayors not to want in on the action.

“Are there certain activities that are nobody’s business no matter what?

YES!

Are there certain places on the boundaries of public spaces that have an expectation of privacy?

Yes.

And then, how do we build tools to enforce that? That’s where we need the conversation. That’s exactly why knowledge of this should become more widely available — so that we can figure that out," he said.

You can't really build tools that enforce that. As a community of law-breaking hackers exists, there are those that are under the control of governments too... they'd find a way to disable the safeguards and implement it if and when a government so insisted.

Just as a side note that follows in the line of the existence of white terrorists... Being Muslim, or Islam, has nothing to do with one's race and everything to do with one's religious beliefs. Although I'm sure some are of the belief to be a true Muslim, you must be of Arabic heritage. (Being Arabic is racial, not Islam or Muslim.) It doesn't mean they should be singled out... There are those Muslims out there that don't practice the full teachings of Mohamed, -

USAFRet 21300536 said: That's terrible. If this isn't made illegal, they may also use gender, age, weight, gait, disabilities, facial features, and species to tag people. The traditional version of lady justice was blind, but the modern version is deaf, dumb, and blind.

Well...if they're looking for a "early 20's, 5' 2"-5'5", 105-115lb white female"...I'd rather they not tag me, a 62 year old, 5' 10", 190lb Black male.

Otherwise, the only thing they'd be looking for is Human/Not Human.

Don't get me wrong...I fully realize the 8 zillion ways this can and will be abused.

Robert Ostrowski If you are tracking a criminal who's, say, Asian, how is it racial profiling to filter to only Asians? Why would you search white or black if you are not looking for them?