IBM boosts mainframes with 50% more AI performance: z17 features Telum II chip with AI accelerators

Mainframes live on.

IBM has introduced the z17, its newest mainframe system, designed for mission-critical business transactions and equipped with AI-enhanced security capabilities. The system is based on the Telum II processor, which offers 70% higher general-purpose performance than its predecessor and 50% better AI capabilities. For customers who need even more AI performance, IBM offers optional Spyre accelerators.

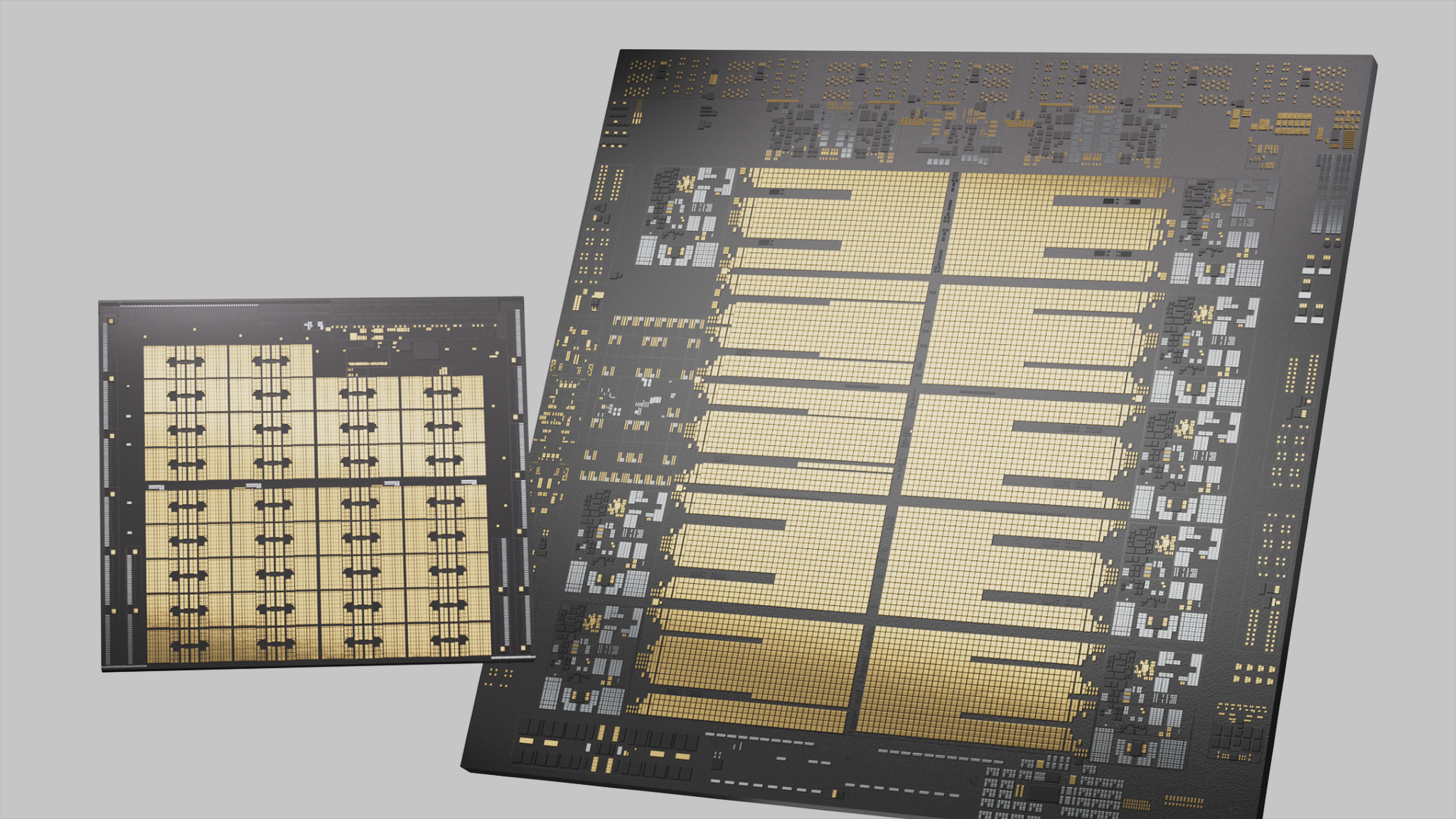

IBM's Telum II processor is the heart of the company's new z17 mainframe. The Telum II CPU has eight cores operating at 5.5 GHz, with enhanced branch prediction, store writeback, and address translation. Each core has 36MB of L2 cache, and total on-chip cache capacity is 40% higher than in the previous generation. The chip supports virtual L3 and L4 cache levels, expanding available cache to 360 MB and 2.88 GB, respectively. Additionally, Telum II integrates a data processing unit (DPU) to accelerate transactional workloads, which the company says increases overall system responsiveness. The chip is manufactured using Samsung’s 5HPP fabrication process and contains 43 billion transistors.
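The cache figures above are mutually consistent if the virtual levels are formed by pooling L2 caches. The short sketch below checks the ratios. The slice and chip counts it derives are inferred from the quoted capacities, not stated in the article, and decimal units (1 GB = 1000 MB) are assumed.

```python
# Capacities quoted in the article.
L2_MB = 36            # one L2 cache
VIRTUAL_L3_MB = 360   # virtual L3
VIRTUAL_L4_GB = 2.88  # virtual L4

# Inferred (not stated in the article): how many L2 caches make up the
# virtual L3, and how many virtual L3s make up the virtual L4.
l2_caches_per_l3 = VIRTUAL_L3_MB // L2_MB
l3_pools_per_l4 = round(VIRTUAL_L4_GB * 1000 / VIRTUAL_L3_MB)

print(f"L2 caches pooled into virtual L3: {l2_caches_per_l3}")
print(f"Virtual L3 pools in virtual L4:   {l3_pools_per_l4}")
```

Under these assumptions the virtual L3 corresponds to ten 36 MB L2 caches and the virtual L4 to eight such pools.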

Telum II's improvements are not limited to general-purpose performance. A central element of the processor is its upgraded AI unit, which delivers four times the compute capability of the previous generation, reaching 24 trillion operations per second (TOPS) at INT8 precision. On its own, 24 TOPS may not sound impressive. However, the NPU is designed for mission-critical, time-sensitive applications and supports ensemble AI methods (combining traditional machine learning models with large language models) to detect suspicious activity and fraud attempts.

Every AI unit within a processor drawer can accept tasks from any of the CPU cores. This spreads processing demand evenly and makes the full 192 trillion operations per second per drawer available when all accelerators are active.
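The per-drawer figure follows from the per-chip figure if a drawer holds eight Telum II processors. That count is inferred here from the two quoted numbers rather than stated in the article, and `drawer_tops` is a hypothetical helper used only for illustration.

```python
# Figures quoted in the article.
TOPS_PER_CHIP = 24      # INT8 TOPS per Telum II AI unit
TOPS_PER_DRAWER = 192   # aggregate INT8 TOPS per processor drawer

# Inferred, not stated in the article: Telum II chips per drawer.
chips_per_drawer = TOPS_PER_DRAWER // TOPS_PER_CHIP


def drawer_tops(active_chips: int, tops_per_chip: int = TOPS_PER_CHIP) -> int:
    """Peak aggregate INT8 TOPS when `active_chips` AI units are in use."""
    return active_chips * tops_per_chip


print(f"Chips per drawer (inferred): {chips_per_drawer}")
print(f"Peak TOPS with all AI units active: {drawer_tops(chips_per_drawer)}")
```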

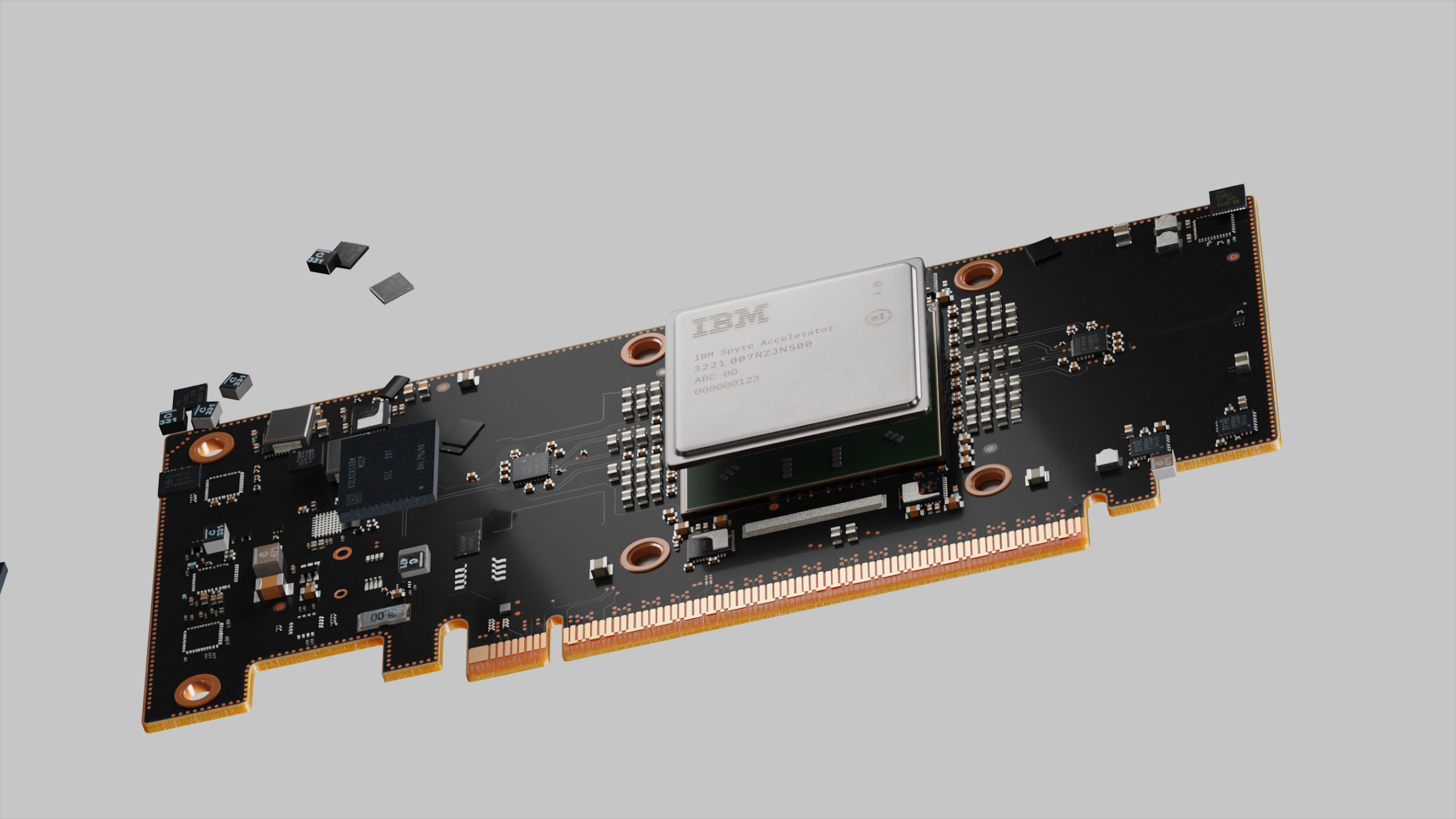

IBM understands that some workloads will require more AI performance. Hence, alongside Telum II, IBM unveiled the Spyre AI accelerator card with a PCIe interface. This 26-billion-transistor processor packs 32 AI cores, and its architecture closely resembles that of the AI accelerator in Telum II. It can therefore be used to dynamically expand the AI capabilities and performance of z17 drawers.

"The industry is quickly learning that AI will only be as valuable as the infrastructure it runs on," said Ross Mauri, general manager of IBM Z and LinuxONE, IBM. "With z17, we are bringing AI to the core of the enterprise with the software, processing power, and storage to make AI operational quickly. Additionally, organizations can put their vast, untapped stores of enterprise data to work with AI in a secured, cost-effective way."

To support AI workloads at the system level, IBM intends to introduce z/OS 3.2, an updated version of its mainframe operating system, in Q3 2025. The new OS is designed to work with hardware acceleration and supports NoSQL and hybrid cloud data.


As is traditional for IBM's Z mainframes, the new z17 features robust security capabilities, including a new tool called IBM Vault, originally developed by HashiCorp, that handles credentials, keys, and tokens across hybrid environments.

The system also includes hardware-level support for data classification and anomaly detection using the inference capabilities of the Telum II CPU.

As for storage, the z17 will use IBM's DS8000 Gen10 system, which is designed to support high-speed transactions, availability, and scalability for mission-critical operations.

The IBM z17 will be available starting June 18, 2025, with the Spyre Accelerator arriving later in the year.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


bit_user: If you thought Nvidia's DGX systems were expensive...

I think the real story on the AI acceleration is that it's for people who need to use mainframes for regulatory reasons, or maybe they're just deep-pocketed and afraid to break with tradition. For the latter set, AI was probably the one thing that would lure them out of the mainframe world and into the cloud. IBM was probably worried that once they started using the cloud for AI, they might decide they could migrate their other computing tasks there, as well.

thestryker: I imagine the on die AI capabilities are mostly just standard gen on gen improvement, but the addin board certainly isn't. It would be interesting to see what the practical application ends up being given how these systems are typically used. Ever since I first saw the improvements IBM was doing each z series generation they've been fascinating to watch. They have so much design innovation it's easy to forget that at their core they're still about allowing native execution of code that goes back decades.

bit_user:

thestryker said: I imagine the on die AI capabilities are mostly just standard gen on gen improvement, but the addin board certainly isn't.

They said it uses the same AI cores as the CPUs. They don't say how many AI cores the CPU has, but if we assume it's only 1, then the Spyre should do up to 768 TOPS, which is still peanuts compared to Nvidia.

There's some more detail about non-AI parts of Telum II, here:

https://chipsandcheese.com/p/telum-ii-at-hot-chips-2024-mainframe-with-a-unique-caching-strategy

thestryker said: They have so much design innovation it's easy to forget that at their core they're still about allowing native execution of code that goes back decades.

Each CPU only has 8 cores, which is sorta shocking in this day and age and considering how much they cost. That legacy code probably should've been rewritten ages ago. Leaving that aside, I wonder how fast it'd run in a JIT emulator on a modern x86 or ARM server CPU.

The part I find most interesting is that they still manage to pull enough revenue to fund the development of a custom microarchitecture and ...well, everything else.

Sam Hobbs: How does the performance of the Spyre AI accelerator cards compare to AI processors from other companies?

bit_user:

Sam Hobbs said: How does the performance of the Spyre AI accelerator cards compare to AI processors from other companies?

If we take the above figure of 768 TOPS and consider it to mean dense, int8 tensors, here would be the comparable scores for a few recent Nvidia products:

| Model | TOPS (int8, dense) | Max Power (W) |
|---|---|---|
| L4 | 485 | 72 |
| L40 | 1448 | 300 |
| RTX 5090 | 1676 | 575 |
| H200 | 1979 | 700 |
| B200 | 4500 | 1200 |

I'm guessing the 768 figure is an overestimate, because they apparently use only 75 W each, according to this:

https://www.servethehome.com/the-ibm-z17-mainframe-brings-ai-with-telum-ii-and-spyre/

Based on that, Nvidia's L4 is a rather useful point of comparison. I think they're also made on a similar process node and use similar memory (the Spyre appears not to use HBM-class DRAM). At 75 W, they're probably clocked a fair bit lower than the AI unit incorporated into each Telum II processor. It could also have fewer AI cores than I assumed, since my estimate attributed one unit to each Telum II. So, my guess of 768 might even be something like 3x the real figure. Whatever the case, I think there's no way it's faster than an L4.
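To make the power argument concrete, here is a rough sketch that computes TOPS per watt from the figures quoted in this thread. The Nvidia numbers are the ones listed above, taken as given rather than verified, and the Spyre entry uses the 768 TOPS upper-bound guess together with the reported 75 W.

```python
# (model, int8 TOPS as quoted in this thread, max power in watts)
NVIDIA = [
    ("L4", 485, 72),
    ("L40", 1448, 300),
    ("RTX 5090", 1676, 575),
    ("H200", 1979, 700),
    ("B200", 4500, 1200),
]

# Upper-bound guess from this thread, not an IBM figure.
SPYRE_TOPS_GUESS = 768
SPYRE_POWER_W = 75


def tops_per_watt(tops: float, watts: float) -> float:
    """Efficiency in TOPS per watt."""
    return tops / watts


efficiency = {name: tops_per_watt(t, w) for name, t, w in NVIDIA}
spyre_efficiency = tops_per_watt(SPYRE_TOPS_GUESS, SPYRE_POWER_W)

for name, value in efficiency.items():
    print(f"{name:10s} {value:6.2f} TOPS/W")
print(f"{'Spyre (?)':10s} {spyre_efficiency:6.2f} TOPS/W")
```

With these inputs, the 768 TOPS guess would put the Spyre well ahead of every listed Nvidia part in TOPS per watt, including the L4 at a similar power level, which is one more reason to treat 768 as an overestimate.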

BTW, I just took another look at the die shot and it's quite clear the AI unit contains at least two units - maybe 4 or even more?

Source: https://chipsandcheese.com/p/telum-ii-at-hot-chips-2024-mainframe-with-a-unique-caching-strategy

However many you think it is, divide the 768 TOPS figure by that. Then, maybe tweak it further for power/clock speed.
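That adjustment can be written out as a small calculation. This is only a sketch of the guesswork in this thread: the 32 cores and 24 TOPS come from the article, while the number of AI cores per Telum II AI unit and the clock scaling factor are unknowns that you have to plug in yourself.

```python
SPYRE_AI_CORES = 32          # from the article
TELUM_II_AI_UNIT_TOPS = 24   # int8 TOPS per Telum II AI unit, from the article


def spyre_tops_estimate(cores_per_ai_unit: int, clock_scale: float = 1.0) -> float:
    """Rough Spyre int8 TOPS estimate.

    cores_per_ai_unit: assumed number of AI cores inside one Telum II AI unit
                       (unknown; a guess based on the die shot).
    clock_scale:       assumed Spyre clock relative to Telum II's AI unit
                       (unknown; 1.0 means the same clock).
    """
    if cores_per_ai_unit < 1:
        raise ValueError("cores_per_ai_unit must be at least 1")
    tops_per_core = TELUM_II_AI_UNIT_TOPS / cores_per_ai_unit
    return SPYRE_AI_CORES * tops_per_core * clock_scale


for cores in (1, 2, 4):
    print(f"{cores} core(s) per AI unit: {spyre_tops_estimate(cores):.0f} TOPS")
```

With one core per AI unit this reproduces the 768 TOPS figure; two or four cores per unit cut it to 384 or 192 TOPS before any clock adjustment.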