Nvidia Refreshes Expensive, Powerful DGX Station 320G and DGX Superpod

Buy a DGX Station 320G for $149K, or rent one for just $9K per month

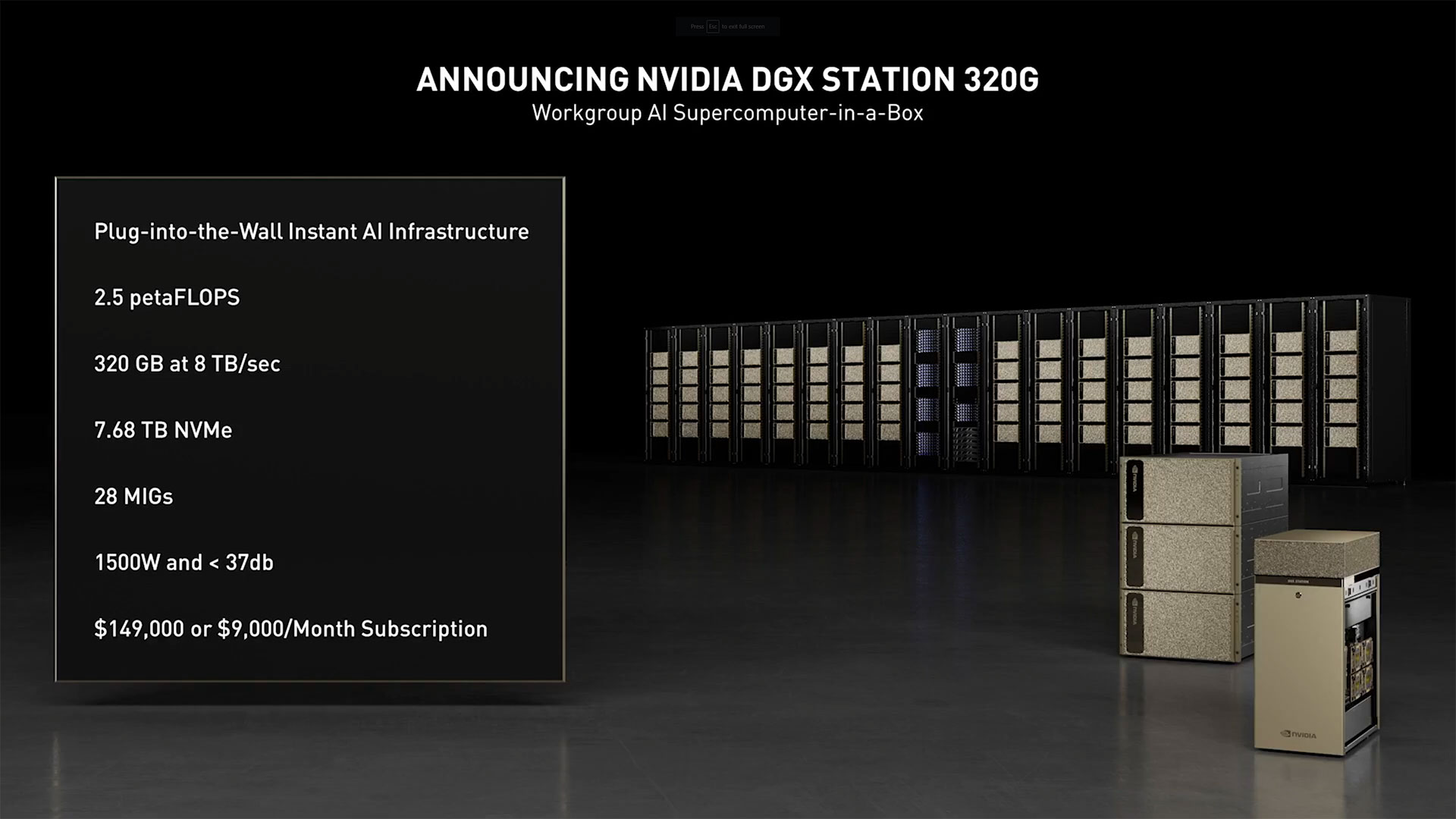

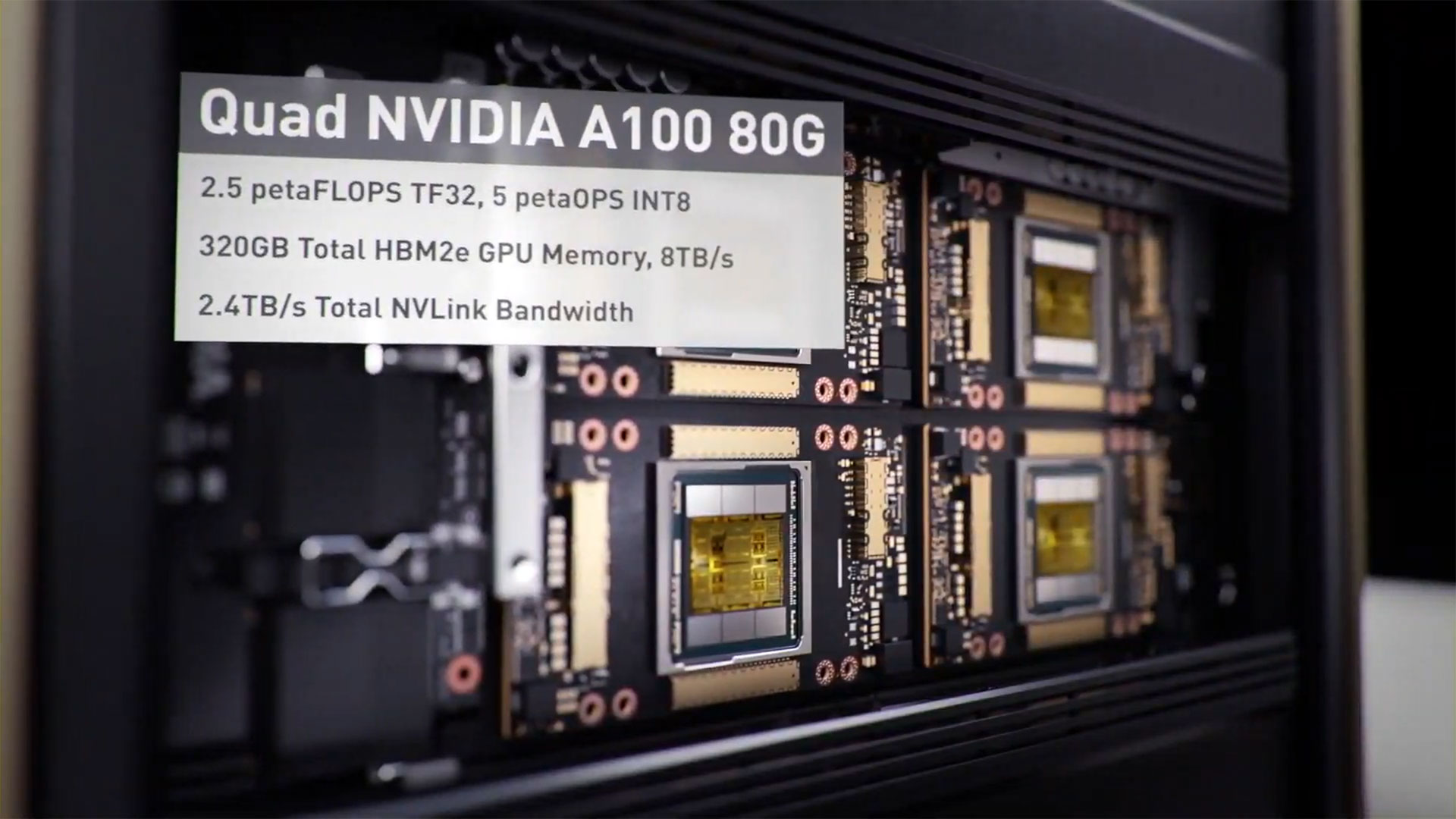

Nvidia today revealed its updated DGX Station A100 320G. As you might infer from the name, the new DGX Station sports four 80GB A100 GPUs, with more memory and memory bandwidth than the original DGX Station. The DGX Superpod has also been updated with 80GB A100 GPUs and Bluefield-2 DPUs.

Nvidia already revealed the 80GB A100 variants last year, with HBM2e clocked at higher speeds and delivering 2TB/s of bandwidth per GPU (compared to 1.6TB/s for the 40GB HBM2 model). We already knew as far back as November that the DGX Station would support the upgraded GPUs, but they're now 'available' — or at least more available than any of the best graphics cards for gaming.

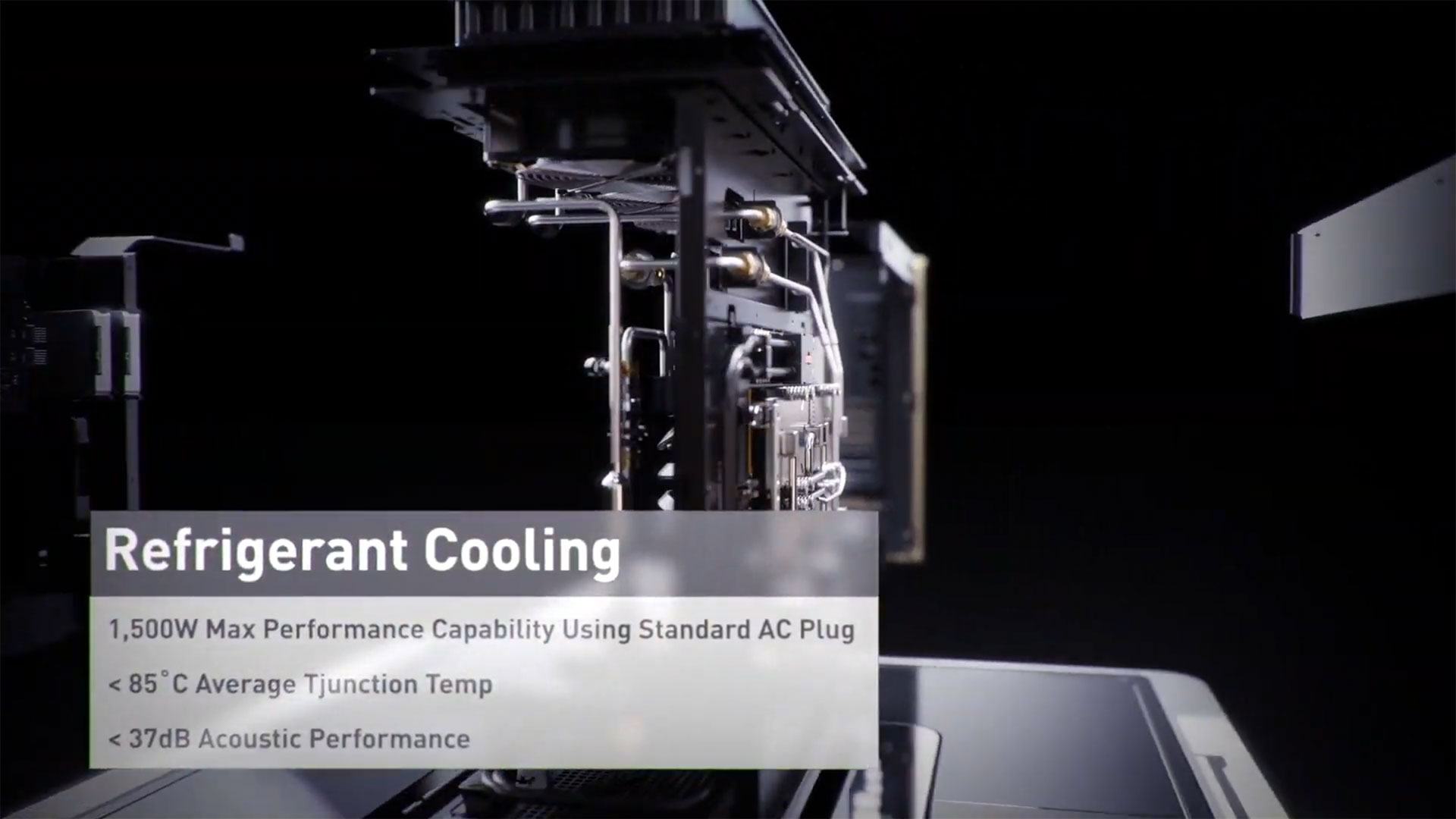

The DGX Station isn't for gaming purposes, of course. It's for AI research and content creation. It has refrigerated liquid cooling for the Epyc CPU and four A100 GPUs, and runs at a quiet 37 dB while consuming up to 1500W of power. It also delivers up to 2.5 petaFLOPS of floating-point performance and supports up to seven MIG (multi-instance GPU) instances per A100, for 28 instances in total across the four GPUs.

If you're interested in getting a DGX Station, it costs $149,000 per unit, or can be rented for $9,000 per month.
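For a rough sense of how the two prices compare, here's a minimal back-of-the-envelope sketch. It uses only the $149,000 purchase price and $9,000 monthly rental quoted above, and it assumes a flat rental rate with no financing, power, support, or resale costs factored in (none of which Nvidia has detailed here). The function names are ours, purely for illustration.

```python
import math

PURCHASE_PRICE_USD = 149_000    # per-unit purchase price quoted in the article
RENTAL_USD_PER_MONTH = 9_000    # monthly rental price quoted in the article


def cumulative_rent(months: int) -> int:
    """Total rent paid after a given number of months (flat rate assumed)."""
    return months * RENTAL_USD_PER_MONTH


def break_even_months() -> int:
    """First whole month at which total rent meets or exceeds the purchase price."""
    return math.ceil(PURCHASE_PRICE_USD / RENTAL_USD_PER_MONTH)


if __name__ == "__main__":
    months = break_even_months()
    print(f"Renting costs as much as buying after {months} months "
          f"(${cumulative_rent(months):,} in rent vs ${PURCHASE_PRICE_USD:,} to buy)")
```

Under those simplified assumptions, renting overtakes the purchase price in the 17th month, so a short-term project likely favors renting while anything approaching a year and a half tilts toward buying.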

The DGX Superpod has received a few upgrades as well. Along with the 80GB A100 GPUs, it now supports Bluefield-2 DPUs (data processing units). These offer enhanced security and full isolation between virtual machines. Nvidia also now offers Base Command, the software the company uses internally to help share its Selene supercomputer among thousands of users.

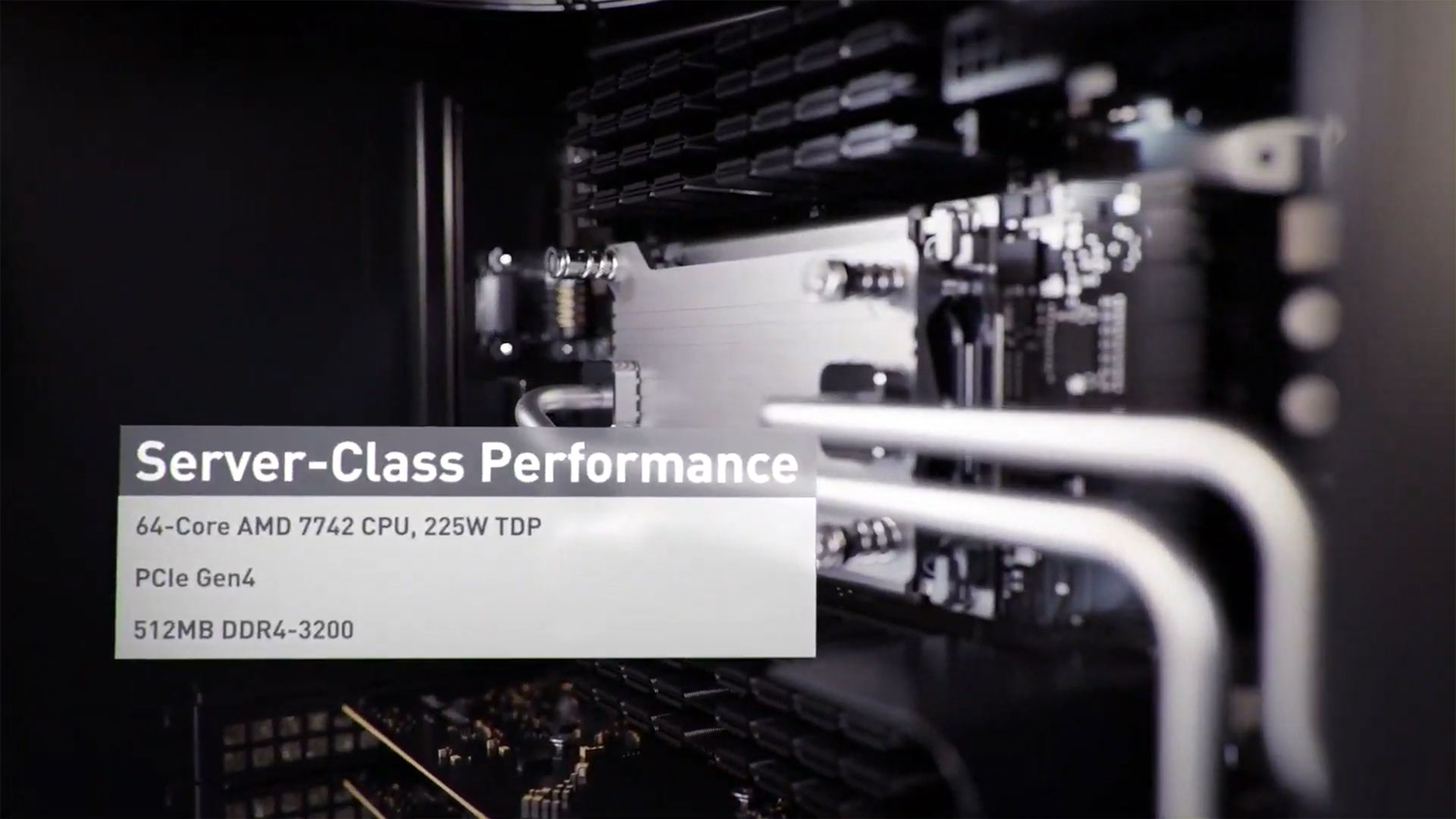

DGX Superpod spans a range of performance, depending on how many racks are filled. It starts at 20 DGX A100 systems with 100 PFLOPS of AI performance, while a fully equipped system has 140 DGX A100 systems and 700 PFLOPS of AI performance. Each DGX A100 system has dual AMD EPYC 7742 CPUs with 64 cores each, supports up to 2TB of memory, and has eight A100 GPUs. Each also delivers 5 PFLOPS of AI compute, though FP64 is also available for workloads that need the extra precision.
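The Superpod figures are simply the per-system numbers multiplied out, which a short sketch makes easy to check. It assumes peak AI performance adds up linearly across systems (real workloads rarely scale perfectly) and that every system carries eight of the 80GB A100 GPUs. The helper name `superpod_totals` is hypothetical.

```python
PFLOPS_PER_DGX_A100 = 5    # AI compute per DGX A100 system, per the article
GPUS_PER_DGX_A100 = 8      # A100 GPUs per DGX A100 system, per the article
GPU_MEMORY_GB = 80         # assumes every GPU is the 80GB A100 variant


def superpod_totals(num_systems: int) -> dict:
    """Aggregate peak figures for a Superpod, assuming simple linear scaling."""
    gpus = num_systems * GPUS_PER_DGX_A100
    return {
        "systems": num_systems,
        "gpus": gpus,
        "ai_pflops": num_systems * PFLOPS_PER_DGX_A100,
        "gpu_memory_gb": gpus * GPU_MEMORY_GB,
    }


if __name__ == "__main__":
    for size in (20, 140):  # entry-level and fully equipped configurations
        print(superpod_totals(size))
```

The 20-system and 140-system configurations work out to 100 and 700 PFLOPS respectively, matching Nvidia's quoted range, with 160 and 1,120 GPUs in total.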

The DGX Superpod starts at $7 million and scales up to $60 million for a fully equipped system. It'll be available in Q2. We'll take two, thanks.


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.