Google's Project Tango Plus Intel's RealSense Equals A Gaming Smartphone

It takes two to properly tango, or so we've heard, and two companies doing the dance at present are Intel and Google. During the IDF 2015 keynote, we saw a demo of a Project Tango phone (notably, not a tablet) equipped with an Intel RealSense camera that mapped a room in 3D in just seconds. There were also demos populating the show floor, and we experienced a few and asked some questions about the collaboration and the involved hardware.

Project Tango, Revisited

It's been a while since we last heard much from Google's Project Tango, but we can't say we were surprised to see a smartphone prototype emerge toting an Intel RealSense camera. The two projects have strikingly similar goals.

Project Tango was born in Google's ATAP division as a means of bringing together motion tracking sensors, "area learning" and depth perception cameras into a mobile device. The idea is that such a device would be able to map an area in 3D in real time and "learn" what it was seeing. You can imagine any number of potential use cases with such a technology in both AR and VR. Fun and games, real estate, construction, training -- you name it.

The Prototype

Project Tango dev kits in tablet form have been available for about a year, but at IDF a smartphone prototype packing a RealSense camera was there in the flesh (as it were). The camera inside the prototype is an R200, the newer "world-facing" RealSense camera.

The Camera

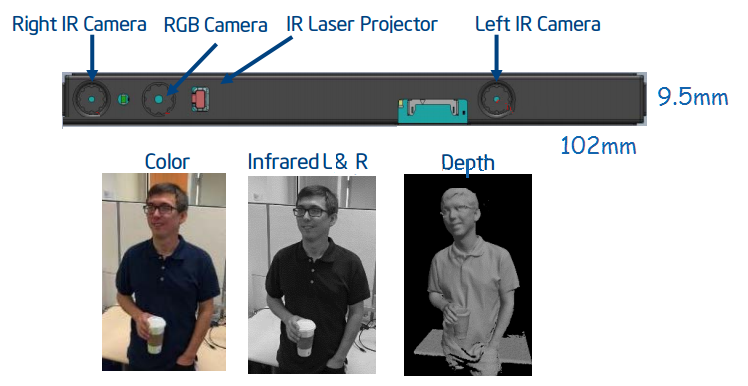

The R200 camera itself has right and left IR cameras, an RGB camera and an IR laser projector. The three cameras work together to provide a depth image, which also allows you to edit images extensively after you click the shutter. The R200 is able to estimate its position and orientation in real time, and it can also scan the environment and reproduce it in 3D.
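To give a rough sense of how a left/right camera pair can yield depth, here is a minimal sketch of the textbook pinhole stereo relation (depth = focal length x baseline / disparity). This is not Intel's actual pipeline, and the focal length, baseline, and disparity values are made-up assumptions for illustration, not R200 specifications.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Return depth in meters for one matched pixel (pinhole stereo model).

    disparity_px: horizontal shift of the same feature between the
    left and right images, in pixels.
    """
    if disparity_px <= 0:
        return None  # no valid match, or the point is effectively at infinity
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera parameters (assumptions, not R200 specs).
FOCAL_LENGTH_PX = 600.0
BASELINE_M = 0.07

for disparity in (84.0, 42.0, 21.0):
    depth = depth_from_disparity(disparity, FOCAL_LENGTH_PX, BASELINE_M)
    print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")
```

The takeaway is simply that nearby objects shift more between the two views than distant ones, so halving the disparity doubles the estimated depth.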

It's a "world-facing" unit, to borrow Intel's marketing terminology, and it's aimed at integration mostly within tablets and 2-in-1 devices, although there's also a standalone version you can purchase for $99. (For now, it's available only for preorder.)


The Phone

Specifications on the Project Tango handset itself are a bit sparse. However, seeing as this is a prototype, the internal hardware is likely to change anyway. Thus, instead of specifics, we're getting a general overview, but that will at least give us a sense of what kind of horsepower the phone requires to power itself and the R200 camera.

| Project Tango Smartphone Prototype | |
|---|---|
| SoC | Intel Atom x5-Z8500 (Cherry Trail); quad core, 1.44 GHz, 2 MB cache |
| Display | 6 inches, QHD (2560 x 1440) |
| Camera | Intel RealSense R200 |
| Sensors | Project Tango sensors and SDK |
| OS | Android 5.0.1 (Lollipop) |
| Dimensions | 8.2 mm thick; 165 g (5.8 oz) |

The above isn't a tremendous amount of information to go on, but, again, this is prototype hardware and isn't meant to be an end-user product. For what it's worth, the x5-Z8500 packs Intel HD graphics (200 MHz base frequency, 600 MHz boost) and supports up to 8 GB of LPDDR3-1600 RAM.

It's also important to note that, according to the demo team, Project Tango will eventually support Windows 10 Mobile, probably some time next year. This is a fascinating detail, as Project Tango was created by Google.

The Demos

The RealSense area at IDF 2015 had numerous demos, including the one we recently wrote about that uses Razer's new (but unnamed) RealSense-powered VR/game streaming peripheral with an OSVR HMD, but the two Project Tango demos showed how the handset could be used in gaming and other applications.

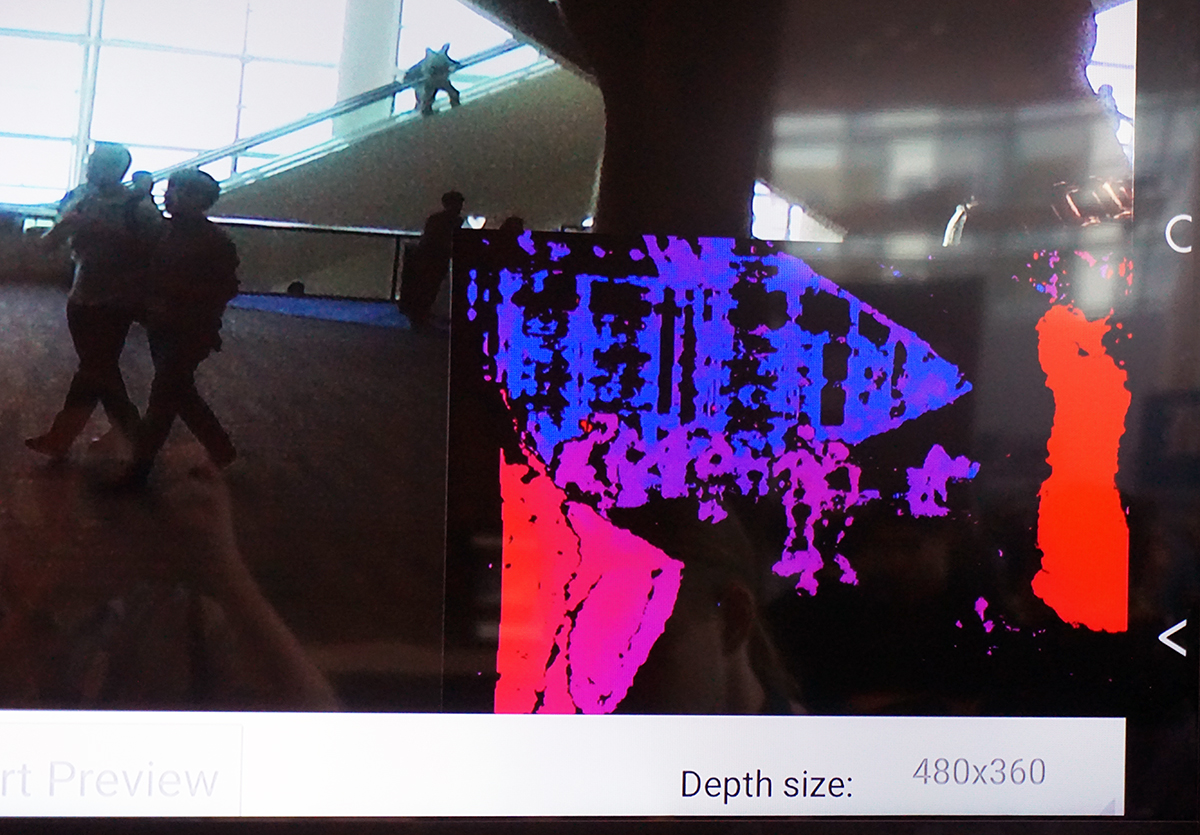

In both cases, there was a little window on the bottom right of the large displays that showed what the Project Tango handset was "seeing" in real time. The colors represent layers of depth. Note how the people closest to the camera are red, people farther back are purple, and the escalator off in the background is blue.
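A depth view like this can be approximated by blending a color from red to blue as distance increases, which naturally passes through purple in the middle. The sketch below is only an illustration of that idea; the `near_m` and `far_m` cutoffs are assumed values, and we don't know what color mapping the demo software actually used.

```python
def depth_to_rgb(depth_m, near_m=0.5, far_m=4.0):
    """Map a depth in meters to an (R, G, B) color.

    Near objects come out red, far objects blue, and anything in
    between is a red/blue blend (purple). near_m and far_m are
    assumed cutoffs, not values from the demo.
    """
    # Clamp the depth into [near_m, far_m], then normalize to 0..1.
    clamped = min(max(depth_m, near_m), far_m)
    t = (clamped - near_m) / (far_m - near_m)
    red = round(255 * (1.0 - t))
    blue = round(255 * t)
    return (red, 0, blue)


# Hypothetical scene: a person up close, a person mid-room, a far escalator.
for label, depth in (("near person", 0.5), ("mid person", 2.25), ("escalator", 6.0)):
    print(label, depth_to_rgb(depth))
```

Anything beyond the far cutoff is simply clamped to pure blue, which is why a distant background tends to read as one flat color.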

Playing A Little Game

The first demo I experienced used a Project Tango handset connected to a TV via a special dock to put you inside a simple game. The phone was placed on the dock in front of the TV with the RealSense camera pointed at me. For reference, it's the exact same idea as the Kinect, but it's not nearly as capable.

The prototype dock itself was heavy -- it felt like it weighed several pounds -- and that's by design, as it gives the phone a stable base. The phone connects to the dock via a proprietary connector, and the dock provides power as well as an HDMI out to the TV. I was told that a future consumer version of the dock will likely use a USB Type-C connector. (Support for USB Type-C is coming, at least.)

In the game, you just have to move side-to-side to avoid objects that come down the path, and try to pick up others. That's it. In my time in the demo, I found the lag to be fairly bad -- for such an intuitive movement, it was difficult to time my motions. As you can see in the video, the demonstrator did fairly well, although he was used to the lag and had compensated for it.

He was quick to note, however, that the device was hot -- it had been connected and running for several hours at that point -- and that contributed significantly to the lag issues. (For what it's worth, even a small amount of heat can throw off mobile performance. This is why we allow time for mobile devices to cool off between benchmark runs.)

What was impressive about the demo is that the game itself was running on the phone. The handset was streaming that content (via the wired dock) to the TV, at 2560x1440, and it was running the RealSense camera, processing the captured information in real time, and adding it to the game experience. That's a substantial workload for a 6-inch device.

Android gaming on a big screen is becoming more prevalent, and the point of the demo was to show what could be possible with a RealSense camera and a handset. You can imagine dropping your phone into a wired dock and streaming Netflix or running other applications that you could control with gestures from the couch.

Scanning And Playing

The second demo was more powerful, and it showed more potential gaming applications, some scanning capabilities, and some combinations thereof.

As you can see in the video, the demonstrator held a Project Tango phone connected to the display. As he moved the device around in space, the camera tracked the environment in real time, with an impressively small amount of lag. As he moved it, the handset maintained feature points (the green dots on the display) and combined them with sensor data (such as from the accelerometer and gyroscope) to calculate both the device's relative position and how far it had traveled.

That's a neat feat, especially for quickly 3D mapping a space, but you can use it in game environments, too.
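The difference between "relative position" and "how far it traveled" in the tracking demo is easy to show with a few lines of code. The sketch below assumes the hard part (fusing feature points with accelerometer and gyroscope data into position estimates) has already been done, and only handles the final bookkeeping; the function name and the sample path are hypothetical.

```python
import math


def displacement_and_distance(positions):
    """Summarize a tracked path.

    positions: time-ordered list of (x, y, z) estimates in meters.
    Returns (displacement, distance): the offset from the starting
    point, and the total path length walked to get there.
    """
    if not positions:
        return (0.0, 0.0, 0.0), 0.0
    start, end = positions[0], positions[-1]
    displacement = tuple(e - s for s, e in zip(start, end))
    # Sum the straight-line length of each step between successive estimates.
    distance = sum(math.dist(a, b) for a, b in zip(positions, positions[1:]))
    return displacement, distance


# Hypothetical path: 3 m forward, 4 m sideways, then 3 m back.
path = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (3.0, 4.0, 0.0), (0.0, 4.0, 0.0)]
offset, travelled = displacement_and_distance(path)
print("relative position:", offset)
print("distance travelled:", travelled, "m")
```

In this made-up path the device ends up 4 m from where it started but has covered 10 m in total, which is the kind of distinction a game or a room-scanning app would care about.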

In a game called Tango Blaster, the demonstrator showed how you can use the Project Tango handset as both a controller to fire your weapons and a means for the game to track your position. With your aim indicated by a red laser beam, you can walk around or wave the device in a physical space to move around or change your view in the game, and when you encounter an enemy obstacle, you can zap it.

We also saw a scenario in which the demonstrator used an app called TangoCubeStacker to scan the floor and create a virtual environment. Then, in what looked like a version of Minecraft, he was able to stack virtual brick blocks on the facsimile of the floor. Using the handset's display as a working viewfinder and the TV as a large, beautiful display, you can imagine kids spending hours creating virtual architectural masterpieces.

In this second spate of demos, the phone did not use the dock; instead, it was wired directly to the TV. If the USB Type-C connector makes its way onto the Project Tango phone, the power delivery capability could allow your kids to game their little brains out for hours without killing the device's battery. (Performance negatively impacted by heat from all that processing is a different matter, though.)

None of the above was particularly revolutionary, but it is compelling as a proof of concept. Project Tango showed a future where a higher-end handset equipped with the R200 RealSense camera could be both a gaming controller and a means of input.

Seth Colaner is the News Director at Tom's Hardware.
