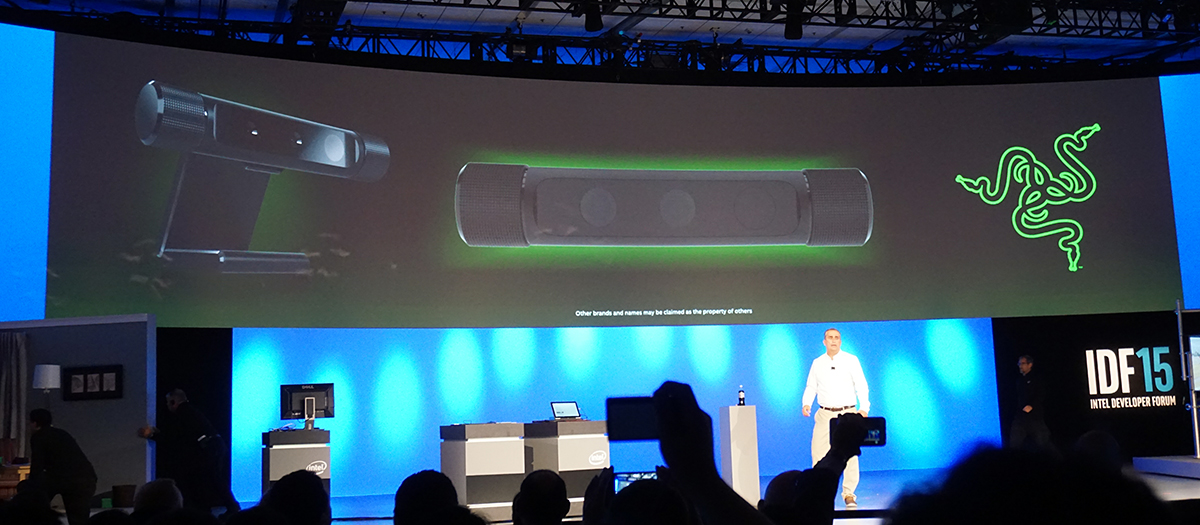

Razer's Unnamed RealSense-Powered 'VR' Camera, On An OSVR HMD (Updated)

A surprising announcement in the middle of Intel's IDF 2015 keynote was a new Razer peripheral -- a camera that, depending on whether you go by Intel's onstage announcement or Razer's press materials, is designed for Twitch game streaming or as a VR peripheral.

Whichever application you're most keen to explore, the peripheral does not yet have an actual name. That tells us it's still somewhat in beta, and we believe that is probably influenced by the Intel side of things.

I had the chance to enjoy a demo with the new peripheral at IDF 2015. Although the onstage demo focused on the "green screen effect" capabilities of the device (and indeed, the demo made a compelling case for its Twitch potential), I saw firsthand how it would be used in tandem with an OSVR HMD.

Giving OSVR A Hand -- Well, My Hands

Essentially, the Razer/Intel camera peripheral was used to get my hands into the virtual reality environment. This is precisely what Leap Motion purports to do. And just as in our hands-on (oh, the puns) experience with the Leap Motion controller, the Razer/OSVR experience was promising, but still had a ways to go before it was ready for primetime.

The Razer peripheral was mounted on the front of the OSVR (and connected to a Razer Blade 14 laptop), so that when I looked down at my hands while wearing the headset, the camera would "see" them.

With the headset on, an OSVR rep showed me a basic -- a very, very basic -- demo in which I had to try and grab brightly-colored balls and squares. I was inside a space that resembled -- I don't know how else to describe it -- a tomb that looked like it was pulled from Temple Run. (The colored shapes were anachronistic, to say the least.) I had to scoop up the shapes and pinch to hold them.

However, the mechanics were difficult to master, at best. In order to get the camera to recognize my hands, I had to start with my arms held low and slowly raise them up until they penetrated the platform on which the shapes sat. Then, I could move my hands around and knock the shapes about; however, the interaction was inconsistent. Sometimes a small movement would bounce a ball hard and fast, but then another, larger movement would barely register at all.

After a minute or so of fumbling ("You're actually doing better than most people," offered the rep), I was able to scoop up some shapes with my upturned palm. It took a while, but eventually the system began to recognize when I closed my fingers around a shape. Even so, I was never able to actually "grip" and hold anything.

The demo was relatively low-res and clunky, and it seemed rather hastily thrown together, but to be fair, OSVR didn't intend for it to be anything more than a proof of concept, really. Further, certain aspects of the demo were impressive: Namely, the camera did reproduce a somewhat grainy but otherwise colorful and accurate representation of my hands, and when I moved my arms forward, it reproduced those, too. It even reproduced my watch and shirtsleeves. There was also very little lag.

The above indicates to me that a better-tuned demo would likely produce much stronger results.

The Thing

We don't yet know much else about the thing itself (meaning the Razer-made device), and we also haven't been made privy to much about the RealSense camera inside it.

What we do know is that the camera uses the RealSense depth technology to draw a point cloud, and it uses the hand-tracking technology to paint a 3D model over it in real time.
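For readers curious what "drawing a point cloud" from depth data involves, here is a minimal sketch in Python. It is not Razer's or Intel's implementation and it does not use the RealSense SDK; it assumes a plain pinhole camera model, and the function name `depth_to_point_cloud` and the intrinsics `fx`, `fy`, `cx`, `cy` are illustrative placeholders rather than values from the device.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def depth_to_point_cloud(
    depth: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Back-project a depth image into 3D points (pinhole model, assumed).

    depth[v][u] is the distance along the optical axis, in meters, at
    pixel column u and row v. A value of 0 means "no reading" and is skipped.
    fx, fy are focal lengths in pixels; cx, cy is the principal point.
    All of these are hypothetical inputs, not RealSense parameters.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # no valid depth at this pixel
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Tiny made-up 2x3 depth image: two pixels have no reading.
depth = [
    [0.0, 0.5, 0.5],
    [0.5, 0.5, 0.0],
]
cloud = depth_to_point_cloud(depth, fx=2.0, fy=2.0, cx=1.0, cy=0.5)
```

A hand tracker would then fit a hand model to points like these on every frame; that step is considerably more involved and is not shown here.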

We also know that it will be sold as a standalone item, so users can deploy it however they want -- as a webcam replacement for Twitch or Skype, or as a VR aid. Pricing and a launch date are TBA.

Why?

To be clear, using this device for VR is a really great idea; what OSVR brings to the virtual reality market is a platform designed to let anyone with a great idea have a go at creating it, and it's good to see that at least two players (remember, Leap Motion has a similar product) are working on VR hand tracking like this.

An external hand-tracking peripheral will be a welcome addition to the market. Using your real hands inside of a VR experience is natural, and as the immersive tech improves, real hands will become more and more of an ideal means of input and control.

This stands in contrast to the HTC Vive's controllers, which are fun and easy to use but don't reproduce your actual hands. Oculus' Touch controllers take the idea of controllers-as-hands a big step further, but in neither case do they bring an image of your actual hands into the VR environment.

Further, although no one really knows anything about how much the upcoming spate of VR HMDs will cost, it's a good bet that the likes of the Rift and the Vive will be pricey, and their associated peripherals will add to that cost (or will cost plenty if sold separately).

It seems likely that OSVR will be a less expensive alternative, and a host of add-on peripherals for OSVR would be commensurately low-priced. Further, you'd get to pick and choose which controllers or peripherals you want, rather than be locked in to Oculus's or HTC/Vive's ecosystem.

Hand-tracking tech like this Razer/Intel camera has a ways to go, but it's healthy to see some progress being made, however slowly.

Update, 8/21/15, 7:45am PT: The RealSense camera in the Razer peripheral is an unreleased version called the RC250 "Falcon Crest." It's not the R200. We don't have any additional details on the camera at this point, unfortunately.

Seth Colaner is the News Director at Tom's Hardware. Follow him on Twitter @SethColaner. Follow us @tomshardware, on Facebook and on Google+.

Seth Colaner previously served as News Director at Tom's Hardware. He covered technology news, focusing on keyboards, virtual reality, and wearables.

wysir: Am I the only person here thinking that this is a major step for VR pr0n? Glove sensors would've been enough, but lets get right down to the skin..

DonGateley: I think it make a great porn VR camera even if interactivity isn't featured. Porn is mainly about voyeurism and anything that merely enhances that important aspect can be a winner.