Skylake: Intel's Core i7-6700K And i5-6600K

Intel gave us an early look at the Core i7-6700K, i5-6600K and Z170 chipset two weeks ahead of IDF and the unveiling of Skylake's architectural details.

Overclocking

The overclocking community isn’t always happy with the decisions that Intel makes in pursuit of greater integration, improved efficiency or even just lower cost. We’ve seen lower-quality thermal interface material, restrictive ratios and integrated voltage regulation affect tuning in different ways. Overall, though, the company seems receptive to feedback from the enthusiasts pushing its products beyond their factory specifications.

Sometimes the alterations are superficial in nature. Devil’s Canyon saw Intel make adjustments to the Haswell architecture’s power delivery and thermal performance. But we were still stuck with BCLK ratios, around which the base clock could only be manipulated plus or minus a few megahertz. A new architecture like Skylake gives Intel the opportunity to reevaluate more fundamental subsystems and change their behavior. With that in mind, the Core i7-6700K and Core i5-6600K represent a significant step forward in many ways, even if certain details remain unavailable (for example, what’s Intel using between its die and heat spreader this time?).

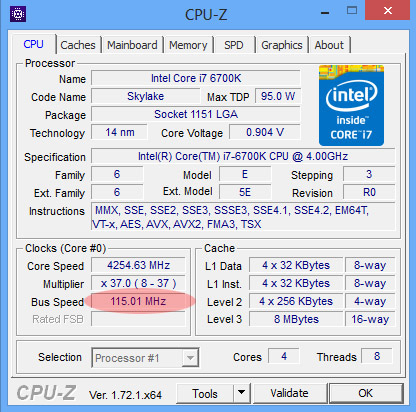

To begin, the Platform Controller Hub’s reference clock to the PCIe bus and I/O is fixed at 100MHz. A separate BCLK signal from the PCH facilitates tuning the processor’s cores, cache, graphics subsystem, memory controller and system agent in 1MHz increments up to 200MHz.

That signal is multiplied against a number of different ratios to dial in optimized clock rates. The cores, for instance, support ratios of up to 83x—higher than Haswell’s 80x ceiling, but far beyond the CPU’s practical limit in both cases. As with Haswell, Skylake’s ring bus ratios are not locked to the cores. Adjustments are available up to 83x as well, though you can detune the ring if you suspect it of holding back a more aggressive overclock elsewhere. The graphics engine offers ratios up to 60x, similar to Haswell and Ivy Bridge. And if you have a mobile Skylake CPU with embedded DRAM, adjustable ratios facilitate a first opportunity to improve its performance through overclocking.
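The relationship described above is simple multiplication: each domain's clock is BCLK times that domain's ratio. As a minimal sketch, assuming only the limits quoted in the text (83x for cores and ring, 60x for graphics, BCLK up to 200MHz), the arithmetic looks like this. The function and dictionary names are illustrative and do not come from any Intel tool, and the 115MHz/40x pairing is a hypothetical combination rather than a setting we tested.

```python
# Upper ratio limits quoted in the text; the keys are illustrative names.
MAX_RATIO = {"core": 83, "ring": 83, "graphics": 60}
MAX_BCLK_MHZ = 200  # BCLK tuning ceiling quoted in the text


def domain_clock_mhz(domain: str, bclk_mhz: float, ratio: int) -> float:
    """Return one domain's clock in MHz as BCLK multiplied by its ratio."""
    if not 0 < bclk_mhz <= MAX_BCLK_MHZ:
        raise ValueError(f"BCLK {bclk_mhz} MHz is outside 0-{MAX_BCLK_MHZ} MHz")
    if not 1 <= ratio <= MAX_RATIO[domain]:
        raise ValueError(f"{domain} ratio {ratio}x exceeds {MAX_RATIO[domain]}x")
    return bclk_mhz * ratio


if __name__ == "__main__":
    # 40x at the default 100MHz BCLK gives the 4GHz base clock.
    print(domain_clock_mhz("core", 100, 40))
    # Hypothetical: the same 40x ratio with BCLK raised to 115MHz.
    print(domain_clock_mhz("core", 115, 40))
```

Because BCLK feeds every domain at once, raising it shifts the cores, ring and graphics together, which is why the ratios are usually the first thing to adjust.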

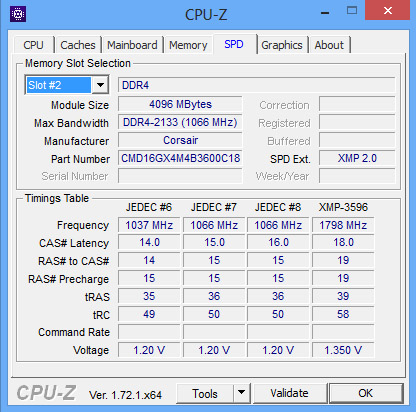

Intel’s early overclocking documentation suggested that memory ratios would be available up to 24x (at 133MHz) and 31x (at 100MHz), creating a maximum of around 3200 MT/s. However, the company's launch material mentions data rates of up to 4133 MT/s. We have kits in-house capable of 3600 MT/s, so there’s still room to scale up as motherboard vendors continue optimizing. Whereas previous architectures exposed ratios that stepped memory data rates up and down in 200/266 MT/s increments, Skylake is more granular with 100/133 MT/s steps. The addition of XMP 2.0 simply accounts for the new DDR4 specification. Intel’s XMP certification process remains unchanged, though.
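The memory figures above can be checked with the same kind of arithmetic. This sketch assumes the data rate is simply the memory ratio multiplied by the chosen reference (100MHz or 133.33MHz), which is inferred from the 24x and 31x figures rather than taken from Intel documentation. The names are hypothetical.

```python
from fractions import Fraction

# Reference clocks in MHz; 133 is really 400/3, kept exact to avoid rounding drift.
REFERENCE_MHZ = {"100": Fraction(100), "133": Fraction(400, 3)}


def data_rate_mts(ratio: int, reference: str) -> int:
    """Return the memory data rate in MT/s, rounded to the nearest integer."""
    return round(ratio * REFERENCE_MHZ[reference])


if __name__ == "__main__":
    print(data_rate_mts(24, "133"))  # top ratio on the 133MHz reference
    print(data_rate_mts(31, "100"))  # top ratio on the 100MHz reference
    # Pure arithmetic, not a documented setting: 31x on the 133MHz reference
    # lands near the 4133 MT/s quoted in the launch material.
    print(data_rate_mts(31, "133"))
```

Stepping the ratio by one moves the data rate by 100 or 133 MT/s depending on the reference, which is the finer granularity the text describes.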

Gone is the fully-integrated voltage regulator, which many enthusiasts faulted for making their Haswell-based CPUs run hotter and require higher-end cooling. It remains to be seen how much impact this has on real-world results, particularly since we’re dealing with a completely different architecture.

Our Hands-On Experience

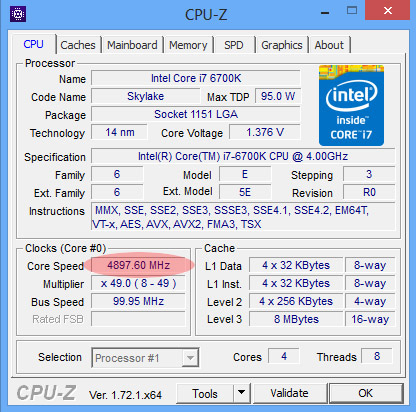

It’s early in Skylake’s life, and we’re still testing pre-production samples. We weren’t particularly optimistic about the architecture’s scalability given Intel’s 4GHz base clock rate and 4.2GHz peak Turbo Boost frequency on the Core i7-6700K. However, our experience so far suggests that 4.7GHz could be a reasonable target with minimal voltage increase.


One of our samples even cruised along at 4.9GHz using a 1.41V setting, but it wasn’t stable under load, and we’re not comfortable with that voltage.

We didn’t go to the trouble of trying to find our i7-6700K’s breaking point using single-megahertz BCLK adjustments. But just to show that Intel’s more flexible BCLK controls do work, we set the reference clock to 115MHz and snapped the following screenshot:

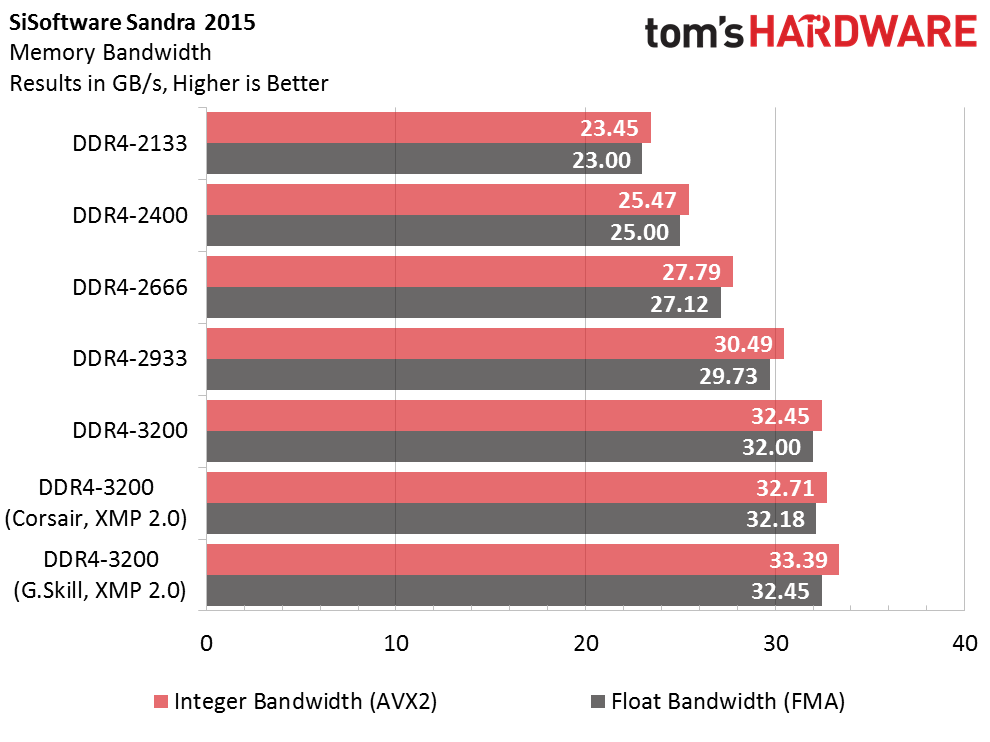

We also devoted more time to testing DDR4 memory scaling. Corsair and G.Skill both sent in kits capable of 3200 MT/s. Additionally, Corsair followed up with a 3600 MT/s configuration. Using MSI’s Z170A Gaming M7 motherboard, we tested at 2133, 2400, 2666, 2933 and 3200 MT/s. Both companies’ kits were rock solid using MSI’s default 3200 MT/s settings and their XMP profiles. But we weren’t able to get 3600 MT/s dialed in; Windows consistently crashed as it started up.

In the hours before launch, MSI did send over a firmware update that got 3466 MT/s stable, albeit at lower bandwidth than 3200 MT/s. It's only a matter of time, though, until even more aggressive memory settings become viable. Intel's early overclocking guidance suggested that ratios enabling 3200 MT/s would be possible and now the company is throwing around numbers like 4133 MT/s. Motherboard vendors are quickly optimizing for the enthusiast-oriented memory kits popping up in anticipation of Skylake, so expect more on this front soon.

Bandwidth continues to scale with data rate. Starting at 2133 MT/s, a >23 GB/s result certainly isn’t bad for a dual-channel controller. By the time you get to 3200 MT/s, though, you’re up above 32 GB/s—an almost-40% increase.
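To put those numbers in context, here is a rough sketch comparing them with the theoretical peak of a dual-channel DDR4 interface, assuming 8 bytes per transfer on each 64-bit channel. The 23 and 32 GB/s inputs are the lower bounds quoted above, not exact benchmark results, so the efficiency figures are approximate. The function names are illustrative.

```python
BYTES_PER_TRANSFER = 8  # one 64-bit DDR4 channel moves 8 bytes per transfer
CHANNELS = 2            # dual-channel controller, as in the text


def peak_bandwidth_gbs(data_rate_mts: float, channels: int = CHANNELS) -> float:
    """Theoretical peak bandwidth in GB/s for a given data rate in MT/s."""
    return data_rate_mts * BYTES_PER_TRANSFER * channels / 1000


def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100


if __name__ == "__main__":
    # Measured values are the approximate lower bounds quoted in the text.
    for rate, measured in ((2133, 23.0), (3200, 32.0)):
        peak = peak_bandwidth_gbs(rate)
        print(f"DDR4-{rate}: peak {peak:.1f} GB/s, "
              f"measured ~{measured:.0f} GB/s ({measured / peak:.0%} of peak)")
    print(f"Measured gain: {percent_increase(23.0, 32.0):.1f}%")
```

The data rate rises by 50% between 2133 and 3200 MT/s, so a measured gain of just under 40% means bandwidth efficiency drops slightly at the higher setting.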

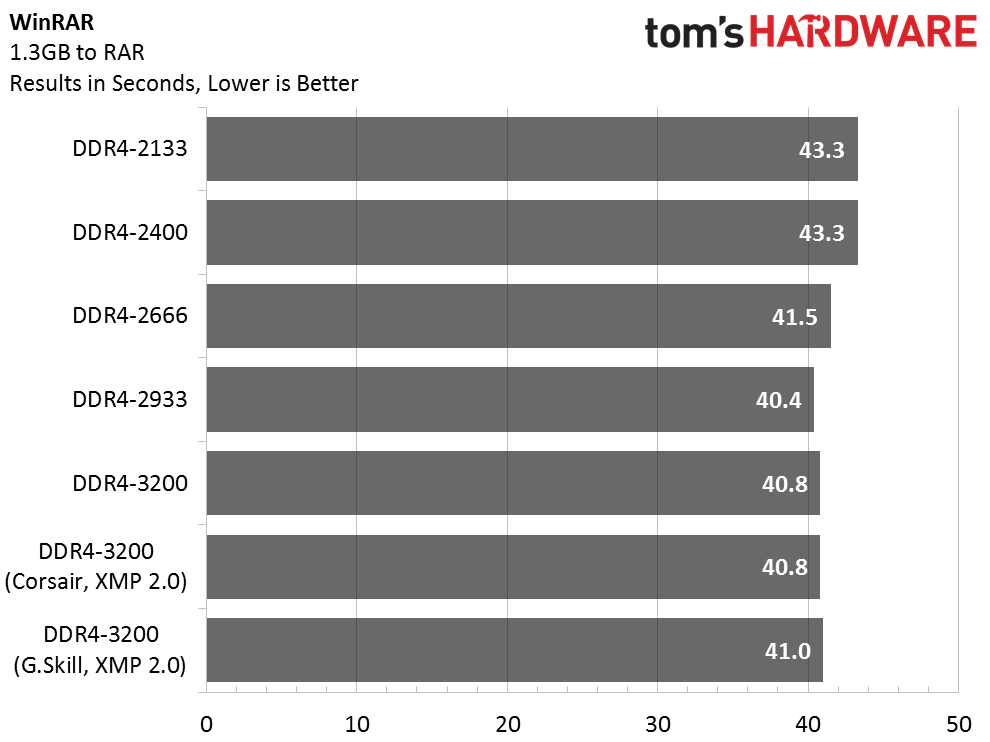

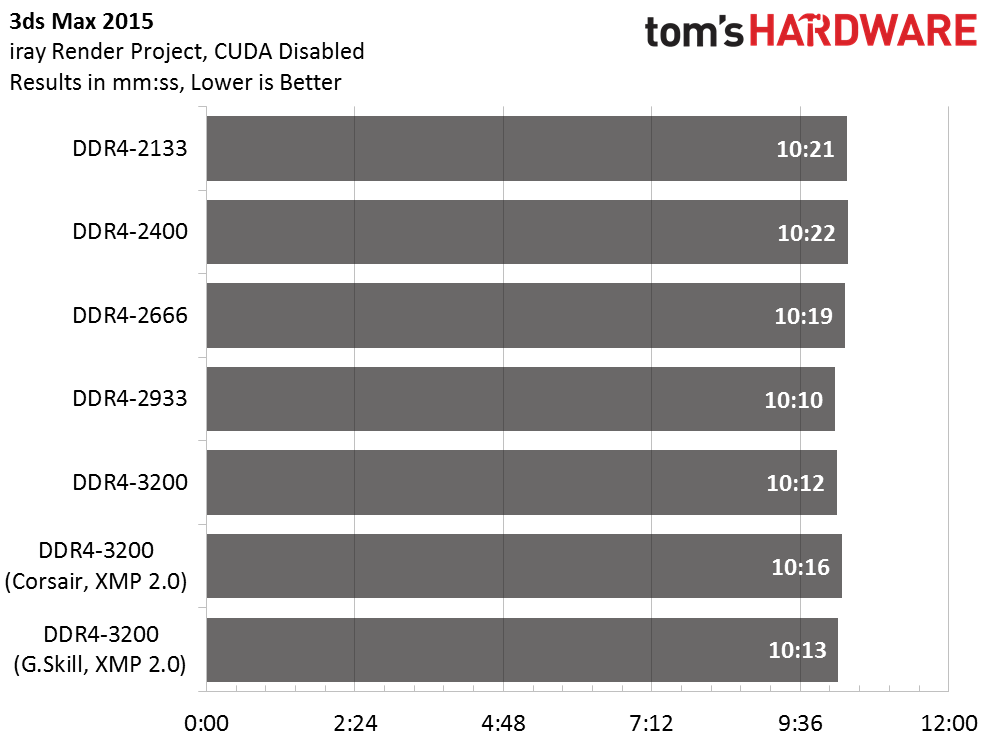

Of course, real-world performance doesn’t improve nearly as much. Intel’s desktop configurations typically aren’t starved for data given the workloads we hit them with, so a best-case speed-up in WinRAR is more like 6-7%. And that’s a test we know to be at least somewhat sensitive to memory performance. Pretty much everything else we run is less responsive.

Interestingly, it’s not at the highest data rate where performance is best, either. DDR4-2933 appears to be the peak, after which bandwidth keeps going up while our timed benchmarks slide ever so slightly.

Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

rantoc Yawn... its easy to see that intel have to little competition, they have stagnated in the cpu performance department!

Vlad Rose What the heck Intel? So, you provide great integrated graphics into Broadwell, then nerf it for Skylake? I guess you had to find a way to help sell your 'paper launch' of Broadwell. I really hope Xen makes you guys wake up; although it more than likely won't.

Bartendalot At least Skylake HEDT should be powerful. Unless DX12 pulls a rabbit out of a hat, this doesn't look promising for anyone who has Sandy or higher.

stairmand, quoting: "Still 4 cores.... Im sticking to my Q6600."

Then you really are missing out, 4 cores or not a current i5 (let alone an i7) will simply destroy the old Q6600 C2Q. It was great in the day but it's very old hat now and the lack of features on the board worse still.

salgado18, quoting: "Still 4 cores.... Im sticking to my Q6600."

You do know that your Q6600 is astronomically slower than Skylake in every single department, right? By your logic, the Phenom II X6 is better than the i7 6700K.

I think you should consider upgrading. You won't regret, promise.

salgado18, quoting: "What the heck Intel? So, you provide great integrated graphics into Broadwell, then nerf it for Skylake? I guess you had to find a way to help sell your 'paper launch' of Broadwell. I really hope Xen makes you guys wake up; although it more than likely won't."

Do you mean Shen, from LoL? Or Zen? XD

I believe the cost of the integrated memory chips would make these processors too expensive and niche to be viable products.

Lmah Good upgrade for 1st Gen i5/i7 users. Though I think they targeted it at the 2nd Gen i5/i7 users, doesn't seem like a huge improvement for them though.