When Will Ray Tracing Replace Rasterization?

The Basic Concepts

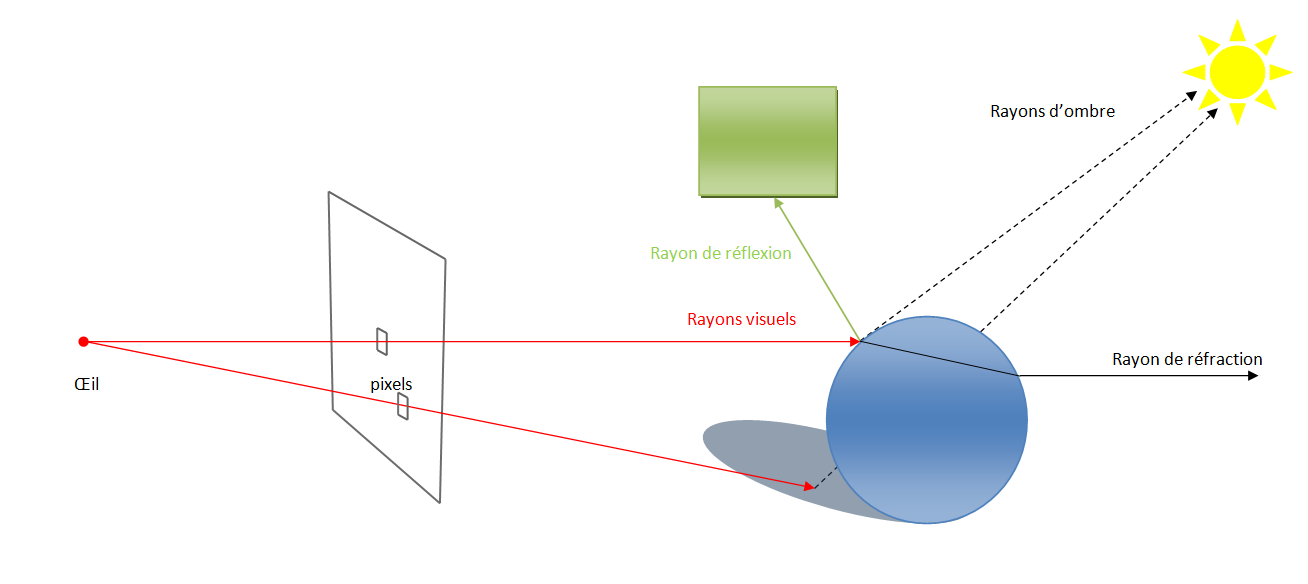

The basic idea of ray tracing is extremely simple: for each pixel of the display, the rendering engine casts a ray that propagates in a straight line until it intersects an element of the scene being rendered. This initial intersection is used to determine the color of the pixel as a function of the intersected element's surface.
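To make the per-pixel loop concrete, here is a minimal sketch of primary-ray casting. It is not taken from any particular renderer: the scene is assumed to consist only of spheres given as `(center, radius, color)` tuples, the camera is assumed to sit at the origin looking down the negative z axis, and the names `intersect_sphere` and `cast_primary_rays` are hypothetical.

```python
import math

def intersect_sphere(origin, direction, center, radius):
    """Return the distance along the ray to the nearest front hit, or None."""
    # Solve |origin + t*direction - center|^2 = radius^2 for t.
    # `direction` is assumed to be unit length, so the quadratic's a == 1.
    oc = [o - c for o, c in zip(origin, center)]
    b = 2.0 * sum(d * x for d, x in zip(direction, oc))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None  # the ray misses the sphere
    t = (-b - math.sqrt(disc)) / 2.0
    return t if t > 1e-6 else None

def cast_primary_rays(width, height, spheres, background=(0, 0, 0)):
    """Cast one primary ray per pixel and return rows of colors."""
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            # Map the pixel centre to [-1, 1] on an image plane at z = -1.
            px = 2.0 * (x + 0.5) / width - 1.0
            py = 1.0 - 2.0 * (y + 0.5) / height
            length = math.sqrt(px * px + py * py + 1.0)
            direction = (px / length, py / length, -1.0 / length)
            # Keep the closest intersection: its surface sets the pixel color.
            nearest, color = math.inf, background
            for center, radius, sphere_color in spheres:
                t = intersect_sphere((0.0, 0.0, 0.0), direction, center, radius)
                if t is not None and t < nearest:
                    nearest, color = t, sphere_color
            row.append(color)
        image.append(row)
    return image
```

Calling `cast_primary_rays(3, 3, [((0.0, 0.0, -3.0), 1.0, (255, 0, 0))])` colors only the pixels whose ray hits the sphere; every other pixel keeps the background color.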

But this alone is not enough to achieve realistic rendering. The lighting of the pixel also has to be determined, which is done by shooting secondary rays (as opposed to the primary rays that determine the visibility of the different objects making up the scene). To calculate a scene's lighting effects, secondary rays are emitted from the intersection point toward the different light sources. If one of these rays is blocked by an object, the point lies in the shadow cast by that light source. Otherwise, the light source contributes to the point's lighting. The sum of the contributions from all the secondary rays that reach a light source determines the quantity of light falling on our scene element.
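The shadow test can be sketched as follows. This is an illustration under stated assumptions rather than a reference implementation: lights are point lights given as `(position, intensity)` pairs, occluders are spheres given as `(center, radius)` pairs, and each unblocked light contributes a simple diffuse (Lambert) term, a shading model the article does not specify. The function names are hypothetical.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    n = math.sqrt(dot(v, v))
    return tuple(x / n for x in v)

def hit_distance(origin, direction, center, radius):
    """Distance to the nearest front hit on a sphere, or None (unit direction)."""
    oc = sub(origin, center)
    b = 2.0 * dot(direction, oc)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None
    t = (-b - math.sqrt(disc)) / 2.0
    # The small epsilon avoids re-hitting the surface the ray starts on.
    return t if t > 1e-4 else None

def direct_light(point, normal, lights, occluders):
    """Sum the diffuse contribution of every light whose shadow ray is unblocked."""
    total = 0.0
    for light_pos, intensity in lights:
        to_light = sub(light_pos, point)
        distance = math.sqrt(dot(to_light, to_light))
        direction = normalize(to_light)
        # Shadow ray: blocked if any occluder lies between the point and the light.
        blocked = False
        for center, radius in occluders:
            t = hit_distance(point, direction, center, radius)
            if t is not None and t < distance:
                blocked = True
                break
        if not blocked:
            total += intensity * max(0.0, dot(normal, direction))
    return total
```

A point directly below a light receives that light's full intensity until a sphere is placed between the two, at which point the contribution drops to zero while other, unblocked lights still add theirs.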

But that's still not the whole picture. To achieve even more realistic rendering, the material's reflection and refraction properties have to be taken into consideration. In other words, the amount of light reflected at the point where the primary ray hits the surface and the amount of light that passes through the material have to be accounted for. Here again, rays are emitted to determine the final color of the pixel.
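The directions of these two additional rays follow from standard optics: mirror reflection about the surface normal, and Snell's law for refraction. The sketch below computes only the directions; how much light goes each way depends on the material and is not modeled here. It assumes unit-length vectors, a normal pointing against the incoming ray, and refractive indices `n1` (the medium the ray leaves) and `n2` (the medium it enters). The function names are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def reflect(incident, normal):
    """Mirror the incident direction about the surface normal: r = i - 2(i.n)n."""
    k = 2.0 * dot(incident, normal)
    return tuple(i - k * n for i, n in zip(incident, normal))

def refract(incident, normal, n1, n2):
    """Bend the incident direction per Snell's law.

    Returns None on total internal reflection, where no light is transmitted.
    """
    eta = n1 / n2
    cos_i = -dot(incident, normal)
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None
    cos_t = math.sqrt(1.0 - sin2_t)
    return tuple(eta * i + (eta * cos_i - cos_t) * n
                 for i, n in zip(incident, normal))
```

A ray hitting a surface head-on is reflected straight back and refracted straight through. A ray leaving a dense medium (say `n1 = 1.5`, `n2 = 1.0`) at 45 degrees yields `None`, since total internal reflection leaves nothing to transmit.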

In summary, there are several types of rays. Primary rays determine visibility and play a role comparable to that of the Z-buffer in rasterization. Secondary rays, cast from the points that the primary rays hit, consist of:

- shadow rays

- reflection rays

- refraction rays
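How these ray types combine can be sketched as a small recursive function. This is a simplified illustration, not Whitted's original formulation: it assumes a scene of spheres described by dictionaries with hypothetical keys `center`, `radius`, `diffuse`, and `mirror`, a single point light, grey-scale brightness instead of color, and it leaves out refraction rays for brevity.

```python
import math

def sub(a, b): return tuple(x - y for x, y in zip(a, b))
def add(a, b): return tuple(x + y for x, y in zip(a, b))
def scale(a, s): return tuple(x * s for x in a)
def dot(a, b): return sum(x * y for x, y in zip(a, b))
def normalize(a): return scale(a, 1.0 / math.sqrt(dot(a, a)))

def nearest_hit(origin, direction, spheres):
    """Return (distance, sphere) for the closest sphere hit, or (inf, None)."""
    best_t, best = math.inf, None
    for sphere in spheres:
        oc = sub(origin, sphere["center"])
        b = 2.0 * dot(direction, oc)
        c = dot(oc, oc) - sphere["radius"] ** 2
        disc = b * b - 4.0 * c
        if disc >= 0.0:
            t = (-b - math.sqrt(disc)) / 2.0
            if 1e-4 < t < best_t:  # epsilon skips the surface we start on
                best_t, best = t, sphere
    return best_t, best

def trace(origin, direction, spheres, light_pos, depth=0, max_depth=3):
    """Return a grey-scale brightness for one ray (unit-length direction)."""
    # Visibility: find what this ray sees first.
    t, sphere = nearest_hit(origin, direction, spheres)
    if sphere is None:
        return 0.0  # background
    point = add(origin, scale(direction, t))
    normal = normalize(sub(point, sphere["center"]))

    # Shadow ray: is the light visible from the hit point?
    to_light = sub(light_pos, point)
    light_dist = math.sqrt(dot(to_light, to_light))
    light_dir = normalize(to_light)
    shadow_t, _ = nearest_hit(point, light_dir, spheres)
    brightness = 0.0
    if shadow_t >= light_dist:
        brightness = sphere["diffuse"] * max(0.0, dot(normal, light_dir))

    # Reflection ray: recurse, up to max_depth bounces.
    if sphere["mirror"] > 0.0 and depth < max_depth:
        mirrored = sub(direction, scale(normal, 2.0 * dot(direction, normal)))
        brightness += sphere["mirror"] * trace(
            point, mirrored, spheres, light_pos, depth + 1, max_depth)
    return brightness
```

The `max_depth` cap is a design choice of this sketch: without it, two facing mirrors would bounce rays between each other indefinitely.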

This ray tracing algorithm is the result of the work of Turner Whitted, the researcher who invented it 30 years ago. Before his work, ray tracers used only primary rays, so Whitted's improvements were a giant step toward realism in scene rendering.

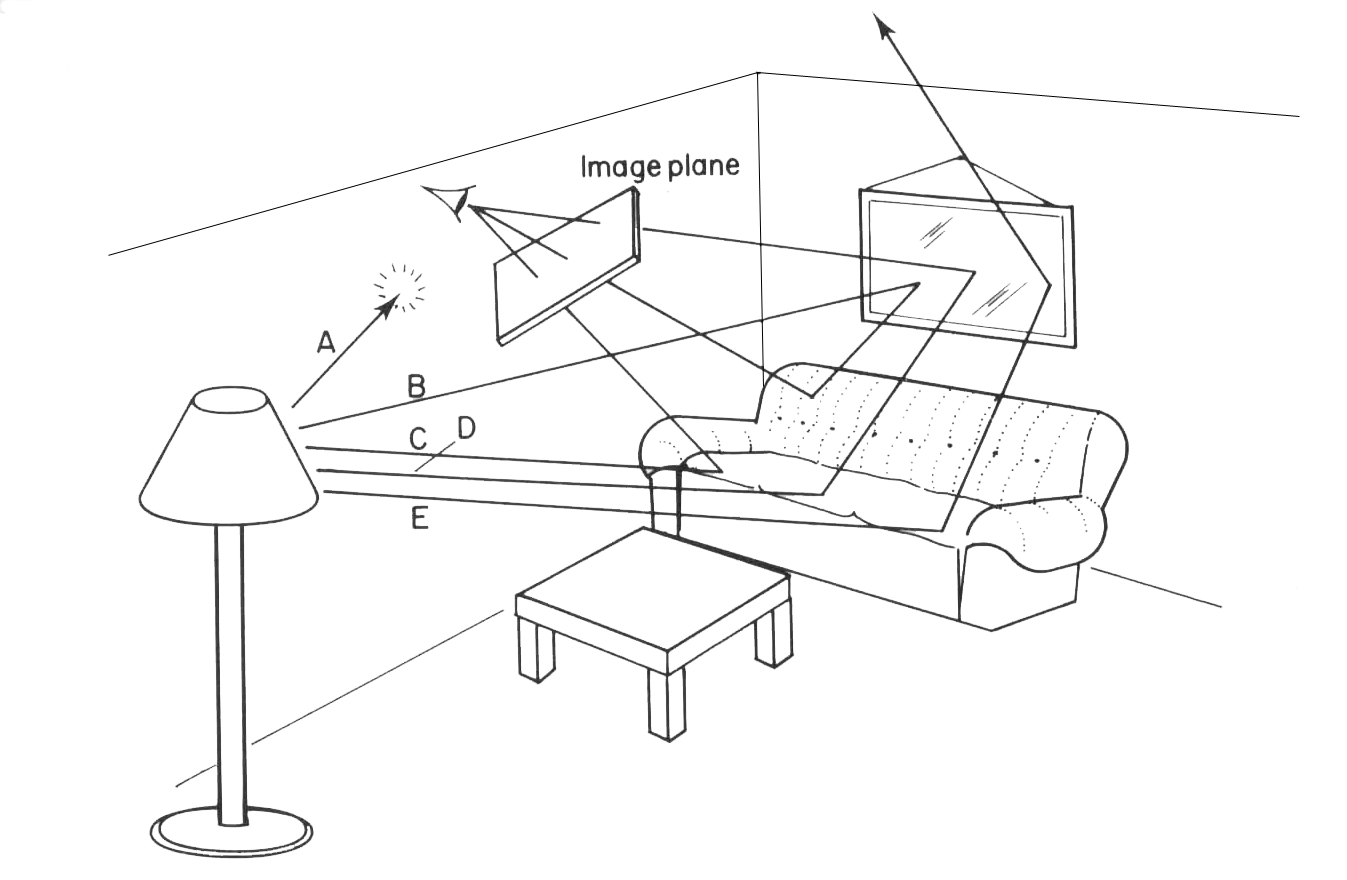

If you have some physics courses under your belt, you will have noticed that the way ray tracing operates is exactly the inverse of what happens in the real world. Contrary to a belief widely held in the Middle Ages, our eyes don't send out rays; they receive rays of light from light sources that have been reflected off the various objects around us. In fact, the first ray tracing algorithm worked the same way, following rays from the light sources into the scene.

But the main disadvantage of that technique was that it was extremely computationally expensive. For each light source, thousands of rays had to be cast, many of which had no influence on the scene being generated (because they didn't intersect the image plane). More recent ray tracing algorithms optimize the basic algorithm by casting rays from the viewpoint instead. They are referred to as backward ray tracing, since the rays propagate in the opposite direction from what happens in reality.


IzzyCraft: Greed? You give an inch they take a mile? Very pessimistic conclusion although it helps drive the industry so hard to really complain. ;)

Ramar: I'm definitely the kind of person that would prefer to lose some performance in exchange for elegance and perfection. The eye can tell when something is done cheaply in a render. I've made this argument that quite often we find computationally cheap methods of doing something in a game, and after time it seems to me that we've got a 400 horsepower muscle car that, on close inspection, is held together with duct tape and dreams. I'd much rather have a V6 sedan that's spotless and responds properly.

Okay, well in real life, the Half Life 2 buggy would be a lot cooler to drive around than a Jetta, but you get the analogy.

zodiacfml: i still like the simplicity of ray tracing and how close it is to physics/science. it is just how it works, bounce light to everything.

there are a lot of diminishing returns i can see in the future. some are: how complex can rasterization get? what are the diminishing returns for image resolution, especially on the desktop/living room?

ray tracing has a lot of room for optimization.

for years to come, indeed, raster is good for what is possible in hardware. look further ahead, more than 5 years, and we'll have hardware fast enough and efficient algorithms for ray tracing. not to mention the big cpu companies, amd & intel, who will push this and earn everyone's money.

stray_gator: aargh. start typing, then sign in to find your first words posted.

Anyway, what I liked about this article is its being under the hood, but not related to a new product, announcement or such.

"deep tech" articles accompanying product launches tend inevitably to follow the lines of press kits, PR slides, etc.

Articles like this, while they take longer to research, are exactly that: they are researched rather than detailing "company X implemented techniques Y and Z in their new product, which works this way, benefits performance that way and is really cool." It gives an independent, comprehensive view of the subject, and gives the reader real understanding in the field.

enewmen: The ray-tracing code on the business card was way cool. I was hoping (real-time) ray-tracing and photo-realistic rendering would come with DX11 and GPGPU offloading - this seems completely unrealistic.

I still never read of any dedicated ray-tracing hardware, at any price. It seems the better we understand ray-tracing and its limitations, the more cloudy the future becomes.

LORD_ORION: Ray tracing will inevitably replace rasterization. It will just flat out look better to the human perception, when in motion, than pure rasterization, and that is all that is required.

Heh... this article brought to you by Nvidia.

annymmo: Hopefully GPGPU (OpenCL) will make raytracing possible. (Together with a huge number of processing cores per graphic card and an advanced raytracing algorithm.)

Inneandar: nice article.

I wouldn't mind having just a little bit more technical depth, but I'd be glad to see more like this on Tom's.