Web Browser Grand Prix VI: Firefox 6, Chrome 13, Mac OS X Lion

Chrome 13, Firefox 6, Safari 5.1, and Mac OS X Lion (10.7) have all emerged since our last Web Browser Grand Prix. Today, we test the latest browsers on both major platforms. How do the Mac-based browsers stack up against their Windows 7 counterparts?

Performance Benchmarks: Startup Time

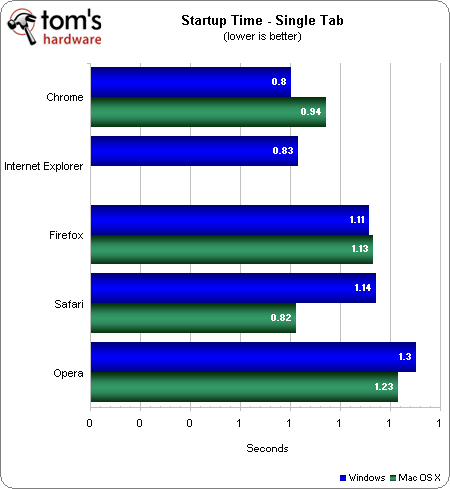

Single Tab

The Google home page serves as our test page for the single-tab startup time test.

Starting with a single tab, Google Chrome takes the lead, followed closely by IE9. Firefox 6 is the first browser to take more than one second to start up, landing Mozilla a third-place finish. Safari 5.1 ups the game, leap-frogging Opera to take fourth.

In Mac OS X, Apple's own Safari reaches the finish line first, just a fraction of a second behind Chrome's winning Windows 7 time. Google still manages to grab second place on OS X in under one second. Firefox 6 again comes in third at over one second, very close to the Windows 7 run. Opera still places last.

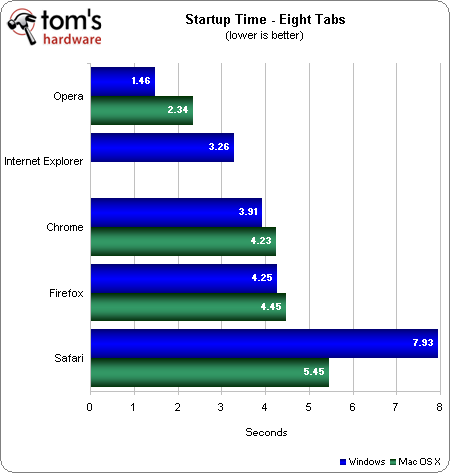

Eight Tabs

We used the Top Eight websites (according to Quantcast) for our eight-tab startup time test. These sites include: Google, Facebook, YouTube, Yahoo!, Twitter, MSN, Amazon, and Wikipedia.

When starting with eight tabs, it's Opera that really shines, finishing in just under 1.5 seconds. IE9 comes in second place. Chrome 13 places third at just under four seconds, while Firefox 6 takes fourth at 4.25 seconds. Safari falls far behind the pack at just under eight seconds.


Using Lion doesn't change the relative finishing order one bit (IE9, of course, isn't available on OS X). Opera still turns in the best time. Chrome takes second place at 4.25 seconds, Firefox is in third with 4.5, and Safari again places last with 5.5 seconds.

All of the third-party Web browsers start up in approximately the same time, or slightly slower, in OS X. Safari is the exception. We start to see that Safari has significant advantages on its native platform, starting up about 25% faster.
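For readers who want to reproduce a "percent faster" figure like the one above from their own stopwatch times, here is a minimal Python sketch. The function name `percent_faster` and the sample inputs are our own illustrative assumptions; the inputs are round numbers chosen to make the arithmetic obvious, not the measured times from the charts.

```python
def percent_faster(baseline_s: float, improved_s: float) -> float:
    """Return how much shorter improved_s is than baseline_s, as a percentage.

    Both arguments are startup times in seconds. The name and signature are
    hypothetical helpers for illustration, not part of any benchmark tool.
    """
    if baseline_s <= 0:
        raise ValueError("baseline_s must be positive")
    return (baseline_s - improved_s) / baseline_s * 100.0


# Illustrative round numbers only (NOT the article's measured results):
# a browser that needs 8.0 s on one platform and 6.0 s on another.
print(percent_faster(8.0, 6.0))  # 25.0
```

Note that the percentage is taken relative to the slower (baseline) time; dividing by the faster time instead would give a larger number for the same pair of measurements.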

The newer versions of Safari and Firefox seem to have helped Apple's and Mozilla's startup times. However, with Chrome 13, the times are higher than they were in version 12.


adamovera (quoting ne0nguy: "The first chart says 'higher is better' for the load time") thank you, workin' on it

SteelCity1981 Chrome is the best browser out there right now. While Firefox may be more popular than Chrome is, Chrome has shown why it is the best browser out today. If you haven't used Chrome yet it's def worth a look.

soccerdocks The reader function in safari actually looks really nice. Although I'd never use Safari on principle. I hope other browsers implement a similar function.

mayankleoboy1 why does firefox(6/8/9) perform so horribly on the IE9 maze solver test?

chrome13 completely obliterates it.

and firefox 8/9 are still a memory hog.

not really surprised by poor show of ie9. most updates it gets are "security updates".

tofu2go Being on a Macbook with only 3GB of memory, memory is the most important factor for me. I open a LOT of tabs and I keep them open for long periods. For awhile I used Chrome, but recently switched to Firefox 6 and saw my memory utilization drop by well over 1GB. Granted with Firefox I was able to do something I am not able to do in any other browser, I could group my tabs into tab groups. I believe this allows for more efficient memory management, i.e. only the current group uses much memory. Not having done any tests, this is pure speculation. All I know is that I'm seeing MUCH lower memory usage with Firefox on OSX. Despite what this article would suggest.

andy5174 @Google:

Bring back the Google Dictionary, otherwise I will use Bing Search, Firefox and Facebook instead of Google Search, Chrome and G+.

kartu "Firefox 6 comes in third for both OSes, representing a major drop from Firefox 5."

According to the graphic on "Reliability Benchmarks: Proper Page Loads" on MacOS Firefox is actually second, not third.

LaloFG I keep Opera, more memory used and time to load pages is nothing when it load pages correctly; and the feeling in its interface is the greater.