AMD Supercomputers with 327,680 Zen 2 Cores Will Help NOAA Predict the Weather

AMD Epyc systems win again based on performance-per-dollar

The United States’ National Oceanic and Atmospheric Administration (NOAA) announced plans to purchase and install two new forecasting supercomputers as part of its new 10-year $505.2 million program.

The two supercomputers have 2,560 dual-socket nodes placed in 10 cabinets and powered by second-generation AMD Epyc ‘Rome’ 64-core 7742 processors, all connected by Cray’s Slingshot network. That works out to 327,680 Zen 2 cores for the clusters.
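The core count follows directly from the node, socket, and per-CPU core figures quoted above. A minimal sketch of that arithmetic, with illustrative variable names that are not from NOAA or Cray:

```python
# Figures as quoted in the article; names are illustrative only.
NODES = 2_560            # dual-socket nodes
SOCKETS_PER_NODE = 2
CORES_PER_CPU = 64       # Epyc 7742


def total_cores(nodes: int, sockets_per_node: int, cores_per_cpu: int) -> int:
    """Multiply nodes by sockets per node by cores per CPU."""
    return nodes * sockets_per_node * cores_per_cpu


cores = total_cores(NODES, SOCKETS_PER_NODE, CORES_PER_CPU)
print(f"{cores:,} Zen 2 cores")
```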

Each machine will have 1.3 petabytes of system memory, and Cray’s ClusterStor systems will come with 26 petabytes of storage per site. Of that, 614 terabytes will be flash storage, with the rest split into two 12.5-petabyte HDD file systems.

The peak theoretical performance of each Cray system is 12 petaflops. Combined with its other research and operational capacity, NOAA’s supercomputers can reach a peak theoretical performance of 40 petaflops.

Cray’s Shasta systems haven’t appeared on the Top500 supercomputer list yet, but their promised performance would place them around 25th on the November 2019 list. However, the new Shasta systems won’t be installed until 2022, and they will likely rank lower by then.

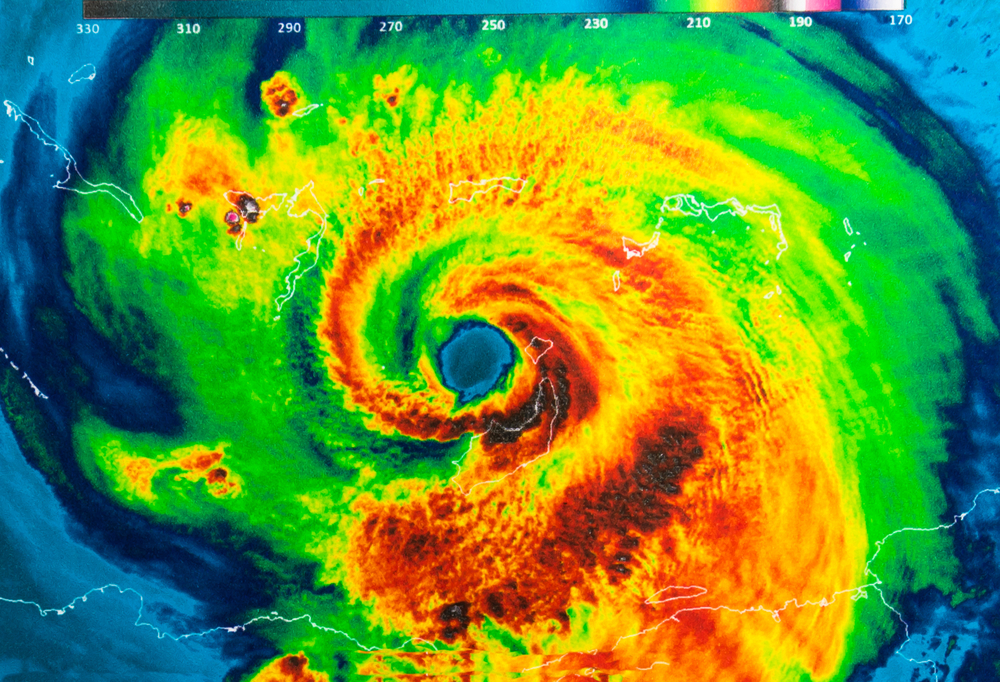

The NOAA scientists argue that leadership could also be measured based on how well hurricanes are tracked, the accuracy of the upper-level flow, identifying surface temperature anomalies, and so on.

They also don’t see the supercomputers as putting them in a “fierce competition” with their colleagues at other forecasting centers around the world.


Out With The Old (IBM, Dell), In With The New (Cray)

NOAA will be replacing a mix of eight older systems, most of them from IBM and Dell, plus two older Cray systems. IBM manages NOAA’s operational centers until 2022, after which General Dynamics Information Technology (GDIT) will take over for the following eight years, with an optional two-year renewal period. The contract with GDIT is worth $505.2 million over the 10-year span.

GDIT won the contract by meeting the 99% system availability requirement and by offering the best performance-per-dollar of all the bidders. GDIT’s choice of AMD’s Epyc CPUs may have played a significant role in reaching that performance-per-dollar objective, too. We've recently seen several other institutions opt for AMD Epyc-based systems due to their high performance-per-dollar.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.


spongiemaster

admin said: The NOAA announced that it will install two new AMD Epyc-based supercomputers for weather forecasting. AMD Supercomputers Heading to NOAA Will Predict Weather With 327,680 Zen 2 Cores : Read more

Wouldn't it be cheaper and easier to order a Farmers' Almanac off of Amazon?

OMGPWNTIME

svan71 said: Why do they state the core count rather than cpu count?

A system of 64-core chips totalling 327,680 cores would mean 5120 CPUs.

An interesting thing to consider is that while I'm sure you get a discount for ordering a massive number of CPUs, the MSRP of the 7742 processor is $6950, which would mean in excess of $35 million in CPUs alone.
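The estimate in the comment above can be reproduced with a quick calculation. This is only a sketch: it assumes list price with no volume discount, which the commenter notes is unlikely in practice, and the variable names are illustrative.

```python
# Figures as quoted in the comment; names are illustrative only.
TOTAL_CORES = 327_680
CORES_PER_CPU = 64       # Epyc 7742
MSRP_USD = 6_950         # list price quoted in the comment

cpu_count = TOTAL_CORES // CORES_PER_CPU
list_price_usd = cpu_count * MSRP_USD  # assumes no volume discount

print(f"{cpu_count:,} CPUs, ${list_price_usd:,} at list price")
```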

drivinfast247

svan71 said: my question was sarcastic really, 327,680 sounds a lot more exciting than 5120.

Or transistor count 163,840,000,000,000

ron baker

This will use so much Powa that it will create its own weather systems near the Heat exfiltration systems. Someone needs to develop a seriously efficient design, 5-10w per core, not this 60-90w nonsense. Back to the drawing board Mr Cray!

aldaia

ron baker said: This will use so much Powa that it will create its own weather systems near the Heat exfiltration systems. Someone needs to develop a seriously efficient design, 5-10w per core, not this 60-90w nonsense. Back to the drawing board Mr Cray!

AMD EPYC 7742 requires less than 4 W per CORE

bit_user

"Each machine will have 1.3 petabytes of system memory and Cray’s ClusterStor systems will come with 26 petabytes of storage per site."

That makes it sound like each 2-processor node will have 1.3 PB. In fact, if this number is for the whole site, then each node would have about 512 GB. So, that must be what it's describing.

Incidentally, 26 PB only works out to 10 TB per node, which is fairly unremarkable. The flash storage is only about 256 GB per node.

What's so strange about this is no mention of GPUs. The numbers check out, though. 12 PFLOPS works out to about 5 TFLOPS per node, which is in the ballpark of 2x 64-core EPYCs, and pretty low for anything more than a single GPU per node. In fact, a single Tesla V100 is rated at 7 TFLOPS of fp64. Or, sticking with AMD, a MI60 would net you 7.4 TFLOPS of fp64.

So, either their algorithms don't map well to GPUs, or maybe they involve a lot of legacy code that would be too painful to port. I'd expect they'd want to use deep learning models, though. GPUs would provide the best balance of versatility and performance, for a mix of deep learning & conventional models.
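The per-node figures in the comment above can be checked with a short calculation. This sketch assumes decimal units (1 PB = 1,000 TB = 1,000,000 GB) and that every quoted total is spread evenly over 2,560 nodes, which the article does not confirm. The names are illustrative.

```python
# Totals as quoted in the article; even division across nodes is an assumption.
NODES = 2_560

MEMORY_GB = 1_300_000    # 1.3 PB of system memory
STORAGE_TB = 26_000      # 26 PB of storage per site
FLASH_GB = 614_000       # 614 TB of flash
PEAK_TFLOPS = 12_000     # 12 petaflops peak


def per_node(total: float, nodes: int = NODES) -> float:
    """Divide a system-wide total evenly across the nodes."""
    return total / nodes


mem_gb_per_node = per_node(MEMORY_GB)
storage_tb_per_node = per_node(STORAGE_TB)
flash_gb_per_node = per_node(FLASH_GB)
tflops_per_node = per_node(PEAK_TFLOPS)

print(f"memory:  {mem_gb_per_node:.0f} GB per node")
print(f"storage: {storage_tb_per_node:.1f} TB per node")
print(f"flash:   {flash_gb_per_node:.0f} GB per node")
print(f"compute: {tflops_per_node:.2f} TFLOPS per node")
```

With decimal units the results come out slightly below the rounded figures in the comment (roughly 508 GB of memory and 240 GB of flash per node), but in the same ballpark.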

bit_user

ron baker said: Someone needs to develop a seriously efficient design, 5-10w per core, not this 60-90w nonsense. Back to the drawing board Mr Cray!

What are you even talking about? Where did you get those numbers?

FWIW, Seymour Cray died in 1996.

https://en.wikipedia.org/wiki/Seymour_Cray

That was several years after he spun off Cray Computer, which went bankrupt in 1995. So, he's had nothing to do with Cray Research for about 30 years, now.

bit_user

In an ironic twist, if you'd asked me what I'd do with access to a Cray, until a couple years ago, I'd have said something to the effect of "realtime raytracing would be a good start". Now, here we have a Cray that doesn't even have GPUs, each of which would be the most suitable platform for such an endeavor. My, how things change.

At this point, I think my brain is the limiting factor. I can't think of anything especially interesting to do on such a machine.