Apple M1 Max Catches up to RX 6800M, RTX 3080 Mobile in GFXBench 5

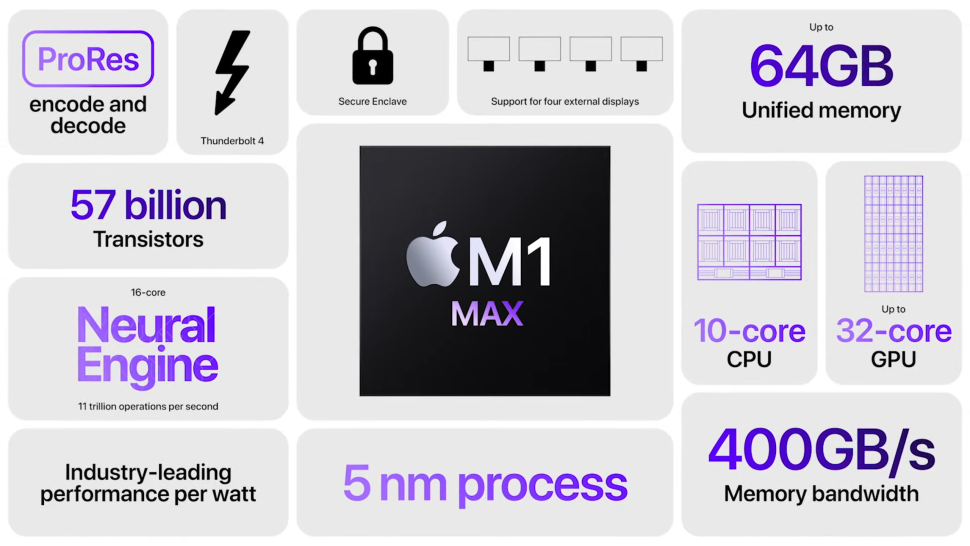

Apple announced the 14-inch and 16-inch MacBook Pros on Monday, marking the debut of the company's new M1 Pro and M1 Max SoCs. While we've already had a glimpse of the M1 Max's computing power, one Redditor has found a new result showing the M1 Max stretching its legs in a graphics benchmark.

The M1 Max wields a 32-core GPU with 16 execution units per core. Each execution unit houses eight ALUs, bringing the total number of ALUs in the M1 Max to 4,096. According to Apple, the GPU delivers up to 10.4 TFLOPS. In theory, the M1 Max's GPU should perform in the same ballpark as the GeForce RTX 3060 Mobile, which offers 10.91 TFLOPS.
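As a quick sanity check on these numbers, the sketch below multiplies out the GPU's core, execution-unit, and ALU counts, backs out the clock speed implied by Apple's 10.4 TFLOPS figure, and measures the gap to the RTX 3060 Mobile's 10.91 TFLOPS. It assumes each ALU performs one fused multiply-add (two FP32 operations) per clock, which is the usual convention behind peak-TFLOPS figures but not something Apple detailed, so the implied clock is an estimate rather than a published spec.

```python
# Back-of-the-envelope check of the M1 Max GPU figures quoted in the article.
# Assumption (not stated by Apple): each ALU retires one fused multiply-add,
# i.e. 2 FP32 operations, per clock cycle.

GPU_CORES = 32
EUS_PER_CORE = 16
ALUS_PER_EU = 8
FLOPS_PER_ALU_PER_CLOCK = 2  # one FMA = multiply + add (assumed)


def total_alus(cores: int, eus_per_core: int, alus_per_eu: int) -> int:
    """Total FP32 ALUs across the whole GPU."""
    return cores * eus_per_core * alus_per_eu


def implied_clock_ghz(tflops: float, alus: int, flops_per_clock: int) -> float:
    """Clock speed (GHz) needed for `alus` ALUs to reach `tflops` peak TFLOPS."""
    return tflops * 1e12 / (alus * flops_per_clock) / 1e9


def percent_gap(a: float, b: float) -> float:
    """How far `a` falls short of `b`, as a percentage of `b`."""
    return (b - a) / b * 100


alus = total_alus(GPU_CORES, EUS_PER_CORE, ALUS_PER_EU)
clock = implied_clock_ghz(10.4, alus, FLOPS_PER_ALU_PER_CLOCK)
gap = percent_gap(10.4, 10.91)  # M1 Max vs. RTX 3060 Mobile peak TFLOPS

print(f"Total ALUs: {alus}")                                   # Total ALUs: 4096
print(f"Implied GPU clock: {clock:.2f} GHz")                   # Implied GPU clock: 1.27 GHz
print(f"Gap to RTX 3060 Mobile (10.91 TFLOPS): {gap:.1f}%")    # ... 4.7%
```

On paper, then, the two GPUs sit within about 5% of each other; peak TFLOPS says nothing about drivers, memory bandwidth, or API overhead, which is why real benchmarks still matter.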

During its presentation, Apple claimed that the M1 Max's GPU (60W) rivals Nvidia's GeForce RTX 3080 Mobile (160W). Apple's SoC reportedly offers equivalent performance at 100W less power. The company also provided a comparison to the 100W variant of the GeForce RTX 3080 Mobile, where the M1 Max showed 40% lower power consumption. For now, we should take Apple's numbers with a grain of salt, since the company provided no context beyond saying it used "select industry-standard benchmarks."
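Apple's two power comparisons reduce to simple arithmetic. The sketch below takes the 60W, 160W, and 100W figures at face value and assumes equal performance, as Apple claimed; it only checks that the "100W less" and "40% lower" statements are consistent with those wattages, not that the underlying claims hold.

```python
# Apple's stated GPU power figures from its M1 Max presentation.
# Equal performance between the chips is Apple's claim, assumed here.
M1_MAX_GPU_W = 60
RTX_3080_MOBILE_W = {"160W variant": 160, "100W variant": 100}


def power_savings(m1_watts: float, rival_watts: float) -> tuple[float, float]:
    """Return (watts saved, percent lower) for the M1 Max versus a rival GPU."""
    saved = rival_watts - m1_watts
    return saved, saved / rival_watts * 100


for name, watts in RTX_3080_MOBILE_W.items():
    saved, pct = power_savings(M1_MAX_GPU_W, watts)
    print(f"vs RTX 3080 Mobile ({name}): {saved:.0f}W less, {pct:.1f}% lower")
```

This prints 100W less (62.5% lower) against the 160W part and 40W less (40.0% lower) against the 100W part, matching both of Apple's statements.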

GFXBench originated as a smartphone benchmark, so its tests aren't well suited to modern graphics cards, and we recommend treating GFXBench results with far more caution than 3DMark results. Furthermore, the M1 Max submission was run on macOS with the Metal API, whereas the GeForce RTX 3080 Mobile and Radeon RX 6800M submissions were run on Windows with the OpenGL API. We've used the offscreen results for comparison.

Apple M1 Max Benchmarks

| Processor | Aztec Ruins Normal Tier (FPS) | Aztec Ruins High Tier (FPS) | Car Chase (FPS) | 1440p Manhattan 3.1.1 Offscreen (FPS) | Manhattan 3.1 (FPS) | Manhattan (FPS) | T-Rex (FPS) | ALU 2 (FPS) | Driver Overhead 2 (FPS) | Texturing (MTexel/s) |
|---|---|---|---|---|---|---|---|---|---|---|
| Apple M1 Max | 503.3 | 194.3 | 298.5 | 398.9 | 816.9 | 1,187.8 | 1,391.2 | 1,073.1 | 398.1 | 235,842 |
| Nvidia GeForce RTX 3080 Mobile | 455.1 | 217.6 | 437.8 | 394.8 | 580.7 | 632.9 | 1,918.0 | 2,887.9 | 172.5 | 221,647 |
| AMD Radeon RX 6800M | 390.9 | 242.0 | 298.7 | 363.5 | 389.5 | 404.1 | 1,298.2 | 2,650.4 | 115.8 | 234,201 |
| Apple M1 | 203.6 | 77.5 | 176.5 | 130.9 | 272.4 | 403.9 | 649.5 | 298.6 | 245.1 | 71,098 |

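Apple touted that the M1 Max's graphics performance was up to four times faster than the original M1's. The results showed that Apple's claim held up reasonably well in some workloads but not in every one: the M1 Max's lead over the M1 ranged from about 1.6x in Driver Overhead 2 to about 3.6x in ALU 2.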
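The M1 Max-to-M1 ratios behind that comparison can be reproduced directly from the table. The sketch below divides each M1 Max score by the matching M1 score (higher is better in every test, so a ratio above 1 means the M1 Max is faster) and reports the range; the data are the table values, and nothing beyond simple division is assumed.

```python
# GFXBench 5 results copied from the table (FPS, except Texturing in MTexel/s).
TESTS = [
    "Aztec Ruins Normal Tier", "Aztec Ruins High Tier", "Car Chase",
    "1440p Manhattan 3.1.1 Offscreen", "Manhattan 3.1", "Manhattan",
    "T-Rex", "ALU 2", "Driver Overhead 2", "Texturing",
]
M1_MAX = [503.3, 194.3, 298.5, 398.9, 816.9, 1187.8, 1391.2, 1073.1, 398.1, 235842]
M1 = [203.6, 77.5, 176.5, 130.9, 272.4, 403.9, 649.5, 298.6, 245.1, 71098]


def speedups(new: list[float], old: list[float]) -> dict[str, float]:
    """Per-test ratio of the new chip's score to the old chip's score."""
    return {test: n / o for test, n, o in zip(TESTS, new, old)}


ratios = speedups(M1_MAX, M1)

# Print tests from smallest to largest gain.
for test, ratio in sorted(ratios.items(), key=lambda kv: kv[1]):
    print(f"{test:<32} {ratio:.2f}x")

lo, hi = min(ratios.values()), max(ratios.values())
at_least_4x = sum(r >= 4 for r in ratios.values())
print(f"Range: {lo:.2f}x to {hi:.2f}x; tests at 4x or more: {at_least_4x}")
```

The final line reports a range of 1.62x to 3.59x, with no test reaching Apple's "up to" 4x figure in this particular run.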

The M1 Max looked pretty good beside the GeForce RTX 3080 Mobile and Radeon RX 6800M. Apple's chip outperformed Nvidia's and AMD's GPUs in most of the tests, but it trailed in others, most notably ALU 2, where it scored roughly 60% lower than both. The M1 Max's power efficiency was its most impressive feat, considering that the GeForce RTX 3080 Mobile and Radeon RX 6800M carry TDP ratings of 160W and 145W, respectively, against Apple's 60W figure for the M1 Max's GPU.
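To put the head-to-head comparison in numbers, the sketch below counts how many of the ten tests the M1 Max wins against each rival, finds its largest deficit, and computes a rough FPS-per-watt figure for Aztec Ruins High Tier, the test where it trailed both. Scores come from the table; the power figures are assumptions: Apple's own 60W claim for the M1 Max GPU and the 160W and 145W TDP ratings, which are ceilings rather than measured draw during the benchmark. Since the runs also used different operating systems and APIs, treat the output as illustrative only.

```python
# Scores copied from the table (FPS, except Texturing in MTexel/s).
TESTS = [
    "Aztec Ruins Normal Tier", "Aztec Ruins High Tier", "Car Chase",
    "1440p Manhattan 3.1.1 Offscreen", "Manhattan 3.1", "Manhattan",
    "T-Rex", "ALU 2", "Driver Overhead 2", "Texturing",
]
RESULTS = {
    "Apple M1 Max": [503.3, 194.3, 298.5, 398.9, 816.9, 1187.8, 1391.2, 1073.1, 398.1, 235842],
    "GeForce RTX 3080 Mobile": [455.1, 217.6, 437.8, 394.8, 580.7, 632.9, 1918.0, 2887.9, 172.5, 221647],
    "Radeon RX 6800M": [390.9, 242.0, 298.7, 363.5, 389.5, 404.1, 1298.2, 2650.4, 115.8, 234201],
}
# Assumed power figures: Apple's 60W claim and the cited TDP ratings.
# None of these is a measured power draw during the GFXBench runs.
POWER_W = {"Apple M1 Max": 60, "GeForce RTX 3080 Mobile": 160, "Radeon RX 6800M": 145}


def head_to_head(gpu: str, rival: str) -> tuple[int, float, str]:
    """Return (tests won by gpu, worst % deficit vs rival, test where it occurred)."""
    deltas = [(a / b - 1) * 100 for a, b in zip(RESULTS[gpu], RESULTS[rival])]
    wins = sum(d > 0 for d in deltas)
    worst_delta, worst_test = min(zip(deltas, TESTS))
    return wins, worst_delta, worst_test


def fps_per_watt(gpu: str, test: str) -> float:
    """Score in `test` divided by the assumed power figure for `gpu`."""
    return RESULTS[gpu][TESTS.index(test)] / POWER_W[gpu]


for rival in ("GeForce RTX 3080 Mobile", "Radeon RX 6800M"):
    wins, worst, where = head_to_head("Apple M1 Max", rival)
    print(f"M1 Max vs {rival}: ahead in {wins}/{len(TESTS)} tests, "
          f"worst deficit {worst:.0f}% ({where})")

for gpu in RESULTS:
    print(f"{gpu}: {fps_per_watt(gpu, 'Aztec Ruins High Tier'):.2f} FPS/W "
          "in Aztec Ruins High Tier")
```

Under these assumptions the M1 Max leads in 6 of 10 tests against the RTX 3080 Mobile and 7 of 10 against the RX 6800M, and even in Aztec Ruins High Tier, where it loses outright, it delivers roughly twice the frames per watt of either rival.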

Naturally, we'll have to wait for more benchmarks to see whether Apple's M1 Max lives up to the company's claims. It's fine if it doesn't beat the latest and greatest GPUs from Nvidia or AMD, since Apple didn't design the M1 Max for gaming. Instead, the 5nm SoC is tailored to give professionals potent CPU and graphics performance on the go in the new MacBook Pros.


Zhiye Liu is a news editor, memory reviewer, and SSD tester at Tom’s Hardware. Although he loves everything that’s hardware, he has a soft spot for CPUs, GPUs, and RAM.


King_V: Pending actual gaming benchmarks, this definitely has me wishing that Apple were more open with their chip and willing to sell it as something that can go into a socketed motherboard.

Heat_Fan89: These specs are all well and fine, but they won't change anything regarding the top publishers bringing their games to the Mac. The big publishers have so much invested in games that some big-budget games are now pushing half a billion dollars. Those games are going to the platforms with the largest audiences, and those platforms are the consoles and PCs running Windows.

If Apple were to get serious about wanting those big-budget AAA games, they'll need to develop middleware to port those games to hardware running Apple Silicon. It can be done, but it will require that they open their wallet and then convince the top publishers why it makes business sense to bring their games to the Mac. So far, they only show interest on the TV side, and their gaming interest is in Apple Arcade.

drtweak, replying to Heat_Fan89:

True, but with most App Store apps already compatible with the M1, I don't think they will care. Unless Apple makes them go through the App Store as well (I wouldn't be surprised), why would developers go through the trouble of porting a game to one of the smallest desktop gaming platforms and then have to share the profits? Sounds like sticking with Windows and Linux is the way to go for them.

kdawg92, replying to drtweak:

It'd make more sense if Windows and Linux started supporting the M1 Max so you could dual-boot. Then opening up DX or the other gaming APIs would be much easier.

But I think the point is being missed here: an ARM APU built for the consumer market (granted, the rich consumer market) just went head to head with a dedicated GPU. That's pretty cool. Will it bring enough attention to finally say "oh yeah, ARM is now in competition" (like they've been touting for decades)? Ehhh... maybe.

Eximo: Just wait for TSMC to open up that node to AMD and Intel, and perhaps Nvidia. It should be interesting, to say the least.

JamesJones44, replying to drtweak:

The App Store is not required on macOS. You can load from anywhere, even Steam.

macOS's market share has steadily risen over the past decade from under 5% to 10% worldwide, while Windows' share has fallen 15%. In the US it's more drastic: macOS is up to 26% share and Windows is down to 61%. It's not a stretch to think that if Apple does start to produce hardware that can outperform Win-A-Tel, that shift will accelerate and draw in game developers. It's also worth noting that among developers in general, the market share is roughly 60% Windows and 40% macOS, a massive increase from 10 years ago.

I'm not saying game devs will jump on board overnight, but it's not a stretch to believe they will stop ignoring the platform if they think they can reach a large enough audience.

JamesJones44, replying to Eximo:

The issue is that Apple paid a large sum for exclusive access to TSMC's latest nodes. As everyone transitions to 5nm, the M2, or whatever Apple ends up calling it, will be on the 3nm node. That will keep Apple ahead of those companies in node size until that agreement ends or someone catches up with and/or passes TSMC.

hotaru.hino: GFXBench shouldn't be used, to be honest. The problem is that you can only use Metal on Apple's OSes, and GFXBench states it's using Metal.

On the Windows side of things, Aztec is the only test that uses DX12 and Vulkan. The others use OpenGL or DirectX 11, except on Apple's OS, where Metal is used. This right here is not even a fair comparison, as Metal is a low-overhead API.

See https://gfxbench.com/benchmark.jsp

Also, there's the question of just how much of a graphics load GFXBench is putting on the GPU. Frame rates in the 200+ FPS range sound more like a CPU-bound test.

artk2219, replying to Eximo, who wrote: "Hmm, Apple game console... wouldn't surprise me if we see that in a short while."

They tried that in the '90s with the Pippin, and it didn't work out well, since Apple's "features" don't mesh well with a console audience. Not everyone wants an expensive console with a limited supply of games and hardware restrictions.