Computational Storage Comes One Step Closer with Launch of Official Specs

Processors in your SSDs, baby

Computational storage has its first set of official specifications following the publication of version 1.0 of the Storage Networking Industry Association’s Computational Storage Architecture and Programming Model. As reported by The Register, the model is aimed at developing this promising new tech by allowing different manufacturers’ designs to operate together.

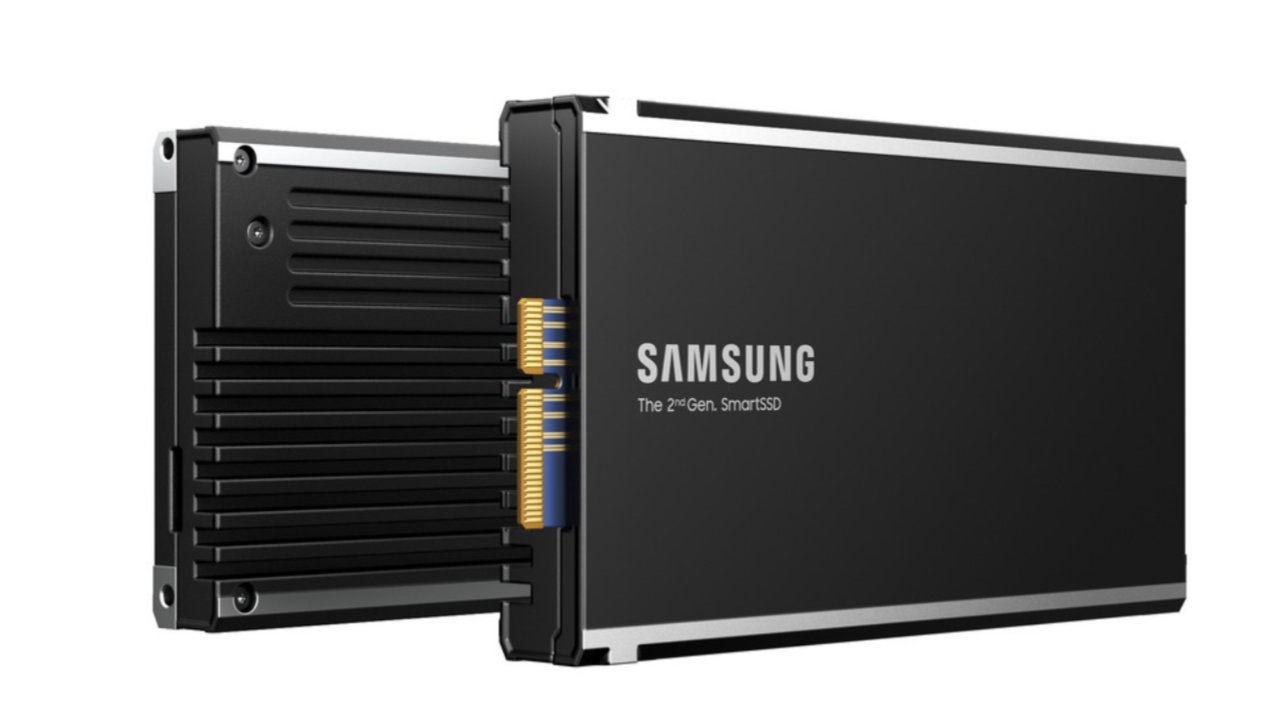

‘Computational storage’ is a new buzzword that doesn’t exactly mean we’re going to turn SSDs into processors. Rather, processing and storage will be more tightly bound together, with processors embedded in the storage to share the load of storage-heavy tasks. At the moment, this means an FPGA running encryption, compression and erase tasks under the CPU’s supervision. In the near future, it could mean an Arm CPU running Linux, left to its own devices.

Products already shipping include Samsung’s SmartSSD CSD (computational storage drive, pictured above), which uses Xilinx Versal Adaptive SoCs from AMD and, according to Samsung’s own figures, can slash CPU utilization by up to 97 percent. Scaleflux also has a product, the third-gen CSD3000, which uses an eight-core Arm chip.

What computational storage has lacked until now is standardization. The Computational Storage Technical Working Group at the SNIA (Storage Networking Industry Association) has been working on the new specs since 2018. Now that they’re here, hardware vendors can begin building their own interoperable computational storage systems, and software developers can get stuck into drivers, with common terminology and a discovery process that tells a computer faced with a new CSD how to begin interfacing with its resources.

The specifications themselves come to a hefty 71-page PDF, but for those looking for lighter reading the SNIA has published a blog post explaining the important parts of the new computing model.

“Having an industry developed reference architecture that hardware and application developers refer to is an important attribute of the 1.0 specification,” wrote SNIA Computational Storage Architecture and Programming Model editor Bill Martin in the post, “especially as we get into cloud to edge deployment where standardization has not been as early. Putting compute where data is at the edge – where data is being driven – gives the opportunity to provide normalization and standardization that application developers can refer to contributing computational storage solutions to the edge ecosystem.”


Ian Evenden is a UK-based news writer for Tom’s Hardware US. He’ll write about anything, but stories about Raspberry Pi and DIY robots seem to find their way to him.