Geomerics Whitepaper Explains Lighting's Importance To Immersive VR

Geomerics published a whitepaper exploring how important lighting is to creating immersive virtual reality (VR) experiences. The paper features interviews with people from nDreams, Oculus Story Studio, and ARM Enlighten about how each company approaches lighting in VR. Seeing is believing, after all, and nothing messes with a person's suspension of disbelief more than weird lighting that doesn't behave quite the way people expect.

nDreams: Lighting Is Critical To Immersion

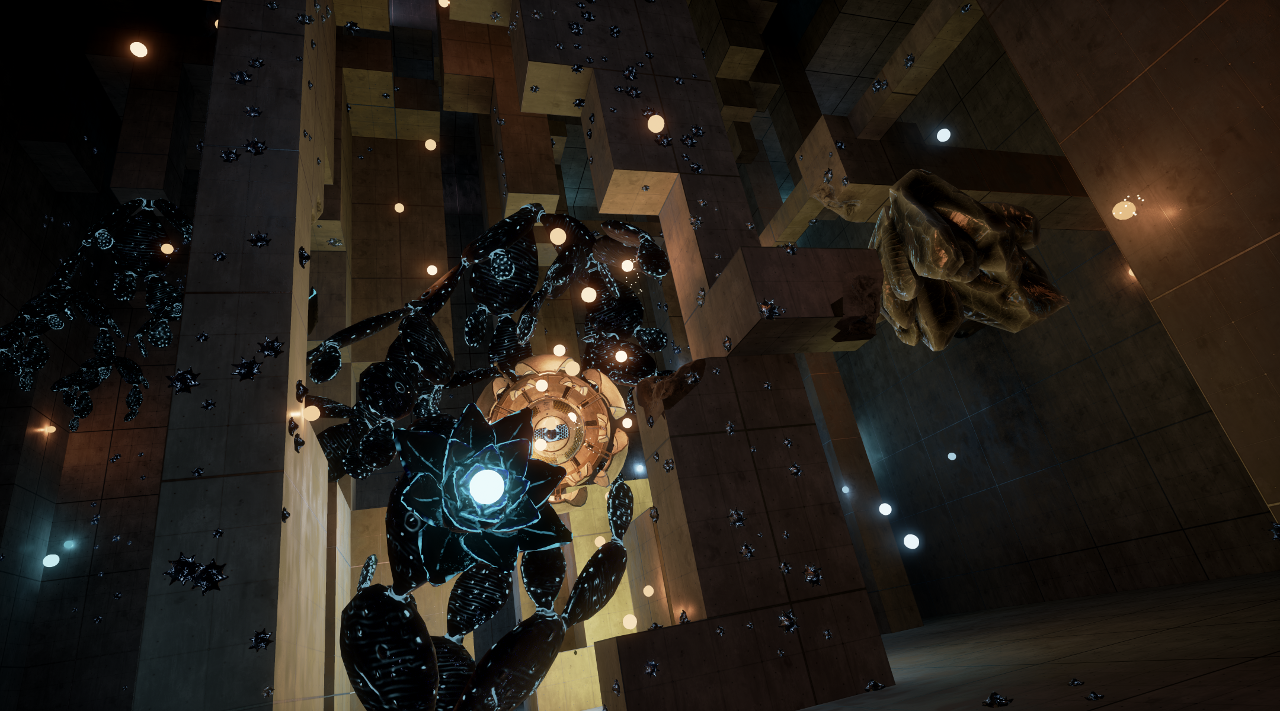

Not content to support just one VR platform, nDreams has released The Assembly for Oculus Rift, HTC Vive, and PlayStation VR. The game tasks players with getting to the bottom of a vague conspiracy involving secret experiments. It's meant to be an easy introduction to VR for newcomers--the game is all about letting people explore their environments, learn how to move around them, and solve puzzles while also making a few moral choices along the way. Geomerics interviewed senior programmers Steven Cannavan and Jamie Holding to learn how nDreams approached lighting with its VR thriller.

Cannavan said that lighting is "incredibly important" to immersing people in VR experiences. "The last time I checked," he said, "the real world was lit with effectively real time lighting!" VR experiences don't have to match the real world exactly--one would hope a secret group known as the Assembly isn't conducting underground experiments here in the physical realm--but they do have to come close for people to lose themselves in the virtual world. People expect the way sound and light behave to be consistent between the real world and immersive VR experiences, as Holding explained:

With dynamic lighting you need the environment to react properly. If you’re in an outside space and you hear bird noise, when you look up you expect to see birds. If you hear atmospheric noise from wildlife or whatever, if you can’t see what caused it, it can break that immersion very quickly. In the same way, when introducing a spotlight into a scene – if you look up and can’t see a light source, you will be immediately brought out of the action. All objects in the world affect light in some way. They cause shadows; they cause light to bounce around the room in a certain way; they can be affected by other things. If you haven’t got effective lighting then it can completely destroy any immersion in VR.

Oculus Story Studio: Lighting Has Its Trade-Offs

Oculus Story Studio is the in-house film team at Facebook's VR company. It's released several videos to critical acclaim since its creation in 2014: Lost debuted at the Sundance Film Festival in January 2015, Henry won an Emmy Award in 2016, and Dear Angelica will be part of the Sundance Film Festival's VR-focused New Frontier program later in January. Oculus takes these projects seriously--it even hired directors and animators from Pixar and DreamWorks to work on Story Studio projects with the hope of proving to the world that VR has the potential to change the way people experience films.

Max Planck, the group's technical founder, told Geomerics that Oculus Story Studio has approached lighting differently for each project. Lost used lighting to hide low-resolution assets far away from the main action; Henry embraced an animated style that used approximations of light instead of dynamic light sources; and the illustrative style of Dear Angelica allowed the team to avoid using any lighting at all. Planck said these approaches resulted from the current limitations of VR hardware and software, which require developers to decide what they want to prioritize.

Here's some of what Planck said about lighting in VR:

What’s cool about all of this is that it feels like the kind of problems early visual effects had initially. You feel like a pioneer! At that time you couldn’t fit a lot into the two gigabyte limit. If you added too much then you would run out [of] memory, time, or even break the renderer. Right now real time engines can take a lot, but as soon as you add like three or four dynamic lights then you start getting framerates that are above the 11 millisecond limit. [...] A lot of VR content development is the art of practicing constraint when talking about lighting.
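
Planck's "11 millisecond limit" lines up with the 90 Hz refresh rate of the Oculus Rift and HTC Vive: each frame has to be finished in 1000 / 90, or roughly 11.1 milliseconds. Here is a minimal Python sketch of that arithmetic. The 8 ms base scene cost and the 1 ms cost per dynamic light are invented for illustration and are not figures from the whitepaper; real lighting costs are not linear and vary by engine and scene.

```python
import math


def frame_budget_ms(refresh_hz: float) -> float:
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / refresh_hz


def max_dynamic_lights(base_ms: float, per_light_ms: float,
                       refresh_hz: float = 90.0) -> int:
    """Dynamic lights that fit in the frame budget.

    Assumes a simple linear cost model (hypothetical): every light adds
    the same fixed cost on top of the base scene cost.
    """
    headroom = frame_budget_ms(refresh_hz) - base_ms
    if headroom <= 0:
        return 0
    return math.floor(headroom / per_light_ms)


budget = frame_budget_ms(90.0)
# Hypothetical costs: 8 ms for the scene, 1 ms per dynamic light.
lights = max_dynamic_lights(base_ms=8.0, per_light_ms=1.0)

print(f"{budget:.1f} ms per frame at 90 Hz")  # prints "11.1 ms per frame at 90 Hz"
print(f"{lights} dynamic lights fit")         # prints "3 dynamic lights fit"
```

Under those made-up costs a fourth light would push the frame past the budget, which is the kind of trade-off Planck describes.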

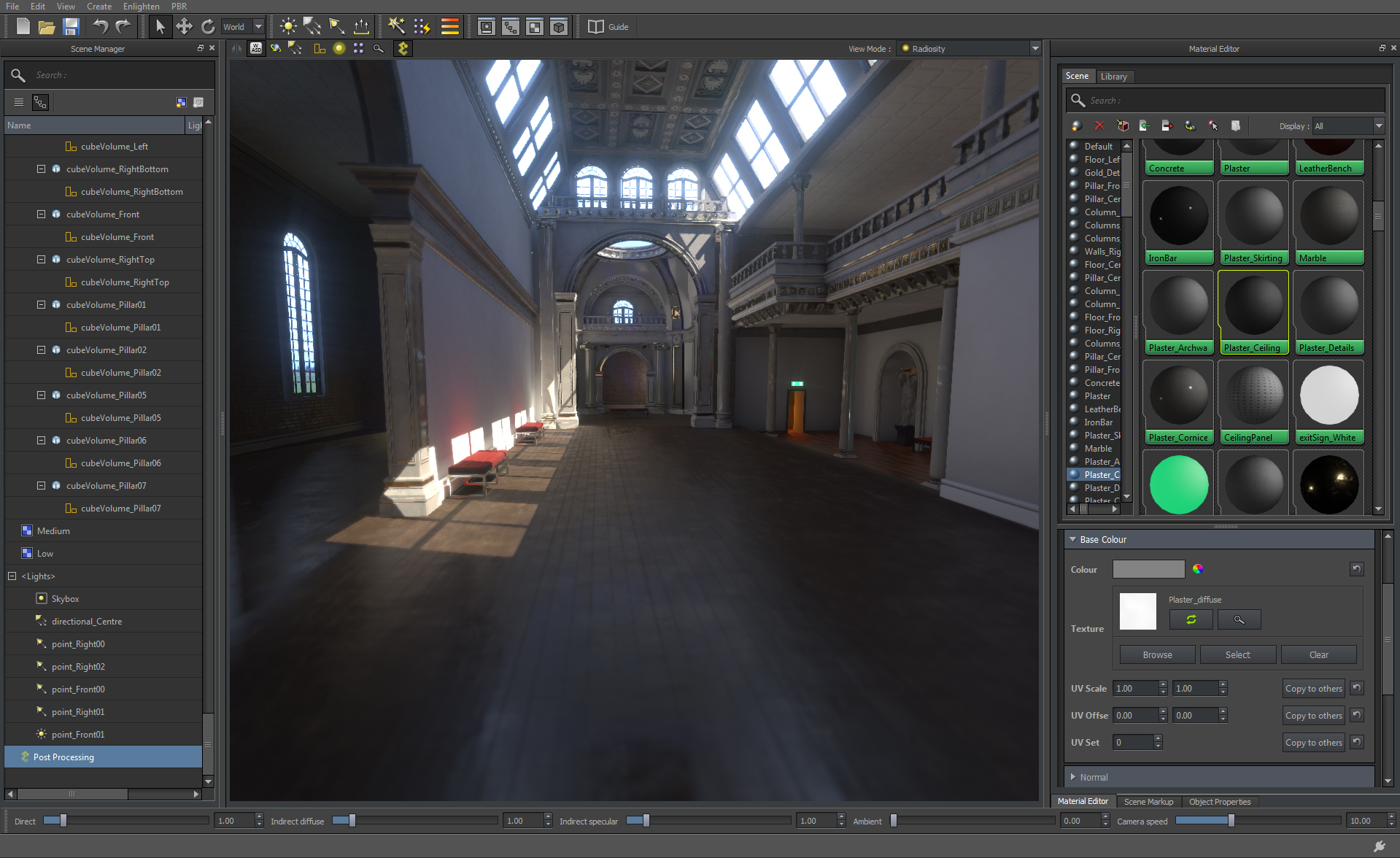

ARM Enlighten: One Solution To The Problem

Geomerics was acquired by ARM in 2013, so the interview with ARM Enlighten general manager Chris Porthouse must've been the easiest to get. Enlighten was made to bring dynamic lighting to VR experiences without affecting performance. When the third version of the solution debuted in March 2015, it brought with it more controls over where a light comes from, better reflections, and other tools used to create more believable lighting. The goal is to make ARM central to VR, whether it's via processors or software like Enlighten, and whitepapers like this one help the company in that regard.

Porthouse echoed Cannavan, Holding, and Planck in saying that lighting is critical to immersive VR experiences and difficult to do right. "I think dynamic lighting is essential to VR," he said. "It facilitates a more accurate representation of the real world where interaction with the lighting environment happens whenever you pick up an object, turn on a light, or move from one place to another." Naturally, he believes Enlighten offers the best tool for creating those interactive and dynamic light sources. Here's his thinly veiled pitch to VR developers:

VR offers so much opportunity to challenge conventional gameplay and graphics; it is a new and exciting platform and developers need to be enabled to innovate in as unconstrained a way as possible despite the obvious performance limits of the platform. While real-time lighting has typically been seen as too computationally expensive when designing a game, it doesn’t have to be. It is possible to deploy dynamic light effects without impacting frame rate – and virtual reality experiences will be all the richer if developers design with this in mind.

Lighting The Path

Despite the plentiful conflicts of interest, Geomerics' paper is a good starting point for anyone wondering how developers plan to make VR experiences more immersive. Right now the biggest limitations are technical: VR platforms have only so much power, and devs fear that focusing too much on both high-resolution art and realistic lighting could lead to performance issues. Introducing more capable hardware and software could make this a moot point, much like the ever-growing power of game consoles from the Nintendo Entertainment System to the PS4 Pro did for mainstream video games.

Perhaps then it will be easier to suspend disbelief and let VR experiences close the gap between the real and virtual worlds.

Nathaniel Mott is a freelance news and features writer for Tom's Hardware US, covering breaking news, security, and the silliest aspects of the tech industry.

-

anbello262: For VR, I think that HDR is a must for many games, especially when they are dark/horror games. Remember the (exaggerated) focus/brightness auto-adjust from the first Mirror's Edge, trying to mimic our eyes? What if we could just let our eyes do it, with some seriously natural-feeling brightness?

But I sincerely believe that lighting has a VERY long way to go. We still have some pretty lackluster implementations of lighting in modern non-VR games, and ray tracing is probably quite far from what it should be to feel natural. I don't recall seeing a game where a white wall would slightly reflect your bright red shirt when standing next to it, as a basic example (I have only seen it done on static elements of the game, not moving players/monsters. If you know examples, please let me know and I will happily play them).

I have only seen some ray tracing on specular reflective objects, and even there it is not the best most of the time.

I would guess that it maybe has a bigger impact than 4K/high resolution on the "feel" of the game.

I understand that it has a huge performance impact, and therefore it is nowhere near practical for VR, but when not even normal games are advanced enough, I don't really see realistic lighting in the near future of VR.