Google Accelerating Video Understanding Research With 'YouTube-8M' Dataset

Google announced the release of YouTube-8M, a dataset of eight million labeled videos intended to accelerate machine learning research on video understanding.

ImageNet For Videos

Many technology companies have used the ImageNet dataset of millions of labeled images to benchmark the performance of their chips and algorithms. This has helped them improve their technology over the years, as they tried to become better and better at classifying objects in static images.

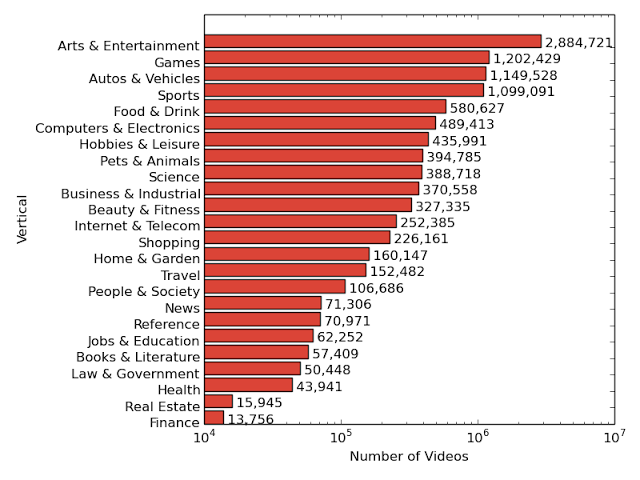

Google now aims to do the same for videos, which is why it’s releasing the YouTube-8M dataset. The dataset contains eight million YouTube URLs, or the equivalent of 500,000 hours of video, as well as 4,800 video labels from Google’s own Knowledge Graph.

The largest previous video dataset, Sports-1M, contained one million YouTube video URLs and roughly 500 labels, so YouTube-8M increases the number of videos by a factor of eight and the number of labels by almost an order of magnitude. This larger and more varied training set gives researchers’ neural networks more to learn from, and with it the opportunity to become more accurate.
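As a quick check of that comparison, the short Python sketch below computes the scale-up from the figures quoted above. The dictionary and function names are illustrative only, and the label count for Sports-1M is the rounded figure used in this article.

```python
# Figures as quoted in the article (rounded).
sports_1m = {"videos": 1_000_000, "labels": 500}
youtube_8m = {"videos": 8_000_000, "labels": 4_800}


def scale_factors(old, new):
    """Return how many times larger `new` is than `old`, per field."""
    return {key: new[key] / old[key] for key in old}


factors = scale_factors(sports_1m, youtube_8m)
print(factors)  # {'videos': 8.0, 'labels': 9.6}
```

Both factors fall just short of 10x, which is why "almost an order of magnitude" is a fair summary.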

Challenges

Google faced several challenges in creating the dataset. One is that video is harder to annotate manually than images, simply because it takes more time to watch a video and figure out what it’s about. To work around this, Google relied on YouTube’s machine-generated labels, which the company believes are accurate enough to be useful for benchmarking and research purposes.

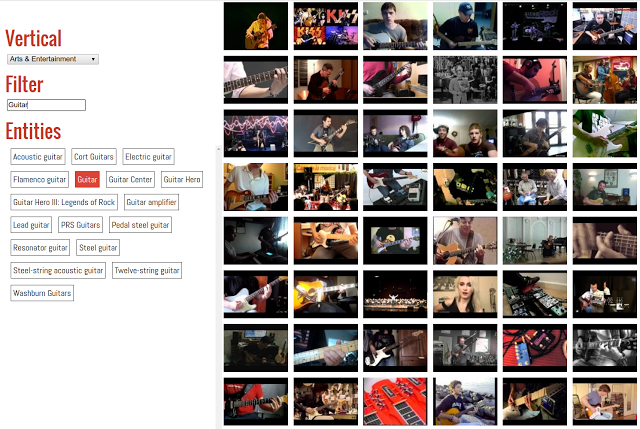

To ensure the videos were of high enough quality, the company chose only videos that had at least 1,000 views. It also used other automated tools to determine whether the “entities” in the videos were easily observable.

Video is also much more computationally intensive to work with than still images, which created a second challenge for Google. In raw form, the 500,000 hours of video in the YouTube-8M dataset would require about a petabyte (PB) of storage and dozens of CPU-years' worth of processing. That would put the dataset out of reach for most potential researchers, including students.
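To put the petabyte figure in perspective, here is a rough back-of-the-envelope calculation in Python. It assumes decimal units (1 PB = 10^15 bytes) and spreads the storage evenly across all 500,000 hours, so the result is only an average, not a statement about how any individual video is encoded.

```python
# Figures quoted in the article; decimal units are an assumption.
PETABYTE = 10**15          # bytes
total_hours = 500_000      # hours of video in the dataset

bytes_per_hour = PETABYTE / total_hours
gb_per_hour = bytes_per_hour / 10**9                  # gigabytes per hour of video
mbit_per_second = bytes_per_hour * 8 / 3600 / 10**6   # implied average bitrate

print(f"{gb_per_hour:.1f} GB per hour, about {mbit_per_second:.1f} Mbit/s")
```

That works out to around 2 GB per hour of footage, which is in the range of ordinary compressed online video and shows that the petabyte estimate comes from sheer volume rather than unusually large files.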


To solve this problem, Google pre-processed the videos and extracted frame-level features using Inception-V3, a publicly available deep learning model trained on ImageNet. The extracted features were then further compressed to fit onto a 1.5TB drive, which makes it possible for just about anyone to download the dataset over the internet. New deep learning models can then be trained on it in less than a day using the TensorFlow deep learning framework and a GPU.
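The size of that reduction can be estimated from the numbers in this article alone. The Python sketch below again assumes decimal units and treats every video as having the average length, so the per-video and per-second figures are rough averages rather than properties of the actual files.

```python
# Figures quoted in the article; decimal units are an assumption.
PETABYTE = 10**15
TERABYTE = 10**12

raw_bytes = 1 * PETABYTE          # estimated size of the raw video
feature_bytes = 1.5 * TERABYTE    # size of the compressed features
num_videos = 8_000_000
total_hours = 500_000

reduction = raw_bytes / feature_bytes               # how many times smaller
bytes_per_video = feature_bytes / num_videos        # average feature budget per video
avg_seconds = total_hours * 3600 / num_videos       # average video length in seconds
bytes_per_second = bytes_per_video / avg_seconds    # feature bytes per second of video

print(f"reduction: about {reduction:.0f}x")
print(f"per video: {bytes_per_video / 1000:.1f} KB for {avg_seconds:.0f} s of footage")
print(f"per second of video: about {bytes_per_second:.0f} bytes")
```

In other words, the pre-computed features are several hundred times smaller than the raw footage, averaging well under a kilobyte per second of video.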

Google believes that the YouTube-8M dataset will significantly accelerate research on video understanding, as it enables researchers everywhere, including students, to work with large datasets on their own computers. The company also published a technical report alongside the announcement.

Lucian Armasu is a Contributing Writer for Tom's Hardware US. He covers software news and the issues surrounding privacy and security.