Huawei Seeks Independence From the US With RISC-V and Ascend Chips

Huawei has launched its 7nm Ascend 910 artificial intelligence chip for data centers together with a new comprehensive AI framework, MindSpore. The announcement comes as Huawei faces pressure from the US government, and the company says it may respond by adopting the open-source RISC-V architecture.

Ascend 910 and MindSpore

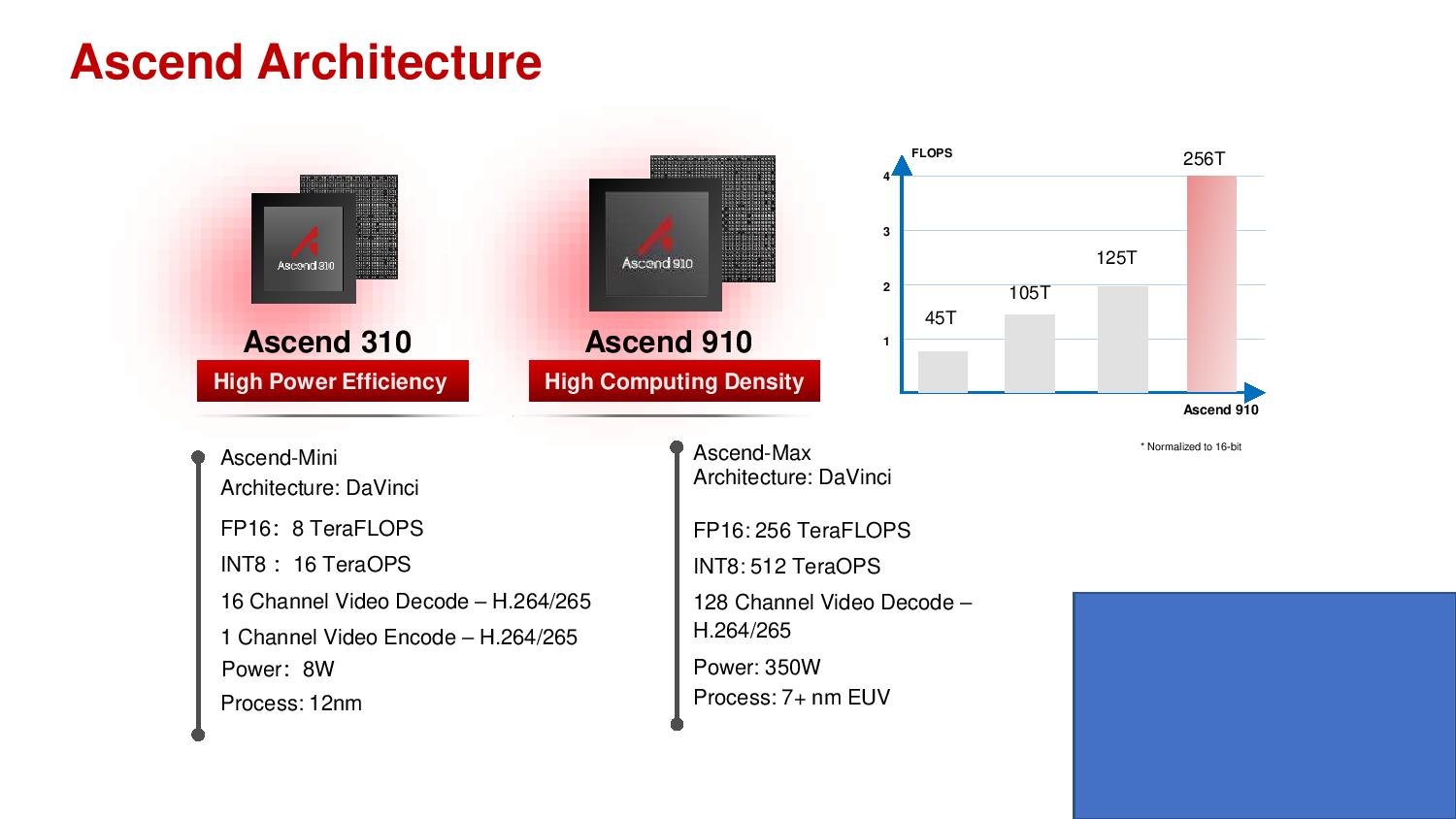

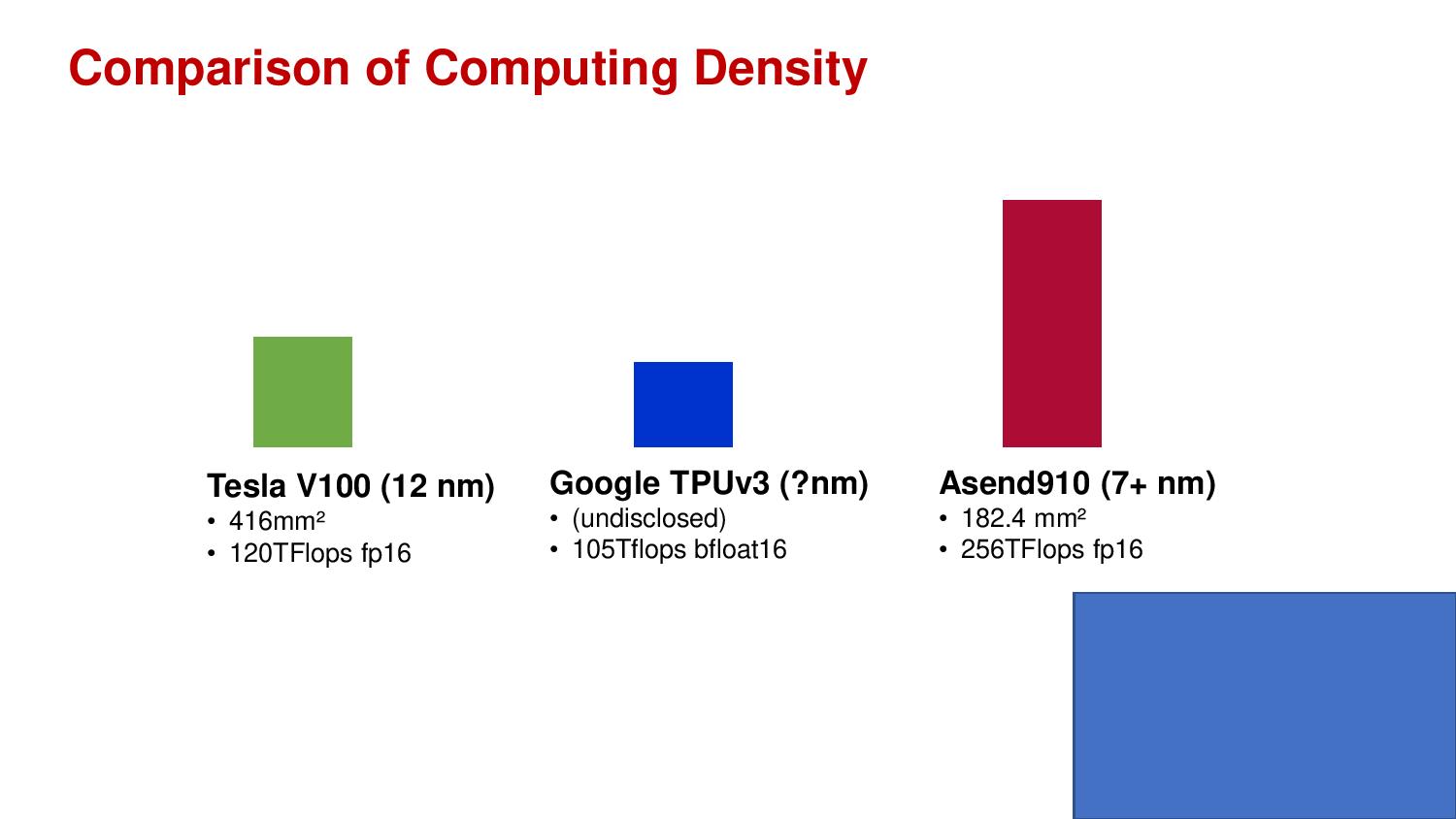

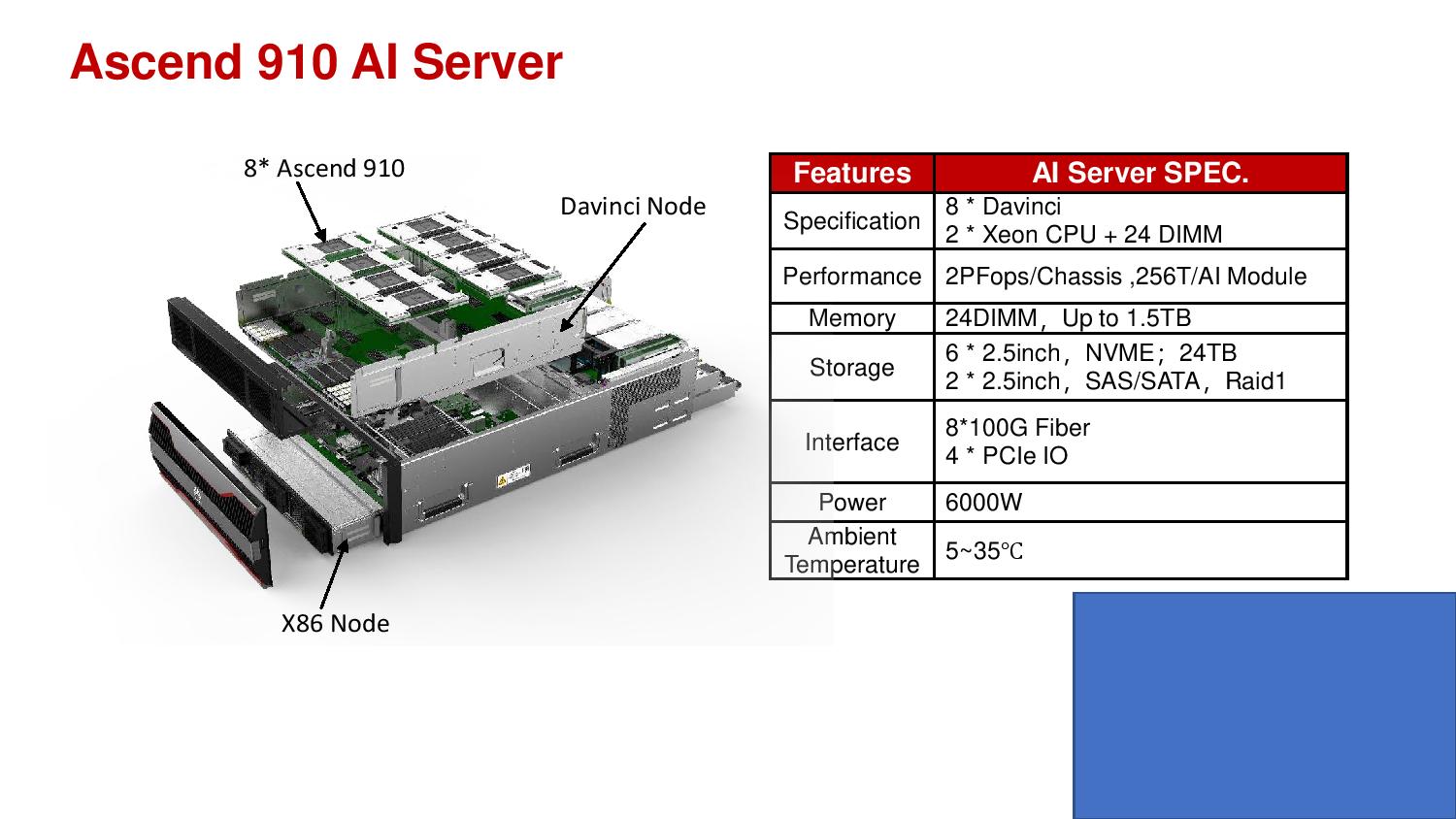

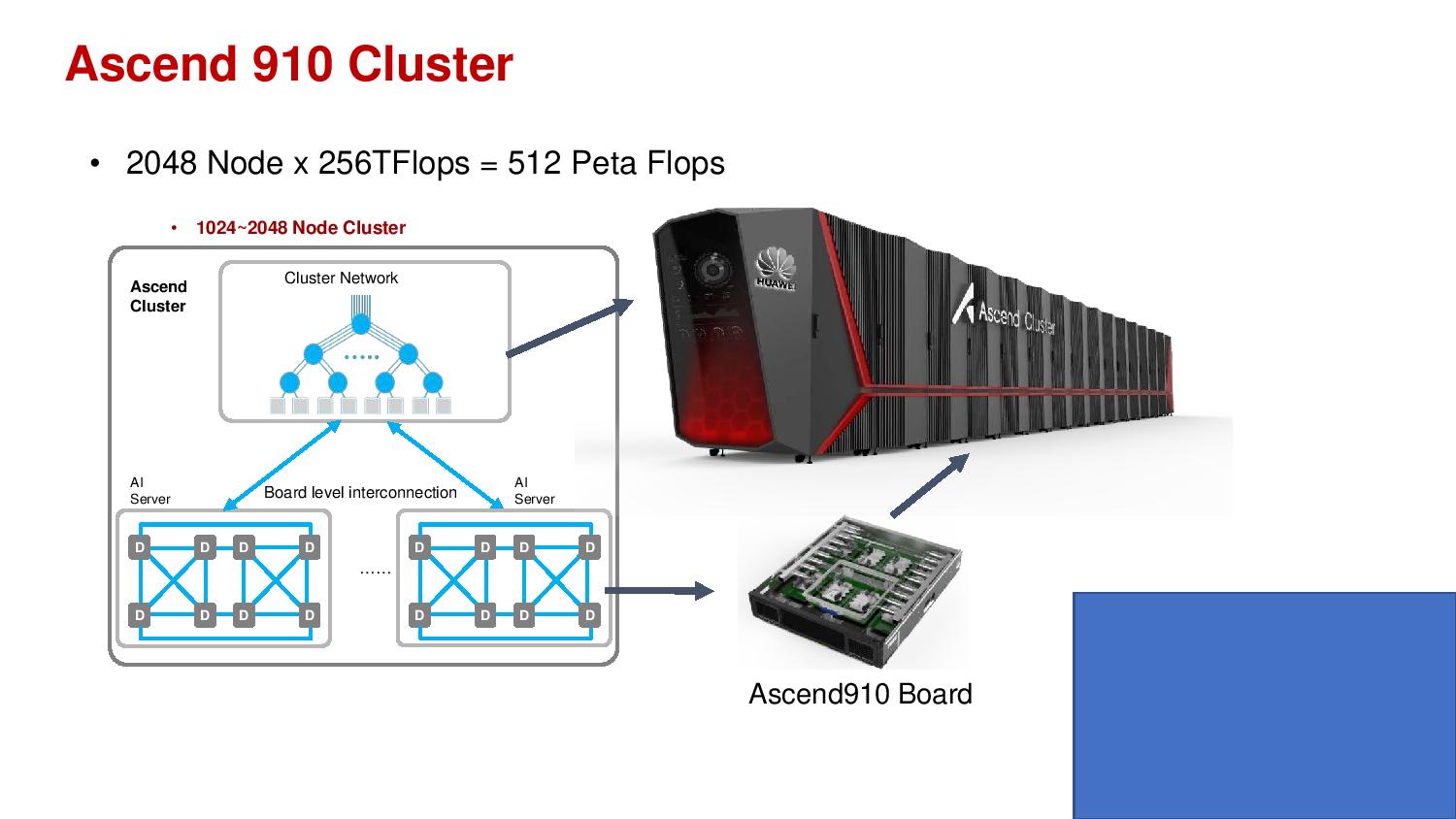

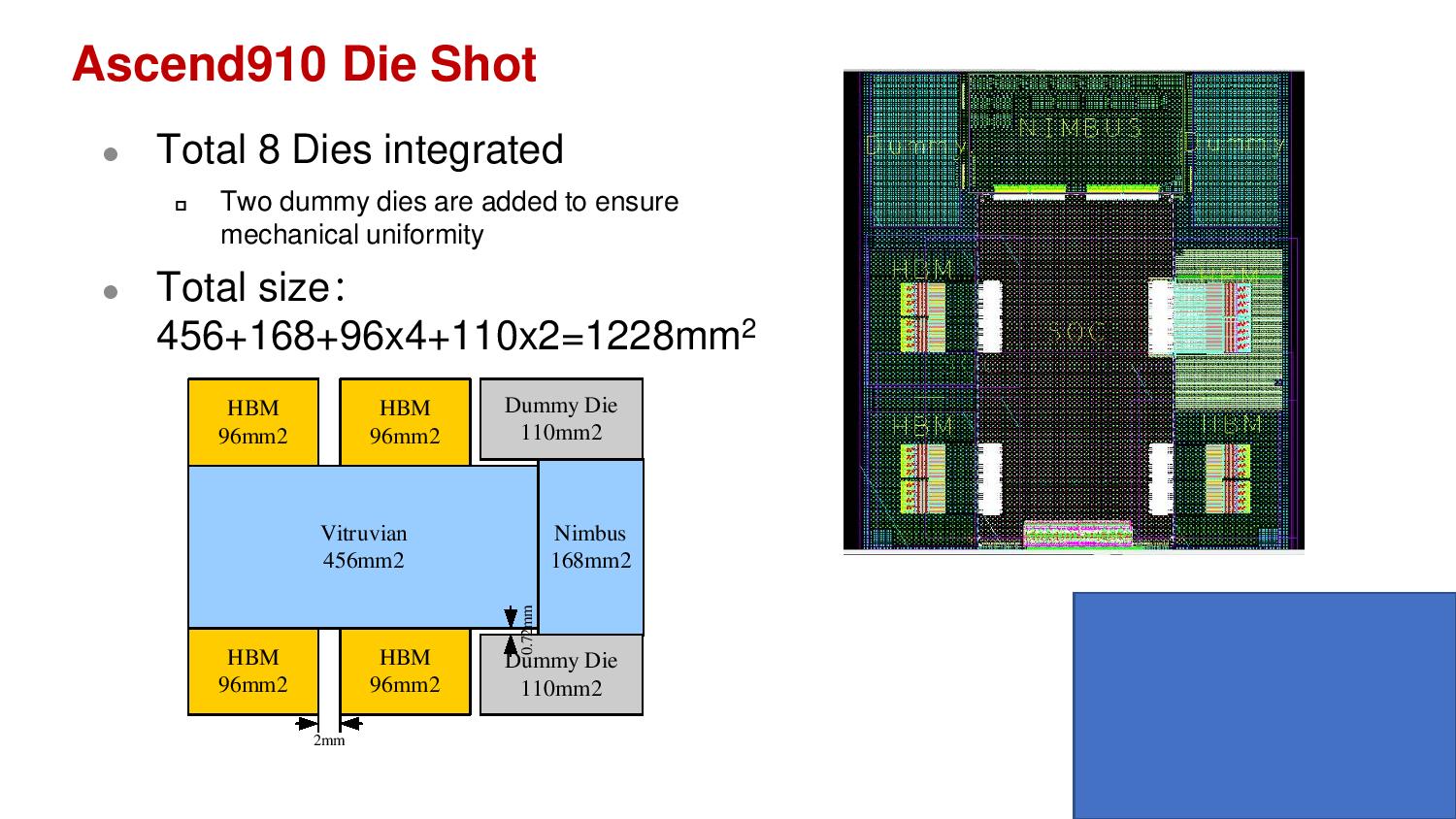

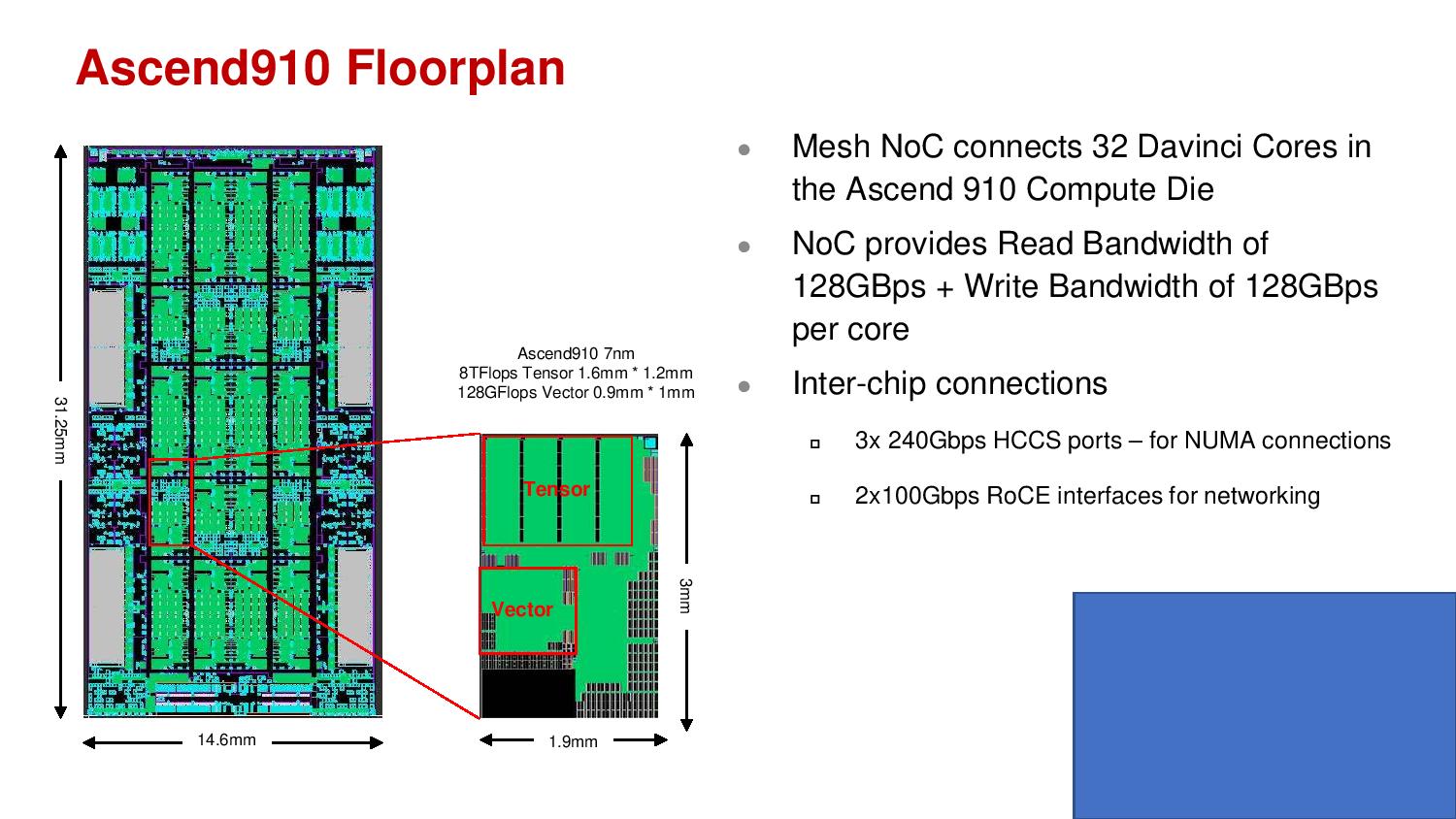

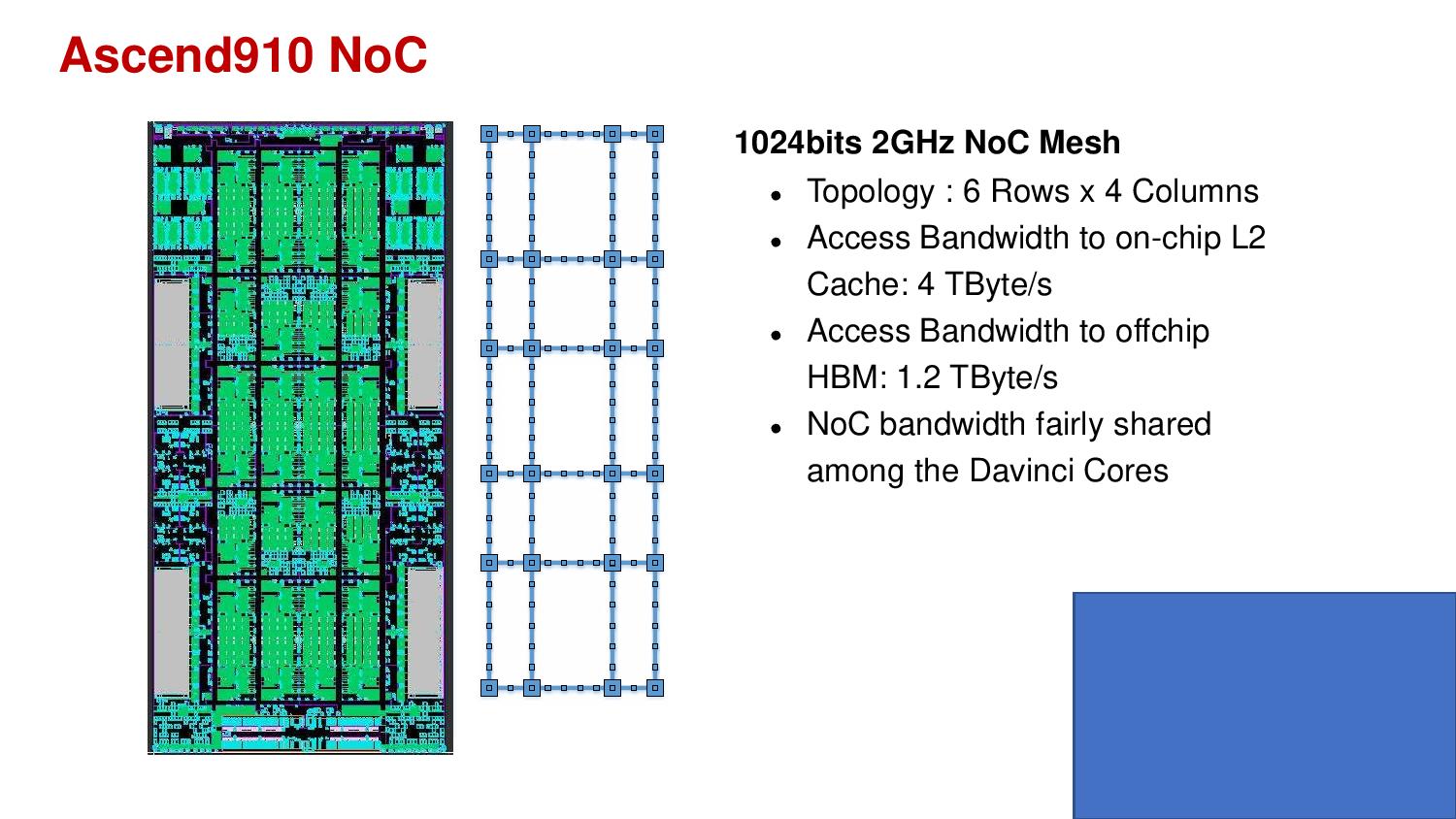

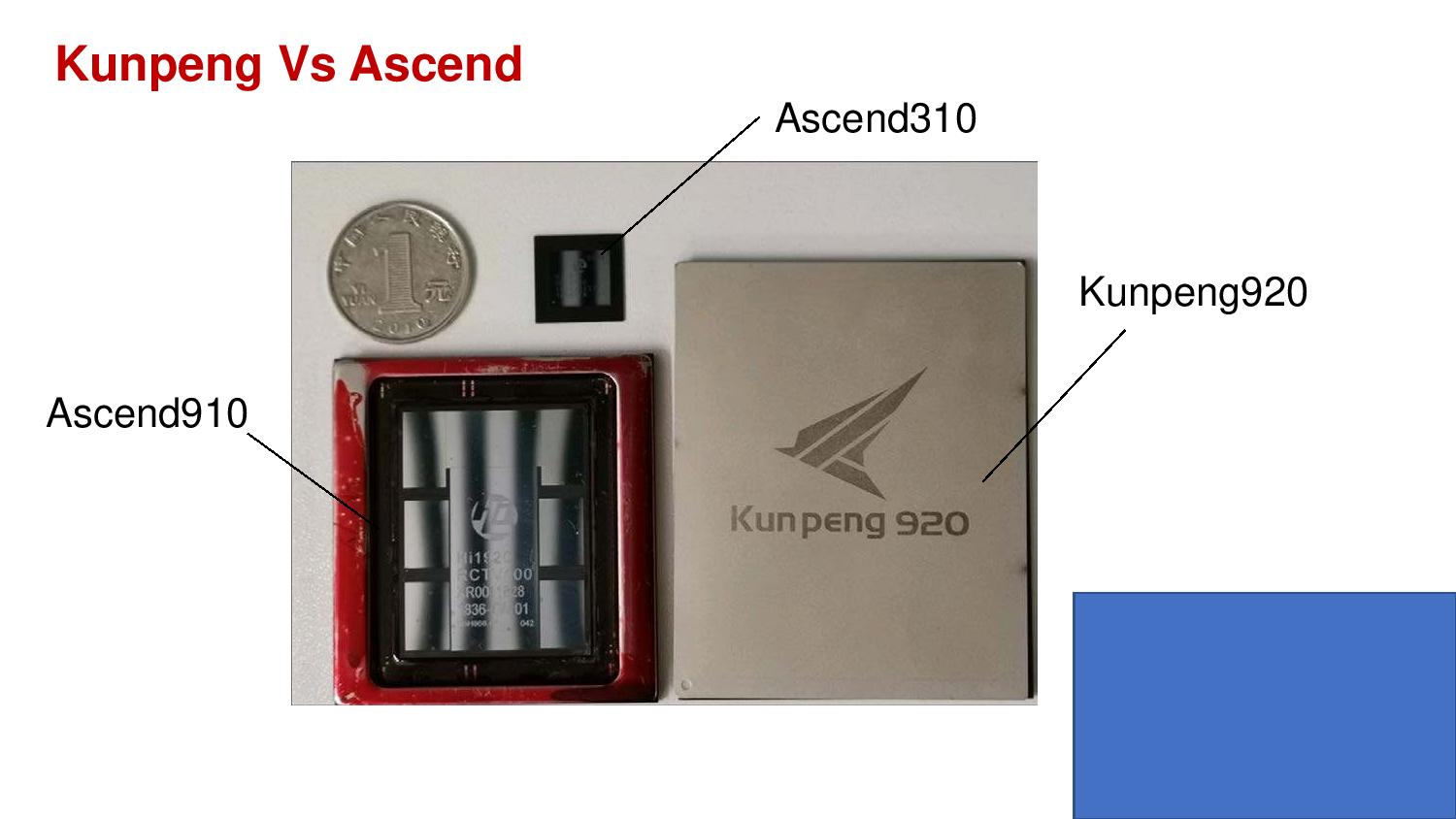

Huawei first discussed the Ascend 910 in October last year, but the present announcement marks the commercial availability of the chip, which Huawei claims is the world’s most powerful AI processor. Moreover, Huawei claims that the chip reaches its planned performance targets with lower power than anticipated: the Ascend 910 delivers 256 half-precision (FP16) TFLOPS in a power envelope of 310W, compared to the previously announced 350W. Performance doubles to 512 TOPS for 8-bit integer calculations (INT8).
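To put those figures in perspective, the short Python sketch below works out what they imply for performance per watt. It uses only the peak numbers Huawei quoted (256 FP16 TFLOPS, 512 INT8 TOPS, 310W actual versus 350W planned); the variable and function names are illustrative, and the results are arithmetic on vendor claims rather than measured efficiency.

```python
# Peak figures as quoted by Huawei in the announcement (vendor claims, not measurements).
FP16_TFLOPS = 256        # half-precision throughput
INT8_TOPS = 512          # 8-bit integer throughput
POWER_ACTUAL_W = 310     # power envelope at launch
POWER_PLANNED_W = 350    # power envelope announced earlier


def per_watt(throughput: float, watts: float) -> float:
    """Return throughput per watt (TFLOPS/W or TOPS/W, depending on the input)."""
    return throughput / watts


fp16_per_watt_actual = per_watt(FP16_TFLOPS, POWER_ACTUAL_W)
fp16_per_watt_planned = per_watt(FP16_TFLOPS, POWER_PLANNED_W)
int8_per_watt_actual = per_watt(INT8_TOPS, POWER_ACTUAL_W)

# Relative reduction in power at the same peak throughput.
power_saving = 1 - POWER_ACTUAL_W / POWER_PLANNED_W

# Relative improvement in FP16 throughput per watt versus the original plan.
efficiency_gain = fp16_per_watt_actual / fp16_per_watt_planned - 1

print(f"FP16: {fp16_per_watt_actual:.3f} TFLOPS/W (planned: {fp16_per_watt_planned:.3f})")
print(f"INT8: {int8_per_watt_actual:.3f} TOPS/W")
print(f"Power saving: {power_saving:.1%}, efficiency gain: {efficiency_gain:.1%}")
```

On these numbers, the 40W reduction is roughly an 11% cut in power and roughly a 13% improvement in peak FP16 throughput per watt over the original plan.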

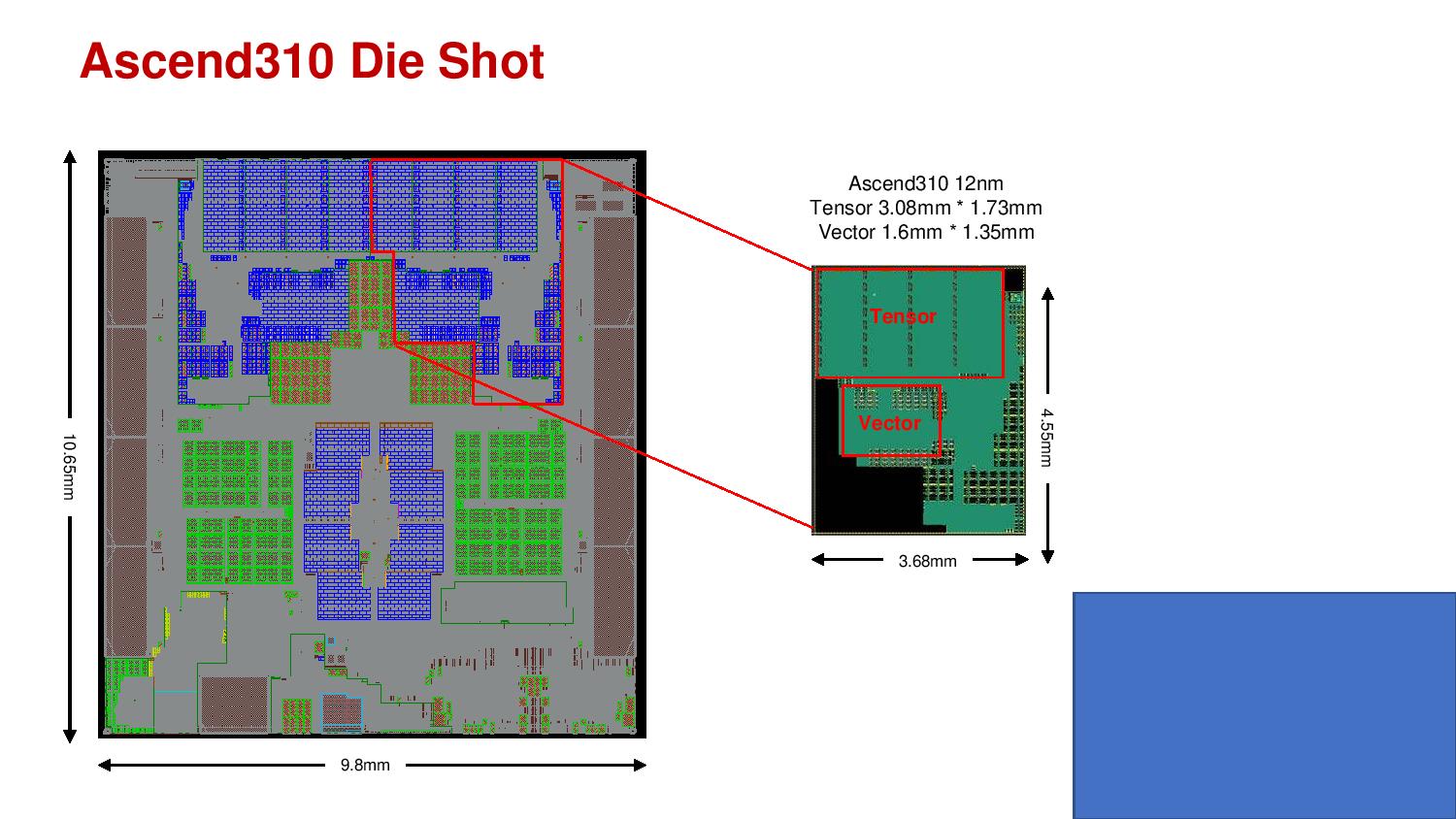

Manufactured on TSMC’s 7nm process, the Ascend 910 serves as a neural processing unit for training AI models (as opposed to inference) in the data center, but Huawei says it is also investing in silicon for other compute scenarios, such as edge computing, devices, and autonomous vehicles.

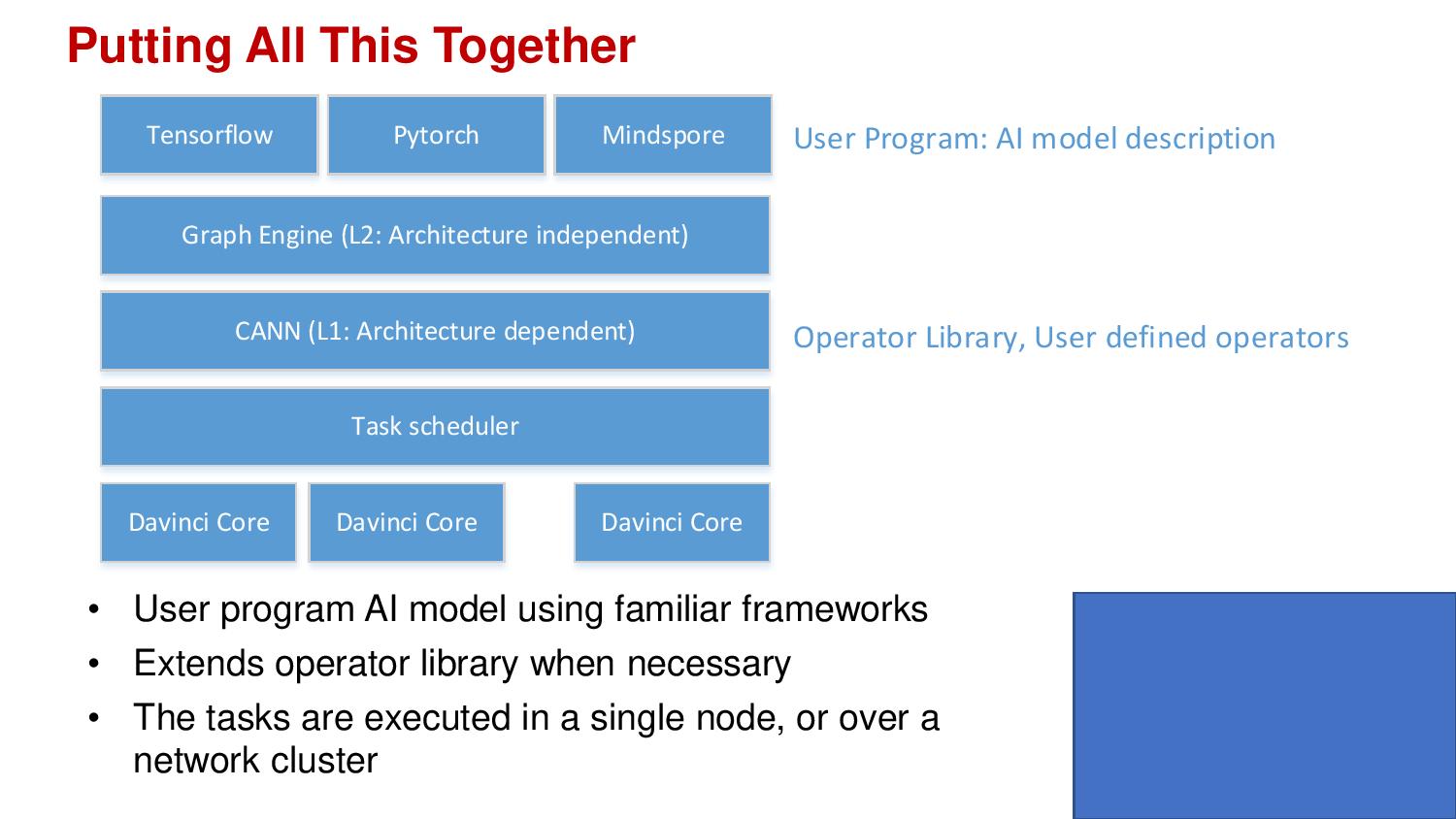

In a similar vein, Huawei has launched MindSpore, a development framework for AI applications in all scenarios. The framework has three goals: easy development, efficient execution, and adaptability to all scenarios. In other words, it should help developers train models at the lowest cost and in the least time, with the highest performance per watt, for all use cases.

Another key design point of MindSpore is privacy. The company says that MindSpore doesn’t process the data itself, but instead “deals with gradient and model information” that has already been processed. Huawei describes its framework as an “AI algorithm as code” design flow. As an example, Huawei claims that for natural language processing (NLP), MindSpore requires 20% fewer lines of code and raises developers’ efficiency by 50% compared to the current leading frameworks. Huawei also claims that the combination of MindSpore with the Ascend 910 achieves double the performance in ResNet-50 compared to “other mainstream training cards” (likely Nvidia’s V100) with TensorFlow.

MindSpore supports Huawei’s Ascend processors as well as CPUs, GPUs and “other types of processors.” Huawei also disclosed that it would open source MindSpore in the first quarter of 2020 in support of a robust AI ecosystem. Huawei’s Rotating Chairman, Xu, said: “We promised a full-stack, all-scenario AI portfolio. And today we delivered.”

MindSpore joins a sizeable collection of AI frameworks such as TensorFlow, Caffe, and Theano. Nvidia continues to reign supreme in the data center deep learning (DL) training market as other companies begin more focused development efforts. Intel recently described its 16nm Spring Crest NNP-T accelerator at Hot Chips to take on Nvidia (with initial availability later this year). Spring Crest does not have INT8, but it does support the young bfloat16 format, albeit at half the FLOPS of the Ascend’s FP16 performance. However, Intel put a lot of work into the interconnect to scale up to hundreds of nodes, a capability that Huawei has not mentioned.

Seeking US Independence

Also on Friday, Huawei said that it would consider using RISC-V if the US government restrictions persist. Huawei’s launch schedule is not currently affected by the US ban because the company has already obtained a license to the ARMv8 architecture. However, there is a possibility that Huawei won’t be able to use ARM’s new technologies in the future.

Even though ARM is a UK company, some of its technologies are developed in the US, so it has to comply with the ban. “If ARM's new technologies are not available in the future, we can also use RISC-V, an architecture which is open to all companies. The challenge is not insurmountable,” Xu said. Huawei is already a member of the RISC-V Foundation. However, the company also said it had not started any efforts yet to migrate to RISC-V, preferring to continue using ARM.

RISC-V is not the only open instruction set architecture (ISA), as IBM recently made its POWER instruction set open source.

bit_user

Huawei has launched its 7nm Ascend 910 artificial intelligence chip for data centers together with a new comprehensive AI framework MindSpore.

Launched, as in shipping? Who already has 7 nm EUV at production level? Samsung?

Tesla V100 416 mm^2

No, it's 815 mm^2. It's not exactly a fair comparison, given how much else the V100 does. Maybe the 416 number is an attempt to account for that.

The Ascent 910 serves as a neural processing unit for training AI models (as opposed to inference) in the data center

Well, the presence of int8 seems like at least a hedge.

Spring Crest does not have INT8, but it does support the young bfloat16 format

Because it's an actual training-focused chip.

Huawei’s launch schedule is currently not impacted by a US ban because the company already has obtained a license to the ARMv8 architecture.

Launch of which product, now? Does this thing have an embedded ARM core?

Anyway, the slides show that the boards have Intel Xeon server CPUs on them.

Even though ARM is a UK company

Japanese-owned, don't forget. And Japan recently seems very willing to restrict exports over trade & other disputes, for anyone who hasn't heard of the Japan/South Korea trade spat.