Working Prototype of Intel’s Failed Larrabee GPU Sells for $5,000 on eBay

Prototype under the hammer

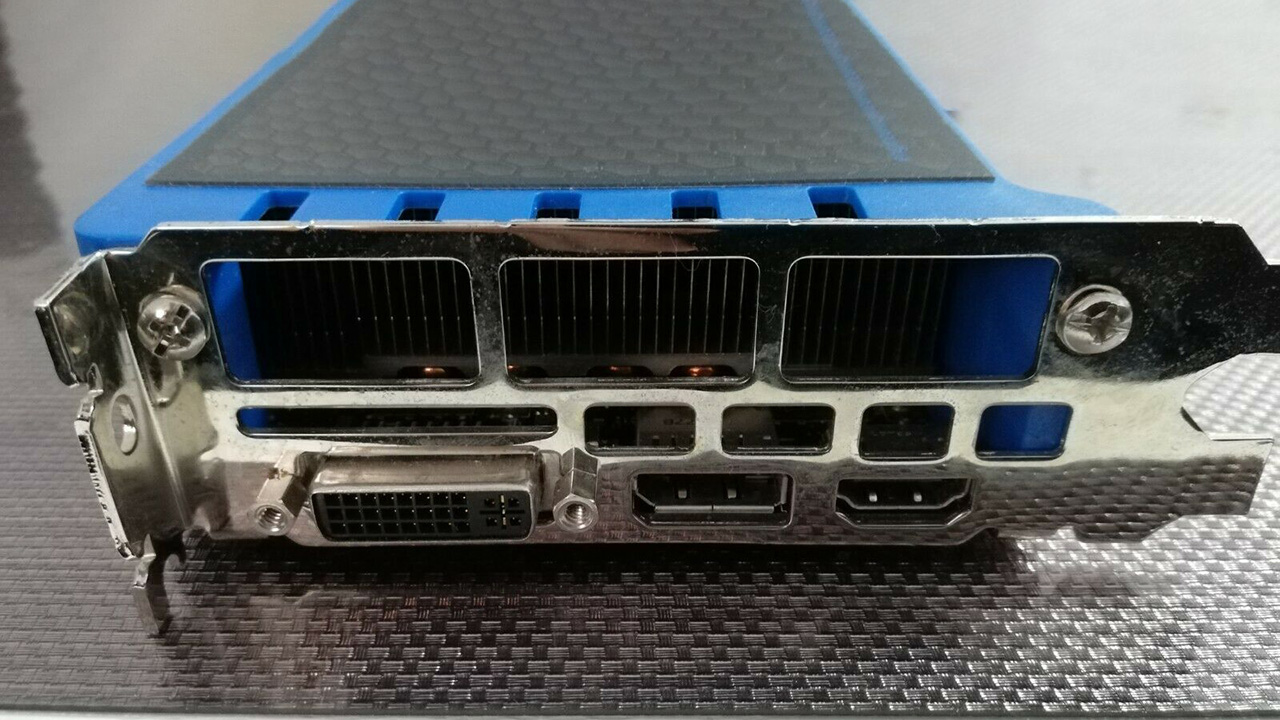

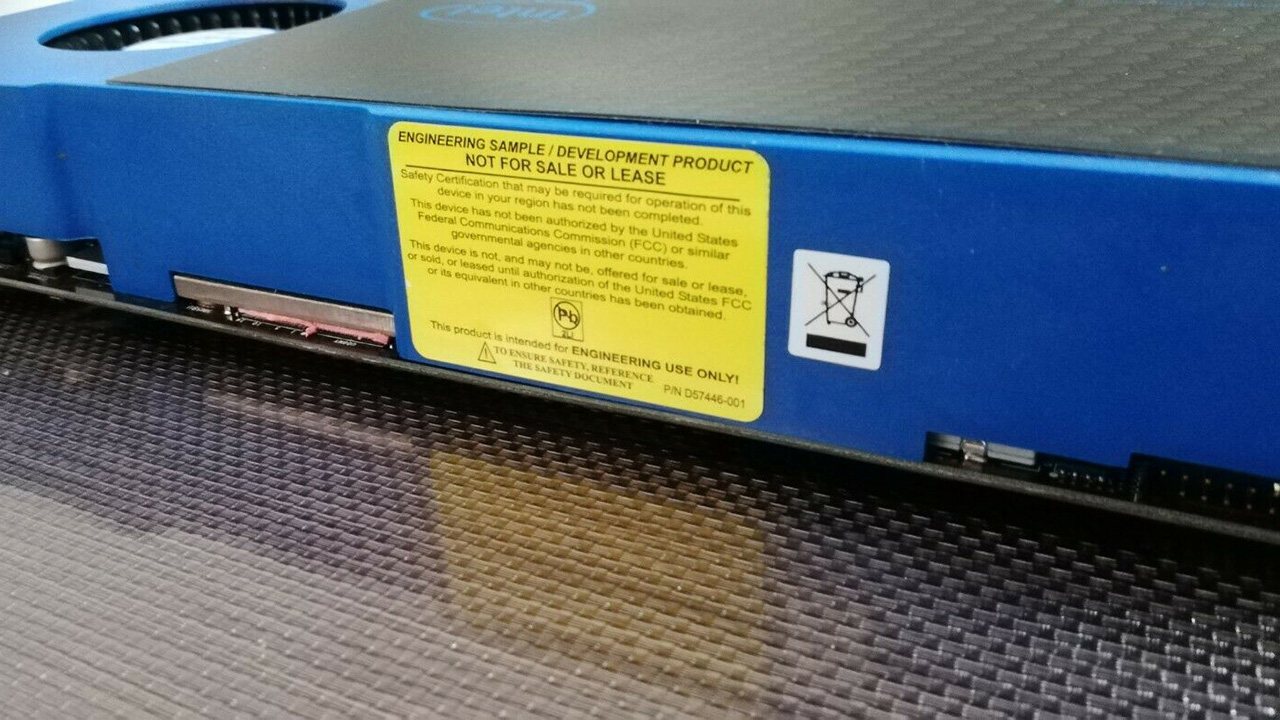

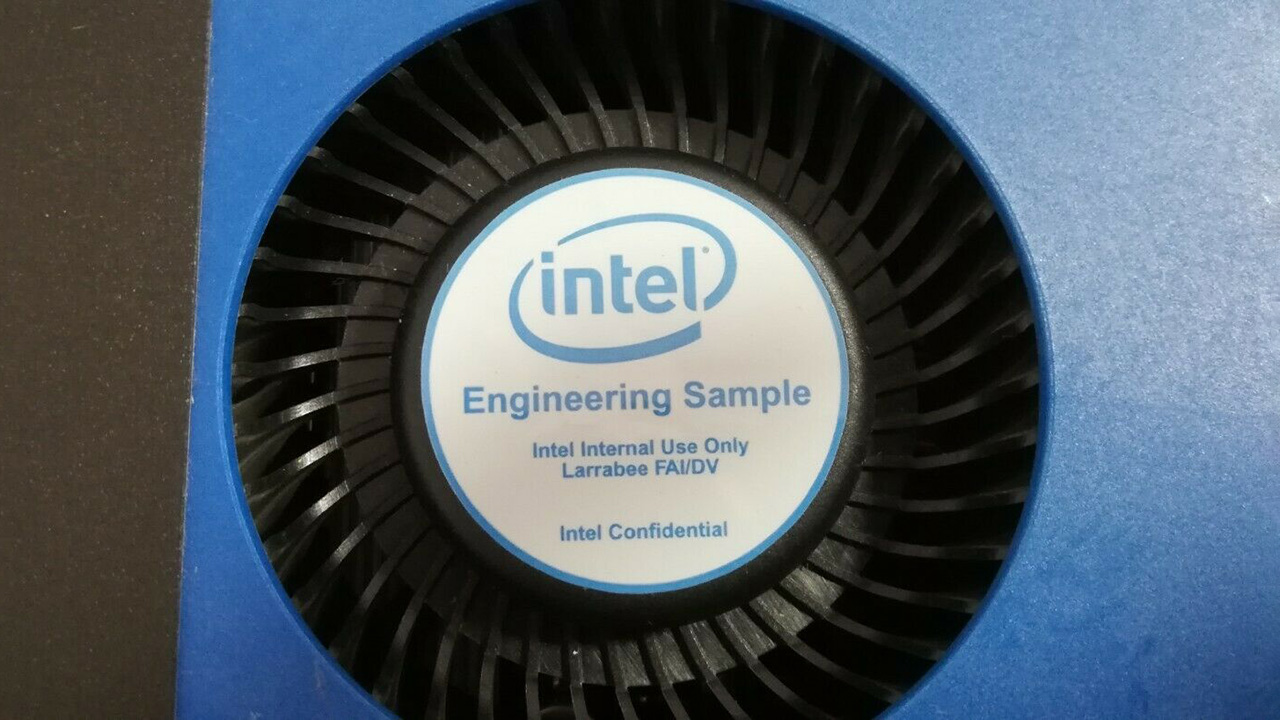

Collectors of rare PC hardware have just missed out on the chance to own a little piece of history: a prototype Intel GPU claimed to be the only working Larrabee board in the world. It sold on eBay France for a mere €4,650 ($5,234) and even came in a snazzy case.

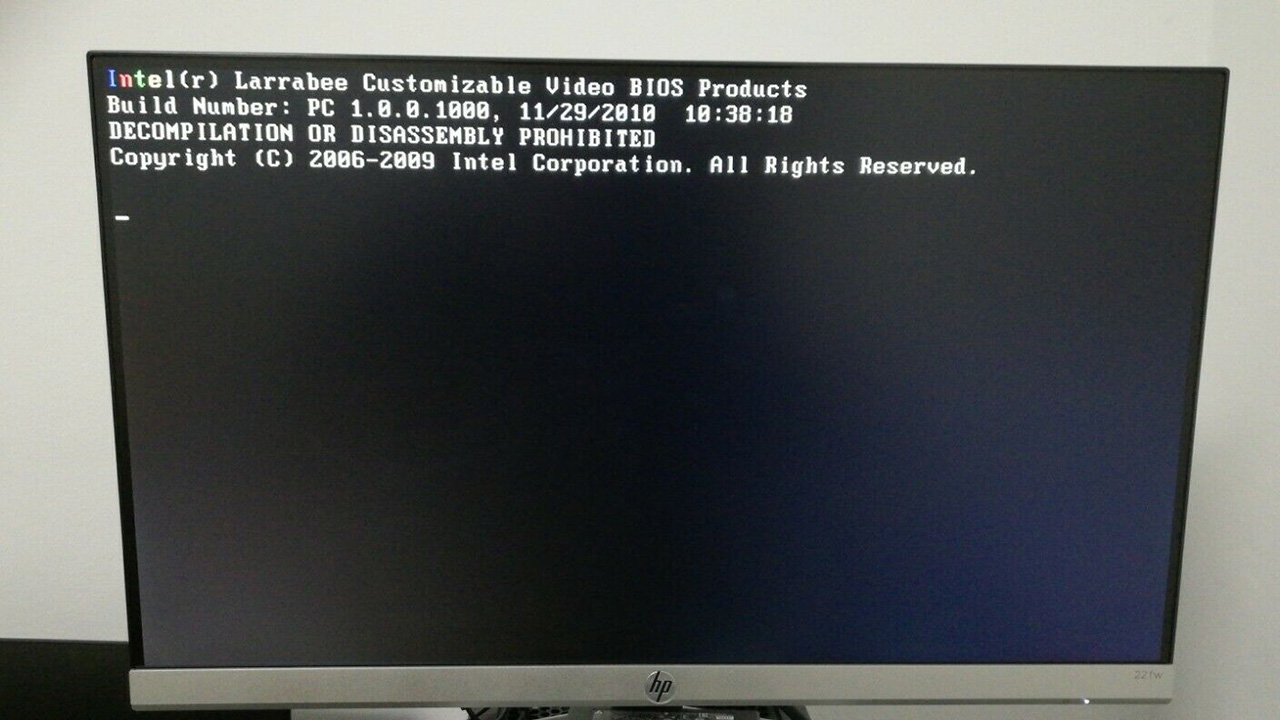

The card is apparently fully working: a screenshot shows the BIOS startup, though no drivers are included. The Larrabee GPU is marked as an Intel engineering sample for internal use only. Exactly which truck it fell off to end up in the hands of the seller remains unknown, though we have no reason to doubt the bona fides of the vendor, who has a 100% feedback rating.

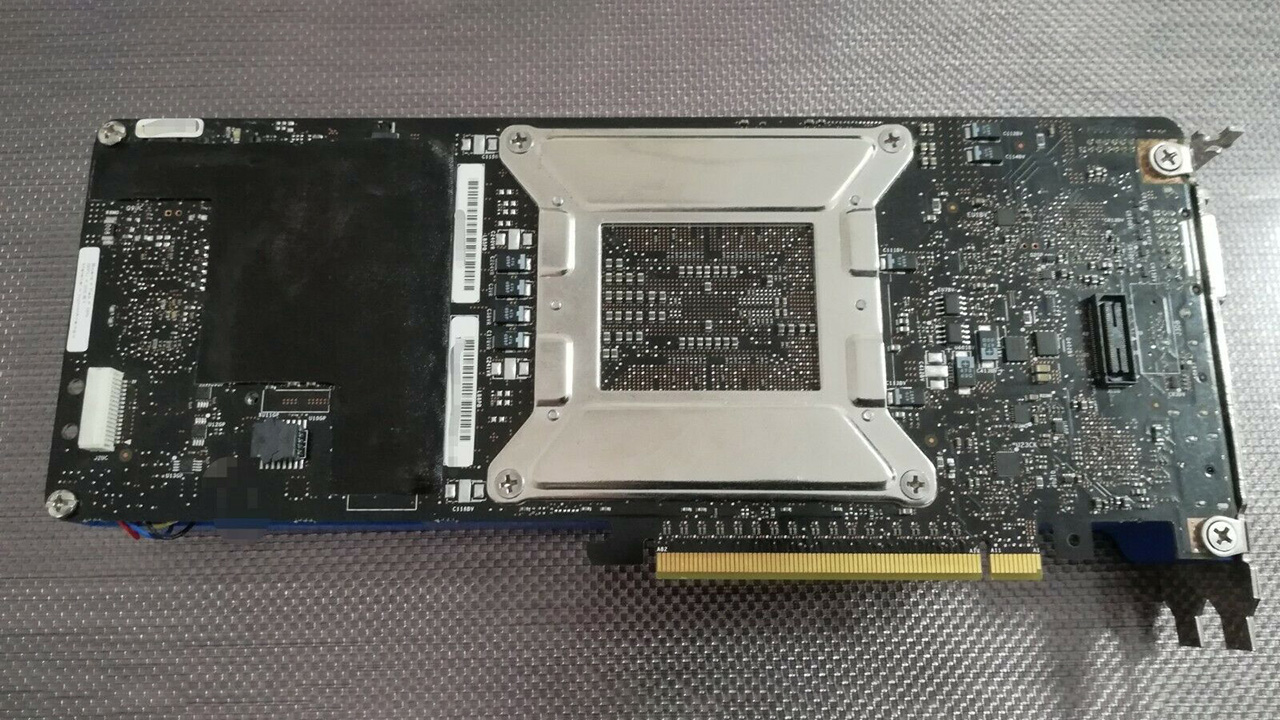

Larrabee was Intel's 2008-vintage attempt to make a GPU, or rather a GPGPU, separately from the project that led to Iris Pro. Rather than following in the footsteps of Nvidia and ATI, Larrabee used the x86 instruction set with special extensions, and the chip functioned more like a hybrid of a CPU and GPU. It also did much of its work in software, with a tile-based rendering approach, rather than relying on specialized graphics hardware. The idea was to make a board that could accelerate more diverse workloads than 'just' games and achieve graphics effects that GPUs at the time couldn't manage, such as real-time ray-tracing and irregular shadow mapping.
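To give a feel for what tile-based rendering in software involves, here is a minimal Python sketch of the binning step, in which the screen is split into tiles and each triangle is assigned to the tiles its bounding box overlaps. It is an illustration only, not Intel's renderer: the 64-pixel tile size and the `bin_triangles` helper are hypothetical choices made for this example.

```python
import math
from collections import defaultdict

TILE = 64  # hypothetical tile size in pixels, chosen for illustration


def bin_triangles(triangles, width, height, tile=TILE):
    """Map each (tile_x, tile_y) to the indices of triangles whose
    screen-space bounding box overlaps that tile."""
    bins = defaultdict(list)
    max_tx = (width - 1) // tile   # index of the last tile column
    max_ty = (height - 1) // tile  # index of the last tile row
    for idx, tri in enumerate(triangles):
        xs = [p[0] for p in tri]
        ys = [p[1] for p in tri]
        # Bounding box in tile coordinates, clamped to the screen.
        x0 = max(0, math.floor(min(xs)) // tile)
        x1 = min(max_tx, math.floor(max(xs)) // tile)
        y0 = max(0, math.floor(min(ys)) // tile)
        y1 = min(max_ty, math.floor(max(ys)) // tile)
        # Fully off-screen triangles produce an empty range and are skipped.
        for ty in range(y0, y1 + 1):
            for tx in range(x0, x1 + 1):
                bins[(tx, ty)].append(idx)
    return bins


triangles = [
    ((10, 10), (20, 10), (10, 20)),  # sits inside one tile
    ((60, 60), (70, 60), (60, 70)),  # straddles four tiles
]
bins = bin_triangles(triangles, width=128, height=128)
```

Once triangles are binned this way, each tile can be shaded independently, which is what makes the approach a natural fit for a chip built from many general-purpose cores.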

Larrabee's processor was derived from Pentium designs, fitting 32 in-order cores (or 24 in a cut-down version that made use of defective chips), each with four-way multithreading, onto a single chip. Each core had a 512-bit vector processing unit and used a 1,024-bit (512-bit bi-directional) bus to communicate with memory. It was speculated that 25 cores were enough to run Gears of War, an Xbox 360 game, without antialiasing.
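Those figures imply a lot of parallelism. The back-of-the-envelope Python sketch below works it out from the numbers quoted above; the one assumption not taken from the article is that the vector unit is processing 32-bit single-precision values, and the `parallelism` helper is a hypothetical name used only here.

```python
VECTOR_BITS = 512      # vector unit width per core, from the article
FLOAT_BITS = 32        # assumption: 32-bit single-precision operands
THREADS_PER_CORE = 4   # four-way multithreading, from the article


def parallelism(cores):
    """Return (hardware threads, vector lanes per core, total lanes)."""
    lanes_per_core = VECTOR_BITS // FLOAT_BITS
    hardware_threads = cores * THREADS_PER_CORE
    total_lanes = cores * lanes_per_core
    return hardware_threads, lanes_per_core, total_lanes


full = parallelism(32)      # full 32-core part
cut_down = parallelism(24)  # cut-down 24-core part
print(full)      # (128, 16, 512)
print(cut_down)  # (96, 16, 384)
```

Under that assumption, the full chip offers 128 hardware threads and 512 single-precision lanes, against 96 threads and 384 lanes for the 24-core version.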

Larrabee was general-purpose enough that, theoretically, the GPU could have run its own operating system. However, its graphics performance was poor compared to competing products, and the project was shelved as a GPU in 2009. The GPGPU idea lives on today in things like Nvidia's CUDA, which opens up the power of the GPU's parallel processing to other applications.

The Larrabee technology passed to Intel's supercomputing division, which eventually built an accelerator card for high-performance computing, code-named Knights Corner and released in 2012 as the first of the Xeon Phi co-processors. They hung around until 2019 and were used in supercomputers, including Cori at the National Energy Research Scientific Computing Center, and China's Tianhe-2, which topped the list of the world's fastest supercomputers from June 2013 until November 2015.


Ian Evenden is a UK-based news writer for Tom’s Hardware US. He’ll write about anything, but stories about Raspberry Pi and DIY robots seem to find their way to him.


Howardohyea: Really interesting in my opinion. It's nice to read a bit of technical background on a piece of hardware instead of just "someone sold a GPU".

Using CPU cores for graphics rendering is a really fast way to develop a graphics card, but yeah, the graphics-only performance won't be good. That's why graphics cards are considered ASICs (application-specific integrated circuits).

Historical Fidelity: Very interesting article. I appreciate Intel’s novel approach to their first foray into the graphics card segment.

DieReineGier: This card would complement my i740-based card, which I also bought on eBay.

The article leaves the impression that CUDA was influenced by Larrabee, but CUDA was first. Larrabee was started because Intel was afraid of losing high-performance computing market share to GPGPUs.

Larrabee had its very own SIMD instruction set, incompatible with any SSE or AVX implementation on regular Intel CPUs. Some instructions were kind of weird just to avoid infringing on patents held by others. Very interesting architecture. It actually ran flavors of BSD (I saw) and Linux (I heard of), and some parts optionally had their own permanent storage and network connection!