Intel Lights Up Silicon, Shipping Silicon Photonics Transceivers

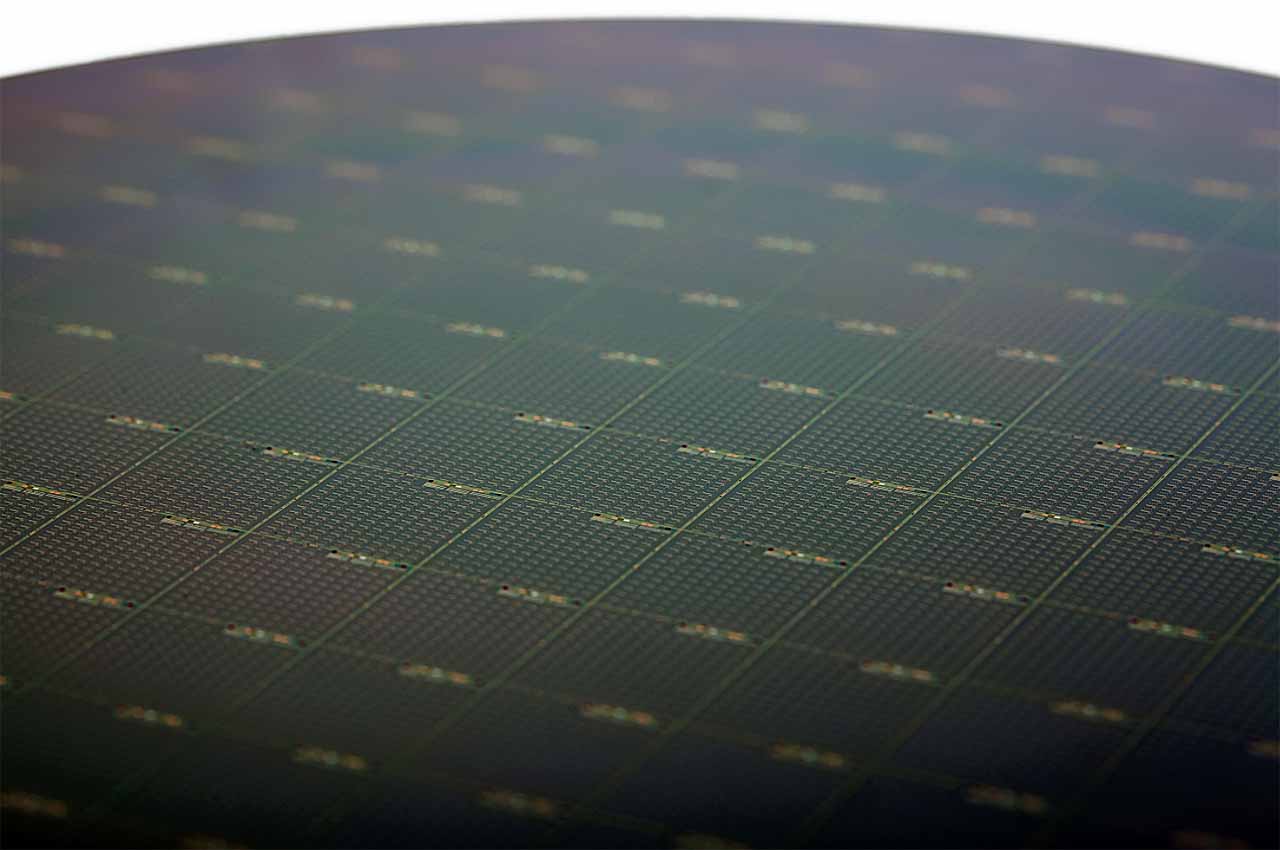

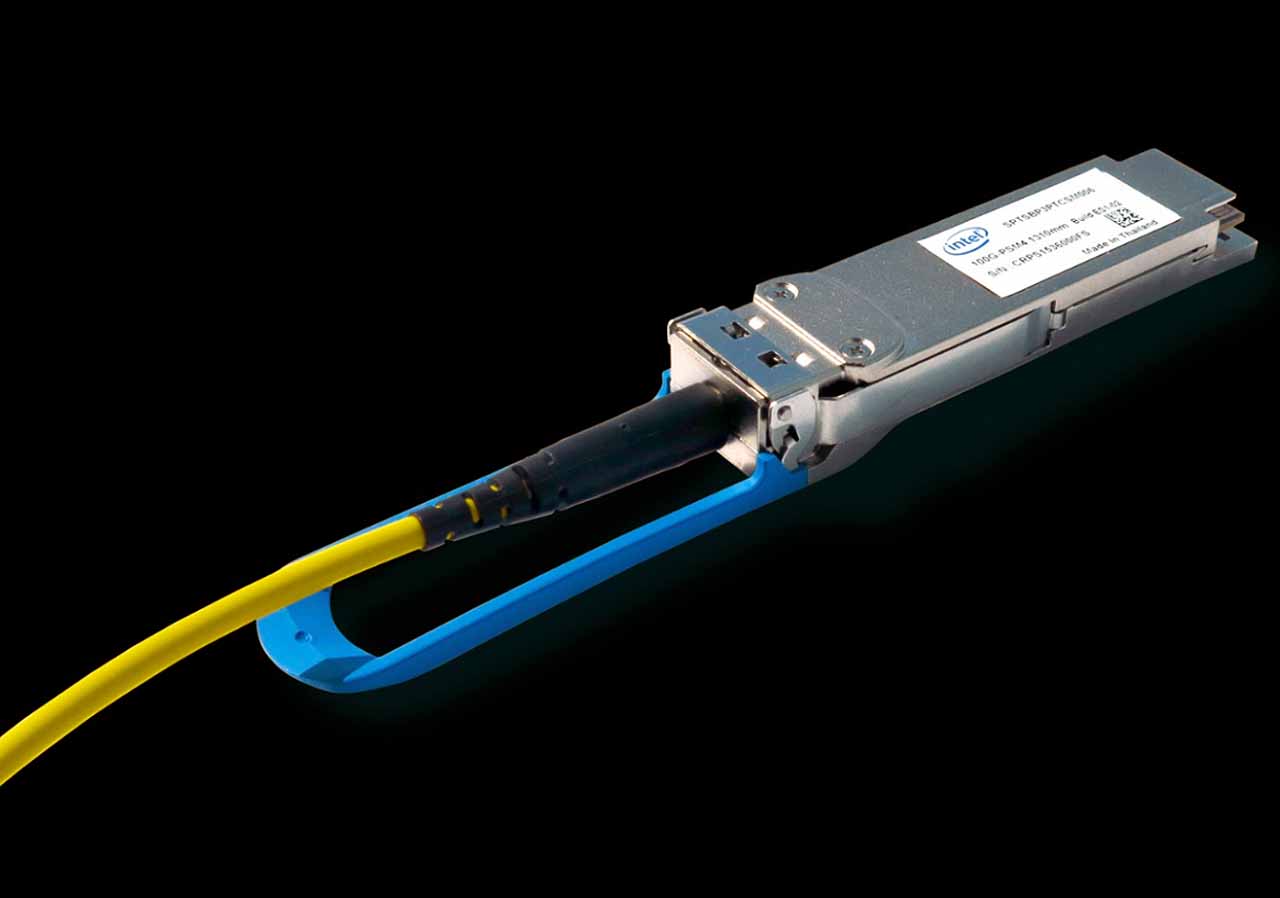

Intel announced at IDF that it is in volume production of its silicon photonics products, which blend a normal integrated circuit with semiconductor lasers to transport data at 100G speeds with existing Ethernet, InfiniBand and Omni-Path protocols.

The industry has long viewed silicon photonics as the best solution to increasing data transfer speeds, and in fact, Intel's developmental process dates back 16 years. The key advantage of embedded lasers is that light can travel faster than electrons, and photonics allows transmission of light streams in closer proximity to each other than electrons, which increases density (Intel claims up to 100X bandwidth density).

Electrons generate heat as they travel inside copper, which is a challenge in dense designs, such as processors. Electrons also require more power to traverse the copper highway, whereas using light to transmit data holds the promise of huge power savings (Intel claims 3x less power per Gbps). Finally, light beams can cross each other without affecting data transfer capabilities, which is impossible with electrons.

Intel's initial plan is to use silicon photonics to boost network transfer speeds to 100G between switches, and the company sees a clear evolutionary path up to 400G. The first wave of products consists of 100G PSM4 (Parallel Single Mode Fiber with four lanes) and 100G CWDM4 (Coarse Wavelength Division Multiplexing with four lanes) optical transceivers, which can transmit and receive optical signals. The transceivers can push data up to 1.2 miles, which is a tall order for the existing copper-based interconnects that lose speed as distance increases.
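As a rough illustration of the four-lane arithmetic, the Python sketch below splits the 100G aggregate rate evenly across the lanes and converts the quoted 1.2-mile reach to kilometers. It assumes an even split and ignores encoding overhead, so the per-lane figure is nominal rather than an actual line rate. The function names are hypothetical and chosen only for this example.

```python
# Illustrative sketch only: nominal per-lane rate and reach conversion
# for the four-lane 100G transceivers described in the article.

LANES = 4                # from the article: PSM4 and CWDM4 both use four lanes
AGGREGATE_GBPS = 100.0   # from the article: 100G transceivers
REACH_MILES = 1.2        # from the article: maximum quoted reach
KM_PER_MILE = 1.609344   # definition of the international mile


def per_lane_gbps(aggregate_gbps: float, lanes: int) -> float:
    """Nominal rate per lane, assuming an even split and no encoding overhead."""
    if lanes <= 0:
        raise ValueError("lanes must be positive")
    return aggregate_gbps / lanes


def miles_to_km(miles: float) -> float:
    """Convert a distance in miles to kilometers."""
    return miles * KM_PER_MILE


if __name__ == "__main__":
    print(f"Nominal rate per lane: {per_lane_gbps(AGGREGATE_GBPS, LANES):.1f} Gbps")
    print(f"Quoted reach: {miles_to_km(REACH_MILES):.2f} km")
```

Under these assumptions each lane carries a nominal 25 Gbps, and the quoted reach works out to just under 2 km.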

Most data centers are beginning the transition to 10 GbE for communication between servers, and due to the lower cost of copper-based connections, that trend should continue. For now, Intel is relegating its silicon photonics products to switch-to-switch traffic, and Microsoft announced that it is integrating the technology into its Azure data centers.

Intel's approach will allow the company to mass-produce the products for less cost-sensitive applications at first, but prices will decline as the technology matures and it reaps the benefits of volume production. Silicon photonics will eventually filter down to handle traffic between and inside of servers. It may eventually boost data transfer speeds between microchips before it eventually works its way into the CPUs.

The new transceivers are a key piece of Intel's strategy to leverage its overwhelming CPU dominance in the data center (it accounts for 99% of the market) to take over other lucrative segments, such as networking.

Eventually--some claim within the next five years--photonics will work their way down to the die level, which could usher in the next wave of high-performance computing. Intel's silicon photonics transceivers are already shipping and are in volume production.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

jasonelmore how long before intel replaces traditional Fin Fet transistors with photonic based ones?

Adr2t Won't happen unless it's to cross talk with another core on the other side of the CPU or MB. Otherwise, electrons move just as fast as light over wire within a short distance. Photonics also requires that you convert electrons to photons and then back to electrons. This process takes a long time compared to just sending an electron down a wire.

Ksec I think people are missing the point. It is about using fewer PCI-E links to achieve the same bandwidth, and therefore saving cost on die space. I/O interfaces don't shrink as much as other parts of the CPU. So instead of PCI-E 3.0 4x for an SSD, now 2x will do. There is also a lot of power efficiency, focusing on mobile usage.

bit_user "Most data centers are beginning the transition to 10 GbE for communication between servers"

You mean 100 GbE?

"Eventually--some claim within the next five years--photonics will work their way down to the die level, which could usher in the next wave of high-performance computing."

Later this year, Intel is slated to ship a version of the Knights Landing Xeon Phi with integrated OmniPath (in-package, but not on-die) @ 25 GB/sec/port (bi-dir) with 2 ports.

Unfortunately, each port is connected via a PCIe 3.0 x16 link. So, this version of the chip will have only 4x PCIe 3.0 lanes reaching the motherboard.

gondor "Silicon photonics will eventually filter down to handle traffic between and inside of servers. It may eventually boost data transfer speeds between microchips before it eventually works its way into the CPUs."

Eventually.

ammaross 18473500 said: "how long before intel replaces traditional Fin Fet transistors with photonic based ones?"

They won't. Transistors are different than what they're referring to in this article. The better equivalent would be the traces that run from the CPU cores to the L1/L2/L3 cache or from the CPU to RAM, etc.