Lenovo Dishes On 3D XPoint DIMMs, Apache Pass In ThinkSystem SD650

Can you imagine a 512GB memory stick? Intel's Apache Pass DIMMs will make that a reality, and Lenovo's new ThinkSystem SD650 servers are the first to support them. The DIMMs place 3D XPoint memory in a RAM slot, where it is addressed as normal system memory, but they aren't on the market yet. They also require specialized accommodations, and Lenovo said the SD650 will support them when Cascade Lake Xeons, which will be drop-in compatible with the server, come to market next year.

RAM is one of the most important components in a system, be it a desktop PC or a server, but the cost of capacity has long been a serious drawback. The densest memory sticks, for instance, come with hefty price premiums: the largest single DDR4 stick you can buy is 128GB and costs an eye-watering $4,000. You can reach the same capacity for much less money by spreading it across multiple DIMMs, so that type of density isn't attractive from a cost standpoint.

Intel's 3D XPoint DIMMs are designed to change that radically. Lenovo's SD650 server supports up to four of the forthcoming 512GB 3D XPoint DIMMs per server node, so you can install up to 2TB of memory in just four slots. The DIMMs will function as memory-mapped devices, meaning the operating system will address them as memory, which will boost system memory capacity dramatically. Intel has long said that 3D XPoint DIMMs will be less expensive than standard system memory, and the company expects that to help the new memory bust through both capacity and cost barriers.
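To put the density claim in perspective, here is a back-of-the-envelope sketch in Python that uses only the figures quoted above: four 512GB 3D XPoint DIMMs per node, and the 128GB, $4,000 DDR4 stick. Intel hasn't announced Apache Pass pricing, so the sketch prices only the DDR4 alternative. The constant and function names are our own, and we assume "2TB" means 4 x 512GB = 2,048GB.

```python
# Capacity and cost arithmetic using only figures quoted in the article.
# Assumption: "2TB" means 4 x 512GB = 2,048GB.

APACHE_PASS_DIMM_GB = 512    # forthcoming 3D XPoint DIMM capacity
APACHE_PASS_SLOTS = 4        # 3D XPoint-capable slots per SD650 node
DDR4_MAX_DIMM_GB = 128       # largest single DDR4 stick cited
DDR4_MAX_DIMM_PRICE = 4000   # quoted price in US dollars


def xpoint_capacity_gb(slots: int = APACHE_PASS_SLOTS) -> int:
    """Total 3D XPoint capacity in GB for a given number of populated slots."""
    return slots * APACHE_PASS_DIMM_GB


def ddr4_sticks_needed(target_gb: int) -> int:
    """Number of 128GB DDR4 sticks needed to reach target_gb, rounded up."""
    return -(-target_gb // DDR4_MAX_DIMM_GB)  # integer ceiling division


target = xpoint_capacity_gb()
sticks = ddr4_sticks_needed(target)
print(f"3D XPoint per node: {target} GB")
print(f"128GB DDR4 sticks for the same capacity: {sticks}")
print(f"Cost at the quoted $4,000 each: ${sticks * DDR4_MAX_DIMM_PRICE:,}")
print(f"Price per GB of the 128GB stick: ${DDR4_MAX_DIMM_PRICE / DDR4_MAX_DIMM_GB:.2f}")
```

At those figures, matching one node's 2TB of 3D XPoint with the densest DDR4 would take 16 sticks, or $64,000 at the quoted price.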

We got our first glimpse of an Optane DIMM package back at Storage Visions in early 2016. However, the devices didn't come to market within the expected time frame. That fueled speculation that endurance was an issue for 3D XPoint in heavy-use memory applications.

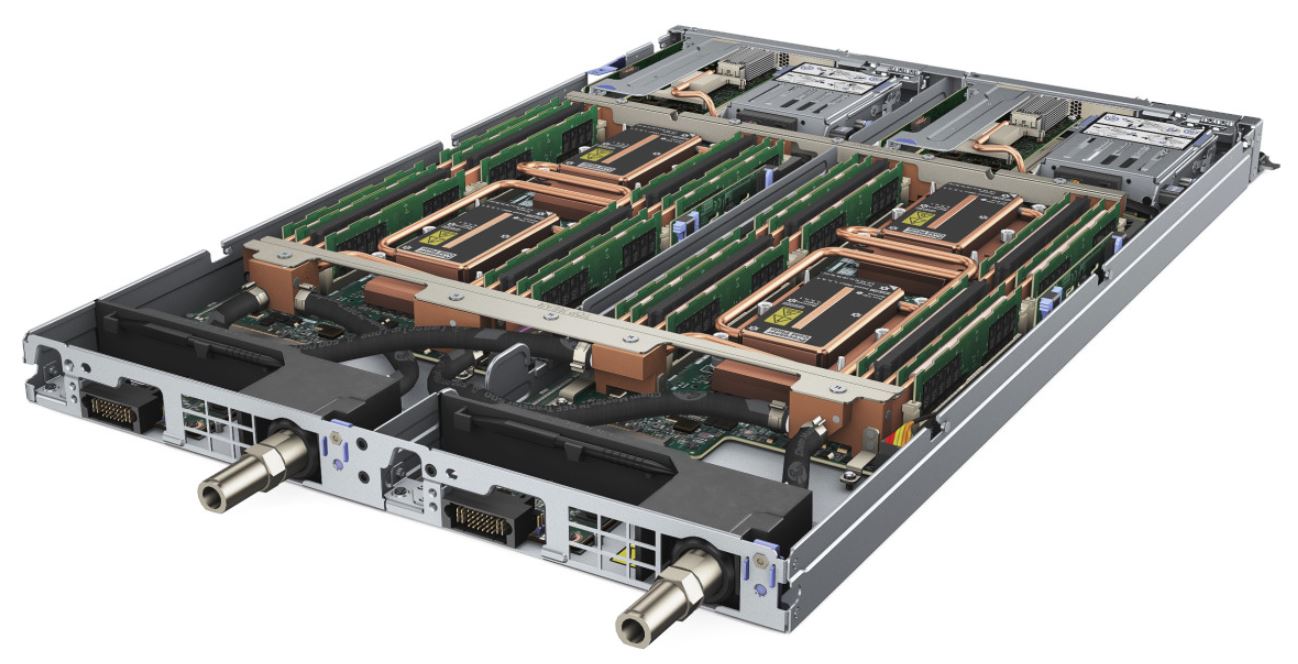

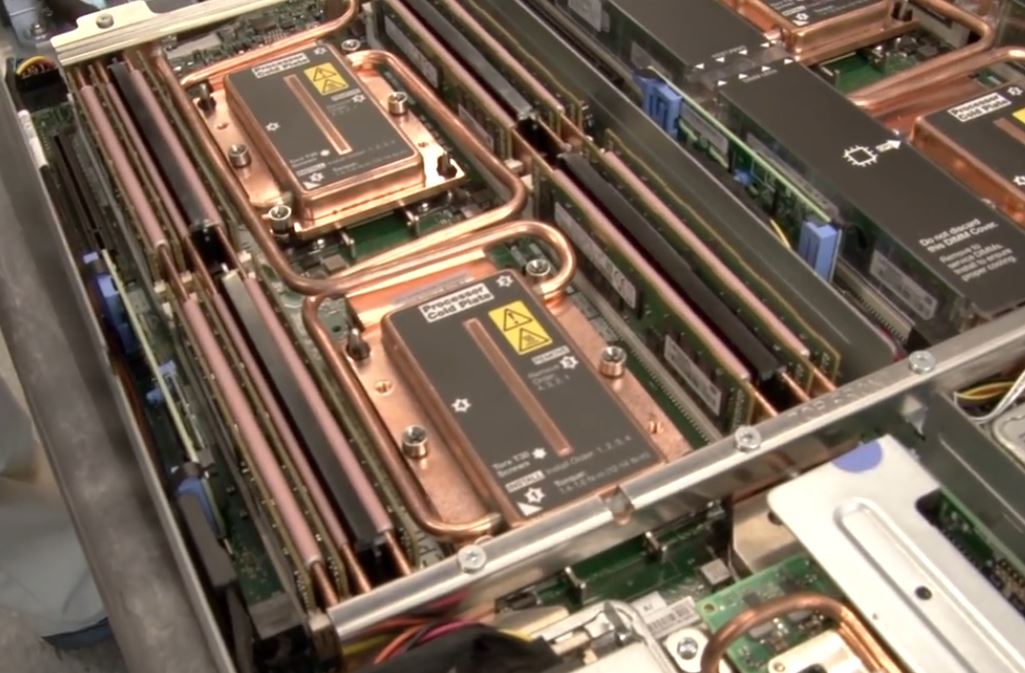

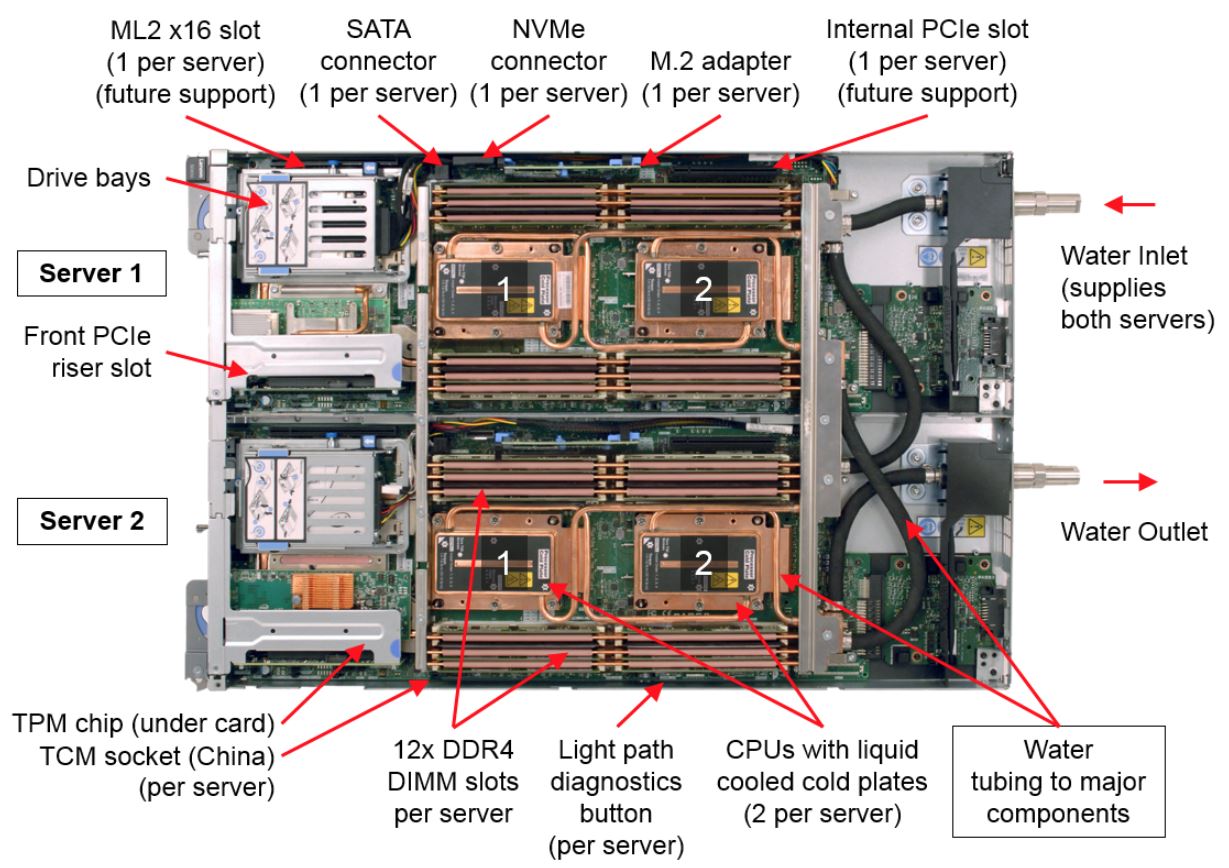

But we've also heard that power and thermal requirements were a challenge. The ThinkSystem SD650 features a warm-water cooling system, meaning it uses water at up to 45°C instead of chilled liquid. That maximizes density by allowing more hot components in a smaller footprint, and the system can recover up to 90% of the waste heat generated inside the chassis.

The design allows Lenovo to pack two full servers, with a total of four Skylake Xeon processors rated at up to 205W, into a single 1U tray. Lenovo's SD650 walkthrough video specifically mentions that the cooling system is also designed to "maximize cooling on higher TDP processors as well as 3D XPoint and other higher-power memory for future designs."

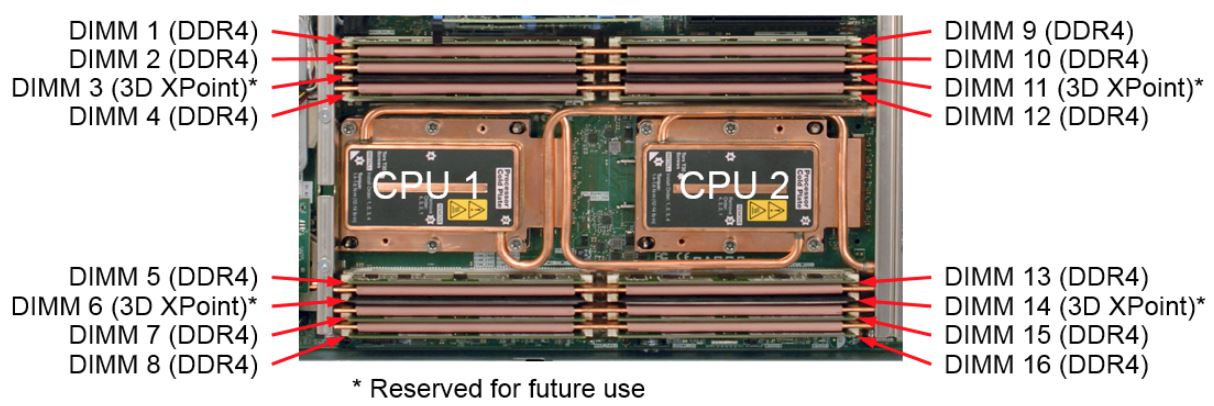

The server documentation also specifically lists four 3D XPoint-compatible slots in each two-socket server node. We reached out to Lenovo's Scott Tease (Executive Director, HPC and AI, Lenovo Data Center Group) about the specialized slots:


"The 3D DIMM[...] has a higher power profile and is slightly wider so most servers would not be able to accept them in a standard DDR4 slot without specific changes to the board to accommodate it."

The four 3D XPoint slots also adhere to the JEDEC DDR4 specification, so you can populate them with regular memory as well. But it's an important distinction that the 3D XPoint DIMMs will not function in standard memory slots in most servers, so accommodating them will likely require specialized motherboard designs.

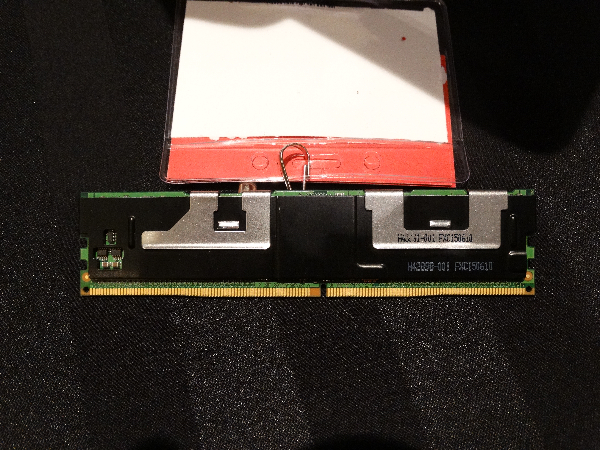

Lenovo designed the SD650 specifically to maximize density through effective thermal management, so, like the rest of the system, the DIMMs are water-cooled. The system uses gap pads, which are thermal pads that contact the DIMMs, draped over a waterblock (seen above). We've seen similar techniques in other water-cooled servers, but we aren't sure whether water cooling will be a strict requirement for 3D XPoint DIMMs. You can also see four thick black inserts among the DIMMs. Those fillers occupy the positions of the 3D XPoint DIMM slots, which are sandwiched between pads.

Vinod Kamath, Ph.D., Thermal Architect at Lenovo, also weighed in to answer our questions about power consumption:

"The 3D Xpoint memory can consume about 3X the power of a standard 8/16GB DDR4 DIMM. The actual value could range from 15-18W depending on the workload. Cooling for this memory required special focus and optimization of the cooling loop to ensure the next generation device is capable of being supported in the server with the warm water cooling technology. The memory cooling loop extracts heat from all heat transfer surfaces of the 3D XPoint memory efficiently with the shortest conduction path to the critical device from the water flow channel."

Power consumption is a huge consideration in the data center, and gaining 32x the memory capacity (512GB versus a 16GB DDR4 DIMM) in exchange for a 3x increase in power consumption is a dramatic improvement. Intel's first prototype also featured an FPGA to manage the underlying media, so squeezing the FPGA's power draw and 512GB of 3D XPoint into a 15-18W envelope is impressive.
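The 32x and 3x figures are easy to sanity-check. The Python sketch below assumes the comparison is between a 512GB 3D XPoint DIMM and a 16GB DDR4 DIMM, and it infers the standard DIMM's power by dividing Lenovo's 15-18W range by three, so the DDR4 wattage is our estimate rather than a measured value.

```python
# Capacity-per-watt comparison using the figures quoted in the article.
# Assumptions: "32x" compares a 512GB 3D XPoint DIMM with a 16GB DDR4 DIMM,
# and the DDR4 DIMM's power is inferred from the "about 3X" remark
# (15-18W divided by 3), not measured.

XPOINT_GB = 512
DDR4_GB = 16
XPOINT_WATTS = (15.0, 18.0)  # Lenovo's quoted workload-dependent range
POWER_MULTIPLE = 3.0         # "about 3X the power of a standard 8/16GB DDR4 DIMM"


def gb_per_watt(capacity_gb: float, watts: float) -> float:
    """Capacity delivered per watt of DIMM power."""
    return capacity_gb / watts


capacity_ratio = XPOINT_GB / DDR4_GB               # 32x the capacity
efficiency_gain = capacity_ratio / POWER_MULTIPLE  # relative capacity per watt

for w in XPOINT_WATTS:
    ddr4_w = w / POWER_MULTIPLE  # implied DDR4 DIMM power (estimate)
    print(f"XPoint at {w:.0f}W: {gb_per_watt(XPOINT_GB, w):.1f} GB/W; "
          f"implied DDR4 at {ddr4_w:.0f}W: {gb_per_watt(DDR4_GB, ddr4_w):.1f} GB/W")
print(f"Capacity ratio: {capacity_ratio:.0f}x, capacity-per-watt gain: {efficiency_gain:.1f}x")
```

Because the DDR4 figure is derived from the same 3x multiple, the capacity-per-watt gain works out to roughly 10.7x (32 divided by 3) anywhere in the 15-18W range.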

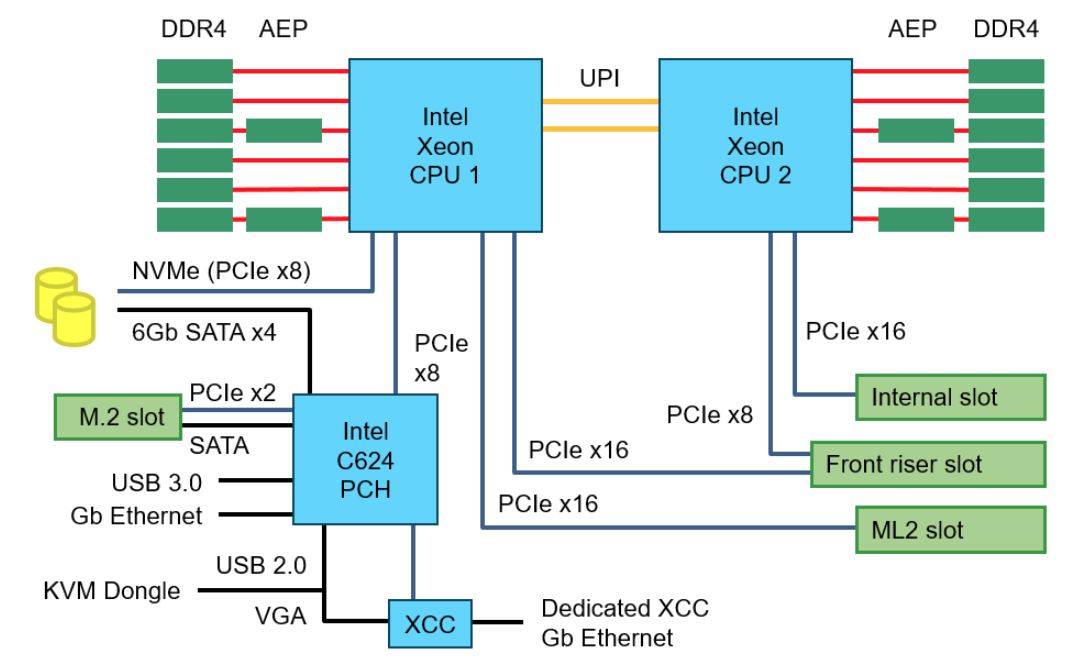

We also learned that the Apache Pass DIMMs, much like NVDIMMs, require a DRAM "chaperone," meaning each 3D XPoint DIMM has to be accompanied by at least one standard DDR4 stick in the same memory channel. The block diagram shows the four Apache Pass DIMMs (also known as AEP) sharing their channels with standard DDR4 DIMMs.
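The chaperone requirement boils down to a simple population rule: any channel that holds an Apache Pass DIMM must also hold at least one DDR4 DIMM. Here is a minimal Python sketch of a configuration check for that one rule. The data model and channel names are hypothetical, since the article doesn't detail the SD650's slot layout, and the check doesn't attempt to cover Intel's or Lenovo's full population guidelines.

```python
from dataclasses import dataclass, field
from enum import Enum


class DimmType(Enum):
    DDR4 = "DDR4"
    APACHE_PASS = "Apache Pass"  # 3D XPoint DIMM (AEP)


@dataclass
class Channel:
    name: str  # hypothetical channel label
    dimms: list[DimmType] = field(default_factory=list)


def chaperone_violations(channels: list[Channel]) -> list[str]:
    """Return names of channels holding an Apache Pass DIMM but no DDR4 DIMM."""
    bad = []
    for ch in channels:
        has_xpoint = DimmType.APACHE_PASS in ch.dimms
        has_dram = DimmType.DDR4 in ch.dimms
        if has_xpoint and not has_dram:
            bad.append(ch.name)
    return bad


# Hypothetical example configuration
config = [
    Channel("CPU0-A", [DimmType.DDR4, DimmType.APACHE_PASS]),
    Channel("CPU0-B", [DimmType.APACHE_PASS]),  # no DRAM chaperone
    Channel("CPU0-C", [DimmType.DDR4]),         # DDR4-only is fine
]
print(chaperone_violations(config))  # ['CPU0-B']
```

A DDR4-only channel passes, because the rule only constrains channels that contain a 3D XPoint DIMM.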

As expected, the motherboard also features the Lewisburg chipset, in the form of the C624 PCH. Lenovo infused the platform with a bevy of advanced features, such as support for Xeon "F" models with integrated 100Gb/s Omni-Path, which connect through interposers that plug directly into the processor. Lenovo also offers standard PCIe x16 networking options in both air- and water-cooled versions.

Lenovo's video states that the water cooling system has some additional headroom to tackle processors with a higher TDP "should Intel come out with those." In the server documentation, we found that the system supports up to a 240W TDP. We've seen several indicators that Intel may have processors coming with much higher TDPs, so that headroom may prove useful.

Thoughts

The emergence of a platform that supports Apache Pass is a big step forward, but questions abound as Intel's new 3D XPoint DIMMs make their way to market. Intel CEO Brian Krzanich recently said that 3D XPoint DIMMs wouldn't have a material impact on revenue this year. Considering that Lenovo said that Cascade Lake Xeons aren't coming to its servers until next year, that likely signals the DIMMs will ship this year only for qualification purposes with early partners. Intel does ship server silicon early to cloud service providers, but it appears widespread Cascade Lake availability will not come until early next year.

Many analysts are predicting near-term revenue in the billions of dollars for NVDIMMs, which typically consist of NAND-backed DRAM. Intel's Apache Pass DIMMs have the potential to plunder that market, because a single device with inherent data persistence and vastly more capacity is a far more sophisticated solution. The 3D XPoint DIMMs will be much slower than DDR4-based NVDIMMs, but they don't require batteries, and they will piggyback on the same software and programming models that enable the NVDIMM ecosystem. That should help speed adoption.

Endurance and price will be the key factors that make or break Apache Pass. There is some speculation that Apache Pass arrived relatively late because Intel needed the higher-endurance second generation of 3D XPoint to meet its desired endurance threshold, but we don't expect Intel to confirm that chatter.

Either way, the DIMMs will have a finite lifespan, so they'll likely carry endurance ratings, much like SSDs. We also suspect that several techniques will emerge to mitigate their lower endurance, such as using the DDR4 pool as a fast front-end cache for the Apache Pass DIMMs. We're also told the DIMMs can be used as block storage.
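To illustrate why a DRAM front-end cache could ease the wear problem, here is a toy write-back LRU cache in Python. A small fast tier stands in for the DDR4 pool and a dictionary stands in for the 3D XPoint media; only dirty lines that are evicted or flushed count as writes to the endurance-limited tier. This is a hypothetical model of the general caching technique, not Intel's or Lenovo's implementation, and the class and method names are our own.

```python
from collections import OrderedDict


class WriteBackCache:
    """Toy write-back LRU cache: a small fast tier (standing in for DDR4) in
    front of a large, endurance-limited tier (standing in for 3D XPoint).
    Only dirty lines that are evicted or flushed reach the backing tier."""

    def __init__(self, capacity_lines: int):
        self.capacity = capacity_lines
        self.lines: OrderedDict[int, tuple[int, bool]] = OrderedDict()  # addr -> (value, dirty)
        self.backing: dict[int, int] = {}
        self.backing_writes = 0  # wear proxy: writes that reach the slow tier

    def _evict_if_needed(self) -> None:
        while len(self.lines) > self.capacity:
            addr, (value, dirty) = self.lines.popitem(last=False)  # least recently used
            if dirty:  # clean lines are simply dropped
                self.backing[addr] = value
                self.backing_writes += 1

    def write(self, addr: int, value: int) -> None:
        self.lines[addr] = (value, True)  # absorb the write in the fast tier
        self.lines.move_to_end(addr)      # mark as most recently used
        self._evict_if_needed()

    def read(self, addr: int) -> int:
        if addr in self.lines:
            self.lines.move_to_end(addr)
            return self.lines[addr][0]
        value = self.backing.get(addr, 0)
        self.lines[addr] = (value, False)  # clean fill, no backing write
        self._evict_if_needed()
        return value

    def flush(self) -> None:
        """Write all dirty lines to the backing tier and mark them clean."""
        for addr, (value, dirty) in self.lines.items():
            if dirty:
                self.backing[addr] = value
                self.backing_writes += 1
        self.lines = OrderedDict((a, (v, False)) for a, (v, _) in self.lines.items())


hot = WriteBackCache(capacity_lines=4)
for i in range(1000):
    hot.write(7, i)            # one hot line rewritten repeatedly
hot.flush()
print(hot.backing_writes)      # 1

stream = WriteBackCache(capacity_lines=4)
for addr in range(10):
    stream.write(addr, addr)   # every write touches a new line
stream.flush()
print(stream.backing_writes)   # 10
```

A hot line rewritten a thousand times reaches the backing tier once, while a streaming pattern that never revisits an address gets no benefit, so any real-world savings would depend heavily on the workload.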

Lenovo's ThinkSystem SD650 is designed to provide the utmost in performance density, but it's also a forward-looking platform that pairs the latest server technology with provisions for future hardware. We expect other servers that support Intel's Apache Pass to follow the SD650, so we should learn more in the coming months.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


2Be_or_Not2Be Memory with endurance limits - yikes! I'd hate to have to manage that. You would almost have to buy an extra set & validate it just so you wouldn't be faced with lack of supply or compatibility for your particular set when you have to replace it x years down the road.

I think I'd rather have research into 1TB DDR DIMMs than 1TB XPoint NVDIMMs. Let me use 3D XPoint in my SSDs - like bigger & better Optane SSDs. There I'm facing endurance limits already with SSD storage, so it's nothing new there.

wes.vaske The first use will be applications that know how to use the persistent memory space. Think column store or indexes for databases. You won't really worry about endurance because the first uses will be read intensive applications.

lorfa Actually 512 GB dimms exist, IBM uses them in their z14 servers, but you're still correct in a way because I'm pretty sure that you cannot use these dimms outside of their machines, so 128 GB would be the largest you could 'buy and use' in a 'typical' machine.

Paul Alcorn I believe the IBM DIMMs use a specialized buffer implementation. They can't work with regular servers.