Intel's Iris Xe DG1 GPU Is Seemingly Slower Than The Radeon RX 550

The first benchmark (via Tum_Apisak) of Intel's Iris Xe DG1 is out. The graphics card's performance is in the same ballpark as AMD's four-year-old Radeon RX 550, at least in the Basemark GPU benchmark.

If we compare manufacturing processes, the DG1 is obviously the more advanced offering. The DG1 is based on Intel's latest 10nm SuperFin process node, and the Radeon RX 550 utilizes the Lexa die, which was built with GlobalFoundries' 14nm process. Both the DG1 and Radeon RX 550 hail from Asus' camp. The Asus DG1-4G features a passive heatsink, while the Asus Radeon RX 550 4G does require active cooling in the form of a single fan. The Radeon RX 550 is rated for 50W and the DG1 for 30W, which is why the latter can get away with a passive cooler.

The Asus DG1-4G features a cut-down variant of the Iris Xe Max GPU, meaning the graphics card only has 80 execution units (EUs) at its disposal. This configuration amounts to 640 shading units with a peak clock of 1,500 MHz. On the memory side, the Asus DG1-4G features 4GB of LPDDR4X-4266 memory across a 128-bit memory interface.

On the other side of the ring, the Asus Radeon RX 550 4G comes equipped with 512 shading units with a 1,100 MHz base clock and 1,183 MHz boost clock. The graphics card's 4GB of 7 Gbps GDDR5 memory communicates through a 128-bit memory bus, delivering up to 112 GBps of memory bandwidth.
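Peak memory bandwidth is simply the effective data rate multiplied by the bus width in bytes. The short Python sketch below (the function name is illustrative) reproduces the RX 550's 112 GBps from its 7 Gbps, 128-bit configuration and applies the same formula to the DG1's LPDDR4X-4266 on a 128-bit bus, which works out to roughly 68 GBps. That DG1 figure is our own arithmetic from the listed specs, not a number taken from the benchmark submission.

```python
def peak_bandwidth_gbps(data_rate_mtps: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s.

    data_rate_mtps: effective transfer rate in megatransfers per second
                    (7 Gbps GDDR5 -> 7000 MT/s, LPDDR4X-4266 -> 4266 MT/s).
    bus_width_bits: width of the memory interface in bits.
    """
    bytes_per_transfer = bus_width_bits / 8          # 128-bit bus -> 16 bytes
    return data_rate_mtps * bytes_per_transfer / 1000  # MB/s -> GB/s


rx550 = peak_bandwidth_gbps(7000, 128)  # matches the quoted 112 GBps
dg1 = peak_bandwidth_gbps(4266, 128)    # derived estimate, ~68.3 GBps

print(f"Radeon RX 550: {rx550:.1f} GB/s")
print(f"DG1:           {dg1:.1f} GB/s")
```

By this estimate the RX 550 has roughly 64% more peak bandwidth to work with, which is one reason a raw FP32 comparison can be misleading.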

In terms of FP32 performance, the DG1 delivers up to 2.11 TFLOPS, whereas the Radeon RX 550 offers up to 1.21 TFLOPS. On paper, the DG1 should be superior, but peak FP32 throughput is a theoretical figure that doesn't always translate into real-world performance.
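The FP32 figures above follow the usual back-of-the-envelope formula: shading units × 2 FLOPs per clock (one fused multiply-add) × clock speed. The Python sketch below applies it to the specs quoted in this article; the factor of 8 lanes per EU is simply 640 ÷ 80 from the DG1 configuration described earlier. Run as written, it reproduces the RX 550's 1.21 TFLOPS at its 1,183 MHz boost clock, but gives about 1.92 TFLOPS for the DG1 at 1,500 MHz. The 2.11 TFLOPS figure would correspond to roughly 1,650 MHz, a back-calculated clock rather than a confirmed spec, so treat the DG1 number as approximate.

```python
# 640 shading units / 80 EUs from the article -> 8 FP32 lanes per EU
ALUS_PER_EU = 8


def peak_fp32_tflops(shaders: int, clock_mhz: float) -> float:
    """Peak FP32 throughput, counting one FMA (2 FLOPs) per shader per clock."""
    return shaders * 2 * clock_mhz * 1e6 / 1e12


dg1_shaders = 80 * ALUS_PER_EU  # 640 shading units

print(f"RX 550 @ 1,183 MHz: {peak_fp32_tflops(512, 1183):.2f} TFLOPS")         # 1.21
print(f"DG1 @ 1,500 MHz:    {peak_fp32_tflops(dg1_shaders, 1500):.2f} TFLOPS")  # 1.92
# Hypothetical clock, back-calculated from the quoted 2.11 TFLOPS figure
print(f"DG1 @ 1,650 MHz:    {peak_fp32_tflops(dg1_shaders, 1650):.2f} TFLOPS")  # 2.11
```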

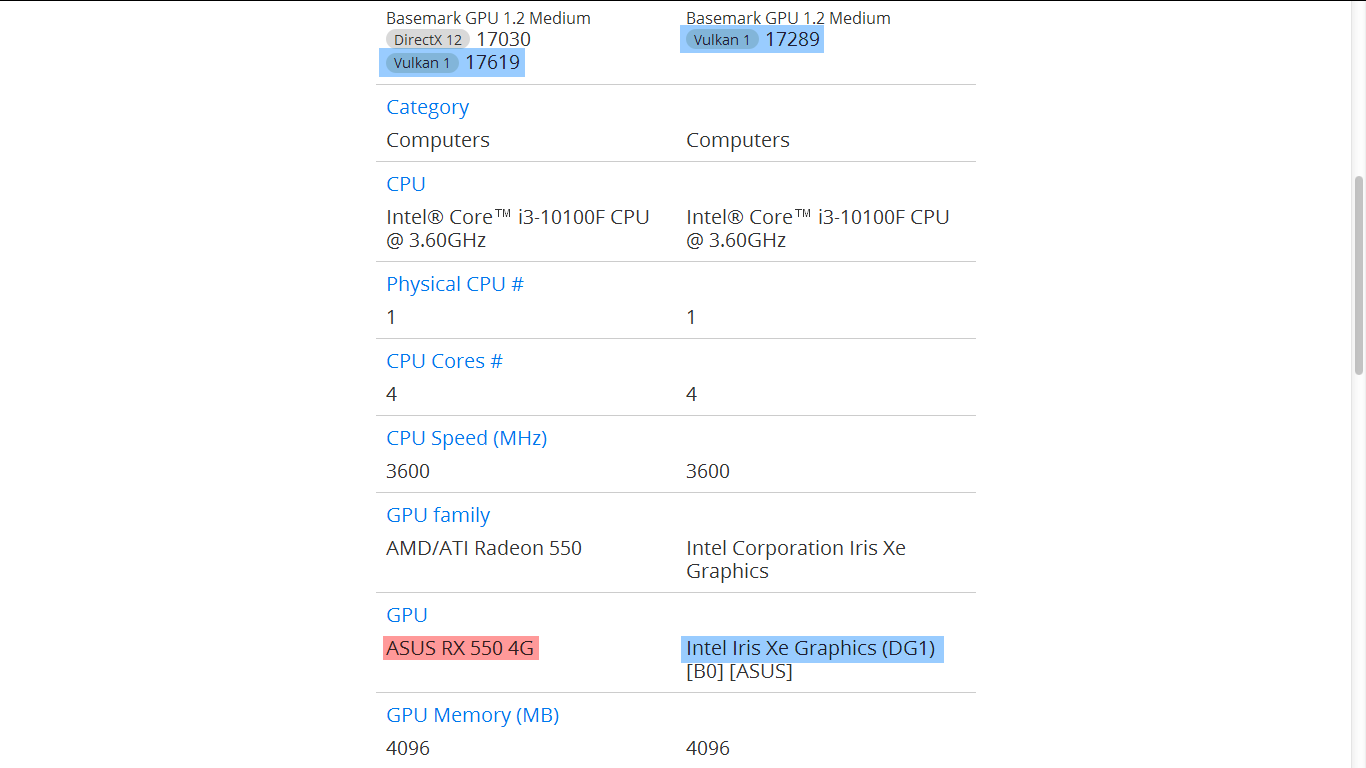

Both systems from the Basemark GPU submissions were based on the same processor, the Intel Core i3-10100F. Therefore, the DG1 and Radeon RX 550 were on equal footing as far as the processor is concerned. Let's not forget that the DG1 is picky when it comes to platforms. The graphics card is only compatible with 9th and 10th Generation Core processors and B460, H410, B365 and H310C motherboards. Even then, special firmware is necessary to get the DG1 working.

The DG1 puts up a Vulkan score of 17,289 points, while the Radeon RX 550 scored 17,619 points. Therefore, the Radeon RX 550 was about 1.9% faster than the DG1. Of course, this is just one benchmark, so it's too soon to declare a definitive winner without more thorough testing.
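For reference, the 1.9% gap is the score difference divided by the DG1's score. The sketch below also divides each Vulkan score by the cards' rated power (30W and 50W) for a rough points-per-watt comparison. Rated board power is not the same as measured draw during the run, and these numbers rest on a single benchmark result, so the efficiency figures are only indicative.

```python
dg1_score, rx550_score = 17_289, 17_619  # Basemark GPU Vulkan scores
dg1_watts, rx550_watts = 30, 50          # rated board power, not measured draw


def percent_faster(a: float, b: float) -> float:
    """How much higher score a is than score b, in percent."""
    return (a - b) / b * 100


print(f"RX 550 lead: {percent_faster(rx550_score, dg1_score):.1f}%")    # 1.9%
print(f"DG1 points per rated watt:    {dg1_score / dg1_watts:.0f}")     # 576
print(f"RX 550 points per rated watt: {rx550_score / rx550_watts:.0f}")  # 352
```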


Intel never intended for the DG1 to be a strong performer, but rather an entry-level graphics card that can hang with the competition. Thus far, the DG1 seems to trade blows with the Radeon RX 550.

Zhiye Liu is a news editor, memory reviewer, and SSD tester at Tom’s Hardware. Although he loves everything that’s hardware, he has a soft spot for CPUs, GPUs, and RAM.

-

Kamen Rider Blade WTF is Intel doing, releasing a new (GPU/Video Card) that loses to a 4 year old card on 14 nm while DG-1 is on the more advanced 10nm process.

Makes you wonder if poaching Raja Koduri was really worth it?

-

watzupken Apart from their integrated Xe graphics solution, so far the dedicated graphics side of things is not looking good. With Raja beating around the bush, showing chips but not performance, it just reminds me of the same trend when AMD revealed Vega.

I suspect Raja and/or Intel may have underestimated the performance of the current generation of AMD and Nvidia cards. And so, I don't think even the top-end Xe card for the consumer market will be competitive in terms of performance. Just my guess.

-

hannibal This is actually better than I was expecting. This is fine for office usage and similar!

-

InvalidError

Kamen Rider Blade said: WTF is Intel doing, releasing a new (GPU/Video Card) that loses to a 4 year old card on 14 nm while DG-1 is on the more advanced 10nm process.

The DG1 was primarily released as a development tool and OEM special. The board does not even have its own BIOS, so you need a motherboard with a BIOS build that has baked-in DG1 support if you want to use it at boot.

DG1 is not intended for sale to consumers; it is basically a high-functioning prototype.

-

cryoburner This level of performance shouldn't be at all surprising. Intel's new UHD 750 graphics found in their Rocket Lake CPUs might be an improvement over UHD 630, but they still apparently offer less than half the performance of the Vega 11 integrated graphics found in AMD's quad-core Ryzen APUs from the last few years. UHD 750 utilizes 32 EUs, while this dedicated card only increases that to 80, without increasing clock rates much and only using LPDDR4 for VRAM. So performance shouldn't be much better than Vega 11, or roughly in the ballpark of an entry-level RX 550 or GT 1030 from several years back. It sounds like the consumer-facing cards might go up to 512 EUs, though, and will likely utilize GDDR6, which should get them at least into the mid-range, if not higher.

-

Co BIY If the cards have the ability to share workload with the IGP on the processor, that should give them a boost too.

Very few high-end GPUs are sold, so Intel may only be targeting the fattest part of the market, and would probably be wise to do so.

Kamen Rider Blade said: WTF is Intel doing, releasing a new (GPU/Video Card) that loses to a 4 year old card on 14 nm while DG-1 is on the more advanced 10nm process.

In the current market, Nvidia is re-releasing two-year-old cards, and they will probably sell well.

-

TechyInAZ InvalidError said: The DG1 was primarily released as a development tool and OEM special. The board does not even have its own BIOS so you need a motherboard with a BIOS build that has baked-in DG1 support if you want to use it at boot.

DG1 is not intended for sale to consumers, it is basically a high-functioning prototype.

This ^^^^^^ To be honest, I'm very surprised to see DG1 in a consumer build at all. DG2 is where the magic is supposedly going to be for Intel.

-

rtoaht Admin said: The Intel DG1 gets in the ring with AMD's Radeon RX 550 on the Basemark GPU benchmark.


Except this is not a card for consumers, just a software development tool.

-

cyrusfox So many words saying so little... basic point:

50W old gen card vs new Intel IGPU 30W,

The Intel DG1 puts up a Vulkan score of 17,289 points

Radeon RX 550 scored 17,619 points

Radeon card slightly faster at 67% more power consumption...

This is Intel's showing with first-year drivers. It's all about efficiency here; if it is good, it should scale well.

I would buy one if they didn't lock the BIOS down. Make it work on my Z490 board and I would happily see if this could replace my GT 1030.

-

cryoburner

Co BIY said: If the cards have the ability to share workload with the IGP on the processor that should give them a boost too.

For an extreme low-end card like this 80 EU DG1, that could potentially help performance a bit. However, it's unlikely to provide tangible benefits to a mid-range gaming card, or even fairly low-end gaming cards. Again, the performance provided by UHD 750 is less than half that of AMD's few-year-old integrated graphics found in their APUs, or a GT 1030 or RX 550. And even that graphics hardware was super-low-end when it launched years ago, only offering around a quarter of the performance of something like a GTX 1060 6GB or RX 580 (or the newer and slightly faster 1650 SUPER and 5500 XT for that matter).

So even late-2019 lower-end gaming cards positioned at a $160-$170 MSRP offer performance that is somewhere close to 10 times that of Rocket Lake's integrated graphics. This means that, in a best-case scenario, the integrated graphics might theoretically be able to boost the performance of a card at that level by up to 10% or so, or even less for mid-range to high-end cards. However, even that's not likely to happen, since the integrated graphics won't have direct access to the data and framebuffer in the video card's VRAM. So using them together would most likely not help performance to any perceptible degree in games, and would probably be more likely to just make performance less stable, if anything.

AMD actually tried something like that a number of years back in the pre-Ryzen days, but it only benefited performance at all when paired with a limited number of very low-end graphics cards, and often made performance worse.