LattePanda Announces Sigma, a 'Hackable Single Board Server'

Raptor Lake CPU, Intel Iris Xe GPU and 16GB of LPDDR5

The LattePanda Sigma, launched today by the LattePanda team from $579, improves upon the power of the LattePanda 3 Delta with a Raptor Lake-based CPU, 16GB of LPDDR5, and an Intel Iris Xe GPU. All of this makes it a much more powerful machine than the LattePanda 3 Delta and the Raspberry Pi 4.

The LattePanda 3 Delta was already an impressive board, much more powerful than a Raspberry Pi 4. However, the specs for the Sigma blow the Delta firmly out of the water.

The choice of a 13th-Gen Intel Raptor Lake Core i5-1340P (12 cores, 16 threads) is a big upgrade from the quad-core Intel Celeron N5105 found in the LattePanda 3 Delta. The GPU also sees an upgrade: an Intel Iris Xe GPU with 80 execution units running at up to 1.45 GHz, compared to the Delta's now rather pedestrian Intel UHD GPU running between 450 and 800 MHz.

The Sigma's RAM upgrade is also welcome. While the 8GB of LPDDR4 RAM afforded by the Delta was enough for most tasks, 16GB of LPDDR5 running at 6400 MHz should give a big performance boost across all projects.
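For a rough sense of what the memory upgrade means for the iGPU, peak theoretical bandwidth is simply the transfer rate multiplied by the bus width. The sketch below is back-of-the-envelope arithmetic, not a benchmark: it assumes the quoted "6400 MHz" is the usual shorthand for 6,400 MT/s, that "dual-channel" means a 128-bit total memory bus, and it uses a conventional dual-channel DDR4-3200 setup as the comparison point.

```python
def peak_bandwidth_gbs(transfer_rate_mts: float, bus_width_bits: int) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    transfer_rate_mts: millions of transfers per second (e.g. 6400 for LPDDR5-6400)
    bus_width_bits: total data bus width across all channels (assumed 128 here)
    """
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mts * bytes_per_transfer / 1000  # MB/s -> GB/s


# Assumed configurations: both treated as a 128-bit total bus.
lpddr5_6400 = peak_bandwidth_gbs(6400, 128)
ddr4_3200 = peak_bandwidth_gbs(3200, 128)

print(f"LPDDR5-6400: {lpddr5_6400:.1f} GB/s")
print(f"DDR4-3200:   {ddr4_3200:.1f} GB/s")
print(f"Ratio:       {lpddr5_6400 / ddr4_3200:.1f}x")
```

Under these assumptions that is 102.4 GB/s versus 51.2 GB/s, a 2x difference on paper; sustained real-world bandwidth will be lower for both.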

What the two share, though, is an onboard Arduino Leonardo-compatible co-processor, which this time sits along the short edge of the board. In our review of the LattePanda 3 Delta, we loved the easy GPIO access, and using the Arduino IDE and Python firmware, we quickly created projects. The Sigma appears to share the same pin arrangement, and while it isn't a true Arduino Uno pinout, it is functional.

| CPU | Intel Core i5-1340P (12 Core, 16 Thread. 12MB Cache. 4.6 GHz P-Core, 3.4 GHz E-Core |

| RAM | 16GB Dual-Channel LPDDR5 6400MHz |

| GPU | Intel Iris Xe Graphics, 80 Execution Units, up to 1.45 GHZ |

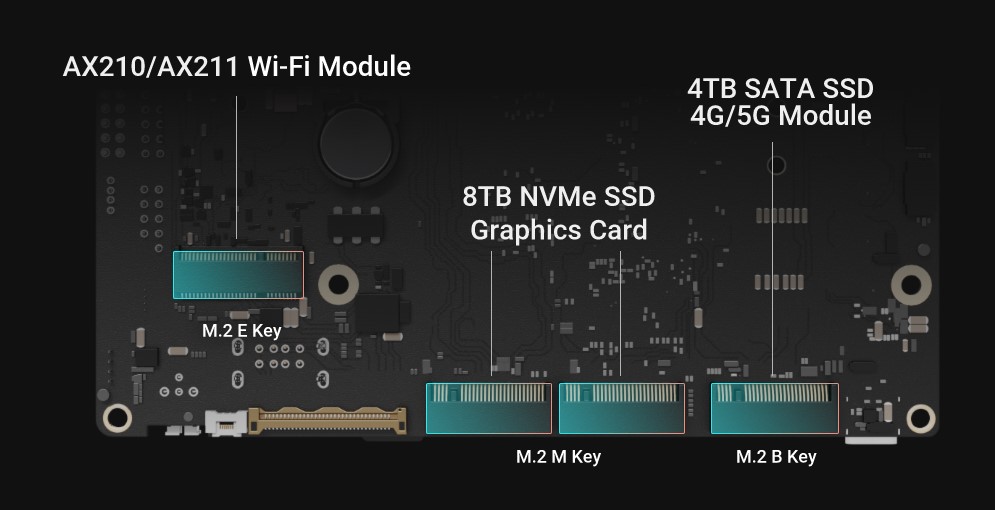

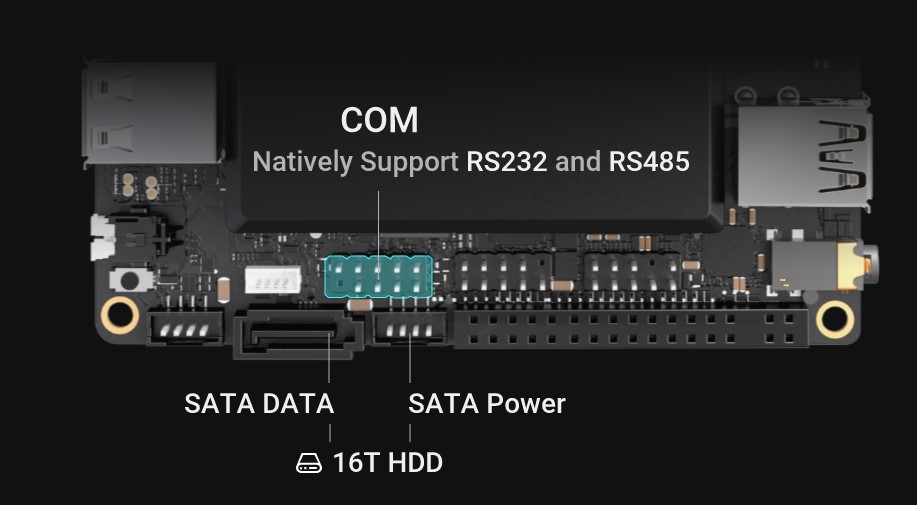

| Storage | M.2 NVMe / SATA SSD |

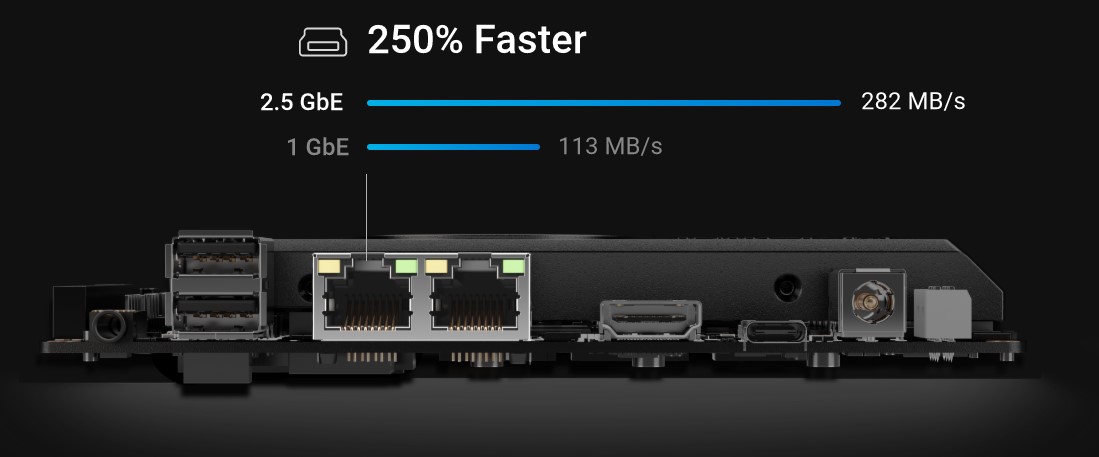

| Networking | 2 x 2.5GBe (Intel i225-V) |

| Ports | 2 x USB 2.0, 2 x USB 3.2, 2 x Thunderbolt 4 / USB C |

| Display | 1 x HDMI 2.1 up to 4096 x2304 @ 60Hz; |

| Row 7 - Cell 0 | DisplayPort via USB C up to 7680 x 4320 @ 60Hz |

| Row 8 - Cell 0 | eDP1.4b,up to 4096 x 2304 @120Hz |

| Expansion Slots | M.2 M Key: PCle 3.0x4, M.2 M Key: PCle 4.0x4 |

| Row 10 - Cell 0 | M.2 B Key: SATA/PCle 3.0 |

| Row 11 - Cell 0 | USB Headers |

| Co-Processor | Arduino Leonardo |

| Operating System | Windows 11 / 10, Ubuntu 22.04 |

| Dimensions | 146 x 102mm |

The LattePanda Sigma is pitched as a "Hackable Single Board Server with Mighty Power," but that doesn't restrict it to just sitting in an office. The onboard Arduino Leonardo provides a GPIO interface that the host accesses over a USB serial connection. With some Arduino and Python code and a USB camera, building a powerful machine learning or computer vision project should be straightforward. Sure, the Sigma is larger than a Raspberry Pi 4, but it could easily be integrated into a robotics project. The CPU / GPU combo is potent and could serve as a low-power desktop machine driving four 4K displays simultaneously. To keep it cool, a combined heatsink and fan assembly dominates the top of the board, taking up more space than the LattePanda 3 Delta's cooling solution.
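Because the Leonardo appears on the host as a USB serial device, the Python side of such a project mostly comes down to writing commands to a port and reading replies. The sketch below assumes a hypothetical line-based protocol (`SET <pin> <0|1>`, answered by a line starting with `OK` or `ERR`) that you would implement yourself in the Leonardo's Arduino sketch; it is not a LattePanda or DFRobot API. The `port` argument is any object with `write()` and `readline()` methods, such as a pyserial `serial.Serial` instance on real hardware; here the hypothetical `FakePort` class stands in so the logic can be tried without a board.

```python
import io


def encode_set_command(pin: int, high: bool) -> bytes:
    """Build one newline-terminated command for the hypothetical SET protocol."""
    if pin < 0:
        raise ValueError(f"pin must be non-negative, got {pin}")
    return f"SET {pin} {1 if high else 0}\n".encode("ascii")


def parse_reply(line: bytes) -> tuple[str, str]:
    """Split a reply line like 'OK 13 1' into its status and detail, e.g. ('OK', '13 1')."""
    text = line.decode("ascii").strip()
    status, _, detail = text.partition(" ")
    return status, detail


def set_pin(port, pin: int, high: bool) -> tuple[str, str]:
    """Send a SET command, then read and parse a single reply line."""
    port.write(encode_set_command(pin, high))
    return parse_reply(port.readline())


class FakePort:
    """Hypothetical in-memory stand-in for a serial port: records writes, replays canned replies."""

    def __init__(self, canned_replies: bytes):
        self.sent = []
        self._replies = io.BytesIO(canned_replies)

    def write(self, data: bytes) -> int:
        self.sent.append(data)
        return len(data)

    def readline(self) -> bytes:
        return self._replies.readline()


port = FakePort(b"OK 13 1\n")
print(set_pin(port, 13, True))  # ('OK', '13 1')
print(port.sent)                # [b'SET 13 1\n']
```

Keeping the protocol to plain newline-terminated text makes it easy to debug from the Arduino IDE's serial monitor before any Python is involved.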

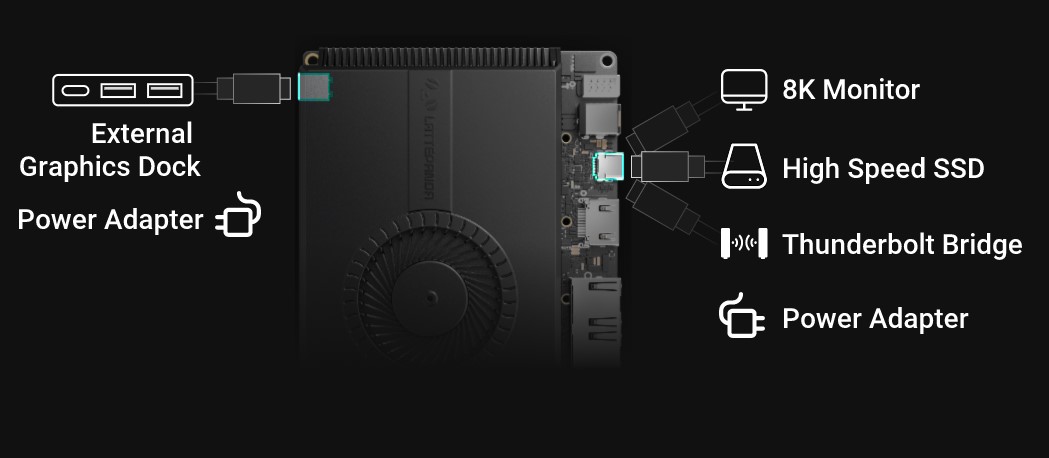

The dual Thunderbolt 4 ports are an enticing proposition, potentially offering fast data transfers and support for external GPUs. Gaming is possible on the Intel Iris Xe GPU; heck, we managed a competent session of Call of Duty: Modern Warfare on the LattePanda 3 Delta, so it should be a cakewalk for the Sigma.

The LattePanda Sigma is available now direct from DFRobot, starting at $579 for a base unit, or $648 with a 500GB SSD and Wi-Fi 6E module.


Les Pounder is an associate editor at Tom's Hardware. He is a creative technologist and for seven years has created projects to educate and inspire minds both young and old. He has worked with the Raspberry Pi Foundation to write and deliver their teacher training program "Picademy".

-

bit_user

This is so much more expensive and power-consuming that it really doesn't make any sense to compare it to a Raspberry Pi 4, IMO.

If you're in the market for a Pi 4-class machine, and you end up buying one of these, then all it says is that a Pi 4 really isn't what you wanted in the first place.

abufrejoval

While I'd love to test its graphics performance specifically, I don't think I would want to own one of these.

RAM expandability is too essential for me: I want at least 64GB on a box this size, which is why I currently use NUCs. Yes, twice the DRAM bandwidth should help with iGPU performance, but that's why I'd actually like to see some benchmarks there, against a DDR4-3200 box using a similar Xe iGPU.

Actually, I now have various 96 and 80 EU Xe iGPUs on Tiger Lake and Alder Lake, but all with DDR4-3200. The difference between 80 and 96 EUs is too small to actually notice: none of these are fit for gaming.

Bandwidth is typically the biggest constraint on iGPU performance, but are the Xe actually capable of taking advantage of twice the "typical design bandwidth"?

If you can get hold of one, please let us know! It's the one area from which I expect AMD's 780M to obtain its iGPU might and justify its DDR5 dependency for the generational uplift.

For general-purpose computing, the CPU's TDP will be too much of a constraint to take advantage of the bandwidth, so I don't see this as a "server"-oriented design at all, unless LPDDR5 also used significantly less power than DDR4 SO-DIMMs: a Watt or two, I would guess, but I wouldn't sacrifice expandability for that.

Lack of 10Gbit Ethernet is a bummer. 2.5Gbit/s is certainly better than the really truly terrible Gbit, which has been with us far too long. There are Aquantia/Marvell AQC113 chips out there which offer 10Gbit on a single PCIe v4 lane, so why is nobody using them (except Intel's NUC12 Extreme and some really high-end mainboards)?

Are Intel back-room dealings to blame? That seemed the major obstacle until Intel finally managed to produce 2.5 Gbit NICs as well: RealTek had them for a long time by then...
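To put the Gbit vs 2.5Gbit vs 10Gbit point in concrete terms, an idealized transfer time is just size divided by line rate. This is a sketch with assumed numbers (a 100 GB transfer, decimal units) that ignores Ethernet/IP/TCP overhead and storage bottlenecks, so real copies will take longer.

```python
def transfer_seconds(size_gb: float, link_gbit_s: float) -> float:
    """Idealized time to move size_gb gigabytes over a link of link_gbit_s Gbit/s.

    Uses decimal units (1 GB = 8 Gbit) and ignores protocol overhead and disk limits.
    """
    return size_gb * 8 / link_gbit_s


for speed in (1.0, 2.5, 10.0):
    seconds = transfer_seconds(100, speed)
    print(f"{speed:>4} Gbit/s: {seconds:5.0f} s ({seconds / 60:.1f} min) for 100 GB")
```

On these assumptions that is about 13 minutes at 1 Gbit/s, a little over 5 minutes at 2.5 Gbit/s, and under 2 minutes at 10 Gbit/s.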

bit_user

> abufrejoval said: There are Aquantia/Marvell chips out there which offer 10Gbit on a single PCIe v4 lane, so why is nobody using them? Are Intel back-room dealings to blame? That seemed the major obstacle until Intel finally managed to produce 2.5 Gbit NICs as well: RealTek had them for a long time by then...

I think 10 Gigabit Ethernet MACs were hard-hit by pandemic-related supply/demand issues. I've seen a lot of 10 Gigabit products either in short supply or with significantly elevated pricing. The situation finally seems to be recovering, but it's taken a long time to sort out.

abufrejoval

> bit_user said: I think 10 Gigabit Ethernet MACs were hard-hit by pandemic-related supply/demand issues. I've seen a lot of 10 Gigabit products either in short supply or with significantly elevated pricing. The situation finally seems to be recovering, but it's taken a long time to sort out.

It's getting harder and harder to believe that.

The AQC107 has been out for years, and availability didn't suffer through the pandemic: I got a steady supply of PCIe x4 cards and the Thunderbolt variants before and throughout without price hikes. The AQC108 (1-5Gbit/s) was a little less popular, because it really required NBase-T-capable switches to support 5Gbit/s, when a lot of switches and PHYs only supported 100/1000/10000 Mbit, but not 2500 or 5000.

But again, that stopped being an issue eight years ago, mostly because Aquantia co-developed the NIC and switch silicon and supplied Netgear and others for cheap 8-port NBase-T switches. Admittedly, I didn't try to buy switches during the pandemic, as I had enough to last me throughout and only added AQC107 and 2.5Gbit clients, but unlike the data center equipment, I didn't see them becoming unavailable.

The AQC113 offers 10Gbit using only a single PCIe v4 lane and was very slow ramping up. It might have suffered from Marvell's acquisition of Aquantia, but that doesn't quite explain why the only AQC113 plug-in NICs available are PCIe x2 variants, a form factor that seems little more popular than PCIe x12 or x32, which are part of the official standard.

The AQC10x family seems to remain available, and the AQC107 covers both PCIe v2 and v3 needs quite nicely with the far more prevalent x4 form factor, so why are all available AQC113 NICs in the x2 form factor, when nearly nobody offers x2 slots and the far "sexier" 10Gbit speed only requires a single v4 lane?

Last time I looked, the highest-end Intel and AMD mainboards in the near-€1000 range even had AQC113 chips (using a single lane) on-board, as well as one or two (closed-end!) x1 slots, but the x1 plug-in NIC variant was nowhere to be had, while x4 v5 slots were clearly much too dear and overkill for 10Gbit Ethernet.

Did I mention that I hate closed-end PCIe slots? They were terrible in the old days, when a PCIe x4 slot was often an unused (and unusable) surplus, while using the second x16 slot, e.g. for an x8 RAID controller, meant bifurcating the GPU slot.

But they aren't any better now: the AQC113, even as an x2 card, would work perfectly in an open-ended x1 v4 slot, but these don't exist. Cutting them open on a brand-new mainboard is no fun (I've done that on those x4 slots), and even the x1 slots are disappearing altogether (perhaps x1 NICs are finally coming?).

No, it would seem that really nobody wants to offer commodity 10Gbit on desktops at a bargain, and it can't be the availability of the chips, because they are sold on-board and as plug-in cards, just not in the single most sensible form factor of PCIe v4 x1.
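The lane-count argument in this comment can be sanity-checked with rough arithmetic: usable PCIe bandwidth per lane is the raw transfer rate times the line-encoding efficiency (8b/10b for v2, 128b/130b for v3 and v4). The sketch below uses those per-lane rates but ignores packet and protocol overhead, so it overstates real throughput somewhat; treat it as a paper check, not a guarantee that a given card sustains 10Gbit/s.

```python
# Per-lane raw rate (GT/s) and line-encoding efficiency for each PCIe generation.
PCIE_LANE = {
    2: (5.0, 8 / 10),       # PCIe 2.0: 8b/10b encoding
    3: (8.0, 128 / 130),    # PCIe 3.0: 128b/130b encoding
    4: (16.0, 128 / 130),   # PCIe 4.0: 128b/130b encoding
}


def link_gbit_s(gen: int, lanes: int) -> float:
    """Approximate one-direction link bandwidth after line encoding, before packet overhead."""
    raw_gt_s, efficiency = PCIE_LANE[gen]
    return raw_gt_s * efficiency * lanes


def min_lanes(gen: int, target_gbit_s: float, widths=(1, 2, 4, 8, 16)):
    """Smallest standard link width whose encoded bandwidth meets the target, or None."""
    for lanes in widths:
        if link_gbit_s(gen, lanes) >= target_gbit_s:
            return lanes
    return None


for gen in (2, 3, 4):
    lanes = min_lanes(gen, 10.0)
    print(f"PCIe {gen}.0: x{lanes} gives ~{link_gbit_s(gen, lanes):.2f} Gbit/s for 10GbE")
```

On these assumptions, 10GbE needs x4 on PCIe 2.0, x2 on 3.0, and x1 on 4.0, which lines up with the x4 AQC107 cards and the single v4 lane discussed above; it also shows that an x2 link is, on paper, enough for 10Gbit even on a v3 host.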

bit_user

> abufrejoval said: It's getting harder and harder to believe that.

Just look at what's happened to pricing of 10 Gigabit switches, for a datapoint that has almost nothing to do with the PC market. Last I checked (a few months ago), they still cost almost double the pre-pandemic prices.

> abufrejoval said: The AQC107 has been out for years and availability didn't suffer through the pandemic as I got a steady supply of PCIe x4 cards and the Thunderbolt variants before and throughout without price hikes.

The same can't be said for Intel and Broadcom. It's not like AQC107 is a drop-in replacement for them, so I think a lot of manufacturers tried to wait it out.

There was a motherboard with 2x 10 Gigabit MACs I was trying to buy that was unavailable for about a year. They even made a variant that swapped out Intel for Broadcom, but availability of that one was little better.

I have a separate datapoint on 10 Gigabit Intel NICs, which has suffered substantial price increases & availability problems.

https://pcpartpicker.com/product/cpndnQ/intel-x550-t2-network-adapter-x550-t2?history_days=730

abufrejoval

> bit_user said: Just look at what's happened to pricing of 10 Gigabit switches, for a datapoint that has almost nothing to do with the PC market. Last I checked (a few months ago), they still cost almost double the pre-pandemic prices.

Geizhals.de confirmed no price hikes on the Netgear ProSAFE XS500M during the pandemic; if anything, it became cheaper (have a look at the MAX data, which goes back to 2017). Prices fell below €50/port, which was unbelievable during the decade before.

> The same can't be said for Intel and Broadcom. It's not like AQC107 is a drop-in replacement for them, so I think a lot of manufacturers tried to wait it out.
>
> There was a motherboard with 2x 10 Gigabit MACs I was trying to buy that was unavailable for about a year. They even made a variant that swapped out Intel for Broadcom, but availability of that one was little better.
>
> I have a separate datapoint on 10 Gigabit Intel NICs, which has suffered substantial price increases & availability problems.
>
> https://pcpartpicker.com/product/cpndnQ/intel-x550-t2-network-adapter-x550-t2?history_days=730

Same with the AQC107 in the very popular ASUS XG-C100C incarnation, cheaper during the pandemic than before or after with €80/port: again the Geizhals price data goes back to 2017.

10Gbit Ethernet was strictly considered enterprise gear and became a battle zone during the first two decades after its specification: it threatened to replace nice fat FC revenues with FCoE, and vendors were going for fat offload ASICs that could also do really cool VM and network virtualization things. Of course, everybody wanted to sell fiber links and GBICs, so 10GBase-T was treated like a pariah. It didn't help that it originally cost nearly 10 Watts per port to do the modulation made necessary by a medium that couldn't physically support higher frequencies.

The results were costly functional monsters with buggy drivers, and VMware eventually gave up and put all their overlay network and software switch logic in software, because it only cost 2-3% of CPU overhead but allowed rapid code changes, which ASICs didn't (today, at 100/400Gbit, they are reversing that again).

Many of the fabric vendors went bust. Intel survived relatively well with much less offload functionality, as it had never put that much faith in really fat ASICs. Instead, it prefers CPU-side offload engines on edge SoCs, which ASIC vendors obviously could not duplicate.

But with the hyperscalers, 10Gbit quickly became far too small, merchant silicon took over the switches at tens and hundreds of Gbit/s, and there was little money to be made at 10Gbit/s. Servers sell with DPUs these days that are 400Gbit/s, going on 800.

Aquantia, with NBase-T, tried to change the game by consumerizing >1Gbit Ethernet, reducing the power cost of modulation to around 3 Watts at 10Gbit/s, lower at 5Gbit/s, and almost to 1Gbit/s levels at 2.5Gbit/s. They also offered fully integrated chips for NICs and switches (cascadable 4-port designs), which changed the price game significantly and essentially made 10Gbit "consumer ready"... except that consumers evidently weren't buying, perhaps because the only network they cared about was the broadband connection, and East-West traffic was never an issue.

Nobody at Microsoft or Intel was really interested in better office LANs, so somehow faster-than-Gbit LAN never made it to the workplace, either.

Intel and the big ASIC manufacturers refused for ages to do anything NBase-T, because they wanted to sell high-revenue products. When Intel finally did 2.5Gbit/s, they must have put interns on the job, because they did it badly and buggily, while RealTek was, well, RealTek: its 2.5Gbit no better or worse than its 1Gbit.

What Intel is still selling at 10Gbit is mostly chips they designed 15 years ago and might still make at 90nm somewhere. They just can't compete with Aquantia in power efficiency, and Broadcom is even worse: their chips needed either active cooling or server-chassis airflow to survive when I last tried them, more than a decade ago, and I doubt they have redesigned or shrunk their 10Gbit ASICs since then.

They are also mostly dual-port chips by design, requiring twice the PCIe lane allocation of a single-port chip, because that's what everybody wants in servers but doesn't care for in consumer hardware. Not that I've ever had a single Aquantia card fail on me: much lower power consumption evidently helps.

No, they are not drop-in replacements. But at least these days (since they went into the upstream kernel some years ago) they work much better out of the box with just about every Linux I've tried, while 2.5-10Gbit NIC drivers from Intel have become a true nightmare with hundreds of device IDs for dozens of chipsets and revisions and at least a handful of distinct drivers.

Aquantias support nothing fancy in terms of offloading, iSCSI or FCoE; they are basically 1Gbit technology scaled to NBase-T: exactly what I want for my home lab, and now only on an x1 PCIe v4 slot (or a 20Gbit USB port).

bit_user

> abufrejoval said: What Intel is still selling at 10Gbit is mostly chips they designed 15 years ago and might still make at 90nm somewhere. They just can't compete in power efficiency to Aquantia

No, I don't think so. The motherboard I wanted, and one of their more popular NICs, is based on the X550-AT2, which is 28 nm and launched in Q4 2015 (7.5 years ago). Its TDP is 11 W for 2 ports, and it supports multi-gigabit.

https://www.intel.com/content/www/us/en/products/sku/84329/intel-ethernet-controller-x550at2/specifications.html

I'm not trying to have a **** match between Intel and Aquantia, as much as you seem to be trying to bait me into one. I'm just pointing out what seemed to be in short supply for much of 2021 and 2022. Take it as a datapoint, if you will.