What's Inside Microsoft's HoloLens And How It Works

We have seen Microsoft’s HoloLens in action many times, enough to know that it works. But until now, we didn’t know exactly how it worked.

We knew that it was a self-contained augmented reality (or “mixed reality,” as Microsoft calls it) HMD. We knew it had fundamentally mobile hardware inside (an Intel SoC) that ran Windows 10, and that it had sensors and an inertial measurement unit (IMU). We also knew it had a “Holographic Processing Unit” (HPU), but without any details, Microsoft might as well have called it the “magic black box thingy.” Other than the fact that the optical system was projection-based, we were in the dark on that, too.

Finally, Microsoft has now revealed more details about HoloLens--what’s under the hood, what the system components are, and how it all works together.

At a presentation over the weekend, we got the goods. “HoloLens enables holographic computing natively,” began the presenter. “You don’t have to place any markers in the environment, no reliance on external cameras or other devices. No tethers. You don’t need a cell phone, you don’t need a laptop. All you need to interact with a fully built-in Windows 10 mobile computer is 'GGV'--gaze, gesture and voice.”

And then we got down to the nitty gritty details.

[Update: You can also read our follow-up piece, Microsoft HoloLens: HPU Architecture, Detailed.]

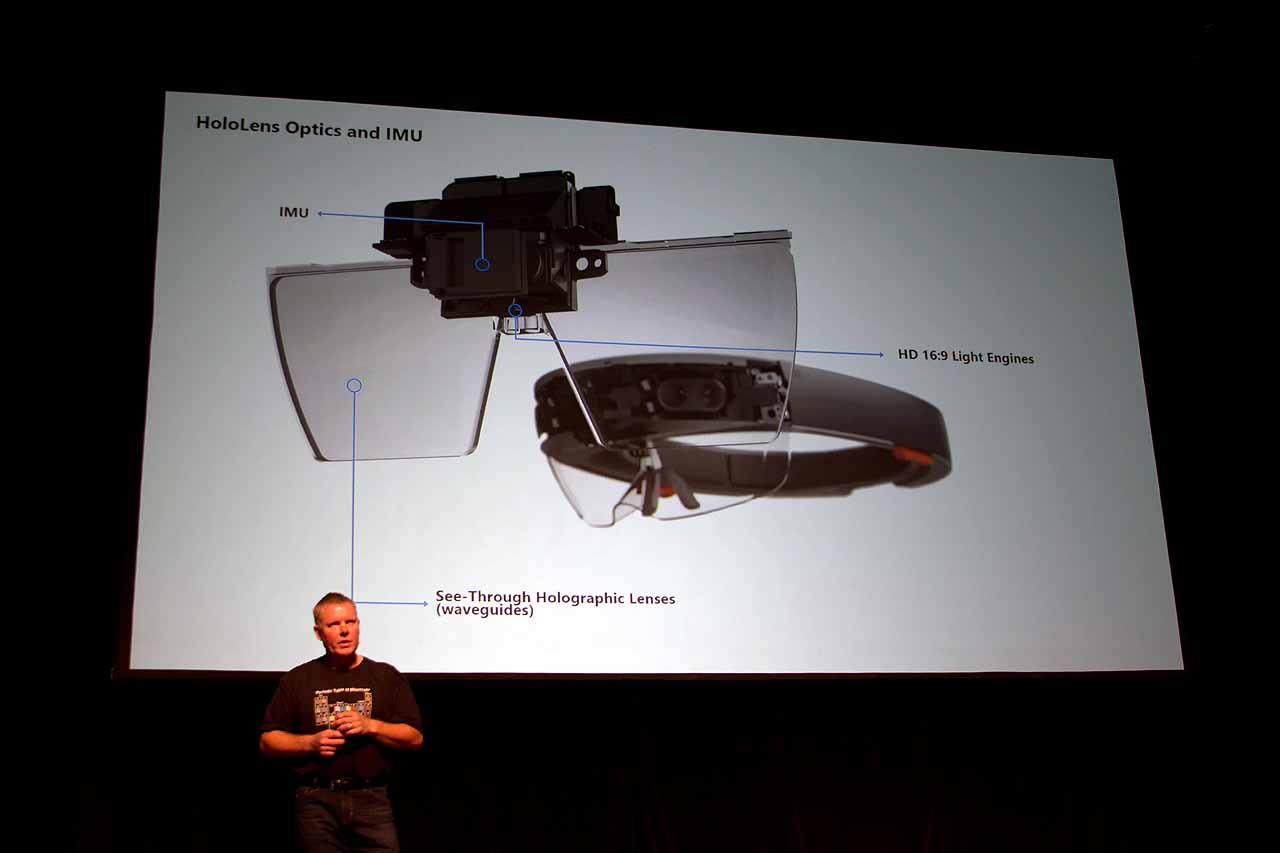

Optics

One of the biggest mysteries of the HoloLens has been the optics. Unlike VR headsets, which produce visuals on OLED displays situated right in front of your eyes and viewed through glass lenses, HoloLens is a passthrough device. That is, you see the real world through the device’s clear lenses, and images (holograms) are projected out in front of you, up to several meters away.

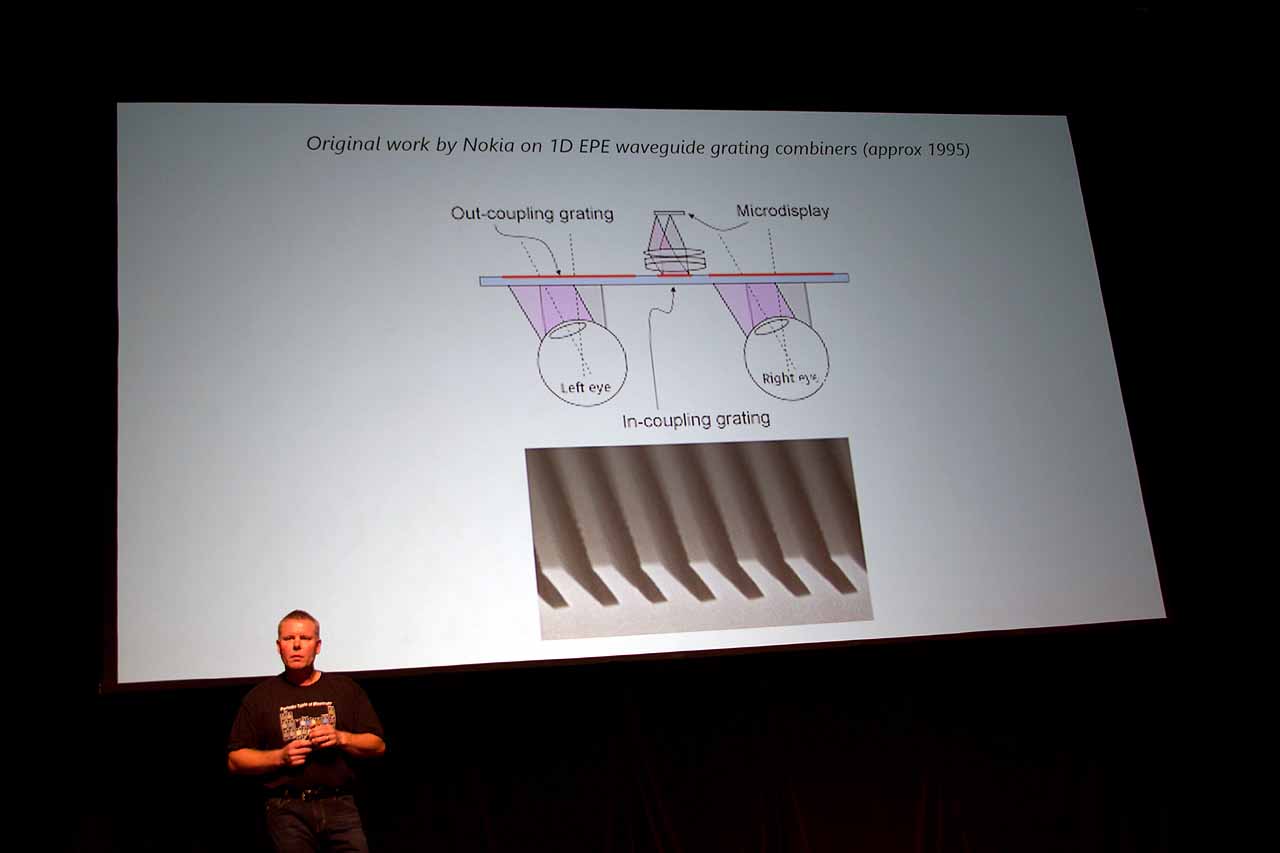

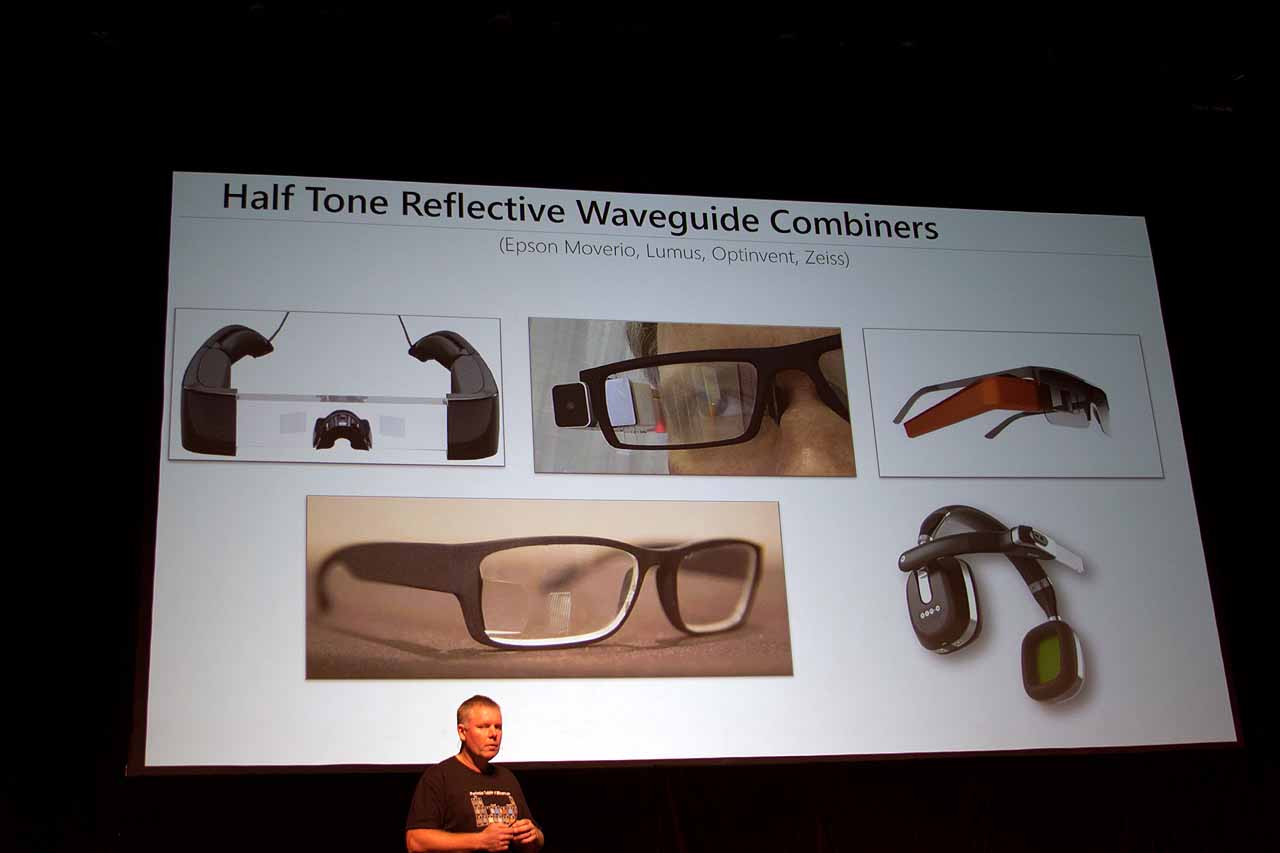

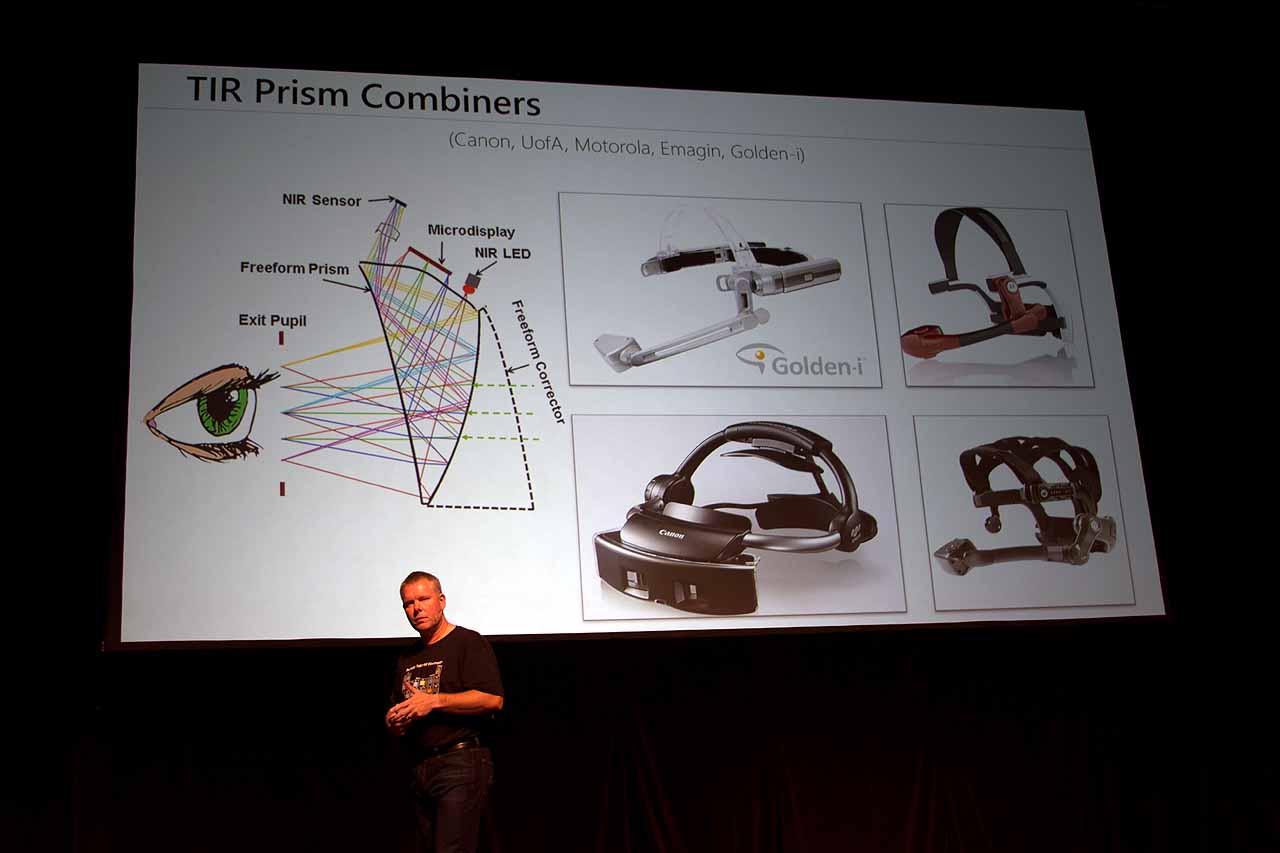

The components of the HoloLens’ optical system break down as follows: Microdisplay → imaging optics → waveguide → combiner → gratings (that perform exit pupil expansion).

That’s a lot of vocabulary words. Hang tight, we’ll explain.

Working backwards, we must understand “exit pupil” versus “entrance pupil.” In this case, the entrance pupil is your eye, and the exit pupil is the projection. The key to the whole display system working correctly is exit pupil expansion, using what’s called an “eye box.” In order for a device like HoloLens to work, you need a large and expandable eye box.

VR enthusiasts have certainly encountered the term interpupillary distance (IPD), which is, simply put, the distance between your pupils. This distance is different for everyone, and that’s problematic in VR and AR. You have to have a way to mechanically adjust for IPD, or the visuals don’t work very well. HoloLens has two-dimensional IPD, which means that you can adjust the eye box horizontally and vertically. Microsoft claimed that this capability gives HoloLens the largest eye box in the industry.

So that’s the goal. To get there, Microsoft started with what it calls “light engines,” or more simply, “projectors.” In the HoloLens, these are tiny liquid crystal on silicon (LCoS) displays, like you’d find in a regular projector. There are two HD 16:9 light engines mounted on the bridge of the lenses (under the IMU, which we’ll discuss later). These shoot out images, which must pass through a combiner; this is what combines the projected image and the real world.

HoloLens uses total internal reflection (TIR), which, depending on how you shape the prism, can bounce light internally or aim it at your eye. With IR, this can be leveraged for eye tracking. According to the presenter on stage, “The challenge doing it this way is that the volume gets large if you want to do a large FoV.” The solution Microsoft employed is to use waveguides in the lenses. He said it’s difficult to manufacture these in glass, so Microsoft applied a surface coating on them that allows them to create a series of diffraction gratings.
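For readers who want a feel for TIR, here is a minimal Python sketch of the textbook critical-angle calculation from Snell's law. It is not Microsoft's design math: the refractive index of 1.5 (typical of ordinary glass) and the function names are our own assumptions, since the presentation gave no material figures.

```python
import math


def critical_angle_deg(n_inside: float, n_outside: float = 1.0) -> float:
    """Critical angle, measured from the surface normal, in degrees.

    Light hitting the boundary at a steeper angle than this stays inside
    the denser medium (total internal reflection).
    """
    if n_inside <= n_outside:
        raise ValueError("TIR needs the inner medium to be optically denser")
    return math.degrees(math.asin(n_outside / n_inside))


def is_totally_reflected(angle_deg: float, n_inside: float,
                         n_outside: float = 1.0) -> bool:
    """True if a ray at angle_deg from the normal is trapped by TIR."""
    return angle_deg > critical_angle_deg(n_inside, n_outside)


if __name__ == "__main__":
    n_glass = 1.5  # assumed index, not a HoloLens specification
    print(round(critical_angle_deg(n_glass), 2))
    print(is_totally_reflected(60.0, n_glass))
    print(is_totally_reflected(30.0, n_glass))
```

With that assumed index, the critical angle comes out at roughly 41.8 degrees, so a ray striking the surface at 60 degrees from the normal keeps bouncing inside the glass, while one at 30 degrees escapes.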

It’s crucial, he noted, to get all of this right. Otherwise, the holograms will “swim” in your vision, and you’ll get nauseous.

He elaborated on how it all works: There’s a “display at the top going through the optics, coupled in through the diffraction grating, [and] it gets diffracted inside the waveguide.” Then it gets “out-coupled.” “How you shape these gratings will determine if you can do two-dimensional exit pupil expansion,” he added. You can use a few different types of gratings to make RGB color holograms. (If you’ve looked closely at a HoloLens HMD, you may have noticed these layered plates that form the holographic lenses.)
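To illustrate why grating shape matters, and why color may call for more than one grating, here is a rough Python sketch of the standard grating equation applied to light entering a waveguide. Every number in it (a 400nm grating pitch, a refractive index of 1.5, the three wavelengths) is a hypothetical value chosen for illustration; Microsoft did not share any of these figures.

```python
import math
from typing import Optional


def diffracted_angle_deg(wavelength_nm: float, pitch_nm: float,
                         n_guide: float, incidence_deg: float = 0.0,
                         order: int = 1) -> Optional[float]:
    """Angle (from the normal) of a diffracted order inside the waveguide.

    Uses the grating equation for light arriving from air:
        n_guide * sin(theta_out) = sin(theta_in) + order * wavelength / pitch
    Returns None when the order cannot propagate (it is evanescent).
    """
    s = (math.sin(math.radians(incidence_deg))
         + order * wavelength_nm / pitch_nm) / n_guide
    if abs(s) > 1.0:
        return None
    return math.degrees(math.asin(s))


def is_guided(wavelength_nm: float, pitch_nm: float, n_guide: float) -> bool:
    """True if the first order is steep enough to be trapped by TIR."""
    angle = diffracted_angle_deg(wavelength_nm, pitch_nm, n_guide)
    if angle is None:
        return False
    critical = math.degrees(math.asin(1.0 / n_guide))
    return abs(angle) > critical


if __name__ == "__main__":
    # Hypothetical blue, green and red wavelengths hitting one 400nm grating.
    for wavelength in (450.0, 532.0, 630.0):
        print(wavelength, is_guided(wavelength, pitch_nm=400.0, n_guide=1.5))
```

Under these made-up numbers, blue and green light are coupled into the waveguide but red is not, which hints at why a single grating struggles to carry all three colors and why different gratings, or stacked plates, could be needed for RGB.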

(Very) simply put: With a VR HMD, you essentially have two tiny monitors millimeters from your face, and you view them through glass lenses. By contrast, HoloLens spits out a projected image, which is then combined, diffracted and layered to produce images you can see in space.

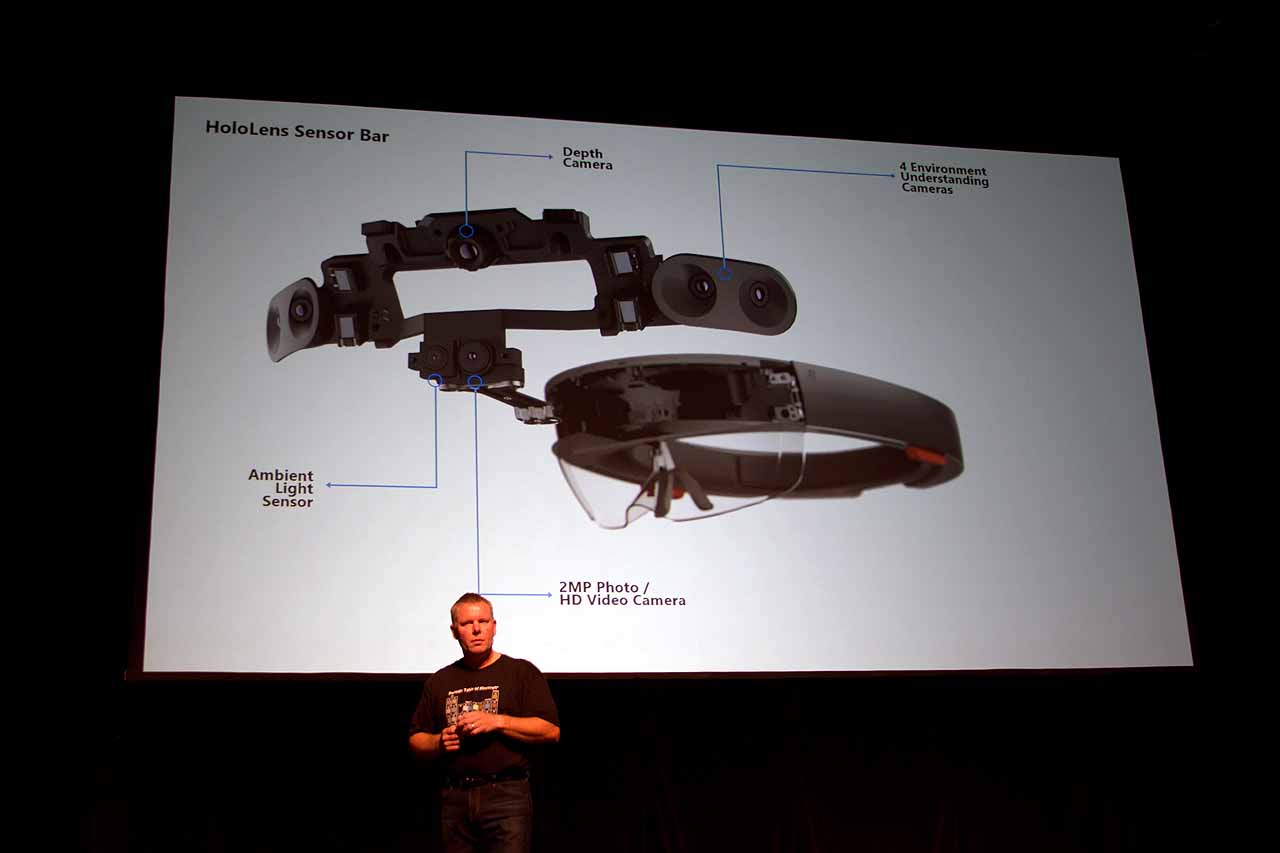

Sensors Sensing Sensibly

When it comes to XR (that is, any kind of virtual, augmented or mixed reality), sensors are paramount. Whether it’s head tracking, eye tracking, depth sensing, room mapping or what have you, the quality and even the speed of the sensors can make or break the XR experience.

Considering the difficulty of doing what we’ve seen HoloLens do, understanding what sensors are on the device has been a subject of much interest.

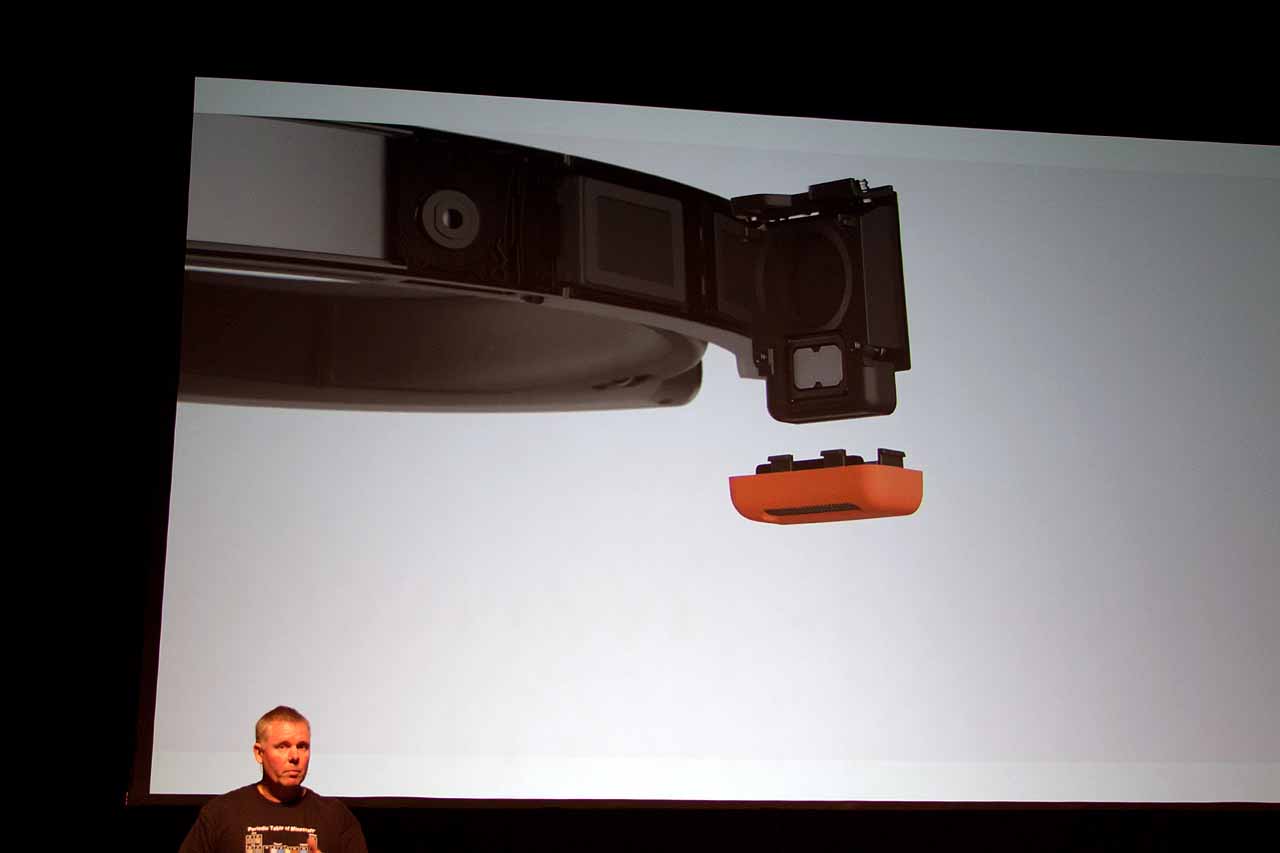

The sensor bar on the HoloLens comprises four “environment understanding cameras,” two on each side; a depth camera; an ambient light sensor; and a 2MP photo/HD video camera. Some of these are off-the-shelf parts, whereas Microsoft custom-built others.

The environmental sensing cameras provide the basis for head tracking, and the (custom) time of flight (ToF) depth camera serves two roles: It helps with hand tracking, and it also performs surface reconstruction, which is key to being able to place holograms on physical objects. (This is not a novel approach--it’s precisely what Intel is doing with its RealSense 400-series camera on Project Alloy.)
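The basic idea behind a time of flight camera can be sketched in a few lines of Python. This assumes the simplest possible model, a single light pulse timed out and back; Microsoft did not say how its custom sensor actually measures time, and many ToF cameras use phase-based methods instead. The function names are ours.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def distance_from_round_trip(round_trip_s: float) -> float:
    """Distance to a surface, given the out-and-back travel time of light."""
    # The light covers the distance twice, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Out-and-back travel time of light for a surface at distance_m."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    print(distance_from_round_trip(10e-9))   # a 10ns round trip
    print(round_trip_for_distance(1.0))      # a surface one meter away
```

A round trip of 10 nanoseconds corresponds to a surface about 1.5 meters away, which gives a sense of the timing precision needed to map a room at close range.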

These sensors work in concert with the optics module (described above) and the IMU, which is mounted on the holographic lenses, right above the bridge of your nose.

Said the presenter, “Environment cameras provide you with a fixed location in space and pose,” and the IMU is working fast, “so as you move your head around...you need to be able to feed your latest pose information into the display as quickly as possible.” He said that HoloLens can do all of this in <10ms, which, again, is key to preventing “swimming” and also to ensuring that holograms stay locked to their position in the real world space.
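To see why latency matters so much, consider this back-of-the-envelope Python sketch. It assumes a deliberately naive model with no pose prediction or correction, where the image simply lags your head by the full latency. The head speed of 100 degrees per second and the 2-meter hologram distance are our own illustrative figures, not Microsoft's.

```python
import math


def angular_error_deg(head_speed_deg_per_s: float, latency_s: float) -> float:
    """How far the head turns while one frame is still in flight."""
    return head_speed_deg_per_s * latency_s


def hologram_drift_m(head_speed_deg_per_s: float, latency_s: float,
                     hologram_distance_m: float) -> float:
    """Apparent sideways slip of a hologram at the given distance."""
    error_rad = math.radians(angular_error_deg(head_speed_deg_per_s, latency_s))
    return hologram_distance_m * math.tan(error_rad)


if __name__ == "__main__":
    # Assumed head turn of 100 degrees per second, hologram 2 meters away.
    for latency_ms in (10.0, 50.0):
        drift = hologram_drift_m(100.0, latency_ms / 1000.0, 2.0)
        print(latency_ms, round(drift * 100.0, 1), "cm")
```

In this simplified model, 10ms of latency already lets a hologram 2 meters away slip by about 3.5cm during a brisk head turn, and the slip grows roughly in proportion to the latency.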

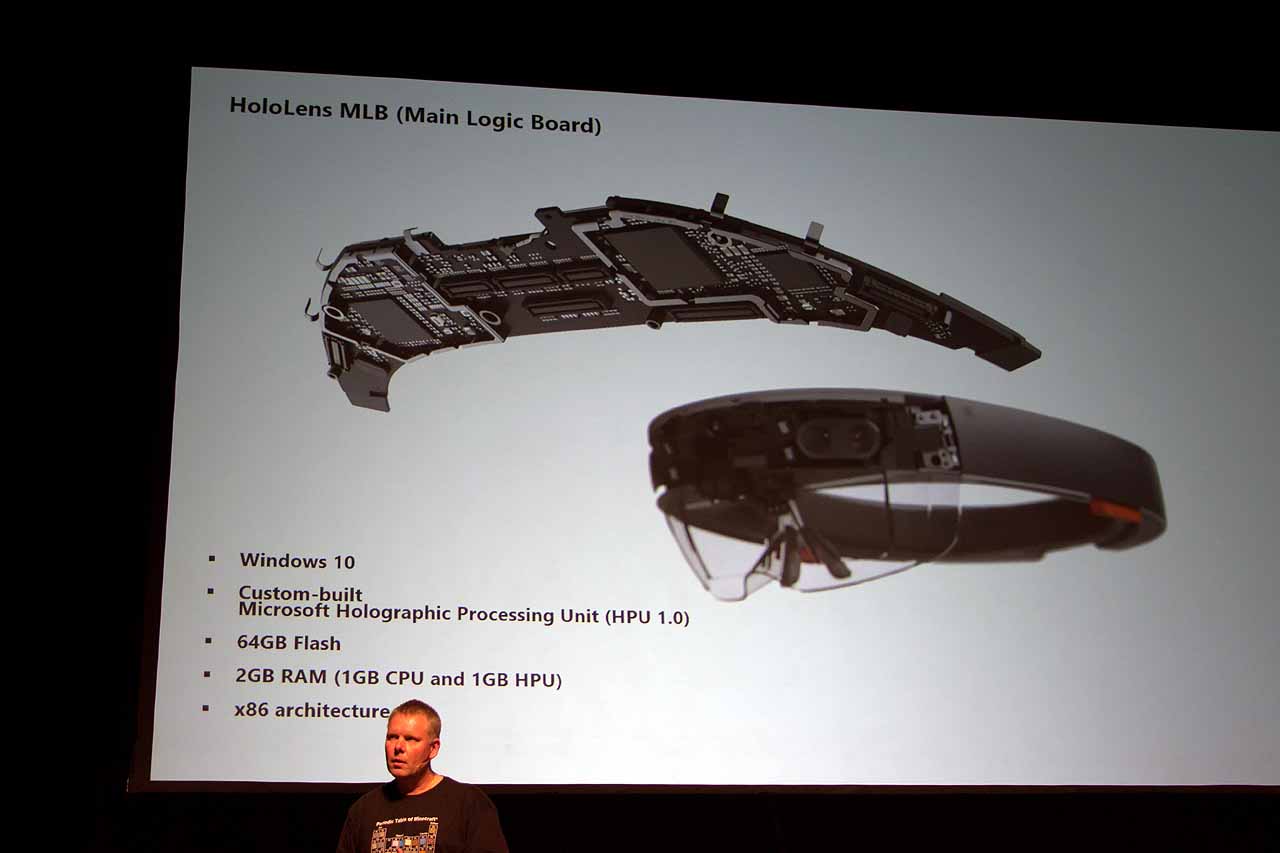

The Heart Of The System

The optics and sensor bar have been more mysterious than how the system works together as a whole. We had previously sussed out that HoloLens had an Intel Cherry Trail chip, an IMU and the Holographic Processing Unit (HPU), but we had no details until now.

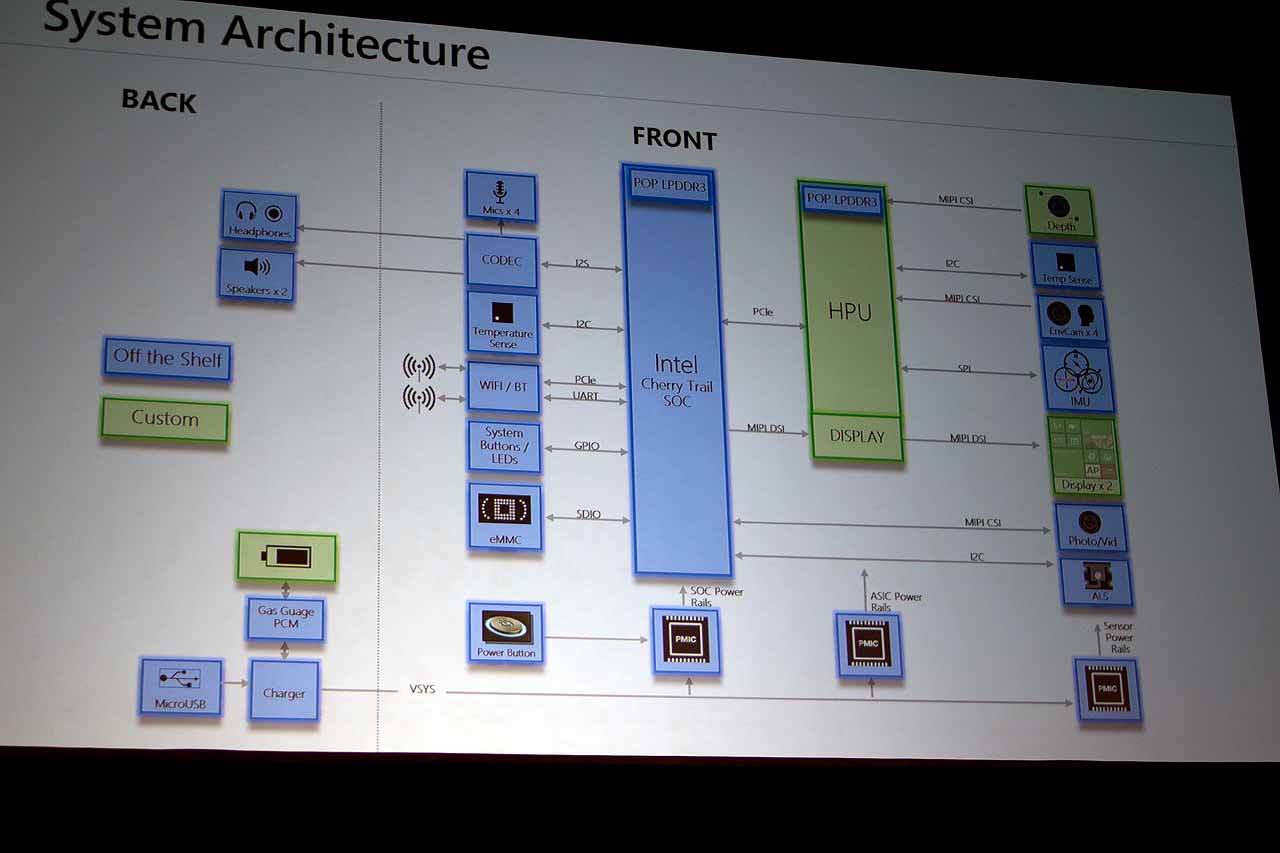

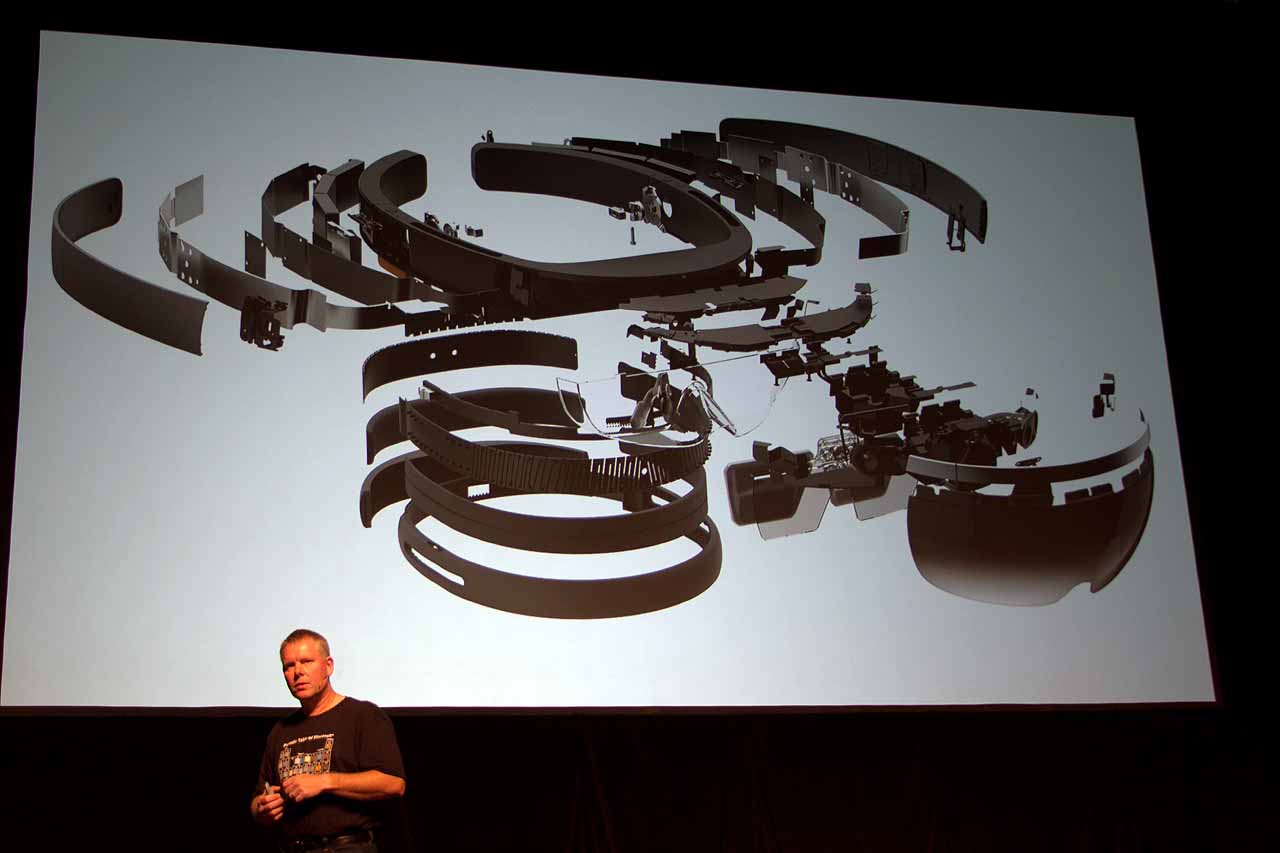

Obviously, the HoloLens has a custom mainboard. (Note the absence of fans or heatsinks, by the way.) The system is essentially mobile hardware with a 64 GB eMMC SSD and 2GB of LPDDR3 RAM (1GB each for the SoC and the HPU). It’s based on x86 architecture and runs Windows 10.

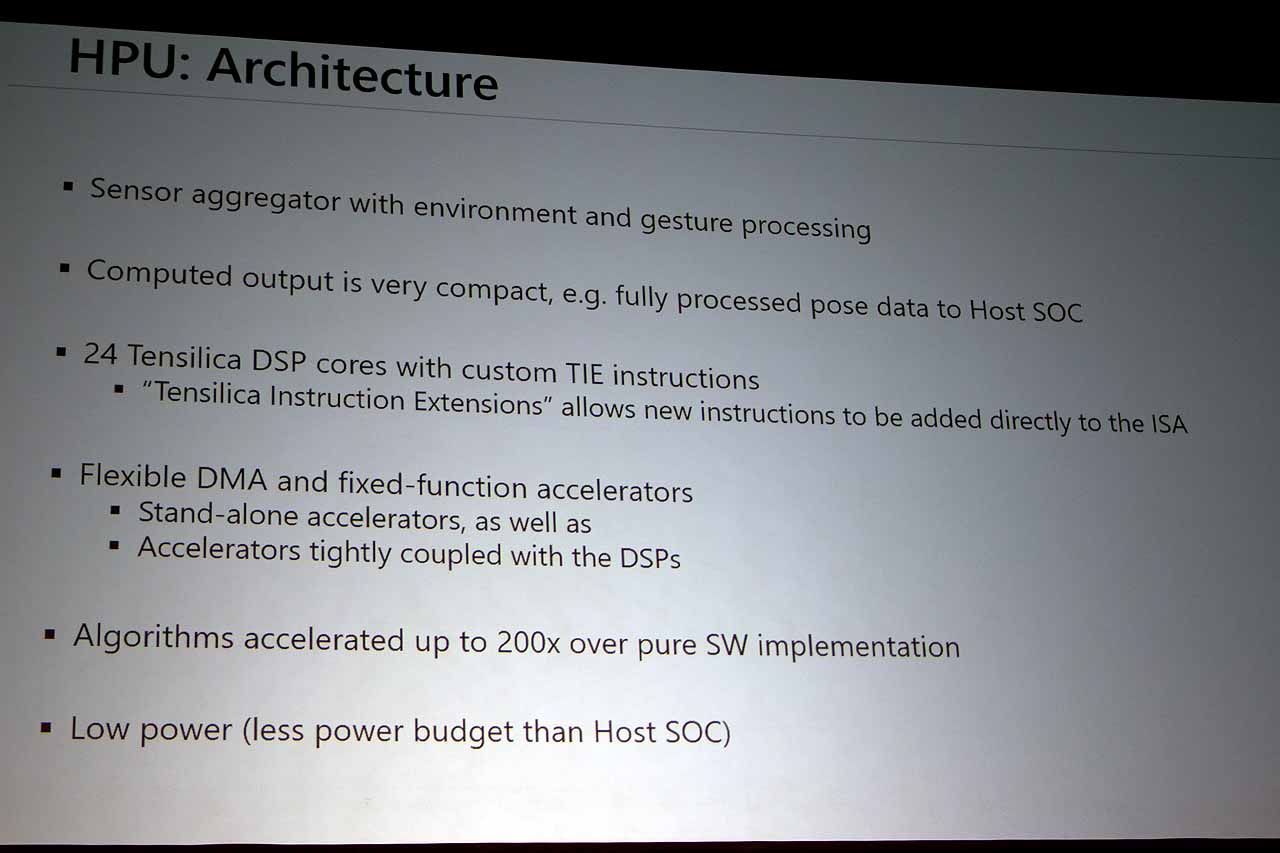

The Cherry Trail SoC (although precisely which one is still unknown) does much of the heavy lifting, but it’s aided by the HPU, which is currently at “HPU 1.0.” As you may have surmised, the SoC handles the OS, applications and shell, and the HPU’s job is offloading all the remaining tasks from the SoC--all of the sensors connect to the HPU, which processes all the data and hands it off as a tidy package to the SoC.

Looking at the system architecture diagram, you can see it all nicely laid out. Note especially which components are custom and which are off-the-shelf. In particular, it's interesting that the battery is custom--actually, it’s “batteries,” plural. What that means exactly, we don’t know, but it’s an intriguing detail.

You can also see that there are four microphones, and they can benefit from noise compression. Further on the audio side, there are stereo speakers mounted on the headband, right where your ears would be (imagine that). Some have criticized the HoloLens speakers as being too weak, with the audio swallowed up by ambient noise. That may be true, but they do offer impressive spatial (and directional) sound, and you get that without headphones (although you can add some cans if you like). In demos, the spatiality is almost creepy.

For connectivity, there’s Wi-Fi and Bluetooth--although, again, Microsoft has not specified which types of either.

HPU 1.0

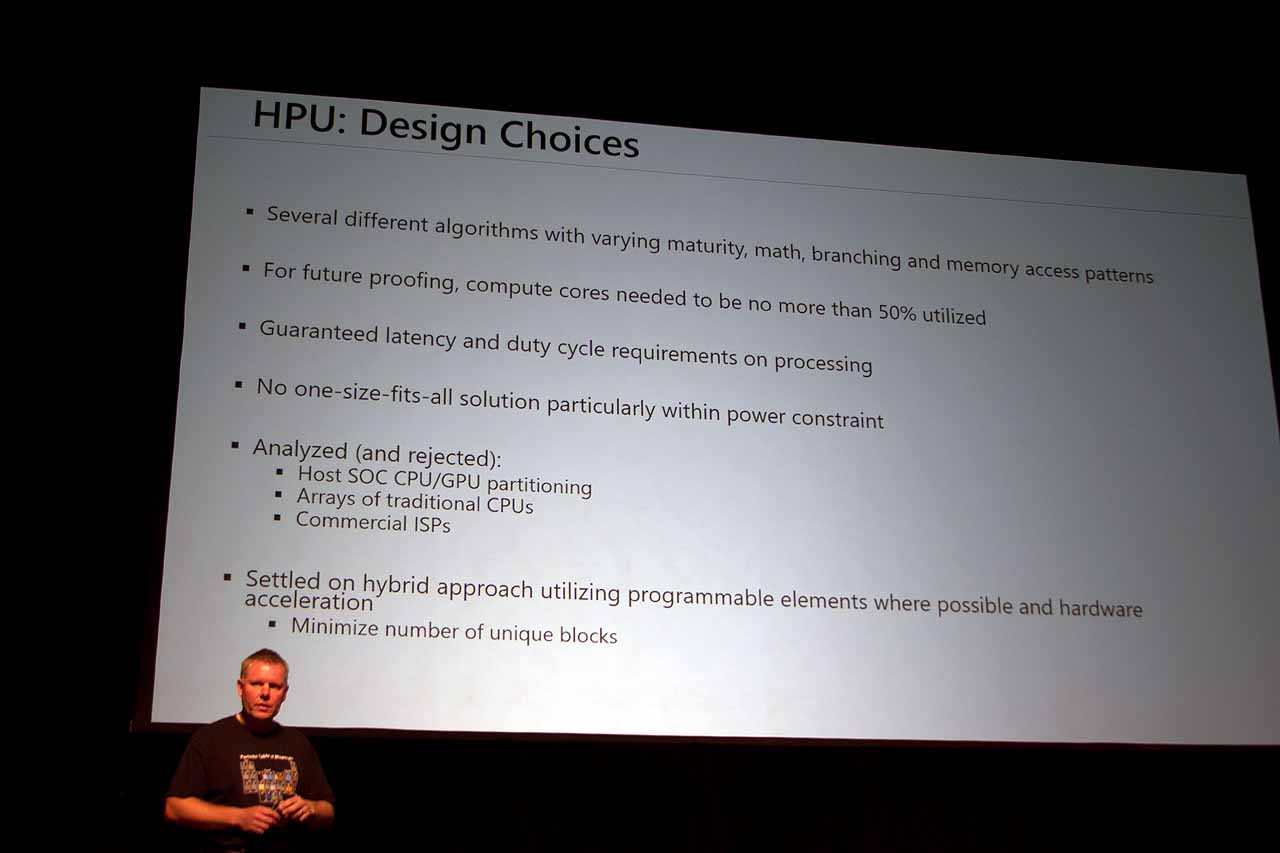

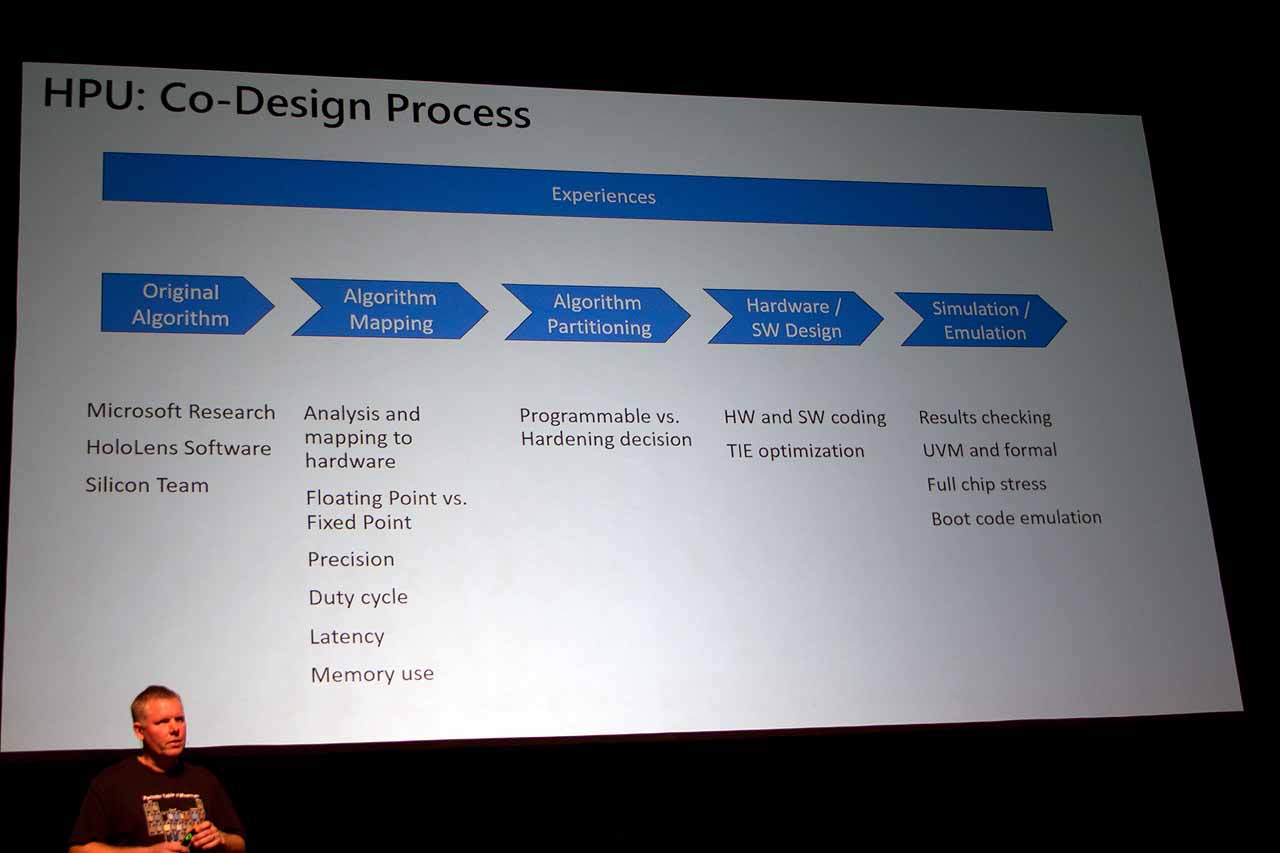

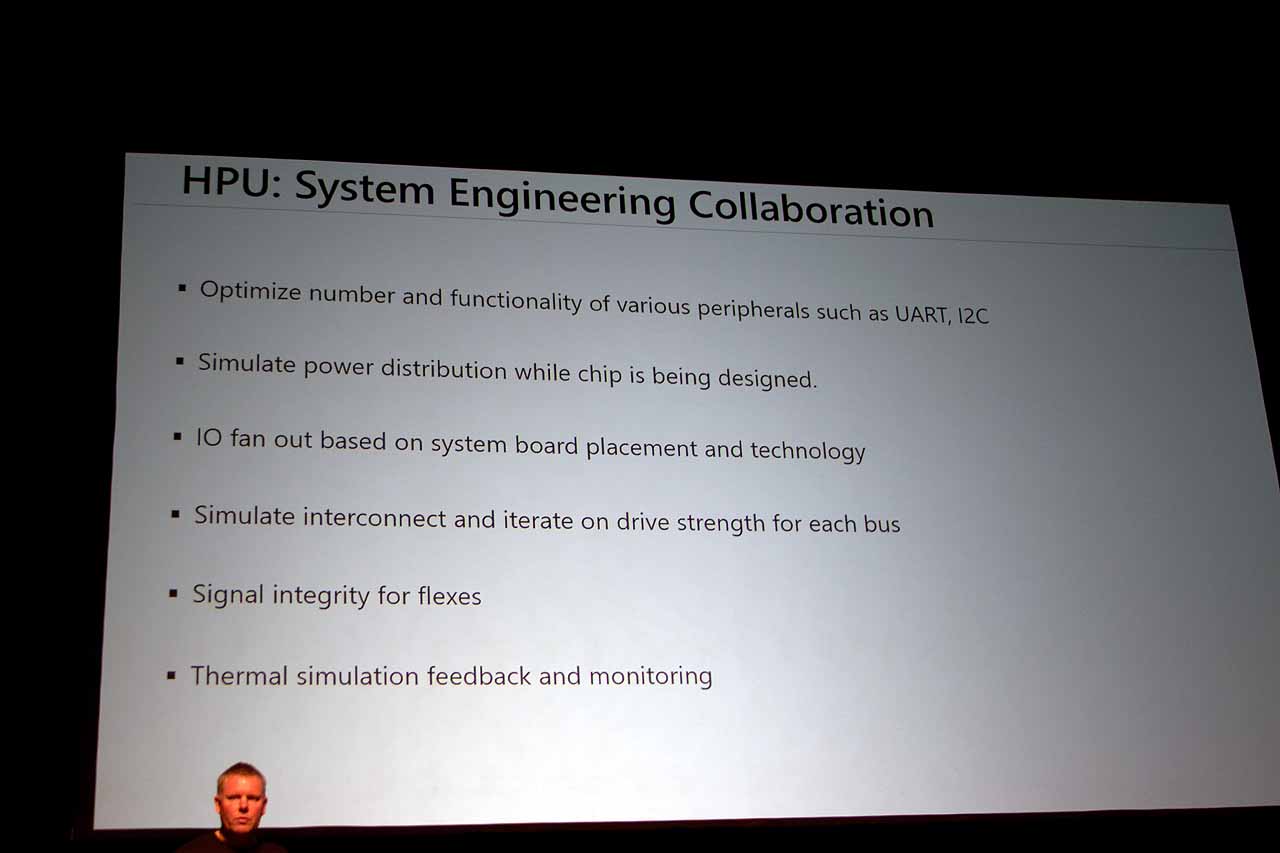

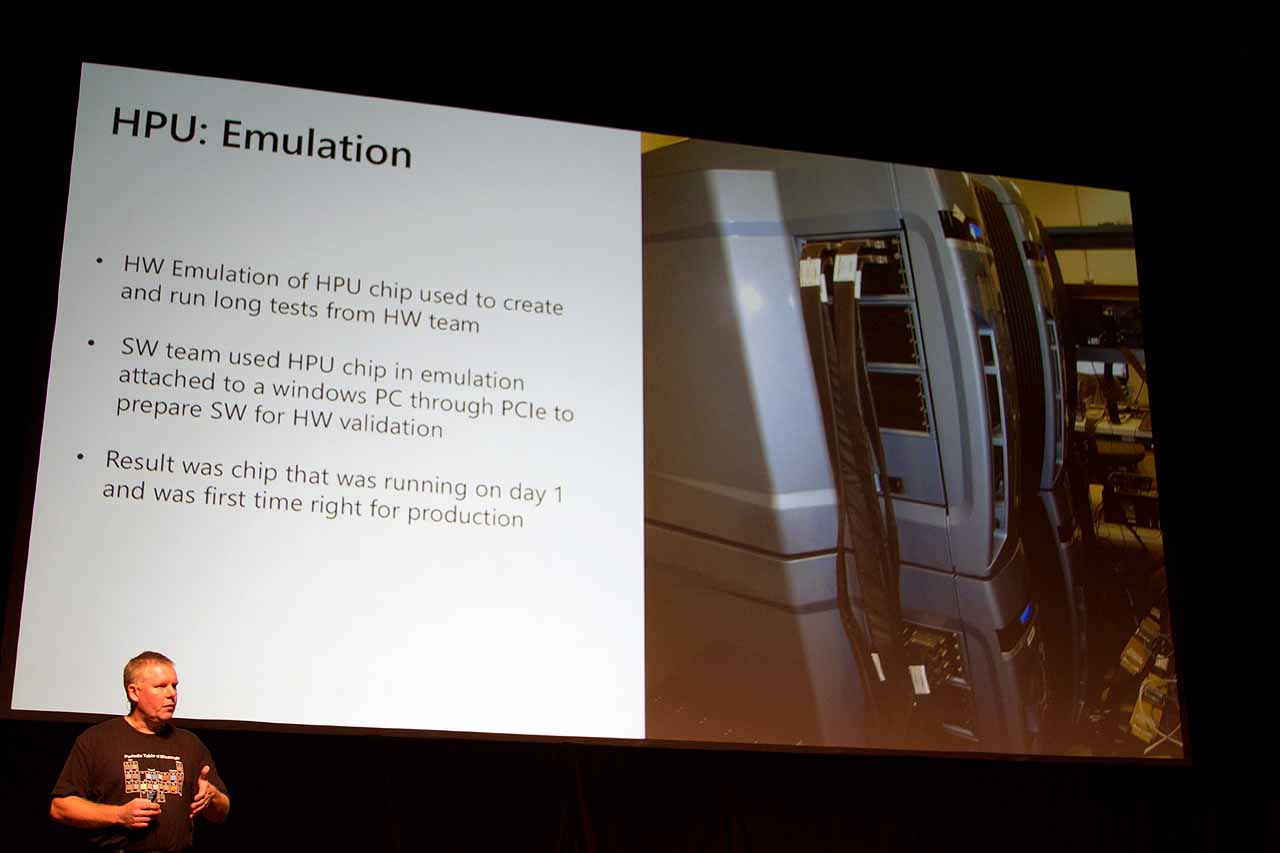

A true deep dive into the architecture of the HPU is beyond the scope of the data we have presently, but Microsoft did provide some illuminating details.

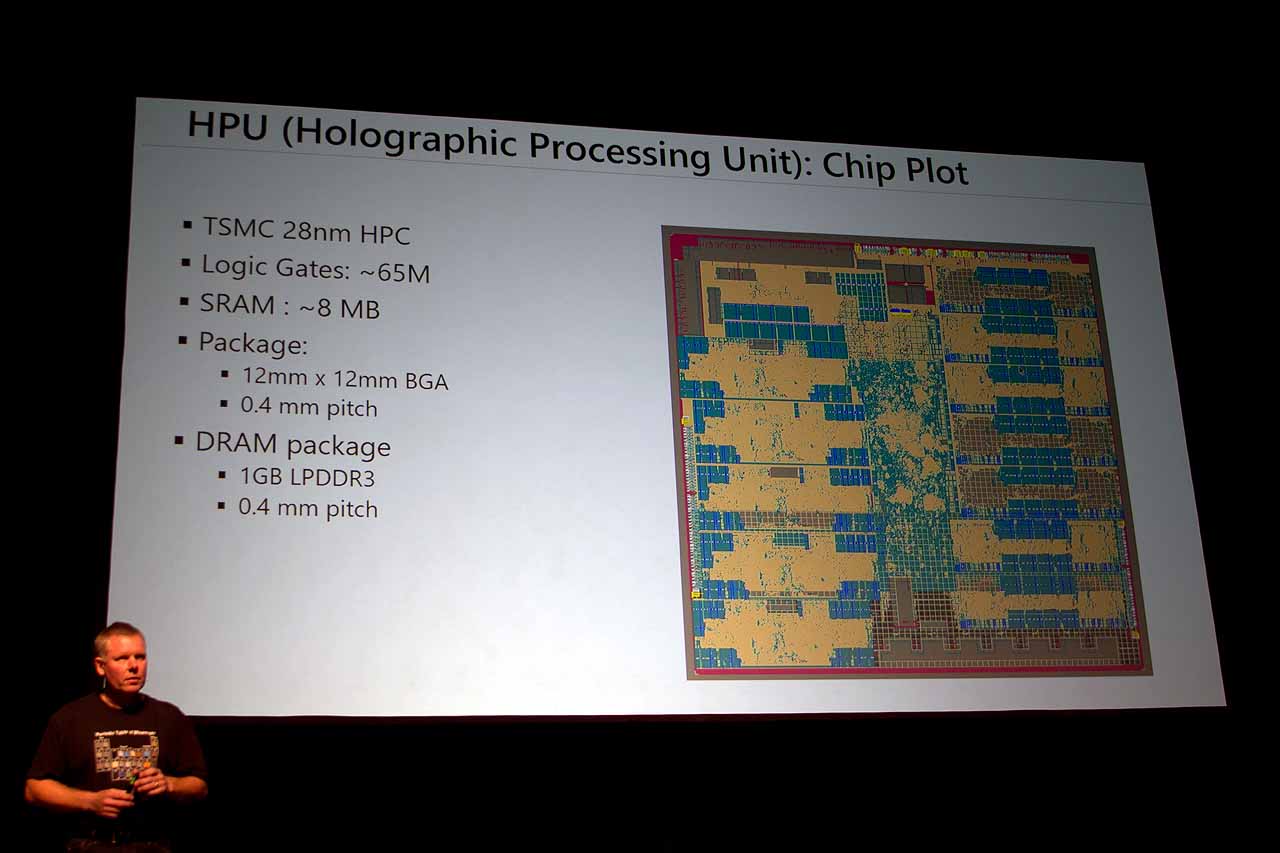

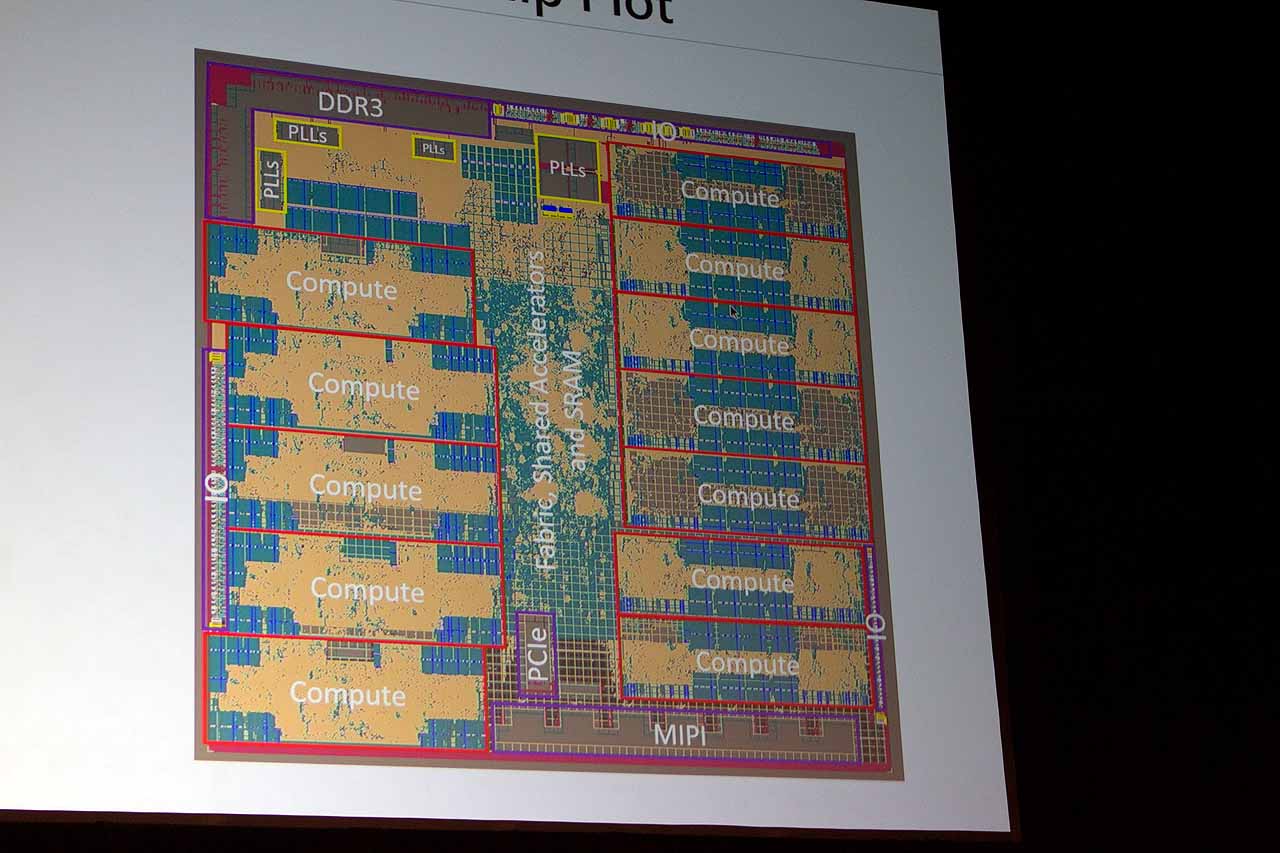

For starters, HPU 1.0 is built on TSMC's 28nm HPC process, and it has 65 million logic gates, 8MB SRAM, and the aforementioned 1GB LPDDR3 RAM, on a 12x12 mm BGA package. It has its own I/O, as well as PCIe and MIPI, and 12 “compute nodes.” Wrapping up his description, the presenter deadpanned, “The rest of the chip comprises the on-chip fabric, some shared fixed-function accelerators, the logic block, [and] a bunch of SRAM.” He also noted that the compute data it outputs is “very compact,” and the chip consumes less power than the SoC.

We will continue to press Microsoft for more information about HoloLens. But the above is a great, long-awaited start.

Seth Colaner previously served as News Director at Tom's Hardware. He covered technology news, focusing on keyboards, virtual reality, and wearables.

-

bit_user Excellent writeup!

I'm still not clear on whether the projected image has real depth of field, or is merely projected on a single plane. If the latter, is the plane fixed in space, or can it be focused at different depths? I was hoping it had a light field projector, but it sounds like the image is just 2D. I guess there's always Magic Leap...

Regarding the HPU, I assume the reason they opted not to use the SoC's HD Graphics GPU was that it's needed for rendering. And basing the HPU on DSPs probably made it easier to augment with custom hardware accelerators than if they'd based it on a GPU. Otherwise, a GPU would probably be more power-efficient. -

d_kuhn Projected image absolutely has depth... I'm not sure HOW it has depth... but when you focus on an item 3' away from you that has a web page overlayed on it, the web page is in focus. When you focus on a different object 20' away any virtual objects layered on that object are in focus at that range. Finally, if you create an object hanging in midair 8' away, it's not in focus when you focus on an object behind them. Basically... virtual object layer seamlessly into the real world, there's no dof shenanigans or at least none that are at all noticeable.

The Hololens design is the real deal... top shelf stuff given it's still in the tech demo phase. Only real issue is that the lens design introduces halo artifacts. It's not a deal killer for the device (I'm still blown away by it), but it is noticeable and I'm sure high on their list of things to reduce/eliminate. -

anbello262 Knowing more about the Hololens is making me interested. At first, I thought that it was just some experimental gadget that would go nowhere (like google glass), and that I would still have to wait many years before another company attempted this and succeded, but I am starting to feel this is the real deal.

I really hope this takes off, I would like to try this. -

bit_user

18486214 said: Basically... virtual objects layer seamlessly into the real world, there's no dof shenanigans or at least none that are at all noticeable.

The Hololens design is the real deal... top shelf stuff

That's even more amazing, given that it seems they're only rendering with the downsized HD Graphics GPU in the Bay Trail SoC (about 1/2 to 1/3 of what you'd find in a desktop CPU).

18486869 said: At first, I thought that it was just some experimental gadget that would go nowhere (like google glass)

Heh, this is like what Google Glass 3.0 wanted to be. It's a massive leap-frog by MS. I was stunned by the announcement and launch demos, quite frankly.

I get the feeling it even puts Intel's Project Alloy to shame. As far as I know, Magic Leap is the only other thing on the horizon that can even approach it. I actually wonder which cost more to create. Magic Leap is up to like $1.3B in funding, right?

-

wifiburger too bad it's going to get ignored for years just because it's a Microsoft product,

make it gold with diamonds Microsoft and I think people wont even look at it -

scolaner

18486869 said: Knowing more about the Hololens is making me interested. At first, I thought that it was just some experimental gadget that would go nowhere (like google glass), and that I would still have to wait many years before another company attempted this and succeded, but I am starting to feel this is the real deal.

I really hope this takes off, I would like to try this.

We get easily jaded as tech journos, but I have to tell you, this thing is amazing. I tried Google Glass back when it was a Thing...it wasn't great. The HoloLens is far and away superior in every way. -

scolaner

18486948 said:

18486214 said: Basically... virtual objects layer seamlessly into the real world, there's no dof shenanigans or at least none that are at all noticeable.

The Hololens design is the real deal... top shelf stuff

That's even more amazing, given that it seems they're only rendering with the downsized HD Graphics GPU in the Bay Trail SoC (about 1/2 to 1/3 of what you'd find in a desktop CPU).

18486869 said: At first, I thought that it was just some experimental gadget that would go nowhere (like google glass)

Heh, this is like what Google Glass 3.0 wanted to be. It's a massive leap-frog by MS. I was stunned by the announcement and launch demos, quite frankly.

I get the feeling it even puts Intel's Project Alloy to shame. As far as I know, Magic Leap is the only other thing on the horizon that can even approach it. I actually wonder which cost more to create. Magic Leap is up to like $1.3B in funding, right?

*Cherry Trail, and that was my biggest question when they first announced it. If you need a beastly PC to power VR experiences, how on earth was Microsoft doing this with a small, self-contained HMD?

But if you think about it, it totally makes sense. To oversimplify: In VR, the GPU has to render *everything*. Entire worlds. With AR (which is what this is, with apologies to MSFT's insistence on calling it "mixed reality"), you're rendering just *one thing*. And of course the sensors and HPU handle the task of keeping the image(s) in place.

Alloy is a totally different beast altogether...I have much to say about it (stay tuned), but you can think of it as both VR and AR, in a way. It's a totally occluded headset (like Vive and Rift) as opposed to having a passthrough lens (like HoloLens), but it uses the RealSense camera to "see" the real world and recreate it inside the HMD for you to "see."

I wrote about some of the tricks devs are using to optimize the GPU resources, though. From the mouths of JPL scientists using HoloLens for mission-critical applications: http://www.tomshardware.com/news/vr-ar-nasa-jpl-hololens,31569.html -

bit_user

18489506 said: *Cherry Trail

Thanks for the correction. It was a slip, on my part, but it's interesting to see that the x7-Z8700 did launch way back in Q1 2015.

18489506 said: But if you think about it, it totally makes sense. To oversimplify: In VR, the GPU has to render *everything*. Entire worlds. With AR (which is what this is, with apologies to MSFT's insistence on calling it "mixed reality"), you're rendering just *one thing*.

Since the imagery is translucent, perhaps less emphasis is placed on realism. So, they needn't worry about things like lighting, shadows, or sophisticated surface shaders.

Actually, depending on the range and precision of geometry they're extracting, they could conceivably estimate the positions of real world light sources and then light (and shadow) CG elements, accordingly. Perhaps we'll see these types of tricks in version 2.0, or are they already doing it?

18489506 said: I wrote about some of the tricks devs are using to optimize the GPU resources, though. From the mouths of JPL scientists using HoloLens for mission-critical applications: http://www.tomshardware.com/news/vr-ar-nasa-jpl-hololens,31569.html

Thanks for the link. This sounds specific to their app and landscape rendering, rather than Hololens, generally.

Clever scene graphs and LoD optimizations have long been standard fare, for game engines (seriously, I remember people talking about octrees and BSP trees on comp.graphics.algorithms, back in '92). When LoD is poorly implemented, you can see the imagery shudder, as it switches to a higher detail level. Anyway, this is a nice little case study. -

srmojuze

18486214 said: Projected image absolutely has depth... I'm not sure HOW it has depth... but when you focus on an item 3' away from you that has a web page overlayed on it, the web page is in focus. When you focus on a different object 20' away any virtual objects layered on that object are in focus at that range. Finally, if you create an object hanging in midair 8' away, it's not in focus when you focus on an object behind them. Basically... virtual object layer seamlessly into the real world, there's no dof shenanigans or at least none that are at all noticeable.

From what I've played with on Unity with a basic side-by-side splitscreen viewed on a standard mobile VR goggle (don't laugh yeah)... Overall "Depth" is generally achieved using the amount of stereo separation of the left and right cameras, with according parallax of said cameras and objects in the virtual 3D space.

However, as you mention, when it comes to AR and then mapping all that onto real objects, spooky stuff...

18492439 said: Since the imagery is translucent, perhaps less emphasis is placed on realism. So, they needn't worry about things like lighting, shadows, or sophisticated surface shaders.

Actually, depending on the range and precision of geometry they're extracting, they could conceivably estimate the positions of real world light sources and then light (and shadow) CG elements, accordingly. Perhaps we'll see these types of tricks in version 2.0, or are they already doing it?

Clever scene graphs and LoD optimizations have long been standard fare, for game engines (seriously, I remember people talking about octrees and BSP trees on comp.graphics.algorithms, back in '92). When LoD is poorly implemented, you can see the imagery shudder, as it switches to a higher detail level. Anyway, this is a nice little case study.

Currently AR is orders of magnitude "easier" to render than VR, if only because, as you mention, the style of the elements eg. wireframe overlays, floating UI.

Octrees and BSP and Culling rings a bell, but if I'm not mistaken the current issue in GPU fidelity nowadays is not pure polycount. In fact as a layperson observer I would say the next big leap for graphic engines is realtime global illumination and ultra-high (8K per surface) textures, along with "cinematic" post-processing and sophisticated shaders, eg: https://www.youtube.com/watch?v=1LamAe-k9As

"Sonic Ether" and Nvidia VXGI and Cryengine are close to cracking realtime global illumination (ie. no baked lighting, all lighting, shadowing, light bouncing calculated and rendered in realtime (90fps-160fps?)). VRAM especially with HBM2 means 8K and 16K textures are not too far away. Cinematic post-processing and sophisticated shaders are coming along too.

So yeah in terms of AR you could be walking on the street, the AR device computes the surrounding including light calculation, then renders say a photoreal person which is realtime global illumination-lit and textured, along with suitable post-processing based on the physical environment you are in (say a dusty grey day vs bright blue sunny skies). At that point you can legitimately say it is "mixed reality" since one would not be able to tell the difference between a real person standing there and the rendered artificial character.

Lurking on the sidelines is point cloud/ voxel stuff, which if/when implemented suitably, will make reality go bye-bye forever. So between 3D, VR, AR and mixed reality, well, we're at the doorstep of the final jack in your head: https://www.youtube.com/watch?v=DbMpqqCCrFQ

-

bit_user

18514585 said: VRAM especially with HBM2 means 8K and 16K textures are not too far away.

Why are you concerned about huge textures? GPUs have all that compute horsepower in order to procedurally generate textures as needed. The resolution of procedural textures is limited only by the precision of the datatypes used to specify the texture coordinates.

Sure, not everything lends itself to coding as a procedure, but most things can at least be decomposed into a macro scale texture map, and smaller, repeating textures. I just don't see any need for 8k or 16k textures. I mean 4k makes sense for mapping screens, windows, and video images onto things, but that's about it.

18514585 said: So yeah in terms of AR you could be walking on the street, the AR device computes the surrounding including light calculation, then renders say a photoreal person which is realtime global illumination-lit and textured, along with suitable post-processing based on the physical environment you are in (say a dusty grey day vs bright blue sunny skies). At that point you can legitimately say it is "mixed reality" since one would not be able to tell the difference between a real person standing there and the rendered artificial character.

I think that's not what anyone means by "mixed reality".

Anyway, there's a lot you're oversimplifying. Light source estimation will never be perfect, in unconstrained AR applications. It can be "good enough", in most cases, so that rendered objects don't seem jarringly out of place.

But you're also glossing over the whole display issue. Hololens doesn't block the light arriving through the visor. So, you'd be talking about something like Intel's Project Alloy, which is a VR-type HMD + cameras.

Safe to say, it'll be a while before we need to worry about a "Matrix" scenario. I don't even see it happening with conventional silicon semiconductors. Maybe carbon nanotube-based computers, or something else beyond lithography.