Nvidia Clears Up G-Sync Ultimate Confusion

Mostly

Nvidia recently confused shoppers looking for the best gaming monitors when it quietly changed the G-Sync Ultimate requirements listed on its website. We spoke with Nvidia about its highest tier of Adaptive-Sync technology to understand exactly what a G-Sync Ultimate monitor entails in 2021.

In general, G-Sync is Nvidia’s answer to AMD FreeSync. A G-Sync monitor paired with a system running an Nvidia graphics card will fight off screen tearing and offer other gaming benefits, depending on the type of G-Sync the monitor has. There are three tiers: standard G-Sync, which requires Nvidia’s proprietary scaler; G-Sync Compatible, which doesn’t; and G-Sync Ultimate.

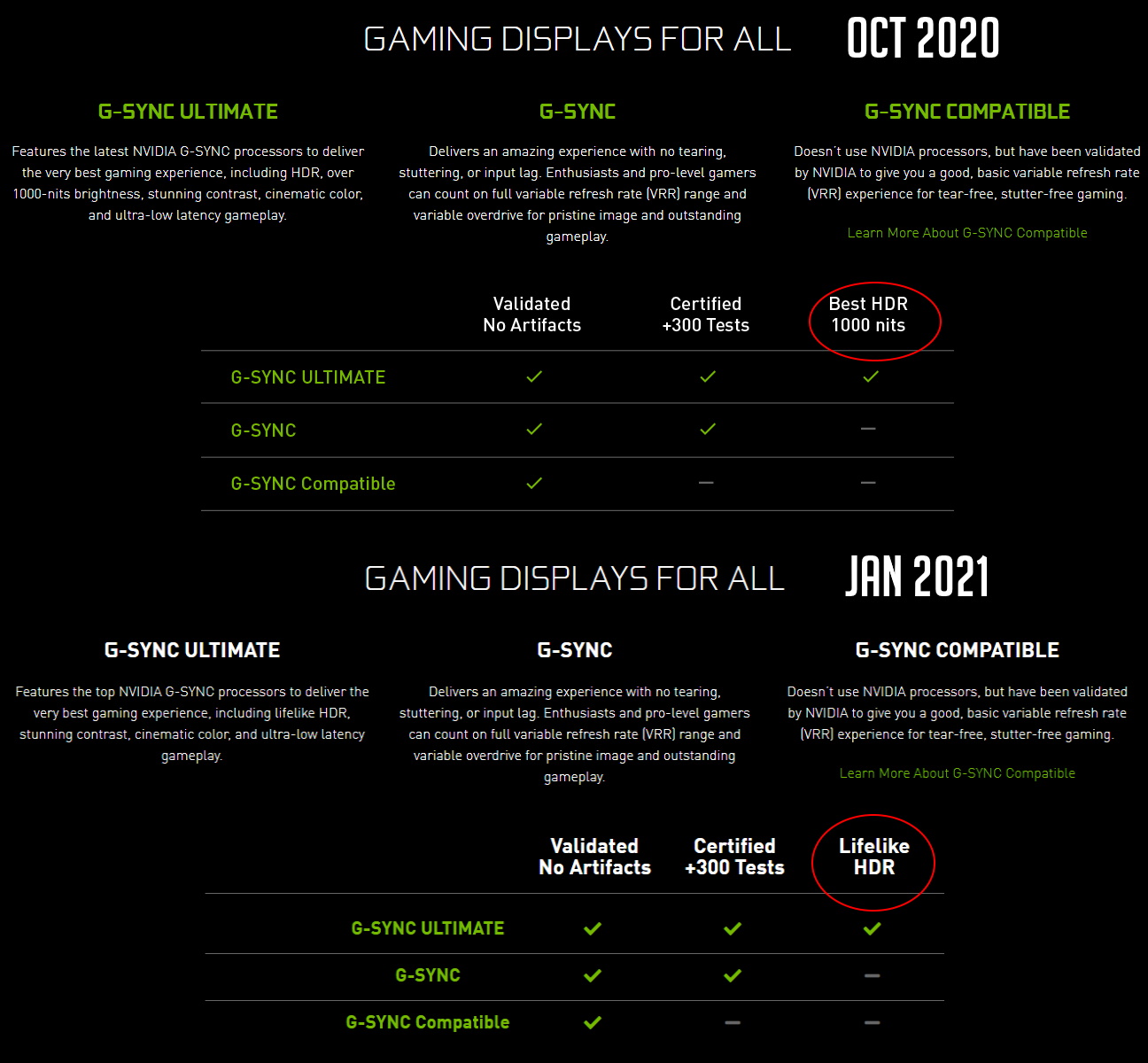

Up until a few weeks ago, Nvidia’s website listed G-Sync Ultimate as featuring “the latest Nvidia G-Sync processors to deliver the very best gaming experience, including HDR, over 1,000 nits brightness, stunning contrast, cinematic color and ultra-low latency gameplay.” In January, Nvidia changed that description, now saying that G-Sync Ultimate monitors have “the top Nvidia G-Sync processors to deliver the very best gaming experience, including lifelike HDR, stunning contrast, cinematic color and ultra-low latency gameplay.”

Nvidia removed mention of “1,000 nits” as a necessary checkmark for G-Sync Ultimate monitors and replaced it with “lifelike HDR.”

But what exactly does "lifelike HDR" mean?

An Nvidia representative confirmed to Tom’s Hardware that today G-Sync Ultimate monitors require:

- Refresh rates greater than or equal to 144 Hz
- Variable LCD overdrive
- Optimized latency for gaming
- Factory-calibrated accurate SDR (sRGB) and HDR color (P3) gamut support
- Best-in-class HDR support
- Best-in-class image quality

While that doesn’t tell us much more about what “lifelike HDR” means, Nvidia did note that its design, testing and validation processes take into account color gamut, brightness, contrast, resolution and refresh rate, among other factors.


But what about the old 1,000-nit spec? If you’re looking for the best HDR monitor, one that can hit 1,000 nits can do wonders. Some of the first G-Sync Ultimate monitors were the Acer Predator X27 and Asus ROG Swift PG27UQ. Both are VESA-certified to hit a peak brightness of at least 1,000 nits with HDR content, and they do so using premium-priced full-array local dimming (FALD) backlights. The results are stellar, making for two excellent HDR screens, as well as some of the best 4K gaming monitors money can buy.

Nvidia no longer promises 1,000 nits with G-Sync Ultimate but did point to OLED monitors as potential candidates for the certification. OLED monitors are known for excellent HDR thanks to their effectively infinite contrast, but they may not hit 1,000 nits. For example, the Alienware AW5520QF, which our testing found to produce brilliant HDR thanks to the amazing contrast of its OLED panel, is only specced for a minimum peak brightness of 400 nits with HDR (the AW5520QF isn’t G-Sync-certified).

There are currently no OLED monitors on Nvidia’s list of G-Sync Ultimate monitors, but with the continuing growth of OLED, including an upcoming desktop-sized OLED monitor from LG, OLED may eventually play a bigger role in gaming monitors than it does today.

An Nvidia rep also noted that “advanced multi-zone edge-lit displays offer remarkable contrast with 600-700 nits.” Edge-lit backlights are a tier below the FALD backlights in the aforementioned 1,000-nit monitors, but if well implemented, they can still produce HDR with a noticeable impact over SDR content. One such example is the Dell S2721DGF (G-Sync Compatible and FreeSync only), which is specced for only 400 nits. We can expect monitors like this to earn the G-Sync Ultimate badge going forward.

“G-Sync Ultimate was never defined by nits alone nor did it require a VESA DisplayHDR 1000 certification,” Nvidia’s rep said.

Scharon Harding has over a decade of experience reporting on technology with a special affinity for gaming peripherals (especially monitors), laptops, and virtual reality. Previously, she covered business technology, including hardware, software, cyber security, cloud, and other IT happenings, at Channelnomics, with bylines at CRN UK.

-

wr3zzz: Where is the Nvidia-Monster Cable G-Sync Ultimate HDMI cable so I can get the best out of my HDMI 2.1 VRR OLED TV?

bjarnepedersen: Did they clear anything up? It’s just more blah blah. If we like it, it gets certified.... And how does any monitor get the G-SYNC certification when the monitor can have NO input lag?

sizzling:

> bjarnepedersen said:
> and how does any monitor get the G-SYNC certification when the monitor can have NO input lag?

There will always be some lag. Zero lag would break the laws of physics.