Nvidia VSR Testing: AI Upscaling and Enhancement for Video

Improve those low quality video streams

Nvidia Video Super Resolution — Nvidia VSR — officially becomes available to the public today. First previewed at CES 2023, and not to be confused with AMD's VSR (Virtual Super Resolution), Nvidia VSR aims to do for video what its DLSS technology does for games. Well, sort of. You'll need one of Nvidia's best graphics cards for starters, meaning an RTX 30- or 40-series GPU. Of course, you'll also want to set your expectations appropriately — the above lead image, for example, is faked and exaggerated and not at all representative of VSR.

By now, everyone should be getting quite familiar with some of what deep learning and AI models can accomplish. Whether it's text-to-image art generation with Stable Diffusion and the like, ChatGPT answering questions and writing articles, self-driving cars, or any number of other possibilities, AI is becoming part of our everyday lives.

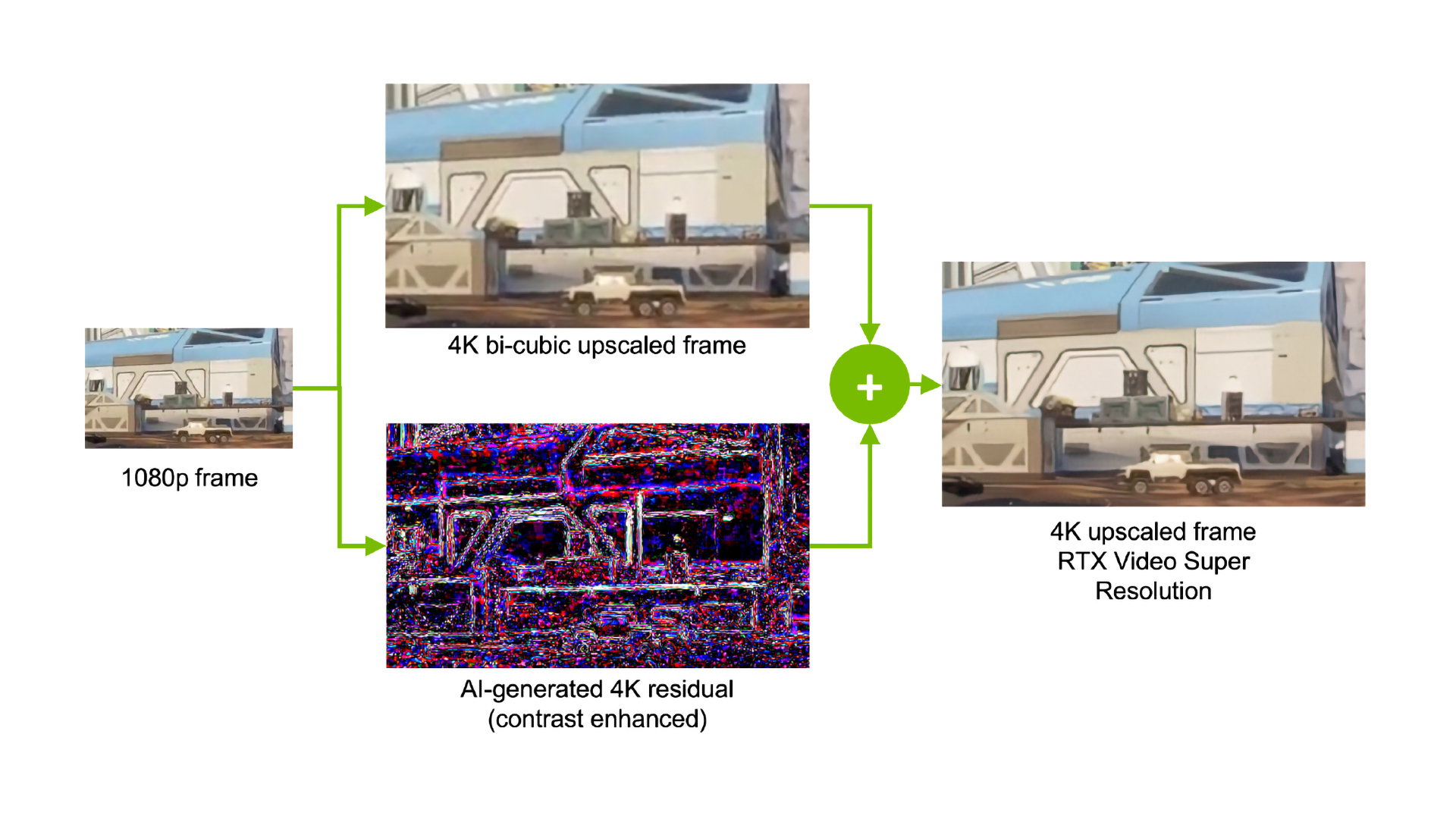

The basic summary of the algorithm should sound familiar to anyone with knowledge of DLSS. Take a bunch of paired images, with each pair containing a low-resolution and lower bitrate version of a higher resolution (and higher quality) video frame, and run that through a deep learning training algorithm to teach the network how to ideally upscale and enhance lower quality input frames into better-looking outputs. There are plenty of differences between VSR and DLSS, of course.
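To make the training-pair idea concrete, here is a minimal Python sketch. It is not Nvidia's pipeline, which hasn't been published. The sketch assumes grayscale frames stored as lists of 0-255 pixel rows and uses box-filter averaging for the downscale. It stands in for low-bitrate compression with crude value quantization, where a real data pipeline would more likely encode frames with an actual video codec. All function names are hypothetical.

```python
# Hypothetical sketch of building one (low-quality, high-quality) training pair.
# Frames are grayscale: lists of rows holding 0-255 pixel values.

def downscale_box(frame, factor):
    """Downscale by averaging each factor x factor block (a simple box filter)."""
    h, w = len(frame), len(frame[0])
    out = []
    for y in range(0, h - h % factor, factor):
        row = []
        for x in range(0, w - w % factor, factor):
            block = [frame[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def quantize(frame, step):
    """Crude stand-in for low-bitrate compression: snap values to a coarse grid."""
    return [[min(255, round(p / step) * step) for p in row] for row in frame]


def make_training_pair(hi_res_frame, factor=2, step=16):
    """Return (degraded low-res input, original high-res target)."""
    lo_res = quantize(downscale_box(hi_res_frame, factor), step)
    return lo_res, hi_res_frame


frame = [[(x * 16 + y * 8) % 256 for x in range(8)] for y in range(8)]
lo, hi = make_training_pair(frame)
print(len(lo), len(lo[0]))  # 4 4
```

Training then amounts to showing the network many such pairs and penalizing the difference between its output for `lo` and the original `hi`.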

For one, DLSS gets data directly from the game engine, including the current frame, motion vectors, and depth buffers. It combines that data with the previous frame(s) and runs it through the trained AI network to generate upscaled and anti-aliased frames. With VSR, there's no pre-computed depth buffer or motion vectors to speak of, so everything needs to be done based purely on the video frames. So while in theory VSR could use both the current and previous frames, it appears Nvidia has opted for a purely spatial upscaling approach. But whatever the exact details, let's talk about how it looks.

Nvidia provided a sample video showing the before and after output from VSR. If you want the originals, here's the 1080p source (upscaled via bilinear sampling) and the 4K VSR-upscaled version, hosted on a personal Drive account, so we'll see how that goes. (Send me an email if you can't download the videos because the bandwidth cap has been exceeded.)
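For reference, bilinear upscaling (the baseline in Nvidia's sample) estimates each output pixel as a distance-weighted blend of the four nearest source pixels. The sketch below is the textbook version on a grayscale frame. The pixel-center mapping and edge clamping are our assumptions, and Chrome's own scaler may differ in those details.

```python
def bilinear_upscale(frame, out_h, out_w):
    """Upscale a grayscale frame (list of rows) with bilinear interpolation.

    Output pixel centers are mapped back into input coordinates and clamped
    to the image edges (an assumed, commonly used convention).
    """
    in_h, in_w = len(frame), len(frame[0])
    sy, sx = in_h / out_h, in_w / out_w
    out = []
    for oy in range(out_h):
        fy = min(max((oy + 0.5) * sy - 0.5, 0.0), in_h - 1.0)
        y0 = int(fy)
        y1 = min(y0 + 1, in_h - 1)
        wy = fy - y0  # vertical blend weight toward row y1
        row = []
        for ox in range(out_w):
            fx = min(max((ox + 0.5) * sx - 0.5, 0.0), in_w - 1.0)
            x0 = int(fx)
            x1 = min(x0 + 1, in_w - 1)
            wx = fx - x0  # horizontal blend weight toward column x1
            top = frame[y0][x0] * (1 - wx) + frame[y0][x1] * wx
            bottom = frame[y1][x0] * (1 - wx) + frame[y1][x1] * wx
            row.append(top * (1 - wy) + bottom * wy)
        out.append(row)
    return out


print(bilinear_upscale([[0, 100]], 1, 4))  # [[0.0, 25.0, 75.0, 100.0]]
```

Because every output pixel is just a blend of existing ones, bilinear scaling can't add detail; it only smooths the transitions, which is why the source captures look soft.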

We're going to skirt potential copyright issues and not include a bunch of our own videos, though we did grab some screenshots of the resulting output from a couple of sports broadcasts to show how it works on other content. What we can say is that slow-moving videos (like Nvidia's samples) provide the best results, while faster-paced stuff like sports is more difficult, as the frame-to-frame changes can be quite significant. But in general, VSR works pretty well. Here's a gallery of some comparison screen captures (captured via Nvidia ShadowPlay).
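One simple way to put a number on "frame-to-frame changes" is the mean absolute difference between consecutive frames. This is purely an illustrative metric assumed for the sketch; Nvidia hasn't said VSR computes anything like it.

```python
def mean_abs_frame_diff(prev, curr):
    """Average absolute per-pixel change between two same-sized grayscale frames."""
    total = sum(abs(a - b)
                for row_p, row_c in zip(prev, curr)
                for a, b in zip(row_p, row_c))
    return total / (len(curr) * len(curr[0]))


still = [[10, 10], [10, 10]]
moving = [[10, 50], [10, 90]]
print(mean_abs_frame_diff(still, still))   # 0.0
print(mean_abs_frame_diff(still, moving))  # 30.0
```

A slow pan produces small values from frame to frame, while a fast camera whip in a sports broadcast produces large ones, which also tends to mean more motion blur and more compression artifacts in each frame.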

Nvidia Sample: Source 1080p with bilinear upscale

Nvidia Sample: 1080p upscaled via VSR

Soccer Sample: 720p bilinear upscaled source

Soccer Sample: 720p to 4K VSR upscale

Soccer Sample: 480p bilinear upscaled source

Soccer Sample: 480p VSR upscaled to 4K

Hockey Sample: 720p bilinear upscaled source

Hockey Sample: 720p to 4K VSR upscale

Hockey Sample: 720p bilinear upscaled source

Hockey Sample: 720p to 4K VSR upscale

All of those images are 4K JPGs saved at maximum quality. They're not lossless, but we can't exceed 10MB, so some slight compression was required. You can still clearly see the differences between the normal upscaling (in Chrome) and the VSR upscaling. It's not a massive improvement in any of the sports samples, but there's definitely some sharpening and deblocking that, at least in our subjective view, looks better.
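Block-based codecs compress video in small tiles, so at low bitrates faint steps can appear along the tile edges. As a rough illustration of what "deblocking" means (not how VSR does it), this sketch smooths small steps at 8-pixel boundaries in a single row of pixels. It leaves large steps alone on the assumption that they're real edges. The block size and threshold are arbitrary assumptions.

```python
def deblock_row(row, block=8, threshold=10):
    """Soften small steps at block boundaries in one row of pixels.

    A step smaller than `threshold` is treated as a compression artifact and
    pulled toward the midpoint; larger steps are assumed to be real edges.
    """
    out = list(row)
    for b in range(block, len(row), block):
        left, right = row[b - 1], row[b]  # pixels on either side of the boundary
        step = right - left
        if 0 < abs(step) < threshold:
            out[b - 1] = left + step / 4
            out[b] = right - step / 4
    return out


flat_ish = [100] * 8 + [106] * 8   # small step: likely a block artifact
real_edge = [0] * 8 + [200] * 8    # large step: likely a genuine edge
print(deblock_row(flat_ish)[7:9])  # [101.5, 104.5]
print(deblock_row(real_edge) == real_edge)  # True
```

The threshold is the crux of any deblocking filter: set it too high and genuine detail gets smeared, too low and the blocks survive. A learned model can, in principle, make that call from context rather than a fixed number.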

VSR can't work miracles. Starting with a 720p source and upscaling to 4K (a 9x increase in pixel count) will be more difficult than going from 1080p to 4K (4x). And the 480p to 4K upscale (20.25x!) is still missing a ton of detail; you can't see the strands of the net in either the VSR or non-VSR content, for example. Even the Tottenham Hotspur logo in the top-right looks much better in the 720p upscaled sample than in the 480p one (sorry about the overlay on one of the images).
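Those upscale ratios count total pixels, so the per-axis scale factor gets squared. A quick check in Python, assuming each source shares the 16:9 aspect ratio of the 3840x2160 target so that only the vertical resolution matters:

```python
# Heights only; assumes every source shares the 16:9 aspect ratio of 3840x2160.
HEIGHTS = {"480p": 480, "720p": 720, "1080p": 1080, "4K": 2160}


def upscale_factors(src, dst="4K"):
    """Return (per-axis scale factor, total pixel-count scale factor)."""
    linear = HEIGHTS[dst] / HEIGHTS[src]
    return linear, linear ** 2


for src in ("1080p", "720p", "480p"):
    linear, pixels = upscale_factors(src)
    print(f"{src} -> 4K: {linear}x per axis, {pixels}x the pixels")
```

For 480p, 2160 / 480 = 4.5 per axis, and 4.5 squared gives the 20.25x figure: roughly 19 of every 20 output pixels have to be inferred.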

The good news: If you have an RTX 30- or 40-series graphics card, you can download the latest Nvidia drivers and give VSR a shot. You'll also need the latest Chrome or Edge browser, at least for now. But with the appropriate software, VSR seems to work on every video we have tried so far.

The bad news: RTX 20-series users are left out in the cold, for now at least. We asked Nvidia about this requirement and don't yet have a precise answer as to why Turing was omitted. It's possible that Nvidia trained the network for its Tensor cores with sparsity, which would mean it can currently only run on Ampere and later architectures. But it seems Nvidia could easily have opted for Turing compatibility from the start, had it wanted to, because the actual computational workload appears relatively small.
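For context on the sparsity theory: Ampere and later Tensor cores can accelerate 2:4 fine-grained structured sparsity, where at most two of every four consecutive weights are nonzero, and Nvidia's "with sparsity" teraflops figures assume that pattern. The sketch below shows the simplest way to impose it, magnitude pruning of each group of four. It only illustrates the pattern; whether the VSR network actually uses sparsity remains our speculation.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in every group of four weights."""
    if len(weights) % 4:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for i in range(0, len(weights), 4):
        group = weights[i:i + 4]
        # Indices of the two largest-magnitude weights in this group survive.
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        pruned.extend(w if j in keep else 0.0 for j, w in enumerate(group))
    return pruned


print(prune_2_of_4([0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.01]))
# [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.4, 0.0]
```

Turing's Tensor cores have no hardware path that skips the zeroed weights, so a network pruned this way would still run there, just without the 2x throughput benefit. That's part of why sparsity alone doesn't obviously explain the RTX 20-series omission.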

To show this, we tested VSR on the same video sequence (the 720p NHL game upscaled to 4K) on two GPUs at opposite extremes of the supported lineup, using both the VSR Quality 1 and VSR Quality 4 settings. At the top end, we have the RTX 4090 Founders Edition, while at the bottom, we have the EVGA RTX 3050. The 4090 has a theoretical 661 teraflops of FP16 compute, with sparsity. The RTX 3050 tips the scales at just 73 teraflops, again with sparsity. In practice, the output looked the same on both cards. More importantly, we captured power data for just the graphics cards, which proves telling.

| GPU | VSR Off (W) | VSR On, Quality 1 (W) | VSR On, Quality 4 (W) |
|---|---|---|---|
| RTX 4090 | 28.9 | 32.8 | 36.9 |
| RTX 3050 | 13.0 | 15.9 | 15.9 |

Clearly, neither GPU is being pushed even remotely hard by the VSR algorithm. The 4090 uses 4W more power with VSR quality set to 1, and 8W more with quality 4. In contrast, the RTX 3050 needed just 3W more for either VSR setting. That means the Tensor cores aren't even close to maxed out on either GPU, which suggests that even a lowly mobile RTX 3050 with 4GB of VRAM should still be able to run VSR.
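Those rounded deltas come straight from the measured wattages in the table. This short script recomputes them, along with the ratio of the two cards' theoretical sparse FP16 throughput, to show how lopsided the comparison is: about 9x the compute, yet only a few watts of extra load on either card.

```python
# Measured graphics card power in watts, from our VSR testing table.
POWER_W = {
    "RTX 4090": {"off": 28.9, "q1": 32.8, "q4": 36.9},
    "RTX 3050": {"off": 13.0, "q1": 15.9, "q4": 15.9},
}
# Theoretical FP16 Tensor throughput with sparsity, in teraflops.
SPARSE_FP16_TFLOPS = {"RTX 4090": 661, "RTX 3050": 73}


def vsr_power_delta(gpu):
    """Extra watts drawn at VSR quality 1 and quality 4, versus VSR off."""
    p = POWER_W[gpu]
    return round(p["q1"] - p["off"], 1), round(p["q4"] - p["off"], 1)


for gpu in POWER_W:
    d1, d4 = vsr_power_delta(gpu)
    print(f"{gpu}: +{d1} W at quality 1, +{d4} W at quality 4")

ratio = SPARSE_FP16_TFLOPS["RTX 4090"] / SPARSE_FP16_TFLOPS["RTX 3050"]
print(f"4090 vs 3050 peak compute: {ratio:.1f}x")  # 9.1x
```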

Overall, it's an interesting take on video enhancement. Plenty of algorithms that don't use machine learning have also been attempted for upscaling and enhancement, and some might be able to match VSR, but they aren't enabled just by downloading the latest Nvidia drivers and Chrome browser updates.
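One of the oldest non-ML approaches is unsharp masking. You blur the image, subtract the blur from the original to isolate fine detail, then add a scaled copy of that detail back. A 1-D sketch with a 3-tap [1, 2, 1]/4 blur follows. The kernel and the `amount` parameter are illustrative choices, shown only as an example of the conventional filters VSR competes with.

```python
def unsharp_mask_1d(row, amount=1.0):
    """Sharpen a row of pixels: original + amount * (original - 3-tap blur)."""
    n = len(row)
    out = []
    for i in range(n):
        left = row[max(i - 1, 0)]       # clamp at the row edges
        right = row[min(i + 1, n - 1)]
        blurred = (left + 2 * row[i] + right) / 4
        value = row[i] + amount * (row[i] - blurred)
        out.append(min(255.0, max(0.0, value)))  # keep within 8-bit range
    return out


print(unsharp_mask_1d([50, 50, 100, 100]))  # [50.0, 37.5, 112.5, 100.0]
```

The undershoot and overshoot on either side of the edge are what make it look crisper, and also what produces the halo artifacts that over-sharpened video is known for.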

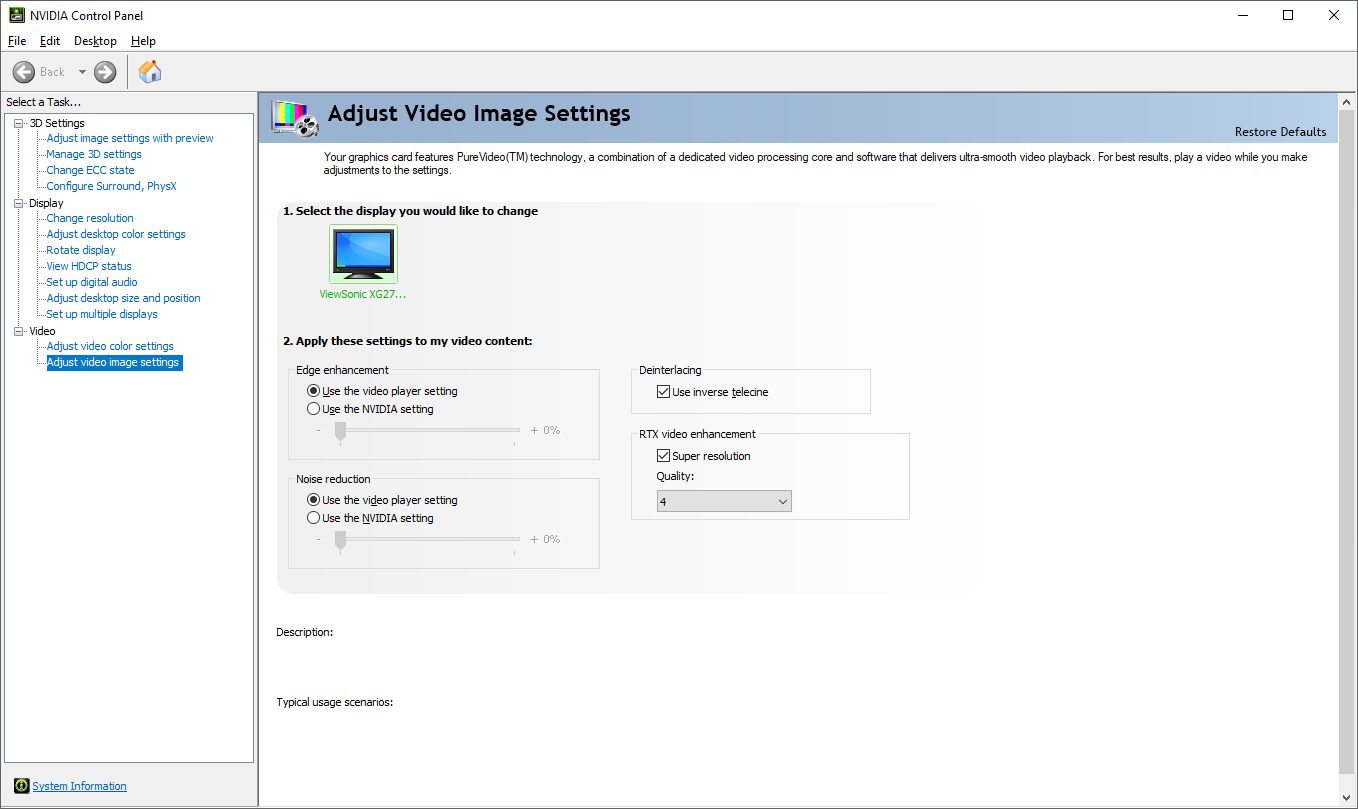

To turn on VSR, there's a toggle in the latest drivers (we received early access to Nvidia's 531.14 drivers for the purposes of this review). First, tick the "Super resolution" box under RTX video enhancement, then select the desired quality. Nvidia says higher quality settings may put more load on your GPU, so if you're watching a video stream on a second monitor while playing a game, you may want to stick with a lower setting. If you're only watching a video, though, you may as well go whole hog and set the quality to 4.


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


DataPotato

A 2.9 watt algorithm restricted to only the newest 2 generations of only their card. "Nice" job Nvidia.

Now it's AMD's turn: go make a 0.5 watt screen-space filter that runs on every card since 2004 and no one will adopt.

JarredWaltonGPU

Jake Hall said: So it only works in a browser?

Yes, as far as I understand it, at least for now, this is purely for browsers. I wouldn't be shocked to see Nvidia offer a final version (or at least, RTX 20-enabled version) for use in other utilities like VLC, but we'll have to wait and see. I'll check with Nvidia as well to see if that's officially in the works.

PlaneInTheSky

https://i.postimg.cc/sXf0ccX2/ghfhfhfhf.jpg

Cool 240p image.

This might have been interesting in the 90s when people were watching 360p cat videos.

But video content is now 1080p+ and 4k. Video upscaling tech isn't relevant anymore.

And this tech also comes at a cost, it drains battery life. Simply not worth it.

JarredWaltonGPU

PlaneInTheSky said: Cool 240p image.

This might have been interesting in the 90s when people were watching 360p cat videos.

But video content is now 1080p+ and 4k. Video upscaling tech isn't relevant anymore.

And this tech also comes at a cost, it drains battery life. Simply not worth it.

As noted in the text, it's an exaggerated picture to illustrate the idea; no one uses nearest neighbor interpolation these days unless it's for effect. Also, it's 360p.

There's plenty of 720p content still out there; sports streams, for example, are regularly 1080p or lower. Also, lower bitrates can cause blocking, which the AI algorithm is also trained to at least mostly remove.

And battery life? I guess if you're only watching videos on unplugged laptops, yes, it might drop a bit. Based on the 3050 results and a 50Wh battery, you might go from four hours of video playback to 3.3 hours if you turn on VSR and watch 720p upscaled to 4K. Except then you're on a laptop with a 1080p display most likely, which changes the upscaling requirements — there's no point in upscaling 1080p to 1080p, though perhaps the deblocking and enhancement stuff would still be useful.
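The 3.3-hour estimate works out as follows. This assumes a constant average draw (50Wh over four hours is 12.5W), and it assumes the desktop RTX 3050's 2.9W VSR increase carries over to a laptop, which is itself a guess.

```python
def playback_hours(battery_wh, base_hours, extra_watts):
    """Estimate runtime after adding extra_watts to a constant average draw."""
    base_draw = battery_wh / base_hours  # 50 Wh over 4 h -> 12.5 W average
    return battery_wh / (base_draw + extra_watts)


# RTX 3050 VSR power increase (15.9 W on vs 13.0 W off) from the desktop test.
hours = playback_hours(50, 4, 15.9 - 13.0)
print(f"{hours:.2f} hours")  # 3.25 hours
```

That's about 3.25 hours, which rounds to the 3.3 quoted, or roughly 45 minutes less playback.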

Is it a panacea? No. But is it potentially useful if you already have an RTX GPU? Sure.

RTX 2080

PlaneInTheSky said: Cool 240p image.

This might have been interesting in the 90s when people were watching 360p cat videos.

But video content is now 1080p+ and 4k. Video upscaling tech isn't relevant anymore.

And this tech also comes at a cost, it drains battery life. Simply not worth it.

Not all online video is 1080p or up. Many videos only go up to 1080p; upscaling to 4K would be useful.

Additionally, if you have a bad internet connection but ample GPU power and no battery life issues (think trying to watch something in a public place using slow public wifi, but you're plugged in or have plenty of battery life) being able to upscale a lower bitrate stream into a higher one almost for free would be worthwhile to most people.

There are also plenty of use cases out there with people wanting to watch older content that is only available at 480p.

If you really have no use case where you find any of this at all beneficial, then just don't use it. Nobody is forcing you. There are a lot of people out there though who have use cases in which this will be helpful.

garrett040

Will this become a feature I can simply use for video files on my computer? Why the browser requirement? I already bought the overpriced card.

-Fran-

Thanks for the initial impression of the tech.

How does this play when playing games and watching YT on the side? How much grunt does it take away from the GPU?

Regards.

ComputePronto

While this is not the best AI upscaling I have seen, it is pretty good when considering that it is done with only an extra 4-8 W in real time. The browser requirement is pretty limiting, though...