Talking Heads: VGA Manager Edition, September 2010

CPU/GPU Hybrids And The Death Of The Graphics Business?

Question: Will there ever come a time when integrated graphics make discrete graphics cards unnecessary?

- There is still a lot of room for performance improvement in discrete graphics and 3D applications. As long as applications do not satisfy our need for realism, discrete will still be necessary.

- Discrete graphics cards will always have their place, especially in the high-end segment, whose power envelope will not fit into a CPU/GPU hybrid solution.

- At the current stage, we don't think the CPU/GPU hybrids can replace high-end discrete graphics. The design architecture of CPU-based CPU/GPU hybrids cannot really compete with what discrete graphics can do.

- The levels of integration necessary to deliver even today's high-end graphics performance, together with full CPU logic, are unrealistic for the foreseeable future. In any case, this is a moving target, as graphics performance continues to multiply year on year. There will always be specialist applications in design or animation, for example, where the highest levels of graphics performance are still not enough to deliver results in a short time, and multiple graphics arrays or even higher-performing GPUs are demanded.

- Discrete graphics will always be necessary. The pace of development and power restrictions on integrated GPUs will always keep them a minimum of one generation behind. The average user will also see increased benefits of a discrete graphics card as more and more programs/operating systems take advantage of the parallel processing power of the GPU. I think these new applications, in addition to games of course, will continue to showcase the benefit of a graphics card. This is very similar to the reason "wireless" will never replace "wired" networking entirely: there is always more demand for bandwidth, and "wireless," or integrated graphics in this case, may be good enough but will never fully support the latest generation.

- Discrete graphics cards should still have better performance, and the end user should consider the total cost of the whole PC. A CPU/GPU hybrid solution should carry a higher cost premium than a normal one.

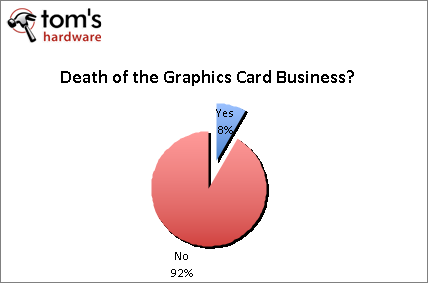

The outlier here really has us scratching our heads. While we were curious to see if anyone had an apocalyptic vision on the horizon, we honestly never expected an outright “yes.” The need for discrete graphics solutions underpins the very existence of these companies (or a very large portion of their business). That respondent didn’t provide details behind the answer, which only raises more questions. While not a tier-one company, the respondent’s employer is still a large manufacturer/supplier in terms of worldwide unit sales. Does this mean it is planning to retreat from the graphics card market, either in the short term or the long term? Or does it see this as a very long-term technological advancement arriving at an unforeseeable date?

In our opinion, the video card industry isn’t disappearing one, two, or even five years from now. One has to wonder whether CPU/GPU hybrids are as much of a “game changer” as AMD and Intel hope them to be (or if they’re only game changers for each company’s bottom line, as they cut costs via integration and maintain pricing by offering better performance). Even if they are, these folks have a valid point. The applications and demands made of graphics solutions will continue to multiply, most likely to a degree that outpaces the development of integrated graphics for some time to come.

Combine this with a desire to enable general-purpose computing using GPUs, and it is easy to see how these companies will continue to exist in the long term. If you recall the emergence of 64-bit computing, Intel and AMD were both heavily invested in pushing adoption. Fast forward to the present day, and we are still lacking a concerted effort by the software development community to adopt 64-bit programming. We still lack a 64-bit version of Firefox, and there is no ETA on a 64-bit Flash plug-in. While the benefits of 64-bit in these two scenarios may in fact be negligible, it shows how slow the software community has been in contrast to what today’s hardware provides. Only recently did Adobe update its suite of apps to support a 64-bit architecture, and we’ve already shown the effect to be massive.

If all of AMD’s and Intel’s wishes come to fruition, the video card industry will no doubt be shaken up. In a worst-case scenario, it seems likely that within a couple of years, we will see the number of third-party board vendors condense (indeed, industry veteran BFG has already disappeared, and customers have been told directly that the company wasn’t getting enough support from Nvidia). What doesn’t seem to be changing is the focus on the high-end graphics space. This market will never really be satisfied by integrated graphics.

This makes sense considering what one representative pointed out: “The pace of development and power restrictions on integrated GPUs will always keep them a minimum of one generation behind. The average user will also see increased benefits of a discrete graphics card as more and more programs/operating systems take advantage of the parallel processing power of the GPU.” With general-purpose GPU programming, there should still be a huge performance delta between a high-end discrete graphics card and a “high-end” IGP solution.

If you talk to the people in the motherboard industry, everyone is more confident in their own strengths after watching competitors exit the market amid what we might think of as the second tech bust. It was a wake-up call that many needed to focus on what really made brands stand out in the first place: overclockability, stability, feature set, pricing, quality of service, and so on. In the short term, the only business really being threatened is the low-end discrete space. While there is volume here, it really isn’t in the channel if you are looking at the marginal profit. It is a decent revenue stream for tier-one and tier-two board vendors, simply because of the large volumes on lower contracted pricing. However, the real money is in the “sweet spot” from $125 to $175. If this means card makers are going to invest more time and money into making better cards in that range, everyone might be better off having the IGP solutions take the low end of the spectrum. The war for the low-end discrete space is going to drag out for at least a year and a half, because success depends on multiple factors (price, driver support, discretionary spending, economic climate, etc.), so it feels like there is ample time for all card makers to stay ahead of the curve.


Who knows, by 2020 AMD will have purchased Nvidia and renamed GeForce to GeRadeon... And talk about integrating RAM, processor, graphics, and hard drive in a single chip and naming it "MegaFusion"... But there will still be Apple selling Apple TV without 1080p support, and yeah, free bumpers for your iPods (which won't play songs if touched by hands!!!)

-

Kelavarus That's kind of interesting. The guy talked about Nvidia taking chunks out of AMD's entrenched position this holiday with new Fermi offerings, but seemed to miss the fact that, most likely, by the holiday AMD is already going to be rolling out its new line. Won't that have any effect on Nvidia?

-

The problem I see is that while AMD, Intel, and Nvidia are all releasing impressive hardware, no company is making impressive software to take advantage of it. In the simplest example, we all have gigs of video RAM sitting around now, so why has no one written a program which allows it to be used when not doing 3D work, such as a RAM drive or pooling it in with system RAM? Similarly with GPUs, we were promised years ago that PhysX would lead to amazing advances in AI and game realism, yet it simply hasn't appeared.

The anger that people showed towards Vista and its horrible bloat should be directed at all major software companies. None of them have achieved anything worthwhile in a very long time.

-

corser I do not think that including an IGP on the processor die and connecting them means that discrete graphics vendors are dead. Some people will have graphics requirements that overwhelm the IGP and will connect an 'EGP' (External Graphics Processor). Uhmmmm... maybe I created a whole new acronym.

Since the start of that idea, I have believed that an IGP on the processor die could serve to offload math operations and complex transformations from the CPU to the IGP, freeing CPU cycles for what the CPU is intended to do.

Many years ago Apple did something similar with its Quadra models, which sported a dedicated DSP to offload some tasks from the processor.

My personal view on all this hype is that we're moving to a different computing model: from a point where all the work was directed to the CPU, taking small steps toward having specialized processors around the CPU do part of its work (think of the first fixed-instruction graphics accelerators, sound cards that offload the CPU, PhysX, and others).

From a standalone CPU -> SMP -> A-SMP (Asymmetric SMP).

-

silky salamandr I agree with Scort. We have all this fire sitting on our desks and it means nothing if there's no software to utilize it. While I love the advancement in technology, I really would like devs to catch up with the hardware side of things. I think everybody is going crazy adding more cores and having an arms race as a marketing tick mark, but there are no devs stepping up to write for it. We all have invested so much money into what we love, but very few of us (not me at all) can actually code. With that being said, most of our machines are "held hostage" in what they can and cannot do.

But great read.

-

corser Hardware has to be out well before software starts to take advantage of it. It has been like this since the start of computing.

-

Darkerson Very informative article. I'm hoping to see more stuff like this down the line. Keep up the good work!

-

jestersage Awesome start for a very ambitious series. I hope we get more insights, and soon.

I agree with Snort and silky salamandr: we are held back by developments on the software side. Maybe that is because developers need to take backwards compatibility into consideration. Just take games, for example: developers would like to keep the minimum and even recommended specs down so that they can get more customers to buy. So we see games made tough for the top-end hardware that, through tweaks and reduced detail, can be played on a 6-year-old Pentium 4 with a 128 MB AGP card.

From a business consumer standpoint, and given that I work for a company that still uses tens of thousands of Pentium 4s for productivity-related purposes, I figure that adoption of the GPU/CPU in the business space will not happen for another 5-7 years AFTER they launch. There is simply no need for an i3 if a Core 2-derivative Pentium Dual-Core or Athlon X2 can still do spreadsheets, word processing, email, research, etc. Pricing will definitely play into the timelines as the technology ages (or matures), but both companies will have to get money to pay for all that R&D from somewhere, right?

-

smile9999 Great article, btw. Out of all this, what I got is that the hybrid CPU/GPU model seems more of a gimmick than an actual game changer. The low-end market has a lot of players; IGPs are a major player in that field and they are great at it, and if that wasn't enough, there are still the Nvidia and ATI offerings, so I don't think it will really shake the water as much as they predict.