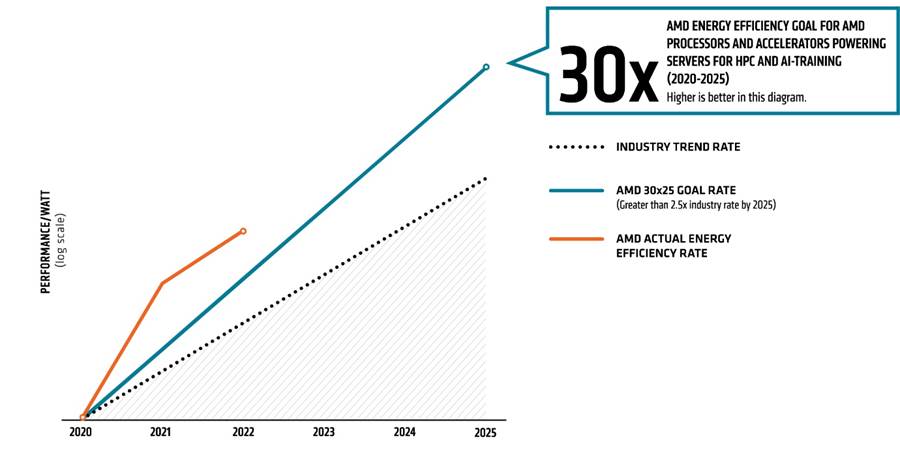

AMD: On Track to Improve Accelerated Datacenter Efficiency by 30x by 2025

Efficiency of AMD's CPUs and GPUs in AI and HPC applications increasing.

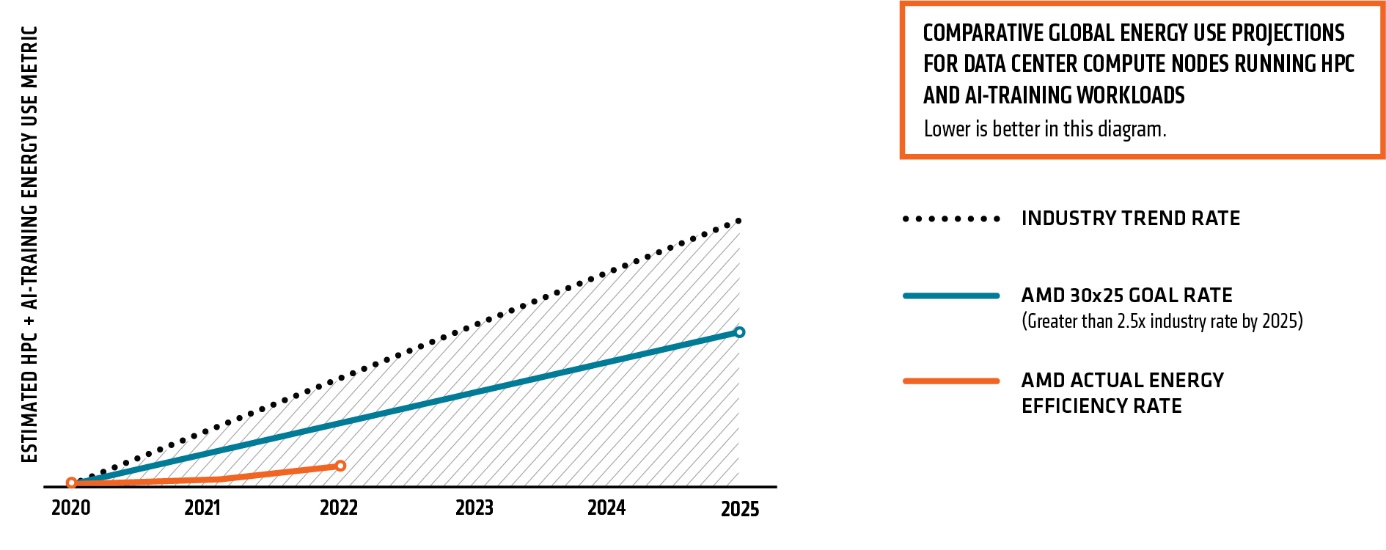

The amount of data generated by people and machines has grown exponentially, which requires a steady increase in datacenter compute performance. To meet the requirements of next-generation datacenters, AMD last year set itself a goal to increase the energy efficiency of its accelerated datacenter platforms used for artificial intelligence (AI) and high-performance computing (HPC) workloads by 30 times by 2025 compared with its 2020 platforms.

This week the company shared its progress, and it appears that AMD is advancing quite well. The energy efficiency of its accelerated platform for AI and HPC — which includes EPYC processors and Instinct compute GPUs — has improved by 6.79 times from the 2020 baseline.
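To put the 6.79x figure in perspective, the short Python sketch below works out the compound yearly improvement implied by the 30x goal. It is a back-of-the-envelope illustration, not AMD's methodology: the function name `required_annual_factor` is hypothetical, and the split into five years in total (2020 to 2025) with three years remaining after the 2022 update is an assumption based on the dates mentioned in the article.

```python
def required_annual_factor(total_factor: float, years: float) -> float:
    """Constant year-over-year multiplier that compounds to total_factor."""
    return total_factor ** (1.0 / years)


GOAL = 30.0        # target efficiency gain versus the 2020 baseline
ACHIEVED = 6.79    # gain reported in the 2022 update

# Assumption: the goal spans 2020 to 2025 (5 years), 3 of which remain.
TOTAL_YEARS = 5
YEARS_LEFT = 3

remaining = GOAL / ACHIEVED  # gain still needed on top of 6.79x

print(f"Average yearly gain implied by the goal: "
      f"{required_annual_factor(GOAL, TOTAL_YEARS):.2f}x")   # about 1.97x
print(f"Gain still required: {remaining:.2f}x")                # about 4.42x
print(f"Yearly gain needed from here on: "
      f"{required_annual_factor(remaining, YEARS_LEFT):.2f}x")  # about 1.64x
```

Under these assumptions, the platform would need to improve by roughly 1.64x per year over the remaining period to reach 30x.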

For those who do not remember, AMD's baseline machine for its 30x25 comparisons is a server based on its EPYC 7742 CPU (64C/128T, 2.25GHz – 3.40GHz, 256MB, 225W) and four Instinct MI50 compute GPUs (5th Gen GCN, 3840 stream processors at 1450 MHz – 1725 MHz, 300W). This machine produced 5.26 TFLOPS per MI50 on 4k matrix DGEMM with trigonometric data initialization, and 21.6 TFLOPS of FP16 on 4k matrices while consuming 1582W.
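As a rough illustration of what the baseline numbers mean in performance-per-watt terms, the Python sketch below combines the FP64 DGEMM figure with the quoted system power. It assumes the 1582W figure applies to the whole machine while running this workload, which the article does not state explicitly; the function name `gflops_per_watt` is hypothetical.

```python
def gflops_per_watt(tflops_per_gpu: float, gpu_count: int, system_watts: float) -> float:
    """Aggregate GPU throughput (in GFLOPS) divided by system power (in watts)."""
    total_gflops = tflops_per_gpu * gpu_count * 1000.0
    return total_gflops / system_watts


# Figures quoted for the 2020 baseline machine.
MI50_DGEMM_TFLOPS = 5.26   # FP64 DGEMM, per MI50
GPU_COUNT = 4
SYSTEM_WATTS = 1582.0      # assumed to be whole-system power for this workload

baseline = gflops_per_watt(MI50_DGEMM_TFLOPS, GPU_COUNT, SYSTEM_WATTS)
print(f"Baseline FP64 efficiency: {baseline:.2f} GFLOPS/W")  # about 13.30
```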

Since 2020, AMD has released a new generation of CPUs (3rd Generation EPYC) and two new generations of compute GPUs based on its CDNA architecture, which is designed specifically for AI and HPC. AMD's 2022 machine is equipped with a 64-core EPYC 7003-series CPU and four Instinct MI250 compute accelerators (CDNA 2.0, 13312 SPs at 1.0GHz – 1.70GHz, 500W), which deliver 13.66 times more FP16 TFLOPS than four Instinct MI50 accelerators. Given the tremendous progress that AMD's compute GPUs have made in recent years, it is not exactly surprising that AMD is demonstrating steady progress toward its 30x25 goal.

AMD's 30x25 initiative is a multi-lane roadmap that involves increasing the raw performance of AMD's accelerated datacenter hardware, improving its performance-per-watt efficiency, implementing application-specific optimizations, and refining the software stack to both amplify performance and lower power consumption. Any kind of improvement (versus the 2020 baseline hardware) essentially brings AMD closer to its goal.

For example, once AMD's reference machine moves on to the DDR5-supporting 96-core 4th Generation EPYC 'Genoa' processor, its energy efficiency will get even higher due to the lower power consumption of DDR5 memory compared to DDR4 SDRAM. Meanwhile, further improvements in the performance of AMD's CDNA GPUs in AI and HPC applications, via hardware and/or software refinements, will take the efficiency higher still.

"While there is more to go to reach our 30x25 goal, I am pleased by the work of our engineers and encouraged by the results so far. I invite you to check in with us as we continue to annually report on progress," said Mark Papermaster, CTO of AMD, in a statement.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

gdmaclew said: I find it very strange that Tom's doesn't have a story about AMD's latest earnings report.

KyaraM said:

gdmaclew said: I find it very strange that Tom's doesn't have a story about AMD's latest earnings report.

Why should they?

On topic. Sounds good, especially considering ever higher energy costs and environmental destruction. Let's see what the future brings and if things stay this way.