The DOE Announces El Capitan, World's New Fastest Supercomputer Will Hit 1.5 ExaFLOPS

Developing new nuclear weapons, maintaining the U.S. nuclear weapons stockpile, preventing nuclear terrorism, and powering the U.S. military's nuclear-powered submarines and aircraft carriers all fall to the National Nuclear Security Administration (NNSA). However, nuclear non-proliferation acts, not to mention environmental concerns, removed actual nuclear testing (i.e., explosions) as an option for conducting further research in 1992.

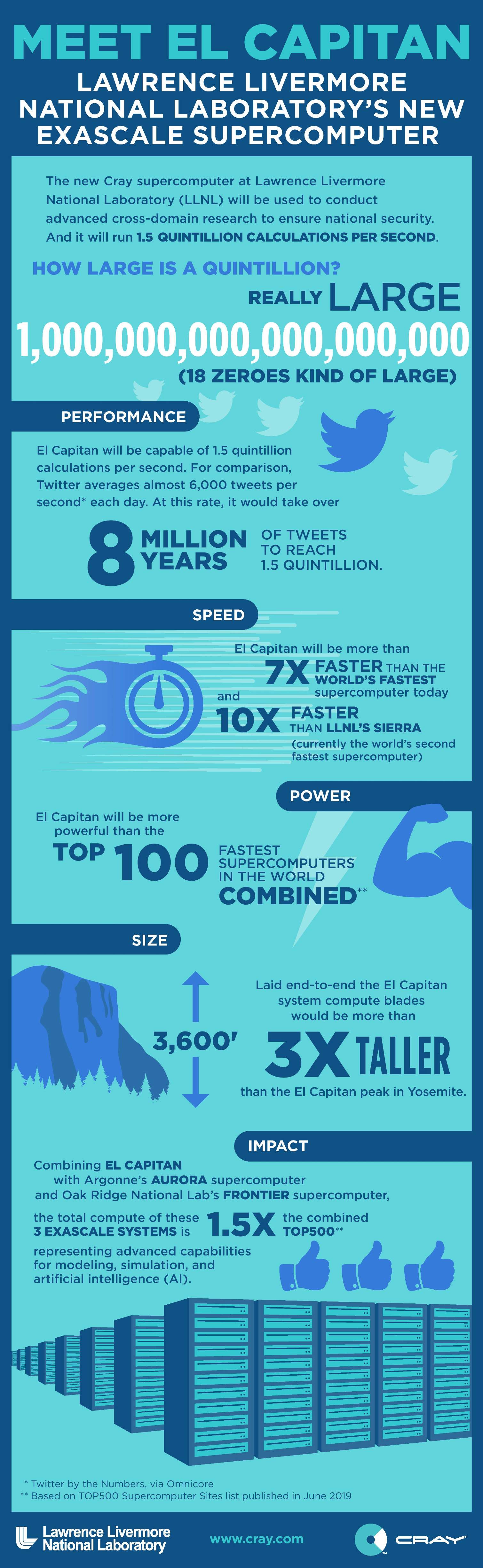

The DOE is currently redesigning every component of its nuclear warheads and delivery systems due to aging designs, and with live testing off the table, supercomputers are the only recourse. That requires an unprecedented level of computational power, which will come in the form of El Capitan, a new exaFLOP-scale (exascale) supercomputer slated to come online in 2023. Upon completion, El Capitan will be faster than the top 100 supercomputers in the world, combined.

The Department of Energy (DOE) and the NNSA announced today that Cray's Shasta supercomputing platform would form the backbone of El Capitan. The new premier supercomputer in the U.S.'s arsenal will reach up to 1.5 exaFLOPS of computational power, or 1.5 quintillion calculations per second. A quintillion is one billion billion, and that level of performance makes El Capitan 10 times faster than any existing supercomputer.
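For readers who want to check the unit arithmetic behind those figures, here is a minimal sketch. The function name `implied_baseline_petaflops` is our own illustration, not anything from the DOE or Cray, and the "implied baseline" is simply what a 10x speedup claim works out to, not a measured figure for any particular machine.

```python
# Back-of-the-envelope check of the headline numbers (illustrative only).
PETA = 10**15  # one quadrillion
EXA = 10**18   # one quintillion

# "Up to 1.5 exaFLOPS", per the announcement.
el_capitan_flops = 1.5 * EXA

# A quintillion is a billion billion.
assert 10**9 * 10**9 == EXA


def implied_baseline_petaflops(target_flops, speedup):
    """Performance (in petaFLOPS) of a system that `target_flops` would beat by `speedup`x."""
    return target_flops / speedup / PETA


# El Capitan expressed in petaFLOPS.
el_capitan_petaflops = el_capitan_flops / PETA

# What "10 times faster than any existing supercomputer" implies for the current leader.
baseline = implied_baseline_petaflops(el_capitan_flops, 10)

print(f"El Capitan: {el_capitan_petaflops:.0f} petaFLOPS")
print(f"Implied fastest existing system: {baseline:.0f} petaFLOPS")
```

In other words, 1.5 exaFLOPS is 1,500 petaFLOPS, and the 10x claim corresponds to a current leader at roughly 150 petaFLOPS.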

El Capitan will use artificial intelligence and machine learning to conduct 3D simulations at an unprecedented scale and speed, and at resolutions that are largely impossible with existing supercomputers that tend to peak at ~400 petaFLOPS of performance and offer sustained performance in the 200-petaFLOP range.

Of course, the first question is which hardware the DOE, which hosts four of the world's ten fastest supercomputers, will use to power the new system. The DOE disclosed today that it had awarded a $600 million contract to Cray, which is currently being acquired by HPE, to build the system with its Shasta architecture, Slingshot interconnect, and software platform. This is the same platform that powers both of the DOE's other exascale supercomputers, Aurora and Frontier.

The one-exaFLOP Aurora comes armed with undisclosed 'future' Intel Xeon processors, Intel's not-yet-released Xe graphics architecture, and Optane Persistent DIMMs. Meanwhile, the 1.5-exaFLOP Frontier comes packing next-generation AMD EPYC processors and Radeon Instinct GPUs. You'll notice that neither of those systems uses Nvidia GPUs, which have long been the mainstay for supercomputers.

And we aren't sure if Nvidia will make an appearance in El Capitan, either. The DOE has yet to make a final decision on which processors it will use for the system, which is odd given that it already has performance projections and the design is obviously in the final stages, but we do know that the architecture will follow the Shasta architecture's standard combination of GPUs and CPUs. The Shasta architecture currently only supports Intel, AMD, and Nvidia CPUs/accelerators, so that seemingly eliminates IBM's POWER or one of the many variants of ARM processors. Cray says it will announce El Capitan's specific CPUs and GPUs at a later date.


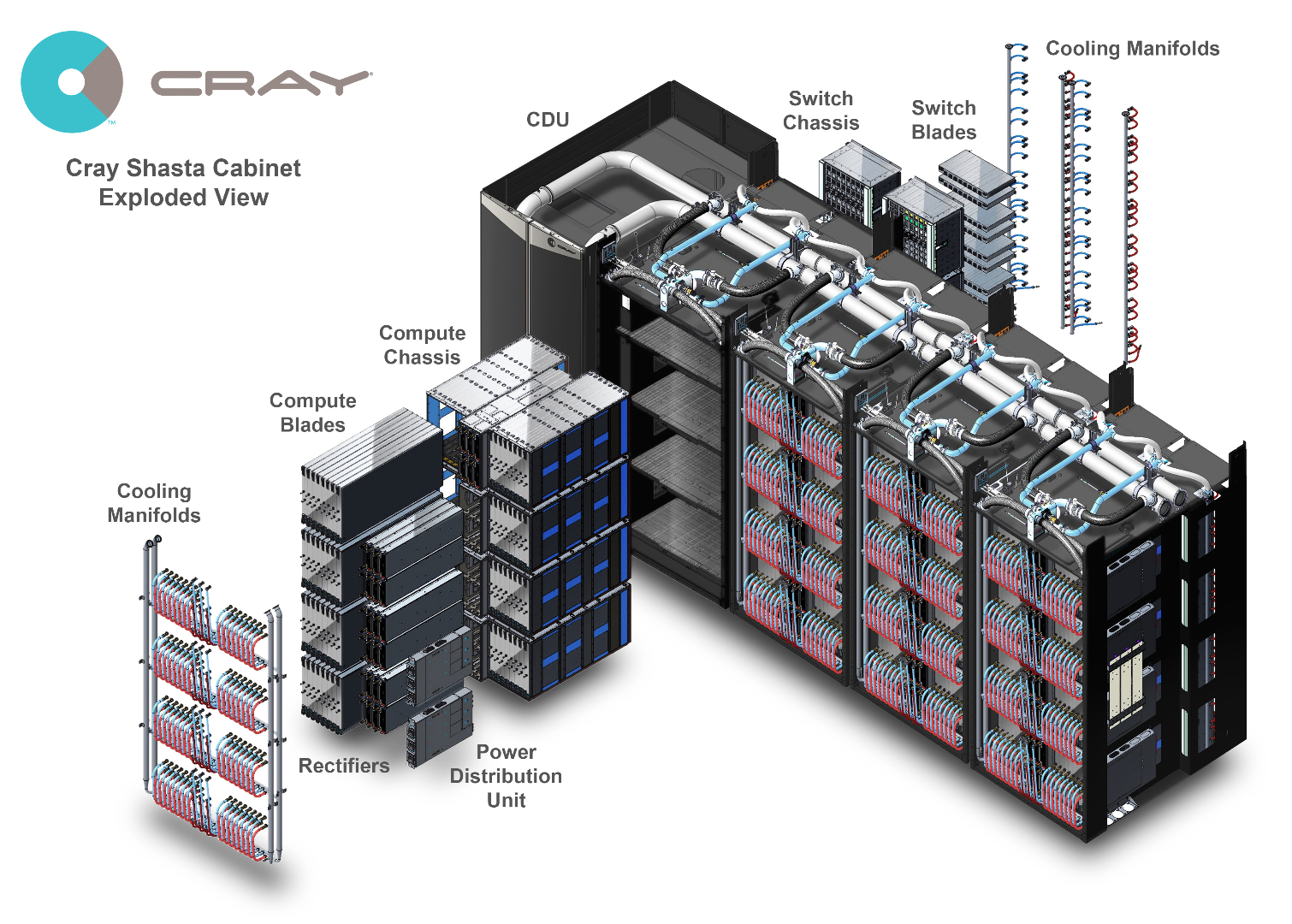

Mind-bending computational power is always impressive, but power efficiency is also important for supercomputing. Although final power consumption figures haven't been revealed, which makes sense because the DOE hasn't settled on which processors and GPUs it will use, the agency says El Capitan will consume roughly 40 megawatts of power and be four times more efficient than Sierra, the DOE's current fastest supercomputer. That enhanced efficiency comes largely as a byproduct of networking, water cooling, and software optimizations (AI/ML).
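As a rough illustration of what those numbers imply, the sketch below divides the quoted peak performance by the quoted power draw. Both inputs are the approximate figures from the announcement ("up to" 1.5 exaFLOPS and "roughly" 40 megawatts), so treat the result as a ballpark ceiling rather than a measured efficiency. The function name `gflops_per_watt` is our own, and the "implied baseline" is just the result divided by four, not a published Sierra specification.

```python
# Rough efficiency estimate from the announced figures (illustrative only).
EXA = 10**18
MEGA = 10**6
GIGA = 10**9


def gflops_per_watt(flops, watts):
    """Performance per watt, expressed in gigaFLOPS per watt."""
    return flops / watts / GIGA


# Assumptions: peak ("up to") 1.5 exaFLOPS and "roughly" 40 MW.
el_capitan_efficiency = gflops_per_watt(1.5 * EXA, 40 * MEGA)

# What "four times more efficient" would imply for the comparison system.
implied_baseline_efficiency = el_capitan_efficiency / 4

print(f"El Capitan (estimate): {el_capitan_efficiency:.1f} GFLOPS/W")
print(f"Implied baseline: {implied_baseline_efficiency:.2f} GFLOPS/W")
```

Under these assumptions, El Capitan works out to about 37.5 gigaFLOPS per watt at peak.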

Building El Capitan

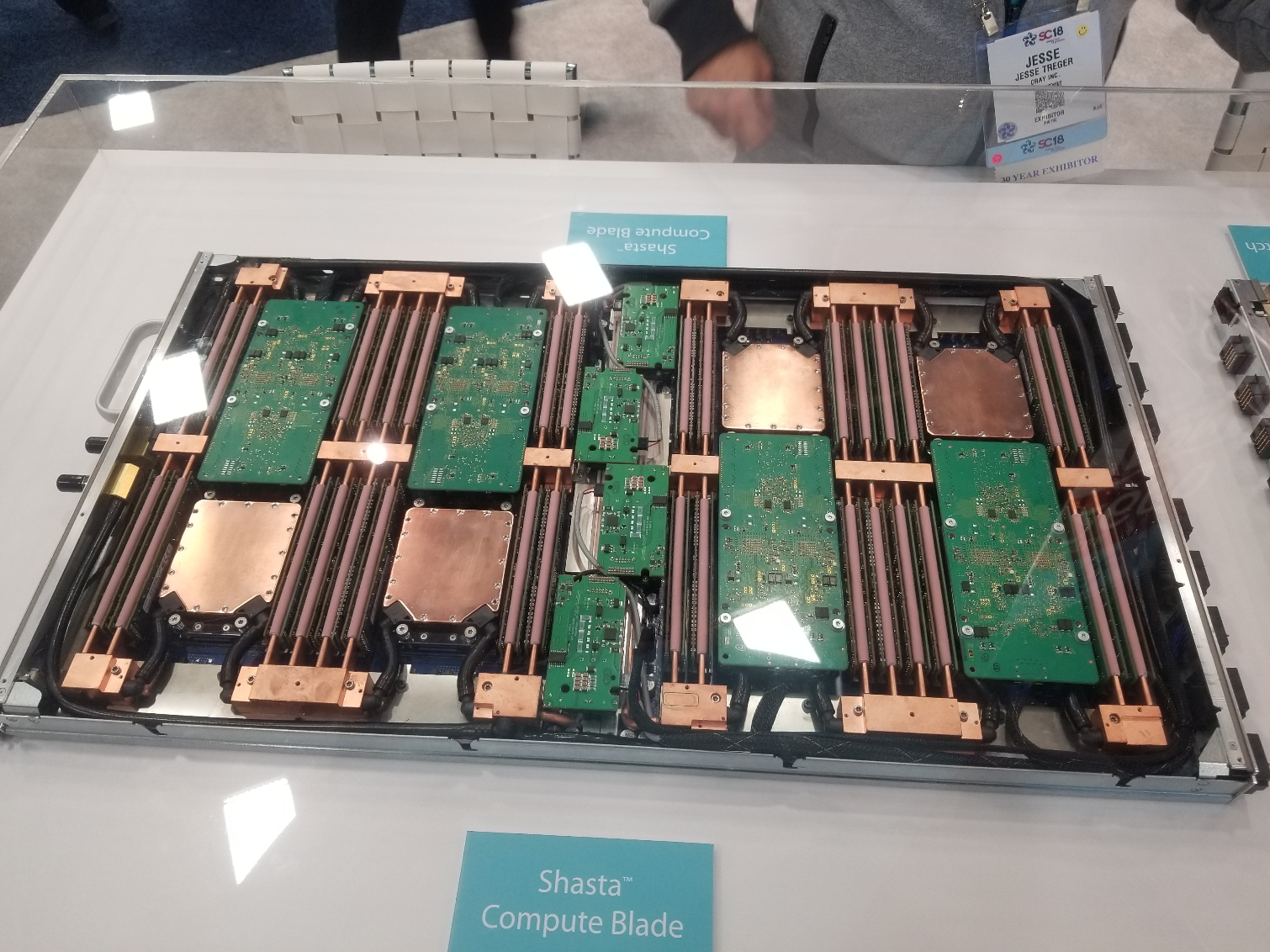

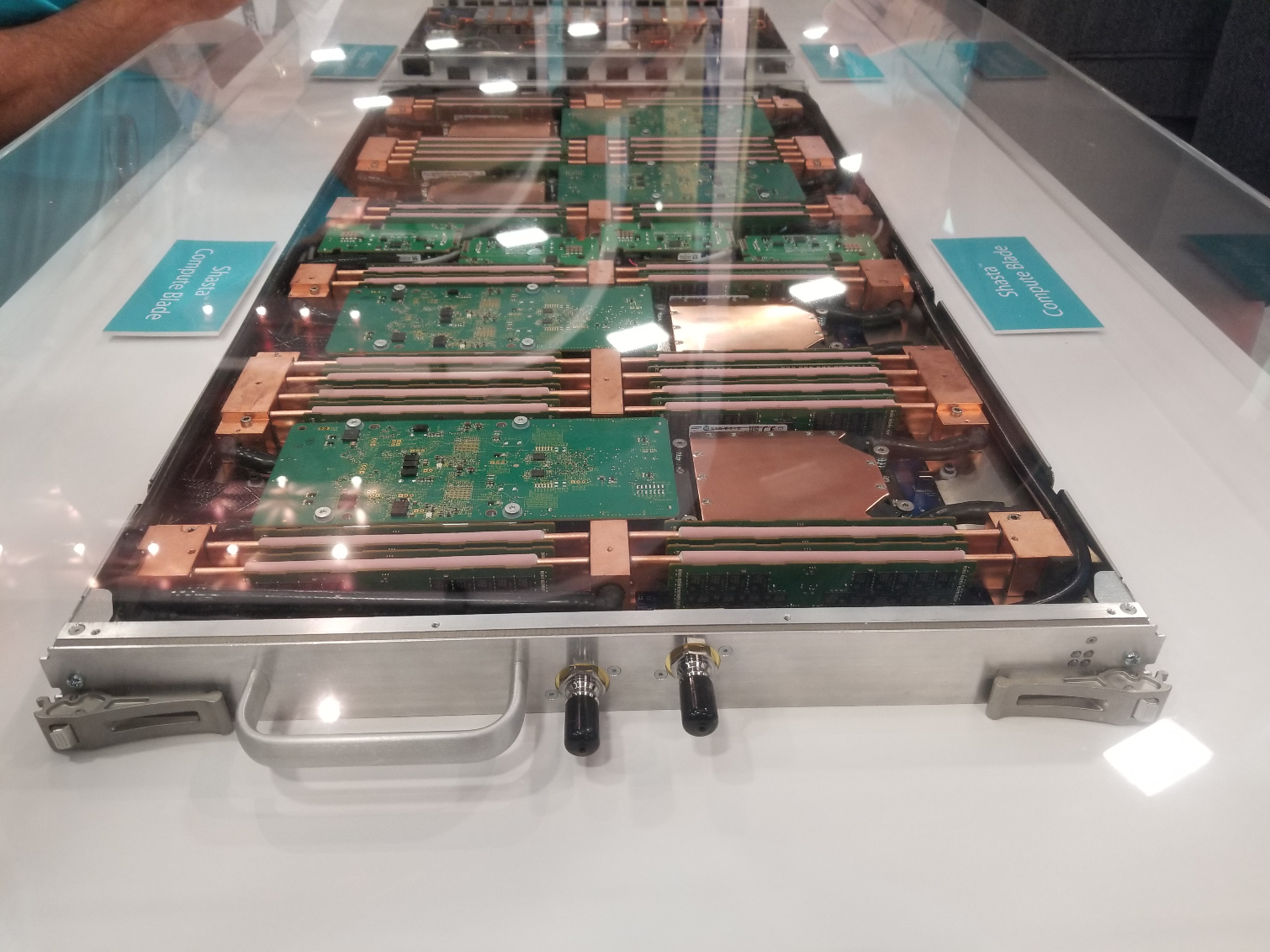

We had a chance to get an up-close look at the Shasta platform at last year's Supercomputing conference. The DOE hasn't revealed how many cabinets El Capitan will use, instead saying that if the blades were laid end to end, they would be three times taller than the 3,600-foot-tall El Capitan peak in Yosemite. We do know that it follows the standard rack-based Shasta architecture, as outlined above.

El Capitan will use Shasta compute blades with four nodes. Each node currently houses up to eight compute sockets and a full complement of memory DIMMs and networking. Cray has specified that the current generation (image above) of its Shasta CPU, GPU and networking blades will not be used in Frontier. Instead, a new undisclosed variant will be pushed into service. It is unclear if current-gen or next-gen models will power El Capitan.

As with the current generation of blades, Cray will use its proprietary Slingshot fabric to connect the nodes to integrated top-of-rack switches that house a Cray-designed ASIC that pushes out 200 Gb/s per switched port. This networking fabric uses an enhanced low-latency protocol that includes intelligent routing mechanisms to alleviate congestion. The interconnect supports optical links, but it is primarily designed to support low-cost copper wiring. The system will be paired with a future version of Cray's ClusterStor storage.

Cray will also develop a new software stack that will allow for immediate deployment once El Capitan is fully constructed. In that vein, Cray is working with all of the involved agencies by establishing a Center of Excellence to optimize existing software code to work with El Capitan when it is fully functional in 2023.

El Capitan marks yet another massive win for Cray, bringing its backlog of Shasta orders to $1.5 billion – and that's before it has shipped a single cabinet. Together, El Capitan, Aurora, and Frontier are poised to be faster than the world's top 500 supercomputers, combined, giving Cray the obvious lead in the supercomputing race. The Shasta platform is also available for standard data center and HPC deployments, which means similar systems will pop up in a data center near you soon.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


bit_user wrote:

"The DOE has yet to make a final decision on which processors it will use for the system, which is odd given that it already has performance projections and the design is obviously in the final stages"

Not really. They usually spec out what they want, at the beginning of the bidding process. So, it's not weird for them to have performance specs in hand, as long as they're far enough along to have at least one viable bidder who can meet that spec.

"The Shasta architecture currently only supports Intel, AMD, and Nvidia CPUs/accelerators, so that seemingly eliminates IBM's POWER or one of the many variants of ARM processors. Cray says it will announce El Capitan's specific CPUs and GPUs at a later date."

Nvidia makes ARM-based SoCs, with their own, fully-custom ARM cores. Nvidia is now even supporting their entire software stack on ARM.