Intel Confirms On-Package HBM Memory Support for Sapphire Rapids

With HBM memory onboard, CPUs start to look like GPUs

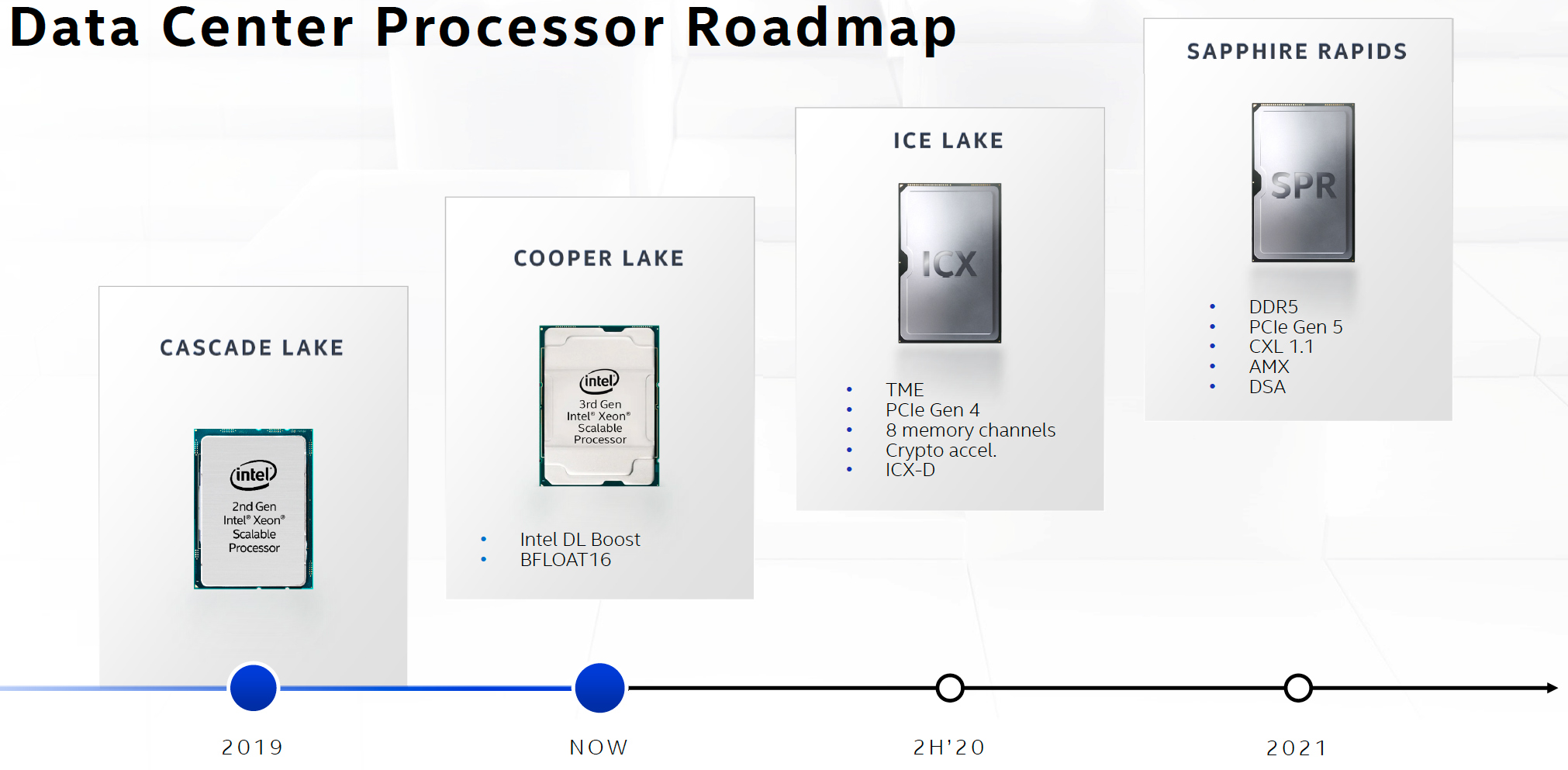

In a document released a couple of days before the New Year, Intel confirmed that its upcoming Xeon Scalable processors based on the Sapphire Rapids microarchitecture would support on-package HBM memory. A memory subsystem featuring HBM will significantly increase the bandwidth available to the CPU compared to a subsystem that uses conventional types of memory, like DDR4 or DDR5, meaning that future CPUs could resemble today's GPUs with a healthy amount of HBM memory riding on the same package.

HBM Support Confirmed

Rumors in recent months have suggested that Intel might enhance Sapphire Rapids with HBM support. In the 42nd edition of its Instruction Set Extensions and Future Features Programming Reference (discovered by @InstLatX64 on Twitter), Intel confirmed that Sapphire Rapids would support HBM memory.

Chapter 15 of the document defines the machine error codes for future CPUs powered by the Sapphire Rapids microarchitecture, and Section 15.1 specifically covers error codes associated with the integrated memory controllers of these processors. Among other details, the section lists error codes for HBM command/address parity errors and HBM data parity errors, which essentially means that Sapphire Rapids will be able to work with HBM.
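To make the two entries concrete, here is a minimal Python sketch of a lookup table for them. The code values (0220H and 0221H) are the ones readers quote from the manual in the comments under this article; the table name, the helper function, and the fallback string are hypothetical and exist only for illustration. How such a value would be extracted from a machine check status register is not covered here.

```python
# Codes as quoted from the manual in the reader comments; the table and
# helper below are hypothetical names used only for illustration.
HBM_ERROR_CODES = {
    0x0220: "HBM command / address parity error",
    0x0221: "HBM data parity error",
}


def describe_imc_error(code: int) -> str:
    """Return the description of a known HBM-related code, else a placeholder."""
    return HBM_ERROR_CODES.get(code, f"unlisted code {code:04X}H")


if __name__ == "__main__":
    for code in (0x0220, 0x0221, 0x0001):
        print(f"{code:04X}H -> {describe_imc_error(code)}")
```

The sketch only shows that the manual distinguishes command/address parity faults from data parity faults on an HBM interface; it says nothing about where the HBM physically sits.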

So far, Intel has officially confirmed that its Sapphire Rapids processor will feature an eight-channel DDR5 memory controller enhanced with its Data Streaming Accelerator (DSA) technology, which should already provide a significant bandwidth boost over today's DDR4-based memory subsystems. Additionally, the chip will support Intel's next-generation Optane Memory modules.
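For a rough sense of scale, theoretical peak bandwidth can be estimated as channels × transfer rate × bus width. The Python sketch below applies that formula to three configurations. The specific speeds (DDR4-3200, DDR5-4800, and a single HBM2 stack at 2.0 GT/s on a 1024-bit interface) are assumptions chosen for illustration; the article does not state which memory speeds Sapphire Rapids will use, and real-world throughput is lower than these peak figures.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                       bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    # MT/s * bytes per transfer = MB/s; divide by 1000 to get GB/s.
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


# Assumed configurations, for illustration only; none of these speeds
# are confirmed for Sapphire Rapids.
CONFIGS = {
    "8-channel DDR4-3200": (8, 3200, 64),
    "8-channel DDR5-4800": (8, 4800, 64),
    "1 HBM2 stack at 2.0 GT/s": (1, 2000, 1024),
}

if __name__ == "__main__":
    for name, cfg in CONFIGS.items():
        print(f"{name}: {peak_bandwidth_gbs(*cfg):.1f} GB/s")
```

Under these assumptions, a single HBM2 stack lands in the same range as an entire eight-channel DDR subsystem, which is why a package carrying several stacks would change the bandwidth picture so much.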

Details Remain Unknown

Various CPU architects have long envisioned onboard HBM support for microprocessors, so adding it to Sapphire Rapids is not necessarily a breakthrough concept. What remains to be seen is how Intel plans to implement onboard HBM and how it plans to use it.

Fujitsu's 48-core A64FX processor that powers Fugaku, the world's fastest supercomputer, carries 32GB of HBM2 memory onboard, and it doesn't look like it actually supports DDR4 memory. The A64FX chip connects to its HBM2 using an interposer in the same manner we see with high-performance GPUs.

In contrast, AMD's X3D and Intel's Foveros 3D stacking technologies enable these companies to stack chiplets and other types of dies (e.g., memory devices) on top of each other to make the chips smaller. Unfortunately, Intel has never talked publicly about whether or not it plans to use Foveros for Sapphire Rapids.


What is more important is how Intel plans to use HBM on Sapphire Rapids. The company could use HBM instead of DDR5 on some products. These CPU models would not support as much memory as their DDR5-enabled counterparts but would offer massive bandwidth instead. They would also consume a lot of power, so the manufacturer might only target them for select applications.

Intel could also build a hybrid memory subsystem comprising both HBM and DDR5 (or even Optane Memory in certain cases) in a bid to get the best of both worlds. In this case, HBM would act like a massive cache sitting between the CPU and DDR5. Historically, both AMD and Intel have used off-chip caches, so that's not an entirely new concept. Meanwhile, power could be a constraint here, restricting the number of SKUs that might carry HBM.
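How much a hybrid arrangement would help depends on how much traffic the HBM tier actually absorbs. The Python sketch below uses a deliberately simple model: a fraction of the bytes is served at HBM speed and the rest at DDR5 speed, with no overlap between the two. The function name, the hit fractions, and both bandwidth figures are assumptions made for illustration; nothing here describes how Intel would implement such a cache, and the model ignores latency, fill traffic, and concurrency.

```python
def effective_bandwidth_gbs(hit_fraction: float, hbm_gbs: float,
                            ddr_gbs: float) -> float:
    """Blend two tiers by the time each needs to move its share of the data."""
    if not 0.0 <= hit_fraction <= 1.0:
        raise ValueError("hit_fraction must be between 0 and 1")
    # Seconds needed to move one GB when the traffic is split between tiers.
    time_per_gb = hit_fraction / hbm_gbs + (1.0 - hit_fraction) / ddr_gbs
    return 1.0 / time_per_gb


if __name__ == "__main__":
    # Hypothetical tier speeds in GB/s, not Sapphire Rapids specifications.
    HBM_GBS, DDR_GBS = 1000.0, 300.0
    for hit in (0.0, 0.5, 0.9, 1.0):
        bw = effective_bandwidth_gbs(hit, HBM_GBS, DDR_GBS)
        print(f"hit fraction {hit:.1f}: {bw:.0f} GB/s")
```

In this model the slower tier dominates until the hit fraction is high, so an HBM cache pays off mainly for workloads whose working set largely fits in it.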

Loads of Innovations

Intel's Sapphire Rapids processor promises to be a rather massive leap in terms of performance and features over the upcoming Ice Lake-SP chip.

In addition to the all-new Golden Cove microarchitecture that will bring support for Intel’s Advanced Matrix Extensions (AMX) as well as AVX512_BF16 and AVX512_VP2INTERSECT instructions designed for datacenter and supercomputer workloads, the CPU will also support PCIe Gen 5, the CXL 1.1 protocol, and the DSA accelerator. To boost core count, clocks, and yields, Sapphire Rapids is also expected to adopt a chiplet design, though this hasn't been confirmed by Intel.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

-

JayNor:

0220H - HBM command / address parity error.

0221H - HBM data parity error.

That seems to be the only HBM info in the manual. It indicates that the memory controller has support for HBM. It would be a big jump to conclude this means there is HBM on-package.

InvalidError:

JayNor said: That seems to be the only HBM info in the manual. It indicates that the memory controller has support for HBM. It would be a big jump to conclude this means there is HBM on-package.

Well, given the ball density of HBM modules and the requirement that traces between endpoints be too short for transmission line effects to be a major concern, any HBM has to be on-substrate with whatever chip hosts the memory controller by definition.

Chung Leong:

JayNor said: 0220H - HBM command / address parity error. 0221H - HBM data parity error. That seems to be the only HBM info in the manual. It indicates that the memory controller has support for HBM. It would be a big jump to conclude this means there is HBM on-package.

Does it indicate even that much? I mean, the CPU could encounter an HBM error when it accesses HBM memory on an accelerator over CXL.

JayNor:

Chung Leong said: Does it indicate even that much? I mean, the CPU could encounter an HBM error when it accesses HBM memory on an accelerator over CXL.

Yes, that is likely the use. The Xe-HPC Ponte Vecchio GPUs will have stacks of HBM2e and the sketchy plans presented so far are for the Sapphire Rapids chips to access those GPUs via CXL.

Russell_232:

Thank you for the update regarding the Intel chipset.

Now I can update my hardware with this chipset so that my PC can run faster.
